Section: New Results

Audio-Visual Speaker Tracking and Diarization

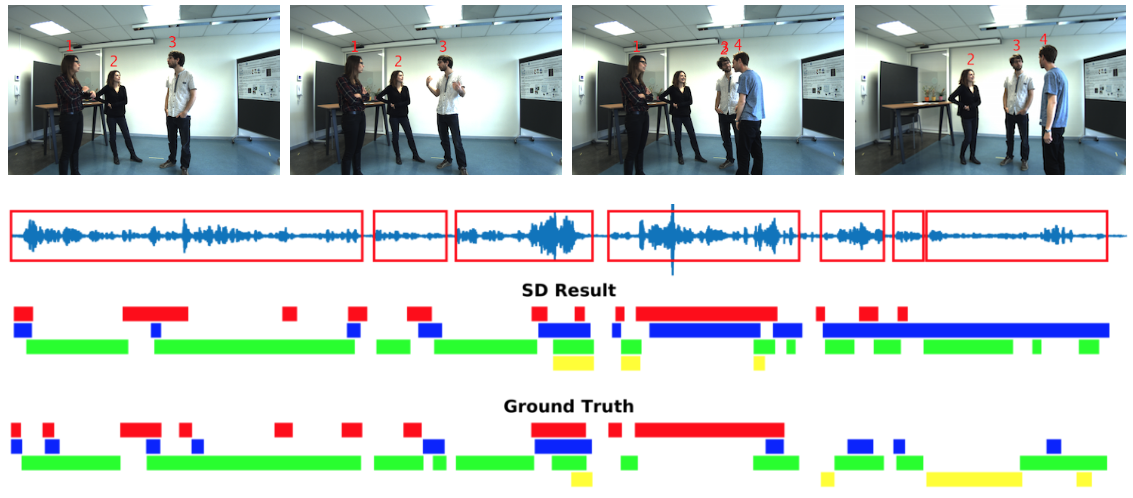

We are particularly interested in modeling the interaction between an intelligent device and a group of people. For that purpose we develop audio-visual person tracking methods [33], [41], [52], [39]. Since the observed persons are assumed to be engaged in a conversation, we also incorporate speaker diarization into our tracking methodology. We cast the diarization problem into a tracking formulation whereby the active speaker is detected and tracked over time. A probabilistic tracker exploits the spatial coincidence of visual and auditory observations and infers a single latent variable that represents the identity of the active speaker. Visual and auditory observations are fused using our recently developed weighted-data mixture model [10], while several options for the speaking-turn dynamics are handled by a multi-case transition model. The modules that translate raw audio and visual data into image observations are also described in detail. The performance of the proposed method is tested on challenging datasets made available by recent contributions, which also serve as baselines for comparison [33].
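To make the tracking formulation concrete, the following is a minimal sketch of a discrete Bayesian filter over a single latent speaker-identity variable. It is not the model of [10] or [33]: the isotropic Gaussian likelihood, the constant silence likelihood, the simple stay-or-switch transition matrix, and all names and parameter values (`transition_matrix`, `audio_likelihoods`, `filter_step`, `p_stay`, `sigma`, `silence_lik`) are assumptions chosen for illustration only.

```python
import math

def transition_matrix(n_states, p_stay=0.8):
    """Row-stochastic matrix (assumed form): keep the current state with
    probability p_stay, otherwise switch uniformly. Requires n_states >= 2."""
    p_switch = (1.0 - p_stay) / (n_states - 1)
    return [[p_stay if i == j else p_switch for j in range(n_states)]
            for i in range(n_states)]

def audio_likelihoods(audio_xy, person_xy, sigma=30.0, silence_lik=1e-3):
    """Unnormalized likelihood of one audio observation, expressed in image
    coordinates, under each state. State 0 means "nobody speaks"; state k >= 1
    means "person k speaks". The Gaussian form and the constants are
    illustrative assumptions."""
    liks = [silence_lik]
    for px, py in person_xy:
        d2 = (audio_xy[0] - px) ** 2 + (audio_xy[1] - py) ** 2
        liks.append(math.exp(-0.5 * d2 / sigma ** 2))
    return liks

def filter_step(prior, trans, liks):
    """One predict-update step of the forward (filtering) recursion."""
    n = len(prior)
    # Predict: propagate the previous posterior through the transition model.
    predicted = [sum(prior[i] * trans[i][j] for i in range(n)) for j in range(n)]
    # Update: weight by the observation likelihood, then normalize.
    unnorm = [predicted[j] * liks[j] for j in range(n)]
    total = sum(unnorm)
    return [u / total for u in unnorm]

# Hypothetical scene: two visually tracked persons and three audio
# observations that fall close to the second person.
persons = [(100.0, 200.0), (400.0, 210.0)]
trans = transition_matrix(n_states=len(persons) + 1)
posterior = [1.0 / 3.0] * 3
for obs in [(395.0, 205.0), (402.0, 214.0), (398.0, 208.0)]:
    posterior = filter_step(posterior, trans, audio_likelihoods(obs, persons))

active_speaker = max(range(len(posterior)), key=posterior.__getitem__)
```

Here the spatial coincidence between the audio observations and a person's visual position drives the posterior toward that person's state, while the transition matrix discourages implausibly fast changes of speaker.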

Websites:

https://team.inria.fr/perception/research/wdgmm/

https://team.inria.fr/perception/research/speakerloc/

https://team.inria.fr/perception/research/speechturndet/

https://team.inria.fr/perception/research/avdiarization/
