Keywords

Computer Science and Digital Science

- A1.3.2. Mobile distributed systems

- A1.5.2. Communicating systems

- A2.3.1. Embedded systems

- A3.4.1. Supervised learning

- A3.4.2. Unsupervised learning

- A3.4.3. Reinforcement learning

- A3.4.4. Optimization and learning

- A3.4.5. Bayesian methods

- A3.4.6. Neural networks

- A3.4.8. Deep learning

- A5.1. Human-Computer Interaction

- A5.4.1. Object recognition

- A5.4.2. Activity recognition

- A5.4.4. 3D and spatio-temporal reconstruction

- A5.4.5. Object tracking and motion analysis

- A5.4.6. Object localization

- A5.4.7. Visual servoing

- A5.10.2. Perception

- A5.10.3. Planning

- A5.10.4. Robot control

- A5.10.5. Robot interaction (with the environment, humans, other robots)

- A5.10.6. Swarm robotics

- A5.10.7. Learning

- A5.11.1. Human activity analysis and recognition

- A6.1.2. Stochastic Modeling

- A6.1.3. Discrete Modeling (multi-agent, people centered)

- A6.2.3. Probabilistic methods

- A6.2.6. Optimization

- A6.4.1. Deterministic control

- A6.4.2. Stochastic control

- A6.4.3. Observability and Controllability

- A8.2. Optimization

- A8.2.1. Operations research

- A8.2.2. Evolutionary algorithms

- A8.11. Game Theory

- A9.2. Machine learning

- A9.5. Robotics

- A9.6. Decision support

- A9.7. AI algorithmics

- A9.9. Distributed AI, Multi-agent

- A9.10. Hybrid approaches for AI

Other Research Topics and Application Domains

- B5.2.1. Road vehicles

- B5.6. Robotic systems

- B7.1.2. Road traffic

- B8.4. Security and personal assistance

1 Team members, visitors, external collaborators

Research Scientists

- Christian Laugier [INRIA, Emeritus, HDR]

- Agostino Martinelli [INRIA, Researcher]

- Alessandro Renzaglia [INRIA, Researcher]

- David Sierra Gonzalez [INRIA, Starting Research Position]

Faculty Members

- Olivier Simonin [Team leader, INSA LYON, Professor, HDR]

- Jilles Dibangoye [INSA LYON, Associate Professor]

- Christine Solnon [INSA LYON, Professor, HDR]

- Anne Spalanzani [UGA, Professor, HDR]

Post-Doctoral Fellows

- Wenqian Liu [INRIA]

- Loïc Rouquette [CNRS, from Oct 2022]

PhD Students

- Rabbia Asghar [INRIA]

- Théotime Balaguer [INSA LYON, from Nov 2022]

- Edward Beeching [INRIA]

- Alexandre Bonnefond [INRIA]

- Estéban Carvalho [UGA]

- Aurélien Delage [INSA LYON]

- Niranjan Deshpande [INRIA, ANR grant]

- Manuel Diaz Zapata [INRIA]

- Romain Fontaine [INSA LYON]

- Jean-Baptiste Horel [INRIA]

- Pierre Marza [INSA Lyon]

- Xiao Peng [INSAVALOR]

- Benoit Renault [CPE LYON / INSAVALOR]

- Loïc Rouquette [CNRS, until Nov 2022]

- Samuele Zoboli [INSA LYON]

Technical Staff

- Andres Gonzalez Moreno [INRIA]

- Robin Baruffa [INRIA, Engineer, from Oct 2022]

- Johan Faure [INRIA, Engineer, from Mar 2022]

- Simon Ferrier [INSAVALOR, from Sep 2022]

- Thomas Genevois [INRIA, Engineer]

- Pierrick Koch [INRIA, Engineer, from Mar 2022]

- Khushdeep Singh Mann [INRIA, Engineer]

- Anshul Paigwar [INRIA, until Jul 2022]

- Lukas Rummelhard [INRIA, SED Inria Grenoble]

- Gustavo Andres Salazar Gomez [INRIA, Engineer, from Sep 2022]

- Amrita Suresh [INRIA, until Jul 2022]

- Abhishek Tomy [INRIA, Engineer]

- Stéphane d'Alu [INSA LYON, Engineer]

Interns and Apprentices

- Samir Abou Haidar [INRIA, from Feb 2022 until Jun 2022]

- Marco Ambrogi [INSAVALOR, from Sep 2022]

- Olivier Idir [INSA Lyon (CITI), from Feb 2022 until Jul 2022]

- Gustavo Andres Salazar Gomez [INRIA, from Mar 2022 until Aug 2022]

Administrative Assistant

- Anouchka Ronceray [INRIA]

External Collaborators

- Ozgur Erkent [UNIV HACETTEPE]

- Fabrice Jumel [CPE LYON]

- Jacques Saraydaryan [CPE LYON]

2 Overall objectives

2.1 Origin of the project

Chroma is a bi-localized project-team at Inria Lyon and Inria Grenoble (in the Auvergne-Rhône-Alpes region). The project was launched in 2015 and officially became an Inria project-team on December 1st, 2017. It brings together experts in perception and decision-making for mobile robotics and intelligent transport, all of whom share common approaches that mainly relate to the field of Artificial Intelligence. It was originally founded by members of the working group on robotics at CITI lab1, led by Prof. Olivier Simonin (INSA Lyon2), and members of the Inria project-team eMotion (2002-2014), led by Christian Laugier, at Inria Grenoble. Earlier members include Olivier Simonin (Prof. INSA Lyon), Christian Laugier (Inria researcher DR, Grenoble), Anne Spalanzani (Prof., UGA), Jilles Dibangoye (Asso. Prof. INSA Lyon) and Agostino Martinelli (Inria researcher CR, Grenoble). In January 2020, Christine Solnon (Prof. INSA Lyon) joined the team following her transfer from the LIRIS lab to the CITI lab. In October 2021, Alessandro Renzaglia (Inria researcher CR, Lyon) was recruited through the Inria researcher recruitment campaign.

The overall objective of Chroma is to address fundamental and open issues that lie at the intersection of the emerging research fields called “Human Centered Robotics” 3, “Multi-Robot Systems” 4, and AI for humanity.

More precisely, our goal is to design algorithms and models that allow autonomous agents to perceive, decide, learn, and finally adapt to their environment. A focus is given to unknown and human-populated environments, where robots or vehicles have to navigate and cooperate to fulfill complex tasks.

In this context, recent advances in embedded computational power, sensor and communication technologies, and miniaturized mechatronic systems, make the required technological breakthroughs possible.

Chroma is clearly positioned in the "Artificial Intelligence and Autonomous systems" research theme of the Inria 2018-2022 Strategic Plan. More specifically we refer to the "Augmented Intelligence" challenge (connected autonomous vehicles) and to the "Human centred digital world" challenge (interactive adaptation).

2.2 Research themes

To address the mentioned challenges, we take advantage of recent advances in: probabilistic methods, machine learning, planning techniques, multi-agent decision making, and constrained optimisation tools. We also draw inspiration from other disciplines such as Sociology, to take into account human models, or Physics/Biology, to study self-organized systems.

Chroma research is organized along two main axes: i) Perception and Situation Awareness, and ii) Decision Making. We elaborate on these axes below.

- Perception and Situation Awareness.

This theme aims at understanding complex dynamic scenes, involving mobile objects and human beings, by exploiting prior knowledge and streams of perceptual data coming from various sensors.

To this end, we investigate three complementary research problems:

- Bayesian & AI based Perception: How can a complex dynamic scene, perceived using a set of different sensors, be interpreted in real time, and how can the near-future evolution of this scene and the related collision risks be predicted? How can semantic information be extracted and processed for the autonomous navigation step?

- Modeling and simulation of dynamic environments: How to model or learn the behavior of dynamic agents (pedestrians, cars, cyclists...) in order to better anticipate their trajectories?

- Robust state estimation: How to acquire a deep understanding of several sensor fusion problems and investigate their observability properties in the case of unknown inputs.

- Decision making.

This theme aims to design algorithms and architectures that can achieve both scalability and quality for decision making in intelligent robotic systems and more generally for problem solving.

Our methodology builds upon advantages of three (complementary) approaches: online planning, machine learning, and NP-hard optimization problem solving.

- Online planning: In this theme we study planning algorithms for single robots and fleets of cooperative mobile robots facing complex and dynamic environments, i.e. environments populated by humans and/or mostly unknown.

- Machine learning: We search for structural properties (e.g., uniform continuity) that enable us to design efficient planning and (deep) reinforcement learning methods for solving complex single- or multi-agent decision-making tasks.

- Offline constrained optimisation problems: We design and study approaches based on Constraint Programming (CP) and meta-heuristics to solve NP-hard problems such as planning and routing problems.

Chroma is also concerned with applications and transfer of the scientific results. Our main applications include autonomous and connected vehicles, service robotics, exploration & mapping tasks with ground and aerial robots. Chroma is currently involved in several projects in collaboration with automobile companies (Renault, Toyota) and some startups (see Section 4).

The team has its own robotic platforms to support the experimentation activity (see5). In Grenoble, we have two experimental vehicles equipped with various sensors: a Toyota Lexus and a Renault Zoe; the Zoe was automated in December 2016. We have also developed two experimental test tracks, one at Inria Grenoble (including connected roadside sensors and a controlled dummy pedestrian) and one at IRT Nanoelec & CEA Grenoble (including a road intersection with traffic lights and several pieces of urban road equipment). In Lyon, we have a fleet of UAVs (Unmanned Aerial Vehicles) composed of 4 PX4 Vision, 2 IntelAero and 5 Crazyflie mini-UAVs. We also have a fleet of ground robots composed of 16 Turtlebot robots and 3 Pepper humanoids. The platforms are maintained and developed by contractual engineers, and by Lukas Rummelhard, from SED, in Grenoble.

3 Research program

3.1 Introduction

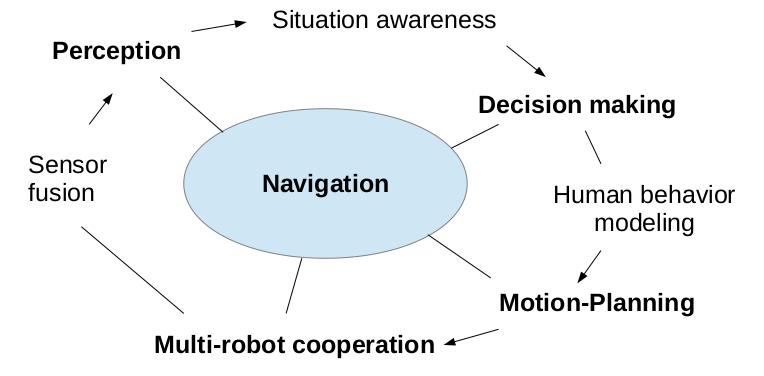

The Chroma team aims to deal with different issues of autonomous navigation and intelligent transport: perception, decision-making and cooperation. Figure 1 outlines the different themes and sub-themes investigated by Chroma.

Figure 1: Research themes of the team: Sensor fusion, perception, situation awareness, decision making, motion planning, multi-robot cooperation

We present hereafter our approaches to these research themes, and how they combine to contribute to the general problem of robot navigation. Chroma pays particular attention to the problem of autonomous navigation in highly dynamic environments populated by humans and to cooperation in multi-robot systems. We share this goal with other major robotics laboratories/teams in the world, such as the Autonomous Systems Lab at ETH Zurich, the Robotic Embedded Systems Laboratory at USC, KIT 6 (Prof. Christoph Stiller lab and Prof. Ruediger Dillmann lab), UC Berkeley, Vislab Parma (Prof. Alberto Broggi), and the iCeiRA 7 laboratory in Taipei, to cite a few. Chroma collaborates at various levels (visits, postdocs, research projects, common publications, etc.) with most of these laboratories.

3.2 Perception and Situation Awareness

Project-team positioning

The team carries out research across the full (software) stack of an autonomous driving system, from perception of the environment to motion forecasting and trajectory optimization. While most of the techniques that we develop can stand on their own and are typically tested on our experimental platforms, we are gradually moving towards their complete integration, which will result in a functioning autonomous driving software stack. Beyond that point, we plan to focus our research on developing new methods that improve the individual performance of each of the stack’s components, as well as the global performance. This is similar to how autonomous driving companies operate (e.g. Waymo, Cruise, Tesla). The research topics that we explore are addressed by a very long list of international research laboratories and private companies. However, in terms of scope, we find the research groups of Prof. Dr.-Ing. Christoph Stiller (KIT), Prof. Marcelo Ang (NUS), Prof. Daniela Rus (MIT) and Prof. Wolfram Burgard (TRI California) to be the closest to us; we have active connections with these research groups (e.g. within the IEEE RAS Technical Committee AGV-ITS, where we co-chair related annual workshops). At the national level, we cooperate within several R&D projects with UTC, Institut Pascal Clermont-Ferrand, University Gustave Eiffel, and the Inria teams RITS, Acentauri and Convecs. Our main research originality lies in the combination of Bayesian approaches, AI technologies, human-vehicle interaction models, robust state estimation formalism, and a mix of real experiments and formal methods for validating our technologies in complex real-world dynamic scenarios.

Our research on visual-inertial sensor fusion is closely related to the research carried out by the group of Prof. Stergios Roumeliotis (University of Minnesota) and the group of Prof. Davide Scaramuzza (University of Zurich). Our originality in this context is the introduction of closed-form solutions instead of filter-based approaches.

Scientific achievements

1. Bayesian & AI based Environment Perception and Forecasting

In order to operate in a dynamic environment surrounded by humans or other robots, an autonomous system needs to Perceive, Understand and Forecast any changes in its surroundings. We have investigated the perception task from different angles, most of which involve both Bayesian approaches and Deep Learning models.

Bayesian Perception: Over the last decade, the team's work on Bayesian approaches has given rise to numerous publications and to some patents and licensed software8. Over the past 4 years, we have focused on collision risk assessment, safe navigation and semantic processing issues, with a strong emphasis on the integration and validation of our technologies on real-time experimental platforms. The new results led to the filing of two patents and to technology transfers to a French SME and to a Japanese international company (R&D work still ongoing). The main advances relate to (1) the generalization of the principle of Bayesian fusion to integrate connected sensors located outside the ego-vehicle (infrastructure or other vehicles), (2) new real-time methods that better estimate collision risks and make navigation safer (including path planning), and (3) an experimental validation principle combining real tests, synchronized simulated environments (augmented reality principle) and formal methods 49, 86, 93, 48. A new PhD thesis on validating AI-based perception components in autonomous vehicles, with a particular focus on formal validation methods, started in 2021 in cooperation with the Inria Convecs team (funding: French project PRISSMA).

Situation awareness & Environment Forecasting: In addition to the above-mentioned work, we have developed AI-based methods for extracting the semantic information of observed scenes 83, 80, 84, 111. In particular, we have developed hybrid methods for 3D object detection 95, 114, 96, semantic mapping of the environment from a top view 67, 68, 69, and ground plane estimation and segmentation 94. We have likewise investigated the use of domain adaptation techniques to mitigate the degradation of some of these algorithms across different weather conditions 65, 62 and sensor modalities 111. In terms of environment forecasting, two PhD theses were dedicated to the task of predicting the future behavior of surrounding traffic 47, 115. The first targeted road intersections; the second focused on highway scenarios and leveraged a behavioral model learned from driving demonstrations 112 to cope with the long-term prediction horizon necessary for highways. Both theses also explored how to integrate the forecasting models into a behavioral planning framework 113. In addition to these forecasting models, we have also carried out research on localization 46, tracking 66, detection of abnormal behaviors 122, and contextualization of complex risk mitigation scenarios 110. A new PhD thesis on “Hybrid Sensor fusion” was launched in 2021 (funding: EU CPS4EU).

2. Modeling and simulation of dynamic environments

Modeling pedestrian behavior with a limited amount of data is a challenge. In Pavan Vasishta's PhD and the ANR Valet project, we proposed to learn typical pedestrian behavior using Hidden Markov Models (HMMs), starting from prior knowledge derived from J. J. Gibson’s sociological principles of “Natural Vision” and “Natural Movement”. The work assumes that some parts of the observed scene are more attractive to pedestrians while other areas are repulsive 118.

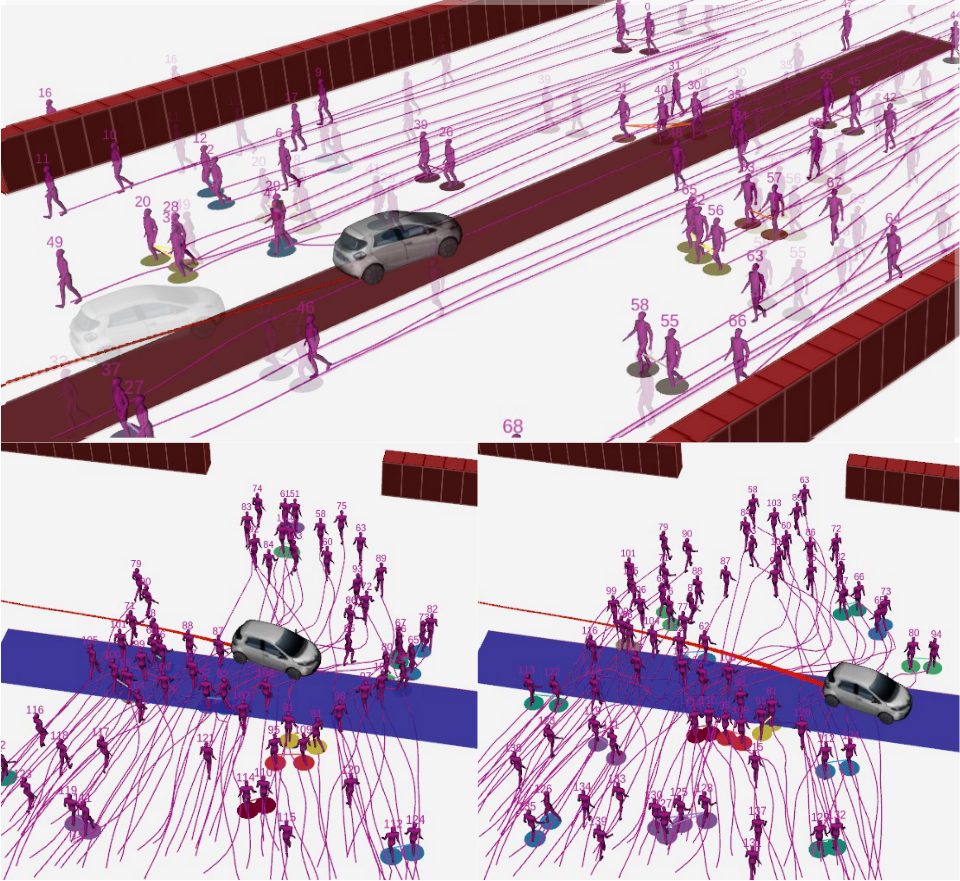

Pedestrian simulation. We studied the behavior of pedestrians in a space shared with an autonomous vehicle by modeling and simulating realistic pedestrian behavior. Our approach integrates empirical observations and concepts from social science into an agent-based model and simulator for robotics applications. At each step, the proposed model was evaluated and validated through simulations of many scenarios and comparisons with real-world data. We then proposed SPACiSS, "Simulator for Pedestrians and an Autonomous Car in Shared Spaces", an open source simulator based on the PedSim framework that can simulate interactions between pedestrians and vehicles in different shared space scenarios. Integrated in the ROS framework, which is commonly used in robotics, the software is designed as an environment to test autonomous navigation systems 98, 99, 100, 101.

Robots in human flows. Another work, led by J. Saraydaryan, was conducted on simulating pedestrians and mobile robots in indoor environments by extending PedSim. This work aimed to simulate scenarios where robots perceive and map human flows and compute paths by exploiting such information (in the cost function of the A* algorithm) 108, 74.
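To illustrate how a map of human flows could enter the cost function of A*, here is a minimal sketch on a 4-connected grid. It is not the implementation from the cited work: the grid encoding, the per-cell `flow_penalty` values and the `weight` parameter are all assumptions made for illustration.

```python
import heapq

def astar_with_flow(grid, flow_penalty, start, goal, weight=1.0):
    """A* on a 4-connected grid; entering a cell costs 1 + weight * flow_penalty.

    grid[r][c] == 1 marks an obstacle. flow_penalty[r][c] >= 0 is a
    hypothetical per-cell penalty (e.g. an observed pedestrian density).
    Returns (cost, path), or (None, []) if the goal is unreachable.
    """
    rows, cols = len(grid), len(grid[0])

    def heuristic(cell):
        # Manhattan distance is admissible because every step costs at least 1.
        return abs(cell[0] - goal[0]) + abs(cell[1] - goal[1])

    open_heap = [(heuristic(start), 0.0, start)]
    best_cost = {start: 0.0}
    parent = {start: None}
    while open_heap:
        _, cost, cell = heapq.heappop(open_heap)
        if cell == goal:
            path = []
            while cell is not None:
                path.append(cell)
                cell = parent[cell]
            return cost, path[::-1]
        if cost > best_cost[cell]:
            continue  # stale heap entry, a cheaper route was already found
        r, c = cell
        for nr, nc in ((r + 1, c), (r - 1, c), (r, c + 1), (r, c - 1)):
            if 0 <= nr < rows and 0 <= nc < cols and grid[nr][nc] == 0:
                new_cost = cost + 1.0 + weight * flow_penalty[nr][nc]
                if new_cost < best_cost.get((nr, nc), float("inf")):
                    best_cost[(nr, nc)] = new_cost
                    parent[(nr, nc)] = cell
                    heapq.heappush(
                        open_heap,
                        (new_cost + heuristic((nr, nc)), new_cost, (nr, nc)),
                    )
    return None, []


# Hypothetical 3x3 map with a busy column in the middle of the top two rows.
free_grid = [[0, 0, 0], [0, 0, 0], [0, 0, 0]]
busy_column = [[0, 5, 0], [0, 5, 0], [0, 0, 0]]
detour_cost, detour_path = astar_with_flow(free_grid, busy_column, (0, 0), (0, 2))
```

With these penalties the planner prefers the longer route along the bottom row over the two-step route through the busy cell; setting `weight=0.0` recovers plain shortest-path behavior.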

3. Robust State Estimation

Our investigations have been carried out in the framework of the unknown input observability problem. This problem was introduced and first investigated in the 1960s and remained unsolved for 50 years. We have studied this fundamental open problem over the last 8 years. Due to its complexity, the general solution was published in a book 89. The main result of this year is the extension of that solution. Specifically, in 89, we provided the solution by restricting our investigation to systems that satisfy a special assumption called canonicity with respect to the unknown inputs. In 2022, after an exhaustive characterization of the concept of canonicity, we also accounted for the case when this assumption is not satisfied and obtained the most general solution of the problem. These results have been published in the journal Information Fusion 13. A further result obtained in 2022 is the extension of the well-known observability rank condition to time-varying systems. This extension has been published in the IEEE Transactions on Automatic Control 12.
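For reference, the classical observability rank condition mentioned above can be stated as follows for the standard time-invariant, input-affine case with known inputs. This is the textbook formulation, not the team's extension to time-varying systems or unknown inputs; the symbols $f_i$, $h$, $m$, $n$ and $\mathcal{O}$ are notation introduced here.

```latex
% System with state x in R^n, known inputs u_1..u_m and output y:
\dot{x} = f_0(x) + \sum_{i=1}^{m} f_i(x)\, u_i, \qquad y = h(x), \qquad x \in \mathbb{R}^n

% Observation space: all repeated Lie derivatives of h along the vector fields f_i
\mathcal{O} = \operatorname{span}\left\{ L_{f_{i_1}} \cdots L_{f_{i_k}} h \;:\; k \ge 0,\ i_j \in \{0, \dots, m\} \right\}

% Observability rank condition at x_0: the differentials of the functions in O span R^n
\dim\, \operatorname{span}\left\{ d\varphi(x_0) \;:\; \varphi \in \mathcal{O} \right\} = n
\;\Longrightarrow\; \text{the system is weakly locally observable at } x_0
```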

Collaborations

- Main long-term industrial collaborations: IRT Nanoelec & CEA (10 years), Toyota Motor Europe (12 years, R&D center Zaventem-Brussels), Renault (10 years), SME Easymile (3 years), Sumitomo Japan (started in 2019), Iveco (3 years in the framework of the STAR project). These collaborations are funded either by industry or by national, regional or European projects. The main outputs are joint publications, software development, patents and technology transfers. Two former doctoral students of our team were recruited some years ago by the TME Zaventem and Renault R&D centers, respectively.

- Main academic collaborations: Institut Pascal Clermont-Ferrand, University of Technology of Compiègne (UTC), University Gustave Eiffel, several Inria teams (RITS, Acentauri, Convecs, Rainbow). These collaborations are mostly conducted within national R&D projects (FUI, PIA, ANR, etc.). The main outputs are scientific and software exchanges. In addition, we had a fruitful collaboration with B. Mourrain from the Inria Aromath team. The goal was to find the analytical solution of a polynomial equation system that fully characterizes the cooperative visual-inertial sensor fusion problem with 2 agents.

- International cooperation for scientific exchanges and the organization of IEEE scientific events, e.g. with NUS & NTU Singapore, Peking University, TRI Mountain View, KIT Karlsruhe, Coimbra University. We have regularly interacted with Prof. D. Scaramuzza from the University of Zurich to make our findings in visual-inertial sensor fusion more usable in a realistic context (e.g., for the autonomous navigation of drones).

3.3 Decision Making

Project-team positioning

In his reference book Planning Algorithms 78, S. LaValle discusses the different dimensions that make motion planning a complex problem: the number of robots, the obstacle regions, the uncertainty of perception and action, and the allowable velocities. In particular, the book emphasizes that multi-robot planning in complex environments is NP-hard, which implies that exact approaches have exponential worst-case time complexity (unless P=NP). Moreover, dynamic and uncertain environments, such as human-populated ones, increase this complexity. In this context, we aim at scaling up decision-making in human-populated environments and in multi-robot systems, while dealing with the intrinsic limits of the robots and machines (computation capacity, limited communication). To address these challenges, we explore new algorithms and AI architectures following three directions: online (or real-time) planning, machine learning to adapt to the complexity of uncertain environments, and combinatorial optimization to deal with offline constrained problems. Combining these approaches, seeking to scale them up, and evaluating them on real platforms are also elements of originality of our work.

We share these goals with other laboratories/teams in the world, such as the Mobile Robotics Laboratory and Intelligent Systems "IntRoLab" at Sherbrooke University (Canada) led by Prof. F. Michaud, the Learning Agents Research Group (LARG) within the AI Lab at the University of Texas (Austin) led by Prof. P. Stone, the Autonomous Systems Lab at ETH Zurich (Switzerland) led by R. Siegwart, the Robotic Sensor Networks Lab at the University of Minnesota (USA) led by Prof. V. Isler, and the Autonomous Robots Lab at NTNU (Norway), to cite a few. At Inria, we share some objectives with the LARSEN team in Nancy (multi-agent machine learning, multi-robot planning) and the RAINBOW team in Rennes (multi-UAV planning). We also collaborate with the Acentauri team, in Sophia Antipolis, on autonomous navigation among humans. In France, among other labs working on similar subjects, we can cite the LAAS (CNRS, Toulouse), the ISIR lab (CNRS, Paris Sorbonne Univ.) and the MAD team at Caen University. In the more generic domain of problem solving, we can mention the TASC team at LS2N (Nantes), the CRIL lab (Lens) and, at the international level, the Insight Centre for Data Analytics (Cork, Ireland).

Scientific achievements

1. Online Planning

In this theme, we address online planning when robots/vehicles/UAVs have to navigate or work in complex and generally unknown environments. The challenge comes from the necessity for entities to decide actions in real time and with limited information. Hereafter we present our main results following different problem contexts.

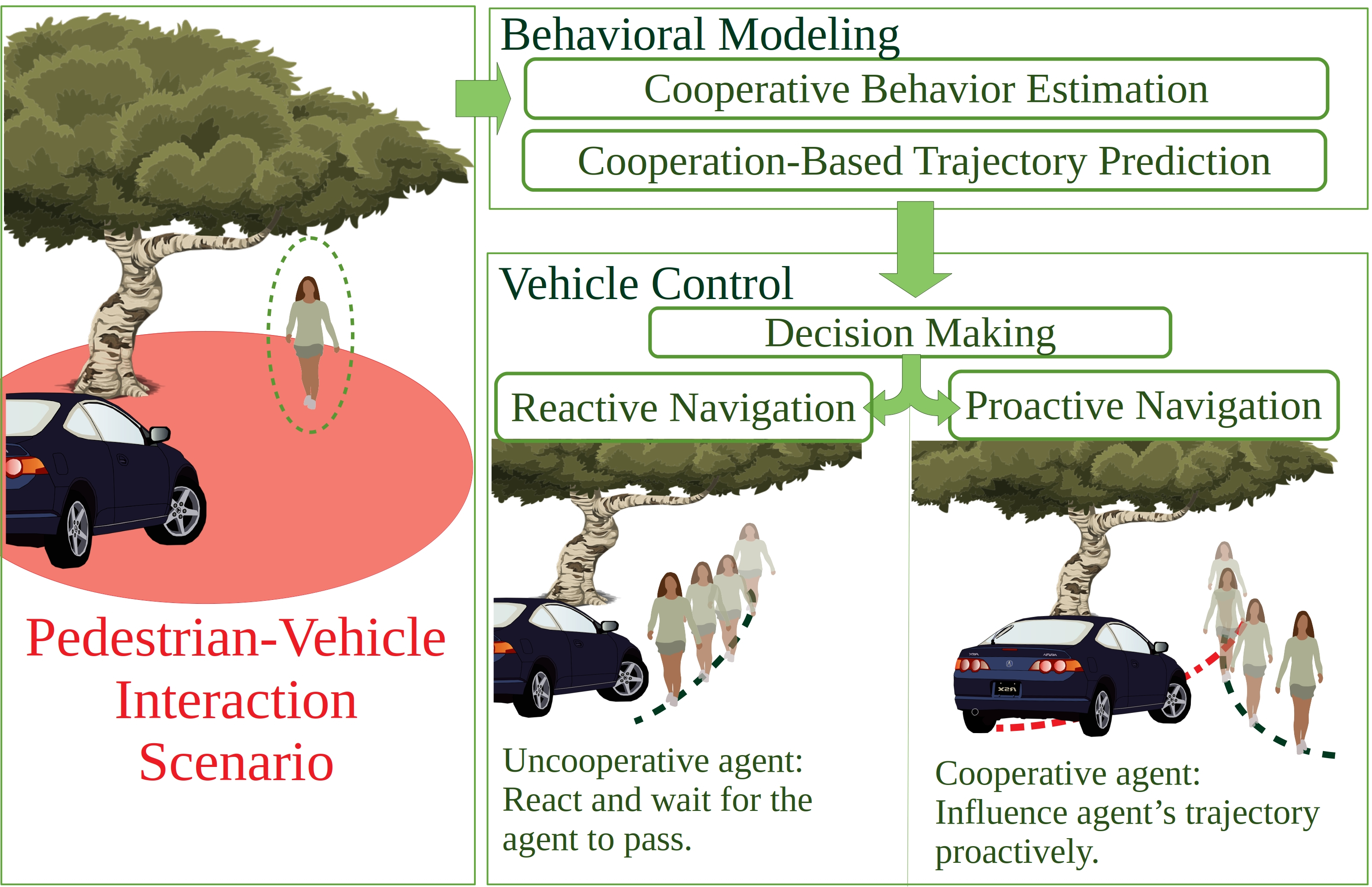

Social and ethical navigation

We investigated new methods to build safe and socially compliant trajectories among humans using the behavior models presented in Axis I. We focused on the navigation of an autonomous vehicle among crowds, where there is no free space to plan a safe trajectory (M. Kabtoul's PhD, ANR Hianic Project). We proposed a proactive social navigation framework. The system is based on the idea of coupled navigation behavior between the pedestrian and the vehicle in shared spaces. It takes into account the cooperative nature of human behavior and exploits it to explore new navigation options and navigate the shared space “proactively” 76, 75. We obtained promising results using the SPACiSS simulator previously described. We also investigated ethical navigation when an autonomous vehicle cannot avoid a collision with an object or a vulnerable road user (L. Serafim Guardini's PhD). We exploit accidentology data in which each class of object or agent has an injury probability that depends on the impact speed and on ethical/economical/political factors. Our method generates a cost map containing a collision probability along with its associated risk of injury, which is used to plan trajectories with the lowest risk 110.
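The idea of a cost map that combines a collision probability with a class-dependent injury risk can be sketched as follows. This is only an illustration under strong assumptions: the logistic injury curves and their parameters in `INJURY_PARAMS` are hypothetical placeholders rather than accidentology data, the ethical/economical/political factors are left out, and a trajectory is simply scored by the summed risk of the cells it crosses.

```python
import math

# Hypothetical logistic parameters per class: (midpoint speed in km/h, steepness).
INJURY_PARAMS = {"pedestrian": (40.0, 0.15), "car": (70.0, 0.10)}

def injury_probability(obj_class, impact_speed_kmh):
    """Illustrative injury probability as a logistic function of impact speed."""
    midpoint, steepness = INJURY_PARAMS[obj_class]
    return 1.0 / (1.0 + math.exp(-steepness * (impact_speed_kmh - midpoint)))

def risk_map(collision_prob, class_map, impact_speed_kmh):
    """Per-cell risk = P(collision) * P(injury | class, speed); 0 for empty cells."""
    return [
        [p * injury_probability(cls, impact_speed_kmh) if cls else 0.0
         for p, cls in zip(prob_row, cls_row)]
        for prob_row, cls_row in zip(collision_prob, class_map)
    ]

def lowest_risk_trajectory(trajectories, risk):
    """Pick the candidate (a list of (row, col) cells) with the smallest summed risk."""
    return min(trajectories, key=lambda cells: sum(risk[r][c] for r, c in cells))


# Two unavoidable obstacles with equal collision probability but different classes.
example_risk = risk_map([[0.8, 0.8]], [["pedestrian", "car"]], impact_speed_kmh=40.0)
chosen = lowest_risk_trajectory([[(0, 0)], [(0, 1)]], example_risk)
```

In this toy case both candidate trajectories hit an obstacle with the same probability, and the selection falls on the cell whose class has the lower assumed injury probability at that speed.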

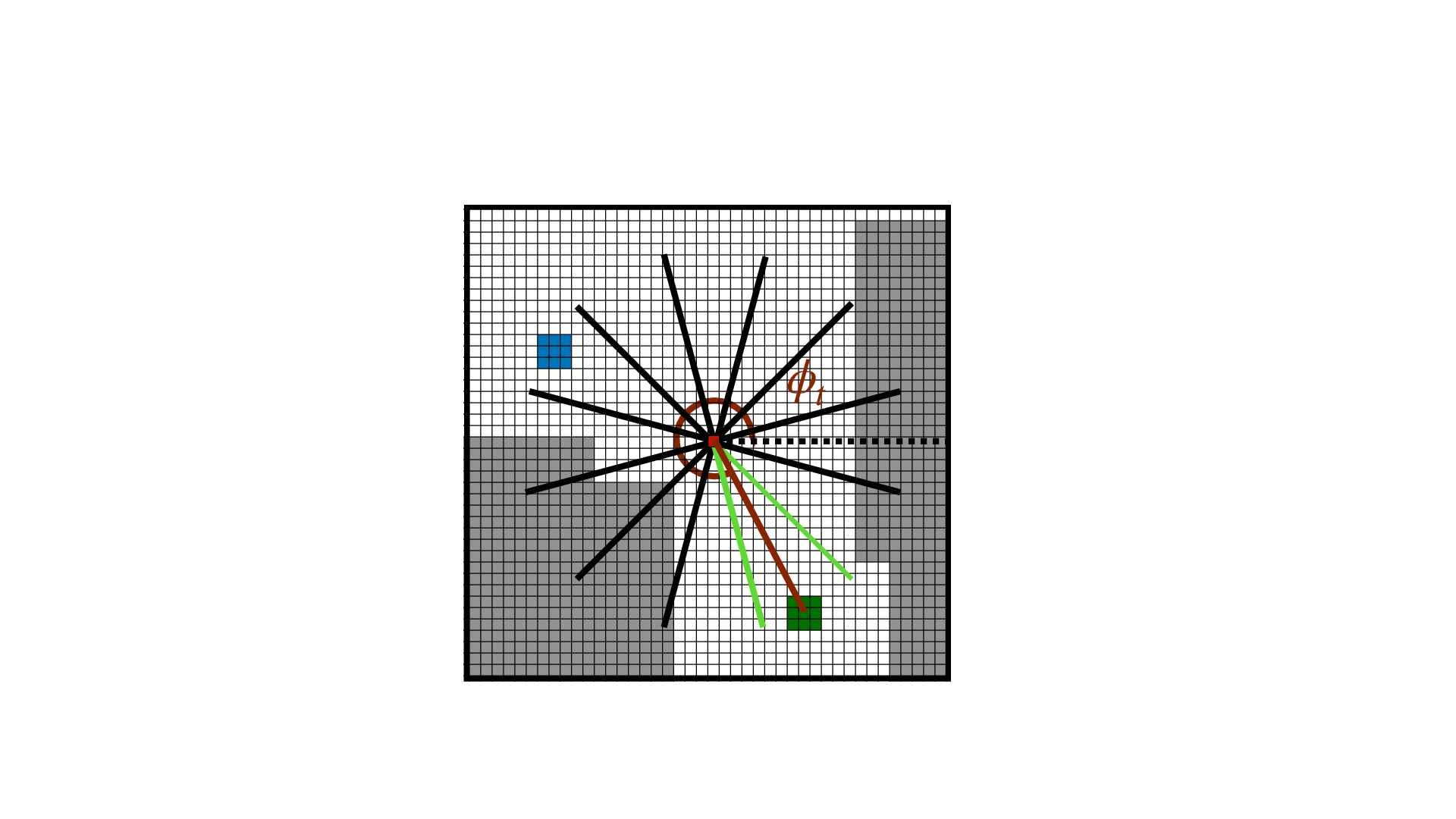

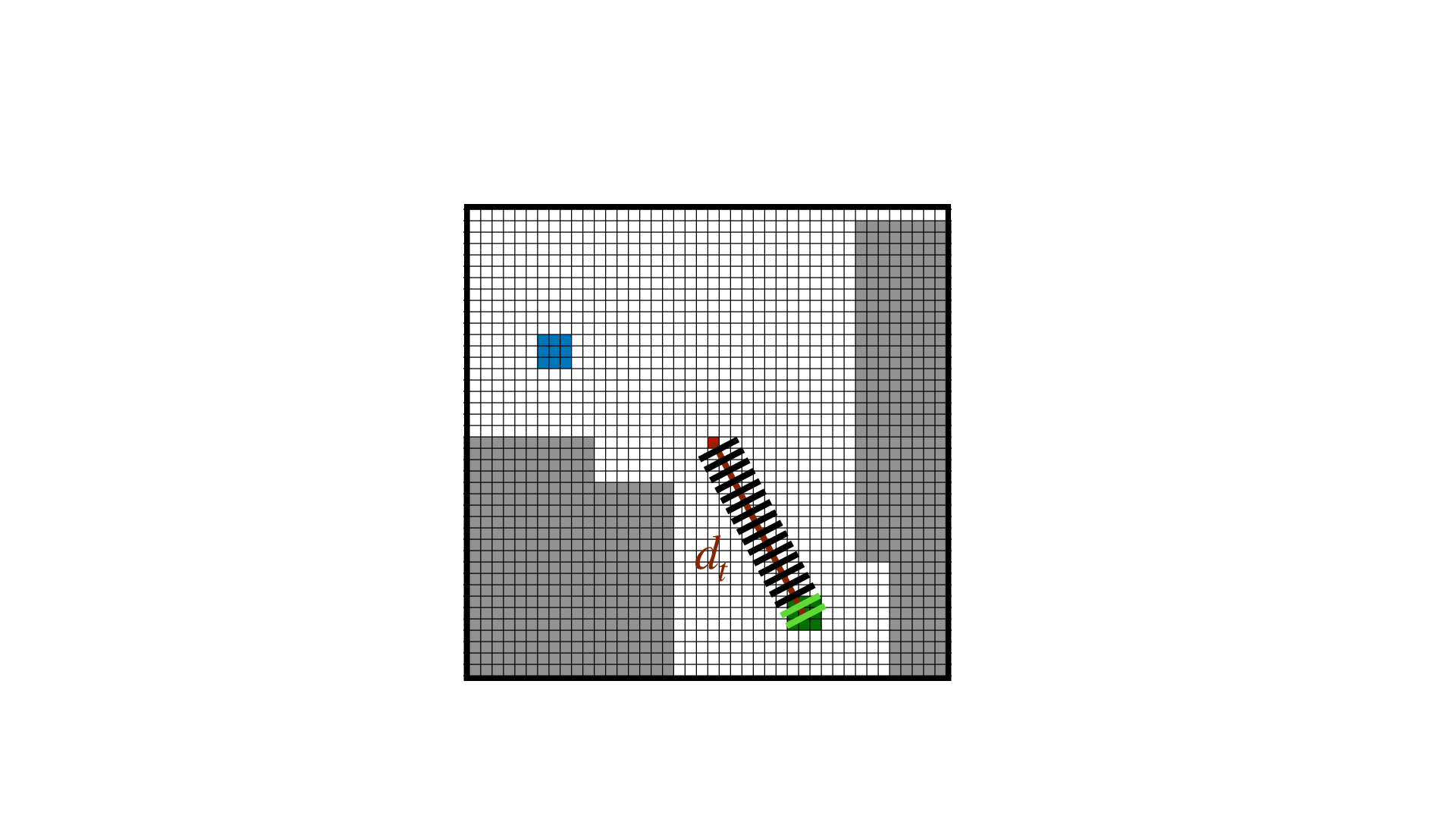

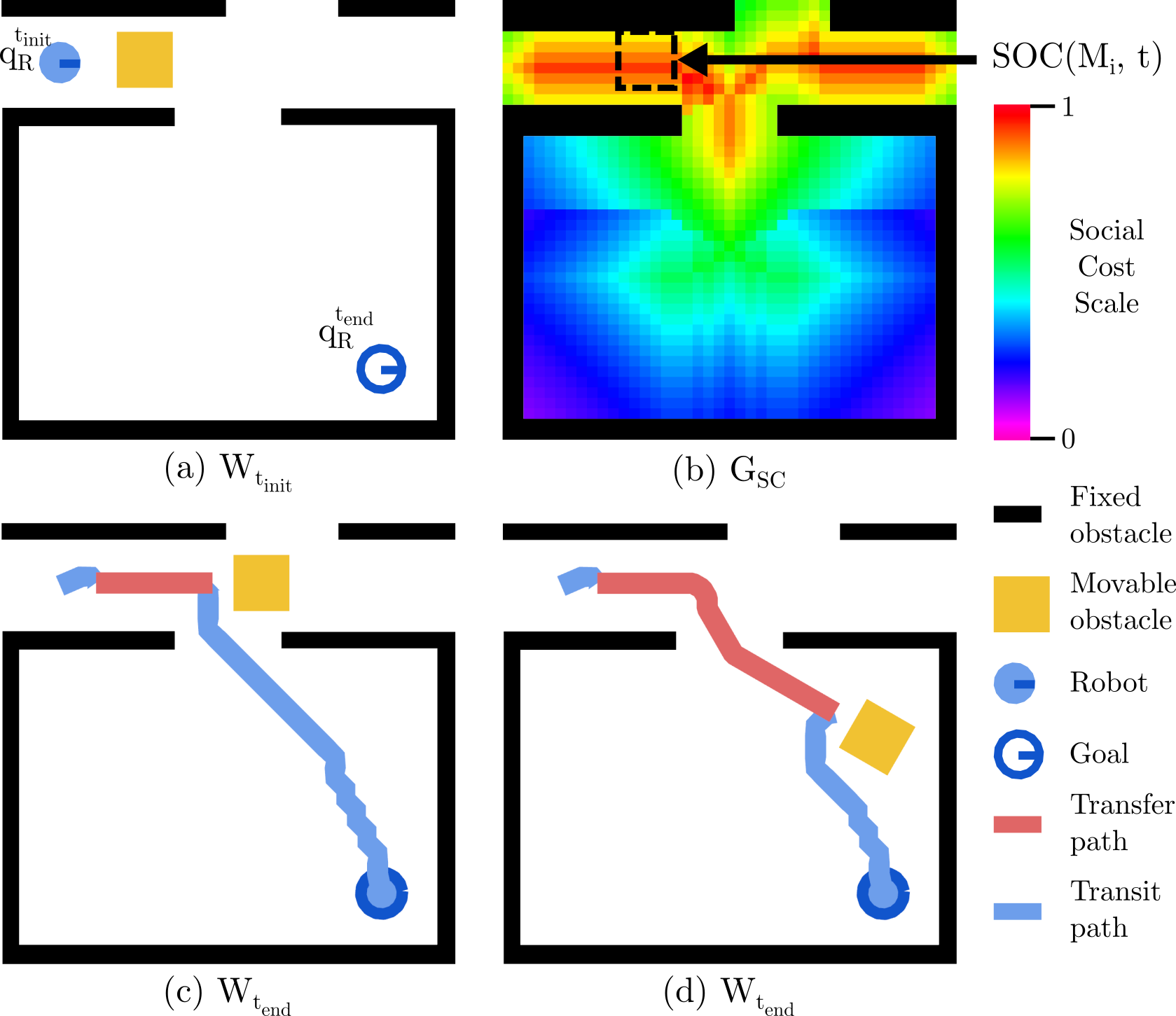

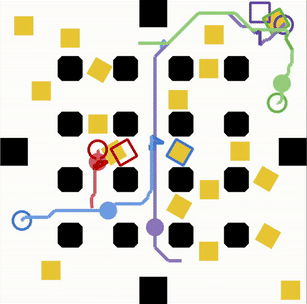

We also investigated another navigation problem, NAMO (Navigation Among Movable Obstacles). We extended NAMO in two directions, considering social constraints when robots move obstacles (B. Renault's PhD). First, we examined where obstacles should optimally be moved with regard to space access (called Social-NAMO). We derived new spatial cost functions and NAMO algorithms that preserve access 102. Second, we generalized the problem to multiple robots (called MR-NAMO). We proposed a local coordination strategy exploiting Social-NAMO rearrangement properties and offering efficient online plans (under review). For evaluation, we developed a simulator (S-NAMO-SIM) that we share with the community.

Multi-robot exploration

An important part of our research is focused on designing new online planning solutions for multi-robot systems to accomplish exploration and observation gathering tasks in complex and dynamic environments. Problems related to computational and communication constraints are here crucial, and distributed approaches are fundamental to overcome them and obtain robust solutions.

Following these goals, particular attention has been given to the exploration/mapping of 3D environments with a team of aerial vehicles, for which we presented a new decentralized solution based on the combination of a stochastic optimization approach with a more classic strategy that exploits frontier points 105. In 104, we then showed how this approach is part of a more general formulation that can also deal with optimal deployment coverage, for which we also studied the impact of an offline initialization step based on very partial information about the environment. Stochastic planning methods have also been exploited to propose a new source-seeking strategy 103. This solution is based on robust estimates of the signal gradient, obtained by the robots while exploring the environment in predefined symmetric formations 56, which are then used to bias a correlated random walk that guides the robots.
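As a reminder of what "frontier points" are in the classic exploration strategy mentioned above, the sketch below extracts them from a 2D occupancy grid. It is a deliberately simplified illustration: the work described here addresses 3D environments and combines frontiers with stochastic optimization, neither of which is shown, and the cell encoding (`FREE`, `OCCUPIED`, `UNKNOWN`) is an assumption.

```python
FREE, OCCUPIED, UNKNOWN = 0, 1, -1

def frontier_cells(grid):
    """Return the free cells that have at least one unknown 4-neighbour."""
    rows, cols = len(grid), len(grid[0])
    frontiers = []
    for r in range(rows):
        for c in range(cols):
            if grid[r][c] != FREE:
                continue
            neighbours = ((r + 1, c), (r - 1, c), (r, c + 1), (r, c - 1))
            if any(0 <= nr < rows and 0 <= nc < cols and grid[nr][nc] == UNKNOWN
                   for nr, nc in neighbours):
                frontiers.append((r, c))
    return frontiers


# Partially explored map: the right-hand column is still unknown in two cells.
partial_map = [
    [FREE, FREE, UNKNOWN],
    [FREE, OCCUPIED, UNKNOWN],
    [FREE, FREE, FREE],
]
frontiers = frontier_cells(partial_map)
```

A frontier-based explorer would then send each robot towards one of these cells, since moving there is expected to reveal unknown space.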

Connected to this subject, we also investigated multi-robot strategies to cover complex scenes/environments by introducing multi-resolution maps. This concerned human activity observation by a set of ground robots 90 and, more recently, the inspection of large structures with aerial robots (in the framework of the European project BugWright2, in collaboration with LSL, Austria).

Swarm navigation

In the context of self-organized systems, or swarm robotics, we explore how collective navigation can be defined and adapted to fleets of communicating robots. In A. Bonnefond's PhD, we studied flocking models, where each agent locally communicates its speed and position, and we examined how obstacles impact flocking robustness by simulating radio propagation. We then extended and combined two standard models, Olfati-Saber and Vásárhelyi, to improve their ability to stay connected while evolving in environments with different obstacle distributions 54. This work is supported by the Inria/DGA Dynaflock project and by the national Equipex+ TIRREX infrastructure. Related to this work, we address Controlled Mobility, where navigation must take into account the need to maintain fleet connectivity. In R. Grunblatt's PhD, we proposed a distributed algorithm that optimizes communication in a swarm of UAVs by changing their antenna orientation online 11. This work continues in the ANR CONCERTO project.

2. Machine Learning

Machine learning techniques for decision-making are autonomous algorithms for computing behaviors, also called policies or strategies, which describe what action an agent should take in a given state. Learning to act in complex environments requires knowledge about the structure of the underlying decision-making problems. We contribute to revealing insightful underlying structures, exploiting them within existing or customized algorithms, and showing that they improve performance in a wide variety of decision-making problems. For instance, optimal policies may have concise representations of their mental states, e.g., sufficient statistics or ego-centric neural-network architectures.

In the context of POMDPs9, dec-POMDPs, zs-POSGs10, and st-POSGs, we have identified a unified framework for solving these problems 64. Our methodological framework splits into five steps. First, we have shown that all these problems are convertible into a single problem called a common-knowledge game 64. Then, we recast the (possibly discrete) problem into a continuous one. Furthermore, we establish uniform continuity properties for general and hierarchical dec-POMDPs. We proved that optimal value functions for general and hierarchical dec-POMDPs are piecewise linear and convex (PWLC) 64, 121. We also established that optimal value functions for general zs-POSGs are Lipschitz continuous 57, 58. Finally, we designed planning algorithms for games against Nature 70, purely competitive games 59, and purely cooperative games 64. We extended exact and deep reinforcement learning algorithms to general dec-POMDPs 64. Finally, we applied deep reinforcement learning along two main directions: autonomous transportation systems 55 and automatic control systems 123.
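To make the PWLC property concrete: a piecewise linear and convex value function can be represented by a finite set of vectors (often called alpha-vectors) and evaluated as the maximum of their inner products with the current statistic. The sketch below uses a plain belief over two hidden states for simplicity; in the dec-POMDP setting the argument would be an occupancy state rather than a belief, and the alpha-vectors shown are made-up numbers.

```python
def pwlc_value(belief, alpha_vectors):
    """Evaluate V(b) = max over alpha-vectors of <alpha, b>."""
    return max(
        sum(a * b for a, b in zip(alpha, belief))
        for alpha in alpha_vectors
    )


# Hypothetical alpha-vectors over two hidden states.
alphas = [[1.0, 0.0], [0.0, 1.0], [0.6, 0.6]]
corner_a, corner_b = [1.0, 0.0], [0.0, 1.0]
midpoint = [0.5, 0.5]

v_mid = pwlc_value(midpoint, alphas)
v_avg = 0.5 * pwlc_value(corner_a, alphas) + 0.5 * pwlc_value(corner_b, alphas)
```

Convexity shows up as `v_mid <= v_avg`: the value at a mixture of two beliefs never exceeds the mixture of their values, which is what allows point-based and bound-based planning algorithms to exploit this structure.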

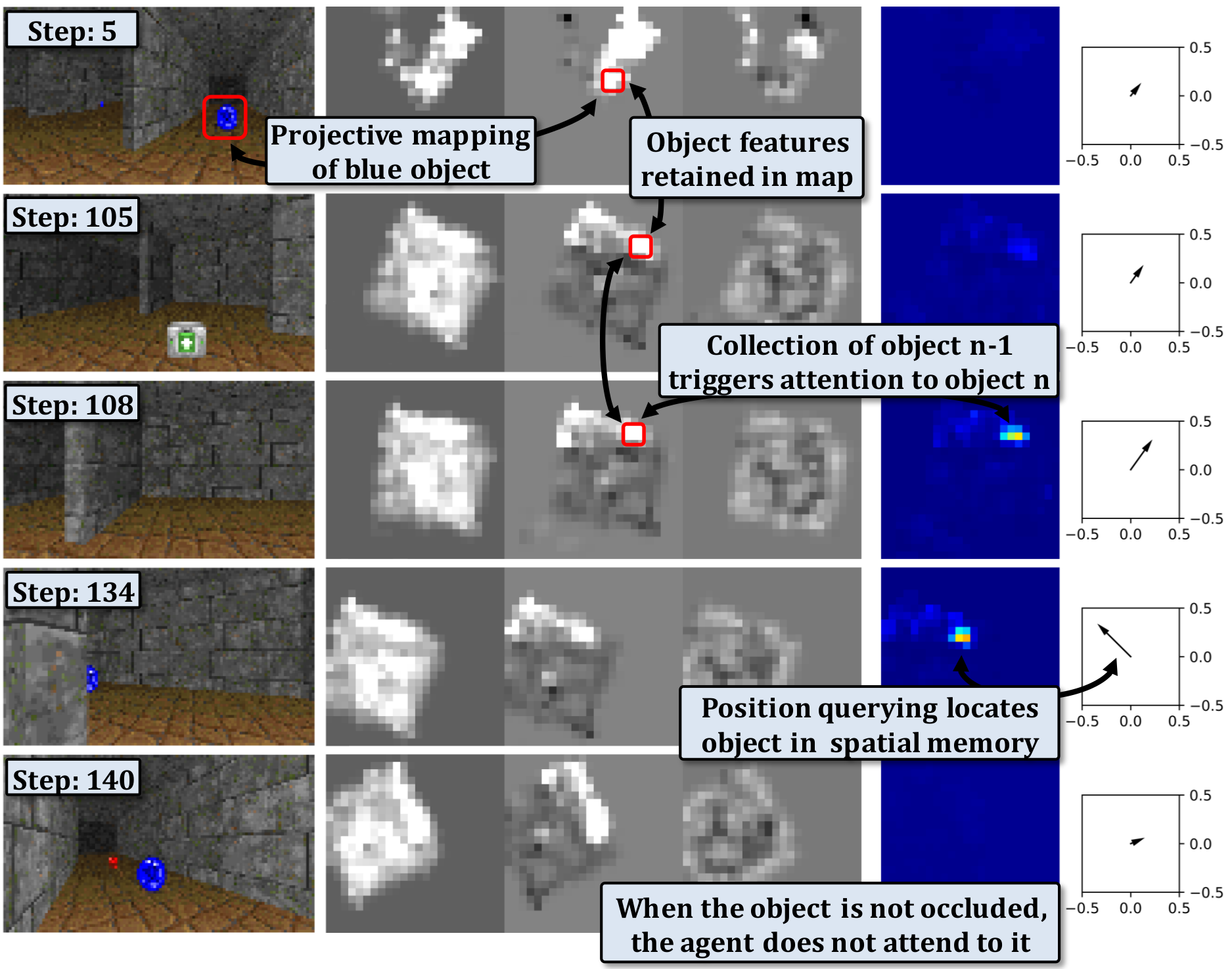

Creating agents capable of high-level reasoning based on structured memory was the main topic of C. Wolf during his Inria "delegation" in Chroma (2017-19). In the PhD thesis of E. Beeching we proposed EgoMap, a spatially structured metric neural memory architecture for deep reinforcement learning. Visualizations show that the agent learns to map relevant objects in its spatial memory without any supervision, purely from reward; see 52 (ICPR), 51 (ECCV) and 50 (ECML). In late 2019, C. Wolf obtained the AI Chair "REMEMBER", involving O. Simonin and J. Dibangoye from Chroma and L. Matignon from LIRIS lab/Univ Lyon 1. In this context, the PhD of P. Marza proposes to use an implicit representation of the environment to navigate towards targets. Neural fields are a recent category of approaches where the geometry, and possibly the semantics, of a 3D scene are encapsulated into the weights of a trained neural network.

As mentioned in Axis I, making appropriate decisions for safe robot/vehicle navigation is highly dependent on the results of the perception step. Indeed, the critical elements of a complex dynamic scene must first be extracted, classified and tracked in real time, by combining Bayesian and AI approaches to build "semantic grids" 82, 85, 67, 65. Two types of decision-making based on RL were developed for autonomous driving on highways and at road junctions respectively, as part of the doctoral theses of David Sierra-Gonzalez 115 and Mathieu Barbier 47.

A last activity concerns our involvement in the RoboCup11 competition. In 2017 we founded the LyonTech team12 to participate in the RoboCup@Home challenge within the Pepper league. We worked on learning and integrating high-level functionalities such as object and people recognition, mapping and planning in indoor environments (see 73). We reached 5th place in 2018, 3rd place in 2019 and 2nd place in 2021, and were awarded the best scientific paper at the RoboCup conference in 2019 109.

3. Offline constrained optimisation problems

In this theme, we study and design algorithms for solving NP-hard constrained optimization problems (COPs) such as vehicle routing problems or multi-agent path finding problems. In particular, we study approaches based on Constraint Programming (CP), which provides declarative languages for describing these problems by means of constraints, and generic algorithms for solving them by means of constraint propagation.

In the context of the European project BugWright2 and the PhD thesis of Xiao Peng (started in Nov. 2020), we study a new multi-agent path finding problem for tethered robots, which are attached by cables to anchor points. In 97, we show how to compute bounds for this problem by solving well-known assignment problems, and we introduce a CP model for solving the problem to optimality. In 92, we extend this approach to the case of non-point-sized robots.
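As an illustration of how an assignment problem can provide a bound, the following minimal sketch assumes a simplified setting that is not detailed in this report: each robot starts at its anchor, must reach a distinct target, and the objective is the sum of path lengths. Ignoring cable and collision constraints relaxes the problem, so the cost of the best one-to-one assignment is a lower bound on the true optimum. The function name, the straight-line distances and the brute-force enumeration are illustrative assumptions, suitable only for very small instances.

```python
from itertools import permutations
from math import hypot


def assignment_lower_bound(anchors, targets):
    """Return (cost, assignment) of the cheapest one-to-one anchor/target matching.

    Hypothetical helper: distances are straight lines and all interaction
    constraints between robots are ignored, so the cost is only a lower bound.
    """
    n = len(anchors)
    # dist[i][j] = straight-line distance from anchor i to target j
    dist = [[hypot(ax - tx, ay - ty) for (tx, ty) in targets]
            for (ax, ay) in anchors]
    best_cost, best_perm = float("inf"), None
    # Brute force over all n! assignments (illustration only)
    for perm in permutations(range(n)):
        cost = sum(dist[i][perm[i]] for i in range(n))
        if cost < best_cost:
            best_cost, best_perm = cost, perm
    return best_cost, best_perm


anchors = [(0.0, 0.0), (4.0, 0.0)]
targets = [(4.0, 3.0), (0.0, 3.0)]
bound, assignment = assignment_lower_bound(anchors, targets)
print(bound, assignment)
```

For larger instances, a polynomial-time assignment algorithm such as the Hungarian method would replace the enumeration.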

In the context of the PhD thesis of Romain Fontaine (started in Oct. 2020), we study vehicle routing problems with time-dependent cost functions (such that travel times between locations to visit may change during the day), and we design new solving approaches based on an anytime extension of A* 71, 41. We study the impact of time-window constraints on the satisfiability and the difficulty of routing problems in 106.
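To make the notion of time-dependent costs concrete, the sketch below computes an earliest arrival time when the travel time of each road depends on the departure time. It uses a plain time-dependent Dijkstra search, not the anytime A* extension mentioned above, and it assumes the FIFO property (leaving later never leads to arriving earlier). The graph, node names and travel-time functions are hypothetical.

```python
import heapq


def earliest_arrival(graph, source, target, departure):
    """Time-dependent Dijkstra.

    graph[u] is a list of (v, travel_time), where travel_time(t) is the
    travel time from u to v when leaving u at time t (FIFO assumed).
    """
    best = {source: departure}
    queue = [(departure, source)]
    while queue:
        t, u = heapq.heappop(queue)
        if u == target:
            return t
        if t > best.get(u, float("inf")):
            continue  # stale queue entry
        for v, travel_time in graph.get(u, []):
            arrival = t + travel_time(t)
            if arrival < best.get(v, float("inf")):
                best[v] = arrival
                heapq.heappush(queue, (arrival, v))
    return None  # target unreachable


# Hypothetical network (times in minutes): the road B -> customer
# becomes congested from t = 30 onwards.
graph = {
    "depot": [("A", lambda t: 10.0), ("B", lambda t: 5.0)],
    "A": [("customer", lambda t: 10.0)],
    "B": [("customer", lambda t: 8.0 if t < 30 else 25.0)],
}
early = earliest_arrival(graph, "depot", "customer", 0.0)
late = earliest_arrival(graph, "depot", "customer", 40.0)
print(early, late)
```

In this example the best route changes with the departure time: the route through B is faster before congestion, and the route through A is faster afterwards.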

Besides planning and routing problems which are at the core of Chroma topics, we also investigate the interest of using CP solvers for solving cryptanalysis problems 26.

Collaborations

- Social navigation: collaboration with Julie Dugdale and Dominique Vaufreydaz, Asso. Prof. at LIG Laboratory and with Prof. Philippe Martinet from Acentauri Inria team (common PhD students and projects: ANR VALET and ANR HIANIC).

- Multi-robot exploration: Prof. Cedric Pradalier from GeorgiaTech Metz/CNRS (France) leading the European project BugWright2 — Prof. Luca Schenato from University of Padova (Italy).

- Swarm of UAVs: Prof. Isabelle Guérin-Lassous from LIP lab/Inria DANTE team (common PhD students and projects: ANR CONCERTO, INRIA/DGA DynaFlock) — Research Director Isabelle Fantoni from CNRS/LS2N Lab in Nantes (common projects: ANR CONCERTO, Equipex TIRREX) — Dr. Melanie Schranz, senior researcher at LakeSide Lab, Austria (BugWright2 project).

- Machine Learning: strong collaborations with Christian Wolf, Naver Labs Europe & LIRIS lab/INSA13 (common PhD students and projects: ANR DELICIO, ANR AI Chair REMEMBER) and with Laetitia Matignon, Asso. Prof. at LIRIS lab/Univ Lyon 1 (common projects and Phd/Master students) — Akshat Kumar, Asso. Prof. at School of Computing and Information Systems (Singapore) — Abdallah Saffidine, Research Asso. at University of New South Wales (Sydney) — Prof. Stéphane Canu at INSA Rouen — Olivier Buffet, Vincent Thomas and François Charpillet, Inria researchers in LARSEN team, Nancy (common publications and project ANR PLASMA).

- Semantic grids for autonomous navigation: Collaboration with Toyota Motor Europe (TME), R&D center Zaventem (Brussels). R&D contracts, common publications and patents.

- Constrained optimization: Omar Rifki (Post-doc) and Thierry Garaix (Ass. Prof) at LIMOS Ecole des Mines de Saint Etienne — Pascal Lafourcade and François Delobel, Asso. Prof. at LIMOS Université Clermont-Ferrand.

4 Application domains

4.1 Introduction

Applications in Chroma are organized in two main domains: i) Future cars and transportation of persons and goods in cities, ii) Service robotics with ground and aerial robots. These domains correspond to the experimental fields initiated in Grenoble (eMotion team) and in Lyon (CITI lab). However, the scientific objectives described in the previous sections are intended to apply equally to these application domains. Even if our work on Bayesian Perception is today applied to the intelligent vehicle domain, we aim to generalize it to any mobile robot. The same remark applies to the work on multi-agent decision making. We aim to apply algorithms to any fleet of mobile robots (service robots, connected vehicles, UAVs). This has been the philosophy of the team since its creation.

4.2 Future cars and transportation systems

Thanks to the introduction of new sensor and ICT technologies in cars and in mass transportation systems, and also to the pressure of the economic and security requirements of our modern society, this application domain is quickly changing. Various technologies are currently developed by both research and industrial laboratories. These technologies are progressively reaching maturity, as witnessed by the results of large-scale experiments and challenges such as Google's car project and several announcements of future products made by the car industry. Moreover, the legal issue is starting to be addressed in the USA (see for instance the recent laws in Nevada and in California authorizing autonomous vehicles on roads) and in several other countries (including France).

In this context, we are interested in the development of ADAS 14 systems aimed at improving the comfort and safety of car users (e.g., ACC, emergency braking, danger warnings), of Fully Autonomous Driving functions for controlling the displacements of private or public vehicles in some particular driving situations and/or in some equipped areas (e.g., automated car parks or captive fleets in downtown centers or private sites), and of Intelligent Transport including optimization of existing transportation solutions.

For about 8 years, we have been collaborating with Toyota and with Renault-Nissan on these applications (bilateral contracts, PhD theses, shared patents), and more recently with Volvo Group (2016-20). We have also been strongly involved (since 2012) in the innovation project Perfect, now Security for autonomous vehicle, of the IRT 15 Nanoelec (transportation domain). Since 2016, we have been awarded two European projects (the "ENABLE" H2020 ECSEL project 16 and the "CPS4EU" H2020 ECSEL project) involving major European automotive constructors and car suppliers. In these projects, Chroma is focusing on the embedded perception component (models and algorithms, including the certification issue). Chroma (A. Spalanzani) also led the ANR Hianic project (2018-21), dealing with pedestrian-vehicle interaction for safe navigation.

In this context, Chroma has two experimental vehicles equipped with various sensors (a Toyota Lexus and a Renault Zoe, see Fig. 2), which are maintained by Inria-SED 17 and allow the team to carry out experiments in realistic traffic conditions (urban, road and highway environments). The Zoe car was automated in December 2016 through our collaboration with the team of P. Martinet (IRCCyN Lab, Nantes), which enables new experiments in the team.

4.3 Service robotics with ground and aerial robots

Service robotics is a quickly emerging application domain, and more and more industrial companies (e.g., IS-Robotics, Samsung, LG) are now commercializing service and intervention robotics products such as vacuum cleaner robots, drones for civil applications, entertainment robots, etc. One of the main challenges is to propose robots which are sufficiently robust and autonomous, easily usable by non-specialists, and marketed at a reasonable cost. We are involved in developing fleets of ground and aerial robots, see Fig. 2. Since 2016, we have been studying solutions for 3D observation/exploration of complex scenes or environments with a fleet of UAVs (e.g., BugWright2 H2020 project, Dynaflock Inria/DGA project) or ground robots (COMODYS FIL project 90).

A more recent challenge for the coming decade is to develop robotized systems for assisting elderly and/or disabled people. In the continuity of our work in the IPL PAL 18, we aim to propose smart technologies to assist electric wheelchair users in their displacements and also to control autonomous cars in human crowds (see Figure 6 for illustration). This concerns our recent "Hianic" ANR project. Another emerging application is humanoid robots helping humans at home or at work. In this context, we address the problem of NAMO (Navigation Among Movable Obstacles) in indoor environments (see the PhD of B. Renault, started in 2018). More generally, we address navigation and object search with humanoid robots such as Pepper, in the context of the RoboCup-Social League competition.

5 Social and environmental responsibility

5.1 Footprint of research activities

- Due to Covid, the vast majority of our trips for PhD defenses and for selection committees were replaced by videoconferences in 2021. While there is no question of systematizing the use of videoconferences, we changed our habits to offer a real alternative and seek a better compromise between environmental impact and user-friendliness.

- Some of our research topics involve high CPU usage. Some researchers in the team use the Grid5000 computing cluster, which guarantees efficient management of computing resources and facilitates the reproducibility of experiments. Some researchers in the team keep a log summarizing all the uses of computing servers in order to be able to make statistics on these uses and assess their environmental footprint more finely.

5.2 Impact of research results

- Romain Fontaine's thesis aims to develop algorithms for the efficient optimization of delivery rounds in the city. Our work is motivated by taking into account environmental constraints, set out in the report "Assurer le fret dans un monde fini" from The Shift Project (theshiftproject.org/wp-content/uploads/2022/03/Fret_rapport-final_ShiftProject_PTEF.pdf)

- Léon Fauste's thesis focuses on the design of a decision support tool to choose the scale when relocating production activities (work in collaboration with the Inria STEEP team). This resulted in a publication in collaboration with STEEP at ROADEF 32 and the joint organization of a workshop on the theme "Does techno-solutionism have a future?" during the Archipel conference, which was organized in June 2022 (archipel.inria.fr/).

- Christine Solnon has co-organized in Lyon (with Sylvain Bouveret, Nadia Brauner, Pierre Fouilhoux, Alexandre Marié, and Michael Poss), a one day workshop on environmental and societal challenges of decision making, on the 17th of November (sponsored by GDR RO and GDR IA).

- Chroma will organize in 2024 the national Archipel conference (archipel.inria.fr) on anthropocene challenges.

- Some of us are strongly involved in the development of INSA Lyon training courses to integrate DDRS issues (Christine Solnon is a member of the CoPil and the GTT on environmental and social digital issues). This participation feeds our reflections on the subject.

6 Highlights of the year

6.1 Awards

- Best Paper award IEEE ICARCV 2022 (17th Int. Conf. on Control, Automation, Robotics and Vision): Rabbia Asghar, Lukas Rummelhard, Anne Spalanzani and Christian Laugier, "Allo-Centric Occupancy Grid Prediction for Urban Traffic Scene Using Video Prediction Networks".

- Best Paper award IEEE IV 2022 (IEEE Intelligent Vehicles Symposium): Thomas Genevois, Jean-Baptiste Horel, Alessandro Renzaglia, and Christian Laugier, "Augmented Reality on LiDAR data: Going beyond Vehicle-in-the-Loop for Automotive Software Validation".

- Manon Predhumeau obtained the award of the Best PhD in computer science 2022 of Grenoble-Alpes-University.

6.2 New Projects

- ANR JCJC "AVENUE" coordinated by Alessandro Renzaglia (250 K€)

- ANR "MAMUT", Chroma is partner of the project, Christine Solnon coordinates our participation (128 K€ for Chroma partner, the total funding is 497 K€)

- ANR Annapolis, Chroma is partner of the project, Anne Spalanzani coordinates our participation (163 K€ for Chroma partner, the total funding is 831 K€)

- PEPR Agroécologie et numérique: Chroma is partner of the NINSAR project (New ItiNerarieS for Agroecology using cooperative Robots, 60 months). Olivier Simonin coordinates our participation (168 K€ for Chroma partner, the total funding is 2.16 M€)

6.3 Community activities

- Christian Laugier is appointed Editor-in-Chief of the IROS Conference Paper Review Board (CPRB) for the period 2023-25 (IEEE/RSJ International Conference on Intelligent Robots and Systems).

- Christine Solnon has written the endorsement quote on the cover of the last Volume of the Art of Computer Programming (Volume 4B) written by Donald E. Knuth

- Olivier Simonin was a member of the international evaluation committee of 3IT Institute, University of Sherbrooke, Canada (incl. a one-week visit in September).

6.4 Advancement in grade / career

- Anne Spalanzani is promoted to the grade of Professor (PR2)

- Olivier Simonin is promoted to the grade of Professor PREX1

7 New software and platforms

7.1 New software

7.1.1 CMCDOT

-

Keywords:

Robotics, Environment perception

-

Functional Description:

CMCDOT is a Bayesian filtering system for dynamic occupancy grids. It allows the parallel estimation of occupancy probabilities for each cell of a grid, the inference of velocities, the prediction of the risk of collision, and the association of cells belonging to the same dynamic object. It is the latest generation of a suite of Bayesian filtering methods developed in the Inria eMotion team and then in the Inria Chroma team (BOF, HSBOF, ...). It integrates the management of hybrid sampling methods (classical occupancy grids for static parts, particle sets for dynamic parts) into a unified Bayesian programming formalism, while incorporating elements resembling the Dempster-Shafer theory (an "unknown" state, allowing computing resources to be focused). It also offers a projection of the estimated scene into the near future, to identify potential collisions with the ego-vehicle or any other element of the environment, as well as a very low-cost pre-segmentation of coherent dynamic spaces (taking velocities into account). It takes as input instantaneous occupancy grids generated by sensor models for different sources. The system is composed of a ROS package, which manages I/O connectivity and encapsulates the core of the application, embedded and optimized on Nvidia GPUs (CUDA), allowing real-time analysis of the immediate environment on embedded boards (Tegra X1, X2). ROS (Robot Operating System) is a set of open-source tools to develop software for robotics. Developed in an automotive setting, these techniques can be exploited in all areas of mobile robotics, and are particularly suited to the management of highly dynamic and uncertain environments (e.g., urban scenarios with pedestrians, cyclists, cars, buses, etc.).

-

Authors:

Amaury Nègre, Lukas Rummelhard, Jean-Alix David, Christian Laugier

-

Contact:

Christian Laugier

-

Partners:

CEA, CNRS
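The per-cell occupancy estimation mentioned in the CMCDOT description can be illustrated with the classical binary Bayes filter for static occupancy grids. This is a deliberately reduced sketch, not the CMCDOT algorithm: it ignores velocities, particles and the "unknown" state, and assumes a uniform 0.5 base prior and probabilities strictly between 0 and 1. The function names are hypothetical. Since each cell is updated independently of the others, the update lends itself to parallel execution, here simply written as nested list comprehensions.

```python
import math


def logit(p):
    """Log-odds of a probability p, with 0 < p < 1."""
    return math.log(p / (1.0 - p))


def update_cell(prior, measurement):
    """Fuse the prior occupancy of one cell with the occupancy probability
    given by a sensor model (binary Bayes filter, uniform 0.5 base prior)."""
    log_odds = logit(prior) + logit(measurement)
    return 1.0 / (1.0 + math.exp(-log_odds))


def update_grid(grid, observation):
    """Apply the cell update independently to every cell of the grid."""
    return [[update_cell(p, z) for p, z in zip(row, obs_row)]
            for row, obs_row in zip(grid, observation)]


grid = [[0.5, 0.5], [0.7, 0.2]]
observation = [[0.7, 0.5], [0.7, 0.5]]  # 0.5 means "no information"
posterior = update_grid(grid, observation)
print(posterior)
```

Two consistent observations at 0.7 reinforce each other (about 0.84), while an observation of 0.5 leaves the prior unchanged.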

7.1.2 Ground Elevation and Occupancy Grid Estimator (GEOG - Estimator)

-

Keywords:

Robotics, Environment perception

-

Functional Description:

GEOG-Estimator is a system for the joint estimation of the shape of the ground, in the form of a Bayesian network of constrained elevation nodes, and of the ground-obstacle classification of a pointcloud. Starting from an unclassified 3D pointcloud, it consists of a set of expectation-maximization methods computed in parallel on the network of elevation nodes, integrating the constraints of spatial continuity as well as the influence of 3D points, classified as ground or obstacles. Once the ground model is generated, the system can then construct an occupancy grid, taking into account the classification of the 3D points and the actual height of these impacts. Mainly used with lidars (Velodyne64, Quanergy M8, IBEO Lux), the approach can be generalized to any type of sensor providing 3D pointclouds. In addition, in the case of lidars, free space information between the source and the 3D point can be integrated into the construction of the grid, as well as the height at which the laser passes through the area (taking into account the height of the laser in the sensor model). The system can be applied in all areas of mobile robotics, and is particularly suitable for unknown environments. GEOG-Estimator was originally developed to allow optimal integration of 3D sensors in systems using 2D occupancy grids, taking into account the orientation of the sensors and arbitrary ground shapes. The generated ground model can be used directly, whether for mapping or as a pre-calculation step for methods of obstacle recognition or classification. Designed to be efficient (real-time) in the context of embedded applications, the entire system is implemented on Nvidia graphics cards (in CUDA), and optimized for Tegra X2 embedded boards. To ease interconnections with the sensor outputs and other perception modules, the system is implemented using ROS (Robot Operating System), a set of open-source tools for robotics.

-

Authors:

Amaury Nègre, Lukas Rummelhard, Jean-Alix David, Christian Laugier

-

Contact:

Christian Laugier

7.1.3 Zoe Simulation

-

Name:

Simulation of INRIA's Renault Zoe in Gazebo environment

-

Keyword:

Simulation

-

Functional Description:

This simulation represents the Renault Zoe vehicle considering the realistic physical phenomena (friction, sliding, inertia, ...). The simulated vehicle embeds sensors similar to the ones of the actual vehicle. They provide measurement data under the same format. Moreover the software input/output are identical to the vehicle's. Therefore any program executed on the vehicle can be used with the simulation and reciprocally.

-

Authors:

Christian Laugier, Nicolas Turro, Thomas Genevois

-

Contact:

Christian Laugier

7.1.4 Hybrid-state E*

-

Name:

Path planning with Hybrid-state E*

-

Keywords:

Planning, Robotics

-

Functional Description:

Considering a vehicle with the kinematic constraints of a car and an environment which is represented by a probabilistic occupancy grid, this software produces a path from the initial position of the vehicle to its destination. The computed path may include, if necessary, complex maneuvers. However, the suggested path is often the simplest and the shortest.

This software is designed to take advantage of Bayesian occupancy grids such as the ones computed by the CMCDOT software.


-

Authors:

Christian Laugier, Thomas Genevois

-

Contact:

Christian Laugier

-

Partner:

CEA

7.1.5 Pedsim_ros_AV

-

Name:

Pedsim_ros_AV

-

Keywords:

Simulator, Multi-agent, Crowd simulation, Autonomous Cars, Pedestrian

-

Scientific Description:

These ROS packages are useful to support robotic developments that require the simulation of pedestrians and an autonomous vehicle in various shared spaces scenarios. They allow: 1. in simulation, to pre-test autonomous vehicle navigation algorithms in various crowd scenarios, 2. in real crowds, to help online prediction of pedestrian trajectories around the autonomous vehicle.

Individual pedestrian model in shared space (perception, distraction, personal space, pedestrians standing, trip purpose). Model of pedestrians in social groups (couples, friends, colleagues, family). Autonomous car model. Pedestrian-autonomous car interaction model. Definition of shared space scenarios: 3 environments (business zone, campus, city centre) and 8 crowd configurations.

-

Functional Description:

Simulation of pedestrians and an autonomous vehicle in various shared space scenarios. Adaptation of the original Pedsim_ros model to simulate heterogeneous crowds in shared spaces (individuals, social groups, etc.). The car model is integrated into the simulator and the interactions between pedestrians and the autonomous vehicle are modeled. The autonomous vehicle can be controlled from inside the simulation or from outside the simulator by ROS commands.


-

Contact:

Manon Predhumeau

-

Participants:

Manon Predhumeau, Anne Spalanzani, Julie Dugdale, Lyuba Mancheva

-

Partner:

LIG

7.1.6 S-NAMO-SIM

-

Name:

S-NAMO Simulator

-

Keywords:

Simulation, Navigation, Robotics, Planning

-

Functional Description:

2D Simulator of NAMO algorithms (Navigation Among Movable Obstacles) ROS compatible

-

Release Contributions:

Creation

-

Author:

Benoit Renault

-

Contact:

Benoit Renault

7.1.7 SimuDronesGR

-

Name:

Simulation of UAV fleets with Gazebo/ROS

-

Keywords:

Robotics, Simulation

-

Functional Description:

The simulator includes the following functionality:

1) Simulation of the mechanical behavior of an Unmanned Aerial Vehicle:

- Modeling of the body's aerodynamics with lift, drag and moment

- Modeling of the rotors' aerodynamics using the expressions of forces and moments from Philippe Martin's and Erwan Salaün's 2010 IEEE Conference on Robotics and Automation paper "The True Role of Accelerometer Feedback in Quadrotor Control"

2) Ground-truth information:

- Position in the East-North-Up reference frame

- Linear velocity in the East-North-Up and Front-Left-Up reference frames

- Linear acceleration in the East-North-Up and Front-Left-Up reference frames

- Orientation from the East-North-Up reference frame to the Front-Left-Up reference frame (quaternions)

- Angular velocity of the Front-Left-Up reference frame, expressed in the Front-Left-Up reference frame

3) Simulation of the following sensors:

- Inertial Measurement Unit with 9DoF (Accelerometer + Gyroscope + Orientation)

- Barometer using an ISA model for the troposphere (valid up to 11 km above Mean Sea Level)

- Magnetometer with the Earth's magnetic field declination

- GPS antenna with a geodesic map projection

-

Release Contributions:

Initial version

-

Author:

Vincent Le Doze

-

Contact:

Vincent Le Doze

-

Partner:

Insa de Lyon
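The barometer model mentioned in the description of SimuDronesGR relies on the International Standard Atmosphere (ISA). As an illustration, the sketch below implements the standard ISA pressure-altitude relation for the troposphere, $P = P_0 (1 - L h / T_0)^{g / (R L)}$, and its inverse. It is not taken from the simulator's source code: the function names are hypothetical, the constants are the usual ISA sea-level values, and sensor noise is not modeled.

```python
P0 = 101325.0     # sea-level standard pressure [Pa]
T0 = 288.15       # sea-level standard temperature [K]
LAPSE = 0.0065    # temperature lapse rate in the troposphere [K/m]
G = 9.80665       # standard gravity [m/s^2]
R_AIR = 287.05287 # specific gas constant of dry air [J/(kg K)]
EXPONENT = G / (R_AIR * LAPSE)  # about 5.2559
TROPOPAUSE = 11000.0  # upper limit of validity [m above mean sea level]


def pressure_at(altitude_m):
    """ISA static pressure [Pa] at a given altitude in the troposphere."""
    if altitude_m > TROPOPAUSE:
        raise ValueError("troposphere model only valid up to 11 km")
    return P0 * (1.0 - LAPSE * altitude_m / T0) ** EXPONENT


def altitude_from_pressure(pressure_pa):
    """Inverse relation: altitude [m] that a barometer would report."""
    return (T0 / LAPSE) * (1.0 - (pressure_pa / P0) ** (1.0 / EXPONENT))


p_1500 = pressure_at(1500.0)
h_back = altitude_from_pressure(p_1500)
print(p_1500, h_back)
```

A simulated barometer would typically evaluate `pressure_at` on the ground-truth altitude, and a flight controller would recover the altitude with `altitude_from_pressure`.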

7.1.8 spank

-

Name:

Swarm Protocol And Navigation Kontrol

-

Keyword:

Protocols

-

Functional Description:

Communication and distance measurement in a UAV swarm


-

Contact:

Stéphane d'Alu

-

Participant:

Stéphane d'Alu

7.2 New platforms

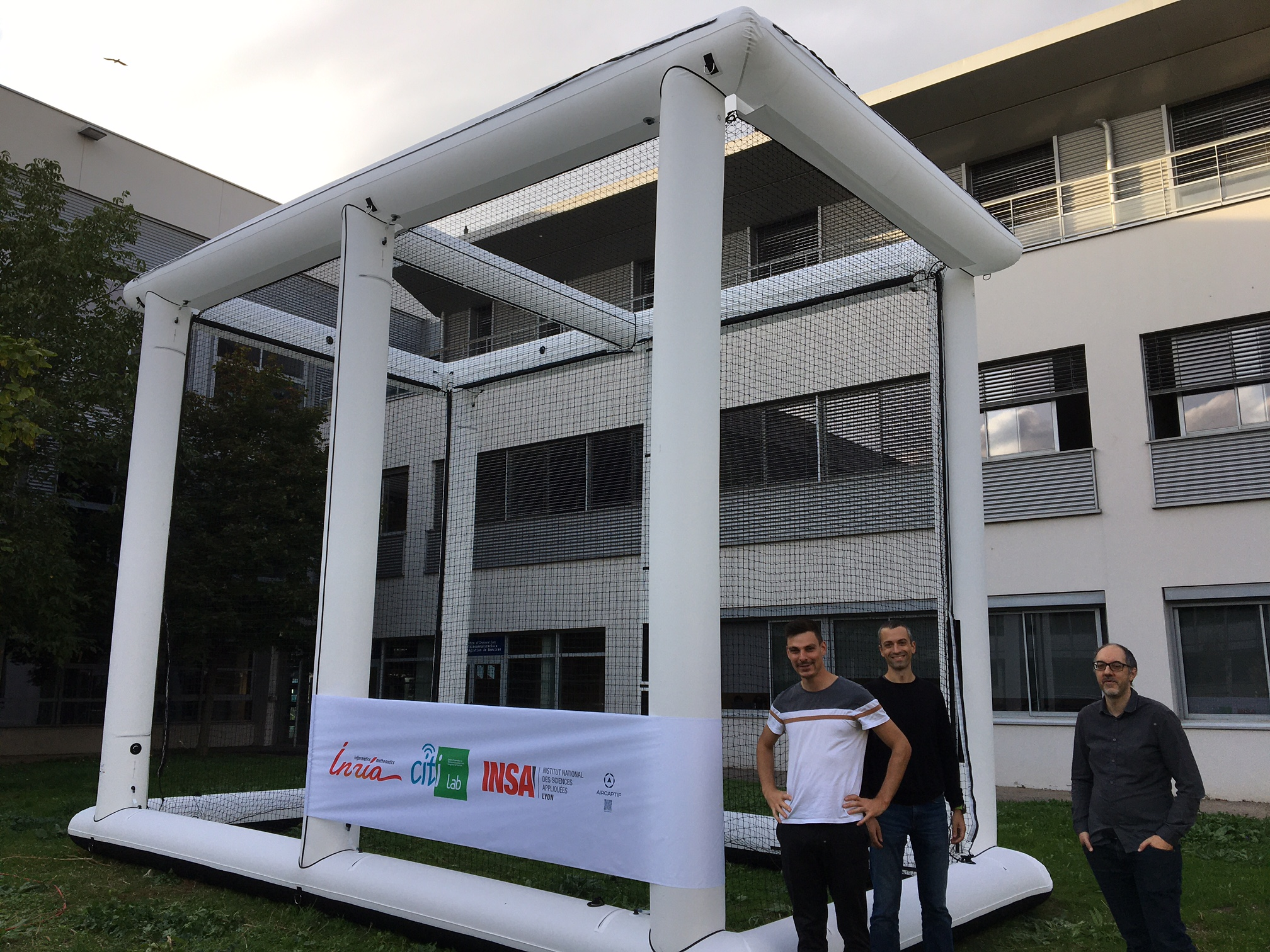

7.2.1 Chroma Aerial Robots platform

Participants: Olivier Simonin, Alessandro Renzaglia, Johan Faure.

This platform is composed of :

- Four quadrotor PX4 Vision UAVs (Unmanned Aerial Vehicles), acquired in 2021 and 2022. This platform is funded and used in the projects "Dynaflock" (Inria-DGA) and ANR "CONCERTO" (the team also owns 5 Parrot Bebop UAVs).

- Two outdoor inflatable aviaries of 6m(L) x 4m(l) x 5m(H) each.

- Two indoor inflatable aviaries of 5m(L) x 3m(l) x 2.5m(H) each.

8 New results

8.1 Bayesian Perception

Participants: Christian Laugier, Lukas Rummelhard, Andres Gonzalez Moreno, Jerome Lussereau, Thomas Genevois, Alessandro Renzaglia, Nicolas Turro, Amrita Suresh, Rabbia Asghar, Jean-Baptiste Horel.

Recognized as one of the core technologies developed within the team over the years (see related sections in previous activity report of Chroma, and previously e-Motion reports), the CMCDOT framework is a generic Bayesian Perception framework, designed to estimate a dense representation of dynamic environments and the associated risks of collision, by fusing and filtering multi-sensor data. This whole perception system has been developed, implemented and tested on embedded devices, incorporating over time new key modules. In 2022, this framework, and the corresponding software, has continued to be the core of many important industrial partnerships and academic contributions, and to be the subject of important developments, both in terms of research and engineering.

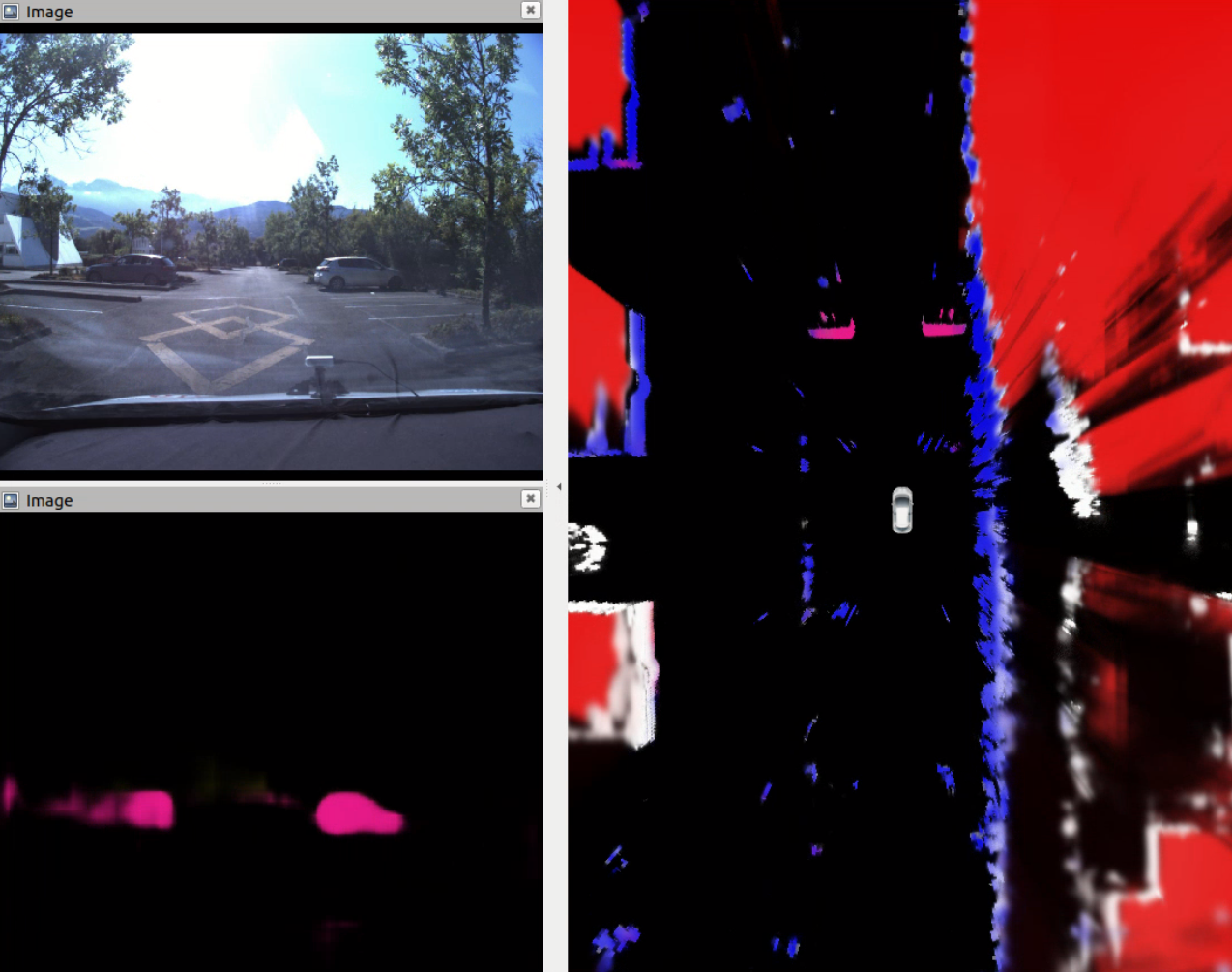

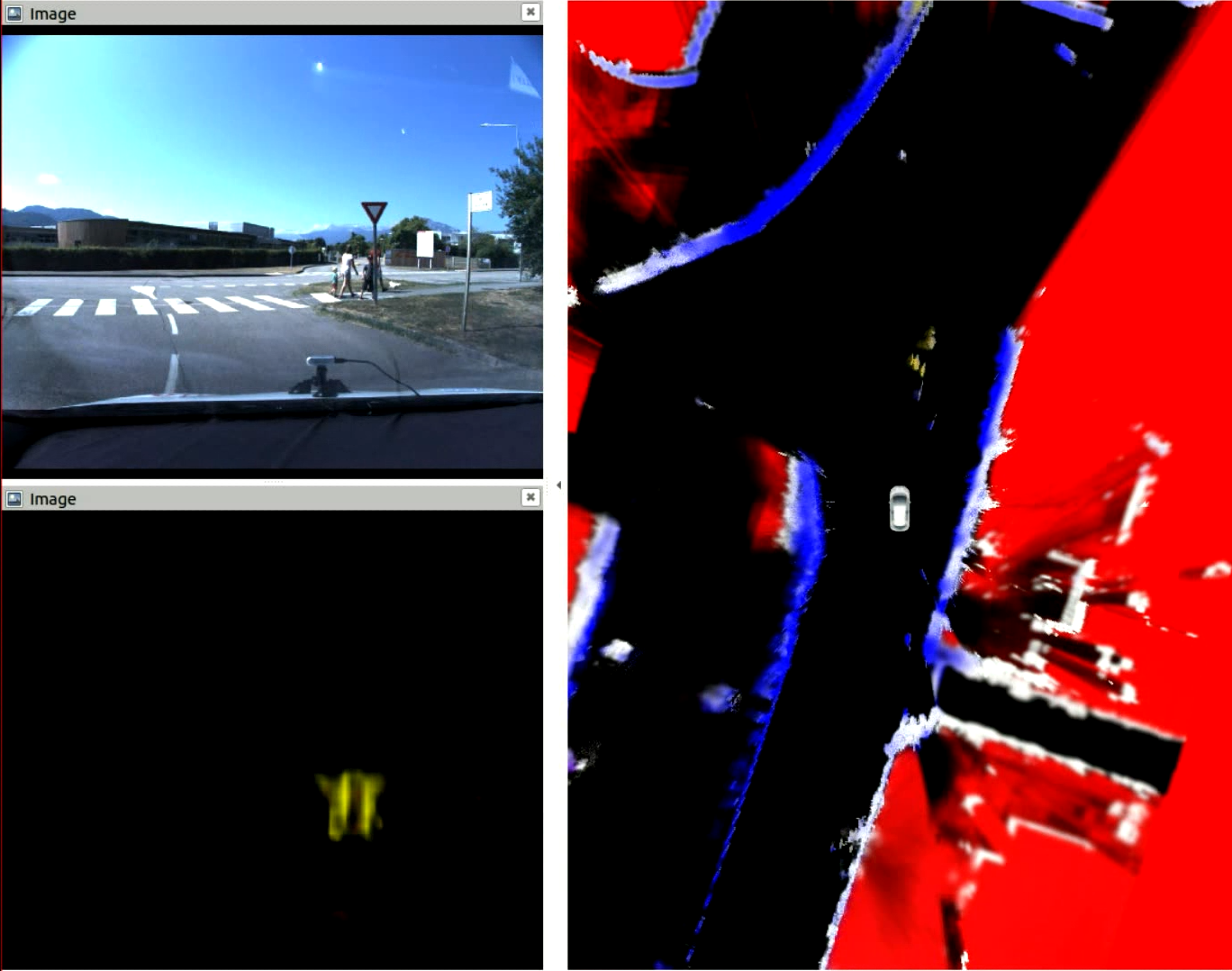

8.1.1 Extension of the CMCDOT framework to 3D Semantic Occupancy Grids

Participants: Andres Gonzalez Moreno, Lukas Rummelhard, Christian Laugier.

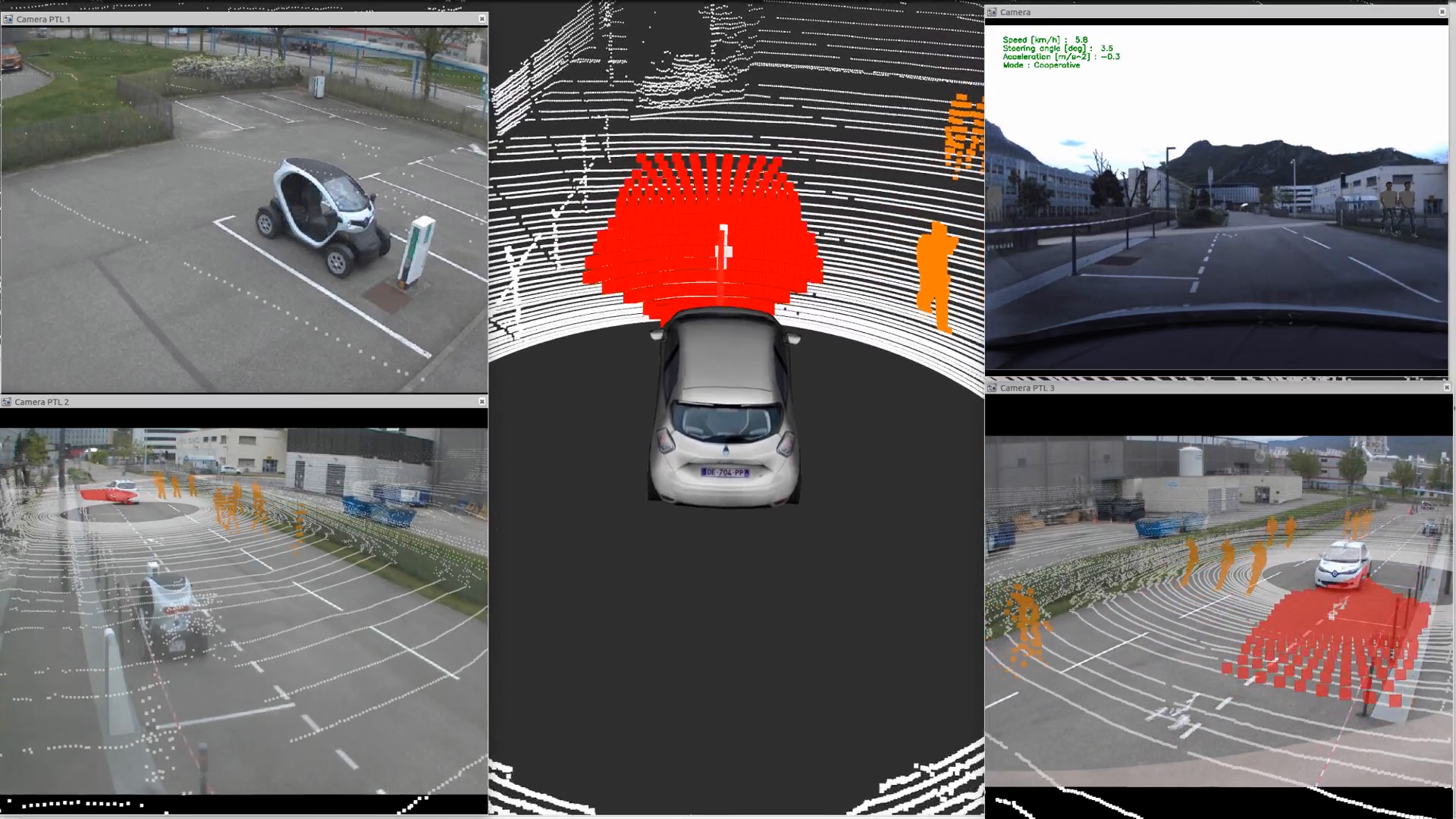

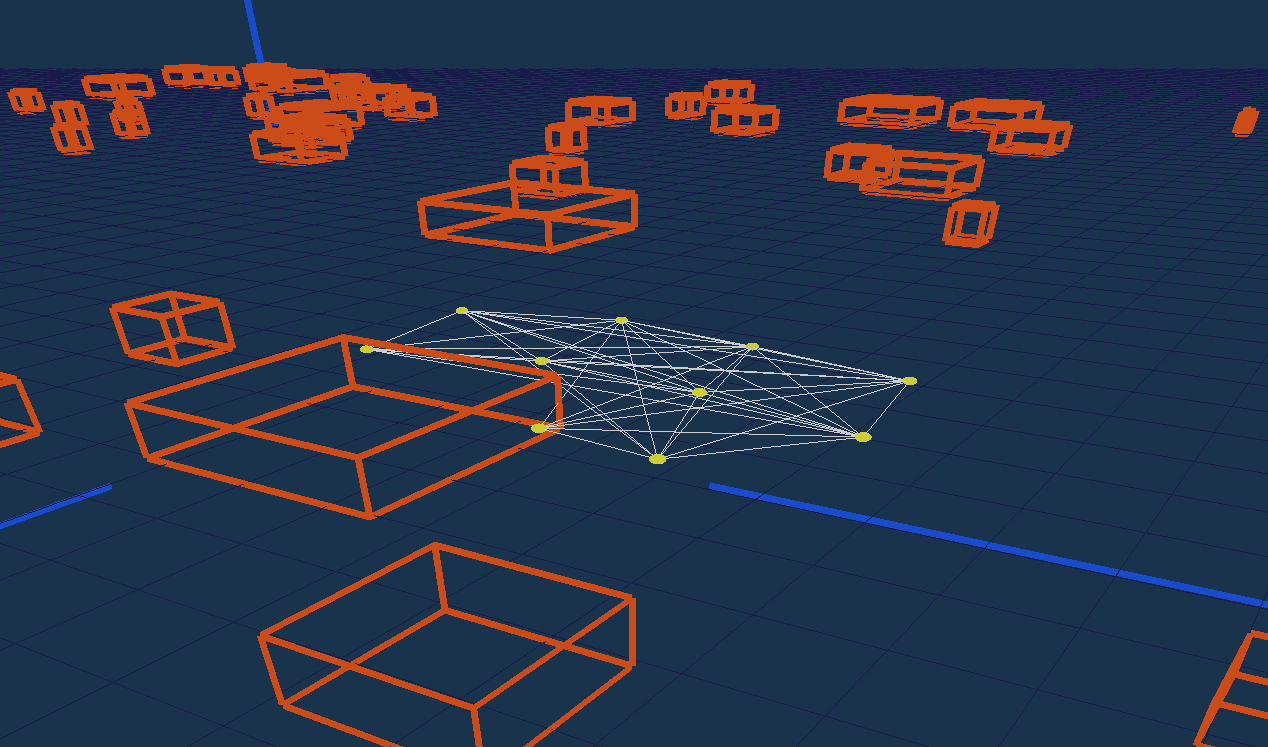

Following the work done in 2021, the extension of the CMCDOT framework to 3D semantic states, instead of 2D occupancy-only grids, has continued. Such an evolution includes the reorganization and software adaptation of the architecture and its various components, the introduction of modules for generating semantic occupancy grids based on artificial intelligence, the extension of grid fusion functions to 3D and semantics, as well as software and algorithmic developments in grid filtering 27. Now integrated up to the filtering of estimated states, the system is able to perceive semantic states (pedestrian, car, etc.) using cameras, project them onto occupancy grids, merge them with information from other sensors (lidar, ultrasound, etc.), and filter them over time. This opens up many possibilities for future developments to improve the perception, dynamic estimations and predictions, or the quality, safety and acceptability of the navigation of autonomous systems (see illustrations in Fig. 3).

8.1.2 Cooperative Perception with Dynamic Occupancy Grids

Participants: Andres Gonzalez Moreno, Rabbia Asghar, Jerome Lussereau, Nicolas Turro, Lukas Rummelhard, Christian Laugier.

In order to expand the coverage and overall perception capabilities of an embedded perception system, data exchange between sensing agents is a valuable solution. While occupancy grids are a well-suited tool to fuse data from heterogeneous sources with different viewpoints, the sheer size of these structures makes their efficient communication a real challenge. On an experimental platform consisting of an infrastructure unit equipped with 2 lidars, an Nvidia TX2 card executing the CMCDOT framework and V2X capabilities, and a connected autonomous Zoe platform (with its own sensors and CMCDOT framework running), two main methods of grid transmission from the infrastructure to the autonomous vehicle have been developed and tested. Following the work done in 2021, a new communication framework has been developed, which extracts objects from the occupancy grid of the infrastructure, sends object information to the vehicle using V2X communication, then reconstructs the corresponding occupancy grid based on these objects (taking into account added uncertainties) and fuses it with local perception. The communication software has been implemented based on the ETSI TR 103 562 protocol from the European Telecommunications Standards Institute, Intelligent Transport Systems.
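The object-based transmission described above can be sketched as follows. This is only an illustrative reduction under several assumptions that do not come from the report: objects are 4-connected groups of cells above an occupancy threshold, each object is summarized by its bounding box, cells outside any received box are treated as unknown (0.5), and the added uncertainty is represented by rebuilding occupied cells at a probability below 1. The function names and parameter values are hypothetical, and no actual message format is modeled.

```python
def extract_objects(grid, threshold=0.7):
    """Group cells with occupancy >= threshold into 4-connected components
    and return one bounding box (r0, c0, r1, c1) per component."""
    rows, cols = len(grid), len(grid[0])
    seen = set()
    boxes = []
    for r in range(rows):
        for c in range(cols):
            if grid[r][c] < threshold or (r, c) in seen:
                continue
            stack, box = [(r, c)], [r, c, r, c]
            seen.add((r, c))
            while stack:  # depth-first traversal of the component
                y, x = stack.pop()
                box = [min(box[0], y), min(box[1], x),
                       max(box[2], y), max(box[3], x)]
                for ny, nx in ((y + 1, x), (y - 1, x), (y, x + 1), (y, x - 1)):
                    if (0 <= ny < rows and 0 <= nx < cols
                            and (ny, nx) not in seen
                            and grid[ny][nx] >= threshold):
                        seen.add((ny, nx))
                        stack.append((ny, nx))
            boxes.append(tuple(box))
    return boxes


def rebuild_grid(boxes, rows, cols, occupied=0.8, unknown=0.5):
    """Rasterize received boxes into a grid; `occupied` < 1 stands in for
    the uncertainty added by the object abstraction."""
    grid = [[unknown] * cols for _ in range(rows)]
    for r0, c0, r1, c1 in boxes:
        for r in range(r0, r1 + 1):
            for c in range(c0, c1 + 1):
                grid[r][c] = occupied
    return grid


infrastructure_grid = [
    [0.1, 0.9, 0.9, 0.1],
    [0.1, 0.1, 0.9, 0.1],
    [0.8, 0.1, 0.1, 0.1],
]
boxes = extract_objects(infrastructure_grid)   # what would be transmitted
received = rebuild_grid(boxes, rows=3, cols=4)  # what the vehicle rebuilds
print(boxes)
```

Only a few integers per object are exchanged instead of the full grid, at the price of an over-approximation: the bounding box of the first object also covers a cell that was free in the original grid.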

8.1.3 Augmented Reality and realistic pedestrian simulation tools for advanced automotive software testing

Participants: Thomas Genevois, Andres Gonzalez Moreno, Nicolas Turro, Lukas Rummelhard, Christian Laugier.

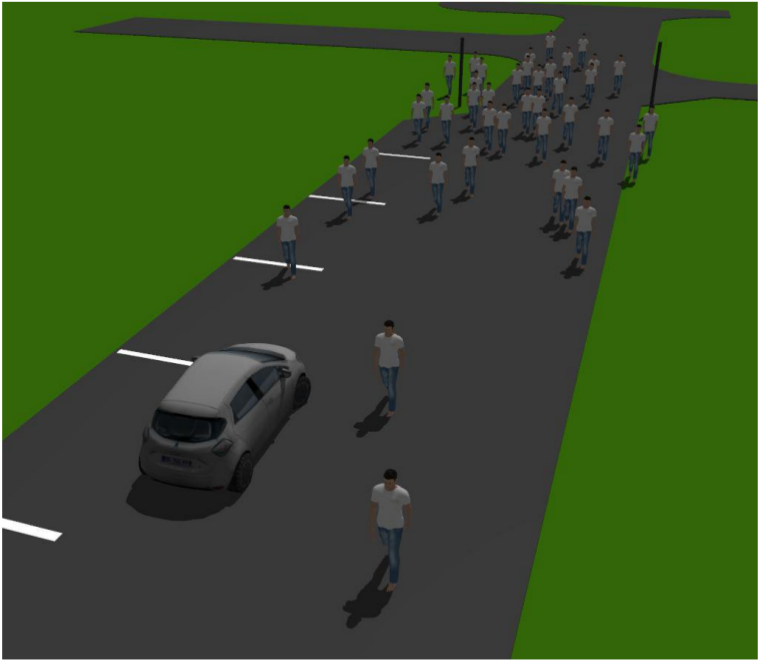

In 2021, CHROMA developed an augmented reality system operating on sensor data from the experimental Zoe. During tests of the vehicle perception and navigation software, this system makes it possible to realistically introduce virtual elements into the real environment of the vehicle, and into its perception. It allows the simulation tools associated with the vehicle to be validated by integrating them into an augmented reality scene and comparing them to their real equivalents. It also makes it possible to envisage much richer test scenes, with many pedestrians and vehicles, without any risk of collision, while guaranteeing the repeatability of the tests. In 2022, this method and the results on the experimental vehicle were published 19 at the "IEEE Intelligent Vehicles Symposium (IV22)" and received the event's Best Paper award. Several works were also carried out to make the best use of this augmented reality technology.

In order to simulate realistic pedestrians, the pedestrian crowd simulator SPACiSS, developed by the team, was interfaced with the augmented reality tool on the experimental vehicle (see Fig. 4). SPACiSS is a state-of-the-art simulator, dedicated to the simulation of pedestrians and an autonomous vehicle in a shared space, based on agent-based modeling for pedestrian trajectories around an autonomous vehicle 14. This simulator is particularly distinguished by its ability to generate realistic pedestrian behavior in social interactions between pedestrians and in interactions with a vehicle.

A clear visualization of the augmented reality scenes was needed, to interpret and present the experimental results generated with this tool. Indeed, the augmented reality tool intervenes in real time on the data from the vehicle sensors (LiDAR and camera) but does not generate a clear visualization of the experiment from a point of view external to the car, making it difficult to understand the performance of the navigation software during collision avoidance tests with multiple targets. In order to generate an external view of the augmented scene, infrastructure cameras have been connected, calibrated, and can now be used to display real-time views of the augmented reality scene from outside the vehicle.

Thanks to these developments, the experimental vehicle and environment now constitute a state-of-the-art experimental platform for augmented reality testing, allowing for realistic autonomous vehicle navigation tests in densely populated urban areas (see Fig. 5).

Presentation of the tools used to analyze the behavior of the experimental vehicle during an augmented reality test.

8.1.4 Development of an industrial AIV, light perception and navigation systems

Participants: Thomas Genevois, Amrita Suresh, Lukas Rummelhard, Christian Laugier.

CHROMA has been working with the company AKEOPLUS, in a new use case, in the scope of the regional R&D project Booster MoovIT, to continue the development of the embedded version of perception and navigation software. This project involves developing a versatile AIV (Autonomous Intelligent Vehicle) dedicated to the logistics of warehouses and industrial workshops. While AKEOPLUS develops the mechanics, the electronics, the basic automatisms of the robot as well as the supervision system and the user interface, CHROMA develops the functions of perception, path planning and local navigation. The jointly developed AIV should be tested in the workshop of a third-party company, Spanninga. If this specific use case implies new developments, these will also benefit the other applications of the software suite built around the CMCDOT. Such developments included in 2022 an evolution of the ground estimation software, allowing for a refined estimation of the ground slope, and the generation of an accessibility map according to this slope for a robot, then integrated in the perception and navigation framework. This evolution has been experimentally validated with the project sensors.

8.1.5 Validation of AI-based algorithms in autonomous vehicles

Participants: Jean-Baptiste Horel, Alessandro Renzaglia, Pierrick Koch, Anshul Paigwar, Radu Mateescu [Convecs], Wendelin Serwe [Convecs], Christian Laugier.

In recent years, there has been an increasing demand for regulating and validating intelligent vehicle systems to ensure their correct functioning and build public trust in their use. Yet, the analysis of safety and reliability poses a significant challenge. More and more solutions for autonomous driving are based on complex AI-based algorithms whose validation is particularly challenging to achieve. An important part of our work has recently been devoted to tackling this problem, finding new suitable approaches to validate probabilistic algorithms for perception and decision making in autonomous driving, and investigating how simulations and experiments in controlled environments can help to solve this challenge. This activity, started with our participation in the European project Enable-S3 (2016-2019), is now continuing with the PRISSMA project, started in April 2021, where we participate in collaboration with the Inria Convecs team. This project, funded by the French government in the framework of the “Grand Défi: Sécuriser, certifier et fiabiliser les systèmes fondés sur l’intelligence artificielle”, brings together several companies and public institutes in France and tackles the challenge of the validation and certification of artificial intelligence based solutions for autonomous mobility.

Within this context, our main work in 2022 has focused on the automatic generation of large numbers of relevant critical scenarios for autonomous driving simulators 21. This approach is based on the generation of behavioral conformance tests from a formal model (specifying the ground truth configuration with the range of vehicle behaviors) and a test purpose (specifying the critical feature to focus on). The obtained abstract test cases cover, by construction, all possible executions exercising a given feature, and can be automatically translated into the inputs of autonomous driving simulators. We illustrate our approach by generating thousands of behavior trees for the CARLA simulator for several realistic configurations. An extension of this work, where we model check the traces of the simulation runs, combining the advantages of temporal logic verification and quantitative analyses to validate a probabilistic collision risk estimation, has recently been accepted for publication in the Journal of Intelligent and Robotic Systems 72.

8.2 Situation Awareness & Decision-making for Autonomous Vehicles

Participants: Ozgur Erkent, David Sierra-González, Anshul Paigwar, Christian Laugier, Manuel Alejandro Diaz-Zapata, Alessandro Renzaglia, Jilles Dibangoye, Luiz Serafim-Guardini, Anne Spalanzani, Wenqian Liu, Abhishek Tomy, Khushdeep Singh Mann, Rabbia Asghar, Lukas Rummelhard, Gustavo Salazar-Gomez, Olivier Simonin.

In this section, we present all the novel results in the domains of perception, motion prediction and decision-making for autonomous vehicles.

8.2.1 Situation Understanding and Motion Forecasting

Participants: David Sierra-González, Anshul Paigwar, Ozgur Erkent, Christian Laugier.

Forecasting the motion of surrounding traffic is one of the key challenges in the quest to achieve safe autonomous driving technology. Current state-of-the-art deep forecasting architectures are capable of producing impressive results. However, in many cases, they also output completely unreasonable trajectories, making them unsuitable for deployment. In 2022, we developed a deep forecasting architecture that leverages the map lane centerlines available in recent datasets to predict sensible trajectories; that is, trajectories that conform to the road layout, agree with the observed dynamics of the target, and react to the presence of surrounding agents. To model such sensible behavior, the proposed architecture first predicts the lane or lanes that the target agent is likely to follow. Then, a navigational goal along each candidate lane is predicted, allowing the regression of the final trajectory in a lane- and goal-oriented manner. Our experiments on the Argoverse dataset show that our architecture achieves performance on par with lane-oriented state-of-the-art forecasting approaches and not far behind goal-oriented approaches, while consistently producing sensible trajectories. This work was published at IEEE ITSC 2022 30.

Current work is focused on bridging the gap between semantic grid prediction approaches and motion forecasting. The goal is to leverage the semantic information of the environment that can be extracted from the cameras to improve the performance of the proposed centerline-based motion forecasting methods.

8.2.2 Semantic Grid Generation from Camera and LiDAR Sensor Fusion

Participants: Manuel Alejandro Diaz-Zapata, David Sierra-González, Ozgur Erkent, Christian Laugier, Jilles Dibangoye, Olivier Simonin.

Continuing the work towards semantic grid generation, in 2022 we developed a novel method called LiDAR-Aided Perspective Transform Network (LAPTNet) 18. Using depth information from a LiDAR sensor, this approach projects image features to a bird's-eye view; the projected features are then used to estimate semantic grids that describe the layout of the scene around a car.

This method was later extended in two ways: it was made to work with multiple image scales taken from the image encoder (LAPT-FPN), and a LiDAR-dependent branch was added (LAPT-PP) to further process the point clouds coming from the LiDAR. The final proposed method combines these two extensions (LAPT-FPN-PP) to predict semantic grids in real time (25 FPS), and outperforms competing approaches that use only camera information, only LiDAR information, or a fusion of both. LAPT-FPN-PP achieves a result close to the state of the art for vehicle semantic grid segmentation while being 3.7x faster. This work has been accepted for publication at ICRA 2023.
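The projection step described above can be illustrated with a minimal NumPy sketch. It is not the LAPTNet implementation: the function name `features_to_bev`, the grid extents, the cell size, and the mean pooling of features that land in the same cell are all assumptions made for illustration. The sketch assumes a pinhole camera with intrinsics `K` and a depth map that is zero wherever no LiDAR return is available.

```python
import numpy as np

def features_to_bev(feats, depth, K, x_range=(-10.0, 10.0), z_range=(0.0, 20.0), cell=1.0):
    """Scatter image features onto a bird's-eye-view grid using per-pixel depth.

    feats: (C, H, W) image features; depth: (H, W) in metres, 0 where no LiDAR
    return exists; K: 3x3 pinhole intrinsics. All names and defaults are
    hypothetical, chosen only for this illustration.
    """
    C = feats.shape[0]
    v, u = np.nonzero(depth > 0)              # pixels that have a depth value
    z = depth[v, u]                           # forward distance in the camera frame
    x = (u - K[0, 2]) * z / K[0, 0]           # lateral position from the pinhole model

    nx = int(round((x_range[1] - x_range[0]) / cell))
    nz = int(round((z_range[1] - z_range[0]) / cell))
    ix = np.floor((x - x_range[0]) / cell).astype(int)
    iz = np.floor((z - z_range[0]) / cell).astype(int)
    ok = (ix >= 0) & (ix < nx) & (iz >= 0) & (iz < nz)

    bev = np.zeros((C, nz, nx))
    count = np.zeros((nz, nx))
    # Unbuffered accumulation so that several pixels can fall into one cell.
    np.add.at(bev, (slice(None), iz[ok], ix[ok]), feats[:, v[ok], u[ok]])
    np.add.at(count, (iz[ok], ix[ok]), 1)
    return bev / np.maximum(count, 1)         # mean pooling per cell (assumption)
```

Cells that receive no projected pixel stay at zero; a learned network would typically process this sparse grid further before predicting semantic classes.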

8.2.3 Lidar-RGB Sensor Fusion with Transformers for Semantic Grid Prediction

Participants: Gustavo Salazar-Gomez, David Sierra-González, Manuel Alejandro Diaz-Zapata, Anshul Paigwar, Wenqian Liu, Ozgur Erkent, Christian Laugier.

Semantic grids are a succinct and convenient way to represent the environment for mobile robotics and autonomous driving applications. While the use of Lidar sensors is now widespread in robotics, most semantic grid prediction approaches in the literature focus only on RGB data. In this work, we explore different fusion algorithms and methods, and propose an approach for semantic grid prediction that uses a transformer architecture to fuse Lidar sensor data with RGB images from multiple cameras. Our proposed method, TransFuseGrid, first transforms both input streams into top-view embeddings, and then fuses these embeddings at multiple scales with Transformers. We evaluate the performance of our approach on the nuScenes dataset.

The results of this work are presented in the Master thesis “Transformer-based Lidar-RGB Fusion for Semantic Grid Prediction in Autonomous Vehicles” 44, and as a paper at the 17th International Conference on Control, Automation, Robotics and Vision (ICARCV), Dec 2022, Singapore 28.

As next steps, we will explore algorithms for semantic grid prediction that rely only on camera data and transformers, and study the role of different positional encoding approaches.

8.2.4 Allo-centric Occupancy Grid Prediction for Urban Traffic Scene Using Video Prediction Networks

Participants: Rabbia Asghar, Lukas Rummelhard, Anne Spalanzani, Christian Laugier.

Predicting the dynamic environment is crucial to safe navigation for an autonomous vehicle. Urban traffic scenes are particularly challenging to forecast due to complex interactions between various dynamic agents, such as vehicles and vulnerable road users. Previous approaches have used ego-centric occupancy grid maps to represent and predict dynamic environments. However, these predictions suffer from blurriness, loss of scene structure at turns, and vanishing of agents over longer prediction horizons. In this work, we propose a novel framework to make long-term predictions by representing the traffic scene in a fixed frame, referred to as an allo-centric occupancy grid. This allows the static scene to remain fixed and the motion of the ego-vehicle to be represented on the grid like that of other agents. We study allo-centric grid prediction with different video prediction networks and validate the approach on the real-world nuScenes dataset. The results demonstrate that the allo-centric grid representation significantly improves scene prediction in comparison to the conventional ego-centric grid approach.

This work has been published and presented at the 2022 IEEE International Conference on Control, Automation, Robotics and Vision (ICARCV) 16.
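The difference between the two representations can be sketched with a short NumPy example. It is an illustration under simplifying assumptions (2D points, a known ego pose `(x, y, yaw)` in the fixed frame, binary occupancy) and not the published pipeline; the function names `ego_to_allo` and `rasterize` are hypothetical.

```python
import numpy as np

def ego_to_allo(points_ego, ego_pose):
    """Map (N, 2) points from the ego frame to a fixed (allo-centric) frame.

    ego_pose is (x, y, yaw) of the ego-vehicle expressed in the fixed frame.
    """
    x, y, yaw = ego_pose
    c, s = np.cos(yaw), np.sin(yaw)
    R = np.array([[c, -s], [s, c]])
    return points_ego @ R.T + np.array([x, y])

def rasterize(points, origin, size, cell):
    """Mark the cells of a size x size grid that contain at least one point."""
    grid = np.zeros((size, size), dtype=np.uint8)
    idx = np.floor((points - origin) / cell).astype(int)
    ok = np.all((idx >= 0) & (idx < size), axis=1)
    grid[idx[ok, 1], idx[ok, 0]] = 1      # row = y index, column = x index
    return grid

# A static obstacle observed from two different ego poses lands in the same
# allo-centric cell, whereas its ego-frame coordinates differ between the views.
origin = np.array([0.0, 0.0])
view_a = ego_to_allo(np.array([[5.5, 5.5]]), (0.0, 0.0, 0.0))
view_b = ego_to_allo(np.array([[5.5, -3.5]]), (2.0, 0.0, np.pi / 2))
grid_a = rasterize(view_a, origin, size=10, cell=1.0)
grid_b = rasterize(view_b, origin, size=10, cell=1.0)
```

In this toy setup the static scene occupies the same cells in every frame, so a video prediction network only has to model the motion of the dynamic agents, including the ego-vehicle.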

8.2.5 Lidar-Camera Sensor Fusion for Object Detection and Tracking

Participants: Wenqian Liu, Ozgur Erkent, David Sierra-González, Christian Laugier.

Modern tracking-by-detection algorithms rely heavily on accurate detections. Yet precisely localizing objects in three-dimensional space is still very challenging for 3D detectors. One of the key reasons is the inherent sparsity and noise present in point clouds when LiDAR is used as the sole input sensor. RGB camera images, a natural complementary modality to point clouds, offer higher resolution and richer color and texture cues. Conversely, the 3D structural information obtained from point clouds can compensate for the lack of depth information in RGB images. We therefore propose to adopt Transformer models to generate comprehensive and contextual fused features from both modalities, and we develop Lidar-Camera fusion techniques to improve the performance of existing 3D object detectors and trackers. The proposed models are trained and evaluated on the nuScenes dataset. Our approach outperforms the baseline Lidar-only object detection models under the same experimental setups.

8.2.6 End-to-End Driving using Attention based Reinforcement Learning

Participants: Khushdeep Singh Mann, Abhishek Tomy, Anshul Paigwar, Alessandro Renzaglia, Christian Laugier.

One important area of research in autonomous driving is the extraction of semantic representations through the fusion of multiple sensor modalities. Thanks to previous research from the team, such as "LAPTNet: LiDAR-Aided Perspective Transform Network" 18, these semantic representations can now be used to drive policies for end-to-end decision making. We extracted semantic representations in both no-traffic and busy-traffic scenarios to train attention-based deep reinforcement learning (RL) policies. The ego-vehicle learns driving behaviors and ways of interacting with other agents. We show that policies using self-attention and cross-attention mechanisms outperform vanilla RL policies. We intend to conduct further experiments in future work and publish the results as a conference paper.

8.2.7 Transformer-based Event Camera Disparity Estimation

Participants: Abhishek Tomy, Khushdeep Singh Mann, Anshul Paigwar, Alessandro Renzaglia, Christian Laugier.

We continue our work from 2021 on disparity estimation from stereo event-based cameras. Within the scope of this project, we explore fundamental design choices that could lead to improved depth estimation with an event camera. To achieve state-of-the-art results in the event-based depth estimation task, we experimented with both the type of event representation and transformer-based network architectures. For the input representation, we explore event voxels (stacking based on time) and stacking based on the number of events (SBN) to determine whether these representations lead to improved generalization across conditions. Both representations are compared with the state-of-the-art "concentration network" model, which concentrates the events into a single channel. For the model architecture, a transformer-based network that can exploit the temporal information in the sparse events produced by the camera is being designed.
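The two stacking schemes mentioned above can be contrasted with a minimal NumPy sketch. It assumes a non-empty, time-sorted event array with columns `(t, x, y, polarity)` and simply sums polarities per pixel; the function names and this accumulation rule are illustrative assumptions, not the representation code used in the project.

```python
import numpy as np

def _accumulate(events, bin_idx, bins, height, width):
    """Sum event polarities into a (bins, height, width) volume."""
    vol = np.zeros((bins, height, width))
    x = events[:, 1].astype(int)
    y = events[:, 2].astype(int)
    np.add.at(vol, (bin_idx, y, x), events[:, 3])
    return vol

def stack_by_time(events, bins, height, width):
    """Event voxels: every bin covers the same time span."""
    t = events[:, 0]
    span = max(t[-1] - t[0], 1e-9)            # guard against a zero-length window
    bin_idx = np.minimum(((t - t[0]) / span * bins).astype(int), bins - 1)
    return _accumulate(events, bin_idx, bins, height, width)

def stack_by_number(events, bins, height, width):
    """SBN: every bin receives (almost) the same number of events."""
    n = len(events)
    bin_idx = np.minimum(np.arange(n) * bins // n, bins - 1)
    return _accumulate(events, bin_idx, bins, height, width)

# Three early events and one late event: time-based stacking puts three events
# in the first bin, whereas SBN splits the stream evenly.
events = np.array([[0.0, 0, 0, 1],
                   [0.1, 1, 0, 1],
                   [0.2, 2, 0, 1],
                   [0.9, 3, 0, 1]])
by_time = stack_by_time(events, bins=2, height=1, width=4)
by_number = stack_by_number(events, bins=2, height=1, width=4)
```

Time-based bins keep a fixed temporal resolution but can be nearly empty when the scene is static, while SBN keeps the event density per bin roughly constant at the cost of a variable time span.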

8.2.8 Detection and Tracking Model Deployment on Chroma's Experimental Driving Platform

Participants: Khushdeep Singh Mann, Abhishek Tomy, David Sierra-González, Christian Laugier.

Detecting and tracking the surrounding agents is a crucial task for an autonomous vehicle. Currently, the software packages deployed on the Zoe experimental platform lack 3D object detection. In this project, a 3D object detection and tracking pipeline is being deployed. For 3D object detection, a PointPillars model trained on the nuScenes dataset is used. For object tracking, a Kalman filter with a constant-acceleration motion model, combined with greedy matching, is used to track and associate detected objects. For deployment, the deep-learning models are optimized with TensorRT for faster inference in real-time applications.
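The tracking stage described above can be sketched as follows. This is a minimal illustration, not the code deployed on the platform: it assumes a 2D state `[x, y, vx, vy, ax, ay]`, position-only measurements, diagonal noise matrices, and a distance gate `max_dist`, all of which are hypothetical choices.

```python
import numpy as np

def ca_matrices(dt, q=1.0, r=0.5):
    """Constant-acceleration model for the state [x, y, vx, vy, ax, ay]."""
    I2, Z2 = np.eye(2), np.zeros((2, 2))
    F = np.block([[I2, dt * I2, 0.5 * dt**2 * I2],
                  [Z2, I2, dt * I2],
                  [Z2, Z2, I2]])
    H = np.hstack([I2, Z2, Z2])   # only the position is measured
    Q = q * np.eye(6)             # simplistic process noise (assumption)
    R = r * np.eye(2)             # measurement noise (assumption)
    return F, H, Q, R

def predict(x, P, F, Q):
    """Kalman prediction step."""
    return F @ x, F @ P @ F.T + Q

def update(x, P, z, H, R):
    """Kalman correction step with measurement z."""
    S = H @ P @ H.T + R
    K = P @ H.T @ np.linalg.inv(S)
    return x + K @ (z - H @ x), (np.eye(len(x)) - K @ H) @ P

def greedy_match(track_pos, det_pos, max_dist=2.0):
    """Greedy association: repeatedly take the closest unused (track, detection) pair."""
    if len(track_pos) == 0 or len(det_pos) == 0:
        return []
    dist = np.linalg.norm(track_pos[:, None, :] - det_pos[None, :, :], axis=2)
    pairs, used_tracks, used_dets = [], set(), set()
    for flat in np.argsort(dist, axis=None):
        t, d = (int(i) for i in np.unravel_index(flat, dist.shape))
        if dist[t, d] > max_dist:
            break                  # all remaining pairs are farther than the gate
        if t in used_tracks or d in used_dets:
            continue
        pairs.append((t, d))
        used_tracks.add(t)
        used_dets.add(d)
    return pairs
```

A typical loop would call `predict` for every track, run `greedy_match` between the predicted track positions and the new detections, call `update` for matched pairs, and create or delete tracks for the unmatched ones. Greedy matching is cheaper than an optimal assignment but can return a suboptimal pairing when objects are close together.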

In future work, the detection and tracking packages will be combined with prediction and planning modules for safe autonomous navigation. Recent research work from the team on semantic grid prediction will be translated into a deployable solution for generating semantic grids, which will be one of the building blocks of the planning module. Together, these packages will complete the autonomous driving stack and enable real-world experiments on the Zoe experimental platform.

8.3 Robust state estimation (Sensor fusion)

Participants: Agostino Martinelli.

This research is the follow-up of Agostino Martinelli's investigations carried out during the last seven years, which mainly consisted in deriving the analytical solution of the unknown input observability problem. This is the problem of obtaining a simple analytical criterion to check the observability of the state that characterizes a nonlinear system when its dynamics are driven by inputs that are unknown and act as disturbances. This was an open problem, formulated during the 1960s by the automatic control community, that remained unsolved for half a century.

In December 2018, Martinelli was invited by the Society for Industrial and Applied Mathematics (SIAM) to write a book with the solution of this problem. This was the main work during 2019 89. The book contains a general analytical solution of this open problem. The solution is an algorithm that automatically provides the observability of a given system (technically speaking it provides the observability codistribution), independently of its complexity and nonlinearity, and in the presence of unknown inputs. On the other hand, this solution is based on an important assumption that, in the book, was called canonicity with respect to the unknown inputs. In addition, even in the case of systems that satisfy this assumption, the convergence criterion of the algorithm provided in 89 can fail in some special cases.
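For orientation, the classical construction for the simpler case in which all inputs are known can be written as follows. This is the standard textbook recursion for control-affine systems, given here only as background; it is not the extended algorithm of 89, and the symbols $f_0$, $f_i$, $h_j$ and $\Omega_k$ are notation introduced for this sketch.

```latex
% System with known inputs u_1, ..., u_m and outputs y_1, ..., y_p
\dot{x} = f_0(x) + \sum_{i=1}^{m} f_i(x)\, u_i, \qquad y_j = h_j(x), \quad j = 1, \dots, p

% Recursive construction of the observability codistribution
\Omega_0 = \operatorname{span}\{\mathrm{d}h_1, \dots, \mathrm{d}h_p\}, \qquad
\Omega_{k+1} = \Omega_k + \sum_{i=0}^{m} \mathcal{L}_{f_i}\,\Omega_k
```

In a neighbourhood of a regular point, the recursion has converged at the first $k$ for which $\Omega_{k+1} = \Omega_k$, and the state is weakly locally observable where $\dim \Omega_k$ equals the dimension of $x$. The difficulty addressed in this research is that some of the $u_i$ are unknown, so this recursion cannot be applied as it stands.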

The research carried out during 2021-2022 has been focused on the following three main topics:

- Obtaining a general convergence criterion of the algorithm in the canonical case.

- Dealing with systems that do not satisfy the canonicity assumption.

- Investigating the problem of unknown input reconstruction, which is strongly related to the problem of state observability in the presence of unknown inputs.