Keywords

Computer Science and Digital Science

- A3.4.2. Unsupervised learning

- A3.4.7. Kernel methods

- A3.4.8. Deep learning

- A5.3. Image processing and analysis

- A5.3.2. Sparse modeling and image representation

- A5.3.3. Pattern recognition

- A5.3.5. Computational photography

- A5.7. Audio modeling and processing

- A5.7.3. Speech

- A5.7.4. Analysis

- A5.9. Signal processing

- A5.9.2. Estimation, modeling

- A5.9.3. Reconstruction, enhancement

- A5.9.5. Sparsity-aware processing

- A5.9.6. Optimization tools

Other Research Topics and Application Domains

- B2. Health

- B2.2. Physiology and diseases

- B2.2.1. Cardiovascular and respiratory diseases

- B2.2.6. Neurodegenerative diseases

- B3. Environment and planet

- B3.3. Geosciences

- B3.3.2. Water: sea & ocean, lake & river

- B3.3.4. Atmosphere

1 Team members, visitors, external collaborators

Research Scientists

- Hussein Yahia [Team leader, INRIA, Researcher, HDR]

- Luc Bourrel [IRD, from Mar 2022]

- Nicolas Brodu [INRIA, Researcher, HDR]

- Khalid Daoudi [INRIA, Researcher, HDR]

Post-Doctoral Fellows

- Anis Fradi [INRIA]

- Alexandra Jurgens [UNIV BERKELEY, from Apr 2022 until May 2022]

- Sarah Marzen [UNIV CALIFORNIA, from Dec 2022]

- Marina Saez Andreu [INRIA, from Sep 2022]

- Farouk Yahaya [INRIA, from Jul 2022]

Technical Staff

- Zhe Li [INRIA, Engineer]

Administrative Assistants

- Flavie Blondel [INRIA, from Oct 2022]

- Sabrina Duthil [INRIA]

2 Overall objectives

Geostat is a research project which investigates the analysis of some classes of natural complex signals (physiological time series, turbulent universe and earth observation data sets) by determining, in acquired signals, the properties that are predicted by commonly admitted or new physical models best fitting the phenomenon. When statistical properties discovered in the signals do not match closely enough those predicted by accepted physical models, we question the validity of existing models or propose, whenever possible, modifications or extensions of them. A new direction of research is also being developed in the team, based on the Concaust exploratory action and on the associated team Comcausa, accepted in February 2021 and proposed by N. Brodu with the Complexity Sciences Center, Physics Department, UC Davis (USA).

An important aspect of the methodological approach is that we do not rely on a predetermined "universal" signal processing model to analyze natural complex signals. Instead, we draw on existing approaches in nonlinear signal processing (wavelets, multifractal analysis tools such as log-cumulants or the microcanonical multifractal formalism, time-frequency analysis, etc.) to determine the microstructures or other micro-features inside the acquired signals. These micro-data are then analyzed statistically and compared with the behaviour expected from the theoretical physical models used to describe the phenomenon from which the data are acquired. From there, different possibilities can be contemplated:

- The statistics match behaviour predicted by the model: complexity parameters predicted by the model are extracted from signals to analyze the dynamics of underlying phenomena. Examples: analysis of turbulent data sets in Oceanography and Astronomy.

- The signals display statistics that are not attainable within the common lore of accepted models: how should the models be extended or modified according to the behaviour of observed signals? Example: audio speech signals.

Geostat is a research project in nonlinear signal processing which builds on these considerations: it considers the signals as the realizations of complex extended dynamical systems. The driving approach is to describe the relations between complexity (or information content) and the geometric organization of information in a signal. For instance, for signals which are acquisitions of turbulent fluids, the organization of information may be related to the effective presence of a multiscale hierarchy of coherent structures, of multifractal nature, which is strongly related to intermittency and multiplicative cascade phenomena; the determination of this geometric organization unlocks key nonlinear parameters and features associated with these signals and helps to understand their dynamical properties and their analysis. We use this approach to derive novel solution methods for super-resolution and data fusion in Universe Sciences acquisitions 12. Specific advances are obtained in Geostat by using this type of statistical/geometric approach to get validated dynamical information from signals acquired in Universe Sciences, e.g. oceanography or astronomy. The research in Geostat encompasses nonlinear signal processing and the study of emergence in complex systems, with a strong emphasis on geometric approaches to complexity. Consequently, research in Geostat is oriented towards the determination, in real signals, of quantities or phenomena, usually unattainable through linear methods, that are known to play an important role both in the evolution of the dynamical systems whose acquisitions are the signals under study, and in the compact representations of the signals themselves.

Signals studied in Geostat belong to two broad classes:

- Acquisitions in astronomy and earth observation.

- Physiological time series.

3 Research program

3.1 General methodology

- Fully Developed Turbulence (FDT): turbulence at very high Reynolds numbers. Systems in FDT are beyond deterministic chaos: symmetries are restored in a statistical sense only, and multi-scale correlated structures are landmarks. The notion generalizes to more random, uncorrelated multi-scale structured turbulent fields.

- Compact representation: a reduced representation of a complex signal (dimensionality reduction) from which the whole signal can be reconstructed. The reduced representation can correspond to randomly chosen points, as in compressive sensing, or to a geometric localization related to statistical information content (framework of reconstructible systems).

- Sparse representation: the representation of a signal as a linear combination of elements taken from a dictionary (frame or Hilbertian basis), with the aim of using as few non-zero coefficients as possible for a large class of signals.

- Universality class: in theoretical physics, the coincidence of critical exponents (behaviour near a second-order phase transition) across different phenomena and systems is called universality. Universality is explained by renormalization group theory, which determines how the structured fluctuations of a physical system change under rescaling. The notion applies, with caution and some differences, to generalized out-of-equilibrium or disordered systems. Non-universal exponents (without definite classes) exist in some universal slow dynamical phenomena, such as the glass transition and related phenomena. As a consequence, different macroscopic phenomena displaying multiscale structures (and their acquisitions in the form of complex signals) may be grouped into different generalized classes.
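Compressive sensing (mentioned under compact representation) and sparse representation meet in sparse recovery: a vector with only k non-zero coefficients is recovered from m < n random linear measurements y = Ax. The sketch below uses orthogonal matching pursuit, a standard greedy solver chosen here purely for illustration; it is not presented as Geostat's own method, and the function name and problem sizes are assumptions.

```python
import numpy as np


def omp(A, y, k):
    """Orthogonal matching pursuit: greedily select k columns (atoms) of A,
    refitting all selected coefficients by least squares at each step."""
    residual = np.asarray(y, dtype=float).copy()
    support = []
    coef = np.zeros(0)
    for _ in range(k):
        correlations = np.abs(A.T @ residual)
        if support:
            correlations[support] = 0.0  # never select the same atom twice
        support.append(int(np.argmax(correlations)))
        # Least-squares fit of y on the atoms selected so far.
        coef, *_ = np.linalg.lstsq(A[:, support], y, rcond=None)
        residual = y - A[:, support] @ coef
    x = np.zeros(A.shape[1])
    x[support] = coef
    return x


# Usage: recover a 5-sparse vector of length 256 from 128 random measurements.
rng = np.random.default_rng(1)
n, m, k = 256, 128, 5
A = rng.standard_normal((m, n)) / np.sqrt(m)  # random Gaussian sensing matrix
x_true = np.zeros(n)
x_true[rng.choice(n, size=k, replace=False)] = rng.choice([-1.0, 1.0], size=k) * (1.0 + rng.random(k))
y = A @ x_true
x_hat = omp(A, y, k)
```

With many more measurements than non-zero coefficients, the greedy selection typically finds the exact support, after which the least-squares refit reproduces the coefficients.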

Geostat is a research project which investigates the analysis of some classes of natural complex signals (physiological time series, turbulent universe and earth observation data sets) by determining, in acquired signals, the properties that are predicted by commonly admitted or new physical models best fitting the phenomenon. The team conducts fundamental and applied research in the analysis of complex natural signals using paradigms and methods from statistical physics such as scale invariance, predictability and universality classes. We study the parameters related to common statistical organization in different complex signals and systems, we derive new types of sparse and compact representations, and we develop machine learning approaches. We are also developing tools for the analysis of complex signals that better match the statistical and geometrical organisation inside these data: a typical example is the evaluation of cascading properties of physical variables inside complex signals.

When statistical properties discovered in the signals do not match closely enough those predicted by accepted physical models, we question the validity of existing models or propose, whenever possible, modifications or extensions of them. A new direction of research is also being developed in the team, based on the Concaust exploratory action and on the associated team Comcausa, accepted in February 2021 and proposed by N. Brodu with the Complexity Sciences Center, Physics Department, UC Davis (USA).

Every signal, as a measurement, conveys information about the physical system of which it is an acquisition. It therefore seems natural that signal analysis or compression should make use of physical modelling of phenomena: the goal is to find new methodologies in signal processing that go beyond the simple problem of interpretation. The physics of disordered systems, and specifically the physics of (spin) glasses, is putting forward new algorithmic resolution methods in various domains such as optimization and compressive sensing, with significant success, notably for heuristics for NP-hard problems. Similarly, the physics of turbulence introduces phenomenological approaches involving multifractality. Energy cascades are indeed closely related to geometrical manifolds defined through random processes. At the scales of these structures, information in the process is lost by dissipation (close to the lower bound of the inertial range). However, the whole cascade is encoded in the geometric manifolds, through long- or short-distance correlations depending on the case. How do these geometrical manifold structures organize in space and time; in other words, how does scale entropy cascade? To unify these two notions, a description in terms of the free energy of a generic physical model is sometimes possible, such as an elastic interface model in a random nonlinear energy landscape: this is, for instance, the correspondence between the compressible stochastic Burgers equation and directed polymers in a disordered medium. Thus, unlocking the fingerprints of cascade-like structures in acquired natural signals becomes a fundamental problem, from both theoretical and applied viewpoints.

An important aspect of the methodological approach is that we do not rely on a predetermined "universal" signal processing model to analyze natural complex signals. Instead, we draw on existing approaches in nonlinear signal processing (wavelets, multifractal analysis tools such as log-cumulants or the microcanonical multifractal formalism, time-frequency analysis, etc.) to determine the microstructures or other micro-features inside the acquired signals. These micro-data are then analyzed statistically and compared with the behavior expected from the theoretical physical models used to describe the phenomenon from which the data are acquired. From there, different possibilities can be contemplated:

- The statistics match behaviour predicted by the model: complexity parameters predicted by the model are extracted from signals to analyze the dynamics of underlying phenomena. Examples: analysis of turbulent data sets in Oceanography and Astronomy.

- The signals display statistics that are not attainable within the common lore of accepted models: how should the models be extended or modified according to the behaviour of observed signals? Example: audio speech signals.

We focus on the following theoretical developments:

- Signal processing using methods from complex systems and statistical physics,

- Sparse and compact representations, signal reconstruction, machine learning,

- Predictability in complex systems,

- Analysis, classification, detection in complex signals.

The team conducts research in nonlinear signal processing building on these considerations: we consider the signals as the realizations of complex extended dynamical systems. The driving approach is to describe the relations between complexity (or information content) and the geometric organization of information in a signal. For instance, for signals which are acquisitions of turbulent fluids, the organization of information may be related to the effective presence of a multiscale hierarchy of coherent structures, of multifractal nature, which is strongly related to intermittency and multiplicative cascade phenomena; the determination of this geometric organization unlocks key nonlinear parameters and features associated with these signals and helps to understand their dynamical properties and their analysis. We use this approach to derive novel solution methods for super-resolution and data fusion in Universe Sciences acquisitions. Specific advances are obtained by using this type of statistical/geometric approach to get validated dynamical information from signals acquired in Universe Sciences, e.g. Oceanography or Astronomy. The approach encompasses nonlinear signal processing and the study of emergence in complex systems, with a strong emphasis on geometric approaches to complexity. Consequently, research is oriented towards the determination, in real signals, of quantities or phenomena, usually unattainable through linear methods, that are known to play an important role both in the evolution of the dynamical systems whose acquisitions are the signals under study, and in the compact representations of the signals themselves.

Signals under study belong to two broad classes:

- Acquisitions in Astronomy and Earth Observation.

- Physiological time series.

3.2 Turbulence in interstellar clouds and Earth observation data

The analysis and modeling of natural phenomena, especially those observed in geophysical sciences and in astronomy, are influenced by statistical and multiscale phenomenological descriptions of turbulence; indeed, these descriptions are able to explain the partition of energy within a certain range of scales. A particularly important aspect of the statistical theory of turbulence lies in the discovery that the support of the energy transfer is spatially highly non-uniform; in other terms, it is intermittent 46. Because the Fourier transform lacks spatial localization, linear methods fail to unlock the multiscale structures and cascading properties of variables which, as stated by the physics of the phenomena, are of primary importance. This is why new approaches, such as DFA (Detrended Fluctuation Analysis), time-frequency analysis, variations on curvelets 43, etc., have appeared during the last decades. Recent advances in dimensionality reduction, notably in compressive sensing, go beyond the Nyquist rate in sampling theory using nonlinear reconstruction, but data reduction occurs at random places, independently of the geometric localization of information content; this can be very useful for acquisition purposes but has a lower impact in signal analysis. We successfully use a microcanonical formulation of the multifractal theory, based on predictability and reconstruction, to study the turbulent nature of interstellar molecular or atomic clouds. Another important result obtained in Geostat is the effective use of multiresolution analysis associated with optimal inference along the scales of a complex system. The multiresolution analysis is performed on dimensionless quantities given by the singularity exponents, which properly encode the geometrical structures associated with multiscale organization.
This is applied successfully in the derivation of high-resolution ocean dynamics, or the high-resolution mapping of gaseous exchanges between the ocean and the atmosphere; the latter is of primary importance for a quantitative evaluation of global warming. Understanding the dynamics of complex systems is recognized as a new discipline, which makes use of theoretical and methodological foundations coming from nonlinear physics, the study of dynamical systems and many aspects of computer science. One of the challenges is related to the question of emergence in complex systems: large-scale effects measurable macroscopically from a system made of huge numbers of interacting agents 20, 40. Some quantities related to nonlinearity, such as Lyapunov exponents or the Kolmogorov-Sinai entropy, can be computed, at least in the phase space 21. Consequently, knowledge from acquisitions of complex systems (which include complex signals) could be obtained from information about the phase space. A result from F. Takens 44 about strange attractors in turbulence has motivated the theoretical determination of nonlinear characteristics associated with complex acquisitions. Emergence phenomena can also be traced inside complex signals themselves, by trying to localize information content geometrically. Fundamentally, in the nonlinear analysis of complex signals there are broadly two approaches: characterization by attractors (embedding and bifurcation) and time-frequency, multiscale/multiresolution approaches. In real situations, the phase space associated with the acquisition of a complex phenomenon is unknown. It is, however, possible to relate, inside the signal's domain, local predictability to local reconstruction 13 and to deduce relevant information associated with multiscale geophysical signals 14. A multiscale organization is a fundamental feature of a complex system; it can, for example, be related to the cascading properties in turbulent systems.
We make use of this kind of description when analyzing turbulent signals: intermittency is observed within the inertial range and is related to the fact that, in the case of FDT (fully developed turbulence), symmetry is restored only in a statistical sense, a fact that has consequences on the quality of any nonlinear signal representation by frames or dictionaries.
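Detrended Fluctuation Analysis, cited above among the nonlinear alternatives to Fourier-based methods, estimates a scaling exponent directly in the signal domain. The following is a minimal first-order DFA sketch (the function name, window sizes and test signals are our own illustrative assumptions, not Geostat code): the mean-removed signal is integrated into a profile, a least-squares line is removed in each window of size s, and the root-mean-square residual F(s) is fitted as F(s) ~ s^alpha on log-log axes.

```python
import numpy as np


def dfa_exponent(x, scales):
    """First-order detrended fluctuation analysis (DFA-1).

    Returns the scaling exponent alpha (slope of log F(s) versus log s)
    and the fluctuation function F(s) for each window size s."""
    x = np.asarray(x, dtype=float)
    profile = np.cumsum(x - x.mean())  # integrated, mean-removed signal
    fluctuations = []
    for s in scales:
        n_seg = len(profile) // s
        segments = profile[: n_seg * s].reshape(n_seg, s)
        t = np.arange(s)
        # Least-squares line in every segment at once: coeffs has shape (2, n_seg).
        coeffs = np.polyfit(t, segments.T, 1)
        trend = np.outer(coeffs[0], t) + coeffs[1][:, None]
        fluctuations.append(np.sqrt(np.mean((segments - trend) ** 2)))
    fluctuations = np.array(fluctuations)
    alpha = np.polyfit(np.log(scales), np.log(fluctuations), 1)[0]
    return alpha, fluctuations


rng = np.random.default_rng(0)
noise = rng.standard_normal(2**14)
scales = np.array([16, 32, 64, 128, 256, 512])
alpha_noise, f_noise = dfa_exponent(noise, scales)      # uncorrelated: alpha near 0.5
alpha_walk, _ = dfa_exponent(np.cumsum(noise), scales)  # random walk: alpha near 1.5
```

Uncorrelated noise gives alpha close to 0.5 and its integral (a random walk) close to 1.5; a single global exponent of this kind is, however, a monofractal summary, unlike the pointwise singularity exponents discussed in this section.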

The example of FDT as a standard "template" for developing general methods that apply to a vast class of complex systems and signals is of fundamental interest because, in FDT, the existence of a multiscale hierarchy which is of multifractal nature and geometrically localized can be derived from physical considerations. This geometric hierarchy of sets is responsible for the shape of the computed singularity spectra, which in turn is related to the statistical organization of information content in a signal. It explains scale invariance, a characteristic feature of complex signals. The analogy from statistical physics comes from the fact that singularity exponents are direct generalizations of critical exponents, which explain the macroscopic properties of a system around critical points and give a quantitative characterization of universality classes. Universality classes allow the definition of methods and algorithms that apply to general complex signals and systems, and not only to turbulent signals: signals which belong to the same universality class share a common statistical organization. During the past decades, canonical approaches permitted the development of a well-established analogy taken from thermodynamics in the analysis of complex signals: if $F$ is the free energy, $T$ the temperature measured in energy units, $U$ the internal energy per volume unit, $S$ the entropy and $\beta = 1/T$, then the scaling exponents $p \mapsto \tau_p$ associated with the moments of intensive variables correspond to $\beta F$, $U$ corresponds to the singularity exponent values, and $S$ to the singularity spectrum 19. The research goal is to determine the universality classes associated with acquired signals, independently of microscopic properties in the phase space of various complex systems, and beyond the particular case of turbulent data 35.
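The canonical (thermodynamic) formalism mentioned above can be made concrete on the textbook binomial multiplicative cascade, used here only as a stand-in (it is not a Geostat dataset, nor the team's microcanonical method). The sketch estimates scaling exponents from the partition function Z(q, eps) = sum over boxes of mu(box)^q ~ eps^tau(q), then obtains the singularity spectrum by a numerical Legendre transform, h = tau'(q) and D(h) = q h - tau(q); in this reading the moment order q plays the role of an inverse temperature. All function names are hypothetical.

```python
import numpy as np


def binomial_cascade(p, levels):
    """Deterministic binomial cascade on [0, 1]: each dyadic box passes a
    fraction p of its mass to its left half and 1 - p to its right half."""
    mu = np.array([1.0])
    for _ in range(levels):
        mu = np.column_stack((p * mu, (1.0 - p) * mu)).ravel()
    return mu


def scaling_exponents(mu, qs):
    """Estimate tau(q) from Z(q, eps) ~ eps**tau(q) over dyadic boxes of size
    eps = 2**-j (mu must hold the masses of 2**levels finest boxes)."""
    levels = int(round(np.log2(mu.size)))
    js = np.arange(1, levels + 1)
    log_eps = -js * np.log(2.0)
    taus = []
    for q in qs:
        # Coarse-grain to 2**j boxes, then sum the q-th powers of box masses.
        log_z = [np.log(np.sum(mu.reshape(2**j, -1).sum(axis=1) ** q)) for j in js]
        taus.append(np.polyfit(log_eps, log_z, 1)[0])
    return np.array(taus)


def legendre_spectrum(qs, taus):
    """Singularity exponents h = tau'(q) and spectrum D(h) = q * h - tau(q)."""
    h = np.gradient(taus, qs)
    return h, qs * h - taus


qs = np.linspace(-5.0, 5.0, 41)
mu = binomial_cascade(0.3, levels=12)
taus = scaling_exponents(mu, qs)
h, D = legendre_spectrum(qs, taus)
# Closed form for this cascade: tau(q) = -log2(p**q + (1 - p)**q).
```

For this cascade the estimated exponents coincide with the closed form, and the spectrum reaches its maximum D = 1 (the dimension of the support) at q = 0.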

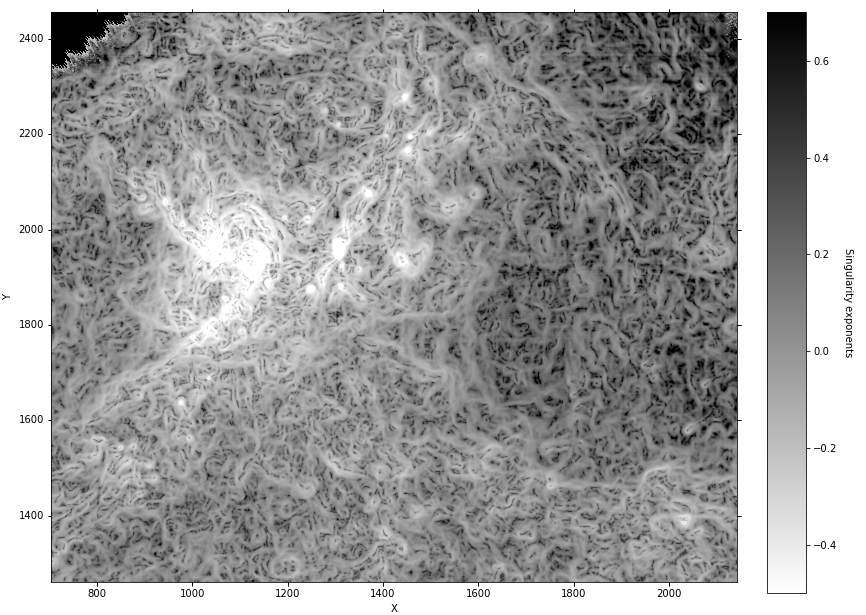

We show in figure 1 the result of the computation of singularity exponents on a Herschel astronomical observation map (Aquila galactic cloud, sub-region) which has been edge-aware filtered using sparse - filtering to eliminate the cosmic infrared background (or CIB), a type of noise that can modify the singularity spectrum of a signal.

3.3 Data analysis, causal modeling, application to earth sciences

Generally speaking, we are interested in developing new methods for inferring models of physical systems from data. The key questions are: What are the important objects of study at each description level? What are the patterns of their interactions? How can they be detected in the first place? How can they be described using relevant variables? How can their dynamics be recovered and formalized, with analytical formulas or via a computer program? Powerful mathematical tools and conceptual frameworks have been developed over years of research on complex systems and nonlinear dynamics 26, 32; yet the rise of big data and the renewed popularity of machine learning have recently stimulated this domain of research 37, 38, 23. Our approach is to focus on how information is produced (emergence of patterns, of large-scale structures) and on how that information is maintained and transformed at different scales 25. We operate on states of similar information content, in the same way that thermodynamics operates on states of similar energy levels. Our goal is to search for an analog of Hamiltonian dynamics that describes information transformations instead of energy transformations. This approach would be particularly useful for modeling steady-state systems, which operate far from thermodynamic equilibrium. In these, energy dissipation is a prerequisite for maintaining the observed patterns, and thus not necessarily the most useful metric to investigate. In fact, most natural, physical and industrial systems we deal with fall in this category, while balanced quasi-static assumptions are practical approximations only for scales well below the characteristic scale of the involved processes. Open and dissipative systems are not locally constrained by the inevitable rise in entropy, which allows ordered structures to be maintained through time.
And, according to information theory, more order and less entropy mean that these structures have a higher information content than the rest of the system, which usually gives them a high functional role. We propose to identify characteristic scales not only with energy dissipation, as usual in signal processing analysis (power spectrum analysis), but most importantly with information content. Information theory can be extended to look at which scales are most informative (e.g. multi-scale entropy 36, ε-entropy 24). Multiple complexity measures can quantify the presence of structures in the signal (e.g. statistical complexity 27, MPR 39 and others 31). With these notions, it is already possible to discriminate between random fluctuations and hidden order, such as in chaotic systems 25, 39. The theory of how information and structures can be defined through scales is not complete yet, but the state of the art is promising 30. Building on these notions, it should also be possible to fully automate the modeling of a natural system. Once characteristic scales are found, causal relationships can be established empirically. They are then clustered together in the internal states of a special kind of Markov model called ε-machines 27. These are known to be the optimal predictors of a system, with the drawback that it is currently quite complicated to build them properly, except for small systems 42. Recent extensions with advanced clustering techniques 22, 33, coupled with the physics of the studied system (e.g. fluid dynamics), have shown that ε-machines are applicable to large systems, such as global wind patterns in the atmosphere 34. Current research in the team focuses on the use of reproducing kernels, possibly coupled with sparse operators, in order to design better algorithms for ε-machine reconstruction.
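To illustrate how a complexity measure separates random fluctuations from hidden order, here is a minimal sketch of the MPR statistical complexity computed on ordinal patterns (the Bandt-Pompe construction). This is our own illustration, not the team's tool: we assume ordinal patterns of order 3, natural logarithms, and hypothetical function names. The normalized entropy H of the pattern distribution is combined with its Jensen-Shannon divergence to the uniform distribution (normalized by its maximum) into C = Q_J * H.

```python
import math
from collections import Counter

import numpy as np


def ordinal_probabilities(x, order=3, delay=1):
    """Distribution of ordinal patterns, zero-padded to length order!."""
    x = np.asarray(x, dtype=float)
    n_windows = len(x) - (order - 1) * delay
    if n_windows <= 0:
        raise ValueError("series too short for this order/delay")
    counts = Counter(
        tuple(np.argsort(x[i:i + order * delay:delay], kind="stable"))
        for i in range(n_windows)
    )
    probs = np.zeros(math.factorial(order))
    probs[: len(counts)] = np.array(list(counts.values()), dtype=float) / n_windows
    return probs


def shannon(p):
    p = p[p > 0]
    return float(-np.sum(p * np.log(p)))


def jensen_shannon(p, q):
    return shannon(0.5 * (p + q)) - 0.5 * shannon(p) - 0.5 * shannon(q)


def mpr_complexity(x, order=3, delay=1):
    """Return (normalized permutation entropy H, MPR complexity C = Q_J * H)."""
    p = ordinal_probabilities(x, order, delay)
    n_states = p.size
    uniform = np.full(n_states, 1.0 / n_states)
    h = shannon(p) / math.log(n_states)
    delta = np.zeros(n_states)
    delta[0] = 1.0  # a single pattern: maximal divergence from the uniform law
    q_j = jensen_shannon(p, uniform) / jensen_shannon(delta, uniform)
    return h, q_j * h


x_noise = np.random.default_rng(0).random(5000)
x_chaos = np.empty(5000)
x_chaos[0] = 0.1234
for i in range(1, x_chaos.size):
    x_chaos[i] = 4.0 * x_chaos[i - 1] * (1.0 - x_chaos[i - 1])  # logistic map, r = 4
h_noise, c_noise = mpr_complexity(x_noise)
h_chaos, c_chaos = mpr_complexity(x_chaos)
```

White noise visits all patterns almost uniformly (H close to 1, C close to 0), whereas the chaotic logistic map can never produce three strictly decreasing values, so one pattern is forbidden: its entropy drops and its complexity rises.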

3.4 Vocal biomarkers

Speech conveys valuable information about the health (and emotional) state of the speaker. A disturbance in this state can affect some or all of the speech production subsystems: respiratory, phonatory, articulatory, nasal and prosodic. A vocal biomarker is thus a digital signature of a speech signal that is associated with a clinical finding/observation and can be used to assist in diagnosing a disease, monitoring patients, assessing the severity or stage of a disease, or assessing the response to treatment. Vocal biomarkers could provide attractive solutions in (tele)medicine which are non-invasive, cost-effective and rapidly deployable on large scales. Consequently, during this century, there has been an ever-increasing interest in the development of objective vocal biomarkers, with a major peak since the Covid-19 pandemic 29 and the emergence of several start-ups in the area (www.sondehealth.com, www.evocalhealth.com, ...). Traditionally, most of the research has been carried out on neurodegenerative diseases, particularly on dysarthria, a class of motor speech disorders 28. There is, however, now a growing contribution in mental, cognitive, respiratory, cardiovascular and other diseases. While there has definitely been progress in understanding how some pathologies alter speech, the field is still in its early development. One reason is the dominance of pure-AI research in this area, whereas clinical involvement is crucial to unlock the clinical, scientific and technological challenges. In Geostat, the core strategy of research in this area is to conduct R&D in close partnership with (top) clinical experts of the pathologies under study, in the form of clinical trials. Our main research has been on the differential diagnosis of Parkinsonism. We have thus been conducting an ANR project, Voice4PD-MSA, with the neurology and ENT departments of the university hospitals of Bordeaux and Toulouse.
We have also been collaborating closely in a clinical study with Czech partners at Charles University and the Technical University of Prague. In these two partnerships, we address the difficult problem of early discrimination between Parkinson’s disease (PD) and atypical Parkinsonian disorders (APD) such as Progressive Supranuclear Palsy (PSP) and Multiple System Atrophy (MSA). Since the Covid-19 pandemic, we have started three projects with different hospitals of Paris (AP-HP). The first, VocaPnée, addresses the remote monitoring of patients with respiratory diseases. The second, Respeak, addresses dispatch assistance for emergency calls. The third (recent) one, AcroVox, addresses the diagnosis of a rare hormonal disease, acromegaly. All the projects with AP-HP are accompanied by the recently created Bernoulli lab (see). From the methodological perspective, our main goal is to develop a signal processing framework that is better suited than the classical framework to handle the specificities of pathological voices. Indeed, most of the research in this area relies on methods and tools developed for healthy speech, mainly based on the linear and independent source-filter speech production model. In the presence of an impairment, many of the underlying assumptions and models can fail to capture the disease-specific alteration(s). This makes it necessary to adapt existing techniques and/or to develop new ones in order to extract the useful features and cues and to achieve the classification or regression targets. Besides following this approach, our ambition is to provide a theoretical framework which can “unify” healthy and pathological speech analysis. We have recently been investigating “Probabilistic time-frequency analysis (PTFA)” 45 under the framework of Gaussian processes 41 as a promising candidate to achieve this goal.
This framework is appealing because it makes it easy and natural to provide adaptive time-frequency representations, control representation sparsity, propagate uncertainty, quantify noise, reveal non-linearity, and perform sampling/synthesis (for data augmentation, for instance). As an example, classical spectrograms, wavelets or (a class of) filter-banks become probabilistic inference in this framework. PTFA was introduced in 45 but did not attract much attention from the community, mainly because of the significant computational load of the approach. We are investigating low-rank covariance approximation techniques to overcome this issue. We underline that we chose not to follow (for the moment) the deep learning trend that dominates even this area, although it is a small-data problem, because interpretability at the acoustic-phonetic and clinical level is crucial in our setting. We are also putting significant effort into software development. Indeed, there exists no publicly available tool for the analysis of pathological speech. We have thus been developing a Python library, VocaPy, where we integrate confirmed, adapted and new algorithms, after solid validation on speech datasets altered by different pathologies. Such software would be very beneficial to the community and would contribute considerably to R&D progress in the field (as Praat does in healthy speech analysis).
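Low-rank covariance approximation is mentioned above as the route to making PTFA tractable. As one standard example of such a technique (our choice for illustration; the team's actual approach may differ), the Nyström method approximates an n x n Gaussian-process covariance K by K_nm K_mm^-1 K_mn, built from m much smaller than n inducing points, so that only an n x m factor needs to be stored. The squared-exponential kernel, the lengthscale, the jitter value and the function names below are all assumptions.

```python
import numpy as np


def rbf_kernel(t1, t2, lengthscale):
    """Squared-exponential covariance k(t, t') = exp(-(t - t')**2 / (2 l**2))."""
    d = t1[:, None] - t2[None, :]
    return np.exp(-0.5 * (d / lengthscale) ** 2)


def nystrom_factor(t, t_inducing, lengthscale, jitter=1e-8):
    """Return an n x m factor L with K ~= L @ L.T (Nystrom approximation).

    With K_mm + jitter * I = C @ C.T (Cholesky), L = K_nm @ C^{-T}, so that
    L @ L.T = K_nm (K_mm + jitter * I)^{-1} K_mn."""
    k_mm = rbf_kernel(t_inducing, t_inducing, lengthscale)
    k_mm += jitter * np.eye(len(t_inducing))  # small diagonal jitter for stability
    k_nm = rbf_kernel(t, t_inducing, lengthscale)
    chol = np.linalg.cholesky(k_mm)  # lower-triangular C
    return np.linalg.solve(chol, k_nm.T).T  # K_nm C^{-T}, shape (n, m)


t = np.linspace(0.0, 1.0, 400)
k_full = rbf_kernel(t, t, lengthscale=0.05)
errors = {}
for m in (10, 60):
    L = nystrom_factor(t, np.linspace(0.0, 1.0, m), lengthscale=0.05)
    errors[m] = np.linalg.norm(k_full - L @ L.T) / np.linalg.norm(k_full)
```

The relative error collapses once the inducing points are denser than the kernel lengthscale, while linear solves with the factor cost O(n m^2) instead of O(n^3).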

3.5 InnovationLab, sparse and non-convex methods in image processing

This research topic involves the Geostat team and was used to set up an InnovationLab with the I2S company. In 2022, the theoretical efforts were concentrated on super-resolution (PhD of A. Rashidi, defended in March 2022), while setting up the InnovationLab resulted in a very successful INRIA transfer operation (link to INRIA article). The results obtained in the InnovationLab have been used in the research described in section 3.2. See also section 4.

4 Application domains

Sparse signals & optimization

This research topic involves Geostat team and is used to set up an InnovationLab with I2S company.

Sparsity can be used in many ways and there exist various sparse models in the literature; for instance, minimizing the $\ell_0$ quasi-norm is known to be an NP-hard problem, as one needs to try all the possible combinations of the signal's elements. The $\ell_1$ norm, which is the convex relaxation of the $\ell_0$ quasi-norm, results in a tractable optimization problem. The $\ell_p$ pseudo-norms with $0 < p < 1$ are particularly interesting, as they give a closer approximation of $\ell_0$, but they result in a non-convex minimization problem. Thus, finding a global minimum for this kind of problem is not guaranteed. However, using a non-convex penalty instead of the $\ell_1$ norm has been shown to improve various sparsity-based applications significantly. Non-convexity has many statistical implications in signal and image processing. Indeed, natural images tend to have a heavy-tailed (kurtotic) distribution in certain domains such as wavelets and gradients. Using the $\ell_1$ norm amounts to assuming a Laplacian distribution. More generally, the hyper-Laplacian distribution is related to the $\ell_p$ pseudo-norm ($0 < p < 1$), where the value of $p$ controls how heavy-tailed the distribution is. As the hyper-Laplacian distribution for $0 < p < 1$ better represents the empirical distribution of the transformed images, it makes sense to use the $\ell_p$ pseudo-norms instead of $\ell_1$. Other functions that better reflect heavy-tailed distributions of images have been used as well, such as Student-t or Gaussian Scale Mixtures. The internal properties of natural images have helped researchers to push the sparsity principle further and develop highly efficient algorithms for restoration, representation and coding. Group sparsity is an extension of the sparsity principle where data are clustered into groups and each group is sparsified differently. More specifically, in many cases it makes sense to follow a certain structure when sparsifying, by forcing similar sets of points to be zero or non-zero simultaneously. This is typically true for natural images that represent coherent structures.
The concept of group sparsity was first used for simultaneously shrinking groups of wavelet coefficients because of the relations between wavelet basis elements. Lastly, there is a strong relationship between sparsity, nonpredictability and scale invariance.
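The practical difference between the convex and non-convex extremes discussed above shows up in their proximal operators, the elementwise shrinkage rules that proximal solvers apply. A minimal sketch (function names are hypothetical): the proximal operator of lam * |x| (the $\ell_1$ case) is soft thresholding, and that of lam * [x != 0] (the $\ell_0$ case) is hard thresholding.

```python
import numpy as np


def prox_l1(y, lam):
    """argmin_x 0.5 * (x - y)**2 + lam * |x|, elementwise: soft thresholding."""
    return np.sign(y) * np.maximum(np.abs(y) - lam, 0.0)


def prox_l0(y, lam):
    """argmin_x 0.5 * (x - y)**2 + lam * [x != 0], elementwise: hard thresholding.

    Keeping x = y costs lam and setting x = 0 costs y**2 / 2, so y survives
    only when |y| > sqrt(2 * lam)."""
    return np.where(np.abs(y) > np.sqrt(2.0 * lam), y, 0.0)


coeffs = np.array([3.0, 1.0, -0.5, -2.0])
soft = prox_l1(coeffs, 1.0)  # small entries zeroed, large ones shrunk by lam
hard = prox_l0(coeffs, 1.0)  # small entries zeroed, large ones left untouched
```

Soft thresholding biases every large coefficient by lam, which is one practical reason why non-convex penalties, whose shrinkage fades for large values, tend to preserve strong edges and coefficients better.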

We have shown that the two powerful concepts of sparsity and scale invariance can be exploited to design fast and efficient imaging algorithms. A general framework has been set up for using non-convex sparsity by applying a first-order approximation. When using a proximal solver to estimate a solution of a sparsity-based optimization problem, sparse terms are always separated into subproblems that take the form of a proximal operator. Estimating the proximal operator associated with a non-convex term is thus the key component for using efficient solvers for non-convex sparse optimization. Using this strategy, only the shrinkage operator changes, and thus the solver has the same complexity for both the convex and non-convex cases. While a few previous works have also proposed to use non-convex sparsity, their choice of the sparse penalty is rather limited to functions like the $\ell_p$ pseudo-norm for certain values of $p$ or the Minimax Concave (MC) penalty, because they admit an analytical solution. Using a first-order approximation only requires calculating the (super)gradient of the function, which makes it possible to use a wide range of penalties for sparse regularization. This is important in various applications where we need a flexible shrinkage function, such as edge-aware processing. Apart from non-convexity, using a first-order approximation makes it easier to verify the optimality condition of proximal operator-based solvers via a fixed-point interpretation. Another problem that arises in various imaging applications but has attracted less attention is the problem of multi-sparsity, when the minimization problem includes various sparse terms that can be non-convex. This is typically the case when looking for a sparse solution in a certain domain while rejecting outliers in the data-fitting term. By using one intermediate variable per sparse term, we show that proximal-based solvers can be efficient.
We give a detailed study of the Alternating Direction Method of Multipliers (ADMM) solver for multi-sparsity and analyze its properties. The following problems are addressed and receive new solutions:

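- Edge-aware smoothing: given an input image $I$, one seeks a smooth image $u$ "close" to $I$ by minimizing:

$$\min_u \; \frac{\lambda}{2}\,\|u - I\|_2^2 + \phi(\nabla u)$$

where $\phi$ is a sparsity-inducing non-convex function and $\lambda$ a positive parameter. Splitting with an auxiliary variable $w \approx \nabla u$ (with penalty parameter $\beta > 0$) and alternating minimization lead to the sub-problems:

$$u^{k+1} = \arg\min_u \; \frac{\lambda}{2}\,\|u - I\|_2^2 + \frac{\beta}{2}\,\|\nabla u - w^{k}\|_2^2 \qquad (1)$$

$$w^{k+1} = \arg\min_w \; \phi(w) + \frac{\beta}{2}\,\|w - \nabla u^{k+1}\|_2^2 \qquad (2)$$

We solve sub-problem (1) by deconvolution, efficiently estimated with separable filters and a warm-start initialization for a fast GPU implementation, and sub-problem (2) through its non-convex proximal form.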

- Structure-texture separation: we design an efficient algorithm using non-convex terms in both the data-fitting term and the prior. The resulting problem is solved via a combination of Half-Quadratic (HQ) and Majorization-Minimization (MM) methods. We extract challenging texture layers, outperforming existing techniques while maintaining a low computational cost. Using spectral sparsity in the framework of low-rank estimation, we propose to use Robust Principal Component Analysis (RPCA) to perform robust separation on multi-channel images, for instance glare and artifact removal in flash/no-flash photographs. Since in this case the matrix to decompose has far fewer columns than rows, we use a QR decomposition trick instead of a direct singular value decomposition (SVD), which makes the decomposition faster.

- Robust integration: many applications require reconstructing an image from corrupted gradient fields. The corruption consists of outliers only when the vector field results from transformed gradient fields (low-level vision), and of mixed outliers and noise when the field is estimated from corrupted measurements (surface reconstruction, gradient cameras, Magnetic Resonance Imaging (MRI) compressed sensing, etc.). We use non-convexity and multi-sparsity to build efficient integrability-enforcement algorithms. We present two algorithms: 1) a local algorithm that uses sparsity in the gradient field as a prior, together with a sparse data-fitting term; 2) a non-local algorithm that uses sparsity in the spectral domain of non-local patches as a prior, together with a sparse data-fitting term. Both methods use a multi-sparse version of the Half-Quadratic solver. They were the first in the literature to use sparse regularization to improve integration. Their results significantly outperform previous works that use no regularization or simple minimization. Exact or near-exact recovery of surfaces is possible with these methods from highly corrupted gradient fields with outliers.

- Texture recognition: deep convolutional networks, which extract features through repeated convolutions with high-pass filters and pooling/downsampling operators, have been shown to give near-human recognition rates. Training the filters of a multi-layer network is costly and requires powerful machines. However, visualizing the filters of the first layers shows that they resemble wavelet filters, leading to sparse representations in each layer. We propose to use the concept of scale invariance of multifractals to extract invariant features from each sparse representation. We build a bi-Lipschitz invariant descriptor based on the distribution of the singularities of the sparsified images in each layer. Combining the descriptors of all layers into one feature vector leads to a compact representation of a texture image that is invariant to various transformations. Using this descriptor, which is efficient to compute, together with learning techniques such as classifier combination and artificial augmentation of the training data, we build a powerful texture recognition system that outperforms previous works on 3 challenging datasets. In fact, this system achieves recognition rates quite close to those of the latest deep networks without requiring any filter training.
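For the flash/no-flash RPCA case in the structure-texture item above, the QR trick can be sketched as follows. The sketch assumes the data matrix stores each channel or image as one column, so it is tall and thin; a reduced QR factorization then leaves only a small n × n SVD to compute. Singular value thresholding, the low-rank update of typical RPCA solvers, is shown as a usage example. Names and data sizes are hypothetical.

```python
import numpy as np

def svd_tall_via_qr(M):
    """Thin SVD of a tall matrix (many more rows than columns) via QR.

    M = Q R with Q (m x n, orthonormal columns) and R (n x n). Only the
    small matrix R is decomposed: R = Ur diag(s) Vt, so M = (Q Ur) diag(s) Vt.
    """
    Q, R = np.linalg.qr(M)          # reduced QR: Q is m x n, R is n x n
    Ur, s, Vt = np.linalg.svd(R)    # SVD of an n x n matrix only
    return Q @ Ur, s, Vt

def singular_value_threshold(M, tau):
    """Prox of tau * nuclear norm, the low-rank update in typical RPCA solvers."""
    U, s, Vt = svd_tall_via_qr(M)
    return (U * np.maximum(s - tau, 0.0)) @ Vt

# Hypothetical data: 3 vectorized channels/images of 100 x 100 pixels as columns.
rng = np.random.default_rng(0)
M = rng.standard_normal((10_000, 3))
U, s, Vt = svd_tall_via_qr(M)
low_rank = singular_value_threshold(M, tau=s[1])   # keeps only the leading component
```

The QR step costs O(mn²) and the remaining SVD O(n³), so for a handful of columns the cost is dominated by a single pass over the data.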

5 Highlights of the year

5.1 Startup Bits2Beat

A startup co-founded by G. Attuel on predictive heart rate analysis. Bits2Beat aims to facilitate the early detection of some of the most common cardiovascular diseases, notably atrial fibrillation, building on the research carried out by G. Attuel in Geostat. See the national Inria article.

5.2 Comcausa

N. Brodu set up the Comcausa associated team with the Complexity Sciences Center at UC Davis, as well as the Concaust exploratory action.

6 New software and platforms

6.1 New software

6.1.1 Fluex

-

Keywords:

Signal, Signal processing

-

Scientific Description:

Fluex is a package implementing the Microcanonical Multiscale Formalism for general 1D, 2D, 3D and 3D+t signals.

-

Functional Description:

Fluex is a C++ library for nonlinear signal processing, developed under Gforge. It can analyze turbulent and natural complex signals and determine low-level features in these signals that cannot be obtained with standard linear techniques.
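To give a flavour of the low-level features involved, the Python sketch below computes crude pointwise singularity exponents h(x) of a 1D signal. It regresses the logarithm of a local gradient measure against the logarithm of the scale, assuming μ_r(x) ∝ r^(1+h(x)). This is a simplified illustration only; it is not Fluex's algorithm or API, neither of which is described here, and the scales are arbitrary choices.

```python
import numpy as np

def singularity_exponents(signal, scales=(2, 4, 8, 16, 32)):
    """Crude pointwise singularity exponents h(x) of a 1D signal.

    The gradient measure of a window of 2r samples around x,
    mu_r(x) = sum_{x - r <= y < x + r} |s[y+1] - s[y]|,
    is assumed to scale as r**(d + h(x)) with d = 1, so h(x) is the
    least-squares slope of log mu_r(x) against log r, minus 1.
    """
    s = np.asarray(signal, dtype=float)
    n = s.size
    grad = np.abs(np.diff(s, append=s[-1]))          # |s[y+1] - s[y]|, last entry 0
    csum = np.concatenate(([0.0], np.cumsum(grad)))  # prefix sums for O(1) window sums
    idx = np.arange(n)
    log_mu = np.array([
        np.log(csum[np.clip(idx + r, 0, n)] - csum[np.clip(idx - r, 0, n)] + 1e-12)
        for r in scales
    ])                                               # shape (len(scales), n)
    log_r = np.log(np.asarray(scales, dtype=float))
    log_r -= log_r.mean()
    slope = (log_r[:, None] * (log_mu - log_mu.mean(axis=0))).sum(axis=0) / (log_r**2).sum()
    return slope - 1.0

x = np.linspace(-1.0, 1.0, 2001)
h = singularity_exponents(np.abs(x) ** 0.5)
print(h[1000], h[1500])   # cusp at x = 0 versus a smooth point at x = 0.5
```

On |x|^0.5 the cusp at x = 0 gets h ≈ −0.5 while smooth points get h ≈ 0. Lower exponents flag the more singular, less predictable points of the signal.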

-

Contact:

Hussein Yahia

-

Participants:

Hussein Yahia, Rémi Paties

6.1.2 FluidExponents

-

Keywords:

Signal processing, Wavelets, Fractal, Spectral method, Complexity

-

Functional Description:

FluidExponents is a signal processing software dedicated to the analysis of complex signals displaying multiscale properties. It analyzes complex natural signals with nonlinear methods. It implements the multifractal formalism and allows various kinds of signal decomposition and reconstruction. A key feature of the software is its ability to evaluate the degree of unpredictability around each point of a signal, which serves several kinds of applications. The software can be used for time series or multidimensional signals.

-

Contact:

Hussein Yahia

-

Participants:

Antonio Turiel, Hussein Yahia

6.1.3 ProximalDenoising

-

Name:

ProximalDenoising

-

Keywords:

2D, Image filter, Filtering, Minimizing overall energy, Noise, Signal processing, Image reconstruction, Image processing

-

Scientific Description:

Image filtering is formulated as a sparse minimization problem in a non-convex setting. Given an input image I, one seeks a denoised output image u that is close to I in the L2 norm. To do so, a term favoring sparse gradients of u is added to the functional. Imposing sparse gradients leads to a non-convex term: for instance an Lp pseudo-norm with 0 < p < 1, or a Cauchy or Welsch function. A half-quadratic algorithm introduces a new variable in the functional, which leads to two sub-problems. The first sub-problem is non-convex and solved with proximal operators. The second can be written in variational form and is best solved in Fourier space: it takes the form of a deconvolution operator whose kernel can be approximated by a finite sum of separable filters. This method yields excellent computation times even on large images.
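The two sub-problems can be sketched in 1D as follows. This is an illustrative sketch under stated assumptions, not the ProximalDenoising code. It minimizes λ/2 ‖u − f‖² + Σ φ(Du) with a Cauchy penalty φ(t) = log(1 + t²/σ²). It assumes periodic boundaries, so the quadratic sub-problem is diagonalized exactly by the FFT instead of using the separable-filter approximation. It also uses a first-order shrinkage for the proximal step and a continuation schedule β ← 2β. All names and parameter values are hypothetical.

```python
import numpy as np

def diff_symbol(n):
    """Fourier symbol of the periodic forward difference (Du)[k] = u[k+1] - u[k]."""
    return np.exp(2j * np.pi * np.arange(n) / n) - 1.0

def u_step(f, w, lam, beta):
    """Solve (lam I + beta D^T D) u = lam f + beta D^T w exactly with the FFT."""
    d_hat = diff_symbol(f.size)
    num = lam * np.fft.fft(f) + beta * np.conj(d_hat) * np.fft.fft(w)
    return np.real(np.fft.ifft(num / (lam + beta * np.abs(d_hat) ** 2)))

def w_step(g, beta, sigma):
    """Approximate prox of phi / beta at g, phi(t) = log(1 + t^2 / sigma^2),
    via a first-order (weighted soft-threshold) approximation."""
    a = np.abs(g)
    return np.sign(g) * np.maximum(a - 2.0 * a / (sigma**2 + a**2) / beta, 0.0)

def hq_denoise_1d(f, lam=10.0, sigma=0.1, beta0=10.0, beta_max=1e4, rate=2.0):
    """Half-quadratic sketch for min_u lam/2 ||u - f||^2 + sum phi(Du)."""
    f = np.asarray(f, dtype=float)
    u, beta = f.copy(), beta0
    while beta < beta_max:
        w = w_step(np.roll(u, -1) - u, beta, sigma)  # non-convex proximal sub-problem
        u = u_step(f, w, lam, beta)                  # quadratic sub-problem, Fourier space
        beta *= rate                                 # continuation: tighten w ~ Du
    return u

# Hypothetical piecewise-constant signal with additive noise.
rng = np.random.default_rng(1)
clean = np.repeat([0.0, 1.0, 0.3, 0.8], 64)
noisy = clean + 0.05 * rng.standard_normal(clean.size)
denoised = hq_denoise_1d(noisy)
```

In 2D the same structure holds with one auxiliary variable per gradient component; the Fourier solve then involves the symbols of both finite-difference operators.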

-

Functional Description:

Uses proximal and non-quadratic minimization, with a GPU implementation. Given an input image f, one seeks an output g minimizing the functional:

$$\frac{\lambda}{2}\,\|f - g\|_2^2 + \psi(\nabla g)$$

where λ is a positive constant, ‖·‖₂ is the L2 norm and ψ is a Cauchy function used to enforce sparsity.

This functional is also applied to debayering (demosaicking).

-

Release Contributions:

This software implements the results of H. Badri's PhD thesis.

-

Authors:

Marie Martin, Chiheb Sakka, Hussein Yahia, Nicolas Brodu, Gabriel Zebadua Garcia, Khalid Daoudi

-

Contact:

Hussein Yahia

-

Partner:

Innovative Imaging Solutions I2S

6.1.4 Manzana

-

Name:

Manzana

-

Keywords:

2D, Image processing, Filtering

-

Scientific Description:

Software library developed in the framework of the I2S-GEOSTAT innovation lab, made of high-level image processing functionalities based on sparsity and non-convex optimization.

-

Functional Description:

Image processing library: filtering, HDR imaging, inpainting, etc.

-

Contact:

Hussein Yahia

-

Partner:

Innovative Imaging Solutions I2S

6.2 New platforms

Participants: N. Brodu, K. Daoudi.

- Concaust

-

Web site:

https://team.inria.fr/comcausa/continuous-causal-states/.

-

Software Family: research

-

Audience: team

-

Evolution and maintenance: proto

-

Duration of the Development: 2 years

-

Scientific description: This software implements the algorithm resulting from the Concaust Exploratory Action, published in 15. Although considerable time was spent on its development, the code itself is not large: the difficulty lies in the theoretical and algorithmic work. Once we know what to compute and how, the implementation follows, but there were many rounds of development with different theoretical approaches. Some of these points are still not stabilized, which is reflected in the API and in the code performance. These are regularly tested and updated as the code is run against concrete data sets. For these reasons, the code is constantly evolving and actively developed within the team, but it is not yet ready for diffusion to the community at large. The code is open source (MIT licence) and shared with close partners from the Comcausa Associate Team.

- VocaPy

-

Software family:

vehicle

-

Audience:

community

-

Evolution and maintenance:

basic (still under development)

-

Duration of the Development:

1 year (still 1 year left)

-

Scientific description:

VocaPy is an interface and a Python library implementing pathological speech analysis algorithms and vocal biomarkers. It currently targets one clinical application, the differential diagnosis of parkinsonism, but it will evolve to include other clinical targets, particularly the monitoring of respiratory diseases. VocaPy works in synergy with the ADT VocaPnée-Infra, dedicated to the development of an infrastructure for interacting with protected health data.

7 New results

7.1 Discovering Causal Structure with Reproducing-Kernel Hilbert Space ε-Machines

Participants: N. Brodu, J. P. Crutchfield.

We merge computational mechanics’ definition of causal states (predictively equivalent histories) with reproducing-kernel Hilbert space (RKHS) representation inference. The result is a widely applicable method that infers causal structure directly from observations of a system’s behaviors, whether they are over discrete or continuous events or time. A structural representation, a finite- or infinite-state kernel ε-machine, is extracted by a reduced-dimension transform that gives an efficient representation of causal states and their topology. In this way, the system dynamics are represented by a stochastic (ordinary or partial) differential equation that acts on causal states. We introduce an algorithm to estimate the associated evolution operator. Paralleling the Fokker–Planck equation, it efficiently evolves causal-state distributions and makes predictions in the original data space via an RKHS functional mapping. We demonstrate these techniques, together with their predictive abilities, on discrete-time, discrete-value infinite Markov-order processes generated by finite-state hidden Markov models with (i) finite or (ii) uncountably infinite causal states, and on (iii) continuous-time, continuous-value processes generated by thermally driven chaotic flows. The method robustly estimates causal structure in the presence of varying external and measurement noise levels and for very high-dimensional data. Publication: Chaos: An Interdisciplinary Journal of Nonlinear Science, HAL.
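A basic ingredient of RKHS-based causal-state inference is comparing, for two observed pasts, the estimated distributions of their futures. The Python sketch below does this with a standard conditional mean embedding estimated by kernel ridge regression. It assumes Gaussian kernels and hypothetical bandwidth and regularization values; pasts whose embeddings are close are candidates for the same causal state. It omits the dimension reduction and evolution operator of the published method and is not the Concaust code.

```python
import numpy as np

def gaussian_gram(a, b, bandwidth):
    """Gaussian kernel matrix between the rows of a (n x d) and b (m x d)."""
    sq = ((a[:, None, :] - b[None, :, :]) ** 2).sum(axis=-1)
    return np.exp(-sq / (2.0 * bandwidth**2))

def predictive_distances(pasts, futures, bw_past=0.5, bw_future=0.5, reg=1e-3):
    """Squared RKHS distances between estimated conditional future distributions.

    pasts, futures: arrays of shape (n, d_past) and (n, d_future), row i
    being one observed (past, future) pair. The conditional mean embedding
    of the future given past p_i is sum_j B[j, i] k_F(f_j, .), with
    B = (K_P + n * reg * I)^{-1} K_P (kernel ridge regression).
    """
    n = len(pasts)
    K_p = gaussian_gram(pasts, pasts, bw_past)
    K_f = gaussian_gram(futures, futures, bw_future)
    B = np.linalg.solve(K_p + n * reg * np.eye(n), K_p)  # column i: weights for past i
    G = B.T @ K_f @ B                                     # inner products of embeddings
    d = np.diag(G)
    return d[:, None] + d[None, :] - 2.0 * G              # ||mu_i - mu_k||^2

# Hypothetical process: the sign of the past fixes the distribution of the future.
rng = np.random.default_rng(0)
regime = rng.choice([-1.0, 1.0], size=200)
pasts = (regime + 0.1 * rng.standard_normal(200)).reshape(-1, 1)
futures = (regime + 0.1 * rng.standard_normal(200)).reshape(-1, 1)
D = predictive_distances(pasts, futures)
```

Pasts drawn from the same regime yield small distances and pasts from different regimes large ones. Clustering or embedding such a distance matrix is one way to expose a two-state causal structure in this toy process.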

7.2 CONCAUST Exploratory Action

Participants: N. Brodu, J. P. Crutchfield, L. Bourrel, P. Rau, A. Rupe, Y. Li.

Concaust Exploratory Action Web page. The article detailing the core method of the exploratory action, “Discovering Causal Structure with Reproducing-Kernel Hilbert Space ε-Machines”, has been accepted for publication in the journal Chaos. The remaining budget was reallocated to two years of post-doctoral positions: one year for working on the core methodology, and one year for researching the applicability of the method to real data, in particular the study of El Niño phenomena. Due to COVID-19 constraints, the post-doctoral candidate selected for the core methodology (Alexandra Jurgens) could not come to France in fall 2021. She may come in fall 2022 if the situation allows, so the corresponding position is reserved for her. The second post-doctoral offer, on El Niño, is currently open for applications.

7.3 COMCAUSA Associated team

Participants: N. Brodu, J. P. Crutchfield, et al. (see Website).

Web page. The associate team Comcausa was created as part of the Inria@SiliconValley international lab, between Inria Geostat and the Complexity Sciences Center at the University of California, Davis. This team is managed by Nicolas Brodu (Inria) and Jim Crutchfield (UC Davis); the full list of collaborators is given on the web site. We organized a series of 10 online seminars, “Inference for Dynamical Systems: A Seminar Series”, in which we invited team members and external researchers to present their results. This online seminar series was a federating moment during the COVID-19 lockdowns and fostered new collaborations. Additional funding was obtained (co-PIs Nicolas Brodu, Jim Crutchfield, Sarah Marzen) from the Templeton Foundation in the form of 2×1 years of post-doctoral funding, to work on bioacoustic signatures in whale communication signals. We recruited Alexandra Jurgens for one year, renewable, on an exploratory research topic: seeking new methods for inferring how much information is transferred at every scale in a signal. Nicolas Brodu is actively co-supervising her on this program, which she may pursue at Inria in fall 2022 on the Concaust exploratory action post-doc budget. Further preliminary results from this associate team were obtained on CO₂ and water fluxes in ecosystems (collaboration between Nicolas Brodu, Yao Liu and Adam Rupe). Nicolas Brodu presented them at the yearly meeting of the ICOS network of monitoring stations, run mostly by INRAE. This in turn led to the writing of a proposal for the joint Inria-INRAE « Agroécologie et numérique » (agroecology and digital technology) PEPR, which passed the pre-selection phase in December 2021: this project is being proposed, jointly with a partner at INRAE, as one of the 10 flagship projects retained for round 1 of this PEPR. The final decision on whether it will be funded will be made in 2022.
Similarly, preliminary results on El Niño data (collaboration between Nicolas Brodu and Luc Bourrel) have led to the submission of an ANR proposal. This ANR funding would allow us to extend the work of the post-doctoral researcher whom we will co-supervise on the Concaust budget. The Associated Team budget for 2021 could only be partially used, as travel was restricted for most of the year. An extensive lab tour was still possible (Nov.-Dec. 2021), during which Nicolas Brodu met most of the US associate team members. This tour was scientifically fruitful, and we are currently preparing articles detailing our new results.

7.4 Speech acoustic indices for differential diagnosis between Parkinson's disease, multiple system atrophy and progressive supranuclear palsy

Participants: K. Daoudi, B. Das, T. Tykalova, J. Klempir, J. Rusz.

While speech disorder represents an early and prominent clinical feature of atypical parkinsonian syndromes such as multiple system atrophy (MSA) and progressive supranuclear palsy (PSP), little is known about the sensitivity of speech assessment as a potential diagnostic tool. Speech samples were acquired from 215 subjects, including 25 MSA, 20 PSP and 20 Parkinson's disease (PD) participants, and 150 healthy controls. The differential diagnosis of dysarthria subtypes was based on the quantitative acoustic analysis of 26 speech dimensions related to phonation, articulation, prosody and timing. A semi-supervised weighting-based approach was then applied to find the best feature combinations for separating PSP and MSA. Dysarthria was perceptible in all PSP and MSA patients and consisted of a combination of hypokinetic, spastic and ataxic components. Speech features related to respiratory dysfunction, imprecise consonants, monopitch, slow speaking rate and subharmonics contributed to worse performance in PSP than in MSA, whereas phonatory instability, timing abnormalities and articulatory decay were more distinctive of MSA compared to PSP. Combining distinct speech patterns through objective acoustic evaluation discriminated between PSP and MSA with an accuracy of up to 89%, and between PSP/MSA and PD with an accuracy of up to 87%. Dysarthria severity in MSA/PSP was related to overall disease severity. Speech disorders reflect the differing underlying pathophysiology of tauopathy in PSP and α-synucleinopathy in MSA. Vocal assessment may provide a low-cost alternative screening method to existing subjective clinical assessment and imaging diagnostic approaches. Publication: npj Parkinson's Disease (Nature), HAL.

7.5 A comparative study on vowel articulation in Parkinson's disease and multiple system atrophy

Participants: K. Daoudi, B. Das, S. Milhé de Saint Victor, A. Foubert Samier, M. Fabbri, A. Pavy-Le Traon, O. Rascol, V. Woisard, W. G. Meissner.

Acoustic realisation of the working vowel space has been widely studied in Parkinson's disease (PD). However, it has never been studied in atypical parkinsonian disorders (APD). The latter are neurodegenerative diseases which share similar clinical features with PD, making the differential diagnosis very challenging in early disease stages. This paper presents the first contribution to vowel space analysis in APD, by comparing corner vowel realisation in PD and in the parkinsonian variant of Multiple System Atrophy (MSA-P). Our study focuses exclusively on early-stage PD and MSA-P patients, as our main purpose was the early differential diagnosis between these two diseases. We analysed the corner vowels, extracted from a spoken sentence, using traditional vowel space metrics. We found no statistical difference between the PD group and healthy controls (HC), while MSA-P exhibited significant differences with the PD and HC groups. We also found that some metrics conveyed complementary discriminative information. Consequently, we argue that restriction in the acoustic realisation of corner vowels cannot be a viable early marker of PD, as hypothesised by some studies, but it might be a candidate early hypokinetic marker of MSA-P (when the clinical target is the discrimination between PD and MSA-P). Publication: Interspeech 2022, HAL.
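For readers unfamiliar with vowel space metrics, the Python sketch below computes a few commonly used ones from the first two formants (F1, F2, in Hz) of the corner vowels /a/, /i/ and /u/. The metrics are the triangular vowel space area (tVSA), the vowel articulation index (VAI), its reciprocal the formant centralization ratio (FCR), and the F2i/F2u ratio. Whether these are exactly the metrics used in the study is an assumption, and the formant values in the example are made up.

```python
def vowel_space_metrics(f1a, f2a, f1i, f2i, f1u, f2u):
    """Corner-vowel metrics from F1/F2 formants (Hz) of /a/, /i/, /u/."""
    # Triangular vowel space area: shoelace formula in the F1-F2 plane (Hz^2).
    tvsa = abs(f1i * (f2a - f2u) + f1a * (f2u - f2i) + f1u * (f2i - f2a)) / 2.0
    # Vowel articulation index: decreases when vowels centralize.
    vai = (f2i + f1a) / (f1i + f1u + f2u + f2a)
    # Formant centralization ratio: reciprocal of VAI, increases with centralization.
    fcr = 1.0 / vai
    return {"tVSA": tvsa, "VAI": vai, "FCR": fcr, "F2i/F2u": f2i / f2u}

# Made-up formant values (Hz), for illustration only.
metrics = vowel_space_metrics(f1a=700, f2a=1300, f1i=300, f2i=2300, f1u=350, f2u=800)
print(metrics)   # tVSA = 275000.0 Hz^2 for these values
```

The ratio-based metrics (VAI, FCR, F2i/F2u) are less sensitive to speaker-dependent formant scaling than the raw area, which is one reason several metrics can carry complementary information.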

7.6 Advanced super-resolution

Participants: A. Rashidi, H. Yahia, A. Cherif [I2S], J. L. Vallancogne [I2S], A. Cailly [I2S].

In the framework of the acquisition chains and devices built by i2S, the objective of this PhD is to provide efficient algorithms able to merge several acquired images, corresponding to slight translational displacements, into a wider super-resolved image. This PhD therefore falls within the general subject of super-resolution, performed here from multiple acquisitions. We propose using sensor displacement within the I2S cameras, together with a scheme that yields an image with up to two times higher resolution. We also propose an additional image deconvolution algorithm that further improves image quality and addresses degradations that may occur in the super-resolution scheme. This deconvolution algorithm is based on variable splitting and takes advantage of the proximal operator and the Fourier transform. We also proposed new potential functions, used for the first time in image processing, as prior information in image inverse problems. Experimental results show promising capabilities of the proposed algorithm, which has been successfully implemented in various I2S cameras and devices. Practical experiments on real-world data demonstrate the effectiveness and flexibility of our image deconvolution. Experiments were also conducted on Herschel observation maps, with promising results on such imaging data. In the last part of the thesis, the idea of plug-and-play priors for image denoising and deconvolution is presented, implemented within an alternating minimization scheme. Early results suggest that this approach is suitable for image denoising and deconvolution. Publication: A. Rashidi's PhD thesis, HAL.
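The principle of sensor-shift super-resolution can be illustrated in its most idealized form. Four low-resolution frames acquired with half-pixel sensor displacements fill the four sub-pixel phases of a grid twice as fine. The Python sketch assumes exact half-pixel shifts and point sampling. Real sensors integrate over the pixel area, which blurs the assembled image; that is the kind of degradation a deconvolution step then addresses. The function and frame layout are hypothetical, not i2S's implementation.

```python
import numpy as np

def interleave_shifted(frames):
    """Assemble a 2x-resolution image from four half-pixel-shifted frames.

    frames[(dy, dx)], with dy, dx in {0, 1}, is the low-resolution frame
    acquired with the sensor displaced by (dy / 2, dx / 2) low-resolution
    pixels. Idealized model: exact shifts and point sampling (no pixel blur).
    """
    h, w = frames[(0, 0)].shape
    hr = np.empty((2 * h, 2 * w), dtype=float)
    for (dy, dx), frame in frames.items():
        hr[dy::2, dx::2] = frame    # each shift fills one sub-pixel phase
    return hr

# Simulate the four acquisitions by sampling a known fine-grid image.
rng = np.random.default_rng(0)
truth = rng.random((8, 10))
frames = {(dy, dx): truth[dy::2, dx::2] for dy in (0, 1) for dx in (0, 1)}
reconstructed = interleave_shifted(frames)
```

Under these ideal assumptions the fine grid is recovered exactly; with pixel integration each frame becomes a blurred, decimated version of the scene, and the interleaved image must then be deconvolved.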

8 Partnerships and cooperations

8.1 International initiatives

Associate Teams in the framework of an Inria International Lab or in the framework of an Inria International Program

COMCAUSA

-

Associated team:

COMCAUSA

- Title: Computation of causal structures

- Duration: started 2021.

- Coordinators: J. P. Crutchfield (chaos@cse.ucdavis.edu), N. Brodu

- Partners: University of California Davis, Geostat contact: N. Brodu

- Summary: The associate team Comcausa was created as part of the Inria@SiliconValley international lab, between Inria Geostat and the Complexity Sciences Center at University of California, Davis. This team is managed by N. Brodu (Inria) and J. P. Crutchfield (UC Davis).

8.2 International research visitors

Visits of international scientists:

- Sarah Marzen, assistant professor at the Keck Science Department of Pitzer, Scripps, and Claremont McKenna Colleges, and participant in the Comcausa associated team, visited Geostat for one week in December 2022.

- Alexandra Jürgens, post-doctoral researcher in the domains of stochastic modeling, information theory and stochastic thermodynamics, and member of the Comcausa associated team, visited Geostat in April-May 2022. Duration: 2 weeks.

8.3 European initiatives

Other European programs/initiatives:

-

GENESIS:

GENESIS (GENeration and Evolution of Structure in the ISm). Funding: 385728 euros.

- Deutsch-französische Projekte in den Natur-, Lebens- und Ingenieurwissenschaften (French-German projects in the natural, life and engineering sciences). Duration: 3 years, extended in 2022.

- Geostat, Laboratoire d'Astrophysique de Bordeaux, Institut de Physique de l'Université de Cologne (KOSMA)

- Precise evaluation of the multiscale MHD (magnetohydrodynamic) properties of ISMs (interstellar molecular clouds) from Herschel data acquisitions, and of their correlations with the star formation process.

- https://astro.uni-koeln.de/stutzki/research/genesis.

8.4 National initiatives

-

PEPR:

with AgroParisTech. Title: Modeling the global states and dynamics of ecosystems - Applications to carbon flux and stock in ecosystems modified by agricultural activities. This project supports the research on causality currently conducted in Geostat and in the post-Geostat team being set up by N. Brodu. PIs: N. Brodu, E. Vaudour. Duration: 60 months. Funding: 1.5 M euros.

- CONCAUST Exploratory Action. The exploratory action « TRACME » was renamed « CONCAUST » and is progressing well. The collaboration with J. P. Crutchfield and his laboratory has led to a first article, “Discovering Causal Structure with Reproducing-Kernel Hilbert Space ε-Machines”. That article lays the main theoretical foundations for building a new class of models able to reconstruct the « causal states » of a measured process from data.

- CovidVoice project: Inria Covid-19 mission. The CovidVoice project evolved into the VocaPnée project, in partnership with AP-HP and co-directed by K. Daoudi and Thomas Similowski, head of the pulmonology and intensive care department at La Pitié-Salpêtrière hospital and UMR-S 1158. The objective of the VocaPnée project is to bring together all the skills available at Inria to develop and validate a vocal biomarker for the remote monitoring of patients at home suffering from an acute (such as Covid) or chronic (such as asthma) respiratory disease. This biomarker will then be integrated into a telemedicine platform, ORTIF or COVIDOM for Covid, to assist doctors in assessing the patient's respiratory status. VocaPnée comprises two longitudinal pilot clinical studies: one in hospital and one in telemedicine.

- VocaPy. The ADT (AI Plan) project VocaPy, led by K. Daoudi, started in November 2021 for a duration of 2 years. The goal of VocaPy is to develop a Python library dedicated to pathological speech analysis and the design of vocal biomarkers. If successful, our R&D on vocal biomarkers would have a non-negligible impact at the clinical, scientific, technological and socio-economic levels. VocaPy and VocaPnée-Infra will serve as a core for the development of disease-specific AI solutions achieving the clinical targets, and for their technological transfer to the clinical (and/or future industrial) partners.

- ANR project Voice4PD-MSA, led by K. Daoudi, which targets the differential diagnosis between Parkinson's disease and Multiple System Atrophy. The total amount of the grant is 468555 euros, of which GeoStat receives 203078 euros. The initial duration of the project was 42 months; it has been extended until January 2023. Partners: CHU Bordeaux (Bordeaux), CHU Toulouse, IRIT, IMT (Toulouse).

- GEOSTAT is a member of the ISIS (Information, Image & Vision) and AMF (Multifractal Analysis) GDRs.

- GEOSTAT is participating in the CNRS IMECO project, Intermittence multi-échelles de champs océaniques : analyse comparative d’images satellitaires et de sorties de modèles numériques (multiscale intermittency of oceanic fields: comparative analysis of satellite images and numerical model outputs). CNRS call AO INSU 2018. PI: F. Schmitt, DR CNRS, UMR LOG 8187. Duration: 2 years.

9 Dissemination

9.1 Promoting scientific activities

Chair of conference program committees

K. Daoudi was session chair on vocal biomarkers at Interspeech 2022.

9.1.1 Journal

Member of the editorial boards

H. Yahia is a member of the editorial board of the journal Frontiers in Physiology.

9.1.2 Invited talks

H. Yahia, ENS Paris: talk given at the radioastronomy laboratory of the physics department (E. Falgarone's team), January 27, 2022, on the results of the GENESIS project.

9.1.3 Scientific expertise

H. Yahia is an expert for the ERC program at the European Commission.

9.2 Teaching - Supervision - Juries

9.2.1 Supervision

H. Yahia co-supervised A. Rashidi's PhD thesis, defended on March 29, 2022.

9.2.2 Juries

H. Yahia was a member of the jury for the PhD defense of N. Manoucheheri, "Generative learning models and applications in healthcare", June 6, 2022, Concordia University.

10 Scientific production

10.1 Major publications

- 1 Multifractal Desynchronization of the Cardiac Excitable Cell Network During Atrial Fibrillation. II. Modeling. Frontiers in Physiology, 10, April 2019, 480 (1-18).

- 2 Multifractal desynchronization of the cardiac excitable cell network during atrial fibrillation. I. Multifractal analysis of clinical data. Frontiers in Physiology, 8, March 2018, 1-30.

- 3 A Non-Local Low-Rank Approach to Enforce Integrability. IEEE Transactions on Image Processing, June 2016, 10.

- 4 Fast and Accurate Texture Recognition with Multilayer Convolution and Multifractal Analysis. European Conference on Computer Vision, ECCV 2014, Zürich, Switzerland, September 2014.

- 5 Increasing the Resolution of Ocean pCO₂ Maps in the South Eastern Atlantic Ocean Merging Multifractal Satellite-Derived Ocean Variables. IEEE Transactions on Geoscience and Remote Sensing, June 2018, 1-15.

- 6 Reconstruction of super-resolution ocean pCO₂ and air-sea fluxes of CO₂ from satellite imagery in the Southeastern Atlantic. Biogeosciences, September 2015, 20.

- 7 Detection of Glottal Closure Instants based on the Microcanonical Multiscale Formalism. IEEE Transactions on Audio, Speech and Language Processing, December 2014.

- 8 Efficient and robust detection of Glottal Closure Instants using Most Singular Manifold of speech signals. IEEE Transactions on Acoustics Speech and Signal Processing, forthcoming, 2014.

- 9 Edges, Transitions and Criticality. Pattern Recognition, January 2014. URL: http://hal.inria.fr/hal-00924137

- 10 A Multifractal-based Wavefront Phase Estimation Technique for Ground-based Astronomical Observations. IEEE Transactions on Geoscience and Remote Sensing, November 2015, 11.

- 11 Singularity analysis in digital signals through the evaluation of their Unpredictable Point Manifold. International Journal of Computer Mathematics, 2012. URL: http://hal.inria.fr/hal-00688715

- 12 Ocean Turbulent Dynamics at Superresolution From Optimal Multiresolution Analysis and Multiplicative Cascade. IEEE Transactions on Geoscience and Remote Sensing, 53(11), June 2015, 12.

- 13 Microcanonical multifractal formalism: a geometrical approach to multifractal systems. Part I: singularity analysis. Journal of Physics A: Math. Theor., 41, 2008. URL: http://dx.doi.org/10.1088/1751-8113/41/1/015501

- 14 Motion analysis in oceanographic satellite images using multiscale methods and the energy cascade. Pattern Recognition, 43(10), 2010, 3591-3604. URL: http://dx.doi.org/10.1016/j.patcog.2010.04.011

10.2 Publications of the year

International journals

International peer-reviewed conferences

Doctoral dissertations and habilitation theses

10.3 Cited publications

- 19 Ondelettes, multifractales et turbulence. Diderot Editeur, Paris, France, 1995.

- 20 Modeling Complex Systems. Springer, New York, Dordrecht, Heidelberg, London, 2010.

- 21 Predictability: a way to characterize complexity. Physics Reports, 356, 2002, 367-474. arXiv:nlin/0101029v1. URL: http://dx.doi.org/10.1016/S0370-1573(01)00025-4

- 22 Reconstruction of epsilon-machines in predictive frameworks and decisional states. Advances in Complex Systems, 14(05), 2011, 761-794. URL: https://doi.org/10.1142/S0219525911003347

- 23 Modern Koopman Theory for Dynamical Systems. SIAM Review, 64(2), 2022, 229-340. URL: https://doi.org/10.1137/21M1401243

- 24 Chaos and Coarse Graining in Statistical Mechanics. Cambridge University Press, 2008.

- 25 Between order and chaos. Nature Physics, 8, 2012, 17-24.

- 26 Equations of Motion from a Data Series. Complex Syst., 1, 1987.

- 27 Inferring statistical complexity. Phys. Rev. Lett., 63(2), July 1989, 105-108. URL: https://link.aps.org/doi/10.1103/PhysRevLett.63.105

- 28 Motor Speech Disorders: Substrates, Differential Diagnosis, and Management. Elsevier, 2013.

- 29 Voice for Health: The Use of Vocal Biomarkers from Research to Clinical Practice. Digit. Biomark., 5(1), 2021.

- 30 About the role of chaos and coarse graining in statistical mechanics. Physica A: Statistical Mechanics and its Applications, 418, 2015, 94-104 (Proceedings of the 13th International Summer School on Fundamental Problems in Statistical Physics). URL: https://www.sciencedirect.com/science/article/pii/S0378437114004038

- 31 Measures of statistical complexity: Why? Physics Letters A, 238(4), 1998, 244-252. URL: https://www.sciencedirect.com/science/article/pii/S0375960197008554

- 32 Approaching complexity by stochastic methods: From biological systems to turbulence. Physics Reports, 506(5), 2011, 87-162. URL: https://www.sciencedirect.com/science/article/pii/S0370157311001530

- 33 Mixed LICORS: A Nonparametric Algorithm for Predictive State Reconstruction. 2012. URL: https://arxiv.org/abs/1211.3760

- 34 Multifield visualization using local statistical complexity. IEEE Transactions on Visualization and Computer Graphics, 13(6), 2007, 1384-1391.

- 35 Statistical physics, statics, dynamics & renormalization. World Scientific, 2000.

- 36 Multiscale entropy analysis of complex physiologic time series. Phys. Rev. Lett., 89(6), 2002.

- 37 Can the original equations of a dynamical system be retrieved from observational time series? Chaos, 29, 2019.

- 38 Kernel learning for robust dynamic mode decomposition: linear and nonlinear disambiguation optimization. Proc. R. Soc. A, 478, 20210830, 2022.

- 39 Generalized statistical complexity measures: Geometrical and analytical properties. Physica A: Statistical Mechanics and its Applications, 369(2), 2006, 439-462. URL: https://www.sciencedirect.com/science/article/pii/S0378437106001324

- 40 From Statistical Physics to Statistical Inference and Back. Springer, New York, Heidelberg, Berlin, 1994. URL: http://www.springer.com/physics/complexity/book/978-0-7923-2775-2

- 41 Gaussian Processes for Machine Learning. The MIT Press, November 2005. URL: https://doi.org/10.7551/mitpress/3206.001.0001

- 42 Blind Construction of Optimal Nonlinear Recursive Predictors for Discrete Sequences. 2004. URL: https://arxiv.org/abs/cs/0406011

- 43 Sparse Image and Signal Processing: Wavelets, Curvelets, Morphological Diversity. Cambridge University Press, 2010. ISBN: 9780521119139.

- 44 Detecting Strange Attractors in Turbulence. Non Linear Optimization, 898, 1981, 366-381. URL: http://www.springerlink.com/content/b254x77553874745/

- 45 Time-Frequency Analysis as Probabilistic Inference. IEEE Transactions on Signal Processing, 62(23), 2014, 6171-6183.

- 46 Revisiting multifractality of high resolution temporal rainfall using a wavelet-based formalism. Water Resources Research, 42, 2006.