2023 Activity report - Project-Team ACENTAURI

RNSR: 202124072D - Research center: Inria Centre at Université Côte d'Azur

- Team name: Artificial intelligence and efficient algorithms for autonomous robotics

- Domain: Perception, Cognition and Interaction

- Theme: Robotics and Smart environments

Keywords

Computer Science and Digital Science

- A3.4.1. Supervised learning

- A3.4.3. Reinforcement learning

- A3.4.4. Optimization and learning

- A3.4.5. Bayesian methods

- A3.4.6. Neural networks

- A3.4.8. Deep learning

- A5.4.4. 3D and spatio-temporal reconstruction

- A5.4.5. Object tracking and motion analysis

- A5.4.7. Visual servoing

- A5.10.2. Perception

- A5.10.3. Planning

- A5.10.4. Robot control

- A5.10.5. Robot interaction (with the environment, humans, other robots)

- A5.10.6. Swarm robotics

- A5.10.7. Learning

- A6.2.3. Probabilistic methods

- A6.2.4. Statistical methods

- A6.2.5. Numerical Linear Algebra

- A6.2.6. Optimization

- A6.4.2. Stochastic control

- A6.4.3. Observability and Controlability

- A6.4.4. Stability and Stabilization

- A6.4.6. Optimal control

- A7.1.4. Quantum algorithms

- A8.2. Optimization

- A8.3. Geometry, Topology

- A8.11. Game Theory

- A9.2. Machine learning

- A9.5. Robotics

- A9.6. Decision support

- A9.10. Hybrid approaches for AI

Other Research Topics and Application Domains

- B5.1. Factory of the future

- B5.6. Robotic systems

- B7.2. Smart travel

- B7.2.1. Smart vehicles

- B7.2.2. Smart road

- B8.2. Connected city

1 Team members, visitors, external collaborators

Research Scientists

- Ezio Malis [Team leader, INRIA, Senior Researcher, HDR]

- Philippe Martinet [INRIA, Senior Researcher, HDR]

- Patrick Rives [INRIA, Emeritus, HDR]

Post-Doctoral Fellows

- Minh Quan Dao [INRIA, Post-Doctoral Fellow, from Dec 2023]

- Hasan Yilmaz [INRIA, Post-Doctoral Fellow, from Jun 2023]

PhD Students

- Emmanuel Alao [UTC Compiegne, co-supervision]

- Matteo Azzini [UNIV COTE D'AZUR]

- Kaushik Bhowmik [Inria CHROMA, co-supervision]

- Enrico Fiasche [UNIV COTE D'AZUR]

- Monica Fossati [UNIV COTE D'AZUR, from Oct 2023]

- Stefan Larsen [INRIA]

- Fabien Lionti [INRIA]

- Ziming Liu [INRIA]

- Diego Navarro Tellez [CEREMA]

- Mathilde Theunissen [LS2N Nantes, co-supervision]

Technical Staff

- Erwan Amraoui [INRIA, Engineer]

- Marie Aspro [INRIA, Engineer, from Nov 2023]

- Matthias Curet [INRIA, Engineer, from May 2023]

- Pierre Joyet [INRIA, Engineer]

- Pardeep Kumar [INRIA, Engineer, from Aug 2023]

- Quentin Louvel [INRIA, Engineer]

- Louis Verduci [INRIA, Engineer]

Interns and Apprentices

- Thomas Campagnolo [INRIA, Intern, from Sep 2023]

- Monica Fossati [INRIA, Intern, from Feb 2023 until Jul 2023]

Administrative Assistant

- Patricia Riveill [INRIA]

2 Overall objectives

The goal of ACENTAURI is to study and develop intelligent, autonomous and mobile robots that collaborate with each other to achieve challenging tasks in dynamic environments. The team focuses on perception, decision and control problems for multi-robot collaboration by proposing an original hybrid model-driven/data-driven approach to artificial intelligence and by studying efficient algorithms. The team targets robotic applications such as environment monitoring and the transportation of people and goods. In these applications, several robots will share multi-sensor information, possibly coming from the infrastructure. The team will demonstrate the effectiveness of the proposed approaches on real robotic systems such as Autonomous Ground Vehicles (AGVs) and Unmanned Aerial Vehicles (UAVs), together with industrial partners.

The scientific objectives that we want to achieve are to develop:

- robots that are able to perceive unstructured and changing environments (in space and time) in real time through their sensors, and to build large-scale semantic representations that take into account the uncertainty of interpretation and the incompleteness of perception. The main scientific bottlenecks are (i) how to go beyond purely geometric maps to obtain a semantic understanding of the scene and (ii) how to share these representations between robots with different sensorimotor capabilities so that they can collaborate to perform a common task.

- autonomous robots, in the sense that they must be able to accomplish complex tasks by taking high-level cognitive-based decisions without human intervention. The robots evolve in an environment possibly populated by humans, possibly in collaboration with other robots or communicating with the infrastructure (collaborative perception). The main scientific bottlenecks are (i) how to anticipate unexpected situations created by unpredictable human behavior using the collaborative perception of robots and infrastructure and (ii) how to design robust sensor-based control laws that ensure robot integrity and human safety.

- intelligent robots, in the sense that they must (i) decide their actions in real time on the basis of the semantic interpretation of the state of the environment and of their own state (situation awareness), (ii) manage uncertainty in sensing, control and the dynamic environment, (iii) predict in real time the future states of the environment, taking into account their own safety and human safety, and (iv) acquire new capacities and skills, or refine existing skills, through learning mechanisms.

- efficient algorithms able to process large amounts of data and solve hard problems in robotic perception, learning, decision and control. The main scientific bottlenecks are (i) how to design new efficient algorithms that reduce the processing time on ordinary computers and (ii) how to design new quantum algorithms that reduce the computational complexity, in order to solve problems that cannot be solved in reasonable time on ordinary computers.

3 Research program

The research program of ACENTAURI will focus on intelligent autonomous systems, which must be able to sense, analyze, interpret, know and decide what to do in the presence of a dynamic and living environment. Defining a robotic task in a living and dynamic environment requires setting up a framework in which the interactions between the robot or the multi-robot system, the infrastructure and the environment can be described from a semantic level down to a canonical space, at different levels of abstraction. This description will be dynamic and based on sensory memory and short/long-term memory mechanisms. This will require expanding and developing (i) the knowledge of the interaction between robots and the environment (using both model-driven and data-driven approaches), (ii) the knowledge of how to perceive and control these interactions, (iii) situation awareness, and (iv) hybrid architectures (using both model-driven and data-driven approaches) for monitoring the global process during the execution of the task.

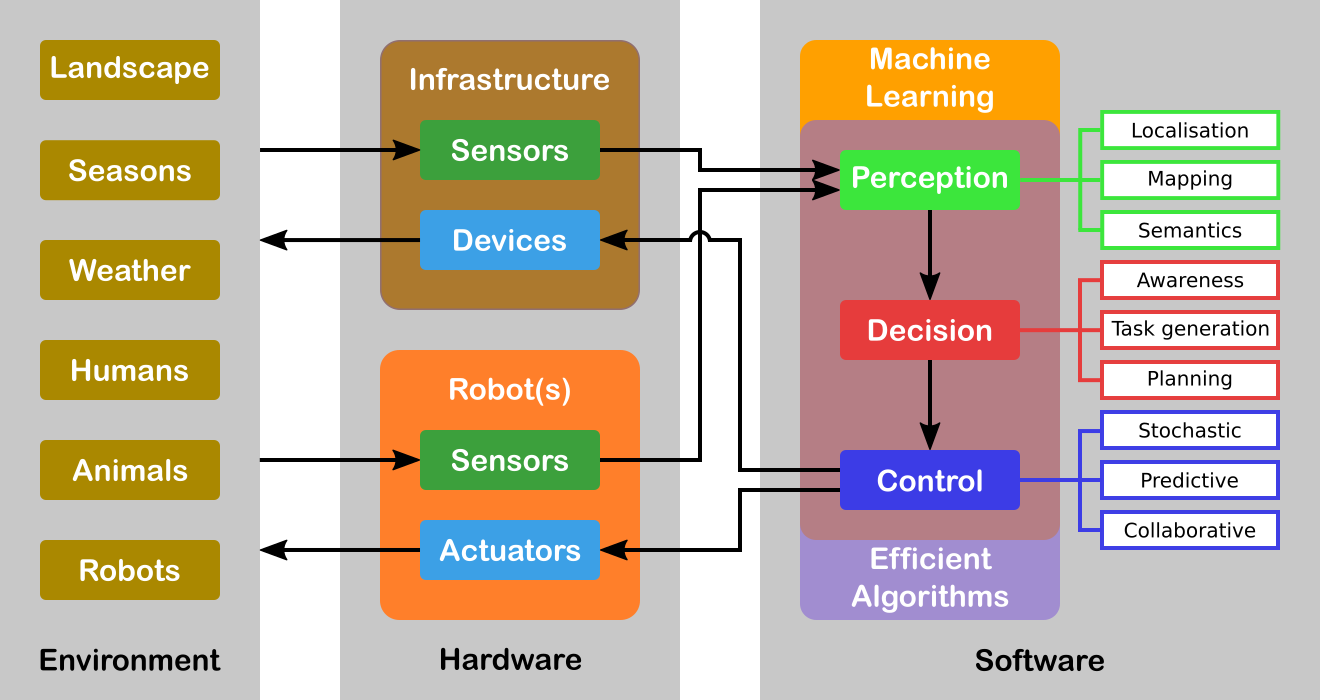

Figure 1 illustrates an overview of the global system, highlighting the core topics. For the sake of simplicity, we decompose our research program into three axes related to Perception, Decision and Control. However, it must be noted that these axes are highly interconnected (e.g. there is a duality between perception and control) and all problems should be addressed in a holistic approach. Moreover, Machine Learning is transversal to all the robot's capacities. Our objective is the design and development of a parameterizable architecture for Deep Learning (DL) networks incorporating a priori model-driven knowledge. We plan to do this by choosing specialized architectures depending on the task assigned to the robot and on the input (from standard to future sensor modalities). These DL networks must be able to encode spatio-temporal representations of the robot's environment. Indeed, the tasks we are interested in involve the evolution of the environment over time, since the data coming from the sensors may vary in time even for static elements of the environment. We are also interested in developing a novel network for situation awareness applications (mainly in the field of autonomous driving and proactive navigation).

Another transversal issue concerns the efficiency of the algorithms involved. Either we must process a large amount of data (for example, a standard full HD camera (1920x1080 pixels) produces around 5 terabits of raw data per hour), or the problem is hard to solve even when the underlying graph is planar. For example, path optimization problems for multiple robots are all non-deterministic polynomial-time complete (NP-complete). A particular emphasis will be given to efficient numerical analysis algorithms (in particular for optimization), which are omnipresent in all research axes. We will also explore a completely different and radically new methodology based on quantum algorithms. Several quantum basic linear algebra subroutines (BLAS) (Fourier transforms, finding eigenvectors and eigenvalues, solving linear equations) exhibit exponential quantum speedups over their best known classical counterparts. This quantum BLAS (qBLAS) translates into quantum speedups for a variety of algorithms, including linear algebra, least-squares fitting, gradient descent and Newton's method. The quantum methodology is completely new to the team; therefore, the practical interest of pursuing this research direction will have to be validated in the long term.
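The order of magnitude quoted above can be checked with a short calculation. The sketch below assumes uncompressed 24-bit RGB frames at 30 frames per second. Neither value is given in the text, and other choices (for example 25 fps) scale the result proportionally.

```python
# Back-of-the-envelope raw data volume of an uncompressed full HD video stream.
# Assumptions (not stated in the text): 24 bits per pixel (8-bit RGB), 30 fps.
WIDTH, HEIGHT = 1920, 1080
BITS_PER_PIXEL = 24      # assumed: 3 channels x 8 bits
FPS = 30                 # assumed frame rate
SECONDS_PER_HOUR = 3600


def raw_terabits_per_hour(width=WIDTH, height=HEIGHT, bpp=BITS_PER_PIXEL, fps=FPS):
    """Return the uncompressed data volume in terabits (1e12 bits) per hour."""
    bits_per_frame = width * height * bpp
    bits_per_hour = bits_per_frame * fps * SECONDS_PER_HOUR
    return bits_per_hour / 1e12


print(f"{raw_terabits_per_hour():.2f} Tbit/h")
```

Under these assumptions, the stream amounts to roughly 5.4 Tbit/h, consistent with the "around 5 Terabits/hour" figure.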

The research program of ACENTAURI will be decomposed in the following three research axes:

3.1 Axis A: Augmented spatio-temporal perception of complex environments

The long-term objective of this research axis is to build accurate and composite models of large-scale environments that mix metric, topological and semantic information. Ensuring the consistency of these various representations during robot exploration, and merging/sharing observations acquired from different viewpoints by several collaborative robots or by sensors attached to the infrastructure, are very difficult problems. This is particularly true when different sensing modalities are involved and when the environments are time-varying. A recent trend in Simultaneous Localization And Mapping (SLAM) is to augment low-level maps with a semantic interpretation of their content. Indeed, the semantic level of abstraction is the key element that will allow us to build the robot's environmental awareness (see Axis B). For example, so-called semantic maps have already been used in mobile robot navigation to improve path planning methods, mainly by providing the robot with the ability to deal with human-understandable targets. New studies to derive efficient algorithms for manipulating these hybrid representations (merging, sharing, updating, filtering) while preserving their consistency are needed for long-term navigation.
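To illustrate one of the operations listed above (merging), the following hypothetical sketch fuses two semantic grid layers observed by two robots. It is not the team's method, and it rests on three assumptions. First, both grids are already registered in a common metric frame. Second, each cell stores a categorical distribution over the same classes. Third, the two observations are conditionally independent given the true class, so the fused posterior is proportional to p_a * p_b / prior.

```python
import numpy as np


def fuse_semantic_grids(p_a, p_b, prior=None, eps=1e-12):
    """Fuse two aligned (H, W, C) grids of per-cell class probabilities.

    Assumes conditional independence of the two observations given the true class:
    p(c | z_a, z_b) is proportional to p(c | z_a) * p(c | z_b) / p(c).
    """
    p_a = np.asarray(p_a, dtype=float)
    p_b = np.asarray(p_b, dtype=float)
    if p_a.shape != p_b.shape:
        raise ValueError("grids must be registered in the same frame and have the same shape")
    n_classes = p_a.shape[-1]
    # Uniform class prior by default (an assumption; a learned prior could be passed instead)
    prior = np.full(n_classes, 1.0 / n_classes) if prior is None else np.asarray(prior, dtype=float)
    fused = p_a * p_b / np.maximum(prior, eps)
    # Renormalize each cell so its class probabilities sum to one
    return fused / np.maximum(fused.sum(axis=-1, keepdims=True), eps)


# One cell, three hypothetical classes (e.g. road, vegetation, building)
robot_1 = np.array([[[0.6, 0.3, 0.1]]])
robot_2 = np.array([[[0.7, 0.2, 0.1]]])
print(fuse_semantic_grids(robot_1, robot_2))
```

When both robots agree, the fused distribution becomes sharper than either input. The independence assumption is what makes this product rule valid. Correlated observations (for example, repeated views from the same robot) would make it overconfident.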

3.2 Axis B: Situation awareness for decision and planning

The long-term objective of this research axis is to design and develop a decision-making module that is able to (i) plan the mission of the robots (global planning), (ii) generate the sub-tasks (local objectives) necessary to accomplish the mission based on Situation Awareness and (iii) plan the robot paths and/or sets of actions to accomplish each sub-task (local planning). Since we have to face uncertainties, the decision module must be able to react efficiently in real time based on the available sensor information (on board or attached to an IoT infrastructure) in order to guarantee the safety of humans and things. For some tasks, it is necessary to coordinate a multi-robot system (centralized strategy), while for others each robot evolves independently with its own decentralized strategy. In this context, Situation Awareness is at the heart of an autonomous system, since it feeds the decision-making process, but it can also be seen as a way to evaluate the performance of the global process of perception and interpretation in order to build a safe autonomous system. Situation Awareness is generally divided into three parts: perception of the elements in the environment (see Axis A), comprehension of the situation, and projection of future states (prediction and planning). When planning the mission of the robots, the decision-making module will first assume that the configuration of the multi-robot system is known in advance, for example one robot on the ground and two robots in the air. However, in our long-term objectives, the number of robots and their configurations may evolve according to the application objectives to be achieved, particularly in terms of performance, but also to take into account the dynamic evolution of the environment.

3.3 Axis C: Advanced multi-sensor control of autonomous multi-robot systems

The long-term objective of this research axis is to design multi-sensor (on board or attached to an IoT infrastructure) based control of potentially multi-robot systems for tasks where the robots must navigate in a complex dynamic environment that includes humans. This implies that the controller design must explicitly deal not only with uncertainties and inaccuracies in the models of the environment and of the sensors, but also with constraints arising from unexpected human behavior. To deal with uncertainties and inaccuracies in the model, two strategies will be investigated. The first strategy is to use Stochastic Control techniques that assume known probability distributions on the uncertainties. The second strategy is to use system identification and reinforcement learning techniques to deal with differences between the models and the real systems. To deal with unexpected human behavior, we will investigate Stochastic Model Predictive Control (MPC) techniques and Model Predictive Path Integral (MPPI) control techniques in order to anticipate future events and take optimal control actions accordingly. A particular emphasis will be given to the theoretical analysis (observability, controllability, stability and robustness) of the control laws.
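The principle of Model Predictive Path Integral control can be sketched in a few lines. The version below is a simplified, illustrative MPPI applied to a toy 1D double integrator. It omits the control-cost term of the full formulation. The function names, cost weights and parameters (`n_samples`, `sigma`, `lam`, `DT`) are assumptions chosen for the example. At each step, randomly perturbed control sequences are rolled out through the model and weighted by exp(-cost/lam). Their perturbations are then averaged into a new nominal sequence, and only its first control is applied before re-planning.

```python
import numpy as np


def mppi_step(x0, u_nominal, dynamics, cost, n_samples=256, sigma=0.5, lam=1.0, rng=None):
    """One simplified MPPI update of a nominal control sequence u_nominal of shape (T, m).

    dynamics(x, u) and cost(x, u) must accept batches: x of shape (K, n), u of shape (K, m).
    """
    rng = np.random.default_rng() if rng is None else rng
    T, m = u_nominal.shape
    noise = rng.normal(0.0, sigma, size=(n_samples, T, m))      # control perturbations
    x = np.tile(np.asarray(x0, dtype=float), (n_samples, 1))     # (K, n) rollout states
    costs = np.zeros(n_samples)
    for t in range(T):
        u = u_nominal[t] + noise[:, t, :]
        x = dynamics(x, u)
        costs += cost(x, u)
    # Exponential weighting of rollouts (minimum cost subtracted for numerical stability)
    weights = np.exp(-(costs - costs.min()) / lam)
    weights /= weights.sum()
    # New nominal sequence = nominal + cost-weighted average of the perturbations
    return u_nominal + np.einsum("k,ktm->tm", weights, noise)


DT = 0.1  # integration step in seconds (assumed)


def double_integrator(x, u):
    """Batched 1D double integrator: state [position, velocity], control = acceleration."""
    pos, vel = x[:, 0], x[:, 1]
    return np.stack([pos + DT * vel, vel + DT * u[:, 0]], axis=1)


def tracking_cost(x, u, target=1.0):
    """Quadratic cost: reach the target position, with small velocity and control penalties."""
    return (x[:, 0] - target) ** 2 + 0.1 * x[:, 1] ** 2 + 0.01 * u[:, 0] ** 2


rng = np.random.default_rng(0)
state = np.array([0.0, 0.0])
u_seq = np.zeros((20, 1))                                        # 2 s horizon
for _ in range(100):                                             # receding-horizon loop
    u_seq = mppi_step(state, u_seq, double_integrator, tracking_cost, rng=rng)
    state = double_integrator(state[None, :], u_seq[:1])[0]      # apply the first control only
    u_seq = np.vstack([u_seq[1:], u_seq[-1:]])                   # warm start: shift the horizon
print(state)
```

Because MPPI only needs forward rollouts, the dynamics and cost can be non-differentiable or learned. This is one reason the method is attractive when uncertain human motion must be anticipated.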

4 Application domains

ACENTAURI focuses on two main applications in order to validate our research using the robotic platforms described in section 7.2. We are aware that ethical questions may arise when addressing such applications. ACENTAURI follows the recommendations of the Inria ethics committee, for example regarding confidentiality issues when processing data (General Data Protection Regulation, GDPR).

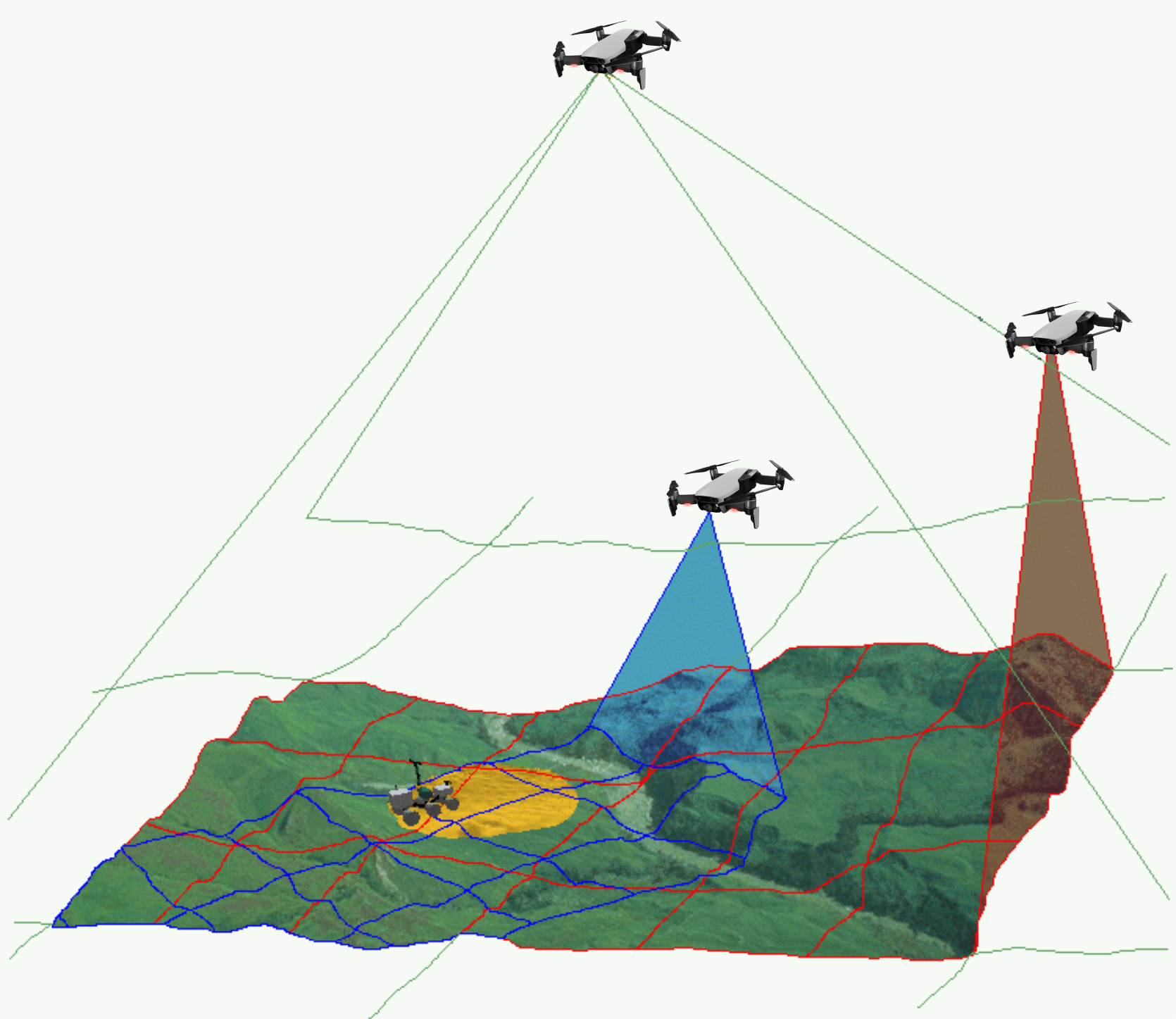

4.1 Environment monitoring with a collaborative robotic system

The first application that we will consider concerns monitoring the environment using an autonomous multi-robot system composed of ground robots and aerial robots (see Figure 2). The ground robots will patrol along a planned trajectory and will collaborate with the aerial drones to perform tasks in structured (e.g. industrial sites), semi-structured (e.g. presence of bridges, dams, buildings) or unstructured environments (e.g. agricultural land, forests, destroyed areas). In order to provide remote perception to the ground robots, one aerial drone will be in operation while the second one recharges its batteries on the ground vehicle. Coordinated and safe autonomous take-off and landing of the aerial drones will be a key factor in ensuring continuity of service over a long period of time. Such a multi-robot system can be used to localize survivors in disaster and rescue situations, to localize and track people or animals (for surveillance purposes), to follow the evolution of vegetation (or even invasions of insects or parasites), to follow the evolution of structures (bridges, dams, buildings, electrical cables) and to control actions in the environment, for example in agriculture (fertilization, pollination, harvesting, ...), in forests (rescue) and on land (firefighting planning). Successfully achieving such an application will require building a representation of the environment and localizing the robots in the map (see Axis A in section 3.1), re-planning the tasks of each robot when unpredictable events occur (see Axis B in section 3.2) and controlling each robot to execute its tasks (see Axis C in section 3.3). The scale and difficulty of the problems to be solved increase with the application field. In the Smart Factories field, the environment is relatively small, mostly structured and highly instrumented with sensors, and communication is possible.
In the Smart Territories field, we have large semi-structured or unstructured environments that are not instrumented. To set up demonstrations of this application, we intend to collaborate with industrial partners and local institutions. For example, we plan to set up a collaboration with the Parc Naturel Régional des Préalpes d'Azur to monitor the evolution of fir trees infested by bark beetles.

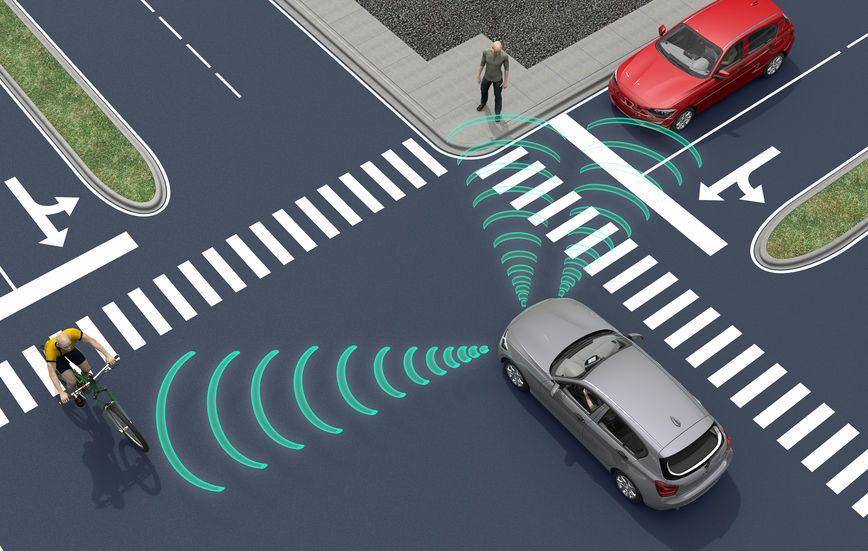

4.2 Transportation of people and goods with autonomous connected vehicles

The second application that we will consider concerns the transportation of people and goods with autonomous connected vehicles (see Figure 3). ACENTAURI will contribute to the development of Autonomous Connected Vehicles (e.g. learning, mapping, localization, navigation) and the associated services (e.g. towing, platooning, taxi). We will develop efficient algorithms to select, online, connected sensors from the infrastructure in order to extend and enhance the embedded perception of a connected autonomous vehicle. In cities, there are situations where visibility is very poor, for historical reasons or occasionally because of traffic congestion, service deliveries (trucks, buses) or roadworks. There are also situations where the danger is greater and where a connected system or an intelligent infrastructure can help enhance perception and thus reduce the risk of accidents (see Axis A in section 3.1). In ACENTAURI, we will also contribute to the development of assistance and service robotics by re-using the same technologies required in autonomous vehicles. By adding the social level to the representation of the environment and using proactive and social navigation techniques, we will allow the robot to adapt its behavior in the presence of humans (see Axis B in section 3.2). ACENTAURI will study sensing technology on SDVs (Self-Driving Vehicles) used for material handling, to improve efficiency and safety as products are moved around Smart Factories. These robots are able to sense and avoid people, as well as unexpected obstructions, in the course of their work (see Axis C in section 3.3). The ability to automatically avoid these common disruptions is a powerful advantage that keeps production running optimally. To set up demonstrations of this application, we will continue the collaboration with industrial partners (Renault) and with the Communauté d'Agglomération Sophia Antipolis (CASA).
Experiments with two autonomous Renault Zoe cars will be carried out in a dedicated space lent by CASA. Moreover, with the help of the Inria Service d'Expérimentation et de Développement (SED), we propose to set up a demonstration of an autonomous shuttle to transport people on the future extended Inria/UCA site.

5 Social and environmental responsibility

ACENTAURI is concerned with reducing the environmental footprint of its activities and is involved in several research projects related to environmental challenges.

5.1 Footprint of research activities

The main footprint of our research activities comes from travel and power consumption (computers and computer cluster). Travel has increased again after the limitations due to the COVID-19 pandemic, but we make our best efforts to favor videoconferencing. Concerning power consumption, besides classical actions to reduce energy waste, our research focuses on efficient optimization algorithms that minimize the computation time of the computers on board our robotic platforms.

5.2 Impact of research results

We plan to propose several projects related to environmental challenges. Below, we give three examples of the most advanced projects, which have recently started.

The first two concern forest monitoring: one in collaboration with the Parc Naturel Régional des Préalpes d'Azur, ONF and DRAAF (EPISUD project), and one in collaboration with INRAE UR629 Ecologie des Forêts Méditerranéennes (see ROBFORISK project in section 10.3.7).

The third concerns autonomous vehicles for agricultural applications, in collaboration with INRAE Clermont-Ferrand in the context of the PEPR "Agroécologie et numérique". We aim to develop robotic approaches for implementing new cropping practices that can act as a lever for agroecological practices (see NINSAR project in section 10.3.5).

6 Highlights of the year

6.1 Team progression

This year has proven not only promising but also marked by the continuous growth of our team. In particular, we have:

- welcomed six new members to our team, each bringing unique skills, experiences, and perspectives.

- kicked off three new collaborative projects (ROBFORISK, NINSAR, Agrifood-TEF).

- started the AISENSE associated team with the AVELAB of KAIST in Korea.

We organised a two-day team seminar in Saint-Raphaël to foster scientific discussion and collaboration between team members (see Figure 4).

We have also initiated a biweekly robotics seminar involving both Inria (HEPHAISTOS and ACENTAURI) and I3S (OSCAR, ROBOTVISION, ...) robotics teams in order to disseminate information about the latest advances, trends and research in robotics.

6.2 Awards

Mathilde Theunissen won the Best Poster Award at JJCR (Journée des Jeunes Chercheurs en Robotique), first day of the JNRR (Journées Nationales de la Recherche en Robotique) for her work titled "Multi-robots localization and navigation for infrastructure monitoring".

7 New software, platforms, open data

7.1 New software

7.1.1 OPENROX

- Keywords: Robotics, Library, Localization, Pose estimation, Homography, Mathematical Optimization, Computer vision, Image processing, Geometry Processing, Real time

- Functional Description: Cross-platform C library for real-time robotics:
  - sensors calibration
  - visual identification and tracking
  - visual odometry
  - lidar registration and odometry

- Authors: Ezio Malis

- Contact: Ezio Malis

- Partner: Robocortex

7.2 New platforms

Participants: Ezio Malis, Philippe Martinet, Nicolas Chleq, Emmanuel Alao, Quentin Louvel.

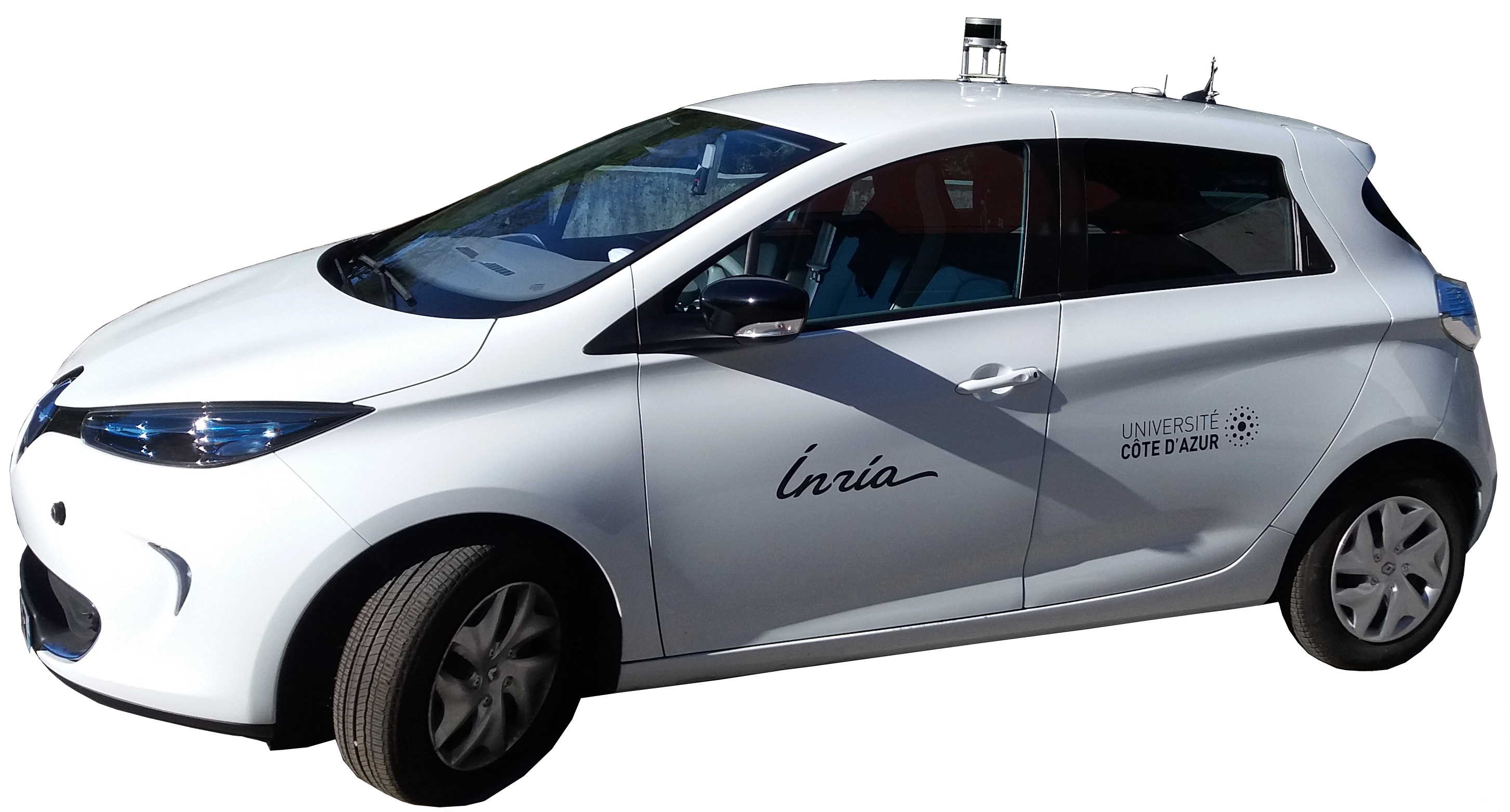

ICAV platform

The ICAV platform has been funded by the PUV@SOPHIA project (CASA, PACA Region and State), by self-funding, by the Digital Reference Center of UCA, and by Academy 1 of UCA. We now have two autonomous vehicles, one instrumented vehicle, many sensors (RTK GPS, lidars, cameras), communication devices (C-V2X, IEEE 802.11p), and one standalone localization and mapping system.

The ICAV platform is composed of the following elements (see Figure 5):

- ICAV1 is an older-generation ZOE. It was bought fully robotized and instrumented. It is equipped with a Velodyne VLP16 lidar, a low-cost IMU and GPS, three cameras and one embedded computer.

- ICAV2 is a new-generation ZOE which was instrumented and robotized in 2021. It is equipped with a Velodyne VLP16 lidar, a low-cost IMU and GPS, three cameras, two RS-M1 solid-state lidars, one embedded computer and one NVIDIA Jetson AGX Xavier.

- ICAV3 will be instrumented with different lidars and a multi-camera system (LADYBUG5+).

- A ground truth RTK system. An RTK GPS base station has been installed and a local server configured inside the Inria Center. Each vehicle is equipped with an RTK GPS receiver connected to the local server in order to achieve centimeter-level localization accuracy.

- A standalone localization and mapping system. This system is composed of a Velodyne VLP16 lidar, a low-cost IMU and GPS, and one NVIDIA Jetson AGX Xavier.

- A V2X communication system based on the C-V2X and IEEE 802.11p technologies.

- Different lidar sensors (Ouster OS2-128, RS-LIDAR16, RS-LIDAR32, RS-Ruby) and one multi-camera system (LADYBUG5+).

The main applications of this platform are:

- datasets acquisition

- localization, mapping, depth estimation, semantization

- autonomous navigation (path following, parking, platooning, ...), proactive navigation in shared space

- situation awareness and decision making

- V2X communication

- autonomous landing of UAVs on the roof

ICAV2 has been used by Maria Kabtoul in order to demonstrate the effectiveness of autonomous navigation of a car in a crowd.

Indoor autonomous mobile platform

The mobile robot platform has been funded by the MOBIDEEP project in order to demonstrate autonomous navigation capabilities in cluttered and crowded environments. This platform is composed of (see Figure 6):

- one omnidirectional mobile robot (SCOUT MINI with mecanum wheels from AgileX)

- one NVIDIA Jetson AGX Xavier for deep learning algorithm implementation

- one general-purpose laptop

- one Robosense RS-LIDAR16

- one Ricoh Z1 360° camera

- one Intel RealSense D455 RGB-D camera

The main applications of this platform are:

- indoor datasets acquisition

- localization, mapping, depth estimation, semantization

- proactive navigation in shared space

- pedestrian detection and tracking

This platform is used in the MOBI-DEEP project to integrate the work of the different consortium partners. It is used to demonstrate new results on social navigation.

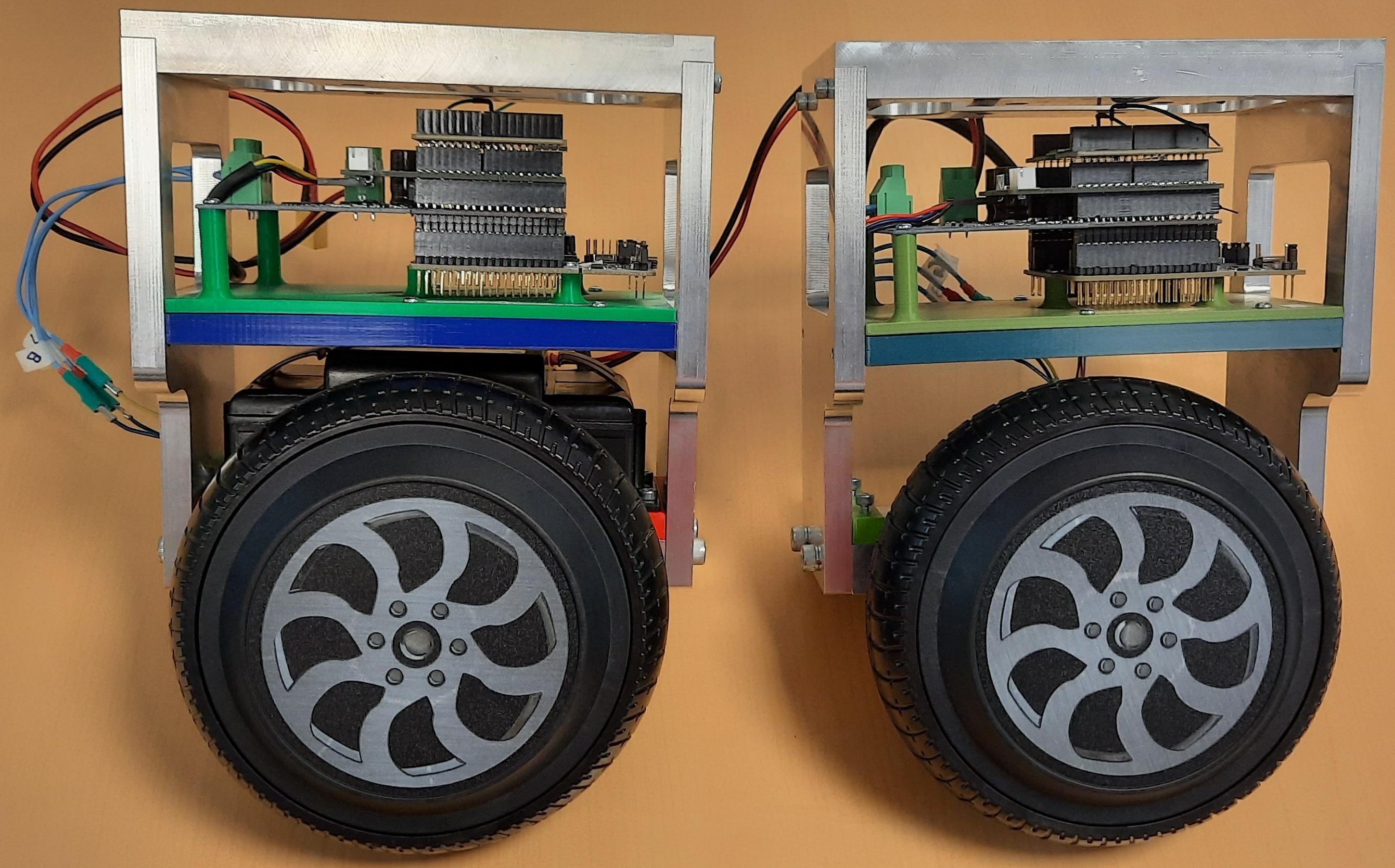

E-Wheeled platform

E-WHEELED is an Inria AMDT project (2019-22) coordinated by Philippe Martinet. The aim is to provide mobility to things by implementing connectivity techniques. It provides ACENTAURI with an Inria expert engineer (Nicolas Chleq) in order to demonstrate the proof of concept using a small demonstrator (see Figure 7). The project has been delayed due to the COVID-19 pandemic.

8 New results

Navigation of Autonomous Vehicles Around Pedestrians

Participants: Maria Kabtoul, Manon Prédhumeau [LIG Grenoble], Anne Spalanzani, Julie Dugdale [LIG Grenoble], Philippe Martinet.

The navigation of autonomous vehicles around pedestrians is a key challenge when driving in urban environments. It is essential to test the proposed navigation system in simulation before moving to real-life implementation and testing. Evaluating the performance of the system requires designing a diverse set of tests that span the targeted working scenarios and conditions. These tests can then be evaluated using a set of adapted performance metrics. The work presented in [3] addresses the problem of performance evaluation for an autonomous vehicle in a space shared with pedestrians. The methodology for designing the test simulations is discussed. Moreover, a group of performance metrics is proposed to evaluate the different aspects of the navigation: motion safety, the quality of the generated trajectory and the comfort of the pedestrians surrounding the vehicle. Furthermore, the pass/fail criterion for each metric is discussed. The proposed evaluation method is illustrated by evaluating the performance of a pre-designed proactive navigation system with a shared-space crowd simulator under the Robot Operating System (ROS).
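To make the three families of metrics concrete, the sketch below computes one hypothetical indicator for each:

- motion safety: minimum vehicle-pedestrian distance;
- trajectory quality: straight-line distance divided by travelled distance;
- pedestrian comfort: fraction of time steps in which a pedestrian is inside an assumed personal-space radius.

The metric choices and all thresholds in `passes` are illustrative assumptions, not necessarily those used in the cited work.

```python
import numpy as np


def navigation_metrics(vehicle_xy, pedestrians_xy, personal_space=1.2):
    """vehicle_xy: (N, 2) vehicle positions; pedestrians_xy: (N, P, 2) pedestrian
    positions at the same N time steps. personal_space (m) is an assumed radius."""
    v = np.asarray(vehicle_xy, dtype=float)
    p = np.asarray(pedestrians_xy, dtype=float)
    dists = np.linalg.norm(p - v[:, None, :], axis=-1)           # (N, P) distances
    path_length = np.linalg.norm(np.diff(v, axis=0), axis=1).sum()
    straight = np.linalg.norm(v[-1] - v[0])
    return {
        # Motion safety: closest approach to any pedestrian
        "min_distance": float(dists.min()),
        # Trajectory quality: 1.0 for a straight path, lower for detours
        "path_efficiency": float(straight / path_length) if path_length > 0 else 1.0,
        # Pedestrian comfort: share of time steps with someone inside personal space
        "intrusion_ratio": float((dists.min(axis=1) < personal_space).mean()),
    }


def passes(m, d_min=0.5, eff_min=0.8, intrusion_max=0.1):
    """Hypothetical pass/fail thresholds, one per metric."""
    return (m["min_distance"] >= d_min
            and m["path_efficiency"] >= eff_min
            and m["intrusion_ratio"] <= intrusion_max)


t = np.linspace(0.0, 10.0, 11)
vehicle = np.stack([t, np.zeros_like(t)], axis=1)               # straight 10 m path
pedestrian = np.tile([[5.0, 3.0]], (11, 1, 1))                  # one static pedestrian, 3 m aside
m = navigation_metrics(vehicle, pedestrian)
print(m, passes(m))
```

In a simulator-based evaluation, such functions would be run on the logged trajectories of each test scenario. The per-metric verdicts can then be aggregated over the test set.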

Representation of the environment in autonomous driving applications

Participants: Ziming Liu, Ezio Malis, Philippe Martinet.

Visual odometry is an important task for the localization of autonomous robots, and several approaches have been proposed in the literature. We consider visual odometry approaches that use stereo images, since the depth of the observed scene can then be estimated at the correct metric scale. These approaches do not suffer from the scale estimation problem of monocular visual odometry approaches, which can only estimate the translation up to a scale factor. Traditional model-based visual odometry approaches are generally divided into two steps. First, the depths of the observed scene are estimated from the disparity obtained by matching the left and right images. Then, the depths are used to obtain the camera pose. The depths can be computed for selected features, as in sparse methods, or for all possible pixels in the image, as in dense direct methods [1].
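For a rectified stereo pair, the first step relies on the classical relation Z = f * B / d. Here Z is the depth, f the focal length in pixels, B the baseline and d the disparity. A minimal sketch, with hypothetical calibration values, that marks pixels without a valid disparity as NaN:

```python
import numpy as np


def disparity_to_depth(disparity, focal_px, baseline_m, min_disp=1e-6):
    """Depth Z = f * B / d for a rectified stereo pair.

    Pixels whose disparity is not strictly above min_disp (no match, or
    infinitely far) are returned as NaN instead of dividing by zero.
    """
    d = np.asarray(disparity, dtype=float)
    depth = np.full(d.shape, np.nan)
    valid = d > min_disp
    depth[valid] = focal_px * baseline_m / d[valid]
    return depth


# Hypothetical calibration: 700 px focal length, 0.5 m baseline
print(disparity_to_depth([35.0, 70.0, 0.0], focal_px=700.0, baseline_m=0.5))
```

Since depth is inversely proportional to disparity, a one-pixel matching error costs much more accuracy far away than close by. This is one reason why accurate (possibly learned) disparity estimation matters for stereo odometry.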

Recently, more and more end-to-end deep learning visual odometry approaches have been proposed, including supervised and unsupervised models. However, hybrid visual odometry approaches, which combine a neural network for depth estimation with a model-based visual odometry approach, have been shown to achieve better results. Moreover, recent works have shown that deep learning based approaches can perform much better than traditional stereo matching approaches and provide more accurate depth estimation.

State-of-the-art supervised stereo depth estimation networks can be trained with ground truth disparity (depth) maps. However, ground truth depth maps are extremely difficult (almost impossible, for dense depth maps) to obtain for all pixels in real and variable environments. Therefore, some works train models in simulated environments and then reduce the gap with real-world data. However, only a few works focus on unsupervised stereo matching. Besides using ground truth depth maps or unsupervised training, we can also build a temporal image reconstruction loss using the ground truth camera pose, which is easy to obtain.
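A temporal reconstruction loss with a known camera pose can be sketched as follows:

- back-project the pixels of frame t using the predicted depth;
- move the resulting 3D points into a source frame with the ground-truth relative pose (R, t);
- re-project them with the intrinsics K;
- average the photometric difference over pixels that land inside the image.

This is a generic formulation under stated assumptions: a pinhole camera, grayscale images, and nearest-neighbour sampling instead of the differentiable bilinear sampling a network would need. It is not claimed to be the exact PDENet loss.

```python
import numpy as np


def reprojection_photometric_loss(img_t, img_s, depth_t, K, R, t):
    """Mean absolute photometric error between img_t and img_s warped into frame t.

    depth_t: (H, W) depth of frame t; K: 3x3 intrinsics; (R, t): known pose mapping
    3D points from the frame-t camera to the source camera. Grayscale images assumed.
    """
    H, W = depth_t.shape
    u, v = np.meshgrid(np.arange(W), np.arange(H))                # pixel grid, row-major
    pix = np.stack([u.ravel(), v.ravel(), np.ones(H * W)])        # (3, HW) homogeneous
    pts_t = (np.linalg.inv(K) @ pix) * depth_t.ravel()            # back-project to 3D
    pts_s = R @ pts_t + np.asarray(t, dtype=float).reshape(3, 1)  # move into source frame
    proj = K @ pts_s
    in_front = proj[2] > 1e-6
    z = np.where(in_front, proj[2], 1.0)                          # avoid division by zero
    us = np.round(proj[0] / z).astype(int)                        # nearest-neighbour sampling
    vs = np.round(proj[1] / z).astype(int)
    valid = in_front & (us >= 0) & (us < W) & (vs >= 0) & (vs < H)
    if not valid.any():
        return float("nan")
    warped = img_s[vs[valid], us[valid]]
    return float(np.abs(warped - img_t.ravel()[valid]).mean())


# Toy check: a 0.05 m lateral camera shift at 5 m depth with f = 100 px moves pixels by 1 column
rng = np.random.default_rng(1)
H, W = 24, 32
img = rng.random((H, W))
depth = np.full((H, W), 5.0)
K = np.array([[100.0, 0.0, 16.0], [0.0, 100.0, 12.0], [0.0, 0.0, 1.0]])
print(reprojection_photometric_loss(img, np.roll(img, 1, axis=1), depth, K, np.eye(3), [0.05, 0.0, 0.0]))
```

With correct depths and pose, the warped source image matches the target and the loss vanishes. Wrong depths increase it, which is what provides a training signal without ground truth depth maps.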

The main contributions of our work published in [2] are (i) a novel pose-supervised network, named PDENet, for dense stereo depth estimation, which achieves State Of The Art (SOTA) results without ground truth depth maps, and (ii) a dense hybrid stereo visual odometry system, which combines the deep stereo depth estimation network with a robust model-based Dense Pose Estimation module, named DPE.

In [7], we address the problem of increasing the precision of the previous work. Indeed, machine learning methods hallucinate depths even in areas where the depth cannot be estimated for various reasons, such as occlusions or homogeneous areas. This generally produces wrong depth estimates that lead to errors in odometry estimation. To avoid this problem, we propose a new approach that generates multiple masks, which are combined to discard wrong pixels and therefore increase the accuracy of visual odometry. Our key contribution is to use the multiple masks not only in the odometry computation but also to improve the learning of the neural network that generates the depth maps. Experiments on several datasets show that masked dense direct stereo visual odometry provides much more accurate results than previous approaches in the literature.
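The idea of combining several validity masks can be sketched with two hypothetical masks. The first is a left-right disparity consistency check and the second a valid depth range. They are combined with a logical AND, and the result restricts a residual to trustworthy pixels. The specific masks and tolerances are assumptions for illustration; the masks actually used in the work may differ.

```python
import numpy as np


def left_right_consistency_mask(disp_left, disp_right, tol=1.0):
    """Keep left pixel (v, u) if the right-image disparity at (v, u - d) agrees within tol pixels."""
    H, W = disp_left.shape
    v, u = np.indices((H, W))
    u_r = np.round(u - disp_left).astype(int)        # matching column in the right image
    inside = (u_r >= 0) & (u_r < W)
    mask = np.zeros((H, W), dtype=bool)
    mask[inside] = np.abs(disp_left[inside] - disp_right[v[inside], u_r[inside]]) <= tol
    return mask


def depth_range_mask(depth, z_min, z_max):
    """Keep pixels whose depth lies in an assumed trustworthy range [z_min, z_max]."""
    return np.isfinite(depth) & (depth >= z_min) & (depth <= z_max)


def combine_masks(*masks):
    """A pixel is kept only if every mask keeps it (logical AND)."""
    out = np.ones(masks[0].shape, dtype=bool)
    for m in masks:
        out &= m
    return out


def masked_mean(residual, mask):
    """Average a per-pixel residual (e.g. photometric error) over kept pixels only."""
    return float(residual[mask].mean()) if mask.any() else float("nan")


disp = np.full((4, 6), 2.0)                 # constant disparity: first 2 columns have no match
depth = np.full((4, 6), 3.0)
depth[0, 5] = 200.0                         # an implausibly far (hallucinated) depth
mask = combine_masks(left_right_consistency_mask(disp, disp), depth_range_mask(depth, 0.5, 80.0))
print(mask.sum())
```

The same combined mask can weight the odometry cost and, during training, the network loss. This mirrors the idea of using the masks in both places.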

Decision making for autonomous driving in urban environment

Participants: Monica Fossati, Philippe Martinet, Ezio Malis.

Autonomous vehicles operating in urban environments face a complex decision-making problem due to the uncertain and dynamic nature of their surroundings. Significant progress has been made in assisted and autonomous driving in certain contexts, but fully self-driving vehicles that can operate safely in a human environment and protect the safety of passengers and of those around the vehicle have yet to be developed. This work focuses on two main aspects of the problem: the ability to learn from experience how to navigate in the environment, and thus to handle new situations, and the partial observability of the navigation environment due to uncertainties in perception. To address the first challenge, reinforcement learning principles were applied to navigation problems with progressively more complex algorithms, ultimately solving a navigation problem on a multi-lane road using a Deep Q-Network (DQN). The partial observability issue is tackled by modeling a multi-lane peripheral road driving problem as a Partially Observable Markov Decision Process (POMDP) and solving it with the Partially Observable Monte Carlo Planner (POMCP) and Partially Observable Upper Confidence Trees (POUCT). The performance analysis considers different levels of model precision. This research contributes to safer and more efficient autonomous navigation systems, enabling vehicles to learn navigation strategies from experience and to handle uncertainties in real-world urban environments.
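For reference, the two core computations behind these methods can be sketched in a few lines. The first is the DQN regression target y = r + gamma * max_a' Q_target(s', a'), with y = r at terminal transitions. The second is the UCB1 rule used by POMCP and related upper-confidence tree planners to select actions in the search tree. Function names, gamma and the exploration constant c are illustrative assumptions. Neural network training and belief-particle handling are omitted.

```python
import numpy as np


def dqn_targets(rewards, next_q_target, dones, gamma=0.99):
    """y = r + gamma * max_a' Q_target(s', a') for non-terminal transitions, y = r otherwise.

    next_q_target: (B, n_actions) Q-values of the next states from the target network.
    """
    rewards = np.asarray(rewards, dtype=float)
    next_q_target = np.asarray(next_q_target, dtype=float)
    dones = np.asarray(dones, dtype=bool)
    return rewards + gamma * (~dones) * next_q_target.max(axis=1)


def td_loss(q_pred, actions, targets):
    """Mean squared TD error on the Q-values of the actions actually taken."""
    q_taken = np.asarray(q_pred, dtype=float)[np.arange(len(actions)), actions]
    return float(np.mean((targets - q_taken) ** 2))


def ucb1_action(visit_counts, q_values, c=1.0):
    """UCB1 selection at a tree node: untried actions first, then Q + c * sqrt(ln N / n)."""
    n = np.asarray(visit_counts, dtype=float)
    if (n == 0).any():
        return int(np.argmax(n == 0))                # index of the first untried action
    bonus = c * np.sqrt(np.log(n.sum()) / n)         # exploration bonus
    return int(np.argmax(np.asarray(q_values, dtype=float) + bonus))


y = dqn_targets([1.0, 0.0], [[0.5, 2.0], [3.0, 1.0]], [False, True], gamma=0.9)
print(y, ucb1_action([10, 10], [1.0, 0.5]))
```

In a POMDP planner, the node statistics would be indexed by action histories and updated from simulated rollouts of sampled belief states. That bookkeeping is outside the scope of this sketch.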

Autonomous navigation in human populated environment

Participants: Enrico Fiasché, Philippe Martinet, Ezio Malis.

Robots are increasingly used around humans, in homes and in public spaces. For robots and humans to share the same space with natural interaction, the ability of the robot to generate socially acceptable trajectories is key. Autonomous robots find it difficult to navigate in crowded environments because of the high level of uncertainty caused by the dynamic agents present. This uncertainty leads to the so-called Freezing Robot Problem (FRP). Many state-of-the-art techniques proposed to solve this problem, involving collaboration between humans and robots, are studied.

The work presented in 5 focuses on the control aspects of autonomous navigation in human-populated environments. It uses models that predict future outputs over a given prediction horizon and takes into account a social model of the pedestrians. Rather than letting the robot move passively to avoid people, the aim of the master thesis is to ensure safe navigation in which the robot plays a proactive role in the collaboration between agents and the autonomous vehicle (AV). In particular, the master thesis studied and analyzed the Model Predictive Control (MPC) technique, focusing on its performance. Different types of control parameterization were used and compared in order to achieve real-time feasibility. The Social Force Model (SFM) is used to predict pedestrian motion. Simulations and experiments were carried out and evaluated in different types of social scenarios. They demonstrate that the proactive navigation provided by the model generates safe trajectories that avoid the Freezing Robot Problem.
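In the Social Force Model, a pedestrian's motion results from an attractive term that relaxes its velocity toward a desired velocity pointing at its goal, plus repulsive terms from other agents, including the robot. Below is a minimal sketch of how such a model could roll out a pedestrian prediction along a candidate robot trajectory over an MPC horizon. The gains (`v_des`, `tau`, `A`, `B`), the time step and the single-robot setting are illustrative assumptions, not the parameters used in the thesis.

```python
import numpy as np

def social_force(pos, vel, goal, others, v_des=1.3, tau=0.5, A=2.0, B=0.3):
    """Acceleration of one pedestrian under a basic Social Force Model (assumed gains)."""
    direction = goal - pos
    dist = np.linalg.norm(direction)
    e = direction / dist if dist > 1e-9 else np.zeros(2)
    force = (v_des * e - vel) / tau            # relax toward the desired velocity
    for o in others:                           # exponential repulsion from other agents
        d = pos - o
        r = np.linalg.norm(d)
        if r > 1e-9:
            force += A * np.exp(-r / B) * d / r
    return force

def predict_pedestrian(pos, vel, goal, robot_traj, dt=0.1):
    """Roll the pedestrian forward while the robot follows robot_traj (N x 2)."""
    pos, vel = np.asarray(pos, float), np.asarray(vel, float)
    goal = np.asarray(goal, float)
    traj = []
    for robot_pos in robot_traj:
        acc = social_force(pos, vel, goal, [np.asarray(robot_pos, float)])
        vel = vel + dt * acc                   # explicit Euler integration
        pos = pos + dt * vel
        traj.append(pos.copy())
    return np.array(traj)
```

An MPC layer could call `predict_pedestrian` for each candidate robot control sequence and penalize small robot-pedestrian distances in its cost. Because the pedestrian reacts to the robot in this model, the optimizer can find proactive solutions instead of freezing.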

Multi-Spectral Visual Servoing

Participants: Enrico Fiasché, Philippe Martinet, Ezio Malis.

The objective of this work is to propose a novel approach to Visual Servoing (VS) using a multispectral camera, which produces more than three times as much data as a standard color camera. To achieve real-time feasibility, the multispectral data captured by the camera are processed with a dimensionality reduction technique. Unlike conventional methods that select a subset of bands, our technique leverages the best information from all the bands of the multispectral camera. This fusion process sacrifices spectral resolution but preserves spatial resolution, which is crucial for precise robotic control in forests. The proposed approach is validated through simulations, which demonstrate its ability to perform timely and accurate VS in natural settings. By leveraging the full spectral information of the camera while preserving spatial details, our method offers a promising solution for VS in natural environments and overcomes the limitations of traditional band selection approaches.
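The source does not name the dimensionality reduction technique. As one plausible illustration only, principal component analysis (PCA) along the spectral axis fuses all bands into a single channel per pixel. This keeps the full spatial resolution, whereas band selection would discard the other bands entirely. The function name and the sign convention below are assumptions made for this sketch.

```python
import numpy as np

def fuse_bands_pca(cube):
    """Project an H x W x B multispectral cube onto its first principal component.

    PCA is an assumed stand-in for the (unspecified) reduction used in the work.
    Returns the fused H x W image and the explained-variance ratio per component.
    """
    h, w, b = cube.shape
    pixels = cube.reshape(-1, b).astype(float)
    centered = pixels - pixels.mean(axis=0)
    # Eigen-decomposition of the B x B band covariance matrix.
    cov = centered.T @ centered / max(len(pixels) - 1, 1)
    eigvals, eigvecs = np.linalg.eigh(cov)      # eigenvalues in ascending order
    first_pc = eigvecs[:, -1]
    if first_pc.sum() < 0:                      # fix the sign for a consistent output
        first_pc = -first_pc
    fused = centered @ first_pc
    return fused.reshape(h, w), eigvals[::-1] / eigvals.sum()
```

The fused image has the same spatial resolution as each band, so it can feed a standard photometric visual servoing loop at the cost of a single channel.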

Efficient and accurate closed-form solution to pose estimation from 3D correspondences

Participants: Ezio Malis.

Computing the pose from 3D data acquired in two different frames is important for several robotic tasks such as odometry, SLAM and place recognition. The pose is generally obtained by solving a least-squares problem given point-to-point, point-to-plane or point-to-line correspondences. This non-linear least-squares problem can be solved by iterative optimization or, more efficiently, in closed form using polynomial system solvers.

In 4, a complete and accurate closed-form solution to a weighted least-squares problem has been studied. Adding a weight to each correspondence increases robustness to outliers. Contrary to existing methods, the proposed approach is complete, since it can solve the problem in any non-degenerate case, and accurate, since it is guaranteed to find the globally optimal estimate of the weighted least-squares problem. The key contribution of this work is the combination of a robust u-resultant solver with the quaternion parametrisation of the rotation, which had not been considered in previous works. Simulations and experiments on real data demonstrate the superior accuracy and robustness of the proposed algorithm compared to previous approaches.
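For the special case of weighted point-to-point correspondences only, a classical closed-form solution already exists: Horn's quaternion method. In that method, the optimal unit quaternion is the eigenvector associated with the largest eigenvalue of a 4 x 4 symmetric matrix built from the weighted cross-covariance. It is sketched below for reference. It is not the u-resultant solver of the work, which also handles point-to-plane and point-to-line constraints. Note that q and -q give the same rotation, which is the two-fold symmetry of the quaternion parametrisation.

```python
import numpy as np

def quat_to_rot(q):
    """Rotation matrix of a unit quaternion (w, x, y, z)."""
    w, x, y, z = q
    return np.array([
        [1 - 2 * (y * y + z * z), 2 * (x * y - w * z),     2 * (x * z + w * y)],
        [2 * (x * y + w * z),     1 - 2 * (x * x + z * z), 2 * (y * z - w * x)],
        [2 * (x * z - w * y),     2 * (y * z + w * x),     1 - 2 * (x * x + y * y)],
    ])

def weighted_horn(p, q, w):
    """Closed-form R, t minimizing sum_i w_i ||R p_i + t - q_i||^2 (p, q: N x 3)."""
    w = np.asarray(w, float) / np.sum(w)
    p_bar, q_bar = w @ p, w @ q                        # weighted centroids
    S = ((p - p_bar) * w[:, None]).T @ (q - q_bar)     # S[a, b] = sum w p_a q_b
    Sxx, Sxy, Sxz = S[0]
    Syx, Syy, Syz = S[1]
    Szx, Szy, Szz = S[2]
    N = np.array([
        [Sxx + Syy + Szz, Syz - Szy,        Szx - Sxz,        Sxy - Syx],
        [Syz - Szy,       Sxx - Syy - Szz,  Sxy + Syx,        Szx + Sxz],
        [Szx - Sxz,       Sxy + Syx,       -Sxx + Syy - Szz,  Syz + Szy],
        [Sxy - Syx,       Szx + Sxz,        Syz + Szy,       -Sxx - Syy + Szz],
    ])
    eigvals, eigvecs = np.linalg.eigh(N)
    quat = eigvecs[:, -1]          # -quat is an equally valid solution
    R = quat_to_rot(quat)
    t = q_bar - R @ p_bar
    return R, t, quat
```

Down-weighting a correspondence (small `w_i`) reduces its influence on both the centroids and the cross-covariance. This is the mechanism by which weights improve robustness to outliers.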

This year, we aimed to address the limitations and open questions that emerged from the earlier study and to offer new perspectives and methodologies to advance the field. Notably, one of the main factors limiting the efficiency of the u-resultant approach was that it did not leverage the two-fold symmetry inherent in the quaternion parametrisation (q and -q represent the same rotation). This was a missed opportunity to obtain a more efficient solver. For these reasons, we studied a new approach that replaces the u-resultant method with the h-resultant approach. The h-resultant approach consists of hiding one variable by treating it as a parameter. Such hidden-variable approaches have been used in the past to solve various problems in computer vision. The key contribution of the current work is therefore the design of a novel, efficient h-resultant solver that exploits the two-fold symmetry of the quaternion parametrisation, which was not considered in previous work. This work has been accepted for publication in RA-L 2024.
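The source does not detail the h-resultant solver. However, the general payoff of a two-fold symmetry can be shown on a toy case. Suppose the univariate polynomial obtained for the hidden variable contains only even powers, which a symmetry mapping each solution y to -y can produce. It can then be solved in z = y^2, which halves its degree and the size of the eigenvalue problem. The sketch below is a generic illustration of that trick under this assumption, not the solver of the work. The function names are hypothetical.

```python
import numpy as np

def roots_even_polynomial(even_coeffs):
    """Roots of P(y) = sum_k c_k y^(2k), with c given highest degree first.

    Solving p(z) = sum_k c_k z^k with z = y^2 needs a companion matrix of half
    the size; each root z then yields the pair y = +sqrt(z), y = -sqrt(z).
    """
    z_roots = np.roots(even_coeffs)
    y_roots = np.sqrt(z_roots.astype(complex))
    return np.concatenate([y_roots, -y_roots])

def expand_even(even_coeffs):
    """Coefficients of P(y) in y (highest first), e.g. for a direct comparison."""
    n = len(even_coeffs)
    full = np.zeros(2 * n - 1)
    full[::2] = even_coeffs
    return full
```

For example, P(y) = y^4 - 5y^2 + 4 becomes z^2 - 5z + 4. Its roots z = 1 and z = 4 give y = ±1 and y = ±2, the same roots that `np.roots(expand_even([1, -5, 4]))` finds with a matrix twice as large.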

Reliable Risk Assessment and Management in autonomous driving

Participants: Emmanuel Alao, Lounis Adouane [UTC Compiegne], Philippe Martinet.

Autonomous driving in urban scenarios has become more challenging due to the growing number of powered personal mobility platforms (PPMPs). PPMPs are mostly electric devices such as gyropods and scooters. They exhibit varying velocity profiles as a result of their high acceleration capacity. Multiple hypotheses about their possible motion make autonomous driving very difficult, leading to the highly conservative behavior of most control algorithms. This work proposes to solve this problem by performing continuous risk assessment using a Probabilistic Fusion of Predictive Inter-Distance Profile (PF-PIDP). It extends the traditional PIDP to account for uncertainties in the future trajectories of a PPMP. The proposed method takes advantage of the spatio-temporal characteristics of the PIDP and of the robustness of multi-modal motion prediction methods. The proposed PF-PIDP is then combined with an SMPC algorithm for risk management, to mitigate possible collisions and ensure smooth motion of the autonomous vehicle for the comfort of passengers and other traffic participants. The advantages of the proposed approach are demonstrated through simulations of multiple scenarios.
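A Predictive Inter-Distance Profile tracks, over the prediction horizon, the distance between the planned trajectory of the autonomous vehicle and the predicted trajectory of another road user. The sketch below shows one hypothetical way to fuse such profiles across the modes of a multi-modal PPMP predictor: a probability-weighted risk per time step. The fusion rule, the safety distance `d_safe` and the function names are assumptions, not the PF-PIDP formulation of the work.

```python
import numpy as np

def inter_distance_profile(ego_traj, other_traj):
    """Distance between two T x 2 trajectories at each future time step."""
    return np.linalg.norm(np.asarray(ego_traj, float) - np.asarray(other_traj, float), axis=1)

def fused_risk_profile(ego_traj, mode_trajs, mode_probs, d_safe=2.0):
    """Hypothetical fusion: probability mass of the modes violating d_safe at each step."""
    probs = np.asarray(mode_probs, float)
    probs = probs / probs.sum()
    risk = np.zeros(len(ego_traj))
    for traj, p in zip(mode_trajs, probs):
        pidp = inter_distance_profile(ego_traj, traj)
        risk += p * (pidp < d_safe)
    return risk
```

A stochastic MPC could then constrain or penalize candidate ego trajectories whose fused risk exceeds a chosen level. Unlikely modes would then contribute proportionally less and avoid overly conservative behavior.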

Parameter estimation of the lateral vehicle dynamics

Participants: Fabien Lionti, Nicolas Gutowski [LERIA Angers], Sébastien Aubin [DGA], Philippe Martinet.

Estimating the parameters of a nonlinear dynamic system is a major challenge in many areas of research and application. In 6 we present a new two-step method to estimate the parameters that govern the lateral dynamics of a vehicle, taking into account the scarcity and noise of the data. The method combines spline smoothing of the system observations with a Bayesian approach to parameter estimation. The first step applies spline smoothing to the observations of the system state variables. This step filters out the noise present in the data and yields accurate estimates of the derivatives of the state variables. As a result, the parameters can be estimated directly from the differential equations describing the system dynamics, without resorting to time-consuming numerical integration methods. The second step estimates the parameters from the residuals of the differential equations, using a likelihood-free Bayesian approach called ABC-SMC (Approximate Bayesian Computation with Sequential Monte Carlo). This Bayesian approach has several advantages. First, it compensates for the scarcity of data by incorporating prior knowledge about the physical characteristics of the vehicle. In addition, it offers a high level of interpretability by providing a posterior distribution over the parameters likely to have generated the observed data. This new method therefore allows robust estimation of the parameters of the lateral vehicle dynamics, even when the data are limited and noisy.
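The two steps can be illustrated on a toy first-order system dx/dt = -a x + b u(t) with u(t) = sin(t). It stands in for the lateral vehicle model, which is not given here. Step 1 smooths noisy observations with a cubic smoothing spline and differentiates it. Step 2 estimates (a, b) from the residuals of the differential equation, with no numerical integration inside the estimation loop. For brevity, step 2 uses plain ABC rejection sampling with uniform priors rather than the full ABC-SMC scheme of the work. The priors, noise level and acceptance fraction are illustrative assumptions.

```python
import numpy as np
from scipy.interpolate import UnivariateSpline

def simulate(a, b, t_end=10.0, dt=1e-3):
    """Toy ground truth: Euler simulation of dx/dt = -a x + b sin(t), x(0) = 0."""
    t = np.arange(0.0, t_end + dt / 2, dt)
    x = np.zeros_like(t)
    for k in range(len(t) - 1):
        x[k + 1] = x[k] + dt * (-a * x[k] + b * np.sin(t[k]))
    return t, x

def smooth_and_differentiate(t, y, sigma):
    """Step 1: smoothing spline of the observations and its derivative."""
    spline = UnivariateSpline(t, y, k=3, s=len(t) * sigma ** 2)
    return spline(t), spline.derivative()(t)

def abc_rejection(x, dx, u, rng, n_samples=20000, keep=0.01):
    """Step 2 (simplified ABC): keep prior samples with the smallest ODE residuals."""
    a = rng.uniform(0.0, 5.0, n_samples)          # assumed uniform priors
    b = rng.uniform(0.0, 5.0, n_samples)
    residual = dx[None, :] + a[:, None] * x[None, :] - b[:, None] * u[None, :]
    distance = np.mean(residual ** 2, axis=1)
    best = np.argsort(distance)[: int(keep * n_samples)]
    return a[best], b[best]                        # approximate posterior samples

def run_demo(a_true=1.5, b_true=2.0, sigma=0.02, seed=0):
    rng = np.random.default_rng(seed)
    t, x = simulate(a_true, b_true)
    t_obs = t[::100]                               # sparse observations (every 0.1 s)
    x_obs = x[::100] + rng.normal(0.0, sigma, len(t_obs))
    x_s, dx_s = smooth_and_differentiate(t_obs, x_obs, sigma)
    inner = slice(5, -5)                           # spline derivatives are poorer at the ends
    return abc_rejection(x_s[inner], dx_s[inner], np.sin(t_obs[inner]), rng)
```

The accepted samples `a_post, b_post = run_demo()` form an approximate posterior. Their spread, and not only their mean, indicates how well the sparse, noisy data constrain each parameter.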

A Novel 3D Model Update Framework for Long-Term Autonomy

Participants: Stefan Larsen, Ezio Malis, El Mustafa Mouaddib, Patrick Rives.

Research on autonomous systems shows increasing demands and capabilities in fields such as surveillance, agriculture and autonomous vehicles. However, the ability to perform long-term operations without human intervention remains a bottleneck for real-world applications. For long-term localization and monitoring in dynamic scenes, vision-based robotic systems can benefit from accurate maps of changes to improve their efficiency. Our work proposes a novel framework for long-term model update using image-based 3D change localization and segmentation. Specifically, images are used to detect and locate significant geometric change areas in a pre-made 3D mesh model. New and missing objects are detected and segmented to provide model updates for robotic tasks such as localization. The geometric change localization method is robust to the seasonal, viewpoint and illumination differences that may occur between operations. Tests on both our own and publicly available datasets show that the framework improves on relevant state-of-the-art methods.
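The source does not describe how geometric changes are localized. As a deliberately simplified illustration, assume two inputs: a depth map rendered from the pre-made mesh at the camera pose, and a depth map estimated from the new images. Pixels where the observation is markedly closer than the model suggest a new object. Pixels where it is markedly farther suggest a missing one. The threshold `tol` and the function name are hypothetical. The actual framework is robust to seasonal, viewpoint and illumination changes in ways this sketch is not.

```python
import numpy as np

def classify_geometric_change(model_depth, observed_depth, tol=0.2):
    """Hypothetical labels: 0 = unchanged, 1 = new object, 2 = missing object.

    Depths are in metres; NaN marks pixels without a valid measurement.
    """
    labels = np.zeros(model_depth.shape, dtype=np.uint8)
    valid = np.isfinite(model_depth) & np.isfinite(observed_depth)
    diff = np.where(valid, observed_depth - model_depth, 0.0)
    labels[valid & (diff < -tol)] = 1   # something now stands in front of the model
    labels[valid & (diff > tol)] = 2    # the model surface is no longer there
    return labels
```

Connected regions of label 1 or 2 would then be the candidate areas to segment and to use for updating the mesh.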

Multi-robot localization and navigation for infrastructure monitoring

Participants: Mathilde Theunissen, Isabelle Fantoni, Ezio Malis, Philippe Martinet.

In the context of the ANR SAMURAI project, we studied how robot formation control can be leveraged to improve localization precision. This involves optimizing the shape of the formation based on localizability indices that depend on the sensors used by the robots and on the environment. The first focus is on the definition and optimization of a localizability index applied to collaborative localization using UWB antennas. This work has been presented at the JJCR (Journée des Jeunes Chercheurs en Robotique).
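The localizability index used in the work is not specified here. A common choice for range-only sensors such as UWB, assumed in the sketch below, is built from the Fisher information matrix (FIM) of the range measurements. Each anchor contributes u u^T / sigma^2, where u is the unit vector from the anchor to the robot. The smallest eigenvalue then measures how well the worst-constrained direction is observed. Formation shapes with a larger index would be preferred. The noise level `sigma` and the function names are illustrative assumptions.

```python
import numpy as np

def range_fim(robot, anchors, sigma=0.1):
    """2-D Fisher information of independent range measurements to the anchors."""
    J = np.zeros((2, 2))
    for a in anchors:
        d = np.asarray(robot, float) - np.asarray(a, float)
        u = d / np.linalg.norm(d)
        J += np.outer(u, u) / sigma ** 2
    return J

def localizability_index(robot, anchors, sigma=0.1):
    """Assumed index: smallest FIM eigenvalue (0 means an unobservable direction)."""
    return float(np.linalg.eigvalsh(range_fim(robot, anchors, sigma))[0])
```

With collinear anchors, every range constrains the same direction and the index drops to zero. Spreading the anchors (or the other robots carrying them) around the robot restores a positive index.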

9 Bilateral contracts and grants with industry

9.1 Bilateral contracts with industry

ACENTAURI is responsible for two research contracts with Naval Group (2022-2024).

Usine du Futur

Participants: Ezio Malis, Philippe Martinet, Erwan Emraoui, Marie Aspro, Pierre Alliez (TITANE).

The context is that of the factory of the future for Naval Group in Lorient, for submarines and surface vessels. As input, we have a digital model (for example of a frigate), the equipment assembly schedule and measurement data (images or Lidar). Most of the components to be mounted are supplied by subcontractors. As output, we want to monitor the assembly site to compare the "as-designed" with the "as-built". The challenge addressed by the contract is the need for coordination on construction sites to support planning decisions. It is necessary to be able to follow the progress of a real project and check its conformity using a digital twin. Currently, since checks have to be made on board, inspection rounds are required to validate the progress as well as the mountability of the equipment: for example, the cabin and the fasteners must be in place, with holes for the screws, etc. These rounds are time-consuming and accident-prone, not to mention the constraints of the site, such as the temporary lack of electricity or the numerous temporary assembly and safety equipment.

La Fontaine

Participants: Ezio Malis, Philippe Martinet, Hasan Yilmaz, Pierre Joyet.

The context is that of decision support for a collaborative autonomous multi-agent system with a common objective, whose agents try to get around "obstacles" which, in turn, try to prevent them from reaching their goals. As part of a collaboration with Naval Group, we wish to study a number of issues related to the optimal planning and control of cooperative multi-agent systems. The objective of this contract is therefore to identify and test methods for generating trajectories that satisfy a set of constraints dictated by the interests, the modes of perception and the behavior of these actors. The first problem to study is the strategy to adopt during the game. The strategy consists of defining "the set of coordinated actions, skillful operations, maneuvers with a view to achieving a specific objective". In this framework, the main scientific issues are (i) how to formalize the problem (often as the optimization of a cost function) and (ii) how to define several possible strategies while keeping the same tools for their implementation (tactics).

The second problem to study is the tactics to follow during the game in order to implement the chosen strategy. The tactics consist of defining the tools to execute the strategy. In this context, we study techniques such as MPC (Model Predictive Control) and MPPI (Model Predictive Path Integral), which predict the evolution of the system over a given horizon and therefore allow the best action to be chosen based on the knowledge available at time t.
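To illustrate the kind of tactics layer described above, here is a minimal MPPI sketch for a hypothetical agent modelled as a 1-D double integrator. In each update, K perturbed control sequences are rolled out over the horizon and each is scored by a cost. The nominal sequence is then updated with the exponentially weighted average of the perturbations. The dynamics, the cost and the parameters (`K`, `lam`, `noise_std`, `dt`) are illustrative assumptions, unrelated to the actual multi-agent models of the contract.

```python
import numpy as np

def rollout_cost(x0, controls, goal, dt=0.1):
    """Cost of a control sequence for a hypothetical 1-D double integrator (pos, vel)."""
    pos, vel = x0
    cost = 0.0
    for u in controls:
        vel += dt * u
        pos += dt * vel
        cost += (pos - goal) ** 2 + 0.01 * u ** 2   # tracking error + control effort
    return cost

def mppi_step(x0, nominal, goal, rng, K=256, lam=1.0, noise_std=1.0):
    """One MPPI update of the nominal control sequence (length H)."""
    noise = rng.normal(0.0, noise_std, size=(K, len(nominal)))
    costs = np.array([rollout_cost(x0, nominal + eps, goal) for eps in noise])
    weights = np.exp(-(costs - costs.min()) / lam)  # shifted for numerical stability
    weights /= weights.sum()
    return nominal + weights @ noise
```

In a receding-horizon loop, the agent would apply only the first control of the updated sequence, shift the sequence by one step, observe the new state and repeat. This matches the idea of choosing the best action based on the knowledge available at time t.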

The third problem is to combine the proposed approaches with AI-based ones, in particular machine learning. Machine learning can contribute both to the choice of the strategy and to the development of tactics. The possibility of simulating a large number of games could enable the training of a neural network whose architecture remains to be designed.

10 Partnerships and cooperations

10.1 International initiatives

10.1.1 Associate Teams in the framework of an Inria International Lab or in the framework of an Inria International Program

AISENSE associated team with the AVELAB of KAIST

Participants: Ezio Malis, Philippe Martinet, Matteo Azzini, Ziming Liu, Minh Quan Dao.

The main scientific objective of the AISENSE associated team is to study how to build a long-term perception system in order to acquire situation awareness for the safe navigation of autonomous vehicles. The perception system will fuse data from different sensors (lidar and vision) in order to localize a vehicle in a dynamic peri-urban environment, to identify and estimate the state (position, orientation, velocity, ...) of all possible moving agents (cars, pedestrians, …), and to obtain high-level semantic information. To achieve this objective, we will compare different methodologies. On the one hand, we will study model-based techniques, whose rules are predefined according to a given model and which need little data to be set up. On the other hand, we will study end-to-end data-driven techniques, in which a single neural network is trained with data to perform the aforementioned tasks (e.g., detection, localization and tracking). We think that a thorough analysis and comparison of these techniques will help us study how to combine them in a hybrid AI system, where model-based knowledge is injected into neural networks and where neural networks can provide better results when the model is too complex to be handled explicitly. This problem is hard to solve because it is not clear how best to combine these two radically different approaches. Finally, the perception information will be used to acquire situation awareness for safe decision making.

10.2 European initiatives

10.2.1 Digital Europe

Agrifood-TEF (2023-2027)

Participants: Philippe Martinet, Ezio Malis, Nicolas Chleq, Pardeep Kumar, Matthias Curet.

The AGRIFOOD-TEF project is co-funded by the European Union and the participating countries. It is organized in three national nodes (Italy, Germany, France) and five satellite nodes (Poland, Belgium, Sweden, Austria and Spain). It offers its services to companies and developers from all over Europe who want to validate their robotics and artificial intelligence solutions for agribusiness under real-life conditions of use, speeding up their transition to the market.

The main objectives are:

- to foster sustainable and efficient food production, AgrifoodTEF empowers innovators with validation tools needed to bridge the gap between their brightest ideas and successful market products.

- to provide services that help assess and validate third-party AI and robotics solutions in real-world conditions, aiming to maximise the impact of the digitalisation of the agricultural sector.

Five impact sectors offer tailor-made services for the testing and validation of AI-based and robotic solutions in the agri-food sector:

- Arable farming: testing and validation of robotic, selective weeding and geofencing technologies to enhance the performance of autonomous vehicles and thereby decrease farmers' reliance on traditional agricultural inputs.

- Tree crop: testing and validation of AI solutions supporting optimization of natural resources and inputs (fertilisers, pesticides, water) for Mediterranean crops (Vineyards, Fruit orchards, Olive groves).

- Horticulture: testing and validation of AI-based solutions helping to strike the right balance of nutrients while ensuring the crop and yield quality.

- Livestock farming: testing and validation of AI-based livestock management applications and organic feed production, improving the sustainability of cattle, pig and poultry farming.

- Food processing: testing and validation of standardized data models and self-sovereign data exchange technologies, providing enhanced traceability in the production and supply chains.

Inria will set up a Moving Living Lab that goes to the field to provide two kinds of services: data collection with mobile ground or aerial robots, and evaluation of mobility algorithms with mobile ground or aerial robots.

10.2.2 Other European programs/initiatives

The team is part of euROBIN, the Network of Excellence on AI and robotics launched in 2021.

10.3 National initiatives

10.3.1 ANR project MOBIDEEP (2017-2023)

Participants: Philippe Martinet, Patrick Rives.

Technology-aided MOBIlity by semantic Deep learning: INRIA (ACENTAURI), GREYC, INJA, SAFRAN (Group, Electronics & Defense). We are involved in personal assistance for blind people, proactive navigation of a robot in human-populated environments, and deep learning for depth estimation and semantic learning.

10.3.2 ANR project ANNAPOLIS (2022-2025)

Participants: Philippe Martinet, Ezio Malis, Emmanuel Alao, Kaushik Bhowmik, Minh Quan Dao, Nicolas Chleq, Quentin Louvel.

AutoNomous Navigation Among Personal mObiLity devIceS: INRIA (ACENTAURI, CHROMA), LS2N, HEUDIASYC. This project was accepted in 2021. We are involved in augmented perception using Road Side Units for PPMP detection and tracking, attention map prediction, and autonomous navigation in the presence of PPMPs.

10.3.3 ANR project SAMURAI (2022-2026)

Participants: Ezio Malis, Philippe Martinet, Patrick Rives, Nicolas Chleq, Stefan Larsen, Mathilde Theunissen.

ShAreable Mapping using heterogeneoUs sensoRs for collAborative robotIcs: INRIA (ACENTAURI), LS2N, MIS. This project was accepted in 2021. We are involved in building shareable maps of a dynamic environment using heterogeneous sensors, in collaborative tasks of heterogeneous robots, and in updating the shareable maps.

10.3.4 ANR project TIRREX (2021-2029)

Participants: Philippe Martinet, Ezio Malis.

TIRREX is an EQUIPEX+ project funded by ANR and coordinated by N. Marchand. It is composed of six thematic axes (XXL axis, Humanoid axis, Aerial axis, Autonomous Land axis, Medical axis, Micro-Nano axis) and three transverse axes (Prototyping & Design, Manipulation, and Open infrastructure). The kick-off took place in December 2021. ACENTAURI is involved in:

- The Autonomous Land axis (ROB@t), coordinated by P. Bonnifait and R. Lenain, which covers autonomous vehicles and agricultural robots.

- The Aerial axis, coordinated by I. Fantoni and F. Ruffier.

10.3.5 PEPR project NINSAR (2023-2026)

Participants: Philippe Martinet, Ezio Malis.

Agroecology and digital. In the framework of this PEPR, ACENTAURI is leading the coordination of a proposal called NINSAR (New ItiNerarieS for Agroecology using cooperative Robots), accepted in 2022, with R. Lenain (INRAE), P. Martinet (INRIA) and Yann Perrot (CEA) as coordinators. It gathers 17 research teams from INRIA (3), INRAE (4), CNRS (7), CEA, UniLasalle and UEVE.

10.3.6 Defi Inria-Cerema ROAD-AI

Participants: Ezio Malis, Philippe Martinet, Diego Navarro [Cerema], Pierre Joyet.

The aim of the Inria-Cerema ROAD-AI defi (2021-2024) is to invent the infrastructure asset maintenance that could be operated in the coming years, offering a significant qualitative leap compared to traditional methods. Data collection is at the heart of the integrated management of road infrastructure and engineering structures and could be simplified by deploying fleets of autonomous robots. Indeed, robots are becoming an essential tool in a wide range of applications. Among these applications, data acquisition has attracted increasing interest due to the emergence of a new category of robotic vehicles capable of performing demanding tasks in harsh environments without human supervision.

10.3.7 ROBFORISK (2023-2024)

Participants: Philippe Martinet, Ezio Malis, Enrico Fiasché, Louis Verduci, Thomas Boivin [INRAE Avignon], Pilar Fernandez [INRAE Avignon].

ROBFORISK (Robot assistance for characterizing entomological risk in forests under climate constraints) is a joint project between Inria-ACENTAURI and INRAE (UR629 Ecologie des Forêts Méditerranéennes). Three main objectives have been defined: to develop an innovative approach to insect hazard and tree vulnerability in forests, to tackle the challenge of drone visual servoing using multispectral camera information in natural environments, and to develop innovative research on pre-symptomatic stress tests of interest to forest managers and stakeholders.

Participants: Ezio Malis, Philippe Martinet. The team hosted two Master 2 students. The permanent team members supervised the following PhD students:

11 Dissemination

11.1 Promoting scientific activities

11.1.1 Scientific events: organisation

Member of the organizing committees

11.1.2 Scientific events: selection

Member of the conference program committees

Reviewer

11.1.3 Journal

Member of the editorial boards

11.1.4 Leadership within the scientific community

11.1.5 Scientific expertise

11.1.6 Research administration

11.2 Teaching - Supervision - Juries

11.2.1 Teaching

11.2.2 Supervision

11.2.3 Juries

11.3 Popularization

11.3.1 Articles and contents

11.3.2 Interventions

12 Scientific production

12.1 Major publications

12.2 Publications of the year

International journals

International peer-reviewed conferences