2023 Activity report Project-Team ASTRA

RNSR: 202224314M - Research center Inria Paris Centre

- In partnership with: Valeo

- Team name: Automated and Safe TRAnsportation systems

- Domain: Perception, Cognition and Interaction

- Theme: Robotics and Smart environments

Keywords

Computer Science and Digital Science

- A1.5. Complex systems

- A1.5.1. Systems of systems

- A1.5.2. Communicating systems

- A2.3. Embedded and cyber-physical systems

- A3.4. Machine learning and statistics

- A3.4.1. Supervised learning

- A3.4.2. Unsupervised learning

- A3.4.3. Reinforcement learning

- A3.4.5. Bayesian methods

- A3.4.6. Neural networks

- A3.4.8. Deep learning

- A5.3. Image processing and analysis

- A5.3.3. Pattern recognition

- A5.3.4. Registration

- A5.4. Computer vision

- A5.4.1. Object recognition

- A5.4.2. Activity recognition

- A5.4.4. 3D and spatio-temporal reconstruction

- A5.4.5. Object tracking and motion analysis

- A5.4.6. Object localization

- A5.5.1. Geometrical modeling

- A5.9. Signal processing

- A5.10. Robotics

- A5.10.2. Perception

- A5.10.3. Planning

- A5.10.4. Robot control

- A5.10.5. Robot interaction (with the environment, humans, other robots)

- A5.10.6. Swarm robotics

- A5.10.7. Learning

- A6. Modeling, simulation and control

- A6.1. Methods in mathematical modeling

- A6.2.3. Probabilistic methods

- A6.2.6. Optimization

- A6.4.1. Deterministic control

- A6.4.3. Observability and Controllability

- A6.4.4. Stability and Stabilization

- A6.4.5. Control of distributed parameter systems

- A8.6. Information theory

- A8.9. Performance evaluation

- A9.2. Machine learning

- A9.3. Signal analysis

- A9.5. Robotics

- A9.6. Decision support

- A9.7. AI algorithmics

Other Research Topics and Application Domains

- B5.2.1. Road vehicles

- B5.6. Robotic systems

- B6.6. Embedded systems

- B7.1.2. Road traffic

- B7.2. Smart travel

- B7.2.1. Smart vehicles

- B7.2.2. Smart road

- B9.5.6. Data science

1 Team members, visitors, external collaborators

Research Scientists

- Fawzi Nashashibi [Team leader, INRIA, Senior Researcher, HDR]

- Zayed Alsayed [VALEO, Industrial member]

- Alexandre Boulc'H [VALEO, Industrial member]

- Andrei Bursuc [VALEO, Industrial member]

- Guy Fayolle [INRIA, Emeritus]

- Fernando Garrido [VALEO, Industrial member]

- Axel Jeanne [VALEO, Industrial member]

- Jean-Marc Lasgouttes [INRIA, Researcher]

- Gerard Le Lann [INRIA, Emeritus]

- Renaud Marlet [VALEO, Industrial member, HDR]

- Gilles Puy [VALEO, Industrial member]

- Patrick Pérez [VALEO, Industrial member, HDR]

- Paulo Resende [VALEO, Industrial member]

- Tuan Hung Vu [VALEO, Industrial member]

- Raoul de Charette [INRIA, Researcher, HDR]

Post-Doctoral Fellows

- Nelson De Moura Martins Gomes [INRIA]

- Tiago Rocha Goncalves [INRIA, until Apr 2023]

PhD Students

- Yacine Ben Ameur [INRIA, until Jun 2023]

- Anh Quan Cao [INRIA]

- Karim Essalmi [VALEO, CIFRE, from Mar 2023]

- Mohammad Fahes [INRIA]

- Amina Ghoul [INRIA]

- Ivan Lopes [INRIA]

- Tetiana Martyniuk [VALEO, CIFRE, from Dec 2023]

- Noel Nadal [INRIA]

- Jiahao Zhang [IRT System X, CIFRE]

Interns and Apprentices

- Souhaiel Ben Salem [INRIA, Intern, from Apr 2023 until Oct 2023]

- Augustin Gervreau Mercier [INRIA, Intern, from May 2023 until Aug 2023]

- Tan Khiem Huynh [INRIA, from Mar 2023 until Oct 2023]

Administrative Assistants

- Anne Mathurin [INRIA]

- Clotilde Monnet [INRIA, from Feb 2023, ASTRA Project Manager]

Visiting Scientist

- Weihao Xia [UCL, from Apr 2023, PhD visitor from UCL]

External Collaborator

- Itheri Yahiaoui [Université de Reims]

2 Overall objectives

Context

SAE International1 recently unveiled a new visual chart 91 that is designed to define the six levels of driving automation, from SAE Level 0 (no automation) to SAE Level 5 (full vehicle autonomy). It serves as the industry’s most-cited reference for automated-vehicle (AV) capabilities.

Fully autonomous cars (Level 5 of automation according to SAE J3016), which can work everywhere in all conditions, are not yet on the roads. Nevertheless, major advances are making vehicle automation a reality. Series-production vehicles already embed Level 2/2+ systems (assisted driving), and Level 3 systems (conditional automation, with the human driving only upon system request) have been available on privately owned vehicles since 2021. In public transport, driverless vehicles are offered to passengers and goods around the world. Recent demonstrators (automated shuttles and robotaxis) have the merit of proving the feasibility of automated driving as a solution for improving mobility, comfort, safety and energy efficiency.

Current regulation (UN 157 – adopted in June 2020 and voted by 60 countries) allows vehicles to drive in L3 at up to 60 km/h on carriageway roads. Original Equipment Manufacturers (OEMs) are pushing for the extension of this regulation up to 130 km/h, including automated lane changes. To allow that (L3/L4 on the highway), many challenges remain: technical challenges of course, but also non-technical challenges, which are not the easiest to deal with (legal, liability, ethical, monopoly, acceptance, economic, etc.). The latter are outside the scope of this document, even though some intersect with technical considerations 80, 99, 123.

In this context, the official ambition of France was previously recalled by the President of France, who reaffirmed his willingness to deploy these solutions, to extend transport services based on the autonomous vehicle by 2021 whenever this is possible.

For public transportation, on-road experiments are conducted around the world in specific Operational Design Domains (ODDs) and the first commercial services are being deployed. For example, in Russia, Yandex launched the first commercial service in Europe in 2019 in the city of Innopolis, and Waymo operates its Waymo One ride-hailing service using highly automated vehicles in the Phoenix metropolitan area (US). These systems operate in geofenced, controlled environments because the technology is not yet mature enough to deal with all road conditions (missing lines, construction areas, reckless behaviour of road users such as scooter riders, etc.).

Therefore, the development of alternative solutions at a large scale needs other scientific foundations and technological breakthroughs. Car makers, suppliers, infrastructure operators and academics across the world are working today on ways to make driving safer, more comfortable, more efficient and more inclusive through automation, and the race is on to bring the technology to the mass market.

In this context, Inria and Valeo are internationally distinguished players, especially thanks to their R&D activities on automated unmanned vehicles and Cybercars, and more generally on the development of advanced intelligent sensor-based decision systems.

Motivation

Partners in numerous collaborative research projects and bilateral projects, Inria and Valeo have also collaborated in the supervision of doctoral and post-doctoral students. Many Inria researchers have also joined Valeo's R&D teams for several years. Finally, numerous technology transfer actions and joint patent applications have taken place. Motivated by this very strong collaboration for over 15 years, Inria and Valeo wanted to formalize this synergy by strengthening their links, both in the fields of research and technology transfer.

What could be better than to create a joint research team to share the same visions on mobility and transport automation? And what could be better than working together upstream on breakthrough research topics? This naturally resulted in the creation of a joint research team: the ASTRA team. This team brings together talents from three entities: the former RITS team at Inria (Paris), members of the DAR team at Valeo (Créteil) and members at Valeo.ai (Paris). Beyond the strategic vision assumed by the management of these three entities, the France Relance national plan was an important incentive for the creation of this unusual joint entity.

3 Research program

Today, there are still many challenges facing the development and deployment of autonomous vehicles to reach an exploitable and commercially viable solution. This is due equally to technical and non-technical challenges. In particular, the challenges include aspects related to the performance of the systems, their efficiency, their integrability and their costs, not to mention the legal, social and ethical aspects.

A classic robust autonomous navigation architecture should take into account additional aspects related to real-time implementation, functional redundancy, durability, certification and purely technical aspects related to the design and development of functional bricks as well.

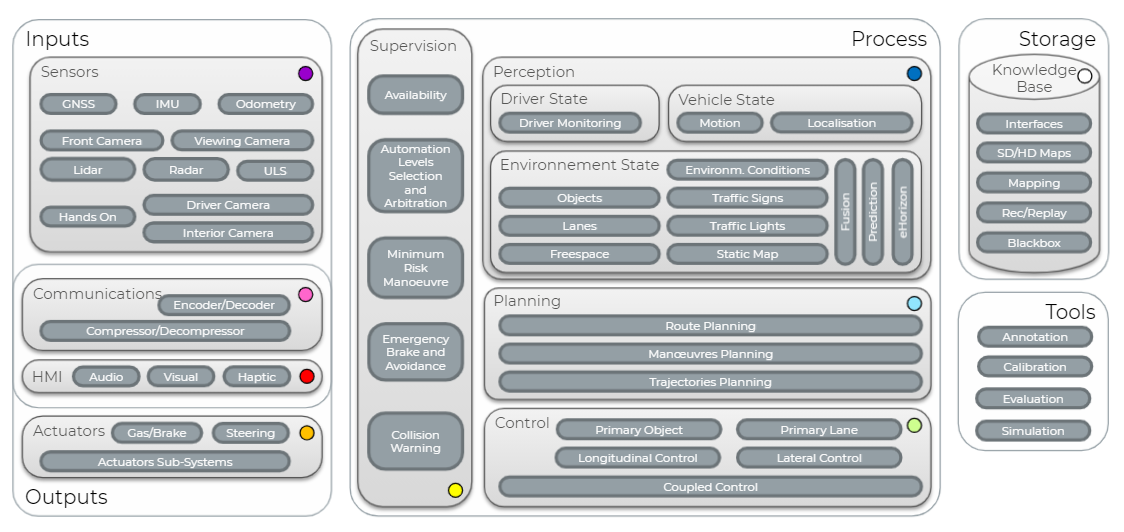

As part of this project-team, we focus mainly on developments related to automated sensor-based navigation. The other aspects are dealt with in the framework of collaborations and exchanges with other academic, industrial and institutional partners. We therefore focus on four research topics that are central to autonomous navigation and a major focus point for the scientific and technical communities: perception and understanding of the scene, decision systems and vehicle control, cooperative driving, and system modeling. These components are linked to one another through a complex but straightforward architecture depicted in Fig. 1.

Obviously, the ability to perceive and understand the scene is the starting point of any navigation architecture, since it represents the first step of processing sensory data, capturing the world state, and creating the internal digital representations of the decision system. The latter relies on these representations, on the ego vehicle localization, on the positions of other road users and on contextual data to build decision schemes, which include maneuver planning and trajectory generation. The control-command loop is then responsible for the execution of the trajectories through the generation of control laws that drive the vehicle's actuators.

All these modules interact as shown in Fig. 1 and ensure the autonomous but individual navigation of a vehicle. However, it is important to study the behavior of these vehicles and their performance when their penetration rate (i.e., their ratio to total traffic) becomes critical. It is also very interesting to study the interactions between these vehicles and their potential cooperation. This is called cooperative driving; it can only take place in the presence of connectivity. The latter also ensures interaction and cooperation between autonomous vehicles and infrastructure. The benefits of this type of cooperation are significant, both for the individual performance of each vehicle and for the overall performance of the vehicle fleet and of traffic in general.

3.1 Research Axis 1: Vision and 3D Perception for Scene Understanding

Navigation for mobile robotics requires a robust understanding of the environment from 2D or 3D sensors. Recent learning-based vision algorithms are now able to operate in highly cluttered environments, and tasks which were considered challenging — such as semantic segmentation or object detection — are soon to be solved to a certain extent. Still, the classical supervision paradigm, which relies on large annotated datasets, cannot encompass in practice all outdoor conditions and scenarios. There is therefore a need both to relax the requirement of massive annotations and to extend the perception capability to situations unseen or rarely seen in the training data.

To that aim, in this research axis, we investigate several broad topics. First, we transversely investigate learning with less supervision, with applications to various perception tasks. Focusing on outdoor vision, we conduct research relying on data-driven or physics-guided paradigms to hallucinate complex lighting/weather conditions and compensate for missing data in the training sets. Because mobile robots evolve in the physical world, we also investigate how vision algorithms can provide in-depth 3D understanding of the scene from images and/or LiDAR scans.

To evaluate our research as well as to foster reproducibility, we rely on relevant recent public datasets (nuScenes 47, Waymo Open 142, Woodscapes 153, SemanticKITTI 38, CADCD 130, etc.) and intend to openly share our research results.

3.1.1 Learning with less supervision

It is now widely accepted that supervised learning is a long-term dead end for computer vision. It relies on costly, human-biased annotations, which will soon become unsustainable given the ever-increasing size of the datasets needed to cover data diversity. To circumvent the need for labels, strategies have been developed where a trained model is either (almost) directly applicable to unseen conditions (i.e., zero-/few-shot learning) or finetuned on a target domain (i.e., domain adaptation). Regarding the need for data, we investigate automatic data generation with Generative Adversarial Networks (GANs). Following recent work from the group members 94, 8, 145, 146, 132, 131, 151, 122, we contribute to these research directions, investigating the remaining scientific challenges detailed below.

Regarding zero-shot learning, we observe that current methods are limited by the small amount of geometric information featured in the embeddings that are used as auxiliary information; we therefore boost this geometric information in the embeddings, for example by jointly using text and images. As for few-shot learning, we use high-contrast dictionary-based approaches where generalization is controlled by the level of sparsity. We are also interested in category-agnostic models that can operate on (e.g., detect, segment) arbitrary objects, or that can adapt online to information retrieved from databases of rare objects. We build upon recent progress in representation learning to enforce separable feature representations 96 while enforcing orthogonality of features 144. Besides, we investigate both zero- and few-shot learning in the context of a complete perception pipeline, instead of focusing on individual vision tasks as is commonly done. In both cases, we will also investigate the use of multiple views and multiple modalities (using both images and LiDAR scans).
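To make the notion of feature orthogonality concrete, the following minimal sketch computes a generic orthogonality penalty on a small set of feature vectors. It is only an illustration under stated assumptions: the function name `orthogonality_penalty` and the choice of the squared Frobenius norm of the Gram matrix minus the identity are ours, and are not necessarily the formulation used in 144.

```python
def orthogonality_penalty(features):
    """Squared Frobenius norm of (F F^T - I), with F the matrix of row vectors.

    Hypothetical helper for illustration: the penalty is 0 when the rows are
    orthonormal and grows when rows overlap or are not unit-length.
    """
    n = len(features)
    penalty = 0.0
    for i in range(n):
        for j in range(n):
            # Entry (i, j) of the Gram matrix F F^T.
            dot = sum(a * b for a, b in zip(features[i], features[j]))
            target = 1.0 if i == j else 0.0
            penalty += (dot - target) ** 2
    return penalty


if __name__ == "__main__":
    print(orthogonality_penalty([[1.0, 0.0], [0.0, 1.0]]))  # orthonormal rows
    print(orthogonality_penalty([[1.0, 0.0], [1.0, 0.0]]))  # identical rows
```

Added to a task loss with a small weight, such a term pushes learned features apart; the weighting is a design choice that this sketch leaves open.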

Concerning domain adaptation, a common unsupervised strategy exploits the resemblance between a source and a target domain, using a self-supervised signal (e.g., pseudo labels 106) to discover statistics in the target domain. However, when the domain gap is too large, the model adaptation converges to sub-optimal minima 154, 50. To accommodate larger domain gaps, we investigate the discovery of new statistics with the support of several modalities (e.g., both 2D and 3D), for a variety of tasks (e.g., semantics, depth and normal estimation). Regarding representation learning, we focus on disentangling latent space representations, working towards domain-invariant features by enforcing orthogonality of the domain features while enabling the discovery of exclusive task/domain features. We also study how to bridge zero-/few-shot learning and the domain adaptation paradigm, investigating the open domain adaptation setting that accounts for novel unseen domains, such as in 114, 44.
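As an illustration of the pseudo-label signal mentioned above, here is a minimal sketch that keeps only confident predictions on unlabeled target samples. The function name `pseudo_labels` and the threshold of 0.9 are assumptions made for the example; actual methods such as 106 may select or weight pseudo labels differently.

```python
def pseudo_labels(probabilities, threshold=0.9):
    """Return (sample_index, class_index) pairs for confident predictions.

    `probabilities` is a list of per-sample class probability lists, as
    produced by a source-trained model on target data. Samples whose top
    probability is below `threshold` (an assumed value) stay unlabeled.
    """
    selected = []
    for index, probs in enumerate(probabilities):
        # Index of the most likely class for this sample.
        label = max(range(len(probs)), key=probs.__getitem__)
        if probs[label] >= threshold:
            selected.append((index, label))
    return selected


if __name__ == "__main__":
    target_predictions = [[0.95, 0.05], [0.60, 0.40], [0.08, 0.92]]
    print(pseudo_labels(target_predictions))
```

The selected pairs can then serve as training targets on the target domain; with a large domain gap, many of them may be wrong, which is the failure mode discussed above.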

Finally, to relax the need for training data, we investigate automatic data generation with image-to-image (i2i) translation and style-transfer techniques, both of which can help training in self-supervised settings 39, 131, 105. We observe that GANs commonly lack diversity and controllability in the generated data. To address this, we study multi-domain setups 52 and the automatic discovery of domain attributes 87 to foster controllable latent representations. We fight the lack of diversity in the generated datasets 39 with continuous 148 and multi-modal 131 strategies. Besides standard metrics, we also evaluate the quality of our generated data by training proxy vision tasks.

3.1.2 Vision in complex conditions

The wide variety and continuous nature of physical phenomena prevent any dataset from encompassing all lighting and weather conditions. Most outdoor datasets account exclusively for data recorded in clear weather during daytime, while only a handful of them include adverse conditions. In fact, regardless of the recording complexity, some conditions are unlikely to be included in any dataset due to their inherent rarity (e.g., snow storm at sunset). Because they lead to drastically varying appearances, we focus here on changing weather, seasons and lighting conditions, with the complementary goals of improving the robustness of vision algorithms and of automatically assessing failure cases.

Rather than agnostic data-driven models, we study training with a priori knowledge, with the ultimate goal of obtaining representations that are invariant to these conditions. To compensate for the scarcity of data as well as to generalize training to unseen conditions, we rely on physics-guided learning to ease the discovery of statistics, using physical guidance to discover the continuous underlying manifold on which the data live 13. Using physical models to guide the training helps vision algorithms to better accommodate partial or imbalanced distributions in the training set, as well as to better extrapolate to unseen conditions. We focus on invariant representations that can improve both the image translation setup and proxy vision tasks (segmentation, object detection, etc.), relying on prior works from group members 13, 134, 16, 14.

Sometimes, weather conditions go even beyond the sensing capabilities of sensors, e.g., sun glare or very dark scenes can dramatically reduce the perception of standard cameras. In such cases, robustness is difficult to attain and the system should rather trigger an alert or fail gracefully. Unseen weather conditions encountered at runtime can be regarded as a dataset/distribution shift and can be addressed with predictive uncertainty estimation methods 127. Through a Bayesian lens, we study and devise strategies for the automatic assessment and detection of dataset drifts by leveraging approximate ensembles 116, 33, 70, observer networks 58, 88, and complementary information from other sensors 40. We rely on prior findings and works from group members 58, 70, 69, 16, 134.
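A simple way to picture ensemble-based uncertainty is to average the class probabilities predicted by several models and measure the entropy of the result: high entropy suggests the input may lie outside the training distribution. The sketch below is a generic illustration under that assumption; the function name `predictive_entropy` is hypothetical and the approach is not claimed to be the one used in 116, 33, 70.

```python
import math


def predictive_entropy(member_probs):
    """Entropy (in nats) of the ensemble-averaged class distribution.

    `member_probs` holds one class probability list per ensemble member,
    all predicted for the same input.
    """
    n_members = len(member_probs)
    n_classes = len(member_probs[0])
    # Average the members' predictions class by class.
    mean = [
        sum(member[c] for member in member_probs) / n_members
        for c in range(n_classes)
    ]
    # Zero-probability classes contribute nothing to the entropy.
    return -sum(p * math.log(p) for p in mean if p > 0.0)


if __name__ == "__main__":
    agreeing = [[1.0, 0.0], [1.0, 0.0]]
    disagreeing = [[1.0, 0.0], [0.0, 1.0]]
    print(predictive_entropy(agreeing), predictive_entropy(disagreeing))
```

A runtime monitor could compare this value with a threshold calibrated on validation data to decide when to raise an alert; how to calibrate it is left open here.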

On the application side, we evaluate the robustness of the proposed methods on core vision tasks using recent adverse weather datasets 138, 155, 142, 47, 40.

3.1.3 3D scene understanding

Robots still commonly lack the natural ability of humans to estimate the fine-grained geometry of a scene while understanding object interactions and reasoning beyond their field of view. To provide accurate geometry, 3D active sensors such as LiDARs are commonly used in autonomous driving 92, but they only provide a sparse sensing of the scene. In this third topic, we seek a fine-grained geometric and semantic 3D understanding of the scene, with or without 3D sensing, while also relying on frugal supervision. This topic benefits from prior work of group members 133, 42, 41, 94, 15, 93, 152, 100, 48.

Building on recent methods 42, 41, 143, 109, 82 that efficiently convolve point clouds, we aim to improve 3D tasks (detection, segmentation, etc.) by relying on contextual priors. Furthermore, we address 3D generative tasks like point cloud up-sampling, completion and generation, as well as surface reconstruction, which provides important navigation cues for robotics and can also assist the human driver in augmented reality scenarios, particularly in adverse conditions. Temporally consecutive point clouds will also be leveraged to disambiguate occlusions and provide denser scene sensing 133, 48. Regarding richer scene representations, we study the intertwined relation of geometry and semantics 140 through the semantic scene completion task 15, 136, 135, which has gained growing interest lately 38.

Another line of study is the interaction between modalities of different natures for scene understanding, in particular the complementarity of 2D images and 3D scans. We study how multi-modal features can jointly improve the performance of core tasks, but also how they can improve the performance of single modalities by exploiting cross-modal features as self-supervision 94, 8.

Besides the use of 3D devices, we also investigate 3D understanding from 2D images. As they originate from passive sensors, images carry less obvious geometrical cues but humans are still able to estimate depth and understand 3D from a photograph, heavily reasoning on learned priors. We study here challenging tasks like scene reconstruction or 6-DOF localization, which can be conveniently self-supervised from either 3D sensing or sequential data.

3.2 Research Axis 2: Localization & Mapping

Vehicle localization and environment mapping are pillars of the perception task for an autonomous vehicle. While vehicle localization ensures the global positioning of the vehicle in its environment and its local positioning with regard to the road and to nearby road features, environment mapping contributes to building a useful internal representation that is exploited by the decision system.

Inria and Valeo teams have been working, separately and jointly, on localization and mapping solutions for over 15 years. Many algorithms have been developed and have shown their effectiveness in terms of the accuracy, precision and safety expectations for autonomous driving. However, integrity, safety, data size and costs are still challenging points that ASTRA wants to address while pursuing research on localization and pose registration using single-sensor and multi-sensor approaches.

3.2.1 Localization and Map Integrity

Many localization methods have been developed, mainly based on Particle Filters and GraphSLAM together with a point cloud representation of the environment. These solutions mainly focus on the accuracy and precision requirements of the pose estimates. Yet, the integrity of the localization and the integrity of the maps used for localization are critical to ensure a safe use of the localization system for autonomous driving. State-of-the-art methods on localization integrity usually proceed either in two steps, (1) employing Fault Detection and Isolation (FDI) algorithms to remove outliers from the input data and (2) computing Protection Levels (PL) to qualify the integrity zone 103, 86, 104, or by calculating the Protection Levels without FDI, such as in 112, 35. Map integrity is closely related to the feasibility of finding a distinctive match when using the map for localization. Indeed, the map can be explored by an algorithm that aims to identify the zones or sections that represent a potential ambiguity for matching algorithms, such as in 89.
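The two-step scheme described above (outlier rejection, then a bound on the remaining error) can be sketched in one dimension as follows. This is a deliberately simplified illustration, not the method of the cited works: the function names, the residual gate of 3 standard deviations, the inflation factor `k = 5.0` and the Gaussian, independent-noise assumption are all ours.

```python
import math


def detect_and_isolate(measurements, predicted, sigma, gate=3.0):
    """Toy FDI step: keep measurements whose normalized residual with
    respect to the predicted position is within `gate` (assumed value)."""
    return [z for z in measurements if abs(z - predicted) / sigma <= gate]


def protection_level(sigma, n_inliers, k=5.0):
    """Toy PL: inflate the standard deviation of the mean of `n_inliers`
    independent measurements by a factor `k` (assumed value)."""
    return k * sigma / math.sqrt(n_inliers)


if __name__ == "__main__":
    # Hypothetical 1D position fixes in meters; 14.0 is a faulty one.
    fixes = [10.1, 9.9, 14.0, 10.0, 10.2]
    inliers = detect_and_isolate(fixes, predicted=10.0, sigma=0.5)
    estimate = sum(inliers) / len(inliers)
    bound = protection_level(sigma=0.5, n_inliers=len(inliers))
    print(inliers, estimate, bound)
```

In practice the factor `k` would be derived from a target integrity risk and the noise model validated on data; the sketch only shows how the two steps chain together.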

3.2.2 Online Alignment of Multiple Map Layers

A wide diversity of maps dedicated to vehicle localization is available nowadays. These maps differ from each other in several key localization features. The most important aspects may be: the structure of the representation (e.g., grid, graph, etc.), the underlying theory used to represent the information of the environment (e.g., occupancy probabilities, landmarks, etc.), and the sensor used to collect the information (LiDAR, camera, etc.). Map providers, such as Here and TomTom, usually provide maps with different layers that encode different information relevant to ADS features (road model, lanes, and road features). Valeo, having the advantage of being a leader in automotive LiDAR sensors, wants to enhance its arsenal of ADS solutions as a map provider, by providing a map service based on laser point clouds and potentially other information layers that are relevant to ADS. For this purpose, it is important to find correspondences and align the different map layers with maps from other providers. This subject is addressed by considering semantic information that can be extracted from heterogeneous sensor and map data, such as in [9] and [10].

3.2.3 Georeferencing of maps without RTK GNSS and IMU

Highly accurate maps used for AD localization are usually built using a very expensive fusion box that includes a very precise RTK GPS receiver and a first-grade IMU. These map-building solutions are very expensive and require the deployment of RTK bases in the environment to receive the corrections, which implies extra cost. The idea of this subject is to use available sensors (such as standard GNSS, IMU, CAN, LiDAR, camera) and possibly maps from other providers to build a highly accurate (in the global reference frame) map using point clouds. Different inputs from sensors and maps can be considered together with an asynchronous fusion method to build an accurate estimate [11]. The method to achieve this goal constitutes the subject of this study.

3.3 Research Axis 3: Decision making, motion Planning & vehicle Control

Decision-making, maneuver and motion planning, and vehicle control are vital components of the intelligent vehicle. These modules act as a bridge, connecting the subsystem that perceives the environment and the bottom-level control subsystem in charge of executing the motion. We address these issues by covering various strategies for designing decision-making, trajectory planning and tracking control, as well as shared human-automation driving that adapts to the different levels of driving automation while accounting for the driver profile.

The challenges related to decision making and path planning are mainly related to four distinct elements:

- Errors and uncertainties introduced by the perception subsystems

- Environment static and dynamic occlusions

- Lack of understanding and prediction of other road users behaviors

- Simultaneous consideration of several constraints related to: vehicle dynamics, energy consumption, passenger comfort, violations of driving rules, etc.

Different approaches are investigated in the state of the art, each addressing one or several of these issues but, to our knowledge, none is capable of addressing all of them simultaneously. More specifically, in most approaches decision and planning are dealt with separately or in a way that favors one of them. Approaches based on Markov decision processes (MDP, POMDP, etc.), path-speed profiles, ontologies, or artificial potential fields coupled to MPC controllers show interesting results in dedicated environments or in specific situations; however, most of them do not properly tackle specific issues such as intention and behavior prediction, interactions, or multi-criteria real-time optimal maneuver decision.
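Among the approaches listed above, artificial potential fields are easy to illustrate: the goal attracts the vehicle, obstacles repel it, and the resulting force gives a direction of motion. The sketch below uses a classic textbook formulation (quadratic attractive potential, repulsive potential active within an influence radius). The function name `apf_force`, the gains and the radius are assumptions for the example, and no MPC coupling is shown.

```python
import math


def apf_force(position, goal, obstacles, k_att=1.0, k_rep=1.0, influence=5.0):
    """Return the 2D force (negative gradient of the potential) at `position`.

    Attractive potential: 0.5 * k_att * distance_to_goal**2.
    Repulsive potential:  0.5 * k_rep * (1/d - 1/influence)**2 for d < influence.
    """
    px, py = position
    # Attractive term pulls linearly towards the goal.
    fx = k_att * (goal[0] - px)
    fy = k_att * (goal[1] - py)
    for ox, oy in obstacles:
        dx, dy = px - ox, py - oy
        d = math.hypot(dx, dy)
        if 0.0 < d < influence:
            # Repulsive term pushes away from the obstacle along (dx, dy) / d.
            magnitude = k_rep * (1.0 / d - 1.0 / influence) / d ** 2
            fx += magnitude * dx / d
            fy += magnitude * dy / d
    return fx, fy


if __name__ == "__main__":
    # Hypothetical scene: goal 10 m ahead, one obstacle 1 m ahead.
    print(apf_force((0.0, 0.0), (10.0, 0.0), [(1.0, 0.0)]))
```

Such fields are known to suffer from local minima, which is one reason they are typically combined with a higher-level planner or controller.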

While continuing the investigation of end-to-end driving approaches based on (inverse) reinforcement learning, we keep improving the path-planning methods already developed by both teams at RITS and DAR: Reachable Interaction Sets 37 and Artificial Potential Fields (coupled to MPC control), which are designed for obstacle avoidance, as well as traditional path planning methods. Optimal methods based on convex optimization and cubic splines are investigated at DAR to design optimized and robust trajectories. More specifically, we are mainly focusing on the following three scientific topics (detailed in the next sections):

- Maneuvers and trajectories prediction of surrounding road users

- Schemes for ego-vehicle actions and maneuvers decision making and motion planning

- Motion planning and trajectories generation

3.3.1 Maneuver and trajectory prediction

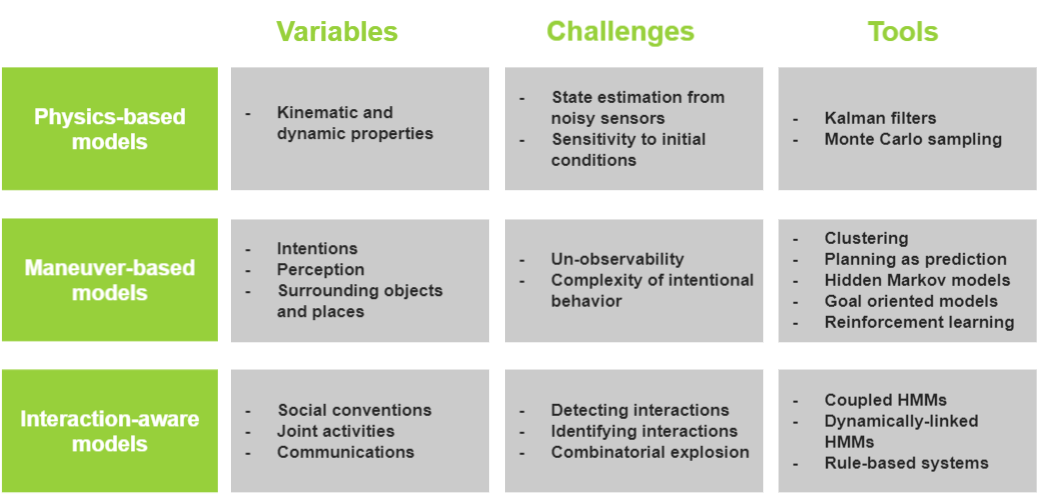

To achieve safe and comfortable driving, an autonomous driving system must have accurate knowledge of the future motions of all the traffic agents surrounding the autonomous vehicle, such as cars, pedestrians, cyclists, etc. Motion prediction is thus a key task in autonomous vehicles. Several methods of motion prediction have been studied in the literature. Lefèvre et al. 107 propose to classify them in three levels with an increasing degree of abstraction: physics-based models, maneuver-based models and interaction-aware models.

- Physics-based motion models. They consider that the motion of vehicles only depends on the laws of physics. The future motion is predicted using dynamic and kinematic models linking control inputs, car properties and external conditions to the evolution of the vehicle state. These models are limited to short-term prediction and are unable to anticipate any change in the motion of the car caused by the execution of a particular maneuver.

- Maneuver-based motion models. They consider that the future motion of a vehicle also depends on the maneuver that the driver intends to perform. The future motion of a vehicle on the road network corresponds to a series of maneuvers executed independently from the other vehicles. These models do not adapt to different road layouts.

- Interaction-aware motion models. They take into account the inter-dependencies between vehicles’ maneuvers. These models require computing all the potential trajectories of the vehicles, which is computationally expensive and not compatible with real-time risk assessment. Valeo has filed a patent to overcome this issue 149. This patented method is being developed in order to be tested in the automated driving prototypes.
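To illustrate the first category in the list above, the following sketch rolls a simple kinematic model forward in time, assuming a constant speed and a constant turn rate. The function name `predict_ctrv`, the Euler integration and the 0.1 s time step are choices made for the example; they are not taken from the cited survey or from the Valeo prototypes.

```python
import math


def predict_ctrv(x, y, heading, speed, yaw_rate, horizon, dt=0.1):
    """Predict future (x, y) positions with constant speed and turn rate.

    Units: meters, radians, m/s, rad/s, seconds. The state is integrated
    with explicit Euler steps of duration `dt`.
    """
    steps = int(round(horizon / dt))
    trajectory = []
    for _ in range(steps):
        # Move along the current heading, then update the heading.
        x += speed * math.cos(heading) * dt
        y += speed * math.sin(heading) * dt
        heading += yaw_rate * dt
        trajectory.append((x, y))
    return trajectory


if __name__ == "__main__":
    # Hypothetical vehicle: 10 m/s, gentle left turn, 2 s horizon.
    path = predict_ctrv(0.0, 0.0, 0.0, 10.0, 0.2, horizon=2.0)
    print(path[-1])
```

Because the inputs are frozen at their current values, the prediction cannot anticipate a lane change or a braking maneuver, which is the short-horizon limitation noted for this category.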

Fig. 2 shows a comparison of the different models including their challenges and the used algorithms.

Figure 2: Motion prediction models comparison

Valeo has considered these categories in its development of the automated driving prototypes Cruise4U and Drive4U. The physics-based model is used in situations where there is no knowledge about the route geometry (for example in a big roundabout without lanes), and the maneuver-based model in highway and urban environments when the road topology is available from an HD Map or the Valeo Drive4U Locate map.

In the last few years, machine learning algorithms, and deep learning in particular, have been used to overcome the limits of current prediction methods. Human motion trajectory prediction has been addressed in the literature 43, 137. Large amounts of naturalistic road user trajectories in different contexts (highways 56, 57, 97 or urban 47, 49), needed to train and evaluate deep learning methods, are now available. Our first works 12, 121, 11, 119, taking as input the track history of a target vehicle and of its surrounding moving road users, obtained accurate predictions of the target vehicle motion on highways, and an extension 120 including the static scene structure has been proposed for an urban context. Valeo is involved in this research area with activities in the prediction of the trajectories of other road users and of the ego-vehicle. Different approaches have been implemented and tested in simulation and on test cars 46, 45.

However, work still has to be done in this domain in terms of performance, robustness and generalization before these methods can be used in real autonomous driving applications. In fact, the behavior of a human driver also depends on contextual knowledge of the environment (speed limits, traffic density, day of the week, visibility, road equipment, driver's country, etc.) and on the driver's goal 157. We plan to include these contextual cues in a prediction method, which should also compute multiple plausible trajectories representing the driver's diverse possible behaviors, give uncertainty estimates on the predictions, carry out multi-agent trajectory forecasts and be usable in any environment. This will necessitate the use of a more complete dataset 156 composed of various driving scenarios collected in different countries, which may be complemented by our own dataset collected with the help of Valeo if necessary. This work will be done in collaboration with Itheri Yahiaoui from Reims University and within the starting PhD thesis of Amina Ghoul funded by the SAMBA project.

3.3.2 Ego-vehicle actions and maneuvers decision making

The most important component of an autonomous vehicle navigation system is the decision system that elaborates the upcoming tactical actions and maneuvers to be executed. The selection of the optimal maneuver should result from the relevant and simultaneous consideration of several factors. These factors are mainly: safety and risk assessment, respect of the dynamic constraints of the vehicle and its controllability, uncertainties related to the perception outputs, uncertain interactions with and between nearby road users, and finally the criteria related to the navigation objectives, such as journey duration minimization, driver/passenger comfort, fuel/energy consumption minimization, respect of driving rules, etc. The latter are expressed in terms of kinematic constraints.
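One simple way to picture the simultaneous consideration of these factors is to treat safety as a hard constraint and to rank the remaining candidates by a weighted sum of the navigation criteria. The sketch below is only a hypothetical illustration: the function name `select_maneuver`, the risk threshold, the criteria names, the weights and the candidate values are all invented for the example and do not describe the team's decision system.

```python
def select_maneuver(candidates, weights, max_risk=0.2):
    """Return the name of the feasible candidate with the lowest cost.

    Candidates whose estimated risk exceeds `max_risk` (assumed threshold)
    are discarded; the others are ranked by a weighted sum of their
    normalized costs. Returns None when no candidate is safe enough.
    """
    feasible = [c for c in candidates if c["risk"] <= max_risk]
    if not feasible:
        return None

    def weighted_cost(candidate):
        return sum(weights[name] * candidate["costs"][name] for name in weights)

    return min(feasible, key=weighted_cost)["name"]


if __name__ == "__main__":
    # Hypothetical candidates with costs normalized to [0, 1].
    candidates = [
        {"name": "keep_lane", "risk": 0.05,
         "costs": {"duration": 0.8, "discomfort": 0.1, "energy": 0.3}},
        {"name": "overtake", "risk": 0.15,
         "costs": {"duration": 0.3, "discomfort": 0.5, "energy": 0.6}},
        {"name": "cut_in", "risk": 0.60,
         "costs": {"duration": 0.1, "discomfort": 0.9, "energy": 0.7}},
    ]
    weights = {"duration": 0.5, "discomfort": 0.3, "energy": 0.2}
    print(select_maneuver(candidates, weights))
```

A weighted sum hides trade-offs between heterogeneous criteria and ignores uncertainty in the inputs, which is part of the motivation for richer decision schemes.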

In the literature, very few approaches describe unified decision architectures capable of taking all of the above considerations into account. Most approaches develop planning schemes that separate motion generation from decision making. In these approaches, motion planning (including reactive planning) usually exploits geometry, configuration spaces and other optimization techniques. Decision-making schemes rely on logic-based AI approaches such as rule-based systems 126, decision trees 54, 110, finite state machines 158, Bayesian networks and Markov-decision-process-like approaches (MDP, POMDP…), AI heuristic algorithms (SVMs and evolutionary methods), AI approximate reasoning methods (fuzzy logic) and artificial neural networks (CNNs, reinforcement learning…) 111, 147, 51. The authors of 55 propose an architecture that optimizes motion generation using the decision-making function as the evaluation function, the aggregation of fuzzy logic and belief theory allowing decision making on heterogeneous criteria and uncertain data.

In the coming period we will work on unified architectures that tackle decision making and motion planning simultaneously. Very likely, we will focus on deep learning techniques based on reinforcement learning and inverse reinforcement learning, where we seek a (dense) reward function that is suitable for a large class of behavioural planning tasks. More generally, we will investigate model-free and model-based approaches, some of which have already shown interesting results, such as in 108. In particular, in order to better evaluate safety costs, we will take as input the output of the maneuver and trajectory prediction system described in the previous section, which has the advantage of better estimating road users' trajectories thanks to attention mechanisms that encode interactions and behaviors. This work is done within the PhD of Yacine Ben Ameur, funded by the SAMBA project.

3.3.3 Trajectory planning

State-of-the-art motion planning techniques have mainly focused on methods that generate the geometric path first and then apply a speed profile to the generated path. To mention just a few, this approach has been tackled by the following methods (or combinations of them): interpolating-curve-based 78, 79, graph-search-based 113, sampling-based 98 and optimization-based 83.

From the motion planning point of view, the inclusion of human factors is a key element for increasing the acceptance of the automated vehicle's behavior and for providing a more human-like response. For that purpose, data from real drivers should be used to better define the motion constraints in dynamic environments, so that trajectories can be adapted to any specific road scenario (intersections, roundabouts, merging, overtaking, lane driving, etc.). For instance, motion constraints such as longitudinal and lateral accelerations as well as jerks should be properly taken into account in the generation of a human-like speed profile, as introduced in 34.
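To make the "path first, speed profile second" scheme concrete, the following minimal sketch computes a speed profile along an already-generated path under lateral and longitudinal acceleration limits. It is an illustration only, not the method of 34: the function name, the uniform sample spacing and the symmetric acceleration/braking bound are assumptions, and jerk limits are not handled.

```python
import math

def speed_profile(curvatures, ds, v_max, a_lat_max, a_lon_max):
    """Return one speed (m/s) per path sample that respects the given limits.

    curvatures: path curvature (1/m) at each sample (hypothetical input format).
    ds: distance between consecutive samples (m), assumed uniform.
    """
    # 1) Lateral-acceleration cap: v^2 * |kappa| <= a_lat_max.
    v = [min(v_max, math.sqrt(a_lat_max / abs(k))) if k != 0.0 else v_max
         for k in curvatures]
    # 2) Forward pass: limit acceleration, v_next^2 <= v^2 + 2 * a_lon_max * ds.
    for i in range(1, len(v)):
        v[i] = min(v[i], math.sqrt(v[i - 1] ** 2 + 2.0 * a_lon_max * ds))
    # 3) Backward pass: limit braking with the same (assumed symmetric) bound.
    for i in range(len(v) - 2, -1, -1):
        v[i] = min(v[i], math.sqrt(v[i + 1] ** 2 + 2.0 * a_lon_max * ds))
    return v

# Example: a straight road with one curved sample in the middle.
profile = speed_profile([0.0, 0.0, 0.1, 0.0, 0.0], ds=5.0,
                        v_max=15.0, a_lat_max=2.0, a_lon_max=1.0)
```

Limits learned from real driver data, as envisaged above, would replace the constant bounds `a_lat_max` and `a_lon_max` used here.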

Furthermore, the inclusion of driving factors such as energy consumption or the traffic occupancy should be considered in the multi-criteria optimization for better adapting to any driving situation. This would help to reduce the driving time (such as the commute time) or even reduce the energetic consumption and the stress of both driver and car passengers by reducing the traffic jams and the corresponding repetitive acceleration and braking maneuvers.

Finally, this planning module must meet real-time execution constraints to ensure safety. Thus, a complete and fast motion planning approach is needed; it should take functional safety into account to generate, in real time, collision-free trajectories that consider the different interactions with the surrounding vehicles and that can be tracked by the controller. For that purpose, the work presented in 36 will be extended to consider the interactions among the several surrounding road users as a whole rather than individually, investigating which risk assessment metric is the most appropriate for each specific scenario.

3.3.4 Robust control of automated vehicles

In order to execute a planned trajectory or a reactive maneuver safely, it is essential that the vehicle takes its own dynamics into account while ensuring safe, stable and comfortable maneuvers. A tremendous effort has been made over the last 10 years by the team partners in the area of motion planning and intelligent control. Seven PhD theses were dedicated to the important problem of path and motion planning as well as to the corresponding control-command. All address the navigation of autonomous vehicles in structured but complex environments. Harsh configurations such as intersections and roundabouts need specific planning approaches that take into account the geometry and the topology of the places, the dynamic and kinematic constraints of each ego-vehicle, and the safety and comfort constraints.

Previously, the RITS team (Inria) also implemented control algorithms dedicated to specific road maneuvers such as overtaking 128 and parking 129. Control laws were designed with theoretical proofs of stability and optimality. Very interesting results were obtained in two major domains, mainly related to the controllability and stability of complex dynamic systems, which are key aspects when designing intelligent control algorithms for vehicles:

- Plug&Play control for highly non-linear systems: stability analysis of autonomous vehicles. The developed Plug&Play control is able to provide stable responses for autonomous vehicles under uncontrolled circumstances, including modifications of the input/output sensors. The former RITS team was among the very first to investigate these theories for automotive applications. They were investigated in the PhD theses of Mr. F. Navas 124 and I. Mahtout 115. The approach deals with the reconfiguration of existing controllers whenever changes are introduced in the system (gain scheduling), online closed-loop identification of the vehicle and its components, and automatic control reconfiguration to achieve optimal performance 125, 10.

- Fractional calculus for cooperative car-following control: a car-following gap regulation controller using fractional-order calculus has been developed. It has been shown to yield a more accurate description of real processes and to ensure string stability of the platoons or vehicles involved in Cooperative Autonomous Cruise Control 66. In an effort to combine fractional-calculus robust control with Plug&Play control, a multi-model adaptive control (MMAC) algorithm based on Youla-Kucera (YK) theory was proposed to deal with heterogeneity in cooperative adaptive cruise control (CACC) systems 67.

ASTRA will evolve by introducing intelligent cooperation between vehicles while driving the vehicle autonomously in a human-like way (increasing driver acceptability) but with the safety and accuracy of optimized control algorithms. To achieve this, we will rely on the approaches developed so far, but no further research will be conducted during the lifetime of the joint team. This is mainly due to the absence of a senior researcher at ASTRA capable of carrying this topic independently at a high level. This also motivates the team to seek to recruit a new confirmed researcher in the field of the control of dynamic systems, a crucial domain for a team willing to develop and deploy advanced control architectures on real mobile platforms. In the meantime, it would be very interesting to envisage collaborations with other Inria teams working on similar topics. A perfect example is the DISCO team (Inria Saclay Research Center, head: Mrs. Catherine Bonnet). Among others, the research interests of DISCO cover the realization and reduction of infinite-dimensional systems, robust and BIBO parametrization and stabilization of infinite-dimensional systems, stabilization by finite-dimensional controllers (PID control), delay systems and fractional systems.

This research direction interacts strongly with the research axis “Large scale modeling and deployment of mobility systems in Smart Cities”. The former will be essential when developing control algorithms that rely on a very small communication delay and a stable latency in order to design stable systems. The latter will serve to analyze the effect of a developed algorithm on the traffic flow, moving from the validation of a proposed controller on a limited number of vehicles to its study from a macroscopic perspective.

3.4 Research Axis 4: Large scale modeling and deployment of mobility systems in Smart Cities

While axes 1 to 3 deal with subjects related to the on-board intelligence of an “individual” intelligent vehicle and its autonomous navigation, axis 4 intervenes when it comes to many communicating, autonomous or automated vehicles but also when it comes to the cooperation with the static environment (infrastructure). The latter may contain and integrate: roadside and monitoring sensors (Cooperative Perception Services), signaling, communication infrastructures, cloud... The research concerns in particular the deployment of equipped vehicles on a large scale in a road or urban environment.

The research objectives are twofold.

- First, the focus is on the modeling of systems comprising a large number of vehicles, often seen as random entities.

The methodology is mainly to explore the links between large random systems and statistical physics. This approach proves very powerful, both for macroscopic (fleet management 63) and microscopic (car-level description of traffic, formation of jams 72, 139, 77, 76) analysis. The general setting is mathematical modelling of large systems (typically in the so-called thermodynamical limit), without any a priori restriction: networks, random graphs, etc. One often aims at establishing a classification based on criteria of a twofold nature: quantitative (performance, throughput, etc) and qualitative (stability, asymptotic behavior, phase transition, complexity).

- The second objective concerns the cooperation of these communicating entities in order to address the efficiency and safety of mobility. This cooperation takes several forms. Direct or indirect communications (V2X) are dedicated to maneuver coordination, taming and improving traffic efficiency (cf. section 4.4.2), platooning, safety critical distributed coordination (cf. 4.4.3)... Crowdsourcing is another aspect that could be used for traffic modeling and prediction (cf. 4.4.1), environment augmented mapping, or global vehicle localization. A PhD student will be hired this year to work on this precise subject (cf. 4.5).

Beside this core methodology, other past activities of interest include discrete event simulation 53, 102 and resource allocation for ITS 101, 84, 85.

Finally, axis 4 does not represent a structural unit like the other axes. Its objective is to deal with the problem of scaling, deployment and multi-vehicle cooperation in a global and systemic way. On the substance, methods and theories of modeling will be investigated and the design of secure telecommunication systems will be elaborated. These models and systems are intended to be implemented in more global systems and architectures. They will interact with the other axes through these architectures and will respond in a targeted way to needs; for example, whenever a need for probabilistic modeling is expressed (e.g. section 4.5).

3.4.1 Traffic prediction in urban settings: detecting extreme events

A probabilistic forecasting method that can provide predictions of urban traffic at city level, accurate in the short term and meaningful for a horizon of up to several hours, has been devised in the team 75, 71, 74, 117, 118, 73, 5, in collaboration with C. Furtlehner (TAU, Inria Saclay). It is designed to leverage spatial and temporal dependencies and can deal with missing data, both for training and for running the model. The method consists in learning a sparse Gaussian copula of traffic variables, compatible with the Gaussian belief propagation algorithm. Results of tests performed on three urban datasets show a very good ability to predict flow variables and reasonably good performance on speed variables.
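As an illustration of how a Gaussian model copes with missing detectors, the sketch below computes the conditional mean and covariance of the unobserved variables given the observed ones. This is plain Gaussian conditioning with a dense solve, a hypothetical stand-in for the quantities that belief propagation targets on a sparse model; the copula transform of the traffic variables is omitted and the function name is an assumption.

```python
import numpy as np

def predict_missing(mean, cov, observed_idx, observed_values):
    """Condition a joint Gaussian on observed entries.

    Returns (hidden_idx, conditional_mean, conditional_cov) for the
    variables that were not observed.
    """
    mean = np.asarray(mean, dtype=float)
    cov = np.asarray(cov, dtype=float)
    obs = np.asarray(observed_idx, dtype=int)
    hid = np.array([i for i in range(len(mean)) if i not in set(observed_idx)],
                   dtype=int)
    s_oo = cov[np.ix_(obs, obs)]   # covariance among observed variables
    s_ho = cov[np.ix_(hid, obs)]   # cross-covariance hidden/observed
    s_hh = cov[np.ix_(hid, hid)]   # covariance among hidden variables
    # gain = S_ho S_oo^{-1}, computed with a solve (S_oo is symmetric).
    gain = np.linalg.solve(s_oo, s_ho.T).T
    residual = np.asarray(observed_values, dtype=float) - mean[obs]
    cond_mean = mean[hid] + gain @ residual
    cond_cov = s_hh - gain @ s_ho.T
    return hid, cond_mean, cond_cov

# Two correlated detectors; only the first one reports a value.
hid, m, c = predict_missing([0.0, 0.0], [[1.0, 0.8], [0.8, 1.0]], [0], [1.0])
```

The conditional covariance is what gives the forecast its probabilistic character: a detector that is only weakly correlated with the observed ones keeps a variance close to its prior.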

When investigating the output of the model, some rare but large errors are noticeable. It turns out that this corresponds to detectors which, for a long period, send values completely at odds with the ones observed during training. These badly behaving detectors may either correspond to corrupted ones, or to drastic changes of the traffic conditions on the corresponding segment, because of road work or accidents for instance.

One way of examining these events has been proposed in 90, and we plan to investigate whether it can be used to improve models. Separating sensor failure from extremal events is even more important, and this is what we plan to investigate in a PhD thesis, by careful analysis of the correlation structure of the model.

3.4.2 Taming highway traffic using cooperative automated vehicles

Several authors 68, 59, 150, 81 have suggested that it is possible to use a small proportion of automated vehicles to regulate highway traffic. These studies are set in a traffic regime which exhibits string instability, which means in terms of transfer function that any excitation of a frequency below a certain limit is amplified. We are interested here in a slightly different setting, where reaction time is taken into account for human drivers. We have shown 64 that the introduction of this delay involves a non rational transfer function, implying in particular that the system is not always stable. We have proposed a complete self-contained proof of stability conditions, based on classical complex analysis. Moreover, we bring to light a phase transition with a new propagation regime, named partial string stability, situated between string stability and string instability.
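The role of the reaction-time delay can be illustrated numerically. The sketch below uses a generic linear car-following law (an assumption, not the model analysed in 64): the follower's acceleration is `k_s` times the delayed spacing error, with time headway `h`, plus `k_v` times the delayed speed difference. The velocity transfer function from one vehicle to the next is then G(s) = e^{-s tau} (k_v s + k_s) / (s^2 + e^{-s tau} ((k_v + k_s h) s + k_s)), which is non-rational as soon as tau > 0. String stability requires |G(j omega)| <= 1 at all frequencies; the closed-loop stability of each vehicle is a separate condition that this sketch does not check.

```python
import cmath

def follower_gain(omega, k_s, k_v, h, tau):
    """|G(j*omega)| for the illustrative delayed car-following law."""
    s = 1j * omega
    delay = cmath.exp(-s * tau)  # reaction-time delay term
    num = delay * (k_v * s + k_s)
    den = s * s + delay * ((k_v + k_s * h) * s + k_s)
    return abs(num / den)

def peak_gain(k_s, k_v, h, tau, omega_max=10.0, n=20000):
    """Largest gain on a uniform frequency grid in (0, omega_max]."""
    return max(follower_gain(omega_max * (i + 1) / n, k_s, k_v, h, tau)
               for i in range(n))

# A peak gain above 1 means some perturbations grow along the platoon.
for h, tau in [(1.5, 0.0), (0.2, 0.0), (1.5, 0.3)]:
    print(h, tau, peak_gain(1.0, 1.0, h, tau))
```

Without delay, this particular law is string stable exactly when 2 k_v h + k_s h^2 >= 2, which the grid search reproduces for the first two parameter sets.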

With these foundations established, the next steps are to devise a traffic stabilization scheme by means of a fleet of cooperative automated vehicles. However, contrary to the work in 68, our approach is based on a car-following model with reaction-time delay, rather than on a first order fluid model. The continuation of these studies will concern shock wave analysis and adequate traffic-stabilizing control strategies.

3.4.3 Crowdsourced mapping

The deployment of intelligent and connected vehicles, equipped with increasingly sophisticated equipment and capable of sharing accurate positions and trajectories, is expected to lead to a substantial improvement of road safety and traffic efficiency. Nevertheless, in order to guarantee accurate positioning in all conditions, including in dense zones where GNSS signals can be degraded by multi-path effects, it is expected that sensor-equipped vehicles will need precise maps of the environment to support their localization algorithms. Crowdsourced mapping represents a cost-effective solution to this problem: it consists in using measurements retrieved by multiple production vehicles equipped with standard sensors to build an accurate map of landmarks and keep it up to date in realistic, long-term scenarios. Existing state-of-the-art crowdsourced mapping solutions rely on triangulation optimization or graph-based optimization, where trade-offs between map quality and computational scalability are still to be investigated. We propose to extend the work of 141 to improve scalability. One possible approach is to rely on a Gaussian belief propagation algorithm to estimate and update the positions of the landmarks and of the vehicles, along with their corresponding uncertainties.
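The elementary operation behind such Gaussian estimation can be sketched as follows: several vehicles report a noisy position for the same landmark, and the reports are fused in information (precision) form. This is only the product-of-Gaussians step under strong assumptions (independent observations, already expressed in a common map frame, vehicle pose uncertainty folded into each covariance); it is not the algorithm of 141, and the function name is hypothetical.

```python
import numpy as np

def fuse_landmark(observations):
    """Fuse independent Gaussian observations of one landmark.

    observations: iterable of (position, covariance) pairs.
    Returns the fused mean and covariance.
    """
    info_matrix = None
    info_vector = None
    for position, covariance in observations:
        precision = np.linalg.inv(np.asarray(covariance, dtype=float))
        contribution = precision @ np.asarray(position, dtype=float)
        if info_matrix is None:
            info_matrix, info_vector = precision, contribution
        else:
            # Information form: precisions and information vectors add up.
            info_matrix = info_matrix + precision
            info_vector = info_vector + contribution
    fused_cov = np.linalg.inv(info_matrix)
    return fused_cov @ info_vector, fused_cov

mean, cov = fuse_landmark([([0.0, 0.0], np.eye(2)), ([2.0, 0.0], np.eye(2))])
```

Because contributions simply add up, a new observation updates the map without revisiting past data, which is the property that makes message-passing schemes attractive for scalability.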

3.4.4 Cooperative automated driving involving V2X communications

Automated driving in a complex shared road requires cooperation among road entities in terms of cooperative control, cooperative perception, and cooperative path planning. This poses new research challenges that did not exist in the domain of vehicular communications e.g., communications for cooperative automated driving and intention-aware communications. Based on our experiences and know-how on mobile telecommunications, networking, and robotics domains, the ASTRA team will conduct research activities within the following domains:

- Safety critical V2V communications.

- Safety critical distributed coordination.

- Safety and performance guided V2X communication and data processing.

- Vehicles' behaviors and intention-aware communications.

4 Application domains

The aim of the project team is to tackle the challenges of autonomous and connected mobility and to provide breakthrough solutions. It covers the improvement of the safety, availability and performance of ADAS (“Advanced Driver Assistance Systems”) and L3 automated systems (Traffic Jam Pilot and Highway Pilot) for privately owned vehicles, as well as L4 automated systems including Robotaxi and automated transportation systems such as autonomous shuttles. Enabled by 5G and V2X connectivity in general, the extension to cooperative automated driving and the Smart City will also be considered. More and more cities and highways are equipping their infrastructures with sensors that enable extended and shared perception. During the project, the developed solutions are tested for these applications. Valeo's Automated Driving roadmap addresses them through three programs: Cruise4U for multiple carriageways/highways, Drive4U for urban environments including autonomous shuttles, and eDeliver4U for last-mile delivery, as shown in Fig. 3.

The Cruise4U and Drive4U programs allowed Valeo to perform open-road experiments around the world, with more than 200,000 km accumulated in real conditions across a wide range of use cases.

Fig. 4 shows a part of the Cruise4U experiments, while Fig. 5 shows world premieres: Drive4U open road experiments with only Valeo serial production sensors operating in Paris, Las Vegas and Tokyo.

A dedicated Automated Driving platform for the project team is under discussion in order to allow quick and easy integration, tests and validations of the Joint team developments.

5 Highlights of the year

- Noël Nadal obtained his Agrégation externe d'informatique.

- Raoul de Charette joined the ELLIS scientific network.

5.1 Awards

- Kaouther Messaoud, Itheri Yahiaoui, Anne Verroust-Blondet and Fawzi Nashashibi received the Georges N. Saridis Best Transactions Paper Award for their paper Attention Based Vehicle Trajectory Prediction, IEEE Transactions on Intelligent Vehicles, 2021 (the awarded paper is selected among all papers published during the three calendar years preceding the year of the award).

6 New software, platforms, open data

6.1 New platforms

Participants: Paulo Resende, Benazouz BRADAI, Gaëtan Le Gall, Fawzi Nashashibi.

The creation of the team has resulted in the strengthening of the experimental side of the team which has always had among its objectives the validation of work on real instrumented platforms. Thus, the team is equipped with at least four road vehicles (Cruise4U and Drive4U from Valeo, a C1 and a Zoé from Inria), 3 shuttles (2 Cybus from Inria and a NAVYA from Valeo) and 2 Cybercars (Inria) (figure 6).

7 New results

7.1 3D scene reconstruction and completion

Participants: Anh-Quan Cao, Raoul de Charette.

In this research axis, within the context of Anh-Quan Cao's PhD thesis, we have studied the ability to estimate 3D scene geometry and semantics from images or 3D data. In SceneRF 20, we introduced a self-supervised method for monocular 3D scene reconstruction using Neural Radiance Fields (NeRF), trained solely from multiple posed image sequences. To enhance geometry prediction, we present new geometrical constraints and a novel probabilistic sampling strategy for effective radiance field training. The performance surpasses the state of the art on multiple benchmarks and metrics. In a collaborative work with the Technical University of Munich (TUM), we proposed a method named PaSCo 29. It revisits the popular Semantic Scene Completion (SSC) task, also addressed in multiple works of the team, to incorporate uncertainty estimation. Here, we extended SSC to the Panoptic Scene Completion (PSC) task to include instance-level information, resulting in richer 3D scene understanding. Crucially, we noticed that the literature overlooks uncertainty estimation despite its importance for safety-critical applications like autonomous driving. To allow uncertainty awareness, our approach uses a multi-input multi-output architecture to predict variations of the PSC output and thereby estimate the voxel-wise and instance-wise uncertainty. Experiments show that our method outperforms all baselines in both Panoptic Scene Completion and uncertainty estimation on three large-scale benchmarks.
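One common way to turn several predictions of the same voxel into an uncertainty score is the entropy of their average class distribution. The sketch below shows this generic ensemble-style computation for a single voxel; it is an assumption made for illustration and not necessarily the exact uncertainty measure used in PaSCo 29.

```python
import math

def voxel_uncertainty(predictions):
    """Average M class-probability vectors and return (mean_probs, entropy).

    predictions: list of M probability vectors for one voxel, one per
    output head (hypothetical input format).
    """
    m = len(predictions)
    n_classes = len(predictions[0])
    mean_probs = [sum(p[c] for p in predictions) / m for c in range(n_classes)]
    # Shannon entropy in nats; zero-probability classes contribute nothing.
    entropy = -sum(q * math.log(q) for q in mean_probs if q > 0.0)
    return mean_probs, entropy

# Heads that agree give low uncertainty; heads that disagree give high uncertainty.
_, low = voxel_uncertainty([[0.9, 0.1], [0.9, 0.1]])
_, high = voxel_uncertainty([[0.9, 0.1], [0.1, 0.9]])
```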

SceneRF was presented at ICCV 2023 and PaSCo is accepted at CVPR 2024 29. Both works are shared as open source.

7.2 Cross-task learning for vision algorithms

Participants: Ivan Lopes, Tuan-Hung Vu, Raoul de Charette.

In 25 we explore the ability to learn multiple dense prediction tasks jointly to train a more robust network. Specifically, we jointly address semantic and geometry-related tasks, which are known to be complementary, by leveraging attention mechanisms to enforce cross-task exchange. Extensive experiments conducted on three diverse multi-task setups show the benefit of our approach across all tasks on both indoor and outdoor datasets. This work, named DenseMTL, is open source and was published at WACV 2023.

7.3 Physics-guided learning vision

Participants: Ivan Lopes, Tan Khiem Huynh, Raoul de Charette.

A major axis of the vision group is the study of machine learning under physics guidance. We explore two major directions: 1) the use of physics priors to solve vision problems, and 2) the use of vision algorithms to discover physics. The team has produced major works on the first one in the past.

1) The use of physics knowledge for vision was extensively studied in the team, in particular in past works 14, 13, 16, 7.

Recently, we addressed the novel problem of extracting materials from an image, in the context of Ivan Lopes' PhD thesis and in a joint work with a collaborator from Oxford University. Following common practice in computer graphics, we formulate materials as Physically Based Rendering (PBR) materials to model the interaction between materials and light sources. Because such PBR materials are hard to acquire, in Material Palette 32 we propose the first method to extract PBR materials from a single real-world image. To do so, we leveraged a large-scale diffusion model and trained a multi-task network to decompose textures. In addition to open-source libraries, we created a custom, synthetically generated, high-quality dataset of 9,000 RGB textures, TexSD, which is openly shared with the public. The complete method sets a new state of the art. We also showcase the ability to edit 3D scenes with materials estimated from real photographs.

In another direction, with Weihao Xia (visiting PhD student) and in collaboration with University College London (UCL) and Cambridge, we introduced DREAM 27, a method for visual decoding using a biologically inspired architecture. In particular, we studied the ability to decode the brain activity of a patient looking at an image, thus reconstructing the image from fMRI data. This task, referred to as visual decoding, is usually addressed with the support of language. In DREAM, we reason on biological principles, designing an architecture that reverses the human visual pathways to decipher geometry, color and semantics. The resulting method outperformed the literature.

2) Another prospective direction is the use of vision algorithms to discover physics models. In particular, we seek here to estimate the symbolic models ruling light propagation. This challenging research direction was initiated with an intern, focusing first on the discovery of simple light attenuation models in fog. Physical models exist for atmospheric scattering; a commonly used one estimates the foggy image $I$ from a clear image $J$ as $I(x) = J(x)\,e^{-\beta d(x)} + L_\infty \left(1 - e^{-\beta d(x)}\right)$, where $d$, $\beta$, and $L_\infty$ are the depth map, scattering coefficient, and atmospheric light, respectively. The method used a sequence of images under foggy conditions to discover the light attenuation models in an unsupervised manner, by tracking the luminance of salient points in the video. Although the project was not finalized, the groundwork accomplished during the internship provides the foundation for a potential future publication.
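For reference, the standard atmospheric scattering model mentioned above blends each clear pixel with the atmospheric light according to a transmission that decays exponentially with depth. The sketch below applies it to a flat list of pixel intensities; the function and parameter names are assumptions chosen for illustration, and this is the forward rendering model only, not the discovery method explored during the internship.

```python
import math

def add_fog(clear, depth, beta, airlight):
    """Render fog on pixel intensities with the atmospheric scattering model.

    clear: clear-image intensities; depth: per-pixel depth (m);
    beta: scattering coefficient (1/m); airlight: atmospheric light.
    """
    foggy = []
    for intensity, d in zip(clear, depth):
        transmission = math.exp(-beta * d)  # fraction of light surviving the path
        foggy.append(intensity * transmission + airlight * (1.0 - transmission))
    return foggy

# Nearby pixels stay close to the clear image; distant ones fade to the airlight.
print(add_fog([0.2, 0.5, 0.7], [0.0, 20.0, 200.0], beta=0.05, airlight=0.9))
```

Tracking how the luminance of a salient point changes as its depth varies, as described above, amounts to observing this exponential decay along a trajectory.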

In this axis, DREAM 27 is published at WACV 2024 and Material Palette 32 is accepted at CVPR 2024. Another work not detailed here is the journal article 19, which extends a prior work and was recently accepted in T-PAMI. All works are open source.

7.4 Language-driven vision

Participants: Mohammad Fahes, Tuan-Hung Vu, Andrei Bursuc, Patrick Pérez, Raoul de Charette.

In this axis, in the context of Mohammad Fahes' PhD thesis, we address a major problem of deep-learning-based computer vision algorithms: their lack of generalization to out-of-distribution samples. Here, we build on recent advances in Vision-Language Models (VLMs) to adapt or generalize the performance of a model.

In PODA 21 we propose a setting of “prompt-driven zero-shot domain adaptation”, where the only target information is reduced to a description of the target domain in natural language (e.g. driving at night). Our research builds on multimodal vision-language space (here, CLIP), to optimize the features of a network, by steering them towards a target text embedding while preserving the features content and semantics. Such augmented features can serve any proxy tasks, and our work demonstrates strong performances in semantic segmentation, object detection and classification problems.

Rather than adapting to a specific domain, in FAMix 30 we tackle the question of generalizing a model trained on a source domain to any potential unseen domain. For instance, we were interested in training a segmentation model on synthetic driving scene images from a game engine (GTA5) and generalizing it to real-world driving scenes, which are significantly more complex to acquire. With FAMix, we introduced a simple framework for generalizing semantic segmentation networks by employing language as the source of randomization. Our recipe comprises three key ingredients: i) the preservation of the intrinsic CLIP robustness through minimal fine-tuning, ii) language-driven local style augmentation, and iii) randomization by locally mixing the source and augmented styles during training. Extensive experiments report state-of-the-art results on various generalization benchmarks.

PODA 21 is published at ICCV 2023 and FAMix 30 at CVPR 2024. An extension of the former is nearly ready for submission to a journal. All works are shared as open source.

7.5 Misbehavior Detection for Collective Perception Services: A Systematic Trust-Based Evidence Approach

Participants: Jiahao Zhang, Fawzi Nashashibi.

This work has been conducted in collaboration with external partners from IRT-SystemX Institute: Ines Ben-Jemaa and Francesca Bassi.

Collective perception services (CPS) allow vehicles to extend their perception of the environment beyond their field of view through V2X communication. To build an extended perception system, received objects are fused with the locally perceived objects. The association process is a crucial step in perceptual data fusion. We leverage the perception data association results to detect misbehaving nodes when contradictory perceived information is received. Specifically, we design and implement a misbehavior detection architecture for CPS relying on an evidential object association approach 61. The result of the association process is used to assess node trustworthiness through the subjective logic mechanism 95.
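In subjective logic, trust in a node is commonly represented as a binomial opinion (belief, disbelief, uncertainty) derived from counts of positive and negative evidence. The sketch below uses the standard evidence-to-opinion mapping with a non-informative prior weight of 2; treating each consistent or contradictory association as one unit of evidence is an assumption made for illustration, not necessarily the weighting used in the architecture described above.

```python
def opinion_from_evidence(consistent, contradictory,
                          prior_weight=2.0, base_rate=0.5):
    """Map evidence counts to a subjective-logic binomial opinion.

    consistent / contradictory: numbers of received objects that agreed or
    conflicted with local perception (hypothetical evidence definition).
    Returns (belief, disbelief, uncertainty, expected_trust).
    """
    total = consistent + contradictory + prior_weight
    belief = consistent / total
    disbelief = contradictory / total
    uncertainty = prior_weight / total  # shrinks as evidence accumulates
    expected_trust = belief + base_rate * uncertainty
    return belief, disbelief, uncertainty, expected_trust

# A node with 8 consistent and 0 contradictory associations.
print(opinion_from_evidence(8, 0))
```

Keeping uncertainty explicit lets a newly met node be distinguished from a node whose evidence is evenly split, although both have an expected trust of 0.5.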

7.6 Hierarchical Attention and Graph Neural Networks for Drift-Free Pose Estimation of a ground vehicle

Participants: Fawzi Nashashibi.

This work has been conducted in collaboration with Kathia Melbouci after her departure from ASTRA at the end of 2022.

We explored an architecture that replaces traditional geometric registration and pose graph optimization for a ground vehicle with a learned model utilizing attention mechanisms and graph neural networks. In a paper submitted to IEEE ICRA 2024, we propose a strategy to condense the data flow while preserving the essential information required for the precise estimation of rigid poses. Our results, derived from tests on the KITTI Odometry dataset, demonstrate a significant improvement in pose estimation accuracy. This improvement is especially notable for the rotational components when compared with results obtained through conventional multi-way registration via pose graph optimization. The corresponding code will be made available as soon as the paper status is determined.

7.7 Interpretable Goal-Based model for Vehicle Trajectory Prediction in Interactive Scenarios

Participants: Amina Ghoul, Itheri Yahiaoui, Fawzi Nashashibi.

The abilities to understand the social interaction behaviors between a vehicle and its surroundings while predicting its trajectory in an urban environment are critical for road safety in autonomous driving. Social interactions are hard to explain because of their uncertainty. In recent years, neural network-based methods have been widely used for trajectory prediction and have been shown to outperform hand-crafted methods. However, these methods suffer from their lack of interpretability. In order to overcome this limitation, we combine in 23 the interpretability of a discrete choice model with the high accuracy of a neural network-based model for the task of vehicle trajectory prediction in an interactive environment. We implement and evaluate our model using the INTERACTION dataset and demonstrate the effectiveness of our proposed architecture to explain its predictions without compromising the accuracy.
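The interpretability of a discrete choice model comes from its explicit utility function: each candidate option gets a utility that is linear in named features, and a softmax turns utilities into choice probabilities. The sketch below shows a plain multinomial logit; the feature layout and weights are hypothetical, and the neural-network component that 23 combines with the discrete choice model is not reproduced.

```python
import math

def choice_probabilities(features, weights):
    """Multinomial logit: P(i) = exp(U_i) / sum_j exp(U_j), U_i = w . x_i.

    features: one feature vector per candidate option (hypothetical layout,
    e.g. [distance to goal, heading change]); weights: one weight per feature.
    """
    utilities = [sum(w * x for w, x in zip(weights, f)) for f in features]
    u_max = max(utilities)  # subtract the maximum for numerical stability
    exps = [math.exp(u - u_max) for u in utilities]
    total = sum(exps)
    return [e / total for e in exps]

# Three candidate goals described by two features each.
probs = choice_probabilities([[1.0, 0.2], [2.0, 0.1], [0.5, 0.9]], [-1.0, -0.5])
```

Because each weight multiplies a named feature, its sign and magnitude can be read directly as the influence of that feature on the predicted choice.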

7.8 Interpretable Long Term Waypoint-Based Trajectory Prediction Model

Participants: Amina Ghoul, Itheri Yahiaoui, Fawzi Nashashibi.

Predicting the future trajectories of dynamic agents in complex environments is crucial for a variety of applications, including autonomous driving, robotics, and human-computer interaction. It is a challenging task, as the behavior of the agent is unknown and intrinsically multimodal. Our key insight is that agents' behaviors are influenced not only by their past trajectories and their interaction with their immediate environment but also, to a large extent, by their long-term waypoint (LTW). In 22, we study the impact of adding a long-term goal on the performance of a trajectory prediction framework. We present an interpretable long-term waypoint-driven prediction framework (WayDCM). WayDCM first predicts an agent's intermediate goal (IG) by encoding its interactions with the environment as well as its LTW, using a combination of a Discrete Choice Model (DCM) and a Neural Network model (NN). Then, our model predicts the corresponding trajectories. This is in contrast to previous work, which does not consider the ultimate intent of the agent when predicting its trajectory. We evaluate our approach and show its effectiveness on the Waymo Open dataset.

7.9 Motion planning and prediction

Participants: Nelson De Moura, Augustin Gervreau.

The study of different longitudinal behaviors for vehicles was completely restructured during 2023, resulting in a two-step method based on k-means clustering of the dynamic time warping (DTW) dissimilarity matrix, which was published in 26. However, for the longitudinal clustering, another method that considers the dynamic characteristics of each vehicle had to be created in order to extract the different behaviors. This work will be published in 2024.
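The first ingredient of such a pipeline, the DTW dissimilarity matrix, can be sketched in a few lines. The code below computes classic dynamic time warping between one-dimensional profiles (for example speed over time) and assembles the pairwise matrix; the absolute-difference local cost and the function names are assumptions, and the subsequent k-means step and the details of 26 are not reproduced.

```python
def dtw_distance(a, b):
    """Dynamic time warping distance between two 1-D sequences."""
    inf = float("inf")
    n, m = len(a), len(b)
    # cost[i][j]: best cumulative cost aligning a[:i] with b[:j].
    cost = [[inf] * (m + 1) for _ in range(n + 1)]
    cost[0][0] = 0.0
    for i in range(1, n + 1):
        for j in range(1, m + 1):
            local = abs(a[i - 1] - b[j - 1])
            cost[i][j] = local + min(cost[i - 1][j],      # repeat b[j-1]
                                     cost[i][j - 1],      # repeat a[i-1]
                                     cost[i - 1][j - 1])  # advance both
    return cost[n][m]

def dissimilarity_matrix(series):
    """Symmetric matrix of pairwise DTW distances."""
    n = len(series)
    matrix = [[0.0] * n for _ in range(n)]
    for i in range(n):
        for j in range(i + 1, n):
            matrix[i][j] = matrix[j][i] = dtw_distance(series[i], series[j])
    return matrix

profiles = [[0, 1, 2], [0, 1, 1, 2], [5, 5, 5]]
matrix = dissimilarity_matrix(profiles)
```

Each row of the matrix describes one trajectory by its distances to all the others, so the rows can serve as feature vectors for a clustering algorithm such as k-means.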

For pedestrians and cyclists, the main difficulty is to filter out the outlier trajectories and those that are not representative of an overall behavior. This has been done with hierarchical clustering that considers the shape of each trajectory as well as its initial and final points. This work will also be published in 2024. From these results, the first simulation agent models have started to be trained.

7.10 Control and Human Factors

Participants: Nelson De Moura, Tiago Rocha.

Following prior works by team members 123, 60, we studied a risk prediction system to evaluate the trade-off between ethical aspects and fuel-efficiency improvements in platooning systems. Leveraging the harm concept from 123, an MDP approach is used to dynamically adjust controllers during platooning operation. The results were published in 24.

7.11 Ethical decision-making for automated vehicles

Participants: Nelson De Moura.

The ethics of automated vehicles (AV) has received a great amount of attention in recent years, specifically with regard to their decisional policies in accident situations in which human harm is a likely consequence. After a discussion about the pertinence and cogency of the term “artificial moral agent” to describe AVs that would make these sorts of decisions, and starting from the assumption that human harm is unavoidable in some situations, a strategy for AV decision making is proposed. It uses only pre-defined parameters to characterize the risk of possible accidents, and integrates the Ethical Valence Theory, which paints AV decision-making as a type of claim mitigation, into multiple possible decision rules to determine the most suitable action given the specific environment and decision context. The goal of this approach is not to define how moral theory requires vehicles to behave, but rather to provide a computational approach that is flexible enough to accommodate a number of human “moral positions” concerning what morality demands and what road users may expect, offering an evaluation tool for the social acceptability of an automated vehicle's decision making. This work was published in 28.

7.12 Landmark localization for Autonomous Vehicles

Participants: Noël Nadal, Fawzi Nashashibi, Jean-Marc Lasgouttes.

We are interested in the case where we have a map in an urban environment, with landmarks whose position is known, up to some Gaussian error. A vehicle, equipped with sensors to detect such landmarks, must determine its position based on its knowledge of the map and its observations. The performance of the proposed algorithm enables it to be used in real time at a speed consistent with the hypothesis of an urban environment (30 to 50 km/h), and the accuracy of the localization performed (error of less than 10 cm) is good enough to enable it to be used by an autonomous vehicle.

7.13 Shock Wave Estimation in Intelligent Driver Models

Participants: Guy Fayolle, Jean-Marc Lasgouttes.

We investigate the transfer function emanating from the linearization of a car-following model for human drivers, when taking into account their reaction time, which is known to be a cause of the stop-and-go traffic phenomenon. This leads to a non-rational transfer function, implying nontrivial stability conditions, which are given explicitly. They are in particular satisfied whenever string stability holds. It is also shown how this reaction time can introduce a regime of partial string stability, where the transfer function modulus remains smaller than 1 up to some critical frequency. Conditions are explored in the parameter space, discriminating between 4 different regimes (instability, string stability, partial string stability, string instability).
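To illustrate how a reaction time produces a non-rational transfer function, the sketch below uses a classical linear follow-the-leader model with delay, in which each driver accelerates proportionally to the delayed speed difference with the leader. This is a deliberately simpler model than the one studied here, chosen as an assumption for the example. Its transfer function is H(s) = α e^{-sτ} / (s + α e^{-sτ}), where the sensitivity α and the reaction time τ are illustrative parameters. For this model, |H(iω)|² = α² / (α² + ω² - 2αω sin(ωτ)), so the modulus stays below 1 at every positive frequency exactly when ατ ≤ 1/2.

```python
import cmath


def transfer_modulus(omega, alpha, tau):
    """|H(i*omega)| for H(s) = alpha*exp(-s*tau) / (s + alpha*exp(-s*tau))."""
    s = 1j * omega
    delayed_gain = alpha * cmath.exp(-s * tau)
    return abs(delayed_gain / (s + delayed_gain))


def max_modulus(alpha, tau, omega_max=10.0, steps=2000):
    """Largest modulus on a grid of strictly positive frequencies."""
    return max(
        transfer_modulus(omega_max * k / steps, alpha, tau)
        for k in range(1, steps + 1)
    )


# alpha * tau = 0.4: perturbations are attenuated along the string.
attenuated = max_modulus(alpha=0.4, tau=1.0)
# alpha * tau = 0.8: some frequencies are amplified from car to car.
amplified = max_modulus(alpha=0.8, tau=1.0)

print(attenuated < 1.0, amplified > 1.0)
```

In this toy model the amplification, when it occurs, starts at low frequencies, so it does not reproduce the partial string stability regime described above; it only shows how the delay term enters the modulus condition.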

Starting from the study 64, we aim first to describe the shock waves that may form in the string instability regime. To this end, we are currently analyzing the transfer function of a sufficiently large string of vehicles. This preliminary step requires a sharp analysis, for which the usual saddle point methods do not seem to work.

7.14 Reflected Brownian motion in a non-convex cone

Participants: Guy Fayolle.

G. Fayolle, in collaboration with S. Franceschi (LMO, Paris-Saclay University) and K. Raschel (CNRS, Tours University), studies the stationary reflected Brownian motion in a non-convex wedge, which, compared to its convex analogue, has been much more rarely analyzed in the probabilistic literature. Two approaches are proposed for the three-quarter plane. In 62, it was proved that the stationary distribution could be found by solving a two-dimensional vector boundary value problem (BVP) on a single curve (a hyperbola) for the associated Laplace transforms. The reduction to this kind of vector BVP seems to be quite new in the literature. By way of comparison, one single boundary condition is sufficient in the convex case. When the parameters of the model (drift, reflection angles and covariance matrix) are symmetric with respect to the bisector line of the cone, the model is reducible to a standard reflected Brownian motion in a convex cone. Finally, we construct a one-parameter family of distributions, which surprisingly provides, for any wedge (convex or not), one particular example of stationary distribution of a reflected Brownian motion.

In 17, the main result is that the stationary distribution can in fact be obtained by solving a boundary value problem of the same kind as the one encountered in the quarter plane, up to various dualities and symmetries. The idea is to start from Fourier (and not Laplace) transforms, which leads to a functional equation for a single function of two complex variables.

7.15 A Markovian Analysis of IEEE 802.11 Broadcast Transmission Networks with Buffering and back-off stages

Participants: Guy Fayolle.

Following their previous analysis 65, G. Fayolle and P. Mühlethaler analyze the so-called back-off technique of the IEEE 802.11 protocol in broadcast mode with waiting queues. In contrast to existing models, packets arriving when a station (or node) is in back-off state are not discarded, but are stored in a buffer of infinite capacity. As in previous studies, the key point of our analysis hinges on the assumption that the time on the channel is viewed as a random succession of transmission slots (whose duration corresponds to the length of a packet) and mini-slots during which the back-off of the station is decremented. These events occur independently, with given probabilities. The state of a node is represented by a three-dimensional discrete-time Markov chain, formed by the back-off counter, the number of packets at the station, and the back-off stage. The stationary behaviour can be determined explicitly. In particular, stability (ergodicity) conditions are obtained and interpreted in terms of maximum throughput 31.

7.16 Blockchain adapted to IoT via green mining and variable Proof of Work

Participants: Guy Fayolle.

Blockchain applications continue to grow in popularity, but their energy costs are clearly becoming unsustainable. In most cases, the primary cost comes from the amount of energy required for proof-of-work (PoW). G. Fayolle and Ph. Jacquet study the application of blockchains to the IoT, where most devices are underpowered and would not support the energy cost of proof of work. PoW was originally intended for two main uses: block moderation and protecting the blockchain from tampering. For the IoT, we suggest replacing the expensive moderation of PoW with the proposed energy-efficient green mining. The blockchain will be protected by a variable-difficulty PoW. One of the results is the proof that the average mining time in variable PoW actually depends only on the average difficulty. This crucial property will allow low-difficulty PoWs to be reserved for devices with low computational capacity, while higher difficulties will be reserved for devices with the highest computational power. The consequence is to give equal opportunity to objects with low computational power compared to objects with high computational power.
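For readers unfamiliar with proof of work, the sketch below shows a generic hash-based PoW in which the difficulty is a parameter of each mining call. It is a textbook-style illustration, not the green mining scheme nor the variable PoW analyzed in this work. The payloads, the 8-byte nonce encoding and the definition of difficulty as a number of leading zero bits of a SHA-256 digest are assumptions made for the example.

```python
import hashlib


def leading_zero_bits(digest):
    """Number of leading zero bits in a bytes digest."""
    bits = 0
    for byte in digest:
        if byte == 0:
            bits += 8
            continue
        bits += 8 - byte.bit_length()
        break
    return bits


def mine(payload, difficulty_bits):
    """Search for a nonce whose SHA-256 digest has enough leading zero bits.

    Returns (nonce, attempts). If the hash behaves like a uniform random
    function, about 2 ** difficulty_bits attempts are needed on average.
    """
    nonce = 0
    while True:
        digest = hashlib.sha256(payload + nonce.to_bytes(8, "big")).digest()
        if leading_zero_bits(digest) >= difficulty_bits:
            return nonce, nonce + 1
        nonce += 1


# A constrained device could be given a low difficulty, a powerful one a
# higher difficulty (hypothetical payloads and values).
nonce_low, attempts_low = mine(b"sensor reading", 4)
nonce_high, attempts_high = mine(b"gateway block", 12)
print(attempts_low, attempts_high)
```

Each additional bit of difficulty doubles the expected number of hash evaluations, which is why the choice of difficulty per device directly controls the energy spent on mining.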

7.17 Random walks in orthants and lattice path combinatorics

Participants: Guy Fayolle.

In the revised version of the second edition of the book 4 (see also the corrections), original methods were proposed to determine the invariant measure of random walks in the quarter plane with small jumps (size 1), the general solution being obtained via reduction to boundary value problems. Among other things, an important quantity, the so-called group of the walk, makes it possible to deduce theoretical features about the nature of the solutions. In particular, when the order of the group is finite and the underlying algebraic curve is of genus 0 or 1, necessary and sufficient conditions have been given for the solution to be rational, algebraic or D-finite (i.e. satisfying a linear differential equation). In this framework, a number of difficult open problems related to lattice path combinatorics are currently being explored by Guy Fayolle, in collaboration with A. Bostan and F. Chyzak, both from theoretical and computer algebra points of view: concrete computation of the criteria, utilization of differential Galois theory, genus greater than 1 (i.e. when some jumps are of size greater than 1), etc. A recent topic (mentioned in 2019) deals with the connections between simple product-form stochastic networks (so-called Jackson networks) and explicit solutions of functional equations for counting lattice walks. Some partial extensions of this earlier work are still under development.
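As a concrete instance of the counting problems mentioned above, the sketch below enumerates walks with small steps that start at the origin and stay in the quarter plane, using a direct dynamic program over positions. The default step set (the four unit steps) is an assumption chosen for the example; the analytic methods discussed in this section aim at generating functions, not at brute-force enumeration.

```python
def count_quadrant_walks(n, steps=((1, 0), (-1, 0), (0, 1), (0, -1))):
    """Number of n-step walks from the origin staying in x >= 0, y >= 0."""
    counts = {(0, 0): 1}
    for _ in range(n):
        following = {}
        for (x, y), ways in counts.items():
            for dx, dy in steps:
                nx, ny = x + dx, y + dy
                if nx >= 0 and ny >= 0:
                    following[(nx, ny)] = following.get((nx, ny), 0) + ways
        counts = following
    return sum(counts.values())


first_terms = [count_quadrant_walks(n) for n in range(5)]
print(first_terms)  # [1, 2, 6, 18, 60]
```

Passing another tuple of steps, for instance with a jump of size 2, gives the counts for other models, which can be useful for checking conjectured formulas on small cases.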

8 Bilateral contracts and grants with industry

8.1 Bilateral contracts with industry

Participants: Fawzi Nashashibi, Raoul de Charette, Jean-Marc Lasgouttes, Benazouz Bradai, Paulo Resende, Zayed Alsayed, Fernando Garrido, Axel Jeanne, Nelson de Moura Martins Gomes, Noël Nadal, Mohammad Fahes, Karim Essalmi, Tetiana Martyniuk.

Valeo Group: As a result of a long-standing collaboration, the strategic partnership between Inria and Valeo led to the establishment of a joint project team in 2022. Since then, several bilateral contracts have been signed to conduct joint work, some of which is funded by Valeo.

- Several CIFRE theses were developed throughout 2023 between Valeo and Inria: Mr. Karim ESSALMI joined ASTRA in February 2023 as a new PhD student working on maneuver decision and motion planning. Mrs. Tetiana MARTYNIUK joined the team in June 2023 on a pre-thesis contract, with a CIFRE that will start in 2024, and is working on conditioned generation of egocentric 3D driving scenes within the Astra vision team.

- Other PhD students and post-docs are jointly funded by Valeo and Inria, while Mr. Nelson de Moura is hired as a 2-year post-doc thanks to the national Plan de relance programme.

- Valeo is currently a major financing partner of the “GAT” international Chaire/JointLab in which Inria is a partner. The other partners are: UC Berkeley, Shanghai Jiao-Tong University, EPFL, IFSTTAR, Stellantis and SAFRAN.

- Technology transfer is also a major collaboration topic between ASTRA and Valeo as well as the development of a road automated prototype.

- Finally, Inria and Valeo are partners of the French project SAMBA (Sécurité Active et MoBilités Autonomes) including SAFRAN Group, Inria Paris, TwinswHeel, Soben, Stanley Robotics and EXPLEO.

The work with Valeo Group is articulated around the collaboration of two Valeo teams:

Valeo DAR works on research and development for Advanced Driving Assistant Systems (ADAS). Starting from July 2022, Zayed Alsayed, Axel Jeanne, Fernando Garrido, and Paulo Resende, employees seconded by Valeo, joined the joint project team to work on the following scientific areas: localization and mapping (Sec. 3.2), decision making, motion planning & vehicle control (Sec. 3.3), and large-scale modeling and deployment of mobility systems in smart cities (Sec. 3.4).

Valeo.AI is the research laboratory of Valeo Group, and follows an academic research line. Valeo.AI collaborates with the vision group (Sec. 3.1). Starting from July 2022, Alexandre Boulch, Andrei Bursuc, Gilles Puy, Patrick Pérez, Renaud Marlet and Tuan-Hung Vu joined Astra as part-time researchers, with frequent joint group readings, workshops and seminars. Following his departure from Valeo, Patrick Pérez also left Astra in Dec. 2023. In 2023, the collaboration led to 3 open source realizations, 3 top-tier publications and the co-supervision of 1 internship and 2 PhDs.

9 Partnerships and cooperations

9.1 International initiatives

Palestine Polytechnic University: This is a newly established collaboration between the Intelligent Systems Research Centre of PPU and the ASTRA team. The academic collaborations include:

- Bilateral academic visits

- Hosting and recruiting doctoral and post-doctoral PPU students

- Participation in joint National/Horizon collaborative projects

- Involvement in teaching & education (lectures, training, mentorship, keynotes...)

9.2 International research visitors

9.2.1 Visits of international scientists

Participants: Fawzi Nashashibi.

International visits to the team

- Professor Karim Tahboub

- Palestine Polytechnic University (PPU), Intelligent Systems Research Center (ISRC)

- Hebron, Palestine

- Dates: July-August 2023

- Context: He was a recipient of a Short-Term Research Mobility Grant. His two-month visit was the opportunity to collaborate on the research topic of Cognitive Human-Robot Interaction based on Intention Recognition, focusing on human-autonomous vehicle interaction in crowded urban environments.