2023 Activity report, Project-Team MANAO

RNSR: 201221025F

- Research center: Inria Centre at the University of Bordeaux

- In partnership with: Université de Bordeaux, CNRS

- Team name: Melting the frontiers between Light, Shape and Matter

- In collaboration with: Laboratoire Bordelais de Recherche en Informatique (LaBRI)

- Domain: Perception, Cognition and Interaction

- Theme: Interaction and visualization

Keywords

Computer Science and Digital Science

- A5. Interaction, multimedia and robotics

- A5.1.1. Engineering of interactive systems

- A5.1.6. Tangible interfaces

- A5.3.5. Computational photography

- A5.4. Computer vision

- A5.4.4. 3D and spatio-temporal reconstruction

- A5.5. Computer graphics

- A5.5.1. Geometrical modeling

- A5.5.2. Rendering

- A5.5.3. Computational photography

- A5.5.4. Animation

- A5.6. Virtual reality, augmented reality

- A6.2.3. Probabilistic methods

- A6.2.5. Numerical Linear Algebra

- A6.2.6. Optimization

- A6.2.8. Computational geometry and meshes

Other Research Topics and Application Domains

- B3.1. Sustainable development

- B3.1.1. Resource management

- B3.6. Ecology

- B5. Industry of the future

- B5.1. Factory of the future

- B9. Society and Knowledge

- B9.2. Art

- B9.2.2. Cinema, Television

- B9.2.3. Video games

- B9.6. Humanities

- B9.6.1. Psychology

- B9.6.6. Archeology, History

- B9.6.10. Digital humanities

1 Team members, visitors, external collaborators

Research Scientists

- Pascal Barla [Team leader, INRIA, Researcher, HDR]

- Gael Guennebaud [INRIA, Researcher]

- Romain Pacanowski [INRIA, Researcher, HDR]

Faculty Members

- Pierre Benard [UNIV BORDEAUX, Associate Professor]

- Patrick Reuter [UNIV BORDEAUX, Associate Professor, HDR]

Post-Doctoral Fellows

- Jean Basset [INRIA, Post-Doctoral Fellow, from Feb 2023]

- Morgane Gerardin [INRIA, Post-Doctoral Fellow, until Jun 2023]

- Pierre Mezieres [INRIA, Post-Doctoral Fellow, from Jul 2023]

PhD Students

- Corentin Cou [CNRS]

- Melvin Even [INRIA]

- Gary Fourneau [INRIA]

- Pierre La Rocca [UNIV BORDEAUX]

- Simon Lucas [UNIV BORDEAUX]

- Lea Marquet [INRIA, from Nov 2023]

- Panagiotis Tsiapkolis [UBISOFT, CIFRE]

Technical Staff

- Marjorie Paillet [INRIA, Engineer, until Oct 2023]

Interns and Apprentices

- Pablo Leboulanger [UNIV BORDEAUX, Intern, from Mar 2023 until Jul 2023]

- Bastien Morel [INRIA, Intern, from Mar 2023 until Aug 2023]

- Coralie Rigaut [INRIA, Intern, from Feb 2023 until Jul 2023]

Administrative Assistant

- Anne-Laure Gautier [INRIA]

External Collaborators

- Aurelie Bugeau [UNIV BORDEAUX, from Sep 2023, HDR]

- Morgane Gerardin [UNIV TOULOUSE III, from Jul 2023]

- Xavier Granier [UNIV PARIS SACLAY, HDR]

2 Overall objectives

2.1 General Introduction

Computer-generated images are ubiquitous in our everyday life. Such images are the result of a process that has seldom changed over the years: the optical phenomena due to the propagation of light in a 3D environment are simulated taking into account how light is scattered 61, 38 according to shape and material characteristics of objects. The intersection of optics (for the underlying laws of physics) and computer science (for its modeling and computational efficiency aspects) provides a unique opportunity to tighten the links between these domains in order first to improve the image generation process (computer graphics, optics and virtual reality) and then to develop new acquisition and display technologies (optics, mixed reality and machine vision).

Most of the time, light, shape, and matter properties are studied, acquired, and modeled separately, relying on realistic or stylized rendering processes to combine them in order to create final pixel colors. Such modularity, inherited from classical physics, has the practical advantage of allowing the same models to be reused in various contexts. However, independent developments lead to unoptimized pipelines and difficult-to-control solutions since it is often not clear which part of the expected result is caused by which property. Indeed, the most efficient solutions are most often the ones that blur the frontiers between light, shape, and matter to lead to specialized and optimized pipelines, as in real-time applications (like Bidirectional Texture Functions 71 and Light-Field rendering 36). Keeping these three properties separated may lead to other problems. For instance:

- Measured materials are too detailed to be usable in rendering systems and data reduction techniques have to be developed 70, 72, leading to an inefficient transfer between real and digital worlds;

- It is currently extremely challenging (if not impossible) to directly control or manipulate the interactions between light, shape, and matter. Accurate lighting processes may create solutions that do not fulfill users' expectations;

- Artists can spend hours or days modeling highly complex surfaces whose details will not be visible 92 due to inappropriate use of certain light sources or reflection properties.

Most traditional applications target human observers. Depending on how deeply we take into account the specificity of each user, the requirements on representations and algorithms may differ.

| Auto-stereoscopy display | HDR display | Printing both geometry and material |
| --- | --- | --- |
| ©Nintendo | ©Dolby Digital | 53 |

Examples of new display technologies

With the evolution of measurement and display technologies that go beyond conventional images (e.g., as illustrated in Figure 1, High-Dynamic Range Imaging 82, stereo displays or new display technologies 57, and physical fabrication 28, 45, 53) the frontiers between real and virtual worlds are vanishing 41. In this context, a sensor combined with computational capabilities may also be considered as another kind of observer. Creating separate models for light, shape, and matter for such an extended range of applications and observers is often inefficient and sometimes provides unexpected results. Pertinent solutions must be able to take into account properties of the observer (human or machine) and application goals.

2.2 Methodology

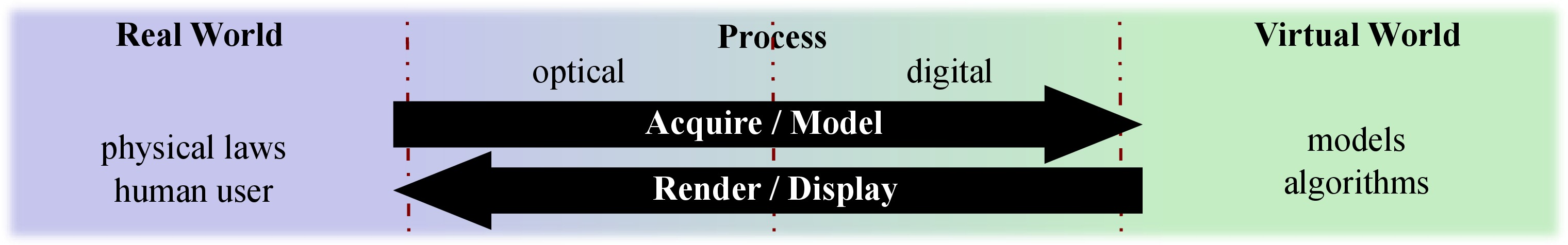

Interactions/Transfers between real and virtual worlds.

2.2.1 Using a global approach

The main goal of the MANAO project is to study phenomena resulting from the interactions between the three components that describe light propagation and scattering in a 3D environment: light, shape, and matter. Improving knowledge about these phenomena facilitates the adaptation of the developed digital, numerical, and analytic models to specific contexts. This leads to the development of new analysis tools, new representations, and new instruments for acquisition, visualization, and display.

To reach this goal, we have to first increase our understanding of the different phenomena resulting from the interactions between light, shape, and matter. For this purpose, we consider how they are captured or perceived by the final observer, taking into account the relative influence of each of the three components. Examples include but are not limited to:

- The manipulation of light to reveal reflective 33 or geometric properties 99, as mastered by professional photographers;

- The modification of material characteristics or lighting conditions 98 to better understand shape features, for instance to decipher archaeological artifacts;

- The large influence of shape on the captured variation of shading 80 and thus on the perception of material properties 95.

Based on the acquired knowledge of the influence of each of the components, we aim at developing new models that combine two or three of these components. Examples include the modeling of Bidirectional Texture Functions (BTFs) 44, which encode in a single representation the effects of parallax, multiple light reflections, and shadows without having to store the reflective properties and the meso-scale geometric details separately, and Light-Fields, which are used to render 3D scenes by storing only the result of the interactions between light, shape, and matter, both in complex real environments and in simulated ones.

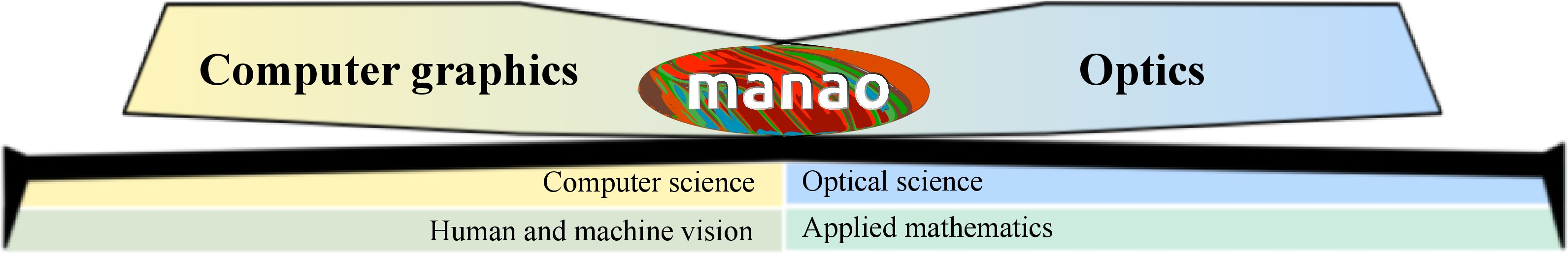

One of the strengths of MANAO is that we are inter-connecting computer graphics and optics (Figure 2). On one side, the laws of physics are required to create images but may be bent to either increase performance or user's control: this is one of the key advantages of the computer graphics approach. It is worth noting that what is not possible in the real world may be possible in a digital world. On the other side, the introduced approximations may help to better comprehend the physical interactions of light, shape, and matter.

2.2.2 Taking observers into account

The MANAO project specifically aims at considering information transfer, first from the real world to the virtual world (acquisition and creation), then from computers to observers (visualization and display). For this purpose, we use a broader definition of what an observer is: it may be a human user or a physical sensor equipped with processing capabilities. Sensors and their characteristics must be taken into account in the same way as we take into account the human visual system in computer graphics. Similarly, computational capabilities may be compared to cognitive capabilities of human users. Some characteristics are common to all observers, such as the scale of observed phenomena. Others are more specific to a set of observers. For this purpose, we have identified two classes of applications.

- Physical systems: Given our partnership, which leads to close relationships with optics, one novelty of our approach is to extend the range of possible observers to physical sensors in order to work on domains such as simulation, mixed reality, and testing. Capturing, processing, and visualizing complex data is now more and more accessible to everyone, leading to the possible convergence of real and virtual worlds through visual signals. This signal is traditionally captured by cameras. It is now possible to augment them by projecting (e.g., the infrared laser of Microsoft Kinect) and capturing (e.g., GPS localization) other signals that are outside the visible range. This supplemental information replaces values traditionally extracted from standard images and thus lowers the requirements in computational power 68. Since the captured images are the result of the interactions between light, shape, and matter, the approaches and the improved knowledge from MANAO help in designing interactive acquisition and rendering technologies that are required to merge the real and the virtual world. With the resulting unified systems (optical and digital), transfer of pertinent information is favored and inefficient conversion is likely avoided, leading to new uses in interactive computer graphics applications, like augmented reality 32, 41 and computational photography 81.

- Interactive visualization: This direction includes domains such as scientific illustration and visualization, artistic or plausible rendering. In all these cases, the observer, a human, takes part in the process, justifying once more our focus on real-time methods. When targeting average users, characteristics as well as limitations of the human visual system should be taken into account: in particular, it is known that some configurations of light, shape, and matter have masking and facilitation effects on visual perception 92. For specialized applications, the expertise of the final user and the constraints for 3D user interfaces lead to new uses and dedicated solutions for models and algorithms.

3 Research program

3.1 Related Scientific Domains

Related scientific domains of the MANAO project

The MANAO project aims at studying, acquiring, modeling, and rendering the interactions between the three components that are light, shape, and matter from the viewpoint of an observer. As detailed at greater length in the next section, such work will be done using the following approach: first, we will tend to consider that these three components do not have strict frontiers when considering their impacts on the final observers; then, we will work not only in computer graphics, but also at the intersection of computer graphics and optics, exploring the mutual benefits that the two domains may provide. It is thus intrinsically a transdisciplinary project (as illustrated in Figure 3) and we expect results in both domains.

Thus, the proposed team-project aims at establishing a close collaboration between computer graphics (e.g., 3D modeling, geometry processing, shading techniques, vector graphics, and GPU programming) and optics (e.g., design of optical instruments, and theories of light propagation). The following examples illustrate the strengths of such a partnership. First, in addition to the simpler radiative transfer equations 46 commonly used in computer graphics, research in the latter will be based on state-of-the-art understanding of light propagation and scattering in real environments. Furthermore, research will rely on appropriate instrumentation expertise for the measurement 58, 59 and display 57 of the different phenomena. Reciprocally, optics research may benefit from the expertise of computer graphics scientists on efficient processing to investigate interactive simulation, visualization, and design. Furthermore, new systems may be developed by unifying optical and digital processing capabilities. Currently, the scientific background of most of the team members is related to computer graphics and computer vision. A large part of their work has been focused on simulating and analyzing optical phenomena as well as on acquiring and visualizing them. Combined with the close collaboration with the optics laboratory LP2N and with the students from the “Institut d'Optique”, this background ensures that we can expect the following results from the project: the construction of a common vocabulary for tightening the collaboration between the two scientific domains and creating new research topics. By creating this context, we expect to attract (and even train) more trans-disciplinary researchers.

At the boundaries of the MANAO project lie issues in human and machine vision. We have to deal with the former whenever a human observer is taken into account. On one side, computational models of human vision are likely to guide the design of our algorithms. On the other side, the study of interactions between light, shape, and matter may shed some light on the understanding of visual perception. The same kind of connection is expected with machine vision. On the one hand, traditional computational methods for acquisition (such as photogrammetry) are going to be part of our toolbox. On the other hand, new display technologies (such as the ones used for augmented reality) are likely to benefit from our integrated approach and systems. In the MANAO project we are mostly users of results from human vision. When required, some experimentation might be done in collaboration with experts from this domain, as with the European PRISM project. For machine vision, given the tight collaboration between optical and digital systems, research will be carried out inside the MANAO project.

Analysis and modeling rely on tools from applied mathematics such as differential and projective geometry, multi-scale models, frequency analysis 48 or differential analysis 80, linear and non-linear approximation techniques, stochastic and deterministic integrations, and linear algebra. We not only rely on classical tools, but also investigate and adapt recent techniques (e.g., improvements in approximation techniques), focusing on their ability to run on modern hardware: the development of our own tools (such as Eigen) is essential to control their performance and their ability to be integrated into real-time solutions or into new instruments.

3.2 Research axes

The MANAO project is organized around four research axes that cover the large range of expertise of its members and associated members. We briefly introduce these four axes in this section. More details, together with their inter-influences as illustrated in Figure 2, are given in the following sections.

Axis 1 is the theoretical foundation of the project. Its main goal is to increase the understanding of light, shape, and matter interactions by combining expertise from different domains: optics and human/machine vision for the analysis and computer graphics for the simulation aspect. The goal of our analyses is to identify the different layers/phenomena that compose the observed signal. In a second step, the development of physical simulations and numerical models of these identified phenomena is a way to validate the pertinence of the proposed decompositions.

In Axis 2, the final observers are mainly physical sensors. Our goal is thus the development of new acquisition and display technologies that combine optical and digital processes to achieve fast transfers between real and digital worlds and thereby increase the convergence of these two worlds.

Axes 3 and 4 focus on two aspects of computer graphics: rendering, visualization and illustration in Axis 3, and editing and modeling (content creation) in Axis 4. In these two axes, the final observers are mainly human users, either generic users or expert ones (e.g., archaeologists 84, computer graphics artists).

3.3 Axis 1: Analysis and Simulation

Challenge: Definition and understanding of phenomena resulting from interactions between light, shape, and matter as seen from an observer point of view.

Results: Theoretical tools and numerical models for analyzing and simulating the observed optical phenomena.

To reach the goals of the MANAO project, we need to increase our understanding of how light, shape, and matter act together in synergy and how the resulting signal is finally observed. For this purpose, we need to identify the different phenomena that may be captured by the targeted observers. This is the main objective of this research axis, and it is achieved by using three approaches: the simulation of interactions between light, shape, and matter, their analysis, and the development of new numerical models. The resulting improved knowledge is a foundation for the research done in the three other axes, and the simulation tools together with the numerical models serve the development of the joint optical/digital systems in Axis 2 and their validation.

One of the main and earliest goals in computer graphics is to faithfully reproduce the real world, focusing mainly on light transport. Compared to researchers in physics, researchers in computer graphics rely on a subset of physical laws (mostly radiative transfer and geometric optics), and their main concern is to use the limited available computational resources efficiently while developing algorithms that are as fast as possible. For this purpose, a large set of theoretical as well as computational tools has been introduced to take maximum benefit of hardware specificities. These tools are often dedicated to specific phenomena (e.g., direct or indirect lighting, color bleeding, shadows, caustics). An efficiency-driven approach needs such a classification of light paths 54 in order to develop tailored strategies 96. For instance, starting from simple direct lighting, more complex phenomena have been progressively introduced: first diffuse indirect illumination 52, 88, then more generic inter-reflections 61, 46 and volumetric scattering 85, 43. Thanks to this search for efficiency and this classification, researchers in computer graphics have developed a now recognized expertise in the fast simulation of light propagation. Based on finite elements (radiosity techniques) or on unbiased Monte Carlo integration schemes (ray-tracing, particle-tracing, ...), the resulting algorithms and their combination are now sufficiently accurate to be used in turn in physical simulations. The MANAO project will continue the search for efficient and accurate simulation techniques, extending it from computer graphics to optics. Thanks to the close collaboration with scientific researchers from optics, new phenomena beyond radiative transfer and geometric optics will be explored.
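As a minimal illustration of the unbiased Monte Carlo integration schemes mentioned above, the following sketch estimates the irradiance received by a surface point under constant incoming radiance. The function name `estimate_irradiance`, the uniform hemisphere sampling strategy, and the sample counts are illustrative assumptions, not the team's implementation. For a constant radiance L the exact irradiance is πL, which gives a simple reference value to compare against.

```python
import math
import random


def estimate_irradiance(radiance, n_samples=100_000, seed=0):
    """Monte Carlo estimate of E = integral over the hemisphere of L(w) * cos(theta) dw.

    `radiance` is a hypothetical callable taking (cos_theta, phi) and returning
    the incoming radiance from that direction. Directions are drawn uniformly
    over the hemisphere, so the sampling density is pdf = 1 / (2 * pi).
    """
    rng = random.Random(seed)
    pdf = 1.0 / (2.0 * math.pi)
    total = 0.0
    for _ in range(n_samples):
        # With uniform hemisphere sampling, cos(theta) is uniform in [0, 1).
        cos_theta = rng.random()
        phi = 2.0 * math.pi * rng.random()
        # Unbiased estimator: integrand divided by the sampling density.
        total += radiance(cos_theta, phi) * cos_theta / pdf
    return total / n_samples


# Constant unit radiance from every direction (assumed test case).
estimate = estimate_irradiance(lambda cos_theta, phi: 1.0)
```

Importance sampling proportional to the cosine term would reduce the variance further; uniform sampling is used here only to keep the estimator easy to read.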

The search for algorithmic efficiency and accuracy has to be done in parallel with numerical models. The goal of visual fidelity (generalized to accuracy from an observer point of view in the project) combined with the goal of efficiency leads to the development of alternative representations. For instance, common classical finite-element techniques compute only basis coefficients for each discretization element: the required discretization density would be too large and too computationally expensive to obtain detailed spatial variations and thus visual fidelity. Examples include textures, which decorrelate surface details from surface geometry, and high-order wavelets for a multi-scale representation of lighting 42. The numerical complexity explodes when considering directional properties of light transport such as radiance (watt per square meter per steradian, W·m⁻²·sr⁻¹), reducing the possibility to simulate or accurately represent some optical phenomena. For instance, Haar wavelets have been extended to the spherical domain 87 but are difficult to extend to non-piecewise-constant data 90. More recently, researchers prefer the use of Spherical Radial Basis Functions 93 or Spherical Harmonics 79. For more complex data, such as reflective properties (e.g., BRDF 73, 62 - 4D), ray-space (e.g., Light-Field 69 - 4D), and spatially varying reflective properties (6D - 83), new models and representations are still being investigated, such as rational functions 76 or dedicated models 30 and parameterizations 86, 91. For each (newly) defined phenomenon, we thus explore the space of possible numerical representations to determine the most suited one for a given application, as we have done for BRDF 76.
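To make the idea of a compact directional representation concrete, the sketch below projects a toy lighting function onto the first two bands of real Spherical Harmonics. The names `real_sh_basis`, `project_to_sh`, and `toy_lighting`, the restriction to four coefficients, and the Monte Carlo projection are assumptions chosen for illustration; they do not describe the representations studied in the cited work.

```python
import numpy as np


def real_sh_basis(d):
    """Real spherical harmonics of bands 0 and 1 at unit directions d, shape (N, 3)."""
    x, y, z = d[:, 0], d[:, 1], d[:, 2]
    c0 = 0.5 * np.sqrt(1.0 / np.pi)  # constant band-0 function
    c1 = 0.5 * np.sqrt(3.0 / np.pi)  # scale of the three linear band-1 functions
    return np.stack([np.full_like(x, c0), c1 * y, c1 * z, c1 * x], axis=1)


def project_to_sh(f, n_samples=200_000, seed=0):
    """Estimate the 4 coefficients c_k = integral over the sphere of f(w) * Y_k(w) dw."""
    rng = np.random.default_rng(seed)
    d = rng.standard_normal((n_samples, 3))
    d /= np.linalg.norm(d, axis=1, keepdims=True)  # uniform directions on the sphere
    basis = real_sh_basis(d)
    # Uniform sampling density is 1 / (4 * pi), hence the 4 * pi factor.
    return 4.0 * np.pi * (f(d)[:, None] * basis).mean(axis=0)


def toy_lighting(d):
    """Hypothetical lighting: ambient term plus a linear variation along z."""
    return 1.0 + d[:, 2]


coeffs = project_to_sh(toy_lighting)

# Reconstruct the lighting in the +z direction from the 4 coefficients.
up = np.array([[0.0, 0.0, 1.0]])
reconstructed_up = (real_sh_basis(up) @ coeffs)[0]
```

Because the toy function lies exactly in the span of these four basis functions, the reconstruction differs from the original only by sampling noise; sharper directional signals would need more bands, which is where the growth in complexity discussed above appears.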

|

|

|

|

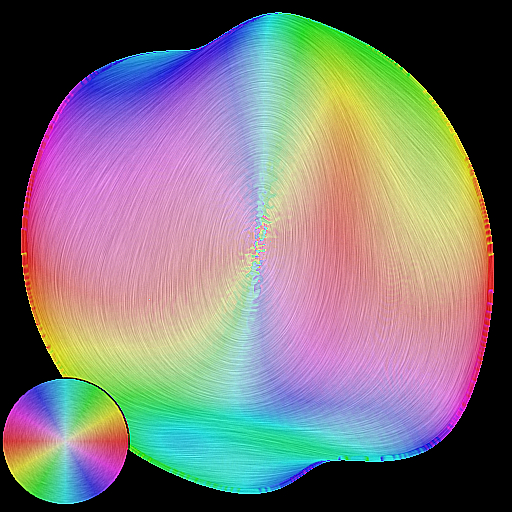

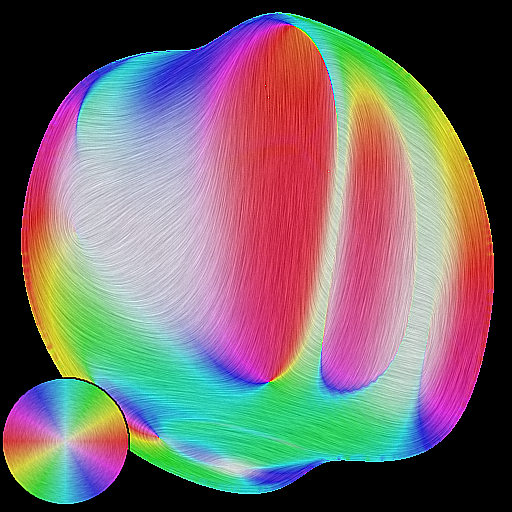

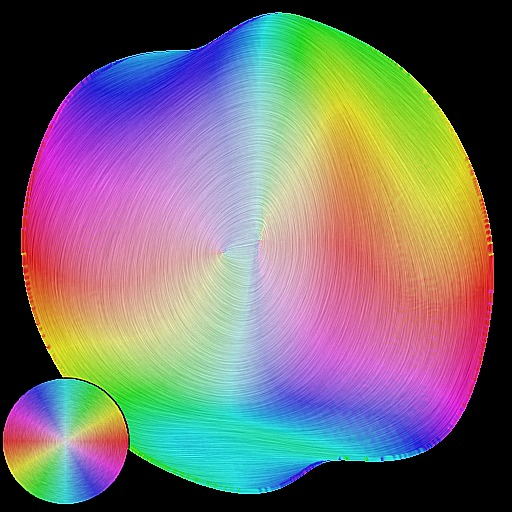

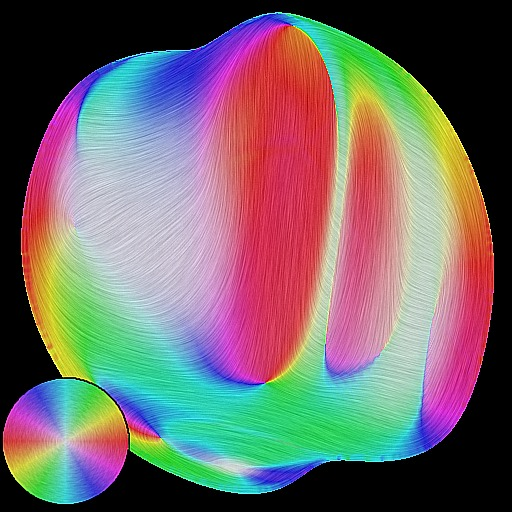

| Texuring | 1st order gradient field | Environment reflection | 2st order gradient field |

Firts-order analysis

Before being able to simulate or to represent the different observed phenomena, we need to define and describe them. To understand the difference between an observed phenomenon and the classical light, shape, and matter decomposition, we can take the example of a highlight. Its observed shape (by a human user or a sensor) is the result of the interaction of these three components, and can be simulated this way. However, this does not provide any intuitive understanding of their relative influence on the final shape: an artist will directly describe the resulting shape, and not each of the three properties. We thus want to decompose the observed signal into models for each scale that can be easily understood, represented, and manipulated. For this purpose, we will rely on the analysis of the resulting interaction of light, shape, and matter as observed by a human or a physical sensor. We first consider this analysis from an optical point of view, trying to identify the different phenomena and their scale according to their mathematical properties (e.g., differential 80 and frequency analysis 48). Such an approach has led us to reveal the influence of surface flows (depth and normal gradients) on lighting pattern deformation (see Figure 4). For a human observer, this corresponds to one recent trend in computer graphics that takes into account the human visual system 49 both to evaluate the results and to guide the simulations.
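The depth and normal gradients mentioned above can be approximated on a discrete depth map with finite differences. The following is a minimal sketch under stated assumptions: the input is a regular-grid depth map with unit pixel spacing, and the helper names `depth_gradient`, `normals_from_depth`, and `normal_gradient` are hypothetical. It is not the analysis method of the cited work.

```python
import numpy as np


def depth_gradient(depth):
    """Finite-difference gradient of a depth map of shape (H, W)."""
    dz_dy, dz_dx = np.gradient(depth)  # axis 0 is y (rows), axis 1 is x (columns)
    return dz_dx, dz_dy


def normals_from_depth(depth):
    """Unit normals of the height field z = depth(x, y), shape (H, W, 3)."""
    dz_dx, dz_dy = depth_gradient(depth)
    n = np.stack([-dz_dx, -dz_dy, np.ones_like(depth)], axis=-1)
    return n / np.linalg.norm(n, axis=-1, keepdims=True)


def normal_gradient(normals):
    """Spatial derivatives of each normal component along x and y."""
    dn_dy, dn_dx = np.gradient(normals, axis=(0, 1))
    return dn_dx, dn_dy


# Assumed test surface: a tilted plane, whose normals do not vary.
ys, xs = np.mgrid[0:8, 0:8].astype(float)
plane = 2.0 * xs + 3.0 * ys
dz_dx, dz_dy = depth_gradient(plane)
dn_dx, dn_dy = normal_gradient(normals_from_depth(plane))
```

On the tilted plane the depth gradient is constant and the normal gradient vanishes, so only curved regions of a surface would contribute a normal-gradient term.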

3.4 Axis 2: From Acquisition to Display

Challenge: Convergence of optical and digital systems to blend real and virtual worlds.

Results: Instruments to acquire real world, to display virtual world, and to make both of them interact.

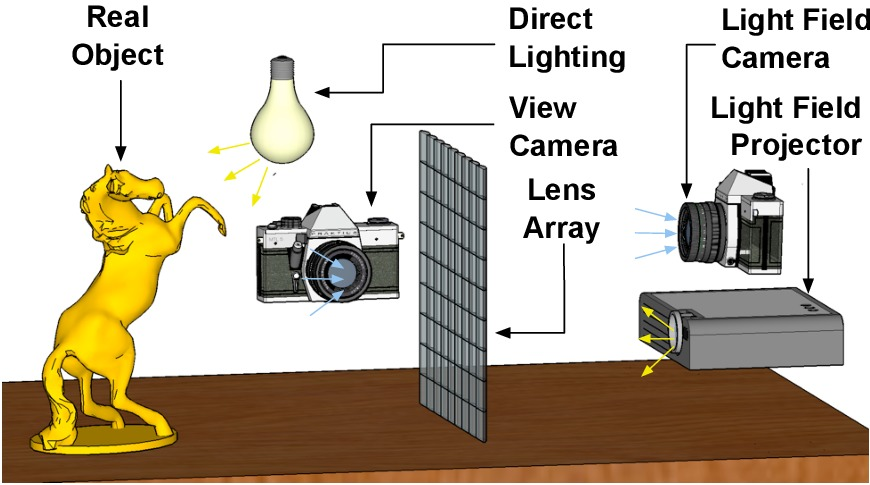

Light-Field transfer

In this axis, we investigate unified acquisition and display systems, that is, systems that combine optical instruments with digital processing. From digital to real, we investigate new display approaches 69, 57. We consider projecting systems and surfaces 37, for personal use, virtual reality and augmented reality 32. From the real world to the digital world, we favor direct measurements of parameters for models and representations, using (new) optical systems unless digitization is required 51, 50. These resulting systems have to acquire the different phenomena described in Axis 1 and to display them, in an efficient manner 55, 31, 56, 59. By efficient, we mean that we want to shorten the path between the real world and the virtual world by increasing the data bandwidth between the real (analog) and the virtual (digital) worlds, and by reducing the latency for real-time interactions (we have to prevent unnecessary conversions, and to reduce processing time). To reach this goal, the systems have to be designed as a whole, not by a simple concatenation of optical systems and digital processes, nor by considering each component independently 60.

To increase data bandwidth, one solution is to parallelize the physical systems more and more. One possibility is to multiply the number of simultaneous acquisitions (e.g., simultaneous images from multiple viewpoints 59, 78). Similarly, increasing the number of viewpoints is a way toward the creation of full 3D displays 69. However, full acquisition or display of 3D real environments theoretically requires a continuous field of viewpoints, leading to huge data size. Despite the current belief that the increase of computational power will fill the gap, when it comes to visual or physical realism, if you double the processing power, people may want four times more accuracy, thus increasing data size as well. To reach the best performance, a trade-off has to be found between the amount of data required to represent reality accurately and the amount of required processing. This trade-off may be achieved using compressive sensing, a recent trend from the applied mathematics community that provides tools to accurately reconstruct a signal from a small set of measurements, assuming that it is sparse in a transform domain (e.g., 77, 102).
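The principle of reconstructing a sparse signal from few measurements can be sketched with Orthogonal Matching Pursuit, one of several standard sparse-recovery algorithms. The choice of this algorithm, the random Gaussian measurement matrix, and the sizes used below are assumptions made for illustration; the cited references may rely on other solvers.

```python
import numpy as np


def omp(A, y, sparsity):
    """Orthogonal Matching Pursuit: greedy estimate of a sparse x such that y = A @ x."""
    residual = y.copy()
    support = []
    coeffs = np.zeros(0)
    col_norms = np.linalg.norm(A, axis=0)
    for _ in range(sparsity):
        # Select the column most correlated with the current residual.
        correlations = np.abs(A.T @ residual) / col_norms
        if support:
            correlations[support] = 0.0  # never select the same column twice
        support.append(int(np.argmax(correlations)))
        # Re-fit all selected coefficients jointly by least squares.
        coeffs, *_ = np.linalg.lstsq(A[:, support], y, rcond=None)
        residual = y - A[:, support] @ coeffs
    x = np.zeros(A.shape[1])
    x[support] = coeffs
    return x


# Assumed setup: 200 unknowns, only 3 of them non-zero, 100 random measurements.
rng = np.random.default_rng(0)
m, n = 100, 200
A = rng.standard_normal((m, n)) / np.sqrt(m)
x_true = np.zeros(n)
x_true[[10, 75, 150]] = [1.0, -2.0, 1.5]
y = A @ x_true
x_hat = omp(A, y, sparsity=3)
```

Here the signal is sparse directly in the sampling basis; for signals that are sparse in a transform domain, `A` would be the product of the measurement operator and the inverse transform.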

We prefer to achieve this goal by avoiding as much as possible the classical approach where acquisition is followed by a fitting step: this generally requires a large number of measurements, and the fitting itself may consequently consume too much memory and preprocessing time. By preventing unnecessary conversion through fitting techniques, such an approach increases the speed and reduces the data transfer for acquisition but also for display. One of the best recent examples is the work of Cossairt et al. 41. The whole system is designed around a unique representation of the energy-field issued from (or leaving) a 3D object, either virtual or real: the Light-Field. A Light-Field encodes the light emitted in any direction from any position on an object. It is acquired thanks to a lens-array that leads to the capture of, and projection from, multiple simultaneous viewpoints. A unique representation is used for all the steps of this system. Lens-arrays, parallax barriers, and coded-aperture 67 are among the key technologies to develop such acquisition systems (e.g., Light-Field camera 1 60 and acquisition of light-sources 51) and projection systems (e.g., auto-stereoscopic displays). Such an approach is versatile and may be applied to improve classical optical instruments 65. More generally, by designing unified optical and digital systems 74, it is possible to alleviate the requirements on processing power, the memory footprint, and the cost of optical instruments.
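As a rough illustration of how a single Light-Field representation can serve several steps, the sketch below stores a discretized Light-Field as a 4D array and derives both an individual viewpoint and a refocused image from it. The two-plane indexing `(u, v, s, t)`, the helper names, and the shift-and-add refocusing with wrap-around borders are assumptions made for this example, not a description of the cited system.

```python
import numpy as np


def sub_aperture_view(light_field, u, v):
    """Image seen from viewpoint (u, v); light_field has shape (U, V, S, T)."""
    return light_field[u, v]


def refocus(light_field, shift):
    """Shift-and-add refocusing.

    Each view is translated proportionally to its offset from the central
    viewpoint, then all views are averaged. `shift` (pixels per viewpoint step)
    selects the depth that ends up in focus. np.roll wraps around the image
    borders, which is a simplification.
    """
    U, V, S, T = light_field.shape
    cu, cv = (U - 1) / 2.0, (V - 1) / 2.0
    out = np.zeros((S, T))
    for u in range(U):
        for v in range(V):
            ds = int(round(shift * (u - cu)))
            dt = int(round(shift * (v - cv)))
            out += np.roll(light_field[u, v], (ds, dt), axis=(0, 1))
    return out / (U * V)


# Assumed toy data: 3 x 3 viewpoints of 16 x 16 pixels each.
rng = np.random.default_rng(0)
lf = rng.random((3, 3, 16, 16))
center_view = sub_aperture_view(lf, 1, 1)
refocused = refocus(lf, shift=1.0)
unshifted = refocus(lf, shift=0.0)
```

With a zero shift the result is simply the average of all views, which corresponds to focusing on the plane where the views are already aligned.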

Those are only some examples of what we investigate. We also consider the following approaches to develop new unified systems. First, similar to (and based on) the analysis goal of Axis 1, we have to take into account as much as possible the characteristics of the measurement setup. For instance, when fitting cannot be avoided, integrating these characteristics may improve both the processing efficiency and accuracy 76. Second, we have to integrate signals from multiple sensors (such as GPS, accelerometer, ...) to avoid some computation (e.g., 68). Finally, the experience of the group in surface modeling helps the design of optical surfaces 63 for light sources or head-mounted displays.

3.5 Axis 3: Rendering, Visualization and Illustration

Challenge: How to offer the most legible signal to the final observer in real-time?

Results: High-level shading primitives, expressive rendering techniques for object depiction, real-time realistic rendering algorithms

Rendering techniques from realistic solutions to more expressive ones.

The main goal of this axis is to offer to the final observer, in this case mostly a human user, the most legible signal in real-time. The analysis and decomposition into different phenomena resulting from interactions between light, shape, and matter (Axis 1), together with the study of their perception, allow us to convey essential information in the most pertinent way. Here, the word pertinent can take various forms depending on the application.

In the context of scientific illustration and visualization, we are primarily interested in tools to convey shape or material characteristics of objects in animated 3D scenes. Expressive rendering techniques (see Figure 6c,d) provide means for users to depict such features with their own style. To introduce our approach, we detail it from a shape-depiction point of view, a domain in which we have acquired a recognized expertise. Prior work in this area mostly focused on stylization primitives to achieve line-based rendering 100, 64 or stylized shading 35, 98 with various levels of abstraction. A clear representation of important 3D object features remains a major challenge for better shape depiction, stylization and abstraction purposes. Most existing representations provide only local properties (e.g., curvature), and thus lack characterization of broader shape features. To overcome this limitation, we are developing higher level descriptions of shape 29 with increased robustness to sparsity, noise, and outliers. This is achieved in close collaboration with Axis 1 by the use of higher-order local fitting methods, multi-scale analysis, and global regularization techniques. In order not to neglect the observer and the material characteristics of the objects, we couple this approach with an analysis of the appearance model. To our knowledge, this approach has not been considered yet. This research direction is at the heart of the MANAO project, and has a strong connection with the analysis we plan to conduct in Axis 1. Material characteristics are always considered at the light ray level, but an understanding of higher-level primitives (like the shape of highlights and their motion) would help us to produce more legible renderings and permit novel stylizations; for instance, no method is able today to create stylized renderings that follow the motion of highlights or shadows. We also believe such tools play a fundamental role for geometry processing purposes (such as shape matching, reassembly, simplification), as well as for editing purposes as discussed in Axis 4.
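The text does not say which higher-order local fitting methods are used. As one possible example of a fit that goes beyond a local plane, the sketch below performs an algebraic least-squares sphere fit on a point neighborhood; the function name `fit_sphere` and the synthetic test data are assumptions made for illustration.

```python
import numpy as np


def fit_sphere(points):
    """Algebraic least-squares sphere fit to points of shape (N, 3).

    A point p on a sphere of center c and radius r satisfies
    |p|^2 = 2 c.p + (r^2 - |c|^2), which is linear in the unknowns (c, d)
    with d = r^2 - |c|^2.
    """
    A = np.hstack([2.0 * points, np.ones((len(points), 1))])
    b = np.sum(points**2, axis=1)
    sol, *_ = np.linalg.lstsq(A, b, rcond=None)
    center = sol[:3]
    radius = np.sqrt(sol[3] + center @ center)
    return center, radius


# Assumed synthetic neighborhood: noiseless samples of a known sphere.
rng = np.random.default_rng(0)
directions = rng.standard_normal((50, 3))
directions /= np.linalg.norm(directions, axis=1, keepdims=True)
true_center = np.array([1.0, -2.0, 0.5])
true_radius = 3.0
samples = true_center + true_radius * directions
center, radius = fit_sphere(samples)
```

Unlike a plane fit, the fitted radius gives a direct curvature estimate for the neighborhood, and fitting over neighborhoods of increasing size is one way to obtain a multi-scale description.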

In the context of real-time photo-realistic rendering (see Figure 6a,b), the challenge is to compute the most plausible images with minimal effort. During the last decade, a lot of work has been devoted to designing approximate but real-time rendering algorithms for complex lighting phenomena such as soft-shadows 101, motion blur 48, depth of field 89, reflections, refractions, and inter-reflections. For most of these effects it becomes harder to discover fundamentally new and faster methods. On the other hand, we believe that significant speedup can still be achieved through more clever use of the massively parallel architectures of current and upcoming hardware, and/or through more clever tuning of the current algorithms. In particular, regarding the second aspect, we remark that most of the proposed algorithms depend on several parameters which can be used to trade quality for speed. Significant speed-up could thus be achieved by identifying effects that would be masked or facilitated and thus devoting appropriate computational resources to the rendering 66, 47. Indeed, the algorithm parameters controlling the quality vs speed trade-off are numerous, without a direct mapping between their values and their effect. Moreover, their ideal values vary over space and time, and to be effective such an auto-tuning mechanism has to be extremely fast, so that its cost is largely compensated by its gain. We believe that our various work on the analysis of appearance, such as in Axis 1, could be beneficial for such a purpose too.

Realistic and real-time rendering is closely related to Axis 2: real-time rendering is a requirement to close the loop between the real world and the digital world. We thus have to develop algorithms and rendering primitives that allow the integration of the acquired data into real-time techniques. We also have to make sure that these real-time techniques work with new display systems. For instance, stereo, and more generally multi-view displays are based on the multiplication of simultaneous images. Brute-force solutions consist of an independent rendering pipeline for each viewpoint. A more energy-efficient solution would take advantage of the parts of the computation that may be factorized. Another example is rendering techniques based on image processing, such as our work on augmented reality 39. Independent image processing for each viewpoint may disturb the feeling of depth by introducing inconsistent information in each image. Finally, more dedicated displays 57 would require new rendering pipelines.

3.6 Axis 4: Editing and Modeling

Challenge: Editing and modeling appearance using drawing- or sculpting-like tools through high level representations.

Results: High-level primitives and hybrid representations for appearance and shape.

During the last decade, the domain of computer graphics has exhibited tremendous improvements in image quality, both for 2D applications and 3D engines. This is mainly due to the availability of an ever increasing amount of shape details, and sophisticated appearance effects including complex lighting environments. Unfortunately, with such a growth in visual richness, even so-called vectorial representations (e.g., subdivision surfaces, Bézier curves, gradient meshes, etc.) become very dense and unmanageable for the end user who has to deal with a huge mass of control points, color labels, and other parameters. This is becoming a major challenge, with a necessity for novel representations. This Axis is thus complementary to Axis 3: the focus is the development of primitives that are easy to use for modeling and editing.

More specifically, we plan to investigate vectorial representations that would be amenable to the production of rich shapes with a minimal set of primitives and/or parameters. To this end we plan to build upon our insights on dynamic local reconstruction techniques and implicit surfaces 40, 34. When working in 3D, an interesting approach to produce detailed shapes is by means of procedural geometry generation. For instance, many natural phenomena like waves or clouds may be modeled using a combination of procedural functions. Turning such functions into triangle meshes (the main rendering primitives of GPUs) is a tedious process that appears not to be necessary with an adapted vectorial shape representation where one could directly turn procedural functions into implicit geometric primitives. Since we want to prevent unnecessary conversions in the whole pipeline (here, between modeling and rendering steps), we will also consider hybrid representations mixing meshes and implicit representations. Such research thus has to be conducted while considering the associated editing tools as well as performance issues. It is indeed important to keep real-time performance (cf. Axis 2) throughout the interaction loop, from user inputs to display, via editing and rendering operations. Finally, it would be interesting to add semantic information into 2D or 3D geometric representations. Semantic geometry appears to be particularly useful for many applications such as the design of more efficient manipulation and animation tools, for automatic simplification and abstraction, or even for automatic indexing and searching. This constitutes a complementary but longer-term research direction.

Panels (a) to (f): A system that mimics texture (left) and shading (right) effects using image processing alone.

In the MANAO project, we want to investigate representations beyond the classical light, shape, and matter decomposition. We thus want to directly control the appearance of objects both in 2D and 3D applications (e.g., 94): this is a core topic of computer graphics. When working with 2D vector graphics, digital artists must carefully set up color gradients and textures: examples range from the creation of 2D logos to the photo-realistic imitation of object materials. Classic vector primitives quickly become impractical for creating illusions of complex materials and illuminations, and as a result an increasing amount of time and skill is required. This is only for still images. For animations, vector graphics are only used to create legible appearances composed of simple lines and color gradients. There is thus a need for more complex primitives that are able to accommodate complex reflection or texture patterns, while keeping the ease of use of vector graphics. For instance, instead of drawing color gradients directly, it is more advantageous to draw flow lines that represent local surface concavities and convexities. Going through such an intermediate structure then makes it possible to deform simple material gradients and textures in a coherent way (see Figure 7), and animate them all at once. The manipulation of 3D object materials also raises important issues. Most existing material models are tailored to faithfully reproduce physical behaviors, not to be easily controllable by artists. Therefore artists learn to tweak model parameters to satisfy the needs of a particular shading appearance, which can quickly become cumbersome as the complexity of a 3D scene increases. We believe that an alternative approach is required, whereby material appearance of an object in a typical lighting environment is directly input (e.g., painted or drawn), and adapted to match a plausible material behavior.
This way, artists will be able to create their own appearance (e.g., by using our shading primitives 94), and replicate it to novel illumination environments and 3D models. For this purpose, we will rely on the decompositions and tools issued from Axis 1.

4 Application domains

4.1 Physical Systems

Given our close relationships with researchers in optics, one novelty of our approach is to extend the range of possible observers to physical sensors in order to work on domains such as simulation, mixed reality, and testing. Capturing, processing, and visualizing complex data is now more and more accessible to everyone, leading to the possible convergence of real and virtual worlds through visual signals. This signal is traditionally captured by cameras. It is now possible to augment them by projecting (e.g., the infrared laser of Microsoft Kinect) and capturing (e.g., GPS localization) other signals that are outside the visible range. This supplemental information replaces values traditionally extracted from standard images and thus lowers the requirements in computational power. Since the captured images are the result of the interactions between light, shape, and matter, the approaches and the improved knowledge from MANAO help in designing interactive acquisition and rendering technologies that are required to merge the real and the virtual worlds. With the resulting unified systems (optical and digital), transfer of pertinent information is favored and inefficient conversion is likely avoided, leading to new uses in interactive computer graphics applications, like augmented reality, displays and computational photography.

4.2 Interactive Visualization and Modeling

This direction includes domains such as scientific illustration and visualization, artistic or plausible rendering, and 3D modeling. In all these cases, the observer, a human, takes part in the process, justifying once more our focus on real-time methods. When targeting average users, characteristics as well as limitations of the human visual system should be taken into account: in particular, it is known that some configurations of light, shape, and matter have masking and facilitation effects on visual perception. For specialized applications (such as archeology), the expertise of the final user and the constraints for 3D user interfaces lead to new uses and dedicated solutions for models and algorithms.

5 Social and environmental responsibility

5.1 Footprint of research activities

For a few years now, our team has been collectively careful in limiting its direct environmental impacts, mainly by limiting the number of flights and extending the lifetime of PCs and laptops beyond the warranty period.

5.2 Environmental involvement

Gaël Guennebaud and Pascal Barla are engaged in several actions and initiatives related to environmental issues, both within Inria itself and within the research and higher-education domain in general:

- They have been members of the “Local Sustainable Development Committee” of Inria Bordeaux until November 2023.

- They are facilitators of “The Climate Fresk”.

- G. Guennebaud is a member of the “Social and Environmental Responsibility Committee” of the LaBRI and of the “transition network” of the University of Bordeaux.

- Ecoinfo: G. Guennebaud is involved within the GDS Ecoinfo of the CNRS, and in particular he is in charge of the development of the ecodiag tool among other activities.

- Labo1point5: G. Guennebaud is part of the labos1point5 GDR, in particular to help with the development of a module to take into account the carbon footprint of ICT devices within their carbon footprint estimation tool (GES1point5). The first version of the module was released in October 2021.

- G. Guennebaud participates in the elaboration and dissemination of an introductory course on climate change issues and the environmental impacts of ICT, with two colleagues of the LaBRI.

- P. Barla and G. Guennebaud have compiled research projects conducted by Inria teams around environmental issues within Bordeaux research center, and prepared material for presentation to different audiences.

6 Highlights of the year

A publication in the prestigious “Nature Human Behaviour” journal 11, on the perception of material categories from specular image features, in collaboration with researchers in Psychophysics at the University of Giessen, Germany.

7 New software, platforms, open data

7.1 New software

7.1.1 Spectral Viewer

- Keyword: Image

- Functional Description: An open-source (spectral) image viewer that supports several image formats: ENVI (spectral), exr, png, jpg.

- URL:

- Authors: Arthur Dufay, Romain Pacanowski, Pascal Barla, Alban Fichet, David Murray

- Contact: Romain Pacanowski

- Partner: LP2N (CNRS - UMR 5298)

7.1.2 Malia

- Name: The Malia Rendering Framework

- Keywords: 3D, Realistic rendering, GPU, Ray-tracing, Material appearance

- Functional Description: The Malia Rendering Framework is an open-source library for predictive, physically-realistic rendering. It comes with several applications, including a spectral path tracer, RGB-to-spectral conversion routines, a Blender bridge, and a spectral image viewer.

- URL:

- Authors: Arthur Dufay, Romain Pacanowski, Pascal Barla, David Murray, Alban Fichet

- Contact: Romain Pacanowski

7.1.3 FRITE

- Keywords: 2D animation, Vector-based drawing

- Functional Description: 2D animation software allowing non-linear editing

- Authors: Pierre Benard, Pascal Barla

- Contact: Pierre Benard

7.1.4 ecodiag

- Keywords: CO2, Carbon footprint, Web Services

- Functional Description: Ecodiag is a web service to estimate the carbon footprint of computer equipment.

- Contact: Gael Guennebaud

- Partner: Ecoinfo

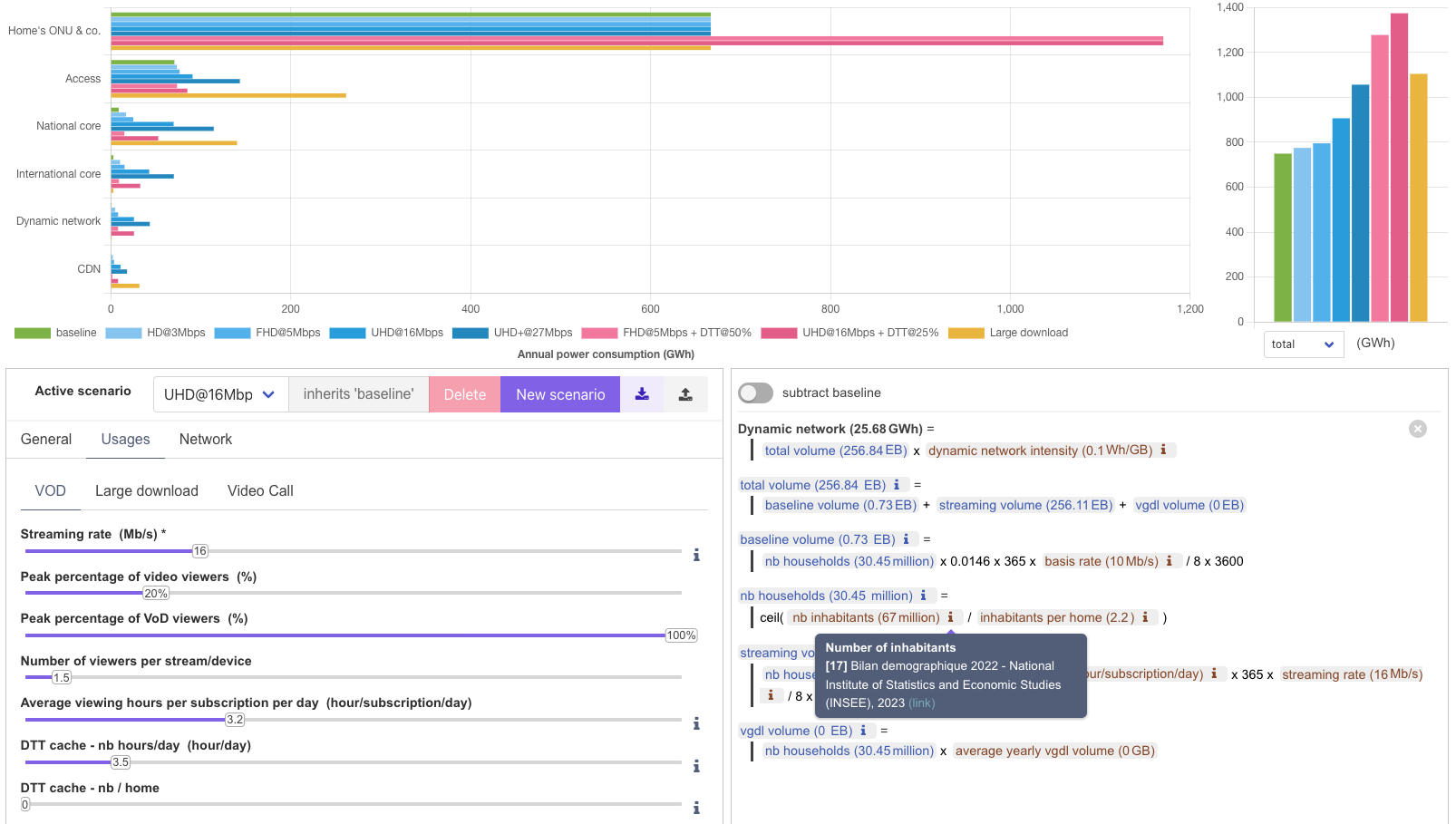

7.1.5 FtthPowerSim

- Keywords: Power consumption, Network simulator

- Functional Description: This web application is a demo of the methodology and model detailed in the paper “Assessing VoD pressure on network power consumption”. Its purpose is to estimate the dimensioning and power consumption of a fiber network infrastructure that is ideally sized to satisfy different usage scenarios. It is tailored to cover a territory of the size of France centered around a main IXP collocated with a unique CDN playing the role of a cache.

- URL:

- Contact: Gael Guennebaud

- Partners: Université de Bordeaux, LaBRI

7.2 New platforms

7.2.1 La Coupole

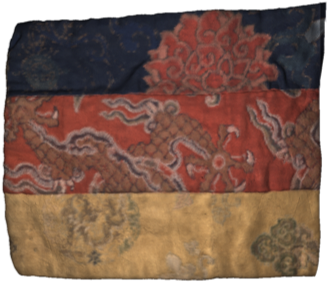

Participants: Romain Pacanowski, Marjorie Paillet, Morgane Gerardin, David Murray.

View of La Coupole

Left, view of La Coupole and the robot with the 3D scanner mounted, before the acquisition phase of the optical properties performed with a high speed camera (Right) that photographs the object for each lit LED. (Photo Credits Arthur Pequin.)

La Coupole is a measurement device that acquires spatially-varying reflectance functions (SV-BRDFs) on non-planar objects whose area can be up to 1.5m.

Its main characteristics are:

- RGB 12MP Camera (100 fps)

- 6-axis Robot

- 1080 White LEDs

- 3D Laser Scanner Laser: 200 resolution

- Maximal Measurement Area: 1.75 m

- Optical Resolution: 50 microns


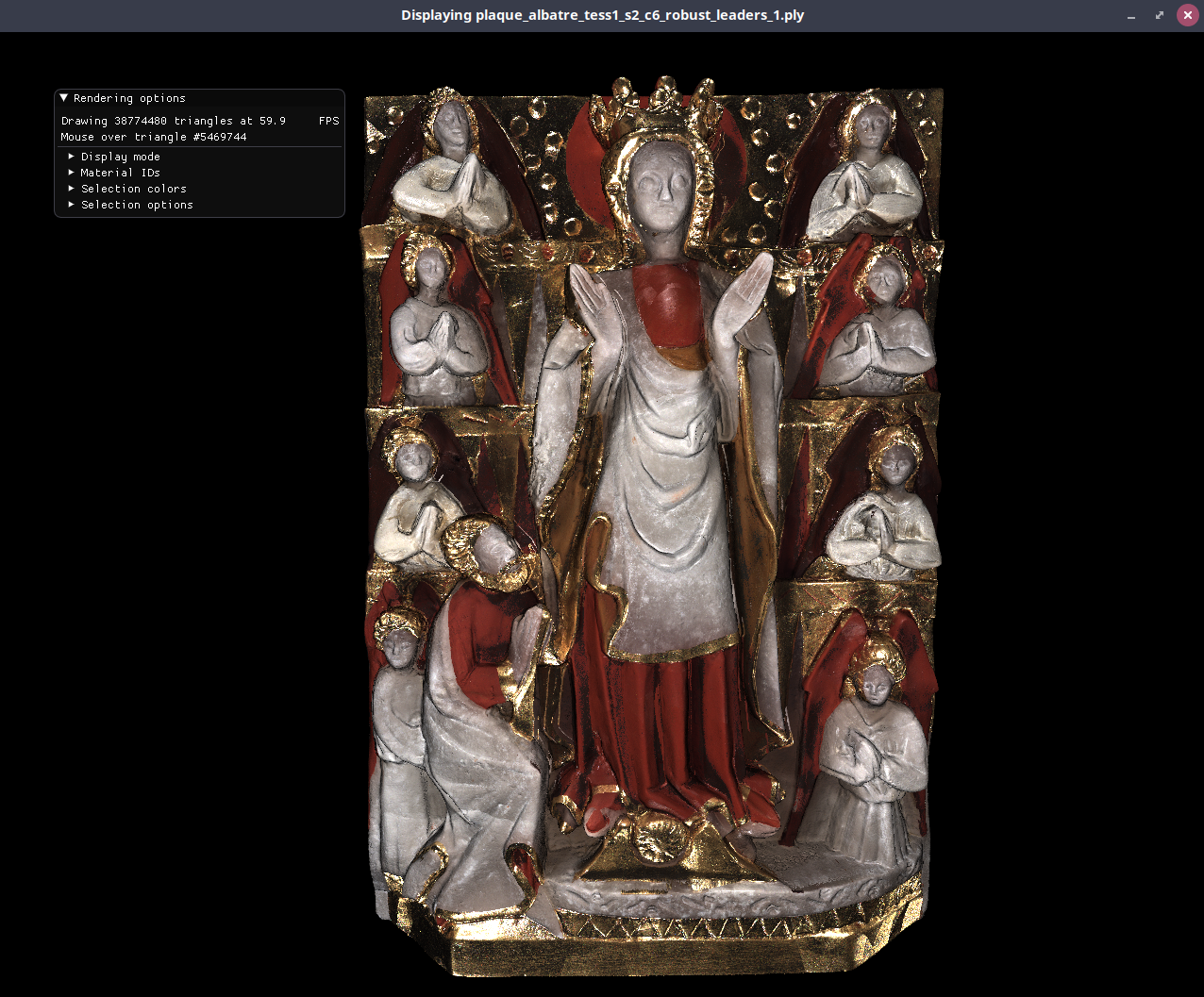

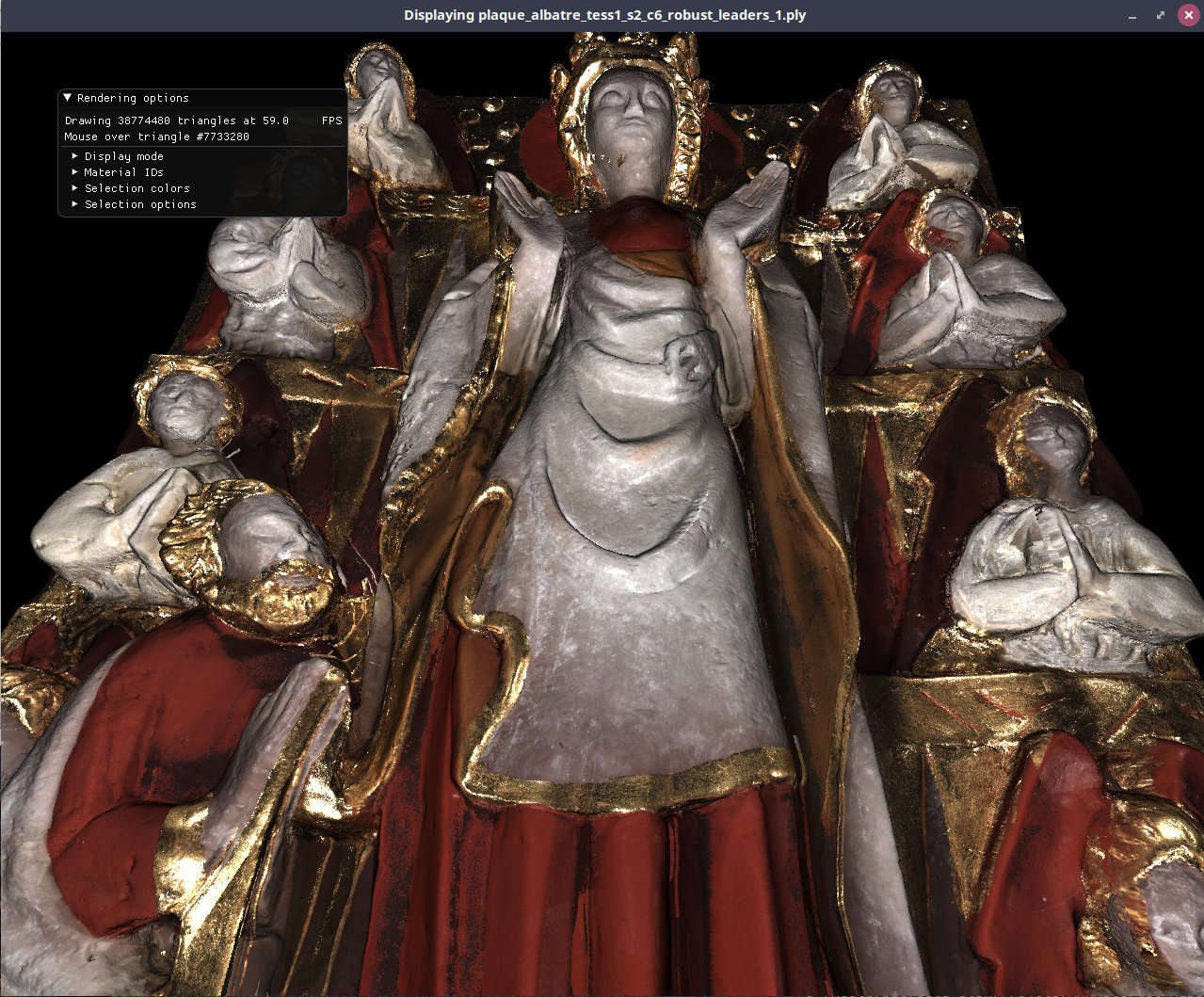

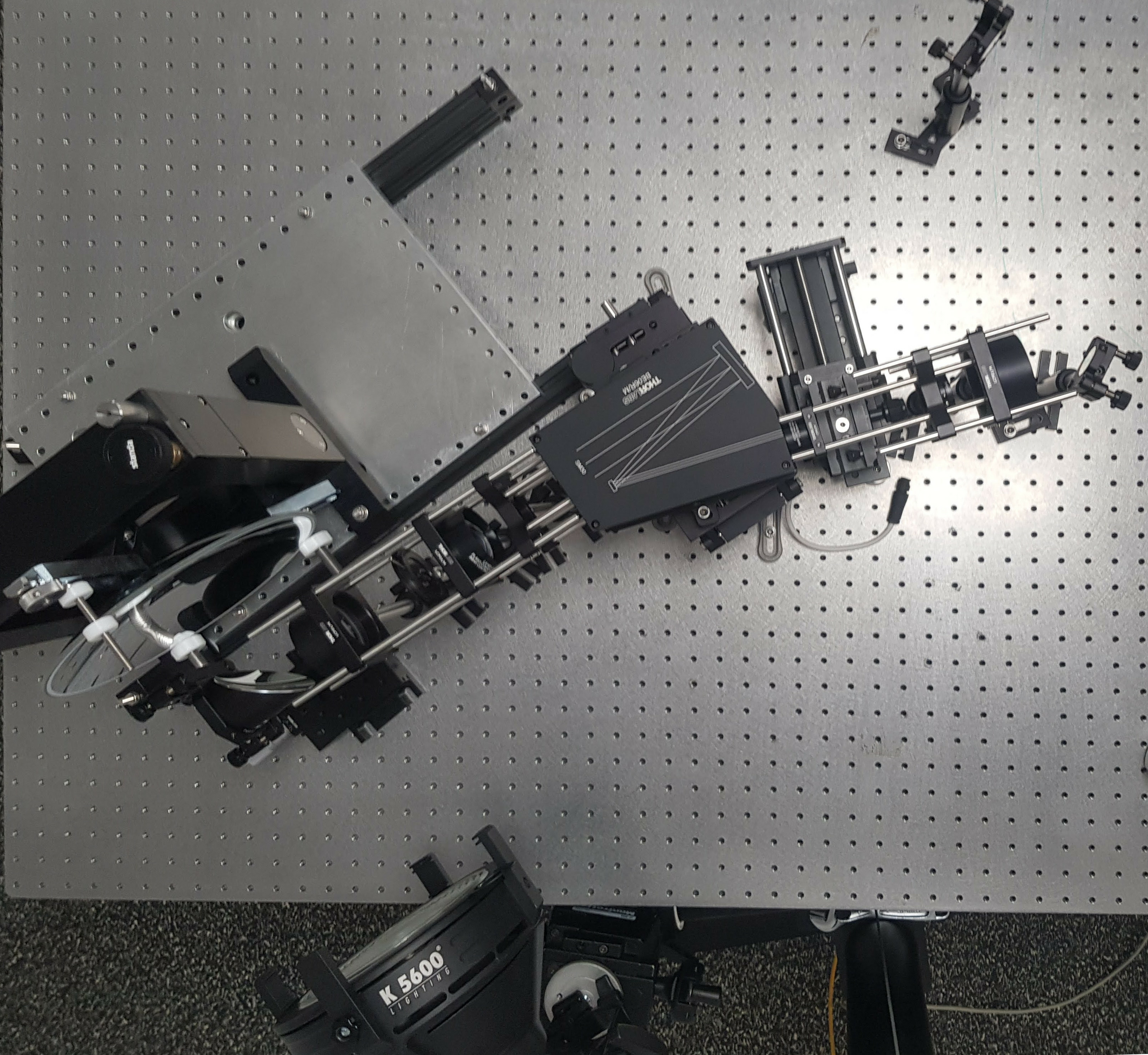

Official poster of the Exhibition and 3D digital reconstruction of an alabaster.

Left, official poster of the Exhibition Textiles 3D presented at the Museum of Ethnography of Bordeaux. Center and Right, 3D digital reconstruction of an alabaster piece using data acquired by La Coupole.

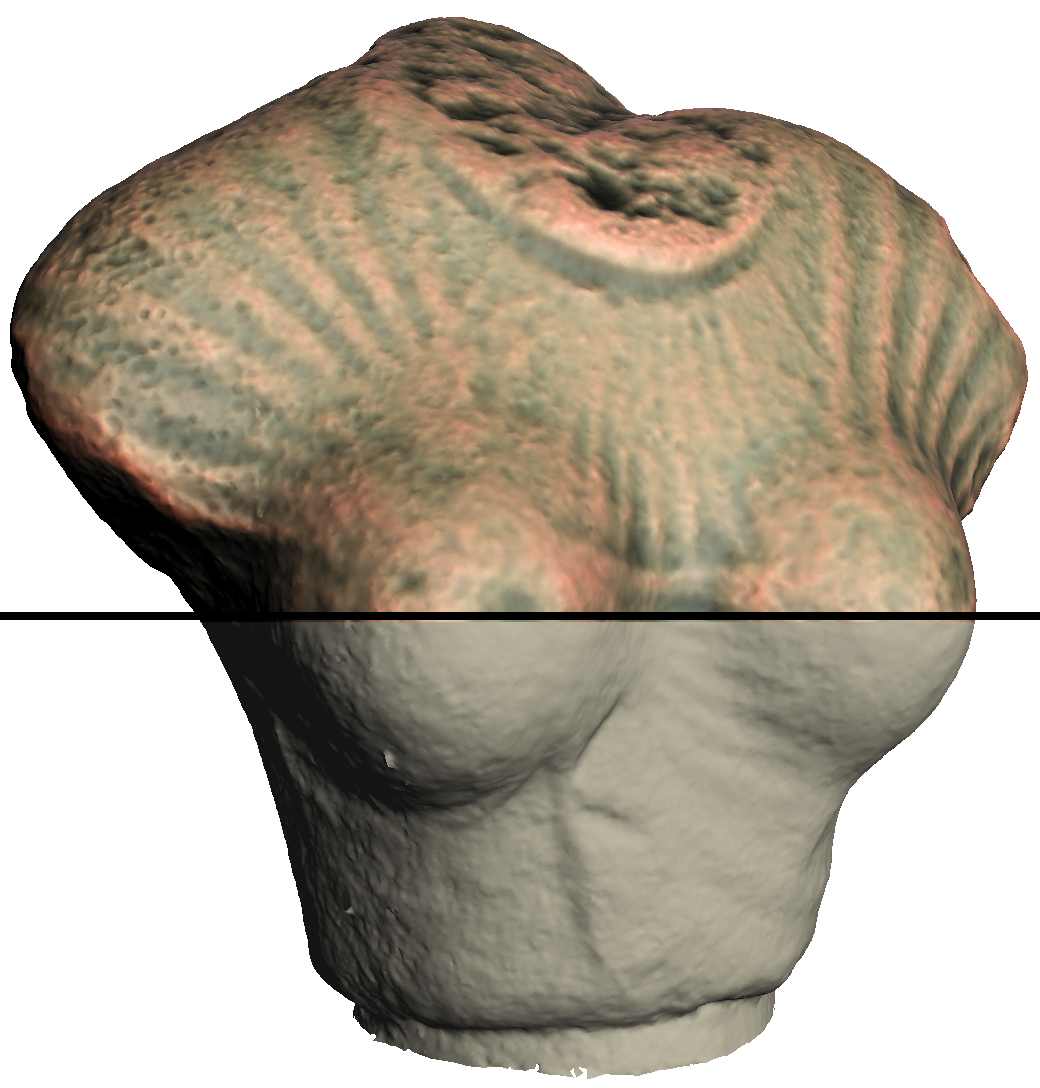

La Coupole is capable of measuring objects with an average angular accuracy of 0.22 degrees, generates 4 terabytes of data per hour of measurement, and allows the measurement of spatially varying BRDFs (SV-BRDFs) on a non-planar shape. The 50 mm lens combined with the camera yields a spatial resolution of 50 microns, which corresponds approximately to the resolution of the human visual system (for an object placed at about 20 cm from the observer). After two years of design and construction, La Coupole was used to digitize 10 garments from the collections of the Ethnography Museum of Bordeaux (cf. Figure 10), as well as to reconstruct an alabaster piece (cf. Figure 9) within the framework of the LaBeX "Albâtres" project, and it will continue to be used in the VESPAA project.
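The comparison with the human visual system can be checked with a short angular-size computation. The sketch below uses the two values quoted above (50 microns, about 20 cm) and compares the result with the commonly quoted acuity of roughly 1 arcminute for normal vision, which is an assumption not stated in this report; the function name is illustrative.

```python
import math


def angular_size_arcmin(feature_size_m: float, viewing_distance_m: float) -> float:
    """Angle subtended by a feature of the given size at the given distance, in arcminutes."""
    angle_rad = 2.0 * math.atan(feature_size_m / (2.0 * viewing_distance_m))
    return math.degrees(angle_rad) * 60.0


# Values quoted in the text: 50 micron spatial resolution, object at about 20 cm.
pixel_footprint_m = 50e-6
viewing_distance_m = 0.20

# Assumption (not from the report): normal visual acuity is about 1 arcminute.
assumed_acuity_arcmin = 1.0

angle = angular_size_arcmin(pixel_footprint_m, viewing_distance_m)
print(f"angular size of one 50 micron sample at 20 cm: {angle:.2f} arcmin")
print(f"ratio to assumed acuity: {angle / assumed_acuity_arcmin:.2f}")
```

Under these assumptions the sample footprint subtends slightly less than one arcminute, which is consistent with the "approximately" in the statement above.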

| Woman's vest (Gilet de Femme) | Belt (Ceinture) | Coat (Manteau) |

Examples of numerical reconstructions from data acquired with La Coupole.

Numerical reconstructions with albedo (hemispherical reflectivity) per mesh vertex for different types of clothing: Left, the woman's silk and cotton vest (Tibet, late nineteenth century); a video featuring other viewpoints is available here. Center, partial view of a central ornament of the apron: navy blue quilted satin with net embroidery representing a lion's head, and blue, white and red floche silk embroideries, with a little yellow; black velvet facings; origin: China, 19th century. Right, coat made of fish skins from Siberia (twentieth century); a video showing other views is available here.

7.2.2 Measuring Bidirectional Subscattering Reflectance and Transmission Functions

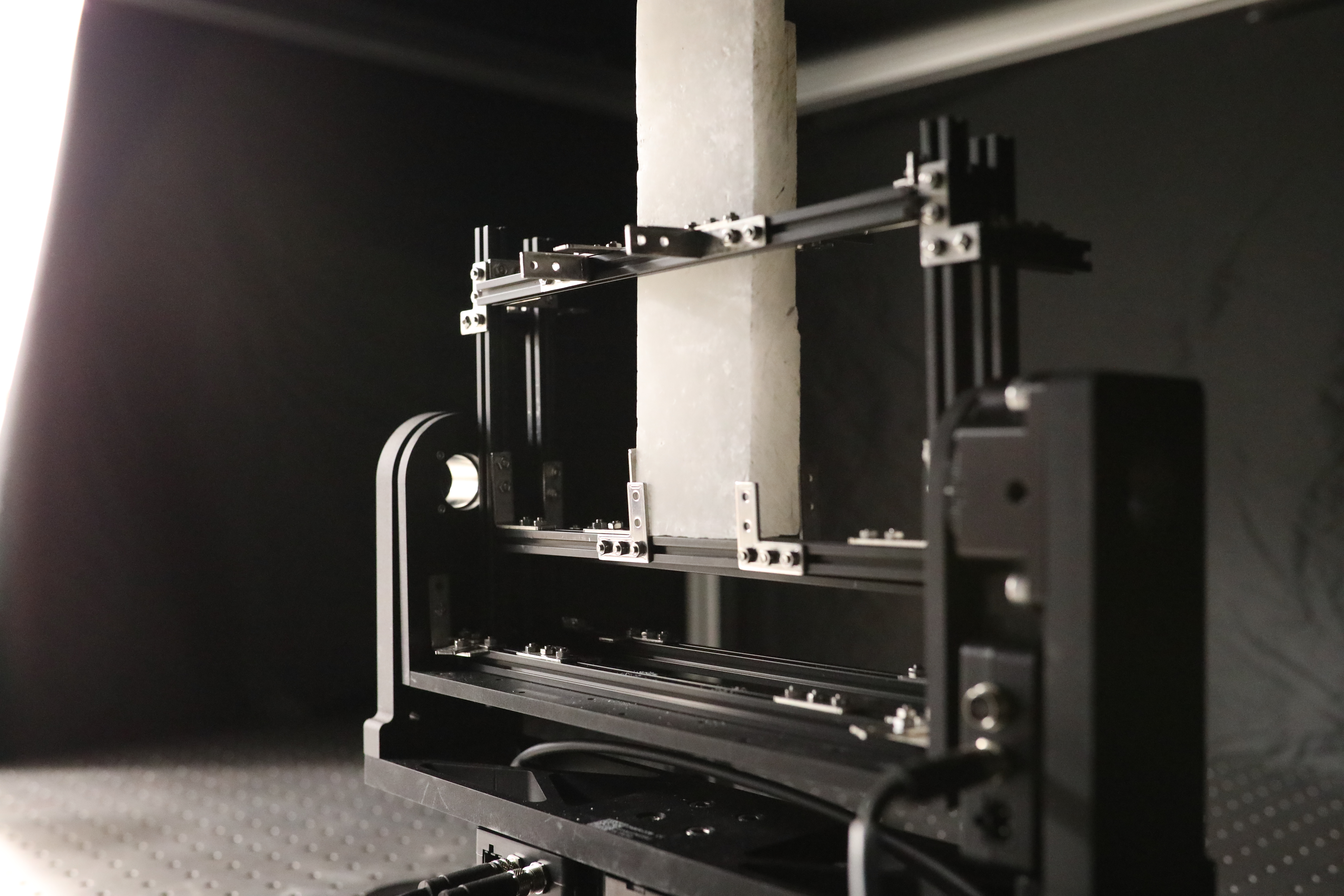

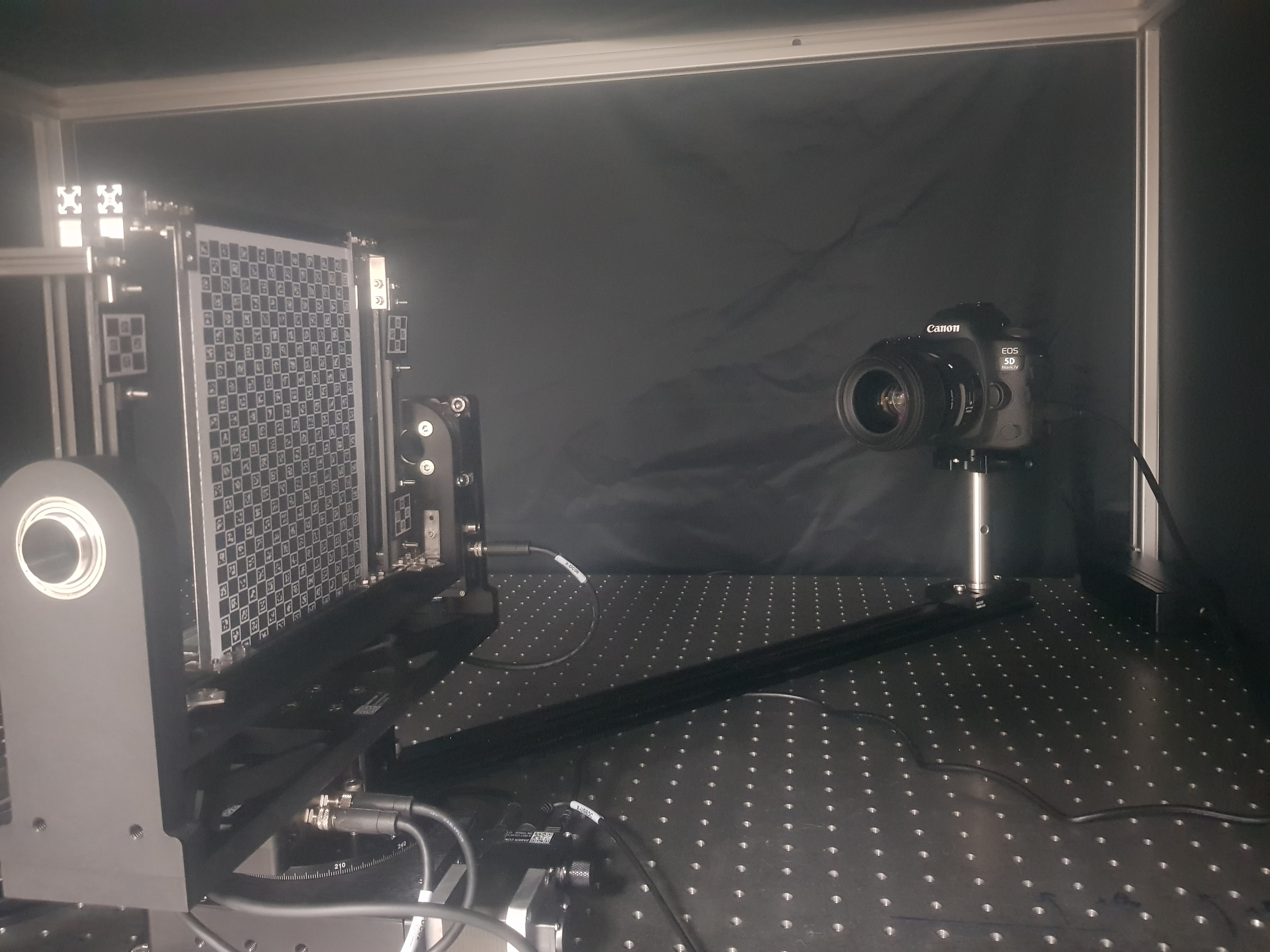

Participants: Morgane Gerardin, Romain Pacanowski.

A new platform (cf. Figure 11) to measure the volumetric properties of light is currently being developed. The project started in March 2022 with the arrival of our new postdoctoral fellow Morgane Gérardin. The platform makes it possible to measure the diffusion of light in reflection (aka BSSRDF) but also in transmission (BSSTDF). A sample holder with two motorized axes orients the sample with respect to the direction of incident illumination, while another motorized axis rotates a camera to image the sample (cf. Figure 12).


The BSSxDF measurement platform.

Measurement principle

8 New results

8.1 Analysis and Simulation

8.1.1 Material category of visual objects computed from specular image structure

Participants: Alexandra Schmidt [University of Giessen], Pascal Barla, Katja Doerschner [University of Giessen].

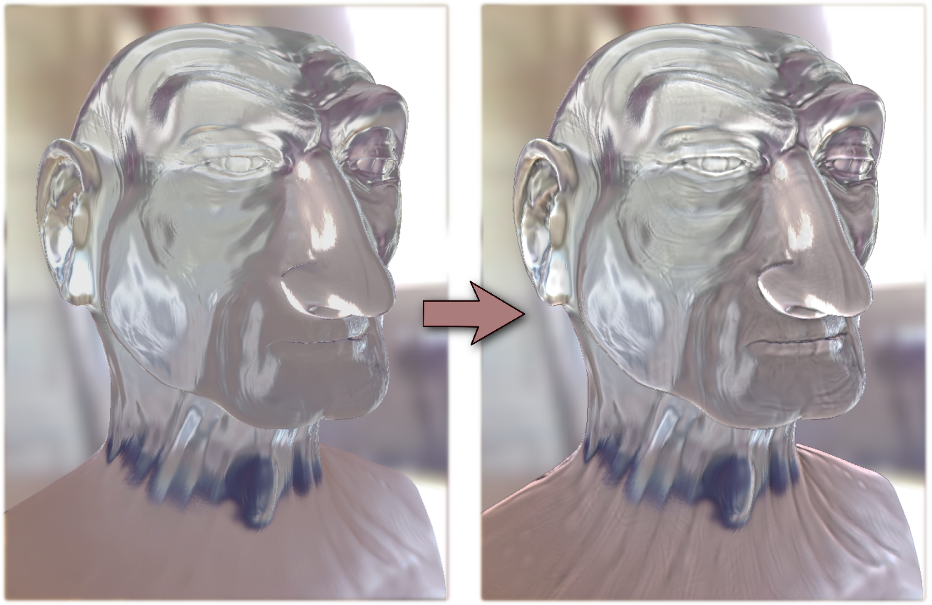

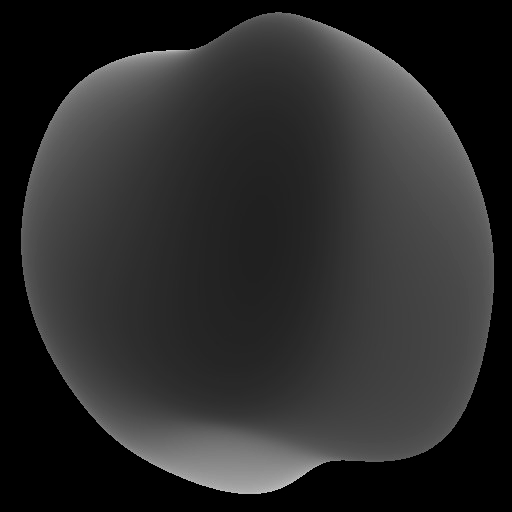

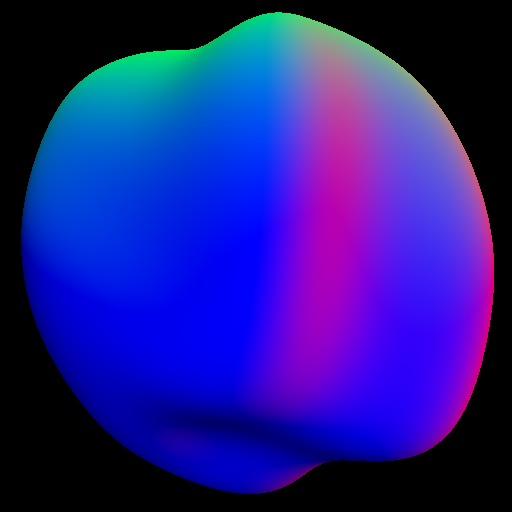

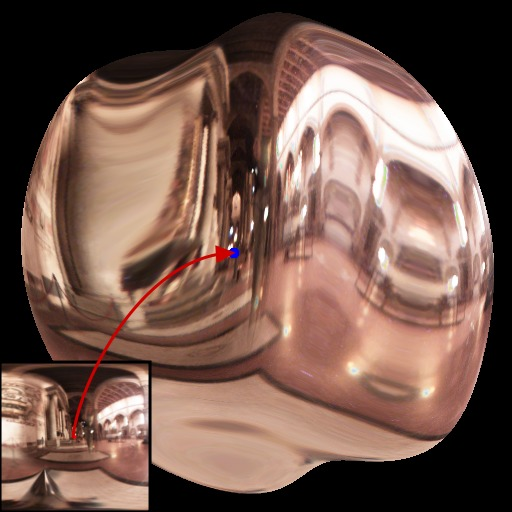

Recognizing materials and their properties visually is vital for successful interactions with our environment, from avoiding slippery floors to handling fragile objects. Yet there is no simple mapping of retinal image intensities to physical properties. In this paper 11, we investigated what image information drives material perception by collecting human psychophysical judgements about complex glossy objects (Figure 13). Variations in specular image structure—produced either by manipulating reflectance properties or visual features directly—caused categorical shifts in material appearance, suggesting that specular reflections provide diagnostic information about a wide range of material classes. Perceived material category appeared to mediate cues for surface gloss, providing evidence against a purely feedforward view of neural processing. Our results suggest that the image structure that triggers our perception of surface gloss plays a direct role in visual categorization, and that the perception and neural processing of stimulus properties should be studied in the context of recognition, not in isolation.

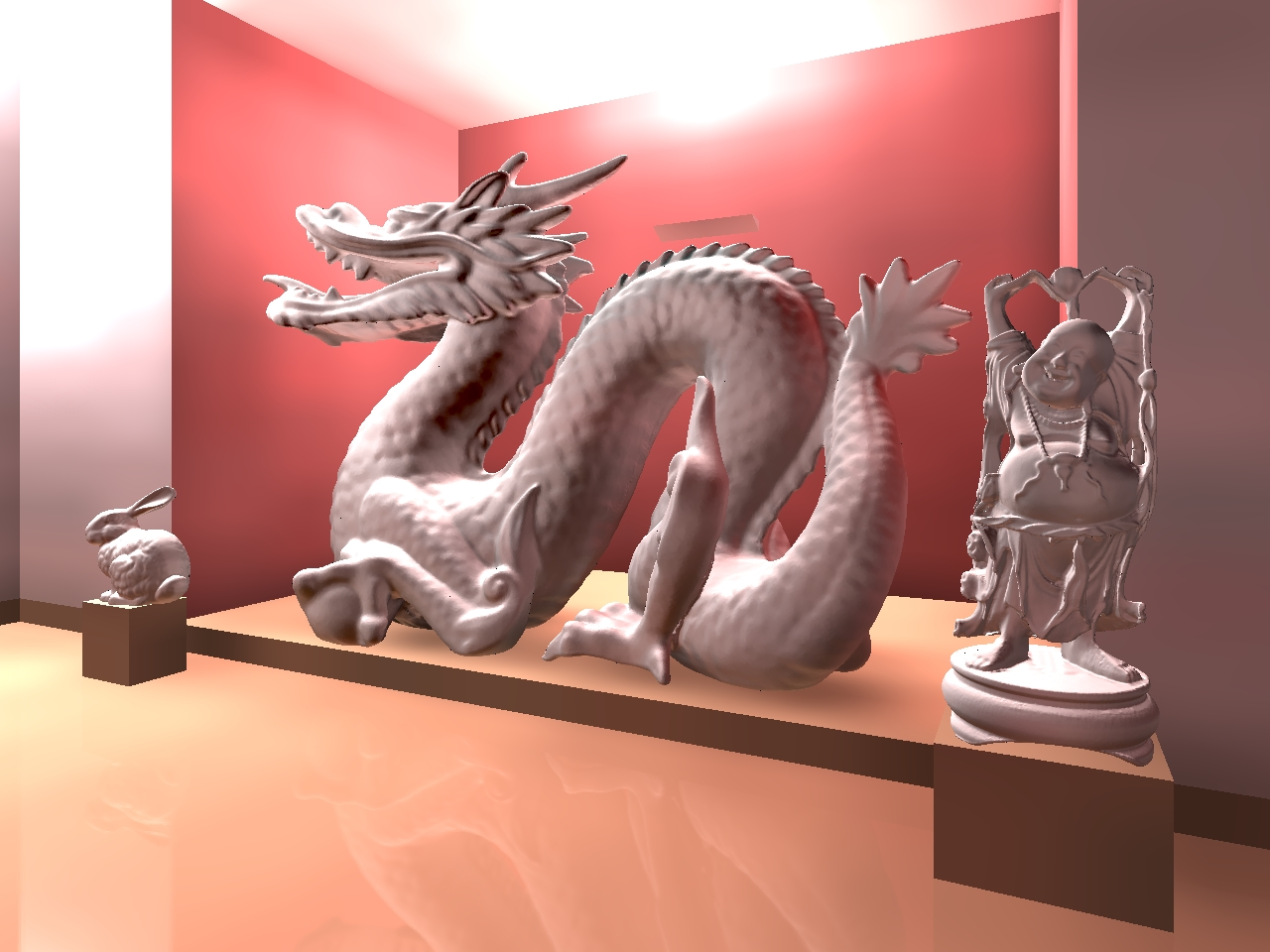

Dragon shape with (left) a 'melted chocolate' or 'mud' appearance; (center) a 'latex' or 'rubber' appearance; (right) a 'velvet', 'silk' or 'fabric' appearance.

8.2 From Acquisition to Display

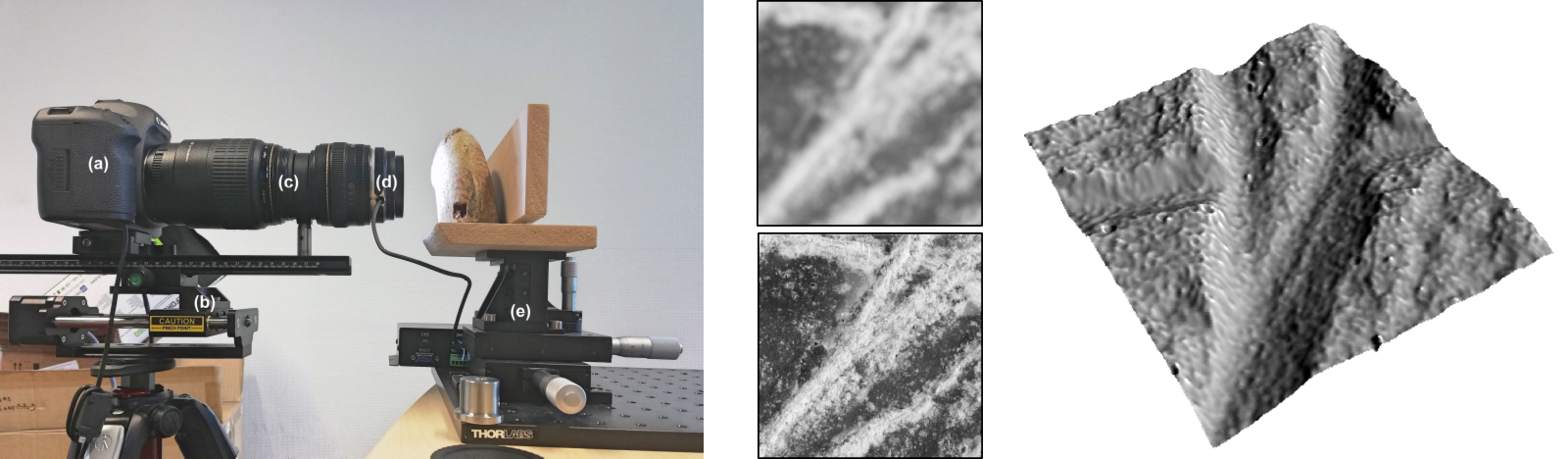

8.2.1 Depth from Focus using Windowed Linear Least Squares Regressions

Participants: Corentin Cou, Gaël Guennebaud.

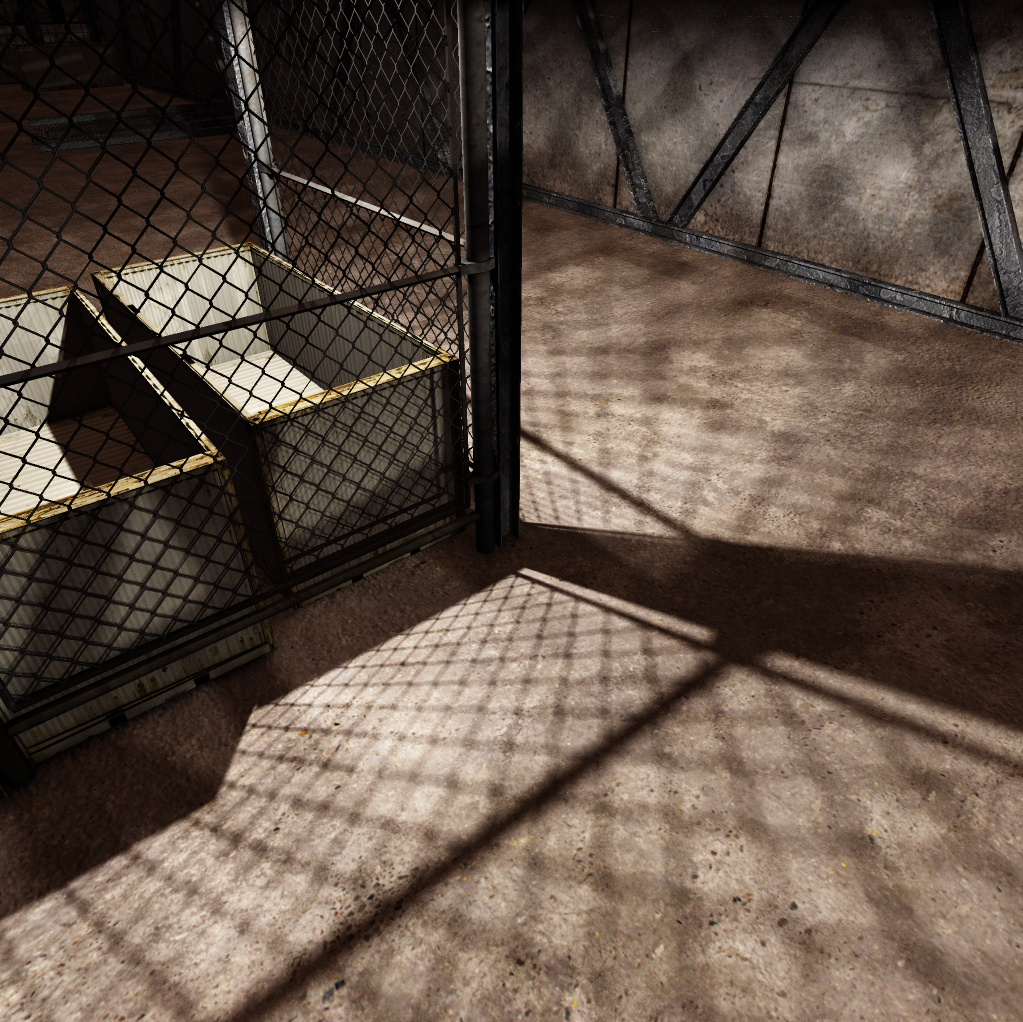

We present in this paper 9 a novel depth from focus technique. Following prior work, our pipeline starts with a focal stack and an estimation of the amount of defocus as given by, for instance, the Ring Difference Filter. To improve robustness to outliers while avoiding costly non-linear optimizations, we propose an original scheme that linearly scans the profile over a fixed-size window, searching for the best peak within each window using a linearized least-squares Laplace regression. As a post-process, depth estimates with low confidence are reconstructed through an adaptive Moving Least Squares filter. We show how to objectively evaluate the performance of our approach by generating synthetic focal stacks from which the reconstructed depth maps can be compared to ground truth (Figure 14). Our results show that our method achieves higher accuracy than the previous non-linear Laplace regression technique, while being orders of magnitude faster.

Depth from focus acquisition setup and results
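To make the idea of a windowed, linearized peak search more concrete, here is a minimal sketch for a single pixel. It is one possible linearization, not necessarily the one used in the paper: it assumes a Laplace-shaped focus profile f(z) = A exp(-|z - mu| / b), so that for a fixed candidate peak mu the logarithm of f is linear in |z - mu| and can be fitted in closed form. The function names, the window size, and the candidate grid are all illustrative assumptions, and the Moving Least Squares post-process is not covered.

```python
import math


def fit_log_linear(zs, fs, mu):
    """Closed-form least-squares fit of log f = c0 + c1 * |z - mu|; returns (c0, c1, sse)."""
    us = [abs(z - mu) for z in zs]
    ys = [math.log(max(f, 1e-12)) for f in fs]  # guard against non-positive focus values
    n = len(zs)
    mean_u = sum(us) / n
    mean_y = sum(ys) / n
    var_u = sum((u - mean_u) ** 2 for u in us)
    if var_u == 0.0:
        return mean_y, 0.0, float("inf")
    c1 = sum((u - mean_u) * (y - mean_y) for u, y in zip(us, ys)) / var_u
    c0 = mean_y - c1 * mean_u
    sse = sum((c0 + c1 * u - y) ** 2 for u, y in zip(us, ys))
    return c0, c1, sse


def best_peak(zs, fs, window=7, steps_per_sample=10):
    """Slide a fixed-size window over the profile and keep the best-fitting peak.

    Each window only tests candidate peaks within one sample of its centre,
    so a window lying entirely on one side of the true peak fits poorly.
    Returns (sse, mu, amplitude, scale) or None if no decaying fit was found.
    """
    half = window // 2
    n_candidates = 2 * steps_per_sample + 1
    best = None
    for start in range(len(zs) - window + 1):
        wz = zs[start:start + window]
        wf = fs[start:start + window]
        lo, hi = zs[start + half - 1], zs[start + half + 1]
        for k in range(n_candidates):
            mu = lo + (hi - lo) * k / (n_candidates - 1)
            c0, c1, sse = fit_log_linear(wz, wf, mu)
            if c1 >= 0.0:  # a peak must decay away from mu
                continue
            if best is None or sse < best[0]:
                best = (sse, mu, math.exp(c0), -1.0 / c1)
    return best


# Synthetic, noise-free profile with a known peak (hypothetical values).
true_mu, true_scale = 11.3, 1.5
zs = [float(i) for i in range(21)]
fs = [2.0 * math.exp(-abs(z - true_mu) / true_scale) for z in zs]

sse, mu, amplitude, scale = best_peak(zs, fs)
print(f"estimated peak at z = {mu:.3f}, amplitude = {amplitude:.3f}, scale = {scale:.3f}")
```

Every fit here is a two-parameter linear regression, which is what keeps such a scan cheap compared with a non-linear optimization over (A, mu, b).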

8.3 Rendering, Visualization and Illustration

8.3.1 A Micrograin BSDF Model for the Rendering of Porous Layers

Participants: Simon Lucas, Mickaël Ribardière [Université de Poitiers], Romain Pacanowski, Pascal Barla.

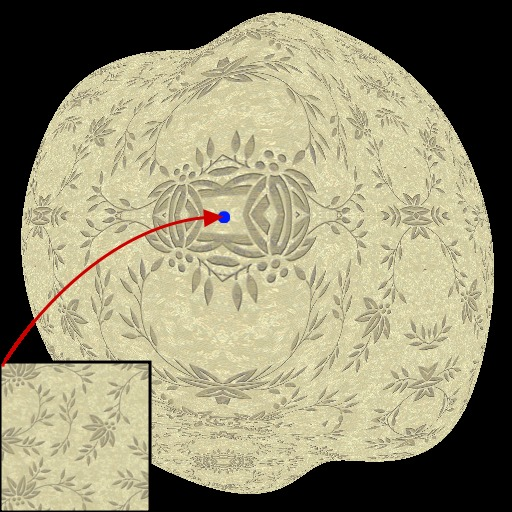

We introduce a new BSDF model for the rendering of porous layers, as found on surfaces covered by dust, rust, dirt, or sprayed paint (Figure 15). Our approach 16 is based on a distribution of elliptical opaque micrograins, extending the Trowbridge-Reitz (GGX) distribution to handle pores (i.e., spaces between micrograins). We use distance field statistics to derive the corresponding Normal Distribution Function (NDF) and Geometric Attenuation Factor (GAF), as well as a view- and light- dependent filling factor to blend between the porous and base layers. All the derived terms show excellent agreement when compared against numerical simulations. Our approach has several advantages compared to previous work. First, it decouples structural and reflectance parameters, leading to an analytical single-scattering formula regardless of the choice of micrograin reflectance. Second, we show that the classical texture maps (albedo, roughness, etc) used for spatially-varying material parameters are easily retargeted to work with our model. Finally, the BRDF parameters of our model behave linearly, granting direct multi-scale rendering using classical mip mapping.

Micrograin BSDF model
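For reference, the standard isotropic Trowbridge-Reitz (GGX) distribution that the micrograin model extends can be written in a few lines. The sketch below is only this classical baseline, not the micrograin NDF derived in the paper; it numerically checks the usual normalization constraint, namely that the projected area of the microfacets integrates to 1 over the hemisphere. Function names and the sample roughness values are illustrative.

```python
import math


def ggx_ndf(cos_theta_h: float, alpha: float) -> float:
    """Isotropic GGX normal distribution D(h) for roughness alpha."""
    if cos_theta_h <= 0.0:
        return 0.0
    a2 = alpha * alpha
    denom = cos_theta_h * cos_theta_h * (a2 - 1.0) + 1.0
    return a2 / (math.pi * denom * denom)


def projected_area_integral(alpha: float, n: int = 20000) -> float:
    """Midpoint-rule estimate of the integral of D(h) * cos(theta_h) over the hemisphere."""
    d_theta = (math.pi / 2.0) / n
    total = 0.0
    for i in range(n):
        theta = (i + 0.5) * d_theta
        c, s = math.cos(theta), math.sin(theta)
        # The solid-angle element integrated over azimuth is 2 * pi * sin(theta) * d_theta.
        total += ggx_ndf(c, alpha) * c * s * 2.0 * math.pi * d_theta
    return total


for alpha in (0.1, 0.5, 1.0):
    print(f"alpha = {alpha}: integral = {projected_area_integral(alpha):.6f}")
```

Any extension of the distribution, such as one accounting for pores, has to preserve an analogous normalization for the part of the surface it covers.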

8.4 Editing and Modeling

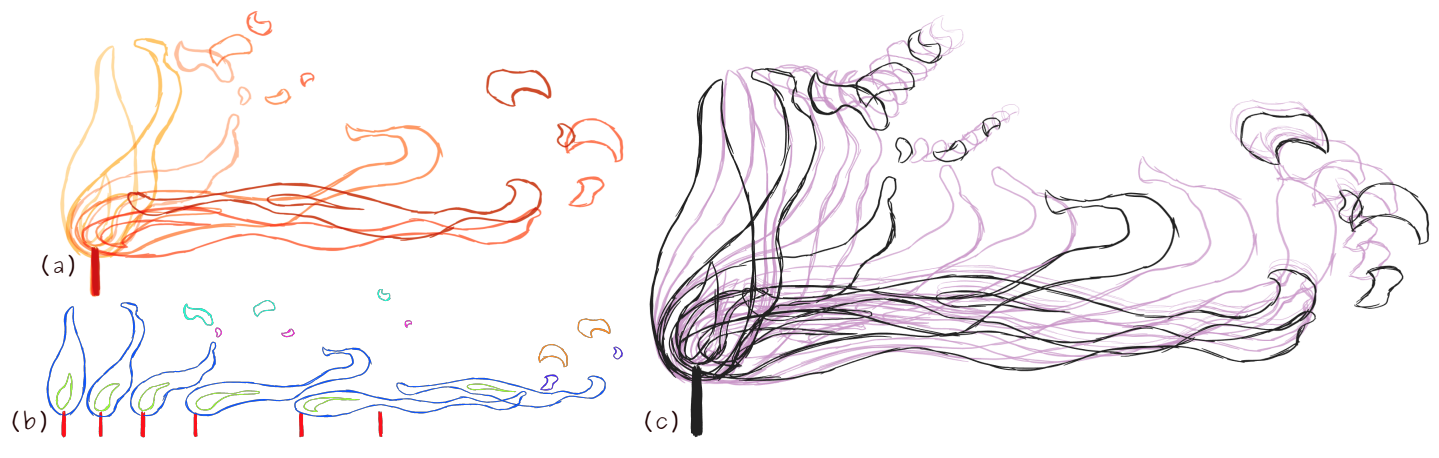

8.4.1 Non-linear Rough 2D Animation using Transient Embeddings

Participants: Melvin Even, Pierre Bénard, Pascal Barla.

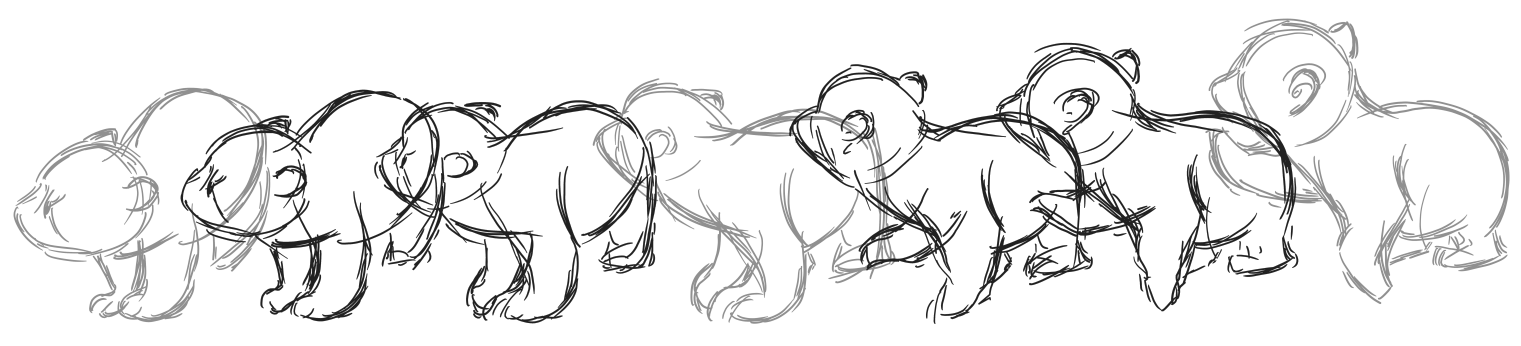

Traditional 2D animation requires time and dedication since tens of thousands of frames need to be drawn by hand for a typical production. Many computer-assisted methods have been proposed to automate the generation of inbetween frames from a set of clean line drawings, but they are all limited by a rigid workflow and a lack of artistic controls, which is for the most part due to the one-to-one stroke matching and interpolation problems they attempt to solve. In this work 10, we take a novel view on those problems by focusing on an earlier phase of the animation process that uses rough drawings (i.e., sketches). Our key idea is to recast the matching and interpolation problems so that they apply to transient embeddings, which are groups of strokes that only exist for a few keyframes. A transient embedding carries strokes between keyframes both forward and backward in time through a sequence of transformed lattices. Forward and backward strokes are then cross-faded using their thickness to yield rough inbetweens. With our approach, complex topological changes may be introduced while preserving visual motion continuity. As demonstrated on state-of-the-art 2D animation exercises (e.g., Figure 16), our system provides unprecedented artistic control through the non-linear exploration of movements and dynamics in real-time.

Flame animation produced with our rough 2D animation system

8.4.2 Efficient Interpolation of Rough Line Drawings

Participants: Jiazhou Chen [Zhejiang University of Technology], Xinding Zhu [Zhejiang University of Technology], Melvin Even, Jean Basset, Pierre Bénard, Pascal Barla.

In traditional 2D animation, sketches drawn at distant keyframes are used to design motion, yet it would be far too labor-intensive to draw all the inbetween frames to fully visualize that motion. We propose a novel efficient interpolation algorithm that generates these intermediate frames in the artist's drawing style 8. Starting from a set of registered rough vector drawings, we first generate a large number of candidate strokes during a pre-process, and then, at each intermediate frame, we select the subset of those that appropriately conveys the underlying interpolated motion, interpolates the stroke distributions of the key drawings, and introduces a minimum amount of temporal artifacts (Figure 17). In addition, we propose quantitative error metrics to objectively evaluate different stroke selection strategies. We demonstrate the potential of our method on various animations and drawing styles, and show its superiority over competing raster- and vector-based methods.

Rough drawing animation of a bear

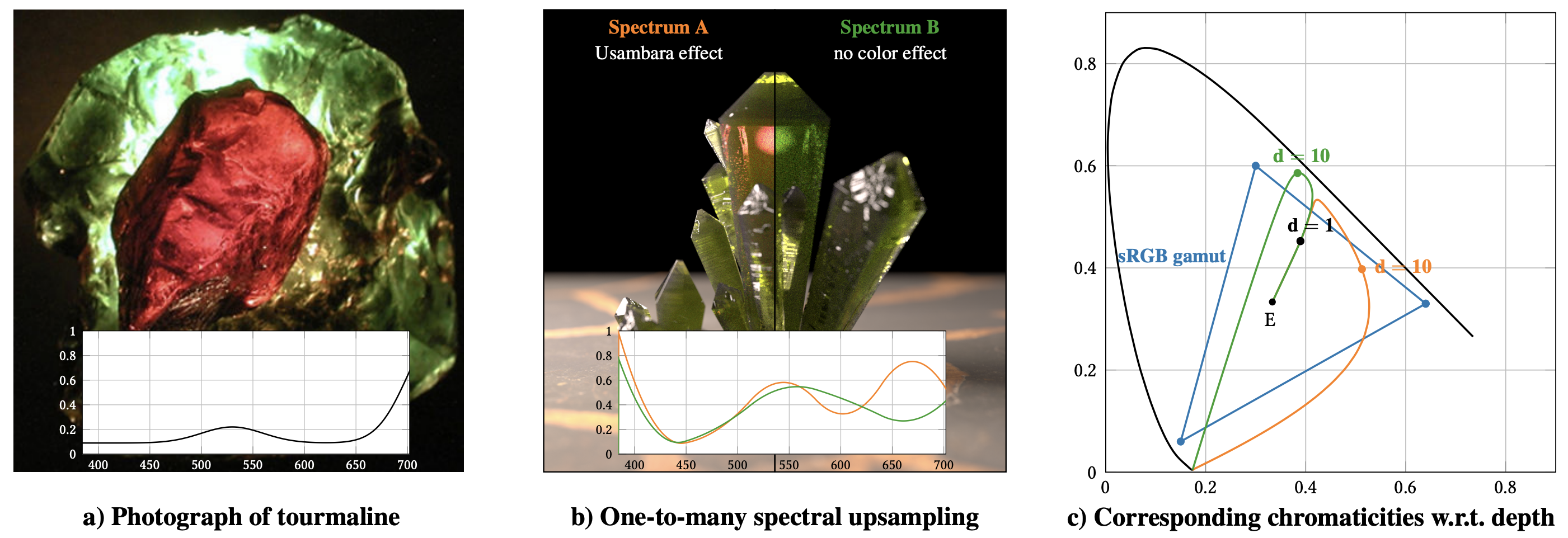

8.4.3 One-to-Many Spectral Upsampling of Reflectances and Transmittances

Participants: Laurent Belcour [Unity Labs, Grenoble], Pascal Barla.

Spectral rendering is essential for the production of physically-plausible synthetic images, but requires introducing several changes in the content generation pipeline. In particular, the authoring of spectral material properties (e.g., albedo maps, indices of refraction, transmittance coefficients) raises new problems. While a large panel of computer graphics methods exists to upsample an RGB color to a spectrum, they all provide a one-to-one mapping. This limits the ability to control interesting color changes such as the Usambara effect or metameric spectra (Figure 18). In this work 7, we introduce a one-to-many mapping in which we show how we can explore the set of all spectra reproducing a given input color. We apply this method to different color-changing effects such as vathochromism (the change of color with depth) and metamerism.

Usambara effect
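The reason a one-to-many mapping exists at all is that a color is a three-number projection of a spectrum with many more degrees of freedom. The sketch below illustrates this with the classical "metameric black" construction, which is not the method of the paper: a perturbation is made orthogonal to the three sensor response curves, so adding it to a spectrum leaves the color unchanged. The Gaussian sensor curves are hypothetical stand-ins, not the CIE color matching functions, and all names are illustrative.

```python
import math


def dot(a, b):
    return sum(x * y for x, y in zip(a, b))


def orthonormalize(rows):
    """Gram-Schmidt orthonormal basis of the span of the given (independent) rows."""
    basis = []
    for row in rows:
        v = list(row)
        for b in basis:
            c = dot(v, b)
            v = [vi - c * bi for vi, bi in zip(v, b)]
        norm = math.sqrt(dot(v, v))
        basis.append([vi / norm for vi in v])
    return basis


def project_out(vector, basis):
    """Remove from vector its components along an orthonormal basis."""
    v = list(vector)
    for b in basis:
        c = dot(v, b)
        v = [vi - c * bi for vi, bi in zip(v, b)]
    return v


wavelengths = [400 + 10 * i for i in range(31)]  # 400 to 700 nm


def gaussian(center, width):
    return [math.exp(-0.5 * ((w - center) / width) ** 2) for w in wavelengths]


# Hypothetical sensor responses (NOT the CIE curves), one per color channel.
sensors = [gaussian(600, 40), gaussian(550, 40), gaussian(450, 30)]


def to_color(spectrum):
    return [dot(s, spectrum) for s in sensors]


base = [0.5] * len(wavelengths)  # flat grey reflectance
black = project_out(gaussian(500, 15), orthonormalize(sensors))  # invisible to the sensors
scale = 0.4 / max(abs(v) for v in black)  # keep the result inside [0, 1]
metamer = [b + scale * k for b, k in zip(base, black)]

print("color of base   :", [round(c, 6) for c in to_color(base)])
print("color of metamer:", [round(c, 6) for c in to_color(metamer)])
```

Both spectra map to the same three values while differing substantially in shape; varying the perturbation and its scale sweeps out a family of such metamers.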

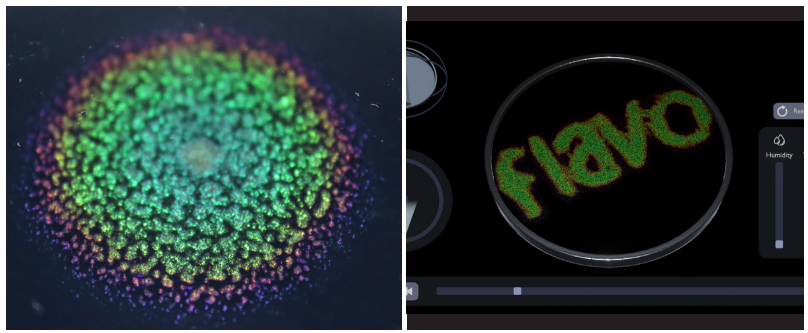

8.4.4 FlavoMetrics: Towards a Digital Tool to Understand and Tune Living Aesthetics of Flavobacteria

Participants: Clarice Risseeuw [Technical University, Delft], Jose Castro [Technical University, Delft], Pascal Barla, Elvin Karana [Technical University, Delft].

Integrating microorganisms into artefacts is a growing area of interest for HCI designers. However, the time, resources, and knowledge required to understand complex microbial behaviour limits designers from creatively exploring temporal expressions in living artefacts, i.e., living aesthetics. Bridging biodesign and computer graphics, we developed FlavoMetrics 17, an interactive digital tool that supports biodesigners in exploring Flavobacteria's living aesthetics (Figure 19). This open-source tool enables designers to virtually inoculate bacteria and manipulate stimuli to tune Flavobacteria's living colour in a digital environment. Six biodesigners evaluated the tool and reflected on its implications for their practices, for example, in (1) understanding spatio-temporal qualities of microorganisms beyond 2D, (2) biodesign education, and (3) the experience prototyping of living artefacts. With FlavoMetrics, we hope to inspire novel HCI tools for accessible and time- and resource-efficient biodesign as well as for better alignment with divergent microbial temporalities in living with living artefacts.

FlavoMetrics

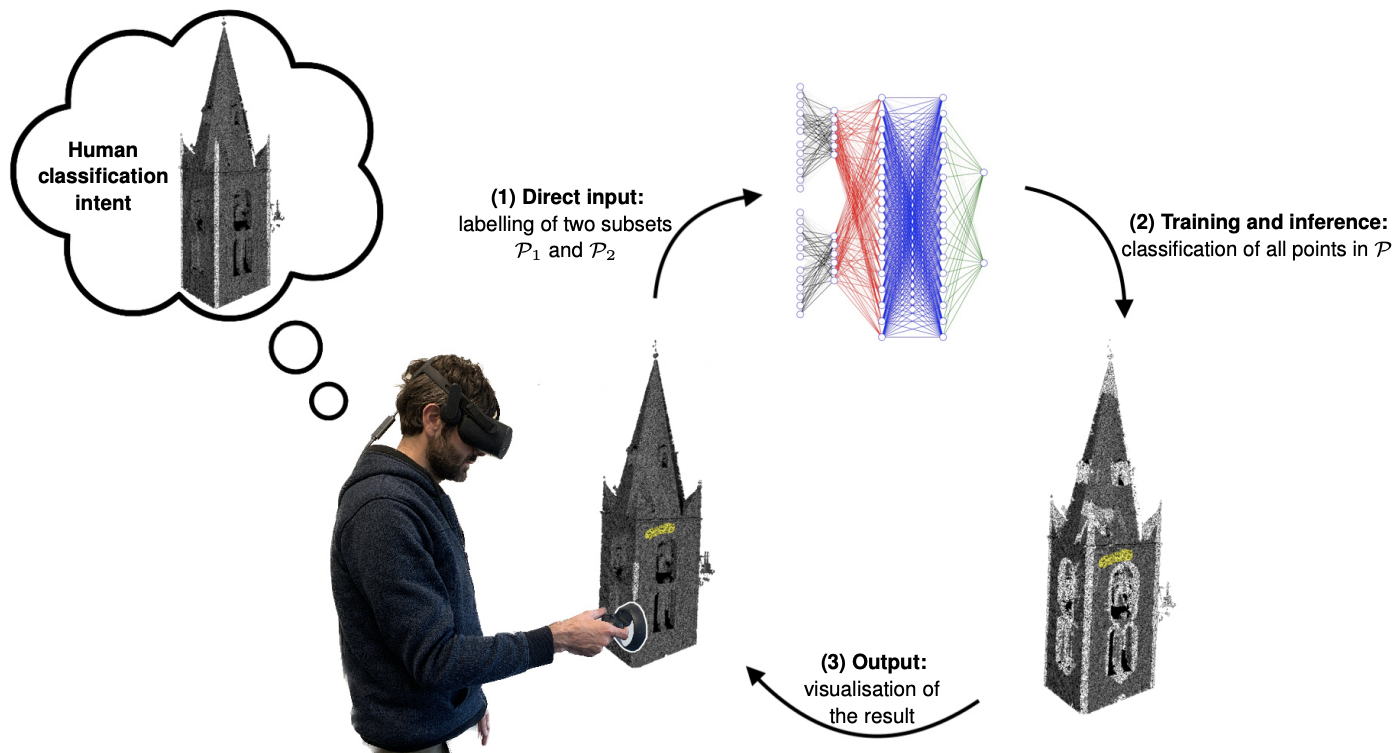

8.4.5 ImmersiveIML - Immersive interactive machine learning for 3D point cloud classification

Participants: Maxime Cordeil [University of Queensland], Thomas Billy [University of Queensland], Nicolas Mellado [CNRS, IRIT, Universite de Toulouse], Loïc Barthe [CNRS, IRIT, Universite de Toulouse], Nadine Couture [Univ. Bordeaux, ESTIA Institute of Technology], Patrick Reuter.

We propose in this work 14 an initial exploration of an interactive machine learning (IML) dialogue in immersive, interactive Virtual Reality (VR) for the classification of points in 3D point clouds. We contribute ImmersiveIML, an Immersive Analytics tool which builds on a human-machine learning trial-and-error dialogue to support an iterative classification process of points in the 3D point cloud. The interactions in ImmersiveIML are designed to be both expressive and minimal; we designed the iterative process to be supported by (1) 6-DOF controllers to allow direct interaction in large 3D point clouds via a minimal brushing technique, (2) a fast trainable machine learning model, and (3) the direct visual feedback of classification results.

This constitutes an iterative human-in-the-loop process that eventually converges to a classification according to human intent. We argue that this approach is a novel contribution that supports a constant improvement of the classification model and fast tracks classification tasks with this type of data, in an immersive scenario. We report on the design and implementation of ImmersiveIML and demonstrate its capabilities with two emblematic application scenarios: edge detection in 3D and classification of trees in a city LiDAR dataset.

Iterative human-in-the-loop classification of points in 3D point clouds

8.5 Modeling environmental impacts of digitization

Participants: Gaël Guennebaud, Aurélie Bugeau [Université de Bordeaux, LaBRI].

Assessing the energy consumption or carbon footprint of data distribution of video streaming services is usually carried out through energy or carbon intensity figures (in Wh or gCO2e per GB). In an ICT4S paper 15, we reviewed the reasons why such approaches are likely to lead to misunderstandings and potentially to erroneous conclusions. To overcome those shortcomings, we designed a new methodology whose key idea is to consider a video streaming usage at the whole scale of a territory, and evaluate the impact of this usage on the network infrastructure. At the core of our methodology is a parametric model of a simplified network and Content Delivery Network (CDN) infrastructure, which is automatically scaled according to peak usage needs. This allows us to compare the power consumption of this infrastructure under different scenarios, ranging from a sober baseline to a generalized use of high bitrate videos. Our results show that classical efficiency indicators do not reflect the power consumption increase of more intensive Internet usage, and might even lead to misleading conclusions. This makes our approach more suitable to drive actions and study “What-if” scenarios. Our model is publicly available as a web application illustrated in Figure 21.

Parametric network model for streaming applications
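The gap between intensity indicators and actual power draw can be illustrated with a deliberately simplistic toy model. It is not the parametric model of the paper: it assumes a fixed, traffic-independent power per subscriber in the access network plus core equipment sized for peak traffic, and every constant below is a hypothetical placeholder rather than a measured value.

```python
import math
from dataclasses import dataclass


@dataclass
class Scenario:
    name: str
    bitrate_mbps: float   # average video bitrate per stream
    hours_per_day: float  # viewing time per user and per day


# All constants are hypothetical placeholders chosen for illustration only.
USERS = 1_000_000
PEAK_CONCURRENCY = 0.2           # fraction of users streaming at the peak hour
ACCESS_W_PER_USER = 5.0          # always-on access equipment, independent of traffic
CORE_PORT_CAPACITY_GBPS = 100.0  # capacity of one core port
CORE_PORT_POWER_W = 500.0        # power of one core port, assumed load-independent


def evaluate(s: Scenario):
    """Return (infrastructure power in W, energy intensity in Wh per GB)."""
    peak_gbps = USERS * PEAK_CONCURRENCY * s.bitrate_mbps / 1000.0
    core_ports = math.ceil(peak_gbps / CORE_PORT_CAPACITY_GBPS)  # sized for the peak
    power_w = USERS * ACCESS_W_PER_USER + core_ports * CORE_PORT_POWER_W
    # Daily volume: megabits -> megabytes (/8) -> gigabytes (/1000).
    daily_gb = USERS * s.hours_per_day * 3600.0 * s.bitrate_mbps / 8.0 / 1000.0
    daily_wh = power_w * 24.0
    return power_w, daily_wh / daily_gb


sober = Scenario("sober baseline", bitrate_mbps=3.0, hours_per_day=1.0)
intensive = Scenario("high bitrate", bitrate_mbps=15.0, hours_per_day=1.0)

power_sober, intensity_sober = evaluate(sober)
power_intensive, intensity_intensive = evaluate(intensive)

for s, p, i in ((sober, power_sober, intensity_sober),
                (intensive, power_intensive, intensity_intensive)):
    print(f"{s.name}: power = {p / 1e6:.3f} MW, intensity = {i:.1f} Wh/GB")
```

In this toy setting the more intensive scenario draws more power yet shows a lower Wh/GB figure, because the fixed part of the infrastructure is spread over more data. This is the kind of effect that makes a per-GB indicator unsuitable for comparing usage scenarios.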

9 Bilateral contracts and grants with industry

9.1 Bilateral contracts with industry

Research collaboration contract with Praxinos (2021-2024)

Participants: Melvin Even, Pierre Bénard, Pascal Barla.

This collaboration aims at defining new computer-assisted tools to facilitate the creation of 2D animations.

CIFRE PhD contract with Ubisoft (2022-2025)

Participants: Panagiotis Tsiapkolis, Pierre Bénard.

In this project, we aim at exploring artistic control in real-time stylized rendering of 3D scenes.

10 Partnerships and cooperations

10.1 International initiatives

10.1.1 Visits to international teams

Research stays abroad

Patrick Reuter

- Visited institutions: Monash University (Department of Human-Centred Computing) and University of Queensland (Human-Centred Computing team)

- Country: Australia

- Dates: September 2022 - August 2023

- Context of the visit: CRCT

- Type of mobility: research stay

The primary objective of this research stay is to foster collaborations and explore interactive machine learning approaches for geometry processing.

10.2 National initiatives

10.2.1 ANR

“Young Researcher” MoStyle (2021-2025)

- INRIA - LaBRI

Participants: Pierre Bénard, Pascal Barla, Melvin Even.

The main goal of this project is to investigate how computer tools can help capture and reproduce the typicality of traditional 2D animations. Ultimately, this would make it possible to produce 2D animations with a unified appearance starting from rough drawings or 3D inputs.

10.2.2 Inria Exploratory actions

Exploratory Action Ecoptics (2021-2024)

- INRIA

Participants: Gary Fourneau, Pascal Barla, Romain Pacanowski.

The main topic of this project is to contribute to the understanding of visual communication among animals. It proposes to develop new computer graphics techniques in order to bridge the gap between the optical and ecological approaches, thereby connecting light transport in microscopic structures at the surface of an animal to the visual signals perceptible by another animal in its habitat.

10.3 Regional initiatives

Vespaa (2022-2027)

- INRIA and Region Nouvelle Aquitaine

Participants: Romain Pacanowski, Pierre Bénard, Pascal Barla, Gaël Guennebaud, Pierre Mézières.

The project VESPAA focuses on high-fidelity rendering of African artifacts stored in different museums (Angoulême, La Rochelle, Bordeaux) of the Nouvelle Aquitaine Region.

The main goals of VESPAA are:

- To improve the quality of the measurement data acquired by La Coupole in order to represent complex materials (gilding, pearl, patina and varnish).

- To develop new SV-BRDF models and multi-resolution representations to visualize the digitized objects in real-time and with high fidelity under dynamic light and view directions. The targeted audience ranges from Cultural Heritage experts to the general public visiting the museums.

- To preserve the acquired reflectance properties in the long term by storing the measurements (geometry and tabulated data) as well as the SV-BRDF models (source code) in an open database.

11 Dissemination

11.1 Promoting scientific activities

Reviewer

- ACM Siggraph, ACM Siggraph Asia

- Eurographics

- Eurographics Symposium on Rendering

- ACM SIGGRAPH Symposium on Interactive 3D Graphics and Games (i3D)

- ACM Symposium on User Interface Software and Technology (UIST)

11.1.1 Journal

- IEEE Transactions on Visualization and Computer Graphics

- Journal of Industrial Ecology

- IPOL

11.1.2 Invited talks

- Appamat Workshop on Wet Materials (Pascal Barla).

11.1.3 Scientific expertise

- Gaël Guennebaud provides expertise within two working groups of The Shift Project: one on sufficiency in future mobile Internet networks, and one on the role and impact of technologies within low-GHG-emission and resilient agricultural scenarios.

11.2 Teaching - Supervision - Juries

11.2.1 Teaching

The members of our team are involved in teaching computer science at the University of Bordeaux and at the ENSEIRB-Matmeca engineering school. This covers general computer science as well as the following graphics-related topics:

- Master: Pierre Bénard, Gaël Guennebaud, Romain Pacanowski, Advanced Image Synthesis, 50 HETD, M2, Univ. Bordeaux, France.

- Master: Gaël Guennebaud, Pierre Bénard and Simon Lucas, 3D Worlds, 60 HETD, M1, Univ. Bordeaux and IOGS, France.

- Master: Pierre Bénard, Virtual Reality, 20 HETD, M2, Univ. Bordeaux, France.

- Master: Romain Pacanowski, 3D Programming, M2, 18 HETD, Bordeaux INP ENSEIRB Matmeca, France.

- Licence: Gaël Guennebaud and Pierre La Rocca, Introduction to climate change issues and the environmental impacts of ICT, 24 HETD, L3, Univ. Bordeaux, France.

One member is also in charge of a field of study:

- Master: Pierre Bénard, M2 “Informatique pour l'Image et le Son”, Univ. Bordeaux, France.

11.2.2 Supervision

- PhD: Corentin Cou, “Characterization of visual appearance for 3D restitution and exploration of monumental heritage”, Inria & IOGS & CNRS/INHA, G. Guennebaud, X. Granier, M. Volait, R. Pacanowski. 08/12/2024

- PhD in progress: Melvin Even, “Computer-Aided Rough 2D Animation”, Inria & Univ. Bordeaux, P. Barla, P. Bénard

- PhD in progress: Gary Fourneau, “Modélisation de l'Apparence Visuelle pour l'Écologie Comportementale”, Inria & Univ. Bordeaux, P. Barla, R. Pacanowski

- PhD in progress: Simon Lucas, “Modélisation de l'apparence et rendu des plantes”, Inria & Univ. Bordeaux, P. Barla, R. Pacanowski

- PhD in progress: Panagiotis Tsiapkolis, “Study of Artistic Control in Real-Time Expressive Rendering”, Inria & Ubisoft, P. Bénard

- PhD in progress: Pierre La Rocca, “Modélisation paramétrique des impacts environnementaux des TIC : focus sur la numérisation de l'agriculture.”, Univ. Bordeaux, Aurélie Bugeau, G. Guennebaud

11.2.3 Juries

PhD Thesis

- Fanny Dailliez (Université Grenoble Alpes): Multiscale impact of the addition of a coating layer on the color of halftone prints.

PhD Defense Date: October 19th 2023.

Jury:

- Carinna PARRAMAN, Reviewer (CFPR)

- Romain Pacanowski, Reviewer (Inria)

- Clotilde BOUST, Examiner (C2RMF)

- Evelyne MAURET, Examiner (LGP2)

- Anne BLAYO, Director (LGP2)

- Lionel CHAGAS, Supervisor (LGP2)

- Thierry FOURNEL, Co-director (Lab. Hubert Curien)

- Mathieu HEBERT, Supervisor (Lab. Hubert Curien)

- Vincent Duveiller (Université Jean Monnet, IOGS): Optical models for predicting the color of dental composite materials.

PhD Defense Date:

Jury:

- GHINEA Razvan, Reviewer (University of Granada, Facultad de Ciencia)

- PILLONNET Anne, Reviewer (Université Claude Bernard Lyon 1, ILM)

- ANDRAUD Christine, Examiner (Muséum National d’Histoire Naturelle)

- PACANOWSKI Romain, Examiner (Inria)

- VYNCK Kevin, Examiner (CNRS)

- HEBERT Mathieu, Director (Université Jean Monnet, IOGS)

- CLERC Raphaël, Co-director (Université Jean Monnet, IOGS)

- SALOMON Jean-Pierre, invited (Université de Lorraine, Faculté de Nancy)

- JOST Sophie, invited (ENTPE)

11.3 Popularization

- During the national “Semaine des maths” event at INRIA (06/03/2023), Pierre Bénard gave a 45-minute talk titled “L'art et la science des films d'animation 3D”.

12 Scientific production

12.1 Major publications

- [1] Article: A Practical Extension to Microfacet Theory for the Modeling of Varying Iridescence. ACM Transactions on Graphics 36(4), July 2017, 65.

- [2] Article: Autostereoscopic transparent display using a wedge light guide and a holographic optical element: implementation and results. Applied Optics 58(34), November 2019.

- [3] Article: A Two-Scale Microfacet Reflectance Model Combining Reflection and Diffraction. ACM Transactions on Graphics 36(4), Article 66, July 2017, 12.

- [4] Article: A View-Dependent Metric for Patch-Based LOD Generation & Selection. Proceedings of the ACM on Computer Graphics and Interactive Techniques 1(1), May 2018.

- [5] Article: Instant Transport Maps on 2D Grids. ACM Transactions on Graphics 37(6), November 2018, 13.

- [6] Article: Material category of visual objects computed from specular image structure. Nature Human Behaviour 7(7), July 2023, 1152-1169.

12.2 Publications of the year

International journals

International peer-reviewed conferences

Conferences without proceedings

Scientific book chapters

Doctoral dissertations and habilitation theses

Reports & preprints

12.3 Other

Software
