Keywords

- A5.1. Human-Computer Interaction

- A5.1.1. Engineering of interactive systems

- A5.1.2. Evaluation of interactive systems

- A5.1.5. Body-based interfaces

- A5.1.6. Tangible interfaces

- A5.1.7. Multimodal interfaces

- A5.2. Data visualization

- B2.8. Sports, performance, motor skills

- B5.7. 3D printing

- B6.3.1. Web

- B6.3.4. Social Networks

- B9.2. Art

- B9.2.1. Music, sound

- B9.2.4. Theater

- B9.5. Sciences

1 Team members, visitors, external collaborators

Research Scientists

- Wendy Mackay [Team leader, Inria, Senior Researcher, HDR]

- Janin Koch [Inria, Researcher, from Oct 2021]

- Theophanis Tsandilas [Inria, Researcher, HDR]

Faculty Members

- Michel Beaudouin-Lafon [Univ Paris-Saclay, Professor, HDR]

- Sarah Fdili Alaoui [Univ Paris-Saclay, Associate Professor]

- Cedric Fleury [Univ Paris-Saclay, Associate Professor, until Feb 2021]

- Joanna Mcgrenere [University of British Columbia, Professor, Inria Chair]

Post-Doctoral Fellows

- Janin Koch [Inria, until Sep 2021]

- Antoine Loriette [Univ Paris-Saclay, until Mar 2021]

PhD Students

- Alexandre Battut [Univ Paris-Saclay]

- Arthur Fages [Univ Paris-Saclay]

- Camille Gobert [Inria]

- Tove Grimstad Bang [Univ Paris-Saclay, from Sep 2021, ANR Living Archive]

- Per Carl Viktor Gustafsson [Univ Paris-Saclay, until Dec 2021]

- Han Han [Univ Paris-Saclay]

- Capucine Nghiem [Univ Paris-Saclay, from Oct 2021]

- Miguel Renom [Univ Paris-Saclay]

- Wissal Sahel [Institut de recherche technologique System X]

- Teo Sanchez [Inria]

- Martin Tricaud [Univ Paris-Saclay]

- Oleksandra Vereschak [Sorbonne Université]

- Elizabeth Walton [Univ Paris-Saclay]

- Yiran Zhang [Univ Paris-Saclay, until Dec 2021]

- Yi Zhang [Inria, until Sep 2021]

Technical Staff

- Robert Falcasantos [Inria, Engineer, from Oct 2021]

- Nicolas Taffin [Inria, Engineer]

Interns and Apprentices

- Catarina Allen [Univ Paris-Saclay, Intern, from Mar 2021 until Aug 2021]

- Yassine Ben Lakhdar [Univ Paris-Saclay, Intern, from Mar 2021 until Aug 2021]

- Julia Biesiada [Inria, Intern, from May 2021 until Aug 2021]

- Raphaël Bournet [Univ Paris-Saclay, Intern, from May to Jul 2021]

- Alexandre Ciorascu [Univ Paris-Saclay, Intern, from May to Jul 2021]

- Bastian Destephen [UTC, Intern, from Sep until Feb 2021]

- Robert Falcasantos [Inria, Intern, from Mar 2021 until Aug 2021]

- Damien Jeanne [Univ Paris-Saclay, Intern, from Apr until Jun 2021]

- Elouan Le Bars [Univ Paris-Saclay, Intern, from Feb until Jul 2021]

- Lea Paymal [Inria, Intern, from Apr 2021 until Jul 2021]

- Vennila Vilvanathan [Univ Paris-Saclay, Intern, from Mar 2021 until Aug 2021]

- Junhang Yu [Inria, Intern, from Mar 2021 until Aug 2021]

Administrative Assistant

- Irina Lahaye [Inria]

External Collaborator

- Antoine Loriette [Sorbonne Université, from Apr 2021]

2 Overall objectives

Interactive devices are everywhere: we wear them on our wrists and belts; we consult them from purses and pockets; we read them on the sofa and on the metro; we rely on them to control cars and appliances; and soon we will interact with them on living room walls and billboards in the city. Over the past 30 years, we have witnessed tremendous advances in both hardware and networking technology, which have revolutionized all aspects of our lives, not only business and industry, but also health, education and entertainment. Yet the ways in which we interact with these technologies remain mired in the 1980s. The graphical user interface (GUI), revolutionary at the time, has been pushed far past its limits. Originally designed to help secretaries perform administrative tasks in a work setting, the GUI is now applied to every kind of device, for every kind of setting. While this may make sense for novice users, it forces expert users to use frustratingly inefficient and idiosyncratic tools that are neither powerful nor incrementally learnable.

ExSitu explores the limits of interaction — how extreme users interact with technology in extreme situations. Rather than beginning with novice users and adding complexity, we begin with expert users who already face extreme interaction requirements. We are particularly interested in creative professionals, artists and designers who rewrite the rules as they create new works, and scientists who seek to understand complex phenomena through creative exploration of large quantities of data. Studying these advanced users today will not only help us to anticipate the routine tasks of tomorrow, but to advance our understanding of interaction itself. We seek to create effective human-computer partnerships, in which expert users control their interaction with technology. Our goal is to advance our understanding of interaction as a phenomenon, with a corresponding paradigm shift in how we design, implement and use interactive systems. We have already made significant progress through our work on instrumental interaction and co-adaptive systems, and we hope to extend these into a foundation for the design of all interactive technology.

3 Research program

We characterize Extreme Situated Interaction as follows:

Extreme users. We study extreme users who make extreme demands on current technology. We know that human beings take advantage of the laws of physics to find creative new uses for physical objects. However, this level of adaptability is severely limited when manipulating digital objects. Even so, we find that creative professionals (artists, designers and scientists) often adapt interactive technology in novel and unexpected ways and find creative solutions. By studying these users, we hope to not only address the specific problems they face, but also to identify the underlying principles that will help us to reinvent virtual tools. We seek to shift the paradigm of interactive software, to establish the laws of interaction that significantly empower users and allow them to control their digital environment.

Extreme situations. We develop extreme environments that push the limits of today's technology. We take as given that future developments will solve “practical” problems such as cost, reliability and performance and concentrate our efforts on interaction in and with such environments. This has been a successful strategy in the past: Personal computers only became prevalent after the invention of the desktop graphical user interface. Smartphones and tablets only became commercially successful after Apple cracked the problem of a usable touch-based interface for the iPhone and the iPad. Although wearable technologies, such as watches and glasses, are finally beginning to take off, we do not believe that they will create the major disruptions already caused by personal computers, smartphones and tablets. Instead, we believe that future disruptive technologies will include fully interactive paper and large interactive displays.

Our extensive experience with the Digiscope WILD and WILDER platforms places us in a unique position to understand the principles of distributed interaction that extreme environments call for. We expect to integrate, at a fundamental level, the collaborative capabilities that such environments afford. Indeed almost all of our activities in both the digital and the physical world take place within a complex web of human relationships. Current systems only support, at best, passive sharing of information, e.g., through the distribution of independent copies. Our goal is to support active collaboration, in which multiple users are actively engaged in the lifecycle of digital artifacts.

Extreme design. We explore novel approaches to the design of interactive systems, with particular emphasis on extreme users in extreme environments. Our goal is to empower creative professionals, allowing them to act as both designers and developers throughout the design process. Extreme design affects every stage, from requirements definition, to early prototyping and design exploration, to implementation, to adaptation and appropriation by end users. We hope to push the limits of participatory design to actively support creativity at all stages of the design lifecycle. Extreme design does not stop with purely digital artifacts. The advent of digital fabrication tools and FabLabs has significantly lowered the cost of making physical objects interactive. Creative professionals now create hybrid interactive objects that can be tuned to the user's needs. Integrating the design of physical objects into the software design process raises new challenges, with new methods and skills to support this form of extreme prototyping.

Our overall approach is to identify a small number of specific projects, organized around four themes: Creativity, Augmentation, Collaboration and Infrastructure. Specific projects may address multiple themes, and different members of the group work together to advance these different topics.

4 Application domains

4.1 Creative industries

We work closely with creative professionals in the arts and in design, including music composers, musicians, and sound engineers; painters and illustrators; dancers and choreographers; theater groups; game designers; graphic and industrial designers; and architects.

4.2 Scientific research

We work with creative professionals in the sciences and engineering, including neuroscientists and doctors; programmers and statisticians; chemists and astrophysicists; and researchers in fluid mechanics.

5 Highlights of the year

- Wendy Mackay was named the 2021-2022 Annual Chair of Computer Science for the Collège de France

- Janin Koch joined the team as a permanent member

- New contracts: Theophanis Tsandilas and Michel Beaudouin-Lafon: ANR PRC GLACIS; Theophanis Tsandilas: CNRS - University of Toronto funding for PhD mobility

- Equipex+ project CONTINUUM funded by ANR under PIA4 program; 22 partners, coordinated by CNRS, 13.6M€ funding; Scientific director: Michel Beaudouin-Lafon

- Janin Koch and Wendy Mackay successfully ran the first annual creARTathon, a creative hackathon for 35 students in HCI, AI, Art and Design, with a final public exhibit at an art gallery in Paris.

5.1 Awards

- Camille Gobert and Michel Beaudouin-Lafon: Best Paper Award and Best Demonstration Award at the Conférence Francophone sur l'Interaction Homme-Machine (IHM '20'21) for “Représentations Intermédiaires Interactives pour la Manipulation de Code LaTeX” 29

- Wendy Mackay and Michel Beaudouin-Lafon: Honorable Mention Award at ACM CHI 2021 for “SonicHoop: Using Interactive Sonification to Support Aerial Hoop Practices.” 23

- Sarah Fdili Alaoui received the Best Pictorial Award at the ACM Conference on Creativity and Cognition (C&C) 2021.

6 New software and platforms

6.1 New software

6.1.1 Digiscape

- Name: Digiscape

- Keywords: 2D, 3D, Node.js, Unity 3D, Video stream

- Functional Description:

Through the Digiscape application, users can connect to a remote workspace and share files, video and audio streams with other users. Applications running on complex visualization platforms can be easily launched and synchronized.

- URL:

- Contact: Olivier Gladin

- Partners: Maison de la simulation, UVSQ, CEA, ENS Cachan, LIMSI, LRI - Laboratoire de Recherche en Informatique, CentraleSupélec, Telecom Paris

6.1.2 Touchstone2

- Keyword: Experimental design

- Functional Description:

Touchstone2 is a graphical user interface to create and compare experimental designs. It is based on a visual language: Each experiment consists of nested bricks that represent the overall design, blocking levels, independent variables, and their levels. Parameters such as variable names, counterbalancing strategy and trial duration are specified in the bricks and used to compute the minimum number of participants for a balanced design, account for learning effects, and estimate session length. An experiment summary appears below each brick assembly, documenting the design. Manipulating bricks immediately generates a corresponding trial table that shows the distribution of experiment conditions across participants. Trial tables are faceted by participant. Using brushing and fish-eye views, users can easily compare among participants and among designs on one screen, and examine their trade-offs.
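The link between a counterbalancing strategy, a trial table and the participant counts that keep a design balanced can be illustrated with a minimal Python sketch. It assumes a simple cyclic Latin square over the crossed conditions, which is only one possible strategy and is not claimed to be how Touchstone2 computes its tables; the function names `latin_square`, `trial_table` and `balanced_participant_counts` are hypothetical.

```python
from itertools import product


def latin_square(conditions):
    """Cyclic Latin square: row i is the condition list rotated by i positions."""
    n = len(conditions)
    return [[conditions[(i + j) % n] for j in range(n)] for i in range(n)]


def trial_table(factors, participants, repetitions=1):
    """Return one row per trial: (participant, block, repetition, condition).

    `factors` maps each independent variable to its list of levels.
    Participants cycle through the rows of the Latin square, so every
    condition appears in every block position equally often when the
    number of participants is a multiple of the number of conditions.
    """
    names = list(factors)
    conditions = list(product(*(factors[name] for name in names)))
    square = latin_square(conditions)
    rows = []
    for p in range(participants):
        order = square[p % len(square)]
        for block, condition in enumerate(order, start=1):
            for rep in range(1, repetitions + 1):
                rows.append((p + 1, block, rep, dict(zip(names, condition))))
    return rows


def balanced_participant_counts(factors, upto):
    """Participant counts (up to `upto`) that keep this design balanced."""
    n_conditions = 1
    for levels in factors.values():
        n_conditions *= len(levels)
    return list(range(n_conditions, upto + 1, n_conditions))


if __name__ == "__main__":
    design = {"technique": ["mouse", "touch"], "size": ["small", "large"]}
    for row in trial_table(design, participants=4)[:4]:
        print(row)
    print(balanced_participant_counts(design, 12))
```

In this sketch, a 2 x 2 design yields four condition orders, so only multiples of four participants give every order the same number of participants.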

Touchstone2 plots a power chart for each experiment in the workspace. Each power curve is a function of the number of participants, and thus increases monotonically. Dots on the curves denote numbers of participants for a balanced design. The pink area corresponds to a power less than the 0.8 criterion: the first dot above it indicates the minimum number of participants. To refine this estimate, users can choose among Cohen’s three conventional effect sizes, directly enter a numerical effect size, or use a calculator to enter mean values for each treatment of the dependent variable (often from a pilot study).
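As a rough illustration of such a power curve, the following Python sketch uses a normal approximation for a two-sided test on standardized within-participant differences. This approximation, the 0.05 significance level and the helper names `approx_power` and `min_participants` are assumptions made for the example; they are not taken from Touchstone2.

```python
from statistics import NormalDist


def approx_power(effect_size, n, alpha=0.05):
    """Approximate power of a two-sided z-test with n participants.

    `effect_size` is a standardized effect (Cohen's d) on the
    within-participant differences; the normal approximation slightly
    overestimates the power of a t-test for small n.
    """
    z = NormalDist()
    z_crit = z.inv_cdf(1 - alpha / 2)
    shift = effect_size * n ** 0.5
    return z.cdf(shift - z_crit) + z.cdf(-shift - z_crit)


def min_participants(effect_size, step=1, target=0.8, limit=10_000):
    """Smallest multiple of `step` whose approximate power reaches `target`.

    `step` stands for the number of participants needed for one balanced
    replication of the design (the dots on the power curve).
    """
    for n in range(step, limit + 1, step):
        if approx_power(effect_size, n) >= target:
            return n
    return None


if __name__ == "__main__":
    # Cohen's conventional small, medium and large effect sizes.
    for d in (0.2, 0.5, 0.8):
        print(d, min_participants(d, step=4))
```

Because the power is computed only at multiples of `step`, the returned value corresponds to the first balanced participant count above the 0.8 criterion.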

Touchstone2 can export a design in a variety of formats, including JSON and XML for the trial table, and TSL, a language we have created to describe experimental designs. A command-line tool is provided to generate a trial table from a TSL description.

Touchstone2 runs in any modern Web browser and is also available as a standalone tool. It is used at ExSitu for the design of our experiments, and by other universities and research centers worldwide. It is available under an Open Source licence at https://touchstone2.org.

- URL:

- Contact: Wendy Mackay

- Partner: University of Zurich

6.1.3 UnityCluster

- Keywords: 3D, Virtual reality, 3D interaction

- Functional Description:

UnityCluster is middleware to distribute any Unity 3D (https://unity3d.com/) application on a cluster of computers that run in interactive rooms, such as our WILD and WILDER rooms, or immersive CAVES (Computer-Augmented Virtual Environments). Users can interact with the application using various interaction resources.

UnityCluster provides an easy solution for running existing Unity 3D applications on any display that requires a rendering cluster with several computers. UnityCluster is based on a master-slave architecture: the master computer runs the main application and the physical simulation and manages the input, while the slave computers receive updates from the master and each render a small part of the 3D scene. UnityCluster manages data distribution and synchronization among the computers to obtain a consistent image on the entire wall-sized display surface.

UnityCluster can also deform the displayed images according to the user's position in order to match the viewing frustum defined by the user's head and the four corners of the screens. This respects the motion parallax of the 3D scene, giving users a better sense of depth.
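The head-coupled deformation described above corresponds to an off-axis (asymmetric) viewing frustum. The Python sketch below follows the commonly used generalized perspective projection formulation and is written in Python for brevity rather than in the C# used by UnityCluster. It is not UnityCluster's code: the function name `off_axis_frustum` is hypothetical, and it assumes a flat rectangular screen described by three of its corners.

```python
import math


def sub(a, b):
    return tuple(x - y for x, y in zip(a, b))


def dot(a, b):
    return sum(x * y for x, y in zip(a, b))


def cross(a, b):
    return (a[1] * b[2] - a[2] * b[1],
            a[2] * b[0] - a[0] * b[2],
            a[0] * b[1] - a[1] * b[0])


def normalize(a):
    length = math.sqrt(dot(a, a))
    return tuple(x / length for x in a)


def off_axis_frustum(lower_left, lower_right, upper_left, eye, near):
    """Return (left, right, bottom, top) frustum extents at the near plane.

    The screen is a flat rectangle given by three corners; `eye` is the
    tracked head position in the same coordinate system.
    """
    right = normalize(sub(lower_right, lower_left))
    up = normalize(sub(upper_left, lower_left))
    normal = normalize(cross(right, up))  # points from the screen toward the viewer

    to_ll = sub(lower_left, eye)
    to_lr = sub(lower_right, eye)
    to_ul = sub(upper_left, eye)

    distance = -dot(to_ll, normal)  # eye-to-screen distance along the normal
    if distance <= 0:
        raise ValueError("eye must be in front of the screen")
    scale = near / distance
    return (dot(right, to_ll) * scale,
            dot(right, to_lr) * scale,
            dot(up, to_ll) * scale,
            dot(up, to_ul) * scale)


if __name__ == "__main__":
    screen = ((-2.0, -1.0, 0.0), (2.0, -1.0, 0.0), (-2.0, 1.0, 0.0))
    print(off_axis_frustum(*screen, eye=(0.0, 0.0, 2.0), near=1.0))
    print(off_axis_frustum(*screen, eye=(1.0, 0.0, 2.0), near=1.0))
```

When the eye is centered in front of the screen the extents are symmetric; moving the head sideways skews them, which is what produces motion parallax. On a tiled wall, each rendering node would apply the same computation to the corners of its own tile.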

UnityCluster is composed of a set of C# scripts that manage the network connection, data distribution, and the deformation of the viewing frustum. In order to distribute an existing application on the rendering cluster, all scripts must be embedded into a Unity package that is included in an existing Unity project.

- Contact: Cédric Fleury

- Partner: Inria

6.1.4 VideoClipper

- Keyword: Video recording

- Functional Description:

VideoClipper is an iOS app for the Apple iPad, designed to guide the capture of video during a variety of prototyping activities, including video brainstorming, interviews, video prototyping and participatory design workshops. It relies heavily on Apple’s AVFoundation, a framework that provides essential services for working with time-based audiovisual media on iOS (https://developer.apple.com/av-foundation/). Key uses include: transforming still images (title cards) into video tracks, composing video and audio tracks in memory to create a preview of the resulting video project and saving video files into the default Photo Album outside the application.

VideoClipper consists of four main screens: project list, project, capture and import. The project list screen shows a list with the most recent projects at the top and allows the user to quickly add, remove or clone (copy and paste) projects. The project screen includes a storyboard composed of storylines that can be added, cloned or deleted. Each storyline is composed of a single title card, followed by one or more video clips. Users can reorder storylines within the storyboard, and the elements within each storyline through direct manipulation. Users can preview the complete storyboard, including all title cards and videos, by pressing the play button, or export it to the iPad’s Photo Album by pressing the action button.

VideoClipper offers multiple tools for editing title cards and storylines. Tapping on the title card lets the user edit the foreground text, including font, size and color, change background color, add or edit text labels, including size, position, color, and add or edit images, both new pictures and existing ones. Users can also delete text labels and images with the trash button. Video clips are presented via a standard video player, with standard interaction. Users can tap on any clip in a storyline to: trim the clip with a non-destructive trimming tool, delete it with a trash button, open a capture screen by clicking on the camera icon, label the clip by clicking a colored label button, and display or hide the selected clip by toggling the eye icon.

VideoClipper is currently in beta test, and is used by students in two HCI classes at the Université Paris-Saclay, researchers in ExSitu as well as external researchers who use it for both teaching and research work. A beta test version is available on demand through Apple's TestFlight online service.

- Contact: Wendy Mackay

6.1.5 WildOS

- Keywords: Human Computer Interaction, Wall displays

- Functional Description:

WildOS is middleware to support applications running in an interactive room featuring various interaction resources, such as our WILD and WILDER rooms: a tiled wall display, a motion tracking system, tablets and smartphones, etc. The conceptual model of WildOS is a platform, such as the WILD or WILDER room, described as a set of devices and on which one or more applications can be run.

WildOS consists of a server running on a machine that has network access to all the machines involved in the platform, and a set of clients running on the various interaction resources, such as a display cluster or a tablet. Once WildOS is running, applications can be started and stopped and devices can be added to or removed from the platform.

WildOS relies on Web technologies, most notably JavaScript and Node.js, as well as node-webkit and HTML5. This makes it inherently portable (it is currently tested on Mac OS X and Linux). While applications can be developed only with these Web technologies, it is also possible to bridge to existing applications developed in other environments if they provide sufficient access for remote control. Sample applications include a web browser, an image viewer, a window manager, and the BrainTwister application developed in collaboration with neuroanatomists at NeuroSpin.

WildOS is used for several research projects at ExSitu and by other partners of the Digiscope project. It was also deployed on several of Google's interactive rooms in Mountain View, Dublin and Paris. It is available under an Open Source licence at https://bitbucket.org/mblinsitu/wildos.

- URL:

- Contact: Michel Beaudouin-Lafon

6.1.6 StructGraphics

- Keywords: Data visualization, Human Computer Interaction

- Functional Description:

StructGraphics is a user interface for creating data-agnostic and fully reusable designs of data visualizations. It enables visualization designers to construct visualization designs by drawing graphics on a canvas and then structuring their visual properties without relying on a concrete dataset or data schema. Overall, StructGraphics follows the inverse workflow of traditional visualization-design systems. Rather than transforming data dependencies into visualization constraints, it allows users to interactively define the property and layout constraints of their visualization designs and then translate these graphical constraints into alternative data structures. Since visualization designs are data-agnostic, they can be easily reused and combined with different datasets.

- URL:

- Publication:

- Contact: Theophanis Tsandilas

6.2 New platforms

6.2.1 WILD

Participants: Michel Beaudouin-Lafon [contact], Cédric Fleury, Olivier Gladin.

WILD is our first experimental ultra-high-resolution interactive environment, created in 2009. In 2019-2020 it received a major upgrade: the 16-computer cluster was replaced by new machines with top-of-the-line graphics cards, and the 32-screen display was replaced by thirty-two 32" 8K displays, resulting in a resolution of 1 gigapixel (61 440 x 17 280) for an overall size of 5m80 x 1m70 (280ppi). An infrared frame adds multitouch capability to the entire display area. The platform also features a camera-based motion tracking system that lets users interact with the wall, as well as the surrounding space, with various mobile devices.

6.2.2 WILDER

Participants: Michel Beaudouin-Lafon [contact], Cédric Fleury, Olivier Gladin.

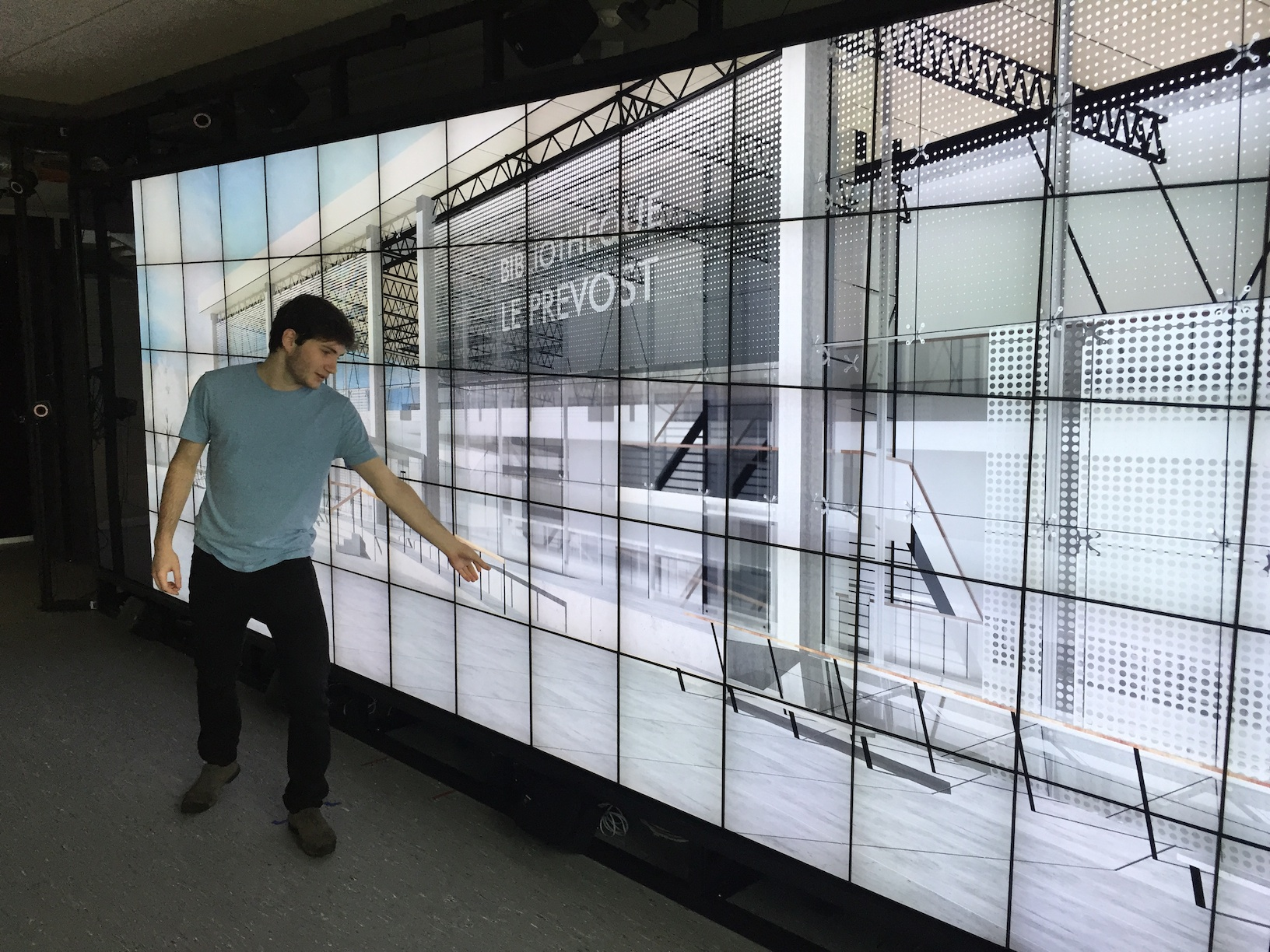

WILDER (Figure 1) is our second experimental ultra-high-resolution interactive environment, which follows the WILD platform developed in 2009. It features a wall-sized display with seventy-five 20" LCD screens, i.e. a 5m50 x 1m80 (18' x 6') wall displaying 14 400 x 4 800 = 69 million pixels, powered by a 10-computer cluster and two front-end computers. The platform also features a camera-based motion tracking system that lets users interact with the wall, as well as the surrounding space, with various mobile devices. The display uses a multitouch frame (one of the largest of its kind in the world) to make the entire wall touch sensitive.

WILDER was inaugurated in June, 2015. It is one of the ten platforms of the Digiscope Equipment of Excellence and, in combination with WILD and the other Digiscope rooms, provides a unique experimental environment for collaborative interaction.

In addition to using WILD and WILDER for our research, we have also developed software architectures and toolkits, such as WildOS and UnityCluster, that enable developers to run applications on these multi-device, cluster-based systems.

7 New results

7.1 Fundamentals of Interaction

Participants: Michel Beaudouin-Lafon [contact], Wendy Mackay, Theophanis Tsandilas, Alexander Eiselmeyer, Camille Gobert, Han Han, Miguel Renom, Martin Tricaud, Yiran Zhang.

In order to better understand fundamental aspects of interaction, ExSitu conducts in-depth observational studies and controlled experiments which contribute to theories and frameworks that unify our findings and help us generate new, advanced interaction techniques. Our theoretical work also leads us to deepen or re-analyze existing theories and methodologies in order to gain new insights.

We introduced Generative Theories of Interaction 11 as a way to bridge the gap between theory and the generation of novel technological solutions. A Generative Theory of Interaction draws insights from empirical theories about human behavior in order to define specific concepts and actionable principles, which in turn serve as guidelines for analyzing, critiquing and constructing new technological artifacts. Based on our previous work and in collaboration with a colleague from Univ. Aarhus (Denmark), we illustrate the concept with three detailed examples: Instrumental Interaction, Human-Computer Partnerships, and Communities and Common Objects. Each example describes the underlying scientific theory and how we derived and applied HCI-relevant concepts and principles to the design of innovative interactive technologies. Summary tables offer sample questions that help analyze existing technology with respect to a specific theory, critique both positive and negative aspects, and inspire new ideas for constructing novel interactive systems.

At the methodological level, we developed Argus, an interactive tool that addresses a key challenge faced by HCI researchers when designing a controlled experiment, i.e., choosing the appropriate number of participants, or sample size 15. We use a priori power analysis, which examines the relationships among multiple parameters, including the complexity associated with human participants, e.g., order and fatigue effects, to calculate the statistical power of a given experiment design. Argus supports interactive exploration of statistical power, letting researchers specify experiment design scenarios with varying confounds and effect sizes. Argus then simulates data and visualizes statistical power across these scenarios, which lets researchers interactively weigh various trade-offs and make informed decisions about sample size.
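The general idea of estimating power by simulation can be sketched in a few lines of Python. This is only an illustration under strong assumptions and is not Argus's implementation: it considers two conditions compared within participants, unit-variance noise on the differences, a fatigue effect that penalizes whichever condition is presented second, and a normal critical value (1.96) applied to the t statistic. The function name `simulate_power` and all parameter values are hypothetical.

```python
import random
from statistics import mean, stdev


def simulate_power(n, effect_size, fatigue=0.0, simulations=2000,
                   z_crit=1.96, seed=0):
    """Estimate power as the fraction of simulated experiments that reject H0.

    Each simulated participant contributes one difference (B minus A).
    Presentation order is counterbalanced: even-numbered participants see
    B second, odd-numbered participants see A second, so the fatigue
    effect shifts their differences in opposite directions.
    """
    rng = random.Random(seed)
    significant = 0
    for _ in range(simulations):
        diffs = []
        for p in range(n):
            order_shift = fatigue if p % 2 == 0 else -fatigue
            diffs.append(effect_size + order_shift + rng.gauss(0.0, 1.0))
        t = mean(diffs) / (stdev(diffs) / n ** 0.5)
        if abs(t) > z_crit:  # normal approximation of the critical value
            significant += 1
    return significant / simulations


if __name__ == "__main__":
    for n in (8, 16, 24, 32):
        print(n, simulate_power(n, 0.5), simulate_power(n, 0.5, fatigue=1.0))
```

In this toy scenario, counterbalancing cancels the fatigue effect on average but inflates the variance of the differences, so the estimated power drops for the same sample size.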

We have continued our work exploring the notion of substrate in two areas: document editing and large multi-player games. Editing documents with description languages such as LaTeX is difficult because of the separation between the source code and the resulting formatted document. This was confirmed by our interviews of LaTeX users and led us to the concept of interactive intermediate representations (IIR). IIR are a new kind of user interface for document description languages that let users visualize and manipulate certain pieces of code through suitable representations. They provide an intermediate substrate between code and the final document. We created i-LaTeX 29, a prototype of a LaTeX editor equipped with IIR to illustrate the power of the approach. i-LaTeX facilitates editing of mathematical formulae, tables, and images.

Viktor Gustafsson successfully defended his doctoral dissertation 36 entitled “Designing Persistent Player Narratives in Digital Game Worlds” in the area of online role-playing games. His work includes theoretical contributions with respect to “narrative substrates” based on the theory of “Instrumental Interaction” 34, 35, 11. In addition, he made multiple empirical contributions in the form of results from both qualitative and quantitative studies of game players, and a major technical contribution in the form of WeRide, a fully functional MMORPG that was used as a testbed for exploring ideas and concepts. The results of this work will be used in an Inria Startup Studio project, beginning in January 2022.

Finally, we continued our long-standing stream of work on input techniques for pointing by improving pointing with eye-gaze. We introduced BayesGaze 22, a Bayesian approach for determining the selected target given an eye-gaze trajectory. This approach views each sampling point in an eye-gaze trajectory as a signal for selecting a target. It then uses Bayes’ theorem to calculate the posterior probability of selecting a target given a sampling point, and accumulates the posterior probabilities weighted by sampling interval to determine the selected target. The selection results are fed back to update the prior distribution of targets, which is modeled by a categorical distribution. We showed that BayesGaze improves target selection accuracy and speed over a dwell-based selection method and the Center of Gravity Mapping (CM) method. This approach shows that both accumulating the posterior and incorporating the prior are effective in improving the performance of eye-gaze based target selection, and can probably be applied to other pointing techniques.
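The accumulation scheme described above can be sketched as follows in Python. This is a simplified illustration and not the published BayesGaze implementation: the isotropic Gaussian likelihood, the `sigma` and `threshold` values, the pseudo-count prior update and the class name `BayesGazeSketch` are all assumptions made for the example.

```python
import math


class BayesGazeSketch:
    """Accumulate interval-weighted posteriors over targets from gaze samples."""

    def __init__(self, targets, sigma=40.0, threshold=0.5):
        self.targets = targets            # target name -> (x, y) center
        self.sigma = sigma                # assumed gaze noise, in pixels
        self.threshold = threshold        # assumed accumulated-posterior threshold
        # Categorical prior stored as pseudo-counts, initially uniform.
        self.counts = {name: 1.0 for name in targets}
        self.reset()

    def reset(self):
        self.accumulated = {name: 0.0 for name in self.targets}

    def prior(self):
        total = sum(self.counts.values())
        return {name: c / total for name, c in self.counts.items()}

    def likelihood(self, point, center):
        # Assumed isotropic Gaussian around the target center (unnormalized).
        dx, dy = point[0] - center[0], point[1] - center[1]
        return math.exp(-(dx * dx + dy * dy) / (2 * self.sigma ** 2))

    def observe(self, point, dt):
        """Process one gaze sample; return the selected target name or None."""
        prior = self.prior()
        joint = {name: self.likelihood(point, center) * prior[name]
                 for name, center in self.targets.items()}
        evidence = sum(joint.values())
        if evidence == 0.0:
            return None  # sample too far from every target to be informative
        for name, value in joint.items():
            # Bayes' theorem, weighted by the sampling interval dt.
            self.accumulated[name] += dt * value / evidence
        best = max(self.accumulated, key=self.accumulated.get)
        if self.accumulated[best] >= self.threshold:
            self.counts[best] += 1.0  # feed the selection back into the prior
            self.reset()
            return best
        return None


if __name__ == "__main__":
    gaze = BayesGazeSketch({"A": (0.0, 0.0), "B": (200.0, 0.0)})
    for _ in range(10):
        selected = gaze.observe((5.0, 3.0), dt=0.1)
        if selected:
            print("selected", selected, "prior now", gaze.prior())
```

Unlike a pure dwell timer, the accumulated score in this sketch grows faster for targets that are both close to the gaze samples and frequently selected in the past.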

7.2 Human-Computer Partnerships

Participants: Wendy Mackay [contact], Baptiste Caramiaux, Janin Koch, Téo Sanchez, Nicolas Taffin, Theophanis Tsandilas.

ExSitu is interested in designing effective human-computer partnerships where expert users control their interaction with intelligent systems. Rather than treating the human users as the “input” to a computer algorithm, we explore human-centered machine learning, where the goal is to use machine learning and other techniques to increase human capabilities. Much of human-computer interaction research focuses on measuring and improving productivity: our specific goal is to create what we call “co-adaptive systems” that are discoverable, appropriable and expressive for the user.

In the area of machine teaching, we explored how to provide users with greater agency in how their data is used to train machine learning models 24. We were interested in how novice users understand machine learning algorithms, both how they interpret the model's behavior and what strategies they use to “make it work”. Based on Marcelle 19, we developed Marcelle-Sketch, a web-based sketch recognition algorithm built on a Deep Neural Network (DNN) that end-users can train incrementally. We then ran an experiment with 12 novices to identify their strategies and (mis)understandings about the model in a realistic algorithm-teaching task. We found that participants adopt diverse teaching strategies when the type of variability affects model performance, and highlight the importance of sketch sequencing, particularly in the early stage of the teaching task. We show that users' understanding of the models is facilitated when they perform simple operations on their drawings, and identify certain inherent properties of DNNs that can cause confusion. We propose a set of implications for the design of interactive machine learning systems for novice users and discuss the socio-cultural aspects of this research.

We also explored how to create software tools for generating digital sound, where users must manage complex, high-dimensional, parametric interfaces that make it difficult to explore diverse sound designs 14. We developed artificial agents that use deep reinforcement learning to explore parameter spaces in partnership with users for sound design. We conducted a series of studies that probe the creative benefits of these artificial agents and how they can be adapted to facilitate design exploration. We then developed Co-Explorer, influenced by our observations of the users’ exploration strategies with parametric interfaces and by our tests of different agent exploration behaviors. We evaluated Co-Explorer with professional sound designers and found that it enables a novel creative workflow that establishes an effective human–machine partnership. We describe the sound designers' diverse exploration strategies and use the results to frame design guidelines for enabling such co-exploration workflows in creative digital applications.

We further started exploring the concept of agency, which is one of the topics of interest for the development of computational co-creative systems. While agency can be broadly defined as a sense of control over the creative outcomes of a co-creative process, there is no shared understanding of how to design systems for and with agency. We reflect on the works we have developed over the last five years as a starting point for discussing the definition of agency 21. Further research in this direction will allow us to investigate how agency manifests itself in human-computer creative collaboration, also known as co-creativity. Creating a common ground in this topic allows for a more efficient process of analyzing and designing human-computer collaborative systems.

Finally, we participated in a panel entitled “Artificial Intelligence for Humankind: A Panel on How to Create Truly Interactive and Human-Centered AI for the Benefit of Individuals and Society” at the Interact 2021 conference 32. We discussed the role of Human-Computer Interaction (HCI) in the conception, design, and implementation of human-centered artificial intelligence (AI). We emphasized the importance of ethics in the design of machine learning (ML) systems and the need for AI to create value for humans as individuals as well as for society. We discussed the opportunities of using HCI and User Experience Design methods to create advanced AI/ML-based systems that will be widely adopted, reliable, safe, trustworthy, and responsible. The resulting systems will integrate AI and ML algorithms while providing user interfaces and control panels that ensure meaningful human control.

7.3 Creativity

Participants: Sarah Fdili Alaoui [correspondant], Wendy Mackay [correspondant], Robert Falcasantos, Viktor Gustafsson, Janin Koch, Nicolas Taffin, Theophanis Tsandilas.

ExSitu is interested in understanding the work practices of creative professionals who push the limits of interactive technology. We follow a multi-disciplinary participatory design approach, working with both expert and non-expert users in diverse creative contexts.

In a pictorial, we explore with other researchers in HCI who have an artistic practice how these dual practices relate to each other, and how we might reconcile our mindful creative experiences with the formality of research 25. What benefits does such duality have, and can we illustrate the value of arts practice in HCI? The pictorial curates diverse artistic practices from the group of researchers, and offers reflection on the benefits and tensions in creativity and computing.

We explored strategies for designing tangible creativity support tools for professional writers, with the goal of transforming procrastination into a productive activity 17. After interviewing eight professional creative writers, we identified two key practices: Over Criticizing, where perfectionism and negative self-appraisal demotivate them and reduce their output; and Creative Voicing, where speaking their text aloud promotes reflection and inspires new possibilities. We then ran a structured observation study to compare writers’ perception of their own, pre-recorded text versus computer-generated voices and found that the latter distances them from their text and offers new perspectives. We then developed SonAmi, an interactive coaster that voices selected dialog whenever the author lifts their mug. Two creative writers said SonAmi made them feel they were listening to “someone else’s text” or “a podcast”, which helped them identify and improve writing issues. We show how tangible creativity support tools can build upon authors’ existing strategies, i.e., voicing their own words, and take advantage of naturally occurring events, e.g., taking a sip of coffee, to support productive procrastination without interfering with creative flow.

We also explored human movement 27, which is rich and complex and has been studied from two opposing design approaches: technology-driven design, which seeks to continuously improve movement and gesture creation and recognition for both the user and the system; and experiential design, which explores nuances of aesthetic human movement, cultivates body awareness, and develops methods for movement in embodied design. We compare and contrast these approaches with respect to their intended users and contexts, focus of the movement, and respective stages of the technology design process. We conclude with a discussion of opportunities for future research that takes both perspectives into account.

We also describe the research led by the performance Radical Choreographic Object (RCO), a participatory performance where the audience members respond to instructions and interactions sent to their mobile phones as well as invitations to dance from the performers 16. The performance provides an experimental ground where we question how humans participate in the dance and how they relate to their mobile technologies. Through observations and conversations with participants during several showings, we show that the participants progressed from obeying and feeling hostage to the interactions to re-interpreting and re-appropriating them. We then discuss these findings in the light of the norms and constraints that are imposed by social behaviours and by the abundance of mobile technologies. We also reflect on the alternatives that emerge when people break free from those norms, embrace eccentricity and dare to dance.

We also studied an unusual form of creative movement: Aerial hoops are circular, hanging devices for both acrobatic exercise and artistic performance that let us explore the role of interactive sonification in physical activity 23. We developed SonicHoop, an augmented aerial hoop that generates auditory feedback via capacitive touch sensing, thus becoming a digital musical instrument that performers can play with their bodies. We compare three sonification strategies through a structured observation study with two professional aerial hoop performers. Results show that SonicHoop fundamentally changes their perception and choreographic processes: instead of translating music into movement, they search for bodily expressions that compose music. Different sound designs affect their movement differently, and auditory feedback, regardless of type of sound, improves movement quality. We discuss opportunities for using SonicHoop as an aerial hoop training tool, as a digital musical instrument, and as a creative object; as well as using interactive sonification in other acrobatic practices to explore full-body vertical interaction. This paper received an Honorable Mention award from ACM/CHI'2021.

We studied how players in Massively Multiplayer Online Role-Playing Games (MMORPGs) generate long-standing histories with their characters, but cannot express or see traces of their adventures in the game worlds 12. Our goal is to design game systems where players shape and contribute their own narratives as game content. In a first study, we designed and playtested three Virtual Tabletop Role-Playing Game (VTTRPG) prototypes and found that structured, graphical representations of players' traces support co-design and narrative analysis. We also identified four categories of traces: environment, build, memory and object. We introduce Play Traces, a novel analysis method for representing and co-designing with players and their narratives. In a structured observation that included Play Traces, we studied 17 players over 16 four-hour sessions in the third VTTRPG prototype. We found that players successfully (and enjoyably) co-designed novel narratives. We identified three themes for how traces can affect and support players in shaping new interactive narratives. We present four design implications describing how player-created narratives in MMORPGs should first Reveal & Pull Attention from other players, Invite & Push further exploration, Guide & Assist toward endings, and optionally Show & Hide traces. Finally, we discuss how treating players as co-designers offers a promising approach for developing the next generation of MMORPGs.

7.4 Collaboration

Participants: Sarah Fdili Alaoui [co-correspondant], Cédric Fleury [co-correspondant], Michel Beaudouin-Lafon [co-correspondant], Wendy Mackay [co-correspondant], Joanna McGrenere, Arthur Fages, Janin Koch, Yi Zhang.

ExSitu explores new ways of supporting collaborative interaction and remote communication. In particular, we studied co-located and remote collaboration on large wall-sized displays, video-conferencing systems, and computer tools to support collaborative activities for design and creativity.

We managed the successful submission of CONTINUUM, a nation-wide equipment project to advance interdisciplinary research on visualization, immersion, interaction and collaboration. A specific aspect of the project is that all platforms will feature facilities for remote collaboration with other platforms, enabling our WILD and WILDER platforms to be used for advanced research on remote collaboration across large interactive spaces.

We also explored collaboration in a variety of creative contexts, in particular choreography and dance. We were interested in understanding how dancers collaborate as they rehearse a new dance piece, with a particular emphasis on how they use physical and digital artifacts to support this process 13. We conducted a 12-month longitudinal observational study with a dance company that re-staged a dance piece, taken from the contemporary repertoire and unknown to the dancers. The study focused on the role that artifacts play in shaping the learning of a dance piece. We showed how dancers produced an ecology of artifacts with the aim of analyzing the choreographic ideas behind the dance and sharing them with other learners. We showed that sharing these artifacts was challenging because they are idiosyncratic and embody their creator's perspective and vocabulary. We then illustrated how dancers overcome this challenge by compiling artifacts and distributing the learning task among the group in order to create a common knowledge of the piece, which improves the learning process. This study opens up design opportunities for technologies supporting long-term dance learning processes.

We also studied how choreographers create dance 18. Dance making is often a highly idiosyncratic, collaborative endeavor between a choreographer and a group of dancers which creates a rich context for designers of creativity-support tools (CSTs). However, long-term, ecologically valid studies of collaboration in dance making are rare, especially when mediated by digital tools. We conducted a 5-month field study in the frame of a dance course, where a choreographer and six students used a CST originally designed for choreographic writing. Our findings contrast with our initial assumptions about the role of the tool to mediate a diversity of notating styles and hierarchical roles, highlighting the value of and the challenges behind this in-the-wild study in uncovering needs and roles as they emerge over time.

Finally, we studied how couples mediate intimacy in their relationships through DearBoard, a Co-Customizable Keyboard for Everyday Messaging 20. Co-customizations are collaborative customizations in messaging apps that all conversation members can view and change, e.g. the color of chat bubbles on Facebook Messenger. Co-customizations grant new opportunities for expressing intimacy; however, most apps offer private customizations only. To investigate how people in close relationships integrate co-customizations into their established communication app ecosystems, we built DearBoard: an Android keyboard that allows two people to co-customize its color theme and a toolbar of expression shortcuts (emojis and GIFs). In a 5-week field study with 18 pairs of couples, friends, and relatives, participants expressed their shared interests, history, and knowledge of each other through co-customizations that served as meaningful decorations, interface optimizations, conversation themes, and non-verbal channels for playful, affectionate interactions. The co-ownership of the co-customizations invited participants to negotiate who customizes what and for whom they customize. This work shows how co-customizations mediate intimacy through place-making efforts and suggests new design opportunities.

8 Partnerships and cooperations

8.1 International initiatives

8.1.1 Participation in other International Programs

GRAVIDES

-

Title:

Grammars for Visualization-Design Sketching

-

Funding:

CNRS - University of Toronto Collaboration Program

-

Duration:

2021 - 2023

-

Coordinator:

Theophanis Tsandilas and Fanny Chevalier

-

Partners:

- CNRS – LISN

- University of Toronto – Dept. of Computer Science

-

Inria contact:

Theophanis Tsandilas

-

Summary:

The goal of the project is to create novel visualization authoring tools that enable common users with no design expertise to sketch and visually express their personal data.

8.2 European initiatives

8.2.1 FP7 & H2020 Projects

Humane AI

-

Title:

Toward AI Systems That Augment and Empower Humans by Understanding Us, our Society and the World Around Us

-

Duration:

Sept 2020 - August 2024

-

Coordinator:

DFKI

-

Partners:

- Aalto Korkeakoulusaatio SR (Finland)

- Agencia Estatal Consejo Superior de Investigaciones Cientificas (Spain)

- Albert-ludwigs-universitaet Freiburg (Germany)

- Athina-erevnitiko Kentro Kainotomias Stis Technologies Tis Pliroforias, Ton Epikoinonion Kai Tis Gnosis (Greece)

- Consiglio Nazionale Delle Ricerche (Italy)

- Deutsches Forschungszentrum Fur Kunstliche Intelligenz GMBH (Germany)

- Eidgenoessische Technische Hochschule Zuerich (Switzerland)

- Fondazione Bruno Kessler (Italy)

- German Entrepreneurship GMBH (Germany)

- INESC TEC - Instituto De Engenharia de Sistemas E Computadores, Tecnologia E Ciencia (Portugal)

- ING GROEP NV (Netherlands)

- Institut Jozef Stefan (Slovenia)

- Institut Polytechnique De Grenoble (France)

- Knowledge 4 All Foundation LBG (UK)

- Kobenhavns Universitet (Denmark)

- Kozep-europai Egyetem (Hungary)

- Ludwig-maximilians-universitaet Muenchen (Germany)

- Max-planck-gesellschaft Zur Forderung Der Wissenschaften EV (Germany)

- Technische Universitaet Wien (Austria)

- Technische Universitat Berlin (Germany)

- Technische Universiteit Delft (Netherlands)

- Thales SIX GTS France SAS (France)

- The University Of Sussex (UK)

- Universidad Pompeu Fabra (Spain)

- Universita di Pisa (Italy)

- Universiteit Leiden (Netherlands)

- University College Cork - National University of Ireland, Cork (Ireland)

- Uniwersytet Warszawski (Poland)

- Volkswagen AG (Germany)

-

Inria contact:

Wendy Mackay and Janin Koch

-

Summary:

The goal of the HumanE AI project is to create artificial intelligence technologies that synergistically work with humans, fitting seamlessly into our complex social settings and dynamically adapting to changes in our environment. Such technologies will empower humans with AI, allowing humans and human society to reach new potentials and to deal more effectively with the complexity of a networked globalized world.

ALMA

-

Title:

ALMA: Human Centric Algebraic Machine Learning

-

Duration:

Sept 2020 - August 2024

-

Coordinator:

DFKI

-

Partners:

- Deutsches Forschungszentrum Fur Kunstliche Intelligenz GMBH (Germany)

- Fiware Foundation EV (Germany)

- Fundacao D. Anna Sommer Champalimaud E DR. Carlos Montez Champalimaud (Portugal)

- Proyectos Y Sistemas de Mantenimiento SL (Spain)

- Teknologian Tutkimuskeskus VTT Oy (Finland)

- Universidad Carlos III de Madrid (Spain)

-

Inria contact:

Wendy Mackay and Janin Koch

-

Summary:

Algebraic machine learning (AML) is a new AI learning paradigm that builds upon Abstract Algebra: rather than using statistics, it produces models from semantic embeddings of data into discrete algebraic structures. The goal of the ALMA project is to leverage the properties of AML to create a new generation of interactive, human-centric machine learning systems that integrate human knowledge with constraints, reduce dependence on statistical properties of the data, and enhance the explainability of the learning process.

ONE

-

Title:

ONE: Unified Principles of Interaction

-

Funding:

European Research Council (ERC Advanced Grant)

-

Duration:

October 2016 - March 2023

-

Coordinator:

Michel Beaudouin-Lafon

-

Summary:

The goal of ONE is to fundamentally re-think the basic principles and conceptual model of interactive systems to empower users by letting them appropriate their digital environment. The project addresses this challenge through three interleaved strands: empirical studies to better understand interaction in both the physical and digital worlds, theoretical work to create a conceptual model of interaction and interactive systems, and prototype development to test these principles and concepts in the lab and in the field. Drawing inspiration from physics, biology and psychology, the conceptual model combines substrates to manage digital information at various levels of abstraction and representation, instruments to manipulate substrates, and environments to organize substrates and instruments into digital workspaces.

8.3 National initiatives

ELEMENT

-

Title:

Enabling Learnability in Human Movement Interaction

-

Funding:

ANR

-

Duration:

2019 - 2022

-

Coordinator:

Baptiste Caramiaux, Sarah Fdili Alaoui, Wendy Mackay

-

Partners:

- Inria

- IRCAM

-

Inria contact:

Sarah Fdili Alaoui

-

Summary:

The goal of this project is to foster innovation in multimodal interaction, from non-verbal communication to interaction with digital media/content in creative applications, specifically by addressing two critical issues: the design of learnable gestures and movements; and the development of interaction models that adapt to a variety of users' expertise and facilitate human sensorimotor learning.

Living Archive

-

Title:

Interactive Documentation of Dance Heritage

-

Funding:

ANR JCJC

-

Duration:

2020 – 2024

-

Coordinator:

Sarah Fdili Alaoui

- Partners:

-

Inria contact:

Sarah Fdili Alaoui

-

Summary:

The goal of this project is to design interactive systems that allow dance practitioners to generate interactive repositories made of self-curated collections of heterogeneous materials that capture and document their dance practices from their first-person perspective.

CAB: Cockpit and Bidirectional Assistant

-

Title:

Smart Cockpit Project

-

Duration:

Sept 2020 - August 2024

-

Coordinator:

SystemX Technological Research Institute

-

Partners:

- SystemX

- EDF

- Dassault

- RATP

- Orange

- Inria

-

Inria contact:

Wendy Mackay

-

Summary:

The goal of the CAB Smart Cockpit project is to define and evaluate an intelligent cockpit that integrates a bi-directional virtual agent that increases the real-time capacities of operators facing complex and/or atypical situations. The project seeks to develop a new foundation for sharing agency between human users and intelligent systems: to empower rather than deskill users by letting them learn throughout the process, and to let users maintain control, even as their goals and circumstances change.

Archive Interactive Isadora Duncan

-

Title:

Archive Interactive Isadora Duncan

-

Funding:

Centre National de la Danse

-

Duration:

2019 – 2020

-

Coordinator:

Elisabeth Schwartz

-

Partners:

Rémi Ronfard, Inria Rhône-Alpes

-

Inria contact:

Sarah Fdili Alaoui

-

Summary:

The goal of this project is to design an interactive system for archiving the repertoire of Isadora Duncan through graphical representations and movement-based interactive systems.

GLACIS

-

Title:

Graphical Languages for Creating Infographics

-

Funding:

ANR

-

Duration:

2022 - 2025

-

Coordinator:

Theophanis Tsandilas

-

Partners:

- Inria Saclay (Theophanis Tsandilas, Michel Beaudouin-Lafon, Pierre Dragicevic)

- Inria Sophia Antipolis (Adrien Bousseau)

- École Centrale de Lyon (Romain Vuillemot)

- University of Toronto (Fanny Chevalier)

-

Inria contact:

Theophanis Tsandilas

-

Summary:

This project investigates interactive tools and techniques that can help graphic designers, illustrators, data journalists, and infographic artists produce creative and effective visualizations for communication purposes, e.g., to inform the public about the evolution of a pandemic or help novices interpret global-warming predictions.

8.3.1 Investissements d'Avenir

CONTINUUM

-

Title:

Collaborative continuum from digital to human

-

Type:

EQUIPEX+ (Equipement d'Excellence)

-

Duration:

2020 – 2029

-

Coordinator:

Michel Beaudouin-Lafon

-

Partners:

- Centre National de la Recherche Scientifique (CNRS)

- Institut National de Recherche en Informatique et Automatique (Inria)

- Commissariat à l'Energie Atomique et aux Energies Alternatives (CEA)

- Université de Rennes 1

- Université de Rennes 2

- Ecole Normale Supérieure de Rennes

- Institut National des Sciences Appliquées de Rennes

- Aix-Marseille University

- Université de Technologie de Compiègne

- Université de Lille

- Ecole Nationale d'Ingénieurs de Brest

- Ecole Nationale Supérieure Mines-Télécom Atlantique Bretagne-Pays de la Loire

- Université Grenoble Alpes

- Institut National Polytechnique de Grenoble

- Ecole Nationale Supérieure des Arts et Métiers

- Université de Strasbourg

- COMUE UBFC Université de Technologie Belfort Montbéliard

- Université Paris-Saclay

- Télécom Paris - Institut Polytechnique de Paris

- Ecole Normale Supérieure Paris-Saclay

- CentraleSupélec

- Université de Versailles - Saint-Quentin

-

Budget:

13.6 million euros of public funding from ANR

-

Summary:

The CONTINUUM project will create a collaborative research infrastructure of 30 platforms located throughout France, to advance interdisciplinary research based on interaction between computer science and the human and social sciences. Thanks to CONTINUUM, 37 research teams will develop cutting-edge research programs focusing on visualization, immersion, interaction and collaboration, as well as on human perception, cognition and behaviour in virtual/augmented reality, with potential impact on societal issues. CONTINUUM enables a paradigm shift in the way we perceive, interact, and collaborate with complex digital data and digital worlds by putting humans at the center of the data processing workflows. The project will empower scientists, engineers and industry users with a highly interconnected network of high-performance visualization and immersive platforms to observe, manipulate, understand and share digital data, real-time multi-scale simulations, and virtual or augmented experiences. All platforms will feature facilities for remote collaboration with other platforms, as well as mobile equipment that can be lent to users to facilitate onboarding.

9 Dissemination

9.1 Promoting scientific activities

9.1.1 Scientific events: organisation

Member of the organizing committees

- UIST 2021, ACM Symposium on User Interface Software and Technology, Lasting Impact Award: Wendy Mackay (chair)

- UIST 2021, ACM Symposium on User Interface Software and Technology, Social Events: Janin Koch (chair)

- UIST 2021, ACM Symposium on User Interface Software and Technology, Demonstrations: Wendy Mackay (jury)

- UIST 2021, ACM Symposium on User Interface Software and Technology, Digital Participation: Jessalyn Alvina (Sub-Chair)

- CHI 2021, ACM CHI Conference on Human Factors in Computing Systems, Student Research Competition: Jessalyn Alvina (jury)

- MobileHCI 2022, ACM International Conference on Mobile Human-Computer Interaction, Local Arrangements: Jessalyn Alvina (Co-Chair)

- ACM IHM 2021, Interaction Homme-Machine: Doctoral consortium: Wendy Mackay (jury)

- ACM DIS 2021, Designing Interactive Systems: Sarah Fdili Alaoui (Sub-Chair)

9.1.2 Scientific events: selection

Chair of conference program committees

- ACM CHI 2021, CHI Conference on Human Factors in Computing Systems: Doctoral consortium: Wendy Mackay (co-chair)

Member of the conference program committees

- ACM DIS 2021, Designing Interactive Systems: Janin Koch

- ICCC 2021, Second Workshop on the Future of Co-Creative Systems: Janin Koch

Reviewer

- ACM CHI 2022, ACM CHI Conference on Human Factors in Computing Systems: Jessalyn Alvina, Michel Beaudouin-Lafon, Sarah Fdili Alaoui, Janin Koch, Wendy Mackay, Theophanis Tsandilas

- ACM UIST 2021, ACM Symposium on User Interface Software and Technology: Jessalyn Alvina, Michel Beaudouin-Lafon, Wendy Mackay, Theophanis Tsandilas

- ACM DIS 2021, Designing Interactive Systems: Jessalyn Alvina, Janin Koch, Wendy Mackay, Theophanis Tsandilas, Elizabeth Walton

- ACM MobileHCI 2021, ACM International Conference on Mobile Human-Computer Interaction: Jessalyn Alvina

- ACM TEI 2021, ACM on tangible, embedded, and embodied interaction: Janin Koch

- ACM C&C 2021, Creativity and Cognition: Sarah Fdili Alaoui, Janin Koch

- ACM IMWUT 2021, ACM on Interactive, Mobile, Wearable and Ubiquitous Technologies: Janin Koch

- ACM CHI PLAY 2021, Proc. ACM Hum.-Comput. Interact. 5, CHI PLAY, Work-In-Progress: Viktor Gustafsson

9.1.3 Journal

Member of the editorial boards

- Editor for the Human-Computer Interaction area of the ACM Books Series : Michel Beaudouin-Lafon (2013-)

- TOCHI, Transactions on Computer Human Interaction, ACM: Michel Beaudouin-Lafon (2009-), Wendy Mackay (2016-)

- JIPS, Journal d'Interaction Personne-Système, AFIHM: Michel Beaudouin-Lafon (2009-)

- Frontiers in Virtual Reality: Cédric Fleury (2019-)

- ACM Tech Briefs: Michel Beaudouin-Lafon (2021-)

Reviewer - reviewing activities

- TOCHI, Transactions on Computer Human Interaction, ACM: Janin Koch, Michel Beaudouin-Lafon, Sarah Fdili Alaoui

- HCIJ, Human Computer Interaction Journal, ACM: Janin Koch

- IEEE TOG 2021, IEEE Transactions on Computational Intelligence and AI in Games: Viktor Gustafsson

9.1.4 Invited talks

- Participatory Design for Hybrid Intelligent Systems. Télécom Paris, 26 January 2021: Wendy Mackay (invited talk)

- HumanE AI Micro-projects Title: “Exploring the Impact of Agency on Human-Computer Partnerships”, 10 February 2021: Janin Koch & Wendy Mackay (invited talks)

- Artificial Intelligence Expert Virtual RoundTable, MITACs Canada/France 25 March 2021: Wendy Mackay (panelist)

- Systematic, « Data Sciences & AI » Hub sujet : l’évaluation de l’IA. Title: “Comment considérer le couple humain-machine dans l’évaluation de l’IA ?”, 15 April 2021: Wendy Mackay (keynote)

- Club DS & AI (Data Science and AI), Systematic Paris Region Deep Tech Ecosystem: Atelier Evaluation des systèmes d’IA. Title: “Comment évaluer l’impact de l’IA sur les humains ?”, 20 April 2021: Wendy Mackay (Keynote)

- HumanE AI Net - Closing the Loop Symposium. Title: “Designing Human-Computer Partnerships” (19 May 2021): Wendy Mackay (keynote)

- HumanE AI Net - Closing the Loop Symposium. Title: “Visual Design Ideation with Machines” (19 May 2021): Janin Koch (invited talk)

- HI-Paris Summer School. Title: “Human-Computer Partnerships”, 10 July 2021: Wendy Mackay (keynote)

- ACM Interact “Artificial Intelligence for Humankind: A Panel on How to Create Truly Interactive and Human-Centered AI for the Benefit of Individuals and Society”, 2 September 2021: Wendy Mackay (panelist)

- UIST ACM Symposium on User Interface Software and Technology “Ask Me Anything about Thesis Statements”, 13 October 2021: Wendy Mackay (invited talk)

- KTH, Title: “On visual ideation with machines”, November 2021: Janin Koch (invited talk)

- Les Futurs Fantastiques. Bibliothèque nationale de France. “Creartathon : Combiner l’art et la science en une semaine”. 8 December 2021: Wendy Mackay and Nicolas Taffin (invited talk)

- NeurIPS Workshop, Title: “Human-Computer Partnerships”, 13 December 2021: Wendy Mackay (keynote)

- Centre Pompidou, Symposium on “Crossing arts, design and sciences to teach differently”, November 2021: Téo Sanchez

- Centre Pompidou, Symposium on “Crossing arts, design and sciences to teach differently”, November 2021: Sarah Fdili Alaoui

- CND Pantin, presentation of the Isadora Duncan project: Sarah Fdili Alaoui, Rémi Ronfard, Elisabeth Schwartz

- CND Lyon, presentation of “conversation art & science around Isadora Duncan's movement”: Sarah Fdili Alaoui, Rémi Ronfard, Elisabeth Schwartz and Manon Vialle

- FFF Seminars, KTH, “Integrating technologies in Dance: methods, creations and critical reflections”: Sarah Fdili Alaoui (invited talk)

- Participation in SloMoCo Provocations: Sarah Fdili Alaoui (invited panelist)

- CRI Paris, Tribute day to Francisco Varela on Enaction: Sarah Fdili Alaoui (invited talk)

- IRCAM-Centre Pompidou, Round Table on Feminisme Music & Tech: Sarah Fdili Alaoui (organization and moderation)

- NIME 2021, Workshop “the Critical Perspectives on AI/ML in Musical Interface”: Sarah Fdili Alaoui (invited panelist)

- University of Sussex Research seminar series, “Integrating technologies in Dance: methods, creations and critical reflections”: Sarah Fdili Alaoui (invited talk)

- Interview with WONOMUTE (Women Nordic Music Technology): Sarah Fdili Alaoui

- Round Table on movement notation, Anestis Logothetis Centenary Symposium: Sarah Fdili Alaoui (invited panelist)

9.1.5 Leadership within the scientific community

- CONTINUUM research infrastructure: Michel Beaudouin-Lafon (Scientific director)

- RTRA Digiteo (Research network in Computer Science), Université Paris-Saclay: Michel Beaudouin-Lafon (Director)

- Graduate School in Computer Science, Université Paris-Saclay: Michel Beaudouin-Lafon (Adjunct Director for Research)

- Labex Digicosme: Michel Beaudouin-Lafon (Co-chair of the Interaction theme)

- ACM Technology Policy Council: Michel Beaudouin-Lafon (Vice-chair)

9.1.6 Scientific expertise

- External reviewer for ERC Advanced Grants panel PE-6: Michel Beaudouin-Lafon

- Expert for Swiss National Science Foundation: Michel Beaudouin-Lafon

- Jury for the recruiting of a Professor, Université Paris-Saclay, 2021: Michel Beaudouin-Lafon, Wendy Mackay

- Jury for the mid-term of a PhD student at KTH: Sarah Fdili Alaoui

- Jury for the recruiting of a Maître de conférences, Université de Lille, 2021: Sarah Fdili Alaoui

- Dutch Science Foundation on Hybrid Intelligence Scientific Advisory Board, the Netherlands: Wendy Mackay (member)

- Hybrid Science Advisory Board, Aarhus University, Denmark: Wendy Mackay (member)

- SimTech Advisory Board (International Advisory Board for the Data-Integrated Simulation Science Cluster of Excellence, Stuttgart Germany): Wendy Mackay (member)

- Jury for recruiting an Assistant Professor, Darmstadt University, Germany 2020: Wendy Mackay (jury member)

- Participation in the Board for the organisation of the Année de recherche en recherche creation at ENS Saclay: Sarah Fdili Alaoui

9.1.7 Research administration

- “Commission consultatives paritaires (CCP)” Inria: Wendy Mackay (president)

- “Commission de la formation et de la vie universitaire (CFCU)” Université Paris-Saclay: Wendy Mackay (Inria representative)

- “Commission Locaux”, LISN: Theophanis Tsandilas (member)

- “Commission Scientifique”, Inria: Theophanis Tsandilas (member)

- Paris SIGCHI: Theophanis Tsandilas (vice-president)

- ACM Europe Technology Policy Committee: Michel Beaudouin-Lafon (member)

- Inria Paris-Saclay scientific mediation for Art, Science and Society: Janin Koch (co-organizer)

9.2 Teaching - Supervision - Juries

9.2.1 Teaching

- International Masters: Theophanis Tsandilas, Probabilities and Statistics, 32h, M1, Télécom Sud Paris, Institut Polytechnique de Paris

- Interaction & HCID Masters: Michel Beaudouin-Lafon, Wendy Mackay, Fundamentals of Situated Interaction, 21 hrs, M1/M2, Univ. Paris-Saclay

- Interaction & HCID Masters: Sarah Fdili Alaoui, Creative Design, 21h, M1/M2, Univ. Paris-Saclay

- Interaction & HCID Masters: Sarah Fdili Alaoui, Studio Art Science in collaboration with Centre Pompidou, 21h, M1/M2, Univ. Paris-Saclay

- Interaction & HCID Masters: Michel Beaudouin-Lafon, Fundamentals of Human-Computer Interaction, 21 hrs, M1/M2, Univ. Paris-Saclay

- Interaction & HCID Masters: Michel Beaudouin-Lafon, Groupware and Collaborative Interaction, 11 hrs, M1/M2, Univ. Paris-Saclay

- Interaction & HCID Masters: Wendy Mackay, HCI Winter School 21 hrs, M2, Univ. Paris-Saclay

- Interaction & HCID Masters: Wendy Mackay and Janin Koch, Design of Interactive Systems, 42 hrs, M1/M2, Univ. Paris-Saclay

- Interaction & HCID Masters: Wendy Mackay, Advanced Design of Interactive Systems, 21 hrs, M1/M2, Univ. Paris-Saclay

- Interaction & HCID Masters: Baptiste Caramiaux, Gestural and Mobile Interaction, 24 hrs, M1/M2, Univ. Paris-Saclay

- Polytech: Cédric Fleury, Projet Java-Graphique-IHM, 24 hrs, 3rd year, Univ. Paris-Saclay

- Inria & Université Paris-Saclay Creartathon Master Classes, 23 August: Michel Beaudouin-Lafon, Janin Koch, Wendy Mackay

- Licence Informatique: Michel Beaudouin-Lafon, Introduction to Human-Computer Interaction, 21h, second year, Univ. Paris-Saclay

PhD students - As teaching assistants

- Polytech App5: Arthur Fages, Réalité virtuelle et interactions, 48h, M2, Polytech Paris-Saclay, Univ. Paris-Saclay

- Polytech Et3: Arthur Fages, Projet Java-Graphique IHM, 24h, L3, Polytech Paris-Saclay, Univ. Paris-Saclay

- Bachelor math and computer science: Téo Sanchez, Introduction to imperative programming, 25h, L1, Univ. Paris-Saclay

- Bachelor math and computer science: Téo Sanchez, Introduction to computer science, 24h, L1, Univ. Paris-Saclay

- Bachelor math and computer science: Téo Sanchez, Introduction to data science, 24h, L1, Univ. Paris-Saclay

- Interaction & HCID Masters: Téo Sanchez, Interactive Machine Learning, 12h, M1, Univ. Paris-Saclay

- Licence Informatique: Miguel Renom, Programmation Web, 24h, L3, Polytech Paris-Saclay

- Interaction & HCID Masters: Camille Gobert, Advanced Programming of Interactive Systems, 21h, M1/M2, Univ. Paris-Saclay

- Computer Science Bachelor: Camille Gobert, Introduction à l'Interaction Humain-Machine, 12h, L2, Univ. Paris-Saclay

- Licence Double Diplôme Informatique, Mathématiques: Capucine Nghiem, TP: Introduction à la Programmation Impérative, 22h, L1, Univ. Paris-Saclay

- Licence Informatique: Capucine Nghiem, TP: Introduction à l'Informatique Graphique, 10h, L1, Univ. Paris-Saclay

9.2.2 Supervision

PhD students

- PhD: Viktor Gustafsson, Designing Persistent Player Narratives in Digital Game Worlds, 2 December 2021. Advisor: Wendy Mackay

- PhD: Yiran Zhang, Spatial consistency between real and virtual workspaces for individual and collaborative tasks in virtual environments, 16 December 2021. Advisors: Patrick Bourdot (LIMSI-CNRS) & Cédric Fleury

- PhD in progress: Téo Sanchez, Co-Learning in Interactive Systems, September 2018. Advisors: Baptiste Caramiaux & Wendy Mackay

- PhD in progress: Elizabeth Walton, Inclusive Design in Embodied Interaction, November 2018. Advisor: Wendy Mackay

- PhD in progress: Miguel Renom, Theoretical bases of human tool use in digital environments, October 2018. Advisors: Michel Beaudouin-Lafon & Baptiste Caramiaux

- PhD in progress: Han Han, Participatory design of digital environments based on interaction substrates, October 2018. Advisor: Michel Beaudouin-Lafon

- PhD in progress: Yi Zhang, Generative Design using Instrumental Interaction, Substrates and Co-adaptive Systems, October 2018. Advisor: Wendy Mackay

- PhD in progress: Martin Tricaud, Instruments and Substrates for Procedural Creation Tools, October 2019. Advisor: Michel Beaudouin-Lafon

- PhD in progress: Arthur Fages, Collaborative 3D Modeling in Augmented Reality Environments, December 2019. Advisors: Cédric Fleury & Theophanis Tsandilas

- PhD in progress: Alexandre Battut, Interactive Instruments and Substrates for Temporal Media, April 2020. Advisor: Michel Beaudouin-Lafon

- PhD in progress: Camille Gobert, Interaction Substrates for Programming, October 2020. Advisor: Michel Beaudouin-Lafon

- PhD in progress: Wissal Sahel, Co-adaptive Instruments for Smart Cockpits, November 2020. Advisors: Wendy Mackay & Nicolas Heulot (SystemX)

- PhD in progress: Capucine Nghiem, Speech-Enhanced User Interfaces for Design Sketching, October 2021. Advisors: Theophanis Tsandilas & Adrien Bousseau (Inria Sophia Antipolis)

- PhD in progress: Anna Offengwanger, Grammars and Tools for Sketch-Driven Visualization Design, October 2021. Advisors: Theophanis Tsandilas & Fanny Chevalier (University of Toronto)

- PhD in progress: Tove Grimstad Bang, Somaesthetics applied to dance documentation and transmission, September 2021. Advisor: Sarah Fdili Alaoui

Masters students

- Julia Biesada, Progressive Visual Analysis Tools for Large Time Series Data, M1. Advisors: Theophanis Tsandilas and Anastasia Bezerianos (ILDA)

- Robert Falcasantos, Empowered Design: A Methodical Approach to Overcome Obstacles and Improve Participation Quality in Codesign Projects. Advisor: Wendy Mackay

- Junhang Yu, Interactive Path Visualization. Advisor: Wendy Mackay

Other

- Capucine Nghiem, Speech-Enhanced User Interfaces for Design Sketching, Pre-doctoral internship. Advisors: Adrien Bousseau (Inria Sophia Antipolis) and Theophanis Tsandilas

- Baptiste Lorenzi, CentraleSupelec research program. Advisor: Theophanis Tsandilas

9.2.3 Juries

PhD theses

- Axel Antoine, Inria & Université de Lille, “Études des stratégies et conception d’outils pour la production de supports illustratifs d’interaction”, 29 January 2021: Theophanis Tsandilas (reviewer)

- Alix Ducros, Université de Lyon, “Place-centric design for public displays in libraries”, 24 September 2021: Theophanis Tsandilas (reviewer)

- Elio Keddisseh, Université de Toulouse – Paul Sabatier, “Techniques d'interaction pour environnement multi-dispositifs : application à la formation sur simulateurs ferroviaires”, 19 July 2021: Theophanis Tsandilas (jury member)

- Eugenie Brasier, Inria & Université Paris-Saclay, “xx”, 13 December 2022: Wendy Mackay (president)

"Habilitation à diriger des recherches"

- Ferran Argelaguet, Inria & Université Rennes 1, “The "Infinite Loop" – Towards Adaptive 3D User Interfaces”, 29 January 2021: Michel Beaudouin-Lafon (reviewer)

- Sotiris Manitsaris, Sorbonne University, “Movement-based Human-Machine Collaboration: a Human-centred AI approach”, March 2021: Wendy Mackay (reviewer)

9.3 Popularization

- CreARTathon 2021 Creative Hackathon, 23-27 August 2021: Janin Koch, Wendy Mackay, Nicolas Taffin

- Workshop on artificial intelligence with high school students, with the association TRACES, June 2021: Téo Sanchez

9.3.1 Education

- High-school textbook: Michel Beaudouin-Lafon (editor and co-author). Numérique et Sciences Informatiques (NSI), 1ère spécialité (2021), Michel Beaudouin-Lafon, Benoit Groz, Mathieu Nancel, Olivier Marcé, and Emmanuel Waller. Hachette Education, Paris, France. 288 pages. ISBN 978-2-01-786630-5.

9.3.2 Interventions

- Interview for the article « La Corée du Sud voit le métavers à moitié plein » by Elise Viniacourt in Libération, 11 December 2021: Michel Beaudouin-Lafon

- Radio show « Les P'tits Bateaux », France Inter, answer to kids' questions, 7 November 2021, "How does a touchscreen work": Michel Beaudouin-Lafon

- Interview in Worldzine: « Le métavers, un monde qui ne me parait pas très imaginatif ». Interview by Aylin Ho, 7 December 2021: Michel Beaudouin-Lafon

- Talk in Rencontres Intelligence Economique de Paris-Saclay: « IA, forces et faiblesses de l’écosystème », 22 June 2021, Palaiseau: Michel Beaudouin-Lafon

- Interview for two articles in the series Tech & Futur of the web site Les Echos Start, August 2021: « Pourquoi les jeunes tapotent leur smartphone avec leurs pouces, et leurs aînés avec leur index ? » and « Pourquoi a-t-on souvent envie d'insulter le correcteur orthographique de son smartphone ? »: Michel Beaudouin-Lafon

- Interview in the radio show Décryptage of Radio Notre-Dame for the book « Vers le cyber-monde, humain et numérique en interaction », 17 April 2021: Michel Beaudouin-Lafon

- Participation in the podcast « Cerveau connecté, fiction ou réalité ? » in the context of the event Semaine du Cerveau, March 2021, on the topic « Cerveau connecté : comment concilier humain et machine »: Michel Beaudouin-Lafon

- Interview in the series « Vies de labo » of Université Paris-Saclay, February 2021: Michel Beaudouin-Lafon

- Interview for the Collège de France, « Appropriation et adaptation font la force du raisonnement technique humain », by William Rowe-Pirra: Wendy Mackay

10 Scientific production

10.1 Major publications

- [1] Expressive Keyboards: Enriching Gesture-Typing on Mobile Devices. In Proceedings of the 29th ACM Symposium on User Interface Software and Technology (UIST 2016), Tokyo, Japan, ACM, October 2016, 583-593.

- [2] CamRay: Camera Arrays Support Remote Collaboration on Wall-Sized Displays. In Proceedings of the CHI Conference on Human Factors in Computing Systems (CHI '17), Denver, United States, ACM, May 2017, 6718-6729.

- [3] Beyond Snapping: Persistent, Tweakable Alignment and Distribution with StickyLines. In UIST '16: Proceedings of the 29th Annual Symposium on User Interface Software and Technology, Tokyo, Japan, October 2016.

- [4] Touchstone2: An Interactive Environment for Exploring Trade-offs in HCI Experiment Design. In Proceedings of the 2019 CHI Conference on Human Factors in Computing Systems (CHI 2019), paper no. 217, Glasgow, United Kingdom, ACM, May 2019, 1-11.

- [5] ImageSense: An Intelligent Collaborative Ideation Tool to Support Diverse Human-Computer Partnerships. Proceedings of the ACM on Human-Computer Interaction 4(CSCW1), May 2020, 1-27.

- [6] BIGnav: Bayesian Information Gain for Guiding Multiscale Navigation. In ACM CHI 2017 - International Conference of Human-Computer Interaction, Denver, United States, May 2017, 5869-5880.

- [7] ShapeGuide: Shape-Based 3D Interaction for Parameter Modification of Native CAD Data. Frontiers in Robotics and AI 5, November 2018.

- [8] Articulating Experience: Reflections from Experts Applying Micro-Phenomenology to Design Research in HCI. In CHI '20 - CHI Conference on Human Factors in Computing Systems, Honolulu, HI, United States, ACM, April 2020, 1-14.

- [9] StructGraphics: Flexible Visualization Design through Data-Agnostic and Reusable Graphical Structures. IEEE Transactions on Visualization and Computer Graphics 27(2), October 2020, 315-325.

- [10] Stretchis: Fabricating Highly Stretchable User Interfaces. In ACM Symposium on User Interface Software and Technology (UIST), Tokyo, Japan, October 2016, 697-704.

10.2 Publications of the year

International journals

International peer-reviewed conferences

National peer-reviewed Conferences

Conferences without proceedings

Scientific book chapters

10.3 Other

Scientific popularization

10.4 Cited publications

- [34] Instrumental Interaction: An Interaction Model for Designing post-WIMP User Interfaces. In Proceedings of the SIGCHI Conference on Human Factors in Computing Systems (CHI '00), The Hague, The Netherlands, ACM, New York, NY, USA, 2000, 446-453. URL: http://doi.acm.org/10.1145/332040.332473

- [35] Reification, Polymorphism and Reuse: Three Principles for Designing Visual Interfaces. In Proceedings of the Working Conference on Advanced Visual Interfaces (AVI '00), Palermo, Italy, ACM, New York, NY, USA, 2000, 102-109. URL: http://doi.acm.org/10.1145/345513.345267

- [36] Designing Persistent Player Narratives in Digital Game Worlds. PhD thesis, Université Paris-Saclay, December 2021.