Keywords

Computer Science and Digital Science

- A5.1.3. Haptic interfaces

- A5.1.5. Body-based interfaces

- A5.1.9. User and perceptual studies

- A5.4.2. Activity recognition

- A5.4.5. Object tracking and motion analysis

- A5.4.8. Motion capture

- A5.5.4. Animation

- A5.6. Virtual reality, augmented reality

- A5.6.1. Virtual reality

- A5.6.3. Avatar simulation and embodiment

- A5.6.4. Multisensory feedback and interfaces

- A5.10.3. Planning

- A5.10.5. Robot interaction (with the environment, humans, other robots)

- A5.11.1. Human activity analysis and recognition

- A6. Modeling, simulation and control

Other Research Topics and Application Domains

- B1.2.2. Cognitive science

- B2.5. Handicap and personal assistances

- B2.8. Sports, performance, motor skills

- B5.1. Factory of the future

- B5.8. Learning and training

- B7.1.1. Pedestrian traffic and crowds

- B9.2.2. Cinema, Television

- B9.2.3. Video games

- B9.4. Sports

1 Team members, visitors, external collaborators

Research Scientists

- Franck Multon [Team leader, Inria, Senior Researcher, HDR]

- Adnane Boukhayma [Inria, Researcher]

- Ludovic Hoyet [Inria, Researcher]

- Katja Zibrek [Inria, Starting Research Position]

Faculty Members

- Benoit Bardy [Univ de Montpellier, Professor, from Sep 2021, HDR]

- Nicolas Bideau [Univ Rennes2, Associate Professor]

- Benoit Bideau [Univ Rennes2, Professor, HDR]

- Marc Christie [Univ de Rennes I, Associate Professor]

- Armel Crétual [Univ Rennes, Associate Professor, HDR]

- Georges Dumont [École normale supérieure de Rennes, Professor, HDR]

- Diane Haering [Univ Rennes2, Associate Professor]

- Aline Hufschmitt [Univ de Rennes I, Associate Professor, from Oct 2021]

- Simon Kirchhofer [École normale supérieure de Rennes, Associate Professor, until Aug 2021]

- Richard Kulpa [Univ Rennes2, Associate Professor, HDR]

- Fabrice Lamarche [Univ de Rennes I, Associate Professor]

- Guillaume Nicolas [Univ Rennes, Associate Professor]

- Anne-Hélène Olivier [Univ Rennes2, Associate Professor, HDR]

- Charles Pontonnier [École normale supérieure de Rennes, Associate Professor, HDR]

Post-Doctoral Fellows

- Pierre Raimbaud [Inria]

- Divyaksh Subhash Chander [ENS Rennes]

PhD Students

- Vicenzo Abichequer-Sangalli [Inria, co-supervised with Rainbow]

- Jean Basset [Inria, until Aug 2021]

- Jean Baptiste Bordier [Univ de Rennes I]

- Alexandre Bruckert [Univ de Rennes I, from Nov 2021]

- Ludovic Burg [Univ de Rennes I]

- Thomas Chatagnon [Inria, co-supervised with Rainbow]

- Adèle Colas [Inria, co-supervised with Rainbow]

- Rebecca Crolan [École normale supérieure de Rennes, from Oct 2021]

- Louise Demestre [École normale supérieure de Rennes]

- Diane Dewez [Inria, co-supervised with Hybrid]

- Olfa Haj Mahmoud [Faurecia, until Dec.2]

- Nils Hareng [Univ Rennes2]

- Alberto Jovane [Inria, co-supervised with Rainbow]

- Qian Li [Inria]

- Claire Livet [École normale supérieure de Rennes]

- Pauline Morin [École normale supérieure de Rennes]

- Lucas Mourot [InterDigital]

- Benjamin Niay [Inria]

- Nicolas Olivier [InterDigital]

- Carole Puil [IFPEK Rennes, until Sep 2022]

- Xiaoyuan Wang [École normale supérieure de Rennes, from Oct 2021]

- Xi Wang [Univ de Rennes I, until Oct 2021]

- Tairan Yin [Inria, co-supervised with Rainbow]

- Mohamed Younes [Inria]

Technical Staff

- Robin Adili [Inria, Engineer]

- Robin Courant [Univ de Rennes I, from Nov 2021]

- Ronan Gaugne [Univ de Rennes I, Engineer]

- Ific Goude [Univ de Rennes I, Engineer, from Jun 2021]

- Laurent Guillo [CNRS, Engineer]

- Shubhendu Jena [Inria, Engineer]

- Nena Markovic [Univ de Rennes I, from Dec 2021]

- Maé Mavromatis [Univ de Rennes I]

- Anthony Mirabile [Univ de Rennes I, Engineer]

- Adrien Reuzeau [Inria, Engineer]

- Salome Ribault [Inria, Engineer, from Oct 2021]

- Xiaofang Wang [Univ de Reims Champagne-Ardennes, Engineer, until Oct 2021]

Interns and Apprentices

- Francois Bourel [Univ de Rennes I, from Apr 2021 until Oct 2021]

- Vincent Etien [Inria, from Feb 2021 until Jul 2021]

- Quentin Gomelet-Richard [Inria, from Jul 2021 until Nov 2021]

- Capucine Leroux [Polytech Sorbonne, from Apr 2021 until Jul 2021]

- Badr Ouannas [Inria, from Mar 2021 until Aug 2021]

- Amine Ouasfi [Inria, from Mar 2021 until Aug 2021]

- Hugo Pottier [Inria, from Feb 2021 until Jun 2021]

- Arnaud Roger [Inria, from Feb 2021 until Jul 2021]

Administrative Assistant

- Nathalie Denis [Inria]

Visiting Scientist

- Radoslaw Sterna [Jagiellonian University, Krakow, Poland, Jul 2021]

External Collaborator

- Anthony Sorel [Univ Rennes2, until Aug 2021]

2 Overall objectives

2.1 Presentation

MimeTIC is a multidisciplinary team whose aim is to better understand and model human activity in order to simulate realistic autonomous virtual humans: realistic behaviors, realistic motions and realistic interactions with other characters and users. This requires modeling the complexity of the human body, as well as of the environment in which humans pick up information and on which they act. A specific focus is dedicated to human physical activity and sports, as they raise the highest constraints and complexity when addressing these problems. Thus, MimeTIC is composed of experts in computer science whose research interests are computer animation, behavioral simulation, motion simulation, crowds and interaction between real and virtual humans. MimeTIC also includes experts in sports science, motion analysis, motion sensing, biomechanics and motion control. Hence, the scientific foundations of MimeTIC are motion sciences (biomechanics, motion control, perception-action coupling, motion analysis), computational geometry (modeling of the 3D environment, motion planning, path planning) and design of protocols in immersive environments (use of virtual reality facilities to analyze human activity).

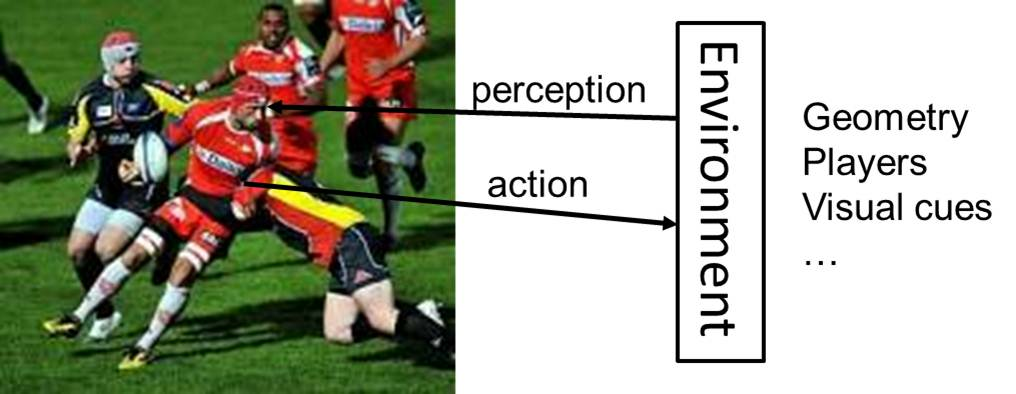

Thanks to these skills, we wish to reach the following objectives: to make virtual humans behave, move and interact in a natural manner in order to increase immersion and improve knowledge on human motion control. In real situations (see Figure 1), people have to deal with their physiological, biomechanical and neurophysiological capabilities in order to reach a complex goal. Hence MimeTIC addresses the problem of modeling the anatomical, biomechanical and physiological properties of human beings. Moreover, these characters have to deal with their environment. First, they have to perceive this environment and pick up relevant information. Thus, MimeTIC focuses on the problem of modeling the environment, including its geometry and associated semantic information. Second, they have to act on this environment to reach their goals. This involves cognitive processes, motion planning, joint coordination and force production.

In order to reach the above objectives, MimeTIC has to address three main challenges:

- deal with the intrinsic complexity of human beings, especially when addressing the problem of interactions between people for which it is impossible to predict and model all the possible states of the system,

- make the different components of human activity control (such as the biomechanical and physical, the reactive, cognitive, rational and social layers) interact while each of them is modeled with completely different states and time sampling,

- and measure human activity while balancing between ecological and controllable protocols, and be able to extract relevant information from large databases.

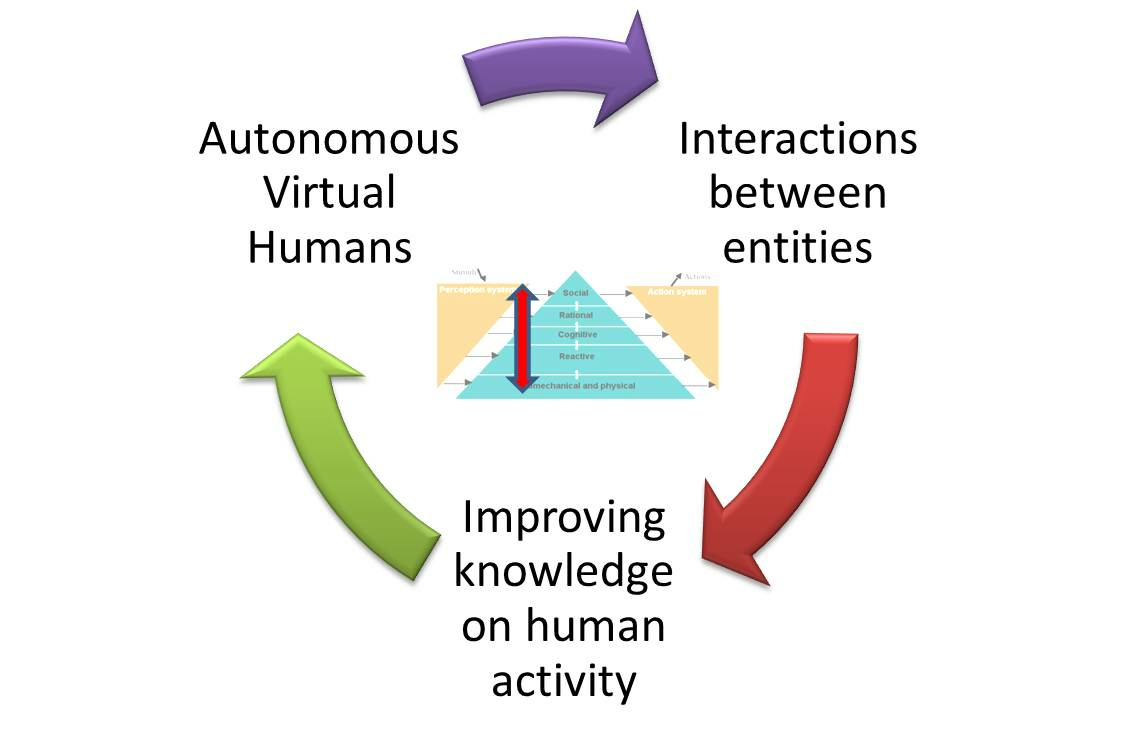

As opposed to many classical approaches in computer simulation, which mostly propose simulation without trying to understand how real people act, the team promotes a coupling between human activity analysis and synthesis, as shown in Figure 2.

In this research path, improving knowledge on human activity enables us to highlight fundamental assumptions about natural control of human activities. These contributions can be promoted in e.g. biomechanics, motion sciences, neurosciences. According to these assumptions we propose new algorithms for controlling autonomous virtual humans. The virtual humans can perceive their environment and decide on the most natural action to reach a given goal. This work is promoted in computer animation and virtual reality, and has some applications in robotics through collaborations. Once autonomous virtual humans have the ability to act as real humans would in the same situation, it is possible to make them interact with others, i.e., autonomous characters (for crowds or group simulations) as well as real users. The key idea here is to analyze to what extent the assumptions proposed at the first stage lead to natural interactions with real users. This process enables the validation of both our assumptions and our models.

Among all the problems and challenges described above, MimeTIC focuses on the following domains of research:

- motion sensing which is a key issue to extract information from raw motion capture systems and thus to propose assumptions on how people control their activity,

- human activity & virtual reality, which is explored through sports applications in MimeTIC. This domain enables the design of new methods for analyzing the perception-action coupling in human activity, and the validation of whether the autonomous characters lead to natural interactions with users,

- interactions in small and large groups of individuals, to understand and model interactions with a lot of individual variability such as in crowds,

- virtual storytelling which enables us to design and simulate complex scenarios involving several humans who have to satisfy numerous complex constraints (such as adapting to the real-time environment in order to play an imposed scenario), and to design the coupling with the camera scenario to provide the user with a real cinematographic experience,

- biomechanics which is essential to offer autonomous virtual humans who can react to physical constraints in order to reach high-level goals, such as maintaining balance in dynamic situations or selecting a natural motor behavior among the whole theoretical solution space for a given task,

- autonomous characters which is a transversal domain that can reuse the results of all the other domains to make these heterogeneous assumptions and models provide the character with natural behaviors and autonomy.

3 Research program

3.1 Biomechanics and Motion Control

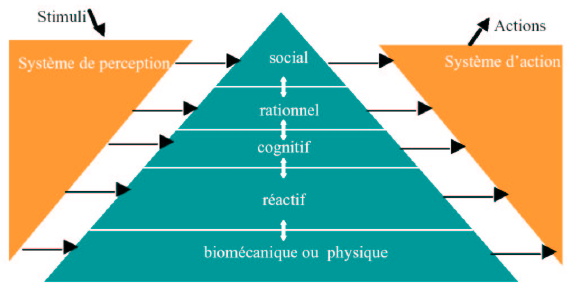

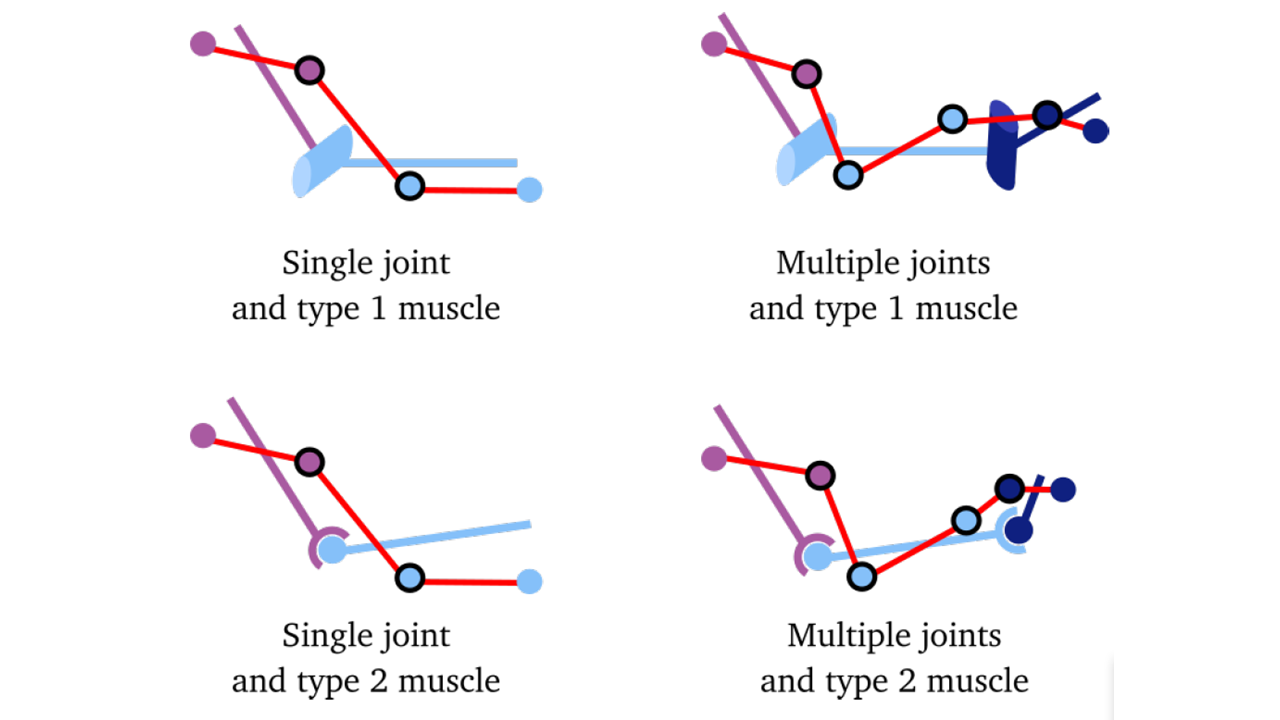

Human motion control is a highly complex phenomenon that involves several layered systems, as shown in Figure 3. Each layer of this controller is responsible for dealing with perceptual stimuli in order to decide the actions that should be applied to the human body and its environment. Due to the intrinsic complexity of the information (internal representation of the body and mental state, external representation of the environment) used to perform this task, it is almost impossible to model all the possible states of the system. Even for simple problems, there generally exists an infinity of solutions. For example, from the biomechanical point of view, there are many more actuators (i.e. muscles) than degrees of freedom, leading to an infinity of muscle activation patterns for a unique joint rotation. From the reactive point of view, there exists an infinity of paths to avoid a given obstacle in navigation tasks. At each layer, the key problem is to understand how people select one solution among these infinite state spaces. Several scientific domains have addressed this problem with specific points of view, such as physiology, biomechanics, neurosciences and psychology.

In biomechanics and physiology, researchers have proposed hypotheses based on accurate joint modeling (to identify the real anatomical rotational axes), energy minimization, force and torque minimization, comfort maximization (i.e. avoiding joint limits), and physiological limitations in muscle force production. All these constraints have been used in optimal controllers to simulate natural motions. The main problem is thus to define how these constraints should be combined, for example by searching for the weights used to linearly combine these criteria in order to generate a natural motion. Musculoskeletal models are typical examples for which there exists an infinity of muscle activation patterns, especially when dealing with antagonist muscles. An unresolved problem is to define how to use the above criteria to retrieve the actual activation patterns, as optimization approaches still lead to unrealistic ones. It is still an open problem that will require multidisciplinary skills including computer simulation, constraint solving, biomechanics, optimal control, physiology and neuroscience.
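To make this redundancy concrete, the following minimal sketch considers a single joint actuated by three hypothetical muscles. It is an illustration only, not the team's method: the moment arms, the candidate force patterns, the two criteria and the weights are all assumptions chosen for readability. Several force patterns produce the same joint torque, and a weighted linear combination of criteria selects one of them.

```python
# Illustrative sketch: muscle redundancy at a single joint.
# All numerical values (moment arms, forces, weights) are assumptions.

MOMENT_ARMS = [0.04, 0.02, -0.03]  # metres; a negative value is an antagonist


def joint_torque(forces):
    """Net joint torque (N.m) produced by a list of muscle forces (N)."""
    return sum(r * f for r, f in zip(MOMENT_ARMS, forces))


def total_force(forces):
    """Hypothetical criterion 1: sum of muscle forces."""
    return sum(forces)


def squared_force(forces):
    """Hypothetical criterion 2: sum of squared muscle forces."""
    return sum(f * f for f in forces)


def combined_cost(forces, w_total, w_squared):
    """Weighted linear combination of the two criteria."""
    return w_total * total_force(forces) + w_squared * squared_force(forces)


def select_pattern(candidates, w_total, w_squared):
    """Return the candidate force pattern with the lowest combined cost."""
    return min(candidates, key=lambda f: combined_cost(f, w_total, w_squared))


# Three different force patterns that all yield the same 10 N.m torque.
CANDIDATES = [
    [250.0, 0.0, 0.0],     # one agonist only
    [200.0, 100.0, 0.0],   # load shared between both agonists
    [300.0, 50.0, 100.0],  # agonists plus antagonist co-contraction
]

if __name__ == "__main__":
    for forces in CANDIDATES:
        print(forces, "-> torque", round(joint_torque(forces), 6))
    print("linear criterion only:   ", select_pattern(CANDIDATES, 1.0, 0.0))
    print("quadratic criterion only:", select_pattern(CANDIDATES, 0.0, 1.0))
```

In this toy setting the selected pattern depends on the weights: the linear criterion favours the single-agonist pattern, whereas the quadratic criterion favours load sharing. This is why identifying the weights is central to the problem described above.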

In neuroscience, researchers have proposed other theories, such as coordination patterns between joints driven by simplifications of the variables used to control the motion. The key idea is to assume that instead of controlling all the degrees of freedom, people control higher-level variables which correspond to combinations of joint angles. In walking, data reduction techniques such as Principal Component Analysis have shown that lower-limb joint angles generally lie close to a single plane whose orientation in the state space is associated with energy expenditure. Although knowledge exists for specific motions, such as locomotion or grasping, this type of approach is still difficult to generalize. The key problem is that many variables are coupled and it is very difficult to objectively study the behavior of a single variable in various motor tasks. Computer simulation is a promising method to evaluate this type of assumption, as it makes it possible to accurately control all the variables and to check whether they lead to natural movements.
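The planarity observation can be illustrated with a small Principal Component Analysis. The sketch below is a hedged example on synthetic data: the three angle signals, their amplitudes and the function names are assumptions, and the eigenvalues of the 3x3 covariance matrix are obtained with a standard closed-form trigonometric method rather than a numerical library. The share of variance captured by the first two principal components measures how close the samples are to a plane.

```python
import math


def covariance_matrix(samples):
    """3x3 covariance matrix of a list of (a1, a2, a3) angle triplets."""
    n = len(samples)
    means = [sum(s[i] for s in samples) / n for i in range(3)]
    return [
        [sum((s[i] - means[i]) * (s[j] - means[j]) for s in samples) / n
         for j in range(3)]
        for i in range(3)
    ]


def symmetric_eigenvalues_3x3(a):
    """Eigenvalues of a symmetric 3x3 matrix, in decreasing order."""
    p1 = a[0][1] ** 2 + a[0][2] ** 2 + a[1][2] ** 2
    if p1 == 0.0:  # the matrix is already diagonal
        return sorted((a[0][0], a[1][1], a[2][2]), reverse=True)
    q = (a[0][0] + a[1][1] + a[2][2]) / 3.0
    p2 = sum((a[i][i] - q) ** 2 for i in range(3)) + 2.0 * p1
    p = math.sqrt(p2 / 6.0)
    b = [[(a[i][j] - (q if i == j else 0.0)) / p for j in range(3)]
         for i in range(3)]
    det_b = (b[0][0] * (b[1][1] * b[2][2] - b[1][2] * b[2][1])
             - b[0][1] * (b[1][0] * b[2][2] - b[1][2] * b[2][0])
             + b[0][2] * (b[1][0] * b[2][1] - b[1][1] * b[2][0]))
    r = max(-1.0, min(1.0, det_b / 2.0))  # guard against rounding errors
    phi = math.acos(r) / 3.0
    e1 = q + 2.0 * p * math.cos(phi)
    e3 = q + 2.0 * p * math.cos(phi + 2.0 * math.pi / 3.0)
    e2 = 3.0 * q - e1 - e3
    return [e1, e2, e3]


def planarity(samples):
    """Fraction of variance explained by the first two principal components."""
    eig = symmetric_eigenvalues_3x3(covariance_matrix(samples))
    return (eig[0] + eig[1]) / sum(eig)


def synthetic_gait_cycle(n=200):
    """Synthetic angle triplets (degrees) constrained to a plane (assumption)."""
    samples = []
    for k in range(n):
        t = 2.0 * math.pi * k / n
        a1 = 20.0 * math.sin(t)
        a2 = 30.0 * math.sin(t - 0.8)
        a3 = 0.5 * a1 - 0.3 * a2  # linear combination, hence planar data
        samples.append((a1, a2, a3))
    return samples


if __name__ == "__main__":
    print("planarity:", planarity(synthetic_gait_cycle()))
```

A planarity close to 1 indicates that two combined variables are enough to describe the three angles, which is the kind of dimensionality reduction the paragraph above refers to.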

Neuroscience also addresses the problem of coupling perception and action by providing control laws based on visual cues (or any other senses), such as determining how the optical flow is used to control direction in navigation tasks, while dealing with collision avoidance or interception. Coupling of the control variables is enhanced in this case as the state of the body is enriched by the large amount of external information that the subject can use. Virtual environments inhabited by autonomous characters whose behavior is driven by motion control assumptions are a promising approach to solve this problem. For example, an interesting problem in this field is to navigate in an environment inhabited by other people. Typically, avoiding static obstacles along with other people moving inside that environment is a combinatorial problem that strongly relies on the coupling between perception and action.

One of the main objectives of MimeTIC is to enhance knowledge on human motion control by developing innovative experiments based on computer simulation and immersive environments. To this end, designing experimental protocols is a key point and some of the researchers in MimeTIC have developed this skill in biomechanics and perception-action coupling. Associating these researchers with experts in virtual human simulation, computational geometry and constraint solving enables us to contribute to enhancing fundamental knowledge in human motion control.

3.2 Experiments in Virtual Reality

Understanding interactions between humans is challenging because it addresses many complex phenomena including perception, decision-making, cognition and social behaviors. Moreover, all these phenomena are difficult to isolate in real situations, and it is therefore highly complex to understand their individual influence on these human interactions. It is then necessary to find an alternative solution that can standardize the experiments and that allows the modification of only one parameter at a time. Video was first used, since the displayed experiment is perfectly repeatable and cut-offs (stopping the video at a specific time before its end) provide temporal information. Nevertheless, the absence of an adapted viewpoint and of stereoscopic vision means that video does not provide depth information, which is very meaningful. Moreover, during video recording sessions, a real human acts in front of a camera and not in front of an opponent. That interaction is therefore not a real interaction between humans.

Virtual Reality (VR) systems allow full standardization of the experimental situations and complete control of the virtual environment. This makes it possible to modify only one parameter at a time and observe its influence on the perception of the immersed subject. VR can then be used to understand what information is picked up to make a decision. Moreover, cut-offs can also be used to obtain temporal information about when information is picked up. When the subject can react as in a real situation, his movement (captured in real time) provides information about his reactions to the modified parameter. Not only perception is studied, but the complete perception-action loop. Perception and action are indeed coupled and influence each other, as suggested by Gibson in 1979.

Finally, VR allows the validation of virtual human models. Some models are indeed based on the interaction between the virtual character and other humans, such as a walking model. In that case, there are two ways to validate them. First, they can be compared to real data (e.g. real trajectories of pedestrians). But such data are not always available and are difficult to obtain. The alternative solution is then to use VR. The realism of the model is validated by immersing a real subject in a virtual environment in which a virtual character is controlled by the model. The model is then evaluated from how the immersed subject reacts when interacting with it and how realistic he feels the virtual character is.

3.3 Computer Animation

Computer animation is the branch of computer science devoted to models for the representation and simulation of the dynamic evolution of virtual environments. A first focus is the animation of virtual characters (behavior and motion). Through a deeper understanding of interactions using VR and through better perceptive, biomechanical and motion control models to simulate the evolution of dynamic systems, the MimeTIC team has the ability to build more realistic, efficient and believable animations. Perceptual studies also enable us to focus computation time on relevant information (i.e. what ensures natural motion from a perceptual point of view) and save time on unperceived details. The underlying challenges are (i) the computational efficiency of the system, which needs to run in real-time in many situations, (ii) the capacity of the system to generalise/adapt to new situations for which data were not available or models were not defined, and (iii) the variability of the models, i.e. their ability to handle many body morphologies and generate variations in motions that would be specific to each virtual character.

In many cases, however, these challenges cannot be addressed in isolation. Typically, character behaviors also depend on the nature and the topology of the environment they are surrounded by. In essence, a character animation system should also rely on smarter representations of the environment, in order to better perceive the environment itself and take contextualised decisions. Hence the animation of virtual characters in our context often needs to be coupled with models to represent the environment, to reason, and to plan both at a geometric level (can the character reach this location) and at a semantic level (should it use the sidewalk, the stairs, or the road). This represents the second focus. The underlying challenge is the ability to offer a compact yet precise representation on which efficient path planning, motion planning and high-level reasoning can be performed.

Finally, a third scientific focus is digital storytelling. Evolved representations of motions and environments enable realistic animations. It is yet equally important to question how these events should be portrayed, when and from which angle. In essence, this means integrating discourse models into story models, the story representing the sequence of events which occur in a virtual environment, and the discourse representing how this story should be displayed (i.e. which events to show, in which order and from which viewpoint). The underlying challenges pertain to:

- narrative discourse representations,

- projections of the discourse into the geometry, planning camera trajectories and planning cuts between the viewpoints,

- means to interactively control the unfolding of the discourse.

By establishing the foundations to build bridges between the high-level narrative structures, the semantic/geometric planning of motions and events, and low-level character animations, the MimeTIC team adopts a principled and all-inclusive approach to the animation of virtual characters.

4 Application domains

4.1 Animation, Autonomous Characters and Digital Storytelling

Computer Animation is one of the main application domains of the research work conducted in the MimeTIC team, in particular in relation to the entertainment and game industries. In these domains, creating virtual characters that are able to replicate real human motions and behaviours still raises unanswered key challenges, especially as virtual characters are required to populate virtual worlds. For instance, virtual characters are used to replace secondary actors and generate highly populated scenes that would be hard and costly to produce with real actors. This requires creating high-quality replicas that appear, move and behave both individually and collectively like real humans. The three key challenges for the MimeTIC team are therefore:

- to create natural animations (i.e., virtual characters that move like real humans),

- to create autonomous characters (i.e., that behave like real humans),

- to orchestrate the virtual characters so as to create interactive stories.

First, our challenge is to create animations of virtual characters that are natural, i.e. moving like a real human would. This challenge covers several aspects of Character Animation depending on the context of application, e.g., producing visually plausible or physically correct motions, producing natural motion sequences, etc. Our goal is therefore to develop novel methods for animating virtual characters, based on motion capture, data-driven approaches, or learning approaches. However, because of the complexity of human motion (number of degrees of freedom that can be controlled), resulting animations are not necessarily physically, biomechanically, or visually plausible. For instance, current physics-based approaches produce physically correct motions but not necessarily perceptually plausible ones. For all these reasons, most entertainment industries (gaming and movie production for example) still mainly rely on manual animation. Therefore, research in MimeTIC on character animation is also conducted with the goal of validating the results from an objective standpoint (physical, biomechanical) as well as a subjective one (visual plausibility).

Second, one of the main challenges in terms of autonomous characters is to provide a unified architecture for the modeling of their behavior. This architecture includes perception, action and decisional parts. The decisional part needs to mix different kinds of models, acting at different time scales and working with different kinds of data, ranging from numerical (motion control, reactive behaviors) to symbolic (goal-oriented behaviors, reasoning about actions and changes). For instance, autonomous characters play the role of actors that are driven by a scenario in video games and virtual storytelling. Their autonomy allows them to react to unpredictable user interactions and adapt their behavior accordingly. In the field of simulation, autonomous characters are used to simulate the behavior of humans in different kinds of situations. They make it possible to study new situations and their possible outcomes. In the MimeTIC team, our focus is therefore not to reproduce human intelligence but to propose an architecture making it possible to model credible behaviors of anthropomorphic virtual actors evolving/moving in real-time in virtual worlds. The latter can represent particular situations studied by behavioral psychologists or correspond to an imaginary universe described by a scenario writer. The proposed architecture should mimic all the human intellectual and physical functions.

Finally, interactive digital storytelling, including novel forms of edutainment and serious games, provides access to social and human themes through stories which can take various forms, and contains opportunities for massively enhancing the possibilities of interactive entertainment, computer games and digital applications. It provides chances for redefining the experience of narrative through interactive simulations of computer-generated story worlds and opens many challenging questions at the overlap between computational narratives, autonomous behaviours, interactive control, content generation and authoring tools. Of particular interest for the MimeTIC research team, virtual storytelling triggers challenging opportunities in providing effective models for enforcing autonomous behaviours for characters in complex 3D environments. Offering both low-level capacities to characters (such as perceiving the environment, interacting with it and reacting to changes in its topology) and higher-level capacities built on them (such as modelling abstract representations for efficient reasoning, planning paths and activities, and modelling cognitive states and behaviours) requires expressive, multi-level and efficient computational models. Furthermore, virtual storytelling requires the seamless control of the balance between the autonomy of characters and the unfolding of the story through the narrative discourse. Virtual storytelling also raises challenging questions on the conveyance of a narrative through interactive or automated control of the cinematography (how to stage the characters, the lights and the cameras). For example, estimating the visibility of key subjects and performing motion planning for cameras and lights are central issues which have not received satisfactory answers in the literature.

4.2 Fidelity of Virtual Reality

VR is a powerful tool for perception-action experiments. VR-based experimental platforms allow exposing a population to fully controlled stimuli that can be repeated from trial to trial with high accuracy. Factors can be isolated and object manipulations (position, size, orientation, appearance, etc.) are easy to perform. Stimuli can be interactive and adapted to participants’ responses. Such features allow researchers to use VR to perform experiments in sports, motion control, perceptual control laws, spatial cognition as well as person-person interactions. However, the interaction loop between users and their environment differs in virtual conditions in comparison with real conditions. When a user interacts in an environment, action and perception are closely related. While moving, the perceptual system (vision, proprioception, etc.) provides feedback about the user’s own motion and information about the surrounding environment. That allows the user to adapt his/her trajectory to sudden changes in the environment and generate a safe and efficient motion. In virtual conditions, the interaction loop is more complex because it involves several material aspects.

First, the virtual environment is perceived through a digital display, which could affect the available information and thus potentially introduce a bias. For example, studies observed a distance compression effect in VR, partially explained by the use of a Head Mounted Display with a reduced field of view that exerts a weight and torques on the user’s head. Similarly, the perceived velocity in a VR environment differs from the real-world velocity, introducing an additional bias. Other factors, such as the image contrast, delays in the displayed motion and the point of view, can also influence efficiency in VR. The second point concerns the user’s motion in the virtual world. The user can actually move if the virtual room is big enough or if wearing a head-mounted display. Even with real motion, studies have shown that walking speed is decreased, personal space size is modified and navigation in VR is performed with increased gait instability. Although natural locomotion is certainly the most ecological approach, the limited physical size of VR setups prevents its use most of the time. Locomotion interfaces are therefore required. They are made up of two components, a locomotion metaphor (device) and a transfer function (software), that can also introduce bias in the generated motion. Indeed, the actuating movement of the locomotion metaphor can significantly differ from real walking, and the simulated motion depends on the transfer function applied. Locomotion interfaces usually cannot preserve all the sensory channels involved in locomotion.
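As an illustration of the second component, the sketch below shows one possible transfer function for a hypothetical joystick-like locomotion device. The dead zone, the maximum speed of 1.4 m/s and the time step are assumptions made for the example, not values taken from a specific interface.

```python
import math


def transfer_function(deflection, dead_zone=0.1, max_speed=1.4):
    """Map a normalised device deflection in [-1, 1] to a virtual speed (m/s).

    Deflections inside the dead zone are ignored; beyond it, the speed grows
    linearly up to max_speed. All parameter values are illustrative.
    """
    deflection = max(-1.0, min(1.0, deflection))
    magnitude = abs(deflection)
    if magnitude <= dead_zone:
        return 0.0
    scaled = (magnitude - dead_zone) / (1.0 - dead_zone)
    return math.copysign(scaled * max_speed, deflection)


def simulate(deflections, dt=0.1):
    """Integrate the virtual speed over time and return the final position (m)."""
    position = 0.0
    for deflection in deflections:
        position += transfer_function(deflection) * dt
    return position


if __name__ == "__main__":
    # One second of full forward deflection followed by one second at rest.
    print(simulate([1.0] * 10 + [0.0] * 10))
```

Changing the dead zone or the gain changes the virtual trajectory produced by the same physical input, which is one way a transfer function can bias the generated motion.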

When studying human behavior in VR, the aforementioned factors in the interaction loop potentially introduce bias both in the perception and in the generation of motor behavior trajectories. MimeTIC is working on the mandatory step of VR validation to make it usable for capturing and analyzing human motion.

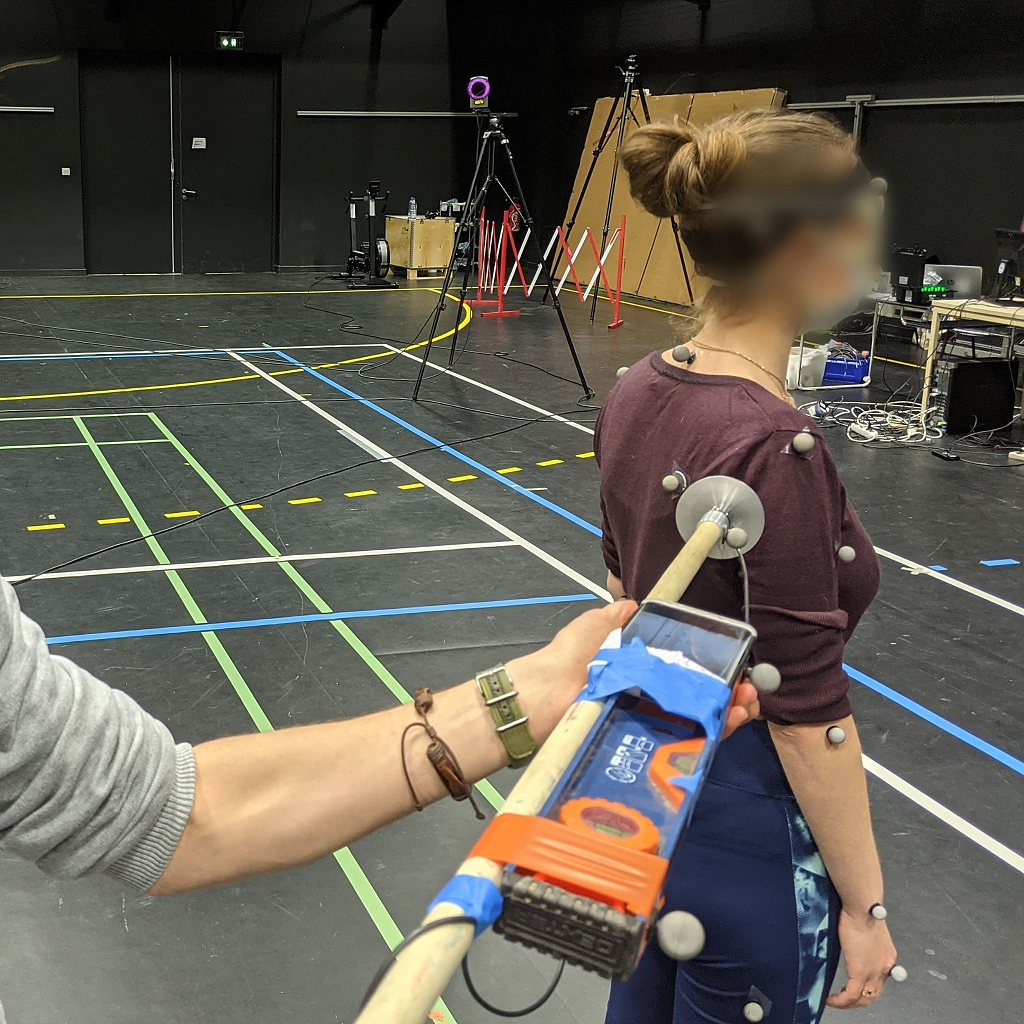

4.3 Motion Sensing of Human Activity

Recording human activity is a key point of many applications and fundamental works. Numerous sensors and systems have been proposed to measure positions, angles or accelerations of the user's body parts. Whatever the system is, one of the main problems is to be able to automatically recognize and analyze the user's performance from poor and noisy signals. Human activity and motion are subject to variability: intra-variability due to space and time variations of a given motion, but also inter-variability due to different styles and anthropometric dimensions. MimeTIC has addressed the above problems in two main directions.

First, we have studied how to recognize and quantify motions performed by a user when using accurate systems such as Vicon (product of Oxford Metrics), Qualisys, or Optitrack (product of Natural Point) motion capture systems. These systems provide large vectors of accurate information. Due to the size of the state vector (all the degrees of freedom), the challenge is to find the compact information (named features) that enables the automatic system to recognize the performance of the user. Whatever the method used, finding relevant features that are not sensitive to intra-individual and inter-individual variability is a challenge. Some researchers have proposed to manually edit these features (such as a Boolean value stating whether the arm is moving forward or backward) so that the expertise of the designer is directly linked with the success ratio. Many generic features have been proposed, such as using Laban notation, which was introduced to encode dancing motions. Other approaches tend to use machine learning to automatically extract these features. However, most of the proposed approaches were used to search a database for motions whose properties correspond to the features of the user's performance (named motion retrieval approaches). This does not ensure the retrieval of the exact performance of the user but a set of motions with similar properties.
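A hand-designed Boolean feature of this kind can be sketched as follows. The example is hypothetical: it assumes a wrist position sampled along the forward axis (in metres), a noise threshold `min_step`, and function names introduced only for illustration. Collapsing consecutive repeats gives a short signature that does not depend on the duration of the motion.

```python
def arm_moving_forward(wrist_x, min_step=0.005):
    """Per-frame Boolean feature computed from forward-axis wrist positions.

    The value becomes True when the wrist advances by more than min_step
    between two frames, False when it retreats by more than min_step, and
    keeps its previous value otherwise (to reject small noisy changes).
    """
    features = []
    state = False
    for previous, current in zip(wrist_x, wrist_x[1:]):
        step = current - previous
        if step > min_step:
            state = True
        elif step < -min_step:
            state = False
        features.append(state)
    return features


def collapse(features):
    """Remove consecutive repeats so that the signature ignores timing."""
    signature = []
    for value in features:
        if not signature or signature[-1] != value:
            signature.append(value)
    return signature


if __name__ == "__main__":
    slow = [0.0, 0.02, 0.04, 0.06, 0.05, 0.03, 0.01]  # slow reach and return
    fast = [0.0, 0.03, 0.06, 0.02, 0.0]               # same gesture, faster
    print(collapse(arm_moving_forward(slow)))
    print(collapse(arm_moving_forward(fast)))
```

Both recordings yield the same signature although they differ in duration and amplitude, which is the kind of insensitivity to variability such features aim for. The quality of the result depends directly on the designer's choice of axis and threshold.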

Second, we wish to find alternatives to the above approach, which is based on analyzing accurate and complete knowledge of joint angles and positions. New sensors such as depth cameras (Kinect, product of Microsoft) provide us with very noisy joint information but also with the surface of the user. Classical approaches would try to fit a skeleton into the surface in order to compute joint angles which, again, leads to large state vectors. An alternative would be to extract relevant information directly from the raw data, such as the surface provided by depth cameras. The key problem is that the nature of these data may be very different from classical representations of human performance. In MimeTIC, we try to address this problem in some application domains that require picking specific information, such as gait asymmetry or regularity for clinical analysis of human walking.
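As an example of such specific information, gait asymmetry is often summarised by a symmetry index that compares a left-side and a right-side measurement. The sketch below uses one common formulation and assumes that left and right step durations have already been extracted from the sensor data by some upstream method. It is not the measure used by the team, and the function names are illustrative.

```python
def mean(values):
    """Arithmetic mean of a non-empty list of numbers."""
    return sum(values) / len(values)


def symmetry_index(left, right):
    """Symmetry index in percent; 0 means perfectly symmetric values."""
    average = 0.5 * (left + right)
    if average == 0:
        raise ValueError("left and right values must not both be zero")
    return 100.0 * abs(left - right) / average


def gait_asymmetry(left_step_times, right_step_times):
    """Symmetry index computed on mean left and right step durations (s)."""
    return symmetry_index(mean(left_step_times), mean(right_step_times))


if __name__ == "__main__":
    # Hypothetical step durations in seconds for a few gait cycles.
    left = [0.58, 0.60, 0.62]
    right = [0.50, 0.52, 0.51]
    print(round(gait_asymmetry(left, right), 1))
```

A single scalar of this kind is far more compact than a full set of joint angles, which is why extracting it directly from raw data is attractive for clinical use.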

4.4 Sports

Sport is characterized by complex displacements and motions. One main objective is to understand the determinants of performance through the analysis of the motion itself. In the team, different sports have been studied, such as the tennis serve, where the goal was to understand the contribution of each body segment to the performance as well as the risk of injury, and other situations in cycling, swimming, fencing or soccer. Sport motions depend on the visual information that athletes can pick up in their environment, including the opponent's actions. Perception is thus fundamental to the performance. Indeed, a sports action, being unique, complex and often limited in time, requires a selective gathering of information. This perception is often seen as a prerequisite for action. It then takes the role of a passive collector of information. However, as mentioned by Gibson in 1979, the perception-action relationship should not be considered sequentially but rather as a coupling: we perceive to act but we must act to perceive. There would thus be laws of coupling between the informational variables available in the environment and the motor responses of a subject. In other words, athletes have the ability to perceive the opportunities for action directly from the environment. Whichever school of thought is considered, VR offers new perspectives to address these concepts, complemented by real-time motion capture of the immersed athlete.

In addition to helping better understand sports and interactions between athletes, VR can also be used as a training environment, as it can provide complementary tools to coaches. It is indeed possible to add visual or auditory information to better train an athlete. For example, the knowledge gained in perceptual experiments can be used to highlight the body parts that are important to look at in order to correctly anticipate the opponent's action.

4.5 Ergonomics

The design of workstations nowadays tends to include assessment steps in a Virtual Environment (VE) to evaluate ergonomic features. This approach is more cost-effective and convenient since working directly on the Digital Mock-Up (DMU) in a VE is preferable to constructing a real physical mock-up in a Real Environment (RE). This is substantiated by the fact that a Virtual Reality (VR) set-up can be easily modified, enabling quick adjustments of the workstation design. Indeed, the aim of integrating ergonomics evaluation tools in VEs is to facilitate the design process, enhance the design efficiency, and reduce the costs.

The development of such platforms calls for several improvements in the field of motion analysis and VR. First, interactions have to be as natural as possible to properly mimic the motions performed in real environments. Second, the fidelity of the simulator also needs to be correctly evaluated. Finally, motion analysis tools have to be able to provide, in real time, biomechanical quantities usable by ergonomists to analyse and improve the working conditions.

In real working conditions, motion analysis and musculoskeletal risk assessment also raise many scientific and technological challenges. As in virtual reality, the fidelity of the working process may be affected by the measurement method. Wearing sensors or skin markers, together with the need to frequently calibrate the assessment system, may change the way workers perform the tasks. Whatever the measurement method, classical ergonomic assessments generally address one specific parameter (such as posture, force, or repetitions), which makes it difficult to design a musculoskeletal risk factor that actually represents this risk. Another key scientific challenge is then to design new indicators that better capture the risk of musculoskeletal disorders. However, such indicators have to deal with the trade-off between accurate biomechanical assessment and the difficulty of obtaining the required information reliably in real working conditions.

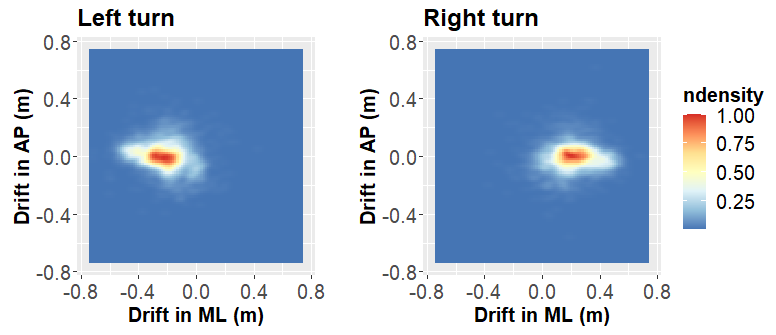

4.6 Locomotion and Interactions between walkers

Modeling and simulating locomotion and interactions between walkers is a very active, complex and competitive domain, investigated by various disciplines such as mathematics, cognitive sciences, physics, computer graphics, rehabilitation, etc. Locomotion and interactions between walkers are by definition at the very core of our society since they represent the basic synergies of our daily life. When walking in the street, we produce a locomotor movement while gathering information about our surrounding environment in order to interact with people, move without collision, alone or in a group, and intercept, meet or escape from somebody. MimeTIC is an international key contributor in the domain of understanding and simulating locomotion and interactions between walkers. By combining an approach based on Human Movement Sciences and Computer Sciences, the team focuses on locomotor invariants which characterize the generation of locomotor trajectories, and conducts challenging experiments on the visuo-motor coordination involved in interactions between walkers, using both real and virtual set-ups. One main challenge is to consider and model not only the "average" behaviour of healthy young adults but also that of specific populations, considering the effect of pathology or age (kids, older adults). As a first example, when patients cannot walk efficiently, in particular those suffering from central nervous system affections, it becomes very useful for practitioners to benefit from an objective evaluation of their capacities. To facilitate such evaluations, we have developed two complementary indices, one based on kinematics and the other one on muscle activations. One major point of our research is that such indices are usually only developed for children, whereas adults with these affections are much more numerous. We extend this objective evaluation by using a person-person interaction paradigm, which allows studying visuo-motor strategy deficits in these specific populations.

Another fundamental question is the adaptation of the walking pattern according to anatomical constraints, such as pathologies in orthopedics, or adaptation to various human and non-human primates in paleoanthropology. Hence, the question is to predict plausible locomotion according to a given morphology. This raises fundamental questions about the variables that are regulated to control gait: balance control, minimum energy, minimum jerk, etc. In MimeTIC we develop models and simulators to efficiently test hypotheses on gait control for given morphologies.

5 Highlights of the year

- MimeTIC is leading DIGISPORT, one of the 24 PIA3 EUR projects (among 81 projects submitted). This project aims at creating a graduate school to support multidisciplinary research on sports, with the main Rennes actors in sports sciences, computer sciences, electronics, data sciences, and human and social sciences. The project launch meeting took place on December 10, 2021 with political, financial, sports, academic and socio-professional representatives. It marks the launch of the project, with already 18 funding and thesis accreditations, but also and above all the opening of the DIGISPORT Master's degree, co-accredited by the two Rennes universities and the grandes écoles INSA, ENS, ENSAI, CentraleSupelec.

- A member of MimeTIC is leading one of the 12 PIA PPR projects "Sport Très Haute Performance" whose objective is to optimize the performance of French athletes for the Paris 2024 Olympics. The objective of the REVEA project is to exploit the unique properties of virtual reality to optimize the perceptual-motor and cognitive processes underlying performance. The French Boxing Federation wishes to improve the perceptual-motor anticipation capacities of boxers in opposition situations while reducing impacts and thus the risk of injuries. The French Athletics Federation wishes to improve the perceptual-motor anticipation capacities of athletes in cooperation situations (4x100m relay) without running at high intensity. The French Gymnastics Federation wishes to optimize the movements of its gymnasts by observing their own motor production to avoid increasing the physical training load even more.

5.1 Awards

- Franck Multon received the "Transition" prize from the "Trophées Valorisation du Campus d'innovation", University of Rennes, for his involvement in the transfer of the KIMEA software to the Moovency start-up company (November 2021).

6 New software and platforms

MimeTIC is strongly involved in the immerStar platform described below.

6.1 New software

6.1.1 AsymGait

-

Name:

Asymmetry index for clinical gait analysis based on depth images

-

Keywords:

Motion analysis, Kinect, Clinical analysis

-

Scientific Description:

The system uses depth images delivered by the Microsoft Kinect to first retrieve the gait cycles. To this end, it analyzes the trajectories of the knees instead of the feet to obtain more robust gait event detection. Based on these cycles, the system computes a mean gait cycle model to decrease the effect of sensor noise. Asymmetry is then computed at each frame of the gait cycle as the spatial difference between the left and right parts of the body.

-

Functional Description:

AsymGait is a software package that works with Microsoft Kinect data, especially depth images, in order to carry out clinical gait analysis. First, it identifies the main gait events (footstrike, toe-off) using the depth information to isolate gait cycles. Then it computes a continuous asymmetry index within the gait cycle. Asymmetry is viewed as a spatial difference between the two sides of the body.

-

Contact:

Franck Multon

-

Participants:

Edouard Auvinet, Franck Multon
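
The two steps described for AsymGait (averaging gait cycles, then computing a per-frame left/right difference) can be sketched as follows. This is a simplified illustration and not the AsymGait implementation: it assumes each side is summarized by one scalar signal per frame, that all cycles were already resampled to the same length, and that the right side is compared after a half-cycle shift because the two legs move in antiphase. The function names are hypothetical.

```python
from typing import List


def mean_cycle(cycles: List[List[float]]) -> List[float]:
    """Average several gait cycles frame by frame to reduce measurement noise."""
    n_frames = len(cycles[0])
    if any(len(cycle) != n_frames for cycle in cycles):
        raise ValueError("cycles must be resampled to the same number of frames")
    return [sum(cycle[i] for cycle in cycles) / len(cycles) for i in range(n_frames)]


def asymmetry_index(left: List[float], right: List[float]) -> List[float]:
    """Per-frame absolute difference between the left side and the
    half-cycle-shifted right side (the shift is an assumption of this sketch)."""
    n = len(left)
    if len(right) != n:
        raise ValueError("left and right cycles must have the same length")
    half = n // 2
    return [abs(left[i] - right[(i + half) % n]) for i in range(n)]


# Hypothetical, already segmented and resampled signals (4 frames per cycle).
left_mean = mean_cycle([[0.0, 1.0, 0.0, -1.0], [0.0, 3.0, 0.0, -1.0]])
right_mean = mean_cycle([[0.0, -1.0, 0.0, 1.0]])
print(asymmetry_index(left_mean, right_mean))
```

A perfectly symmetric gait would yield an index of zero at every frame under these assumptions; non-zero values localize the asymmetry within the cycle.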

6.1.2 Cinematic Viewpoint Generator

-

Keyword:

3D animation

-

Functional Description:

The software, developed as an API, provides a means to automatically compute a collection of viewpoints over one or two specified geometric entities, in a given 3D scene, at a given time. These viewpoints satisfy classical cinematographic framing conventions and guidelines, including different shot scales (from extreme long shot to extreme close-up), different shot angles (internal, external, parallel, apex), and different screen compositions (thirds, fifths, symmetric or dissymmetric). The viewpoints cover the range of possible framings for the specified entities. The computation of such viewpoints relies on a database of framings that are dynamically adapted to the 3D scene by using a manifold parametric representation and that guarantee the visibility of the specified entities. The set of viewpoints is also automatically annotated with cinematographic tags such as shot scales, angles, compositions, relative placement of entities, and line of interest.

-

Contact:

Marc Christie

-

Participants:

Christophe Lino, Emmanuel Badier, Marc Christie

-

Partners:

Université d'Udine, Université de Nantes

6.1.3 CusToM

-

Name:

Customizable Toolbox for Musculoskeletal simulation

-

Keywords:

Biomechanics, Dynamic Analysis, Kinematics, Simulation, Mechanical multi-body systems

-

Scientific Description:

The present toolbox aims at performing motion analysis using an inverse dynamics method.

Before performing the motion analysis steps, a musculoskeletal model is generated. This consists of, first, generating the desired anthropometric model from model libraries. The generated model is then kinematically calibrated using motion capture data. The inverse kinematics step, the inverse dynamics step and the muscle forces estimation step are then successively performed from motion capture and external forces data. Two folders and one script are available at the toolbox root. The Main script collects all the different functions of the motion analysis pipeline. The Functions folder contains all functions used in the toolbox. It is necessary to add this folder and all its subfolders to the Matlab path. The Problems folder contains the different studies. The user has to create one subfolder for each new study. Whenever a new musculoskeletal model is used, a new study is necessary. Different files will be automatically generated and saved in this folder. All files located at its root are related to the model and are valid whatever the motion considered. A new folder will be added for each new motion capture. All files located in such a folder are only related to the considered motion.

-

Functional Description:

Inverse kinematics, inverse dynamics, muscle forces estimation, external forces prediction

-

Contact:

Charles Pontonnier

-

Participants:

Antoine Muller, Charles Pontonnier, Georges Dumont, Pierre Puchaud, Anthony Sorel, Claire Livet, Louise Demestre

6.1.4 Directors Lens Motion Builder

-

Keywords:

Previsualization, Virtual camera, 3D animation

-

Functional Description:

Directors Lens Motion Builder is a software plugin for Autodesk's Motion Builder animation tool. This plugin features a novel workflow to rapidly prototype cinematographic sequences in a 3D scene, and is dedicated to the 3D animation and movie previsualization industries. The workflow integrates the automated computation of viewpoints (using the Cinematic Viewpoint Generator) to interactively explore different framings of the scene, provides means to interactively control framings in the image space, and provides a technique to automatically retarget a camera trajectory from one scene to another while enforcing visual properties. The tool also supports editing the cinematographic sequence and exporting the animation. The software can be linked to different virtual camera systems available on the market.

-

Contact:

Marc Christie

-

Participants:

Christophe Lino, Emmanuel Badier, Marc Christie

-

Partner:

Université de Rennes 1

6.1.5 Kimea

-

Name:

Kinect IMprovement for Ergonomics Assessment

-

Keywords:

Biomechanics, Motion analysis, Kinect

-

Scientific Description:

Kimea corrects skeleton data delivered by a Microsoft Kinect for ergonomics purposes. Kimea is able to manage most of the occlusions that can occur in real working situations, on workstations. To this end, Kimea relies on a database of examples/poses organized as a graph, in order to replace unreliable body segment reconstructions with poses that have already been measured on real subjects. The potential pose candidates are used in an optimization framework.

-

Functional Description:

Kimea takes Kinect skeleton data as input and corrects most measurement errors to carry out ergonomic assessment at the workstation.

-

Contact:

Franck Multon

-

Participants:

Franck Multon, Hubert Shum, Pierre Plantard

-

Partner:

Faurecia

6.1.6 Populate

-

Keywords:

Behavior modeling, Agent, Scheduling

-

Scientific Description:

The software provides the following functionalities:

- A high-level XML dialect dedicated to the description of agents' activities in terms of tasks and sub-activities that can be combined with different kinds of operators: sequential, without order, interlaced. This dialect also enables the description of time and location constraints associated with tasks.

- An XML dialect that enables the description of agent's personal characteristics.

- An informed graph describes the topology of the environment as well as the locations where tasks can be performed. A bridge between TopoPlan and Populate has also been designed. It provides an automatic analysis of an informed 3D environment that is used to generate an informed graph compatible with Populate.

- The generation of a valid task schedule based on the previously mentioned descriptions.

With a good configuration of agents' characteristics (based on statistics), we demonstrated that task schedules produced by Populate are representative of human ones. In conjunction with TopoPlan, it has been used to populate a district of Paris as well as imaginary cities with several thousands of pedestrians navigating in real time.

-

Functional Description:

Populate is a toolkit dedicated to task scheduling under time and space constraints in the field of behavioral animation. It is currently used to populate virtual cities with pedestrians performing different kinds of activities involving travel between different locations. However, the generic aspect of the algorithm and underlying representations enables its use in a wide range of applications that need to link activity, time and space. The main scheduling algorithm relies on the following inputs: an informed environment description, an activity an agent needs to perform, and the individual characteristics of this agent. The algorithm produces a valid task schedule compatible with the time and spatial constraints imposed by the activity description and the environment. In this task schedule, time intervals relating to travel and task fulfillment are identified, and locations where tasks should be performed are automatically selected.

-

Contact:

Fabrice Lamarche

-

Participants:

Carl-Johan Jorgensen, Fabrice Lamarche
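
To make the notion of a task schedule with travel intervals and automatically selected locations more concrete, here is a deliberately small sketch. It is not Populate's algorithm or data model: it only handles a sequential list of tasks, ignores time constraints and agent characteristics, and greedily picks the closest admissible location. All names (`Task`, `greedy_schedule`, the sample places) are hypothetical.

```python
from typing import Dict, List, NamedTuple, Tuple


class Task(NamedTuple):
    name: str
    duration: float        # minutes needed to perform the task
    locations: List[str]   # places where the task can be performed


Interval = Tuple[str, str, float, float]  # (label, location, start, end)


def travel_cost(travel_time: Dict[Tuple[str, str], float], origin: str, destination: str) -> float:
    """Travel time between two places; staying in place costs nothing."""
    return 0.0 if origin == destination else travel_time[(origin, destination)]


def greedy_schedule(tasks: List[Task], travel_time: Dict[Tuple[str, str], float],
                    start_location: str, start_time: float = 0.0) -> List[Interval]:
    """Perform tasks in order, each at the closest admissible location."""
    timeline: List[Interval] = []
    here, now = start_location, start_time
    for task in tasks:
        target = min(task.locations, key=lambda loc: travel_cost(travel_time, here, loc))
        trip = travel_cost(travel_time, here, target)
        if trip > 0.0:
            timeline.append(("travel", target, now, now + trip))
            now += trip
        timeline.append((task.name, target, now, now + task.duration))
        now += task.duration
        here = target
    return timeline


# Hypothetical environment: travel times in minutes between named places.
times = {("home", "bakery"): 5.0, ("home", "supermarket"): 12.0, ("bakery", "office"): 10.0}
activity = [Task("buy bread", 3.0, ["bakery", "supermarket"]), Task("work", 60.0, ["office"])]
for interval in greedy_schedule(activity, times, "home"):
    print(interval)
```

A greedy choice keeps the example short; handling unordered or interlaced tasks and time windows, as described above, would require a search over orderings and locations.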

6.1.7 PyNimation

-

Keywords:

Moving bodies, 3D animation, Synthetic human

-

Scientific Description:

PyNimation is a Python-based open-source (AGPL) software for editing motion capture data. It was initiated because of a lack of open-source software able to process different types of motion capture data in a unified way, which typically forces animation pipelines to rely on several commercial software packages. For instance, motions are captured with one software, retargeted using another one, then edited using a third one, etc. The goal of PyNimation is therefore to bridge the gap in the animation pipeline between motion capture software and final game engines, by handling different types of motion capture data in a unified way, providing standard and novel motion editing solutions, and exporting motion capture data in formats compatible with common 3D game engines (e.g., Unity, Unreal). At the same time, it supports our research efforts in this area: it is used, maintained, and extended to progressively include novel motion editing features and to integrate the results of our research projects. In the short term, our goal is to further extend its capabilities and to share it more widely with the animation/research community.

-

Functional Description:

PyNimation is a framework for editing, visualizing and studying skeletal 3D animations. It was designed more particularly to process motion capture data. It stems from the wish to utilize Python's data science capabilities and ease of use for human motion research.

In its version 1.0, PyNimation offers the following functionalities, which are intended to evolve with the development of the tool:

- Import / Export of FBX, BVH, and MVNX animation file formats

- Access and modification of skeletal joint transformations, as well as a certain number of functionalities to manipulate these transformations

- Basic features for human motion animation (under development, but including e.g. different methods of inverse kinematics, editing filters, etc.)

- Interactive visualisation in OpenGL for animations and objects, including the possibility to animate skinned meshes

-

Authors:

Ludovic Hoyet, Robin Adili, Benjamin Niay, Alberto Jovane

-

Contact:

Ludovic Hoyet
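
For readers unfamiliar with skeletal joint transformations, the kind of computation such a framework manipulates can be illustrated with a planar forward kinematics chain. The sketch below does not use the PyNimation API (which is not described here); the function name and the 2D simplification are assumptions made for the example.

```python
import math
from typing import List, Sequence, Tuple


def forward_kinematics(bone_lengths: Sequence[float],
                       joint_angles: Sequence[float]) -> List[Tuple[float, float]]:
    """Return the 2D position of each joint of a chain rooted at the origin.

    joint_angles are local rotations (radians), each relative to the parent bone.
    """
    x = y = 0.0
    heading = 0.0
    positions = [(x, y)]
    for length, angle in zip(bone_lengths, joint_angles):
        heading += angle                 # accumulate local rotations down the hierarchy
        x += length * math.cos(heading)
        y += length * math.sin(heading)
        positions.append((x, y))
    return positions


# Two bones of unit length: the first points up, the second bends back to horizontal.
print(forward_kinematics([1.0, 1.0], [math.pi / 2, -math.pi / 2]))
```

Editing a local joint rotation changes the positions of all its descendants, which is why motion editing tools expose both local and global transformations.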

6.1.8 The Theater

-

Keywords:

3D animation, Interactive Scenarios

-

Functional Description:

The Theater is a software framework to develop interactive scenarios in virtual 3D environments. The framework provides means to author and orchestrate 3D character behaviors and simulate them in real time. The tool provides a basis to build a range of 3D applications, from simple simulations with reactive behaviors to complex storytelling applications including narrative mechanisms such as flashbacks.

-

Contact:

Marc Christie

-

Participant:

Marc Christie

6.2 New platforms

6.2.1 Immerstar Platform

Participants: Georges Dumont [contact], Ronan Gaugne, Anthony Sorel, Richard Kulpa.

With the two virtual reality platforms, Immersia and Immermove, grouped under the name Immerstar, the team has access to high-level scientific facilities. This equipment benefits the research teams of the center and has allowed them to extend their local, national and international collaborations. The Immerstar platform was granted Inria CPER funding for 2015-2019, which enabled important evolutions of the equipment. In 2016, the first technical evolutions were decided and, in 2017, these evolutions were implemented. On the one hand, for Immermove, the addition of a third face to the immersive space and the extension of the Vicon tracking system have been realized, and continued this year with 23 new cameras. On the other hand, for Immersia, WQXGA laser projectors with increased global resolution, a new tracking system with higher frequency, and new computers for simulation and image generation were installed in 2017. In 2018, a Scale One haptic device was installed. As planned in the CPER proposal, it allows one- or two-handed haptic feedback in the full space covered by Immersia, with the possibility of carrying the user. Based on these supports, in 2020, we participated in a PIA3-Equipex+ proposal. This proposal, CONTINUUM, involves 22 partners; it has been successfully evaluated and will be funded. The CONTINUUM project will create a collaborative research infrastructure of 30 platforms located throughout France, to advance interdisciplinary research based on interaction between computer science and the human and social sciences. Thanks to CONTINUUM, 37 research teams will develop cutting-edge research programs focusing on visualization, immersion, interaction and collaboration, as well as on human perception, cognition and behaviour in virtual/augmented reality, with potential impact on societal issues.

CONTINUUM enables a paradigm shift in the way we perceive, interact, and collaborate with complex digital data and digital worlds by putting humans at the center of the data processing workflows. The project will empower scientists, engineers and industry users with a highly interconnected network of high-performance visualization and immersive platforms to observe, manipulate, understand and share digital data, real-time multi-scale simulations, and virtual or augmented experiences. All platforms will feature facilities for remote collaboration with other platforms, as well as mobile equipment that can be lent to users to facilitate onboarding. The kick-off meeting of CONTINUUM will be held on January 14, 2022.

7 New results

7.1 Outline

In 2021, MimeTIC has maintained its activity in motion analysis, modelling and simulation, to support the idea that these approaches are strongly coupled in a motion analysis-synthesis loop. This idea has been applied to the main application domains of MimeTIC:

- Animation, Autonomous Characters and Digital Storytelling,

- Fidelity of Virtual Reality,

- Motion sensing of Human Activity,

- Sports,

- Ergonomics,

- Locomotion and Interactions Between Walkers.

7.2 Animation, Autonomous Characters and Digital Storytelling

MimeTIC's main research path consists in associating motion analysis and synthesis to enhance naturalness in computer animation, with applications in camera control, movie previsualisation, and autonomous virtual character control. Thus, we pushed example-based techniques in order to reach a good trade-off between simulation efficiency and naturalness of the results. In 2021, to achieve this goal, MimeTIC continued to explore the use of perceptual studies and model-based approaches, but also began to investigate deep learning.

7.2.1 Binary Graph Descriptor for Robust Relocalization on Heterogeneous Data

Participants: Marc Christie [contact], Xi Wang.

Motion analysis in open and uncontrolled environments remains a challenging task. Here we address the specific issue of robust camera relocalization, which finds many applications in SLAM (Simultaneous Localization and Mapping) systems: extracting camera trajectories from movies, robust augmented reality, motion matching, etc. In 2021, we proposed a novel binary graph descriptor to improve loop detection for visual SLAM systems. Our contribution is twofold: i) a graph embedding technique for generating binary descriptors which conserve both spatial and histogram information extracted from images; ii) a generic means of combining multiple layers of heterogeneous data into the proposed binary graph descriptor, coupled with a matching and geometric checking method. We also introduce an implementation of our descriptor into an incremental Bag-of-Words (iBoW) structure that improves efficiency and scalability, and propose a method to interpret Deep Neural Network (DNN) results. We evaluate our system on synthetic and real datasets across different lighting and seasonal conditions. The proposed method outperforms state-of-the-art loop detection frameworks in terms of relocalization precision and computational performance, and displays high robustness on cross-condition datasets. Paper and results are reported in 30.
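
Binary descriptors are commonly compared with the Hamming distance, which is cheap to compute. The sketch below illustrates that generic idea only: it assumes descriptors are stored as Python integers and uses a brute-force search with a hypothetical distance threshold. It does not reproduce the graph embedding, the iBoW structure, or the geometric checking of the method reported above.

```python
from typing import Dict, Optional, Tuple


def hamming_distance(a: int, b: int) -> int:
    """Number of differing bits between two binary descriptors stored as integers."""
    return bin(a ^ b).count("1")


def best_loop_candidate(query: int, keyframes: Dict[str, int],
                        max_distance: int) -> Optional[Tuple[str, int]]:
    """Return the closest keyframe and its distance, or None if it is too far."""
    if not keyframes:
        return None
    name = min(keyframes, key=lambda k: hamming_distance(query, keyframes[k]))
    distance = hamming_distance(query, keyframes[name])
    return (name, distance) if distance <= max_distance else None


# Hypothetical 8-bit descriptors for three stored keyframes.
database = {"kf_0": 0b10110010, "kf_1": 0b01101100, "kf_2": 0b10110110}
print(best_loop_candidate(0b10110011, database, max_distance=2))
```

In a full system, a candidate returned by such a search would still have to pass a geometric verification step before being accepted as a loop closure.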

7.2.2 TT SLAM: Dense Monocular SLAM for Planar Environments

Participants: Marc Christie [contact], Xi Wang.

In addition to 30, we also report our 2021 work on a novel visual SLAM method with dense planar reconstruction using a monocular camera: TT-SLAM. The method exploits planar template-based trackers (TT) to compute camera poses and reconstructs a multi-planar scene representation. Multiple homographies are estimated simultaneously by clustering a set of template trackers supported by superpixelized regions. Compared to RANSAC-based multiple-homography methods, data association and keyframe selection issues are handled by the continuous nature of template trackers. A non-linear optimization process is applied to all the homographies to improve the precision of pose estimation. Experiments show that the proposed method outperforms RANSAC-based multiple-homography methods as well as other dense SLAM techniques such as LSD-SLAM or DPPTAM. It competes with keypoint-based techniques like ORB-SLAM while providing dense planar reconstructions of the environment. The work is reported in 41.

7.2.3 Analyzing visual attention in movies

Participants: Marc Christie [contact], Alexandre Bruckert.

With the overarching objective of better understanding movies through their high-level visual characteristics (camera motions, camera angles, scene layouts and mise-en-scene), we have been searching for better automated visual saliency techniques. Indeed, the way a film director plays with the spectator's gaze is a key stylistic feature. A first work consisted in better estimating this visual saliency by improving existing techniques through well-designed loss functions. Deep learning techniques are widely used to model human visual saliency, to such a point that state-of-the-art performance is now only attained by deep neural networks. However, one key part of a typical deep learning model is often neglected when it comes to modeling visual saliency: the choice of the loss function.

In this work, we explored some of the most popular loss functions that are used in deep saliency models. We demonstrate that, on a fixed network architecture, modifying the loss function can significantly improve (or degrade) the results, hence emphasizing the importance of the choice of the loss function when designing a model. We also evaluate the relevance of new loss functions for saliency prediction inspired by metrics used in style-transfer tasks. Finally, we show that a linear combination of several well-chosen loss functions leads to significant improvements in performance on different datasets as well as on a different network architecture, thus demonstrating the robustness of a combined metric.
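
The idea of a linear combination of loss functions can be sketched in a few lines. The two terms used here (a Kullback-Leibler divergence and one minus the Pearson correlation coefficient) are common choices in the saliency literature, but their selection and the weights are assumptions of this example, not the combination evaluated in the work above. Saliency maps are flattened to plain lists for simplicity.

```python
import math
from typing import List, Sequence

EPS = 1e-8


def _normalize(values: Sequence[float]) -> List[float]:
    """Scale a non-negative map so that it sums to one (a probability distribution)."""
    total = sum(values)
    return [v / total for v in values]


def kl_divergence(target: Sequence[float], pred: Sequence[float]) -> float:
    """KL divergence between target and predicted maps seen as distributions."""
    t, q = _normalize(target), _normalize(pred)
    return sum(ti * math.log(ti / (qi + EPS) + EPS) for ti, qi in zip(t, q))


def correlation_loss(target: Sequence[float], pred: Sequence[float]) -> float:
    """One minus the Pearson correlation coefficient between the two maps."""
    mt, mp = sum(target) / len(target), sum(pred) / len(pred)
    cov = sum((t - mt) * (p - mp) for t, p in zip(target, pred))
    st = math.sqrt(sum((t - mt) ** 2 for t in target))
    sp = math.sqrt(sum((p - mp) ** 2 for p in pred))
    return 1.0 - cov / (st * sp + EPS)


def combined_loss(target: Sequence[float], pred: Sequence[float],
                  w_kl: float = 1.0, w_cc: float = 1.0) -> float:
    """Weighted sum of the individual loss terms (weights are hypothetical)."""
    return w_kl * kl_divergence(target, pred) + w_cc * correlation_loss(target, pred)


ground_truth = [0.1, 0.2, 0.3, 0.4]
print(combined_loss(ground_truth, [0.4, 0.3, 0.2, 0.1]))
```

In a training setting the same combination would be written with differentiable tensor operations, and the weights would be tuned on validation data.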

Building on our progressive understanding of saliency in movies, we demonstrated that current state-of-the-art visual saliency techniques, trained on non-cinematographic content, fail to capture the real displacements of the spectator's gaze in a number of situations. Indeed, in the process of making a movie, directors constantly care about where the spectator will look on the screen. Shot composition, framing, camera movements and editing are tools commonly used to direct attention. In order to provide a quantitative analysis of the relationship between those tools and gaze patterns, we propose a new eye-tracking database, containing gaze pattern information on movie sequences, as well as editing annotations. We show how state-of-the-art computational saliency techniques behave on this dataset. In this work, we expose strong links between movie editing and spectators' scanpaths, and open several leads on how the knowledge of editing information could improve human visual attention modeling for cinematic content 49. The dataset generated and analysed during the current study is available at https://github.com/abruckert/eye_tracking_filmmaking.

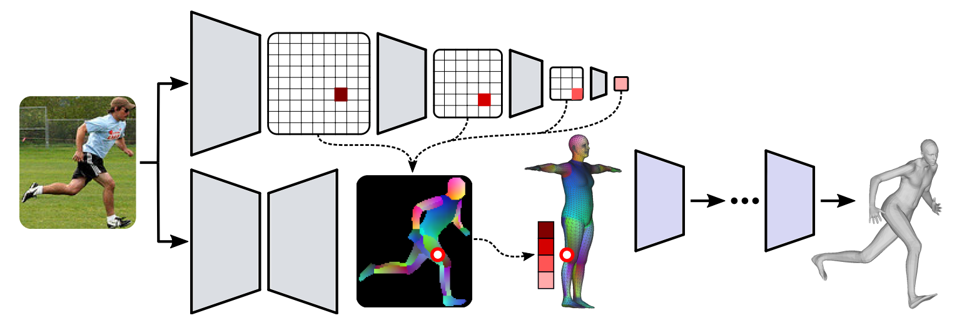

7.2.4 High-Level Features for Movie Style Understanding

Participants: Marc Christie [contact], Robin Courant.

After identifying the lack of high-level feature extraction techniques for movies, we proposed a new set of stylistic features (character pose, camera pose, scene depth, focus map, frame layering and camera motion). Automatically analysing such stylistic features in movies is a challenging task, as it requires an in-depth knowledge of cinematography. In the literature, only a handful of methods explore stylistic feature extraction, and they typically focus on limited low-level image and shot features (colour histograms, average shot lengths or shot types, amount of camera motion). However, these only capture a subset of the stylistic features which help to characterise a movie (e.g. black and white vs. coloured, or film editing). We therefore systematically explore seven high-level features for movie style analysis: character segmentation, pose estimation, depth maps, focus maps, frame layering, camera motion type and camera pose. Our findings show that low-level features remain insufficient for movie style analysis, while high-level features seem promising. These results are reported in 43 and received the best paper award at the ICCV workshop on AI for Creative Video Editing and Understanding.

7.2.5 Camera Keyframing with Style and Control

Participants: Marc Christie [contact], Xi Wang.

We present a novel technique that enables 3D artists to synthesize camera motions in virtual environments following a camera style, while enforcing user-designed camera keyframes as constraints along the sequence (see figure 4). To solve this constrained motion in-betweening problem, we design and train a camera motion generator from a collection of temporal cinematic features (camera and actor motions) using a conditioning on target keyframes. We further condition the generator with a style code to control how to perform the interpolation between the keyframes. Style codes are generated by training a second network that encodes different camera behaviors in a compact latent space, the camera style space. Camera behaviors are defined as temporal correlations between actor features and camera motions and can be extracted from real or synthetic film clips. We further extend the system by incorporating a fine control of camera speed and direction via a hidden state mapping technique. We evaluate our method on two aspects: i) the capacity to synthesize style-aware camera trajectories with user defined keyframes; and ii) the capacity to ensure that in-between motions still comply with the reference camera style while satisfying the keyframe constraints. As a result, our system is the first style-aware keyframe in-betweening technique for camera control that balances style-driven automation with precise and interactive control of keyframes.

7.2.6 Real-Time Cinematic Tracking of Targets in Dynamic Environments

Participants: Marc Christie [contact], Ludovic Burg.

Building on our previous work on efficient visibility estimation, we proposed a novel camera tracking algorithm for virtual environments. Tracking targets in unknown 3D environments requires simultaneously ensuring a low computational cost, a good degree of reactivity and a high cinematic quality despite sudden changes. In this paper, we draw on the idea of Motion-Predictive Control to propose an efficient real-time camera tracking technique which ensures these properties. Our approach relies on the predicted motion of a target to create and evaluate a very large number of camera motions using hardware ray casting. Our evaluation of camera motions includes a range of cinematic properties such as distance to target, visibility, collision, smoothness and jitter. Experiments are conducted to demonstrate the benefits of the approach in relation to prior work. This work is reported in 34.

7.2.7 FaceTuneGAN: Face Autoencoder for Convolutional Expression Transfer Using Neural Generative Adversarial Networks

Participants: Franck Multon [contact], Nicolas Olivier.

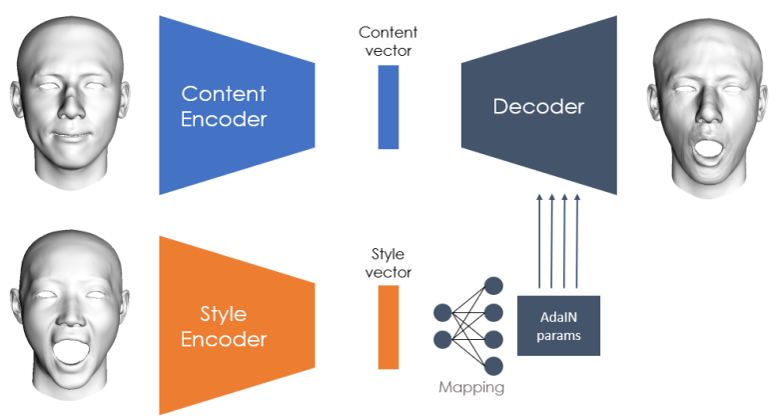

Our network architecture. The encoders extract and compress the features of their input into low-dimensional content and style vectors. A decoder reconstructs a mesh from these compact representations. The style information is passed to the decoder through AdaIN normalization layers.

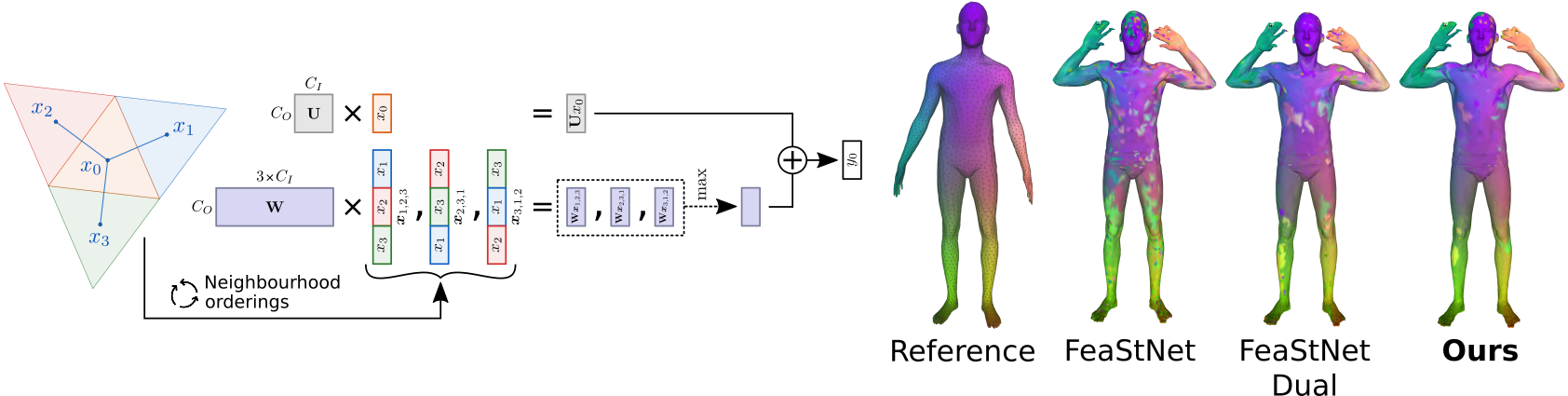

In this work 52, we present FaceTuneGAN, a new 3D face model representation that decomposes and separately encodes facial identity and facial expression. We propose a first adaptation of image-to-image translation networks, which have been used successfully in the 2D domain, to 3D face geometry. Leveraging recently released large face scan databases, a neural network has been trained to decouple factors of variation with a better knowledge of the face, enabling facial expression transfer and neutralization of expressive faces. Specifically, we design an adversarial architecture adapting the base architecture of FUNIT and using SpiralNet++ for our convolutional and sampling operations. On two publicly available datasets (FaceScape and CoMA), FaceTuneGAN achieves better identity decomposition and face neutralization than state-of-the-art techniques. The architecture is depicted in Figure 5. FaceTuneGAN also outperforms the classical deformation transfer approach, predicting blendshapes closer to ground-truth data and with fewer undesired artifacts caused by large differences in facial morphology between source and target.
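The caption of Figure 5 mentions that style information reaches the decoder through AdaIN normalization layers. As an illustration only, the following minimal sketch shows adaptive instance normalization applied to per-vertex features in plain Python. The function name `adain`, the list-based feature layout, and the assumption that the per-channel `gamma` and `beta` have already been predicted from the style vector are ours and do not come from the FaceTuneGAN implementation.

```python
import math


def adain(features, gamma, beta, eps=1e-8):
    """Adaptive instance normalization over per-vertex features.

    features: list of vertices, each a list of C channel values.
    gamma, beta: per-channel scale and shift, assumed here to be
    predicted from the style vector by some other network.
    """
    n = len(features)
    channels = len(features[0])
    out = [[0.0] * channels for _ in range(n)]
    for c in range(channels):
        column = [vertex[c] for vertex in features]
        mean = sum(column) / n
        var = sum((x - mean) ** 2 for x in column) / n
        std = math.sqrt(var + eps)
        for i, x in enumerate(column):
            # Remove the content statistics, then impose the style statistics.
            out[i][c] = gamma[c] * (x - mean) / std + beta[c]
    return out


# Three vertices with two feature channels each (toy values).
content = [[1.0, 10.0], [2.0, 20.0], [3.0, 30.0]]
styled = adain(content, gamma=[2.0, 0.5], beta=[1.0, -1.0])
```

After the call, each channel of `styled` has a mean close to the corresponding `beta` and a standard deviation close to the corresponding `gamma`, which is how the style code steers the decoder features in this kind of layer.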

7.2.8 Contact Preserving Shape Transfer For Rigging-Free Motion Retargeting

Participants: Franck Multon [contact], Jean Basset, Adnane Boukhayma.

In 2018, we introduced the idea of a context graph to capture the relationship between body part surfaces and enhance the quality of motion retargeting. With this graph, it becomes possible to retarget the motion of a source character to a target one while preserving the topological relationship between body part surfaces. However, this approach requires strictly satisfying distance constraints between body parts, whereas some of them could be relaxed to preserve naturalness. In 2019, we introduced a new paradigm based on transferring the shape instead of encoding the pose constraints to tackle this problem for isolated poses.

In 2020, we extended this approach to handle continuity in motion and non-human characters. This idea resulted from the collaboration with the Morpheo Inria team in Grenoble, in the context of the IPL AVATAR project. The proposed approaches were based on a nonlinear optimization framework, which involved manually editing the constraints to satisfy and required a large amount of computation time.

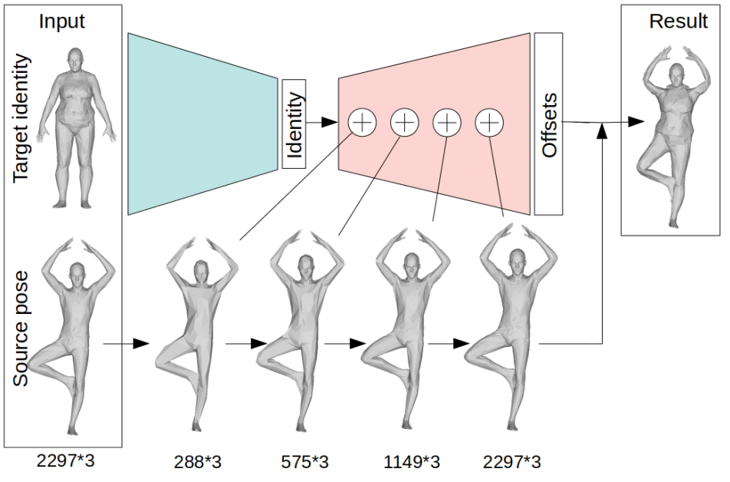

In 32, to achieve the deformation transfer, we proposed a neural encoder-decoder architecture where only identity information is encoded and where the decoder is conditioned on the pose. We use pose-independent representations, such as isometry-invariant shape characteristics, to represent identity features. The model is depicted in Figure 6. Our model uses these features to supervise the prediction of offsets from the deformed pose to the result of the transfer. We show experimentally that our method outperforms state-of-the-art methods both quantitatively and qualitatively, and generalizes better to poses not seen during training. We also introduce a fine-tuning step that yields competitive results for extreme identities and makes it possible to transfer simple clothing.

Overview of the proposed approach. The encoder (green) generates an identity code for the target. We feed this code to the decoder (red) along with the source, which is concatenated with the decoder features at all resolution stages. The decoder finally outputs per vertex offsets from the input source towards the identity transfer result.
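Two ingredients of this description can be illustrated with a short sketch: a pose-independent (isometry-invariant) shape characteristic, and a result expressed as per-vertex offsets added to the source. The text does not specify which invariant characteristics are used, so mesh edge lengths are an assumption chosen for simplicity, and a rigid rotation stands in for a pose change. The helper names `edge_lengths`, `rotate_z` and `apply_offsets` are hypothetical.

```python
import math


def edge_lengths(vertices, edges):
    """Edge lengths of a mesh: unchanged by any isometric deformation."""
    return [math.dist(vertices[i], vertices[j]) for i, j in edges]


def rotate_z(vertices, angle):
    """Rigid rotation about the z axis (a simple stand-in for a pose change)."""
    c, s = math.cos(angle), math.sin(angle)
    return [(c * x - s * y, s * x + c * y, z) for x, y, z in vertices]


def apply_offsets(source_vertices, offsets):
    """Add predicted per-vertex offsets to the source mesh vertices."""
    return [
        tuple(p + d for p, d in zip(vertex, offset))
        for vertex, offset in zip(source_vertices, offsets)
    ]


# Toy triangle: the descriptor is identical before and after the rotation.
triangle = [(0.0, 0.0, 0.0), (1.0, 0.0, 0.0), (0.0, 2.0, 0.0)]
edges = [(0, 1), (1, 2), (2, 0)]
before = edge_lengths(triangle, edges)
after = edge_lengths(rotate_z(triangle, 0.7), edges)

# Offsets here are hand-written; in the described model they would be
# the decoder output.
moved = apply_offsets(triangle, [(0.0, 0.0, 0.1)] * 3)
```

Because `before` and `after` agree up to floating-point error, such a descriptor carries information about the identity (shape) only, not about the pose.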

7.2.9 Perception of Motion Variations in Large-Scale Virtual Human Crowds

Participants: Robin Adili [contact], Ludovic Hoyet [contact], Benjamin Niay, Anne-Hélène Olivier, Katja Zibrek.

Virtual human crowds are regularly featured in movies and video games. With a large number of virtual characters, each behaving in their own way, spectacular scenes can be produced. The more diverse the characters and their behaviors are, the more realistic the virtual crowd is expected to be perceived. Hence, creating virtual crowds is a trade-off between the cost associated with acquiring more diverse assets, namely more virtual characters with their animations, and achieving better realism. In this work 31, conducted in collaboration with Julien Pettré from the Rainbow team, our focus is on the perceived variety in virtual crowd character motions. We present an experiment exploring whether observers are able to identify virtual crowds including motion clones in the case of large-scale crowds (from 250 to 1000 characters), see figure 7. As it is not possible to acquire individual motions for such numbers of characters, we rely on a state-of-the-art motion variation approach to synthesize unique variations of existing examples for each character in the crowd. Participants then compared pairs of videos, where each character was animated either with a unique motion or using a subset of these motions. Our results show that virtual crowds with more than two motions (one per gender) were perceptually equivalent, regardless of their size. We believe these findings can help create efficient crowd applications, and are an additional step into a broader understanding of the perception of motion variety.

7.2.10 Interaction Fields: Sketching Collective Behaviours

Participants: Marc Christie [contact], Adèle Colas [contact], Ludovic Hoyet [contact], Anne-Hélène Olivier [contact], Katja Zibrek.

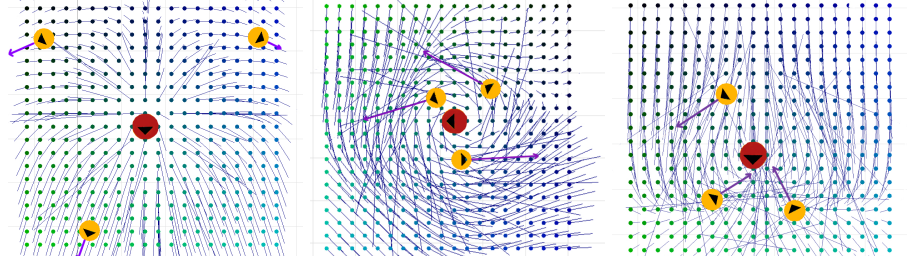

Many applications of computer graphics, such as cinema, video games, virtual reality, training scenarios, therapy, or rehabilitation, involve the design of situations where several virtual humans are engaged. In applications where a user is immersed in the virtual environment, the (collective) behavior of these virtual humans must be realistic to improve the user's sense of presence. While expressive behaviour appears to be a crucial part of this realism, collective behaviours simulated using typical crowd simulation models (e.g., social forces, potential fields, velocity selection, vision-based) usually lack expressiveness and cannot capture more subtle scenarios (e.g., a group of agents hiding from the user or blocking his/her way), which require the ability to simulate complex interactions. As subtle and adaptable collective behaviours are not easily modeled, there is a need for more intuitive ways to design such complex scenarios. In 2020, we proposed a novel approach to sketch such interactions to define collective behaviours, which we called Interaction Fields, in collaboration with Julien Pettré and Claudio Pacchierotti from the Rainbow team. Although other sketch-based approaches exist, these usually focus on goal-oriented path planning, and not on modelling social or collective behaviour. In comparison, our approach is based on a user-friendly application enabling users to draw target interactions between agents through intuitive vector fields (Figure 8). This year, we extended this work to include novel features facilitating the design of expressive and collective behaviours, and conducted an experiment evaluating the usability of the approach. By considering more generic and dynamic situations, we demonstrate that we can design diversified and subtle interactions, whereas earlier work mostly focused on predefined static scenarios.
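To make the idea of driving agents with a vector field more concrete, here is a minimal 2D sketch, assuming the field is expressed in the local frame of a source agent and read by a neighbouring agent as a velocity. The analytic field `follow_behind_field`, the frame conventions and the explicit Euler step are illustrative assumptions, not the formulation used in Interaction Fields, where fields are sketched by the user.

```python
import math


def to_local(pos, source_pos, source_heading):
    """Express a world position in the source agent's frame (+x = facing)."""
    dx, dy = pos[0] - source_pos[0], pos[1] - source_pos[1]
    c, s = math.cos(-source_heading), math.sin(-source_heading)
    return (c * dx - s * dy, s * dx + c * dy)


def to_world_dir(vec, source_heading):
    """Rotate a local direction back into world coordinates."""
    c, s = math.cos(source_heading), math.sin(source_heading)
    return (c * vec[0] - s * vec[1], s * vec[0] + c * vec[1])


def follow_behind_field(local_pos, offset=1.5):
    """Hypothetical field pulling agents to a spot `offset` behind the source."""
    vx, vy = -offset - local_pos[0], 0.0 - local_pos[1]
    norm = math.hypot(vx, vy)
    if norm < 1e-9:
        return (0.0, 0.0)
    speed = min(1.0, norm)  # slow down when close to the target spot
    return (vx / norm * speed, vy / norm * speed)


def step(agent_pos, source_pos, source_heading, field, dt=0.1):
    """Advance one agent by sampling the source's field at its position."""
    local = to_local(agent_pos, source_pos, source_heading)
    v = to_world_dir(field(local), source_heading)
    return (agent_pos[0] + v[0] * dt, agent_pos[1] + v[1] * dt)


# A source facing +y at the origin; the agent ends up behind it.
agent = (2.0, 2.0)
for _ in range(400):
    agent = step(agent, (0.0, 0.0), math.pi / 2, follow_behind_field)
```

Because the field is attached to the source agent's frame, the same drawing keeps its meaning when the source moves or turns, which is what makes this kind of representation suited to dynamic situations.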

7.2.11 Reactive Virtual Agents: A Viewpoint-Driven Approach for Bodily Nonverbal Communication

Participants: Marc Christie [contact], Ludovic Hoyet [contact], Alberto Jovane [contact], Anne-Hélène Olivier [contact], Pierre Raimbaud, Katja Zibrek.

Intelligent Virtual Agents (IVAs) should be designed to perform realistic and expressive interactions that convey emotions and express personalities. Humans notably express these through nonverbal behaviours in everyday interactions. Thus, the ability to perform nonverbal communication is paramount for IVA design. Nonverbal communication, notably reflected by proxemics and kinesics, contributes to the social realism of IVA interactions. In this regard, user studies have been conducted to evaluate generative models of IVAs’ expressive facial motions or body motions. Body motions in particular, divided into postures and gestures, appear to be the key to expressivity in nonverbal communication between humans, and with IVAs. Nonetheless, ensuring the expressivity of IVAs' actions is not the only challenge related to bodily nonverbal communication. IVAs should also embed the ability to react to humans or other IVAs through adapted bodily communication.

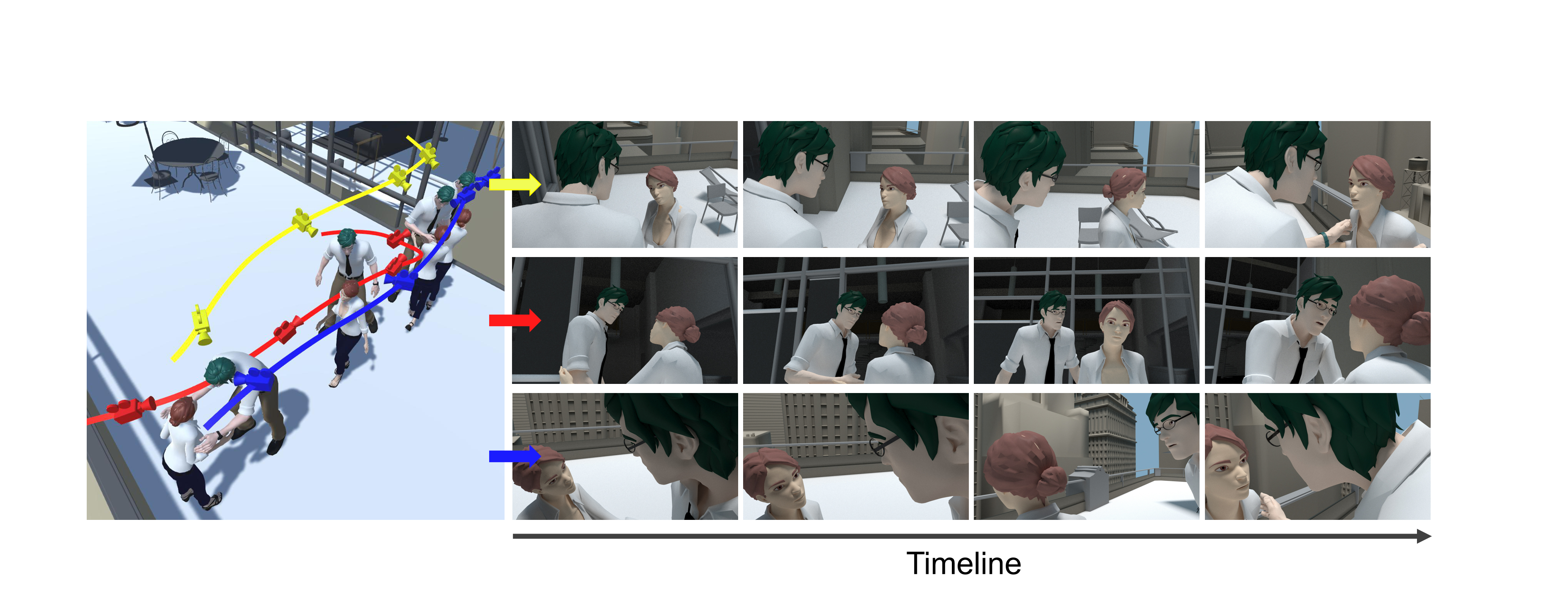

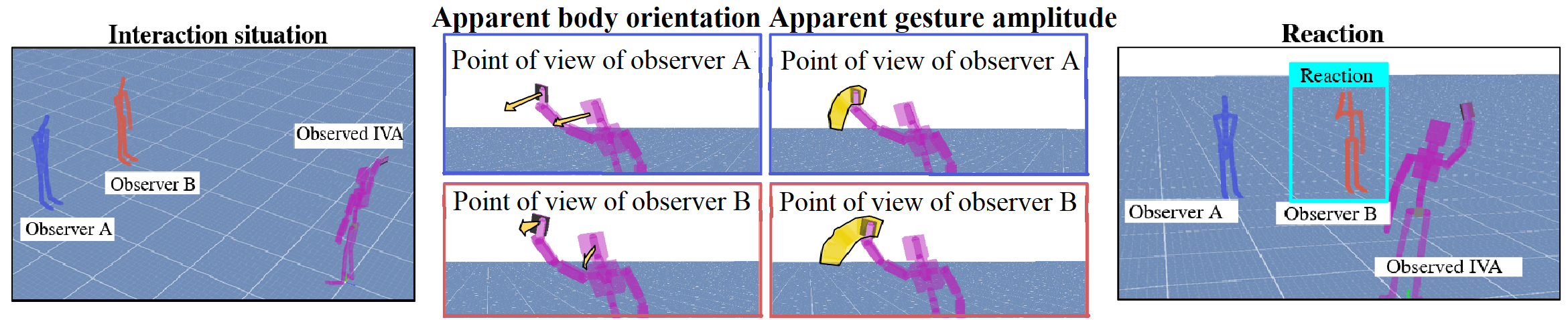

Relying on the perception-action loop involved in human interactions, this work 39, conducted in collaboration with Julien Pettré and Claudio Pacchierotti from the Rainbow team, introduces a new concept to design IVAs with reactive body motions based on a viewpoint-driven approach. We present a new paradigm for simulating reactive behaviours, based on the visual analysis of body movements (see figure 9). In this short paper, we focus on situations where the poses of all agents are known. However, the same idea can easily be extended to more complex scenarios where only partial information about the agents' poses is available. Our viewpoint-driven approach first analyses the observed IVA's motions from the viewpoint of the observer IVA, and then adjusts the observer IVA's reactions.
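The first stage of such a pipeline, analysing the observed agent's motion from the observer's viewpoint, can be sketched as follows. This is a simplified 2D illustration under our own assumptions: joints are named points on the ground plane, the observer has a position, a gaze heading and a symmetric field of view, and the "analysis" is reduced to per-joint displacement in the observer's frame. None of the function names or parameters come from the paper.

```python
import math


def in_view_frame(point, observer_pos, observer_heading):
    """Express a world point as (forward, left) relative to the observer."""
    dx, dy = point[0] - observer_pos[0], point[1] - observer_pos[1]
    c, s = math.cos(-observer_heading), math.sin(-observer_heading)
    return (c * dx - s * dy, s * dx + c * dy)


def is_visible(local_point, fov_deg=120.0):
    """True if the point lies inside the observer's horizontal field of view."""
    forward, left = local_point
    angle = math.degrees(math.atan2(left, forward))
    return abs(angle) <= fov_deg / 2.0


def observed_motion(joints_t0, joints_t1, observer_pos, observer_heading):
    """Per-joint displacement, in the view frame, for joints seen at both times."""
    motion = {}
    for name, p0 in joints_t0.items():
        a = in_view_frame(p0, observer_pos, observer_heading)
        b = in_view_frame(joints_t1[name], observer_pos, observer_heading)
        if is_visible(a) and is_visible(b):
            motion[name] = (b[0] - a[0], b[1] - a[1])
    return motion


# Observer at the origin looking along +x; the hand is in view, the foot is not.
pose_t0 = {"hand": (2.0, 0.0), "foot": (-1.0, 0.0)}
pose_t1 = {"hand": (2.0, 0.5), "foot": (-1.0, 0.2)}
seen = observed_motion(pose_t0, pose_t1, (0.0, 0.0), 0.0)
```

In this toy case only the hand appears in `seen`, with a leftward displacement of 0.5 in the observer's frame; a reaction model would then take such viewpoint-dependent quantities as its input.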

7.3 Fidelity of Virtual Reality

MimeTIC wishes to promote the use of Virtual Reality to analyze and train human motor performance. This raises the fundamental question of the transfer of knowledge and skills acquired in VR to real life. In 2021, we maintained our efforts to enhance the experience of users when interacting physically with a virtual world. We developed an original setup to carry out experiments evaluating various haptic feedback rendering techniques. In collaboration with the Hybrid team, we put significant effort into better simulating users' avatars, and analyzed embodiment in various VR conditions, leading to several co-supervised PhD students.

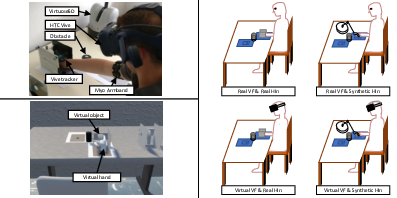

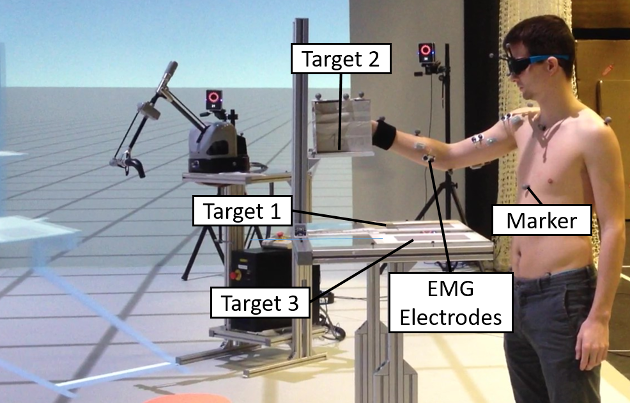

7.3.1 Biofidelity in VR

Participants: Simon Hilt [contact], Georges Dumont [contact], Charles Pontonnier [contact].