Keywords

Computer Science and Digital Science

- A1.1.1. Multicore, Manycore

- A1.5. Complex systems

- A1.5.1. Systems of systems

- A1.5.2. Communicating systems

- A2.3. Embedded and cyber-physical systems

- A2.3.1. Embedded systems

- A2.3.2. Cyber-physical systems

- A2.3.3. Real-time systems

- A2.4.1. Analysis

Other Research Topics and Application Domains

- B5.2. Design and manufacturing

- B5.2.1. Road vehicles

- B5.2.2. Railway

- B5.2.3. Aviation

- B5.2.4. Aerospace

- B6.6. Embedded systems

1 Team members, visitors, external collaborators

Research Scientist

- Liliana Cucu [Team leader, INRIA, Senior Researcher, HDR]

Faculty Member

- Avner Bar-Hen [CNAM, Professor, HDR]

PhD Students

- Chiara Daini [INRIA]

- Ismail Hawila [StatInf, CIFRE, from Oct 2022]

- Mohamed Khelassi [Gustave Eiffel University (UGE), ESIEE Paris, Gaspard Monge Laboratory (LIGM)]

- Marwan Wehaiba El Khazen [StatInf, CIFRE]

- Kevin Zagalo [INRIA]

Technical Staff

- Rihab Bennour [INRIA, Engineer]

- Hadrien Clarke [INRIA, until Sep 2022]

- Ismail Hawila [INRIA, Engineer, from Feb 2022 until Aug 2022]

- Kossivi Kougblenou [StatInf and INRIA, Engineer]

Interns and Apprentices

- Marc-Antoine Auvray [INRIA]

- Marharyta Tomina [INRIA, from Jun 2022 until Aug 2022]

- Olena Verbytska [INRIA, from May 2022 until Aug 2022]

Administrative Assistants

- Christine Anocq [INRIA]

- Nelly Maloisel [INRIA]

External Collaborators

- Yasmina Abdeddaïm [Gustave Eiffel University (UGE), ESIEE Paris, Gaspard Monge Laboratory (LIGM)]

- Slim Ben Amor [StatInf]

- Adriana Gogonel [StatInf]

- Yves Sorel [INRIA]

2 Overall objectives

The Kopernic members are focusing their research on studying time for embedded communicating systems, also known as cyber-physical systems. More precisely, the team proposes a system-oriented solution to the problem of studying time properties of the cyber components of a CPS. The solution is expected to be obtained by composing probabilistic and non-probabilistic approaches for CPSs. Moreover, statistical approaches are expected to validate existing hypotheses or propose new ones for the models considered by probabilistic analyses.

The term cyber-physical systems refers to a new generation of systems with integrated computational and physical capabilities that can interact with humans through many new modalities 14. A defibrillator, a mobile phone, an autonomous car and an aircraft are all CPSs. Besides constraints such as power consumption, security, size and weight, CPSs may have cyber components required to fulfill their functions within a limited time interval (a.k.a. time dependability), often imposed by the environment, e.g., a physical process controlled by some cyber components. The appearance of communication channels between cyber-physical components, easing the use of CPSs within larger systems, forces cyber components with high criticality to interact with lower criticality cyber components. This interaction is complemented by external events from the environment that have a time impact on the CPS. Moreover, some programs of the cyber components may be executed on time predictable processors and other programs on less time predictable processors.

Different research communities study separately the three design phases of these systems: the modeling, the design and the analysis of CPSs 27. These phases are repeated iteratively until an appropriate solution is found. During the first phase, the behavior of a system is often described using model-based methods. Other methods exist, but model-driven approaches are widely used by both the research and the industry communities. A solution described by a model is proved (functionally) correct usually by a formal verification method used during the analysis phase (third phase described below).

During the second phase of the design, the physical components (e.g., sensors and actuators) and the cyber components (e.g., programs, messages and embedded processors) are often chosen among those available on the market. However, due to the ever increasing pressure of the smartphone market, the microprocessor industry provides general purpose processors based on multicore and, in the near future, on manycore architectures. These processors have complex architectures that are not time predictable due to features like multiple levels of caches and pipelines, speculative branching, communication through shared memory and/or through a network on chip, internet, etc. Due to the time unpredictability of some processors, the CPS industry is nowadays facing the great challenge of estimating worst case execution times (WCETs) of programs executed on these processors. Indeed, the current complexity of both processors and programs does not allow reasonable worst case bounds to be proposed. The design phase then ends with the implementation of the cyber components on such processors, where the models are transformed into programs (or messages for the communication channels) manually or by code generation techniques 17.

During the third phase of analysis, the correctness of the cyber components is verified at program level where the functions of the cyber component are implemented. The execution times of programs are estimated either by static analysis, by measurements or by a combination of both approaches 36.

These WCETs are then used as inputs to scheduling problems 29, the highest level of formalization for verifying the time properties of a CPS. The programs are provided a start time within the schedule together with an assignment of resources (processor, memory, communication, etc.). Verifying that a schedule and an associated assignment are a solution for a scheduling problem is known as a schedulability analysis.

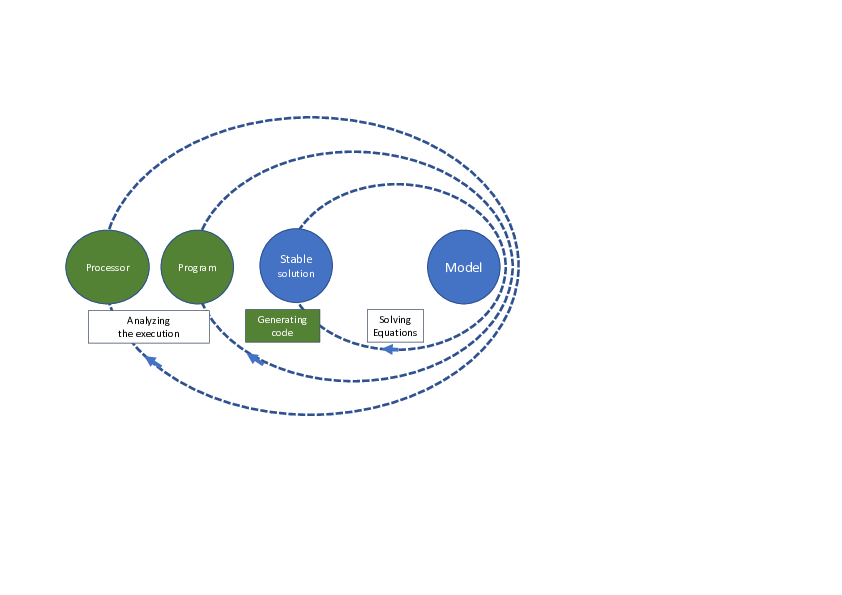

The current CPS design, exploiting formal descriptions of the models and their transformation into physical and cyber parts of the CPS, ensures that the functional behavior of the CPS is correct. Unfortunately, there is today no formal description guaranteeing that the execution times of the generated programs are smaller than a given bound. Clearly, all communities working on CPS design are aware that computing takes time 26, but there is no CPS solution guaranteeing time predictability of these systems, as the processors appear late within the design phase (see Figure 1). Indeed, the choice of the processor is made at the end of the CPS design process, after writing or generating the programs.

Figure 1: The CPS design is compared to a solar system where the model is central.

Since the processor appears late within the CPS design process, the CPS designer in charge of estimating the worst case execution time of a program or analyzing the schedulability of a set of programs inherits a difficult problem. The main purpose of Kopernic is to propose compositional rules with respect to the time behaviour of a CPS, restricting the CPS design to analyzable instances of the WCET estimation problem and of the schedulability analysis problem.

With respect to the WCET estimation problem, we say that a rule $\rho$ is compositional for any two sets of measured execution times $\mathcal{A}_1$ and $\mathcal{A}_2$ of a program $A$, and a WCET statistical estimator $\mathcal{E}$, if we obtain a safe WCET estimation for $A$ from $\mathcal{E}(\rho(\mathcal{A}_1, \mathcal{A}_2))$. For instance, $\mathcal{A}_1$ may be the set of measured execution times of the program $A$ while all processor features except the local cache L1 are deactivated, while $\mathcal{A}_2$ is obtained, similarly, with a shared L2 cache activated. We consider that the variation of all input variables of the program $A$ follows the same sequence of values when measuring the execution time of the program $A$. With respect to the schedulability analysis problem, we are interested in analyzing graphs of communicating programs. A program $A$ communicates with a program $B$ if input variables of the program $B$ are among output variables of the program $A$. A graph of communicating programs is a directed acyclic graph with programs as vertices. An edge from a program $A$ to a program $B$ is defined if $A$ communicates with $B$. The end-to-end response time of such a graph is the longest path from any source vertex to any sink vertex of the graph, if there is at least one path between these two vertices. A rule $\rho$ is compositional for any set of measured response times $\mathcal{R}_1$ of program $A$, any set of measured response times $\mathcal{R}_2$ and a schedulability analysis $\mathcal{S}$ if we obtain a safe schedulability analysis from $\mathcal{S}(\rho(\mathcal{R}_1, \mathcal{R}_2))$.
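As a minimal sketch of the end-to-end response time defined above, the following Python fragment computes the longest source-to-sink path of a graph of communicating programs. It assumes that the length of a path is the sum of the response times of its vertices, which the text does not state explicitly; the program names and the numerical values are hypothetical.

```python
from graphlib import TopologicalSorter  # standard library, Python 3.9+


def end_to_end_response_time(response_time, edges):
    """Longest source-to-sink path of a DAG of communicating programs.

    response_time: dict mapping each program to its response time (assumed >= 0).
    edges: iterable of (a, b) pairs meaning "a communicates with b".
    Assumption: the length of a path is the sum of its vertices' response times.
    """
    predecessors = {program: set() for program in response_time}
    for a, b in edges:
        predecessors[b].add(a)

    longest = {}  # longest path ending at (and including) each program
    # static_order() yields every program after all of its predecessors.
    for program in TopologicalSorter(predecessors).static_order():
        best_prefix = max((longest[p] for p in predecessors[program]), default=0)
        longest[program] = best_prefix + response_time[program]

    # With non-negative response times, the overall maximum is reached at a sink.
    return max(longest.values())


# Hypothetical response times and communications, for illustration only.
response_time = {
    "sensors": 2,
    "ekf2": 5,
    "position_control": 3,
    "attitude_control": 2,
    "actuators": 1,
}
edges = [
    ("sensors", "ekf2"),
    ("ekf2", "position_control"),
    ("ekf2", "attitude_control"),
    ("position_control", "attitude_control"),
    ("attitude_control", "actuators"),
]
e2e = end_to_end_response_time(response_time, edges)
print(e2e)
```

The topological order guarantees that each vertex is processed after all its predecessors, so a single pass over the graph is enough.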

Before enumerating our scientific objectives, we introduce the concept of variability factors. More precisely, the time properties of a cyber component are subject to variability factors. We understand by variability the distance between the smallest value and the largest value of a time property. With respect to the time properties of a CPS, the factors may be classified into three main classes:

- program structure: for instance, the execution time of a program that has two main branches is obtained, if appropriate composition principles apply, as the maximum of the largest execution times of its branches. In this case the branch is a variability factor on the execution time of the program;

- processor structure: for instance, the execution time of a program on a less predictable processor (e.g., one core, two levels of cache memory and one main memory) will have a larger variability than the execution time of the same program executed on a more predictable processor (e.g., one core, one main memory). In this case the cache memory is a variability factor on the execution time of the program;

- execution environment: for instance, the appearance of a pedestrian in front of a car triggers the execution of the program corresponding to the brakes in an autonomous car. In this case the pedestrian is a variability factor for the triggering of the program.
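The definition of variability and the two-branch example above can be illustrated by a short Python sketch. The function names and the measured values are hypothetical, and the bound for the two-branch program only holds under the composition principles mentioned in the text.

```python
def variability(measured_times):
    """Distance between the smallest and the largest value of a time property."""
    return max(measured_times) - min(measured_times)


def two_branch_bound(branch_a_times, branch_b_times):
    """Largest execution time of a program with two main branches, taken as
    the maximum of the largest execution times observed for each branch."""
    return max(max(branch_a_times), max(branch_b_times))


# Hypothetical measured execution times (arbitrary time unit).
branch_a = [12, 15, 13]
branch_b = [20, 22, 21]

program_variability = variability(branch_a + branch_b)
program_bound = two_branch_bound(branch_a, branch_b)
print(program_variability, program_bound)
```

Here the variability of the whole program is larger than that of either branch, which is why the branch acts as a variability factor.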

We identify three main scientific objectives to validate our research hypothesis. The three objectives are presented from program level, where we use statistical approaches, to the level of communicating programs, where we use probabilistic and non-probabilistic approaches.

The Kopernic scientific objectives are:

- [O1] worst case execution time estimation of a program - modern processors induce an increased variability of the execution time of programs, making a complete static analysis for estimating such a worst case difficult (or even impossible). Our objective is to propose a solution composing probabilistic and non-probabilistic approaches based both on static and on statistical analyses by answering the following scientific challenges:

- a classification of the variability factors of execution times of a program with respect to the processor features. The difficulty of this challenge is related to the definition of an element belonging to the set of variability factors and its mapping to the execution time of the program.

- a compositional rule of statistical models associated to each variability factor. The difficulty of this challenge comes from the fact that a global maximum of a multicore processor cannot be obtained by upper bounding the local maxima on each core.

- [O2] deciding the schedulability of all programs running within the same cyber component, given an energy budget - in this case the programs may have different time criticalities, but they share the same processor, possibly multicore1. Our objective is to propose a solution composing probabilistic and non-probabilistic approaches based on answers to the following scientific challenges:

- scheduling algorithms taking into account the interaction between different variability factors. The existence of time parameters described by probability distributions requires revisiting scheduling algorithms that lose their optimality even in the case of a unicore processor 30. Moreover, the multicore partitioning problem is recognized as difficult for the non-probabilistic case 34;

- schedulability analyses based on the algorithms proposed previously. In the case of predictable processors, the schedulability analyses accounting for operating system costs increase the time dependability of CPSs 32. Moreover, in the presence of variability factors, the composition property of non-probabilistic approaches is lost and new principles are required.

- [O3] deciding the schedulability of all programs communicating through predictable and non-predictable networks, given an energy budget - in this case the programs of the same cyber component execute on the same processor and they may communicate with the programs of other cyber components through networks that may be predictable (network on chip) or non-predictable (internet, telecommunications). Our objective is to propose a solution to this challenge by analysing the schedulability of programs, for which (worst case) probabilistic solutions exist 31, communicating through networks, for which probabilistic worst-case solutions 18 and average solutions 28 exist.

3 Research program

The research program for reaching these three objectives is organized according to three main research axes:

- Worst case execution time estimation of a program, detailed in Section 3.1;

- Building measurement-based benchmarks, detailed in Section 3.2;

- Scheduling of graph tasks on different resources within an energy budget, detailed in Section 3.3.

3.1 Worst case execution time estimation of a program

The temporal study of real-time systems is based on the estimation of the bounds for their temporal parameters and more precisely the WCET of a program executed on a given processor. The main analyses for estimating WCETs are static analyses 36, dynamic analyses 19, also called measurement-based analyses, and finally hybrid analyses that combine the two previous ones 36.

The Kopernic approach for solving the WCET estimation problem is based on (i) the identification of the impact of variability factors on the execution of a program on a processor and (ii) the proposition of compositional rules that integrate the impact of each factor within a WCET estimation. Historically, the real-time community has illustrated the distributions of execution times of programs as heavy-tailed, since large execution times are intuitively agreed to have a low probability of appearance. For instance, Tia et al. were the first to underline this intuition, in a paper introducing execution times described by probability distributions within a single core schedulability analysis 35. Since 35, a low probability has been associated with large values of execution times of a program executed on a single core processor. In 2000, the group of Alan Burns, within the thesis of Stewart Edgar 20, formalized this property as a conjecture indicating that a maximal bound on the execution times of a program may be estimated by the Extreme Value Theory 23. No mathematical definition of what this bound represents for the execution time of a program was proposed at that time. Two years later, a first attempt to define this bound was made by Bernat et al. 16, but the proposed definition extends the static understanding of the WCET as a combination of execution times of basic blocks of a program. Extremely pessimistic, the definition remains intuitive, without an associated mathematical description. After 2013, several publications from Liliana Cucu-Grosjean's group at Inria Nancy introduced a mathematical definition of a probabilistic worst-case execution time and, respectively, of a probabilistic worst-case response time, as an appropriate formalization for a correct application of the Extreme Value Theory to real-time problems.
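To make the use of the Extreme Value Theory mentioned above more concrete, here is a deliberately simplified Python sketch of a block-maxima estimation. It is not the estimator used by the team: it assumes a Gumbel distribution (the generalized extreme value family with shape parameter zero) fitted by the method of moments, it assumes independent and identically distributed measurements, and the execution times are synthetic. All function names are hypothetical.

```python
import math
import random
import statistics

EULER_GAMMA = 0.5772156649015329  # Euler-Mascheroni constant


def block_maxima(times, block_size):
    """Maximum of each complete block of consecutive measurements."""
    last_start = len(times) - block_size
    return [max(times[i:i + block_size]) for i in range(0, last_start + 1, block_size)]


def fit_gumbel_moments(maxima):
    """Method-of-moments fit of a Gumbel distribution (an assumption of this sketch)."""
    scale = statistics.stdev(maxima) * math.sqrt(6) / math.pi
    location = statistics.fmean(maxima) - EULER_GAMMA * scale
    return location, scale


def gumbel_quantile(location, scale, exceedance_probability):
    """Value exceeded by a block maximum with the given probability."""
    # Inverse of the Gumbel CDF F(x) = exp(-exp(-(x - location) / scale)).
    return location - scale * math.log(-math.log1p(-exceedance_probability))


# Synthetic execution times, for illustration only (not measured data).
rng = random.Random(0)
samples = [rng.gauss(100.0, 5.0) for _ in range(1000)]

maxima = block_maxima(samples, block_size=50)
location, scale = fit_gumbel_moments(maxima)
bound = gumbel_quantile(location, scale, exceedance_probability=1e-9)
print(f"estimated bound: {bound:.1f}, largest observation: {max(samples):.1f}")
```

The exceedance probability in this sketch is expressed per block, not per execution; a real analysis would also have to check the applicability hypotheses of the theory before trusting such a bound.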

We identify the following open research problems related to the first research axis:

- the generalization of modes analysis to the multi-dimensional case, each dimension representing a program when several programs cooperate;

- the proposition of a set of rules for building programs that are time predictable for the internal architecture of a given single core and, then, of a multicore processor;

- modeling the impact of processor features on the energy consumption to better consider both worst case execution time and schedulability analyses considered within the third research axis of this proposal.

3.2 Building measurement-based benchmarks

The real-time community is facing a lack of benchmarks adapted to measurement-based analyses. Existing benchmarks for the estimation of WCET 33, 24, 21 have been used to estimate WCETs mainly with static analyses. They contain very simple programs and are not accompanied by a measurement protocol. They do not take into account functional dependencies between programs, mainly due to shared global variables, which, of course, influence their execution times. Furthermore, current benchmarks do not take into account interferences due to the competition for resources, e.g., the memory shared by the different cores in a multicore. On the other hand, measurement-based analyses require execution times measured while executing programs on embedded processors, similar to those used in the embedded systems industry. For example, the mobile phone industry uses multicores based on non predictable cores with complex internal architectures, such as those of the ARM Cortex-A family. In the near future, these multicores will be found in critical embedded systems in application domains such as avionics, autonomous cars, railway, etc., in which the team is deeply involved. This dramatically increases the complexity of measurement-based analyses compared to analyses performed on general purpose personal computers, as they are currently performed.

We understand by measurement-based benchmarks a triple composed of a program, a processor and a measurement protocol. The associated measurement protocols should detail the variation of the input variables (associated to sensors) of these benchmarks and their impact on the output variables (associated to actuators), as well as the variation of the processor states.

Proposing reproducibility and representativity properties that measurement-based benchmarks should follow is the strength of this research axis. We understand by reproducibility the property of a measurement protocol to provide the same ordered set of execution times for a fixed pair (program, processor). We understand by representativity the existence of a (sufficiently small) number of values for the input variables allowing a measurement protocol to provide an ordered set of execution times that ensures the convergence of the Extreme Value Index estimators.
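The two properties above can be sketched in Python as follows. Reproducibility is checked as the equality of two ordered sequences of execution times. For representativity, the sketch uses the Hill estimator, one classical Extreme Value Index estimator that is only valid for heavy-tailed (positive index) data; the text does not say which estimators the team uses, so this choice, the function names and the synthetic Pareto data are all assumptions.

```python
import math
import random


def is_reproducible(run_a, run_b):
    """Two runs of a protocol give the same ordered sequence of execution times."""
    return list(run_a) == list(run_b)


def hill_estimator(times, k):
    """Hill estimate of the Extreme Value Index from the k largest values.

    Requires strictly positive values and 0 < k < len(times).
    """
    ordered = sorted(times, reverse=True)
    if not 0 < k < len(ordered):
        raise ValueError("k must satisfy 0 < k < number of measurements")
    threshold = ordered[k]  # the (k + 1)-th largest value
    return sum(math.log(x / threshold) for x in ordered[:k]) / k


# Synthetic heavy-tailed data: Pareto with shape 2, whose true index is 1/2.
rng = random.Random(1)
samples = [rng.paretovariate(2.0) for _ in range(5000)]

# Estimates on growing prefixes of the sequence: their stabilization is the
# kind of convergence that representativity asks for.
estimates = [hill_estimator(samples[:n], k=n // 10) for n in (1000, 2000, 5000)]
print(estimates)
```

In this setting, a measurement protocol would be called representative if such estimates stabilize after a sufficiently small number of measurements.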

Within this research axis we identify the following open problems:

- proving reproducibility and representativity properties while extending current benchmarks from predictable unicore processors (e.g., ARM Cortex-M4) to non predictable ones (e.g., ARM Cortex-A53 or Cortex-A7);

- proving reproducibility and representativity properties while extending unicore benchmarks to multicore processors. In this context, we face the supplementary difficulty of defining the principles that an operating system should satisfy in order to ensure a real-time behaviour.

3.3 Scheduling of graph tasks on different resources within an energy budget

Following the model-driven approach, the functional description of the cyber part of the CPS is performed as a graph of dependent functions, e.g., a block diagram of functions in Simulink, the most widely used modeling/simulation tool in industry. Of course, a program is associated to every function. Since the graph of dependent programs becomes a set of dependent tasks when real-time constraints must be taken into account, we are facing the problem of verifying the schedulability of such dependent task sets when they are executed on a multicore processor.

Directed Acyclic Graphs (DAG) are widely used to model different types of dependent task sets. The typical model consists of a set of independent tasks where every task is described by a DAG of dependent sub-tasks with the same period inherited from the period of each task 15. In such a DAG, the sub-tasks are vertices and edges are dependencies between sub-tasks. This model is well suited to represent, for example, the engine controller of a car described with Simulink. The multicore schedulability analysis may be of two types, global or partitioned. To reduce interference and interactions between sub-tasks, we focus on partitioned scheduling where each sub-task is assigned to a given core 22.
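As an illustration of partitioned scheduling, the following Python sketch assigns sub-tasks to cores with a first-fit decreasing heuristic and accepts a core only if its utilization stays under the classical Liu and Layland bound for fixed-priority scheduling. This is a textbook heuristic chosen for the example, not the team's algorithm: it ignores the precedence constraints of the DAG and the probabilistic parameters, and the task names and parameters are hypothetical.

```python
def liu_layland_bound(n):
    """Sufficient utilization bound for n periodic tasks under rate-monotonic priorities."""
    return n * (2 ** (1 / n) - 1)


def first_fit_partition(tasks, n_cores):
    """Assign each sub-task to one core, or return None if the heuristic fails.

    tasks: dict mapping a sub-task name to a (wcet, period) pair.
    Sub-tasks are considered by decreasing utilization (first-fit decreasing).
    """
    def utilization(name):
        wcet, period = tasks[name]
        return wcet / period

    cores = [[] for _ in range(n_cores)]
    for name in sorted(tasks, key=utilization, reverse=True):
        for core in cores:
            load = sum(utilization(other) for other in core) + utilization(name)
            if load <= liu_layland_bound(len(core) + 1):
                core.append(name)
                break
        else:
            return None  # no core can accept this sub-task under the bound
    return cores


# Hypothetical sub-tasks: name -> (worst case execution time, period).
tasks = {"a": (2, 10), "b": (3, 10), "c": (5, 10), "d": (4, 10)}
partition = first_fit_partition(tasks, n_cores=2)
print(partition)
```

Because the utilization bound is only a sufficient condition, a `None` result means that this heuristic failed, not that the task set is unschedulable.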

In order to propose a general DAG task model, we identify the following open research problems:

- solving the schedulability problem where probabilistic DAG tasks are executed on predictable and non predictable processors, and such that some tasks communicate through predictable networks, e.g., inside a multicore or a manycore processor, and non-predictable networks, e.g., between these processors through internet. Within this general schedulability problem, we consider five main classes of scheduling algorithms that we adapt to solve probabilistic DAG task scheduling problems. We compare the new algorithms with respect to their energy consumption in order to propose new versions with a decreased energy consumption by integrating variation of frequencies for processor features like CPU or memory accesses.

- the validation of the proposed framework on our multicore drone case study. To meet the challenging objective of proposing time predictable platforms for drones, we are currently migrating the PX4-RT programs to heterogeneous architectures. This includes an implementation of the scheduling algorithms detailed within this research axis within the current operating system, NuttX2.

4 Application domains

4.1 Avionics

Time critical solutions in this context are based on temporal and spatial isolation of the programs, and the understanding of multicore interferences is crucial. Our contributions belong mainly to the solution space for the objective [O1] identified previously.

4.2 Railway

Time critical solutions in this context concern both the proposition of an appropriate scheduler and the associated schedulability analyses. Our contributions belong to the solution space of the problems dealt with within objectives [O1] and [O2] identified previously.

4.3 Autonomous cars

Time critical solutions in this context concern the interaction between programs executed on multicore processors and messages transmitted through wireless communication channels. Our contributions belong to the solution space of the three classes of problems dealt with within the three Kopernic objectives identified previously.

4.4 Drones

As is the case for autonomous cars, there is an interaction between programs and messages, suggesting that our contributions in this context belong to the solution space of the three classes of problems dealt with within the objectives identified previously.

5 Social and environmental responsibility

5.1 Impact of research results

The Kopernic members provide theoretical means to decrease the processor utilization. Such gain is estimated within to utilization gain for existing architectures or energy consumption for new architectures by decreasing the number of necessary cores.

6 Highlights of the year

6.1 Institutional life

The Kopernic team thanks Christine Anocq for her tireless support and help, and wishes her a beautiful, well-deserved retirement.

The Kopernic team thanks the Commission d'Évaluation for its outstanding efforts, in 2022 and previous years, in defending the interests of the research community, keeping us thoroughly informed about topics relevant to the scientific life, and upholding the moral and intellectual values we are collectively proud of and which define our institute.

The Kopernic team thanks its administrative colleagues for their patience and impressive efforts to limit the impact of Inria Eksae-related institutional malfunctions, which made our everyday work very difficult in 2022. We also thank our suppliers for their patience.

7 New software and platforms

7.1 New software

7.1.1 SynDEx

- Keywords: Distributed, Optimization, Real time, Embedded systems, Scheduling analyses

- Scientific Description:

SynDEx is a system level CAD software implementing the AAA methodology for rapid prototyping and for optimizing distributed real-time embedded applications. It is developed in OCaml.

Architectures are represented as graphical block diagrams composed of programmable (processors) and non-programmable (ASIC, FPGA) computing components, interconnected by communication media (shared memories, links and busses for message passing). In order to deal with heterogeneous architectures it may feature several components of the same kind but with different characteristics.

Two types of non-functional properties can be specified for each task of the algorithm graph. First, a period that does not depend on the hardware architecture. Second, real-time features that depend on the different types of hardware components, such as execution and data transfer times, memory, etc. Requirements are generally constraints on deadline equal to period, latency between any pair of tasks in the algorithm graph, dependence between tasks, etc.

Exploration of alternative allocations of the algorithm onto the architecture may be performed manually and/or automatically. The latter is achieved by performing real-time multiprocessor schedulability analyses and optimization heuristics based on the minimization of temporal or resource criteria. For example, while satisfying deadline and latency constraints, they can minimize the total execution time (makespan) of the application on the given architecture, as well as the amount of memory. The results of each exploration are visualized as timing diagrams simulating the distributed real-time implementation.

Finally, real-time distributed embedded code can be automatically generated for dedicated distributed real-time executives, possibly calling services of resident real-time operating systems such as Linux/RTAI or Osek for instance. These executives are deadlock-free, based on off-line scheduling policies. Dedicated executives induce minimal overhead, and are built from processor-dependent executive kernels. To date, executive kernels are provided for: TMS320C40, PIC18F2680, i80386, MC68332, MPC555, i80C196 and Unix/Linux workstations. Executive kernels for other processors can be obtained at reasonable cost following these examples as patterns.

- Functional Description: Software for optimising the implementation of embedded distributed real-time applications and generating efficient and correct by construction code.

- News of the Year: We improved the distribution and scheduling heuristics to take into account the needs of co-simulation.

- URL:

- Contact: Yves Sorel

- Participant: Yves Sorel

7.1.2 EVT Kopernic

- Keywords: Embedded systems, Worst Case Execution Time, Real-time application, Statistics

- Scientific Description: The EVT-Kopernic tool is an implementation of the Extreme Value Theory (EVT) for the problem of the statistical estimation of worst-case bounds for the execution time of a program on a processor. Our implementation uses the two versions of EVT - GEV and GPD - to propose two independent methods of estimation. Their results are compared, and an estimation is validated only when the two results are sufficiently close. Our tool is proved predictable by its unique choice of block (GEV) and threshold (GPD), while proposing reproducible estimations.

- Functional Description: EVT-Kopernic is a tool proposing a statistical estimation for bounds on the worst-case execution time of a program on a processor. The estimator takes into account dependences between execution times by learning from the history of execution, while also dealing with cases of small variability of the execution times.

- News of the Year: Any statistical estimator should come with a representative measurement protocol based on a composition process that is proved correct. We propose the first such principle of composition, using a Bayesian modeling that iteratively takes into account different measurement models. The composition model has been described in a patent submitted this year, with a scientific publication under preparation.

- URL:

- Contact: Adriana Gogonel

- Participants: Adriana Gogonel, Liliana Cucu

8 New results

During this year, the results of Kopernic members have covered all three Kopernic research axes.

8.1 Worst case execution time estimation of a program

Participants: Slim Ben Amor, Rihab Bennour, Hadrien Clarke, Liliana Cucu-Grosjean, Adriana Gogonel, Kossivi Kougblenou, Yves Sorel, Kevin Zagalo, Marwan Wehaiba El Khazen.

We consider WCET statistical estimators that are based on the utilization of the Extreme Value Theory 23. Compared to existing methods 36, our results require the execution of the program under study on the targeted processor or at least a cycle-accurate simulator of this processor. The originality of considering such WCET statistical estimators consists in the proposition of a black box solution with respect to the program structure. This solution is obtained by (i) comparing different Generalized Extreme Values estimators 25 and (ii) separating the impact of the processor features from those of the program structure and of the execution environment, as variability factors for the CPS time properties.

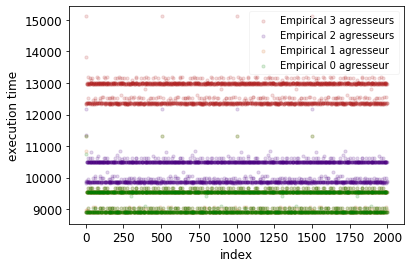

We continue the work on separating the impact of processor features and of the program structure on programs provided by Easymile within the collaborative project STARTREC. The WCET estimations and the context of this work have been described in 9. An illustrative application of the new principles to the minver program of the existing TACLeBench benchmark is provided to consolidate the justification of statistical WCET estimations within the context of the ISO26262 standard (see Figure 2). This ISO26262-related justification is one of the expected outputs of the STARTREC project, while a complete WCET estimation of KDBench programs is another output. In Figure 2, we present different execution profiles of the minver program executed on a bare-metal T1040 in the presence of several programs executed in parallel. These latter programs are also known as attackers.

Figure 2: Different execution profiles for the minver program

8.2 Building measurement-based benchmarks: KDBench

Participants: Slim Ben Amor, Rihab Bennour, Hadrien Clarke, Liliana Cucu-Grosjean, Ismail Hawila, Yves Sorel, Marwan Wehaiba El Khazen.

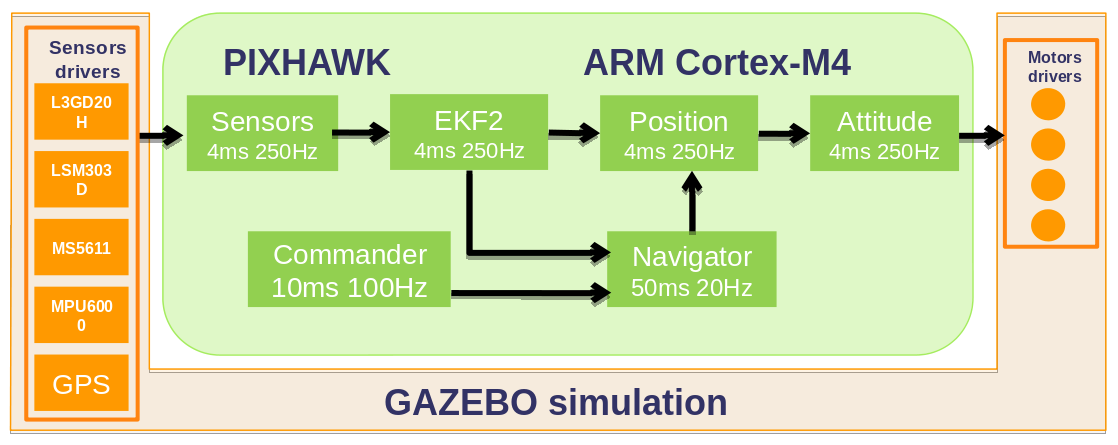

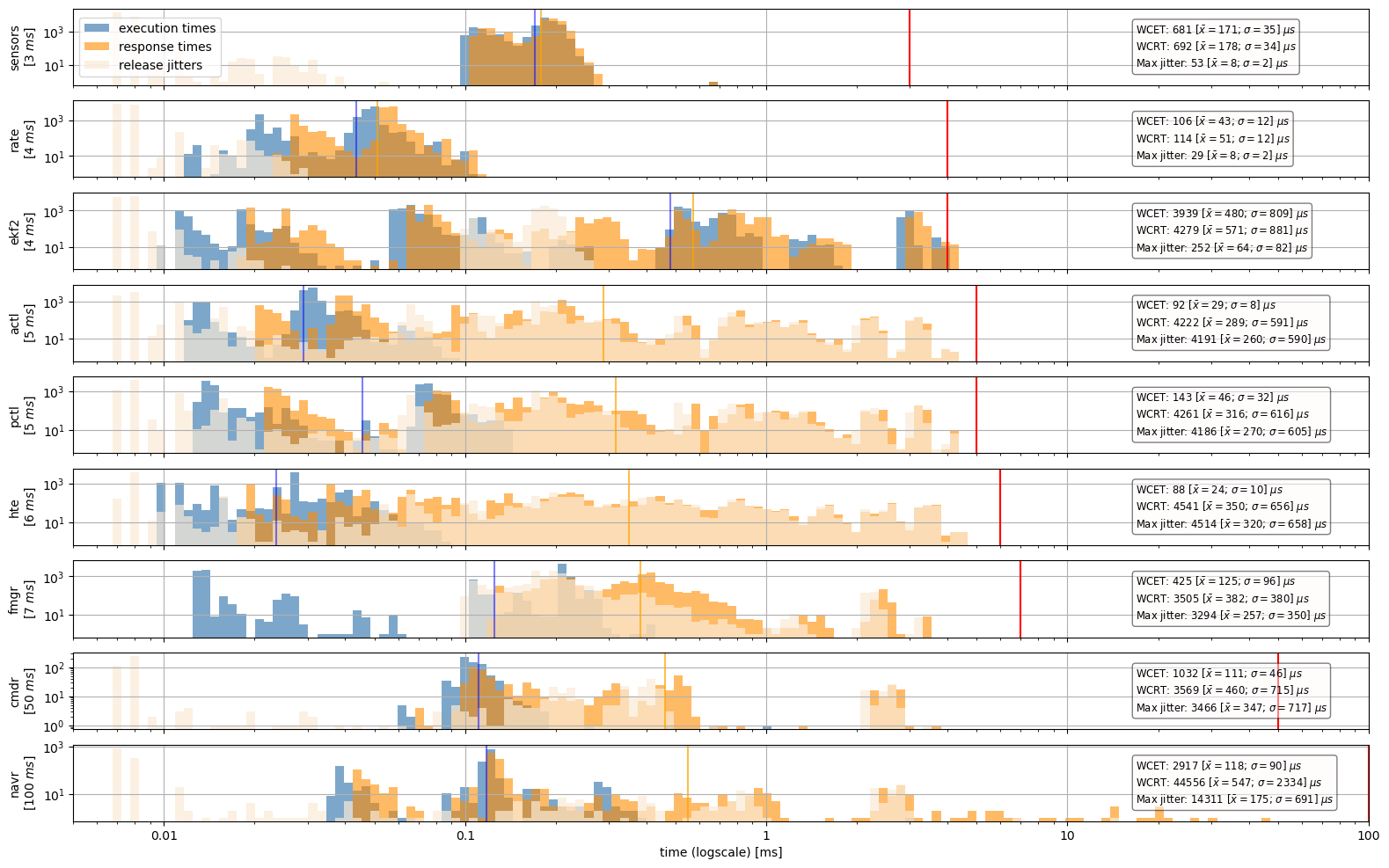

KDBench, our measurement-based benchmarks, are obtained by modifying open-source programs of the autopilot PX4, designed to control a wide range of air, land, sea and underwater drones. More precisely, the studied programs are executed on a standard Pixhawk drone board based on a predictable single core processor, the ARM Cortex-M4. During the CEOS project, we transformed this set of programs into a set of dependent tasks that satisfies real-time constraints, leading to a new version of PX4, called PX4-RT. As usual, the set of dependent real-time tasks is defined by a data dependency graph. An interested reader may refer to Figure 3, which details the real-time tasks corresponding to the various functions of the autopilot: sensors processing, Kalman filter (EKF2) estimating position and attitude, position control, attitude control, navigator for long term navigation, commander for modes control, and actuators (electric motors) processing.

Figure 3: The graph structure of the autopilot programs

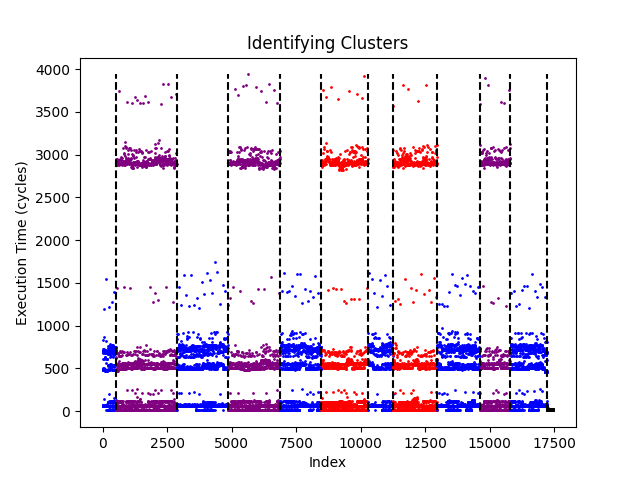

The PX4-RT programs are the open-source programs on which the KDBench are built. More formally, we understand by measurement-based benchmarks a 4-tuple $(A, M, \mathcal{P}, \mathcal{T})$ composed of a program $A$, a microcontroller $M$, a measurement protocol $\mathcal{P}$ and an ordered sequence of execution times $\mathcal{T}$. For the program $A$, one may provide the source code as well as the binary code, and in our case we consider the PX4-RT programs. A measurement protocol may be defined by the variation of the input variables (associated to sensors) of these benchmarks. In our case, the variation of the input variables is obtained by collecting them during a simulated flight. The fourth component, the ordered sequence of execution times, is proposed to overcome the difficulty of reproducing results while comparing execution times measured for the same program on slightly different microcontroller configurations. In Figure 4, we illustrate the ordered sequence of EKF2 execution times.

Different execution clusters for the EKF2 program of KDBench

Moreover, we provide these measurements by using information collected at the scheduler level, so the impact of the measurement protocol on the variation of the measured execution times is negligible. Last but not least, this fourth component improves the access of our community to hardware-in-the-loop (HitL) benchmarks. By HitL we understand that the benchmarks are executed on a microcontroller while the sensors and actuators of the considered cyber-physical system (CPS), as well as its physical environment, are simulated. Indeed, our community does not often provide numerical results for programs executed on microcontrollers, because of the significant implementation effort required for such execution or the lack of access to these processors. This may prevent the community from proposing realistic models describing the impact of existing microcontrollers, and may thus lead to results that are not realistic with respect to the microcontrollers used by the real-time industry. More details are provided on the KDBench website, accessible from the web page of the Kopernic team, as well as in a first short publication 13.

8.3 Scheduling of graph tasks on different resources within an energy budget

Participants: Avner Bar Hen, Slim Ben Amor, Hadrien Clarke, Liliana Cucu-Grosjean, Ismail Hawila, Yves Sorel, Kevin Zagalo.

Due to the widespread use of multicore processors in embedded and real-time systems, we concentrate our work on the schedulability of graph tasks on such processors. We consider preemptive fixed-priority scheduling policies, both global and partitioned. When possible, we monitor the energy consumption required to meet deadlines as a metric for comparing the efficiency of scheduling policies.

Given the difficulty of our scheduling problem, we have organized our work by first searching for an appropriate feasibility interval for a simpler version of this problem, which considers single-core processors and independent tasks with all parameters described by probability distributions. Results have been proposed in 8, 12, and we consider their extension to multicore scheduling as an intermediate step towards graph tasks. For such graphs, a model and results on multicore scheduling algorithms are proposed in 7.
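To illustrate what it means for a task parameter to be described by a probability distribution, the sketch below combines two discrete execution-time distributions by convolution and computes the probability that their sum exceeds a bound. This is a generic building block under an independence assumption, not the analysis of 8 or 12; the function names and all numerical values are hypothetical.

```python
from collections import defaultdict
from fractions import Fraction


def convolve(dist_a, dist_b):
    """Distribution of the sum of two independent discrete random variables.

    Each distribution maps a value (e.g. an execution time) to its probability.
    """
    result = defaultdict(Fraction)
    for value_a, prob_a in dist_a.items():
        for value_b, prob_b in dist_b.items():
            result[value_a + value_b] += prob_a * prob_b
    return dict(result)


def exceedance_probability(dist, bound):
    """Probability that the random variable is strictly larger than bound."""
    return sum(prob for value, prob in dist.items() if value > bound)


# Hypothetical execution-time distributions of two independent tasks.
c1 = {2: Fraction(9, 10), 5: Fraction(1, 10)}
c2 = {3: Fraction(4, 5), 6: Fraction(1, 5)}

total = convolve(c1, c2)
miss = exceedance_probability(total, 8)
print(total, miss)
```

Exact fractions are used instead of floating-point numbers so that the resulting probabilities sum to one without rounding error, which makes small exceedance probabilities easier to check by hand.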

This picture illustrates a schedule of the PX4-RT programs

In order to motivate the introduction of graph tasks within the real-time community, we are currently extending KDBench with scheduling-related information. For instance, as shown in Figure 5, a KDBench user may display the response times as well as the preemption areas for different PX4-RT programs.

9 Bilateral contracts and grants with industry

9.1 CIFRE Grant funded by StatInf

Participants: Liliana Cucu-Grosjean, Adriana Gogonel, Marwan Wehaiba El Khazen.

A CIFRE agreement between the Kopernic team and the start-up StatInf started on October 1st, 2020. It funds the study of the evolution of WCET models to take energy consumption into account, in line with the Kopernic research objectives.

9.2 CIFRE Grant funded by StatInf

Participants: Liliana Cucu-Grosjean, Slim Ben Amor, Ismail Hawila, Yves Sorel.

A CIFRE agreement between the Kopernic team and the start-up StatInf started on October 1st, 2022. It funds the study of the relation between control-theoretic robustness and the schedulability problem using probabilistic descriptions, in line with the Kopernic research objectives.

10 Partnerships and cooperations

Participants: Jamile Vasconcelos, George Lima, Olena Verbytska, Marharyta Tomina.

10.1 International initiatives

10.1.1 Associate Teams in the framework of an Inria International Lab or in the framework of an Inria International Program

KEPLER

- Title: Probabilistic foundations for time, a key concept for the certification of cyber-physical systems

- Duration: 2020 ->

- Coordinator: George Lima (gmlima@ufba.br)

- Partners: Universidade Federal da Bahia (Brazil)

- Inria contact: Liliana Cucu-Grosjean

- Summary: Today, the term cyber-physical systems (CPSs) refers to a new generation of systems integrating computational and physical capabilities to interact with humans. A defibrillator, a mobile phone, a car or an aircraft are all CPSs. Besides constraints like power consumption, security, size and weight, CPSs may have cyber components required to fulfill their functions within a limited time interval, a property also known as safety. This expectation arrives simultaneously with the need to implement new classes of algorithms, e.g. deep learning techniques, requiring significant computing and memory resources. These resources may be found on multicore processors, known for increasing the execution time variability of programs. Therefore, ensuring time predictability on multicore processors is our identified challenge. The Kepler project faces this challenge by developing new mechanisms and techniques for supporting CPS applications on multicore processors, focusing on scheduling and timing analysis, for which probabilistic guarantees should be provided.

During this year, Jamile Vasconcelos visited the Kopernic team to work on the correction of existing results on statistical WCET estimation that were published in 2022 within the real-time community.

10.2 International research visitors

10.2.1 Visit of Ukrainian MSc students

During this year, the Kopernic team hosted two Ukrainian MSc students, allowing them to continue their studies despite the situation in their home country. Their work was supervised by Kevin Zagalo.

10.3 National initiatives

10.3.1 PSPC

STARTREC

The STARTREC project is funded by the PSPC call. Its partners are Easymile, StatInf, Trustinsoft, Inria and CEA. Its objective is to propose ISO 26262-compliant arguments for autonomous driving. The results are described in Section 8.

11 Dissemination

11.1 Promoting scientific activities

11.1.1 Scientific events: organisation

General chair, scientific chair

- Liliana Cucu-Grosjean has been the scientific chair of Inria scientific days 2022

- Liliana Cucu-Grosjean has been the General Chair of the flagship conference IEEE Real-Time Systems Symposium (RTSS2022)

- Liliana Cucu-Grosjean and Yasmina Abdeddaïm have been the General Chairs of the Real-Time Networks and Systems Conference (RTNS2022) 11.

Member of the organizing committees

The Kopernic PhD students have been members of the RTSS2022 and RTNS2022 organizing committees.

Chair of conference program committees

- Liliana Cucu-Grosjean has been the E track chair at the European architecture conference DATE2022

- Yasmina Abdeddaïm has been the PC co-chair of the RTSOPS 2022 workshop (ECRTS)

Member of the conference program committees

Kopernic members are regular PC members for relevant conferences like RTSS, RTAS, ECRTS, DATE, ETFA, RTNS and WFCS.

Reviewers

All Kopernic members regularly serve as reviewers for the main journals of our domain: Journal of Real-Time Systems, Information Processing Letters, Journal of Heuristics, Journal of Systems Architecture, Journal of Signal Processing Systems, Leibniz Transactions on Embedded Systems, IEEE Transactions on Industrial Informatics, etc.

11.1.2 Leadership within the scientific community

Liliana Cucu-Grosjean has been an IEEE TCRTS member since 2016.

11.1.3 Scientific expertise

- Yves Sorel is a member of the Steering Committee of System Design and Development Tools Group of Systematic Paris-Region Cluster.

11.1.4 Research administration

- Yves Sorel is a member of the CDT Paris center commission

11.2 Teaching - Supervision - Juries

11.2.1 Teaching

- Yasmina Abdeddaïm, Graphs and Algorithms, the 2nd year of Engineering school, ESIEE Paris

- Yasmina Abdeddaïm, Safe design of reactive systems, the 3rd year of Engineering school, ESIEE Paris

- Yasmina Abdeddaïm, Embedded Real-Time Software, the 3rd year of Engineering school, ESIEE Paris

- Yasmina Abdeddaïm, Artificial Intelligence, the 2nd year of Engineering school, ESIEE Paris

- Avner Bar-Hen, Spatial statistics, ENSAI

11.2.2 Supervision

- Ismail Hawila, Multicore scheduling of real-time control systems of probabilistic tasks with precedence constraints, Sorbonne University, started in October 2022, supervised by Liliana Cucu-Grosjean and Slim Ben Amor (StatInf).

- Chiara Daini, Dimensioning of mixed-criticality embedded systems for an efficient execution of artificial intelligence algorithms, Sorbonne University, started in January 2022, supervised by Liliana Cucu-Grosjean and Yasmina Abdeddaïm (ESIEE), with the support of Adriana Gogonel (StatInf).

- Amine Mohamed Khelassi, Using statistical methods to model and estimate the time variability of programs executed on multicore architectures, Gustave Eiffel University, started in October 2021, supervised by Yasmina Abdeddaïm and Eva Dokladalova (ESIEE) and Liliana Cucu-Grosjean.

- Marwan Wehaiba El Khazen, Statistical models for optimizing the energy consumption of cyber-physical systems, Sorbonne University, started in October 2020, supervised by Liliana Cucu-Grosjean and Adriana Gogonel (StatInf).

- Kevin Zagalo, Statistical predictability of cyber-physical systems, Sorbonne University, started in January 2020 with a defense expected in 2023, supervised by Liliana Cucu and Prof. Avner Bar-Hen (CNAM).

11.2.3 Juries

- Avner Bar-Hen has been a reviewer for the PhD thesis of Yvenn Amara-Ouali, Statistical modelling of electric vehicle charging behaviours (Univ. Paris Saclay)

- Liliana Cucu-Grosjean has been a member of the following PhD juries (HDR juries and reviewer roles are indicated):

- Roy Jamil, Un environnement unifié pour le développement sur puce à cœurs asymétriques, ENSMA Poitiers (reviewer)

- Ludovic Thomas, Analyse des conséquences sur les bornes de latences des combinaisons de mécanismes d'ordonnancement, de redondance et de synchronisation dans les réseaux temps-réel, ISAE Toulouse

- Pierre-Julien Chaine, Adaptabilité de Time Sensitive Networking aux exigences de l'industrie aérospatiale, ONERA Toulouse

- Sébastien Le Nours, Contributions à la modélisation et la simulation de niveau système des architectures matérielles-logicielles des systèmes embarqués, Nantes University (HDR)

11.3 Popularization

11.3.1 Internal or external Inria responsibilities

- Liliana Cucu-Grosjean has been the Inria national harassment referee within the CNHSCT

11.3.2 Articles and contents

- Adriana Gogonel and Liliana Cucu-Grosjean, RocqStat, un produit StatInf, lauréat aux Trophées des Assises de l’Embarqué, Alumni de Télécom Paris, June 2022

- Adriana Gogonel and Liliana Cucu-Grosjean, Partenariat: cas d'usage Airbus x StatInf, David and Goliath Study 2022

11.3.3 Education

- Liliana Cucu-Grosjean presented probabilistic scheduling as part of the "Initiation à la recherche" (introduction to research) within the Embedded Systems MSc, University of Paris Saclay, February 2022

- A chapter introducing new researchers to probabilistic real-time scheduling has been proposed by Kopernic members in 10

11.3.4 Interventions

- Adriana Gogonel has been a speaker at the "Day around the 2022 doctorate" of the Société Informatique de France (SIF), 2022

- Adriana Gogonel has been a speaker at the "PhD Talent Day" organized by PhD Talent French Organization, 2022

- Adriana Gogonel participated in the panel "Women entrepreneurs in deep tech", Paris Saclay University, BPI Tour 2022

12 Scientific production

12.1 Major publications

- 1 patent: Simulation Device. FR2016/050504, France, March 2016. URL: https://hal.science/hal-01666599

- 2 inproceedings: Measurement-Based Probabilistic Timing Analysis for Multi-path Programs. The 24th Euromicro Conference on Real-Time Systems, ECRTS, 2012, 91--101

- 3 patent: Dispositif de caractérisation et/ou de modélisation de temps d'exécution pire-cas. 1000408053, France, June 2017. URL: https://hal.science/hal-01666535

- 4 inproceedings: Latency analysis for data chains of real-time periodic tasks. The 23rd IEEE International Conference on Emerging Technologies and Factory Automation, ETFA'18, September 2018

- 5 article: Reproducibility and representativity: mandatory properties for the compositionality of measurement-based WCET estimation approaches. SIGBED Review 14(3), 2017, 24--31

- 6 inproceedings: Scheduling Real-time HiL Co-simulation of Cyber-Physical Systems on Multi-core Architectures. The 24th IEEE International Conference on Embedded and Real-Time Computing Systems and Applications, August 2018

12.2 Publications of the year

International journals

International peer-reviewed conferences

Scientific book chapters

Edition (books, proceedings, special issue of a journal)

Reports & preprints

Other scientific publications

12.3 Cited publications

- 14 book: Cyber-physical systems. IEEE, 2011

- 15 inproceedings: A Generalized Parallel Task Model for Recurrent Real-time Processes. 2012 IEEE 33rd Real-Time Systems Symposium (RTSS), 2012, 63-72

- 16 inproceedings: WCET Analysis of Probabilistic Hard Real-Time System. Proceedings of the 23rd IEEE Real-Time Systems Symposium (RTSS'02), IEEE Computer Society, 2002, 279--288

- 17 inproceedings: A Synchronous-Based Code Generator for Explicit Hybrid Systems Languages. Compiler Construction - 24th International Conference, CC, Joint with ETAPS, 2015, 69--88

- 18 inproceedings: Preliminary results for introducing dependent random variables in stochastic feasibility analysis on CAN. The WIP session of the 7th IEEE International Workshop on Factory Communication Systems (WFCS), 2008

- 19 article: A Survey of Probabilistic Timing Analysis Techniques for Real-Time Systems. LITES 6(1), 2019, 03:1--03:60

- 20 inproceedings: Statistical Analysis of WCET for Scheduling. The 22nd IEEE Real-Time Systems Symposium (RTSS), 2001, 215--225

- 21 inproceedings: TACLeBench: A Benchmark Collection to Support Worst-Case Execution Time Research. 16th International Workshop on Worst-Case Execution Time Analysis (WCET), OASICS 55, 2016, 2:1--2:10

- 22 inproceedings: Response time analysis of sporadic DAG tasks under partitioned scheduling. 11th IEEE Symposium on Industrial Embedded Systems (SIES), May 2016, 1-10

- 23 article: Open Challenges for Probabilistic Measurement-Based Worst-Case Execution Time. Embedded Systems Letters 9(3), 2017, 69--72

- 24 inproceedings: The Mälardalen WCET Benchmarks: Past, Present And Future. 10th International Workshop on Worst-Case Execution Time Analysis (WCET), OASICS 15, 2010, 136--146

- 25 inproceedings: Work-in-Progress: Lessons learnt from creating an Extreme Value Library in Python. 41st IEEE Real-Time Systems Symposium, RTSS, IEEE, 2020, 415--418

- 26 article: Computing Needs Time. Communications of ACM 52(5), 2009

- 27 book: Introduction to embedded systems - a cyber-physical systems approach. MIT Press, 2017

- 28 inproceedings: Real-Time Queueing Theory. The 10th IEEE Real-Time Systems Symposium (RTSS), 1996

- 29 book: Multiprocessor Scheduling for Real-Time Systems. Springer, 2015

- 30 inproceedings: Optimal Priority Assignment Algorithms for Probabilistic Real-Time Systems. The 19th International Conference on Real-Time and Network Systems (RTNS), 2011

- 31 inproceedings: Response Time Analysis for Fixed-Priority Tasks with Multiple Probabilistic Parameters. The IEEE Real-Time Systems Symposium (RTSS), 2013

- 32 inproceedings: Monoprocessor Real-Time Scheduling of Data Dependent Tasks with Exact Preemption Cost for Embedded Systems. The 16th IEEE International Conference on Computational Science and Engineering (CSE), 2013

- 33 inproceedings: PapaBench: a Free Real-Time Benchmark. 6th Intl. Workshop on Worst-Case Execution Time (WCET) Analysis, OASICS 4, 2006

- 34 inproceedings: Automatic Parallelization of Multi-Rate FMI-based Co-Simulation On Multi-core. The Symposium on Theory of Modeling & Simulation: DEVS Integrative M&S Symposium, 2017

- 35 inproceedings: Probabilistic Performance Guarantee for Real-Time Tasks with Varying Computation Times. IEEE Real-Time and Embedded Technology and Applications Symposium, 1995

- 36 article: The worst-case execution time problem: overview of methods and survey of tools. Trans. on Embedded Computing Systems 7(3), 2008, 1-53