2023 Activity report, Project-Team BIOVISION

RNSR: 201622040S - Research center: Inria Centre at Université Côte d'Azur

- Team name: Biologically plausible Integrative mOdels of the Visual system : towards synergIstic Solutions for visually-Impaired people and artificial visiON

- Domain: Digital Health, Biology and Earth

- Theme: Computational Neuroscience and Medicine

Keywords

Computer Science and Digital Science

- A5.1.1. Engineering of interactive systems

- A5.1.2. Evaluation of interactive systems

- A5.1.9. User and perceptual studies

- A5.3. Image processing and analysis

- A5.4. Computer vision

- A5.5.4. Animation

- A5.6. Virtual reality, augmented reality

- A5.6.1. Virtual reality

- A5.8. Natural language processing

- A6.1.1. Continuous Modeling (PDE, ODE)

- A6.1.4. Multiscale modeling

- A6.1.5. Multiphysics modeling

- A6.2.4. Statistical methods

- A6.3.3. Data processing

- A7.1.3. Graph algorithms

- A9.4. Natural language processing

- A9.7. AI algorithmics

Other Research Topics and Application Domains

- B1.1.8. Mathematical biology

- B1.2. Neuroscience and cognitive science

- B1.2.1. Understanding and simulation of the brain and the nervous system

- B1.2.2. Cognitive science

- B1.2.3. Computational neurosciences

- B2.1. Well being

- B2.5.1. Sensorimotor disabilities

- B2.5.3. Assistance for elderly

- B2.7.2. Health monitoring systems

- B9.1.2. Serious games

- B9.3. Medias

- B9.5.2. Mathematics

- B9.5.3. Physics

- B9.6.8. Linguistics

- B9.9. Ethics

1 Team members, visitors, external collaborators

Research Scientists

- Bruno Cessac [Team leader, INRIA, Senior Researcher, HDR]

- Pierre Kornprobst [INRIA, Senior Researcher, HDR]

- Hui-Yin Wu [INRIA, ISFP]

Post-Doctoral Fellow

- Sebastian Santiago Vizcay [INRIA, Post-Doctoral Fellow]

PhD Students

- Alexandre Bonlarron [INRIA]

- Johanna Delachambre [UNIV COTE D'AZUR]

- Simone Ebert [INRIA, from Oct 2023]

- Simone Ebert [UNIV COTE D'AZUR, until Sep 2023]

- Jerome Emonet [INRIA]

- Franz Franco Gallo [INRIA]

- Sebastian Gallardo Diaz [UNIV COTE D'AZUR, CIFRE, from Jun 2023]

- Erwan Petit [UNIV COTE D'AZUR, from Oct 2023]

- Florent Robert [UNIV COTE D'AZUR]

Technical Staff

- Clement Bergman [INRIA, Engineer]

- Jeremy Termoz-Masson [INRIA, Engineer, until Jul 2023]

Interns and Apprentices

- Christos Kyriazis [UNIV COTE D'AZUR, Intern, from Sep 2023]

- Christos Kyriazis [UNIV COTE D'AZUR, Intern, from Mar 2023 until May 2023]

- Erwan Petit [INRIA, Intern, from Feb 2023 until Jul 2023]

- Kateryna Pirkovets [INRIA, Intern, from Mar 2023 until Aug 2023]

Administrative Assistant

- Marie-Cecile Lafont [INRIA]

Visiting Scientists

- Matthieu Gilson [AMU, until Feb 2023]

- Laurent Perrinet [CNRS, until Apr 2023]

External Collaborators

- Aurélie Calabrese [CNRS, from Dec 2023]

- Eric Castet [CNRS]

2 Overall objectives

Vision is a key function for sensing our world and performing complex tasks. It has high sensitivity and strong reliability, even though most of its input is noisy, changing, and ambiguous. A better understanding of how biological vision works opens up scientific challenges as well as promising technological, medical and societal breakthroughs. Fundamental questions, such as how a visual scene is encoded by the retina into spike trains, transmitted to the visual cortex via the optic nerve through the thalamus, decoded in a fast and efficient way, and finally turned into a sense of perception, offer perspectives for research and technological development for current and future generations.

Vision is not always functional, though. Sometimes, "something" goes wrong. Although many visual impairments, such as myopia, hypermetropia, or cataract, can be corrected with glasses, contact lenses, or other means such as medication or surgery, pathologies impairing the retina, such as Age-Related Macular Degeneration (AMD) and Retinitis Pigmentosa (RP), cannot be treated with these standard means 31. They cause a progressive degradation of vision (Figure 1), first to a stage of low vision (visual acuity of less than 6/18 down to light perception, or a visual field of less than 10 degrees from the point of fixation) and eventually to blindness. Thus, a person with low vision must learn to adjust to their pathology. Progress in research and technology can help them. Considering the aging of the population in developed countries and its strong correlation with the prevalence of eye diseases, low vision has already become a major societal problem.


Central blind spot (i.e., scotoma), as perceived by an individual suffering from Central Field Loss (CFL) when looking at someone's face.
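The low-vision definition quoted above combines an acuity criterion and a field criterion. As a minimal sketch, assuming acuity is given as a Snellen fraction and the field as its radius in degrees around fixation, the check can be written as follows. The helper names are hypothetical, the logMAR conversion is the standard log10 of the minimum angle of resolution, and the lower bound of the range (light perception, below which vision is classified as blindness) is deliberately not modeled.

```python
import math

# Thresholds quoted in the text (treated as strict inequalities here, an assumption)
LOW_VISION_DECIMAL_ACUITY = 6 / 18   # Snellen 6/18 expressed as a decimal acuity
LOW_VISION_FIELD_DEG = 10.0          # visual-field radius around fixation, in degrees


def snellen_to_logmar(numerator: float, denominator: float) -> float:
    """Convert a Snellen fraction (e.g. 6/18) to logMAR = log10(MAR).

    MAR, the minimum angle of resolution in arcmin, equals denominator / numerator.
    """
    return math.log10(denominator / numerator)


def meets_low_vision_criterion(numerator: float, denominator: float,
                               field_radius_deg: float) -> bool:
    """Hypothetical helper: acuity worse than 6/18 OR field narrower than 10 degrees.

    The light-perception lower bound (blindness) is not modeled.
    """
    decimal_acuity = numerator / denominator
    return (decimal_acuity < LOW_VISION_DECIMAL_ACUITY
            or field_radius_deg < LOW_VISION_FIELD_DEG)


print(round(snellen_to_logmar(6, 18), 3))   # 0.477
```

The 6/18 threshold thus corresponds to a logMAR of about 0.48, the scale on which clinical reading tests such as MNREAD usually express print size.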

In this context, the Biovision team's research revolves around the central theme of biological vision and perception, and the impact of low-vision conditions. Our strategy is based upon four cornerstones: to model, to assist diagnosis, to aid visual activities like reading, and to enable personalized content creation. We aim to develop fundamental research as well as technology transfer along three entangled axes of research:

- Axis 1: Modeling the retina and the primary visual system.

- Axis 2: Diagnosis, rehabilitation, and low-vision aids.

- Axis 3: Visual media analysis and creation.

These axes form a stable, three-pillared basis for our research activities, giving our team an original combination of expertise: neuroscience modeling, computer vision, Virtual and Augmented Reality (XR), and media analysis and creation. Our research themes require strong interactions with experimental neuroscientists, modelers, ophthalmologists and patients, constituting a large network of national and international collaborators. Biovision is therefore a strongly multi-disciplinary team. We publish in international journals and conferences in several fields, including neuroscience, low vision, mathematics, physics, computer vision, multimedia, computer graphics, and human-computer interaction.

3 Research program

3.1 Axis 1 - Modeling the retina and the primary visual system.

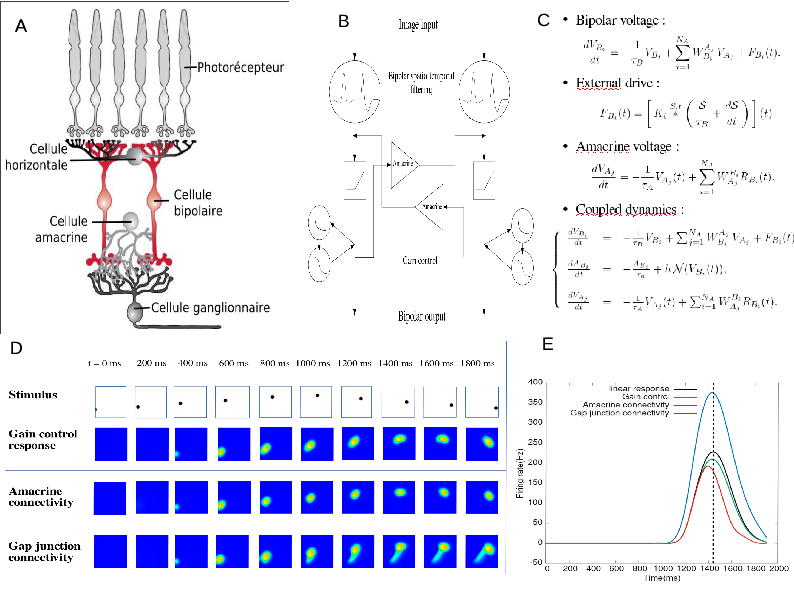

In collaboration with neuroscience labs, we derive phenomenological equations and analyze them mathematically by adopting methods from theoretical physics or mathematics (Figure 2). We also develop simulation platforms such as Pranas or Macular, which help us confront theoretical predictions with numerical simulations and allow researchers to perform in silico experiments under conditions rarely accessible to experimentalists (such as simultaneously recording the retina layers and the primary visual cortex¹ (V1)). Specifically, our research focuses on the modeling and mathematical study of:

- Multi-scale dynamics of the retina in the presence of spatio-temporal stimuli;

- Response to motion, anticipation and surprise in the early visual system;

- Spatio-temporal coding and decoding of visual scenes by spike trains;

- Retinal pathologies.


The process of retina modeling. A) The retina structure from biology. B) Designing a simplified architecture that keeps the retina components we want to better understand (here, the role of amacrine cells in motion anticipation). C) Deriving mathematical equations from A and B. D, E) Results from numerical and mathematical modeling. Here, in our retina model's response to a parabolic motion, the response peak resulting from amacrine cell connectivity or gain control occurs in advance of the response peak obtained without these mechanisms. This illustrates retinal anticipation.
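The caption states that gain control can move the response peak ahead of the peak obtained without it. The toy sketch below illustrates only that gain-control part; it is not the team's retina model, and every element is an assumption: a single drive V(t) produced by a bar crossing one receptive field (a Gaussian bump in time), an activity variable A that low-pass filters V, and a hypothetical gain g(A) = 1/(1 + A²) multiplying V. Parameter values are arbitrary.

```python
import numpy as np


def simulate(t_end=1.0, dt=1e-4, t_center=0.5, width=0.08, tau_a=0.1, h=20.0):
    """Toy gain-control model (illustrative parameters, not fitted to data).

    V:   drive at one cell as a bar crosses its receptive field,
         modeled as a Gaussian bump centered at t_center (s).
    A:   activity variable integrating V with time constant tau_a (s).
    out: gain-controlled response V * g(A), with g(A) = 1 / (1 + A**2).
    """
    t = np.arange(0.0, t_end, dt)
    V = np.exp(-0.5 * ((t - t_center) / width) ** 2)
    A = np.zeros_like(t)
    for k in range(1, len(t)):
        # Forward-Euler step of dA/dt = -A / tau_a + h * V
        A[k] = A[k - 1] + dt * (-A[k - 1] / tau_a + h * V[k - 1])
    out = V / (1.0 + A ** 2)
    return t, V, out


t, V, out = simulate()
lead_ms = 1e3 * (t[np.argmax(V)] - t[np.argmax(out)])
print(f"gain-controlled peak precedes the raw peak by {lead_ms:.1f} ms")
```

The advance does not depend on the particular parameters: A is still increasing when V peaks, so the gain is falling at that moment and the product V·g(A) has already passed its maximum.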

3.2 Axis 2 - Diagnosis, rehabilitation, and low-vision aids.

In collaboration with low vision clinical centers and cognitive science labs, we develop computer science methods, open software and toolboxes to assist low vision patients (Figure 3), with a particular focus on Age-Related Macular Degeneration². Because AMD patients still have plastic and functional vision in their peripheral visual field 35, they must develop efficient “Eccentric Viewing” (EV) to adapt to the central blind zone (scotoma), directing their gaze away from the object they want to identify 41. Commonly proposed assistance tools involve visual rehabilitation methods 36 and visual aids, which usually consist of magnifiers 32.

Our main research goals are:

- Understanding the relations between anatomo-functional and behavioral observations;

- Diagnosis from reading performance screening and oculomotor behavior analysis;

- Personalized and gamified rehabilitation for training eccentric viewing in VR;

- Personalized visual aid systems for daily living activities.

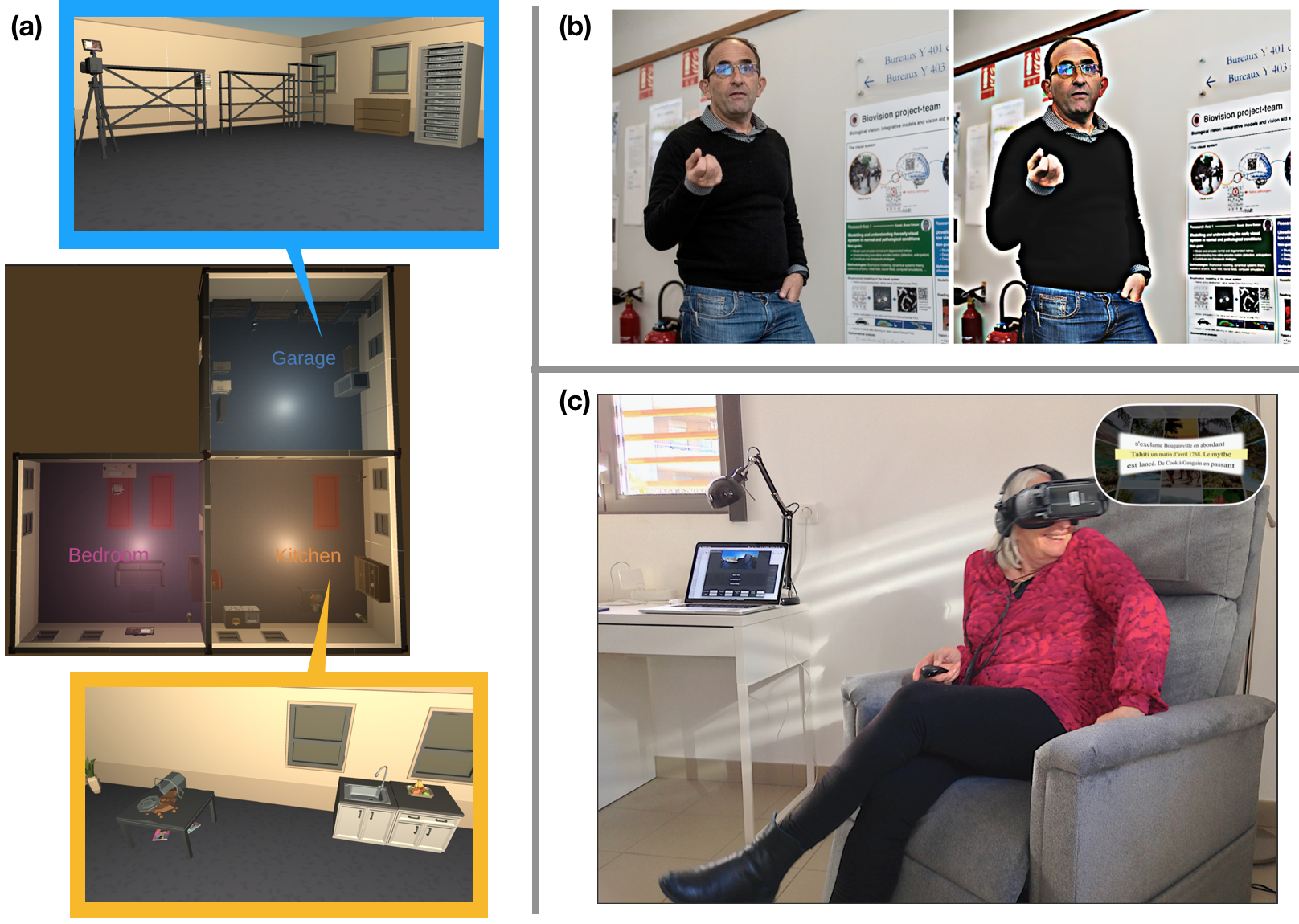


Multiple methodologies in graphics and image processing have applications in low-vision technologies, including (a) 3D virtual environments for studying user perception and behavior and for creating 3D stimuli for model testing, (b) image enhancement techniques to magnify objects and people and increase the visibility of their contours, and (c) personalization of media content, such as text, in 360-degree visual space using VR headsets.

3.3 Axis 3 - Visual media analysis and creation.

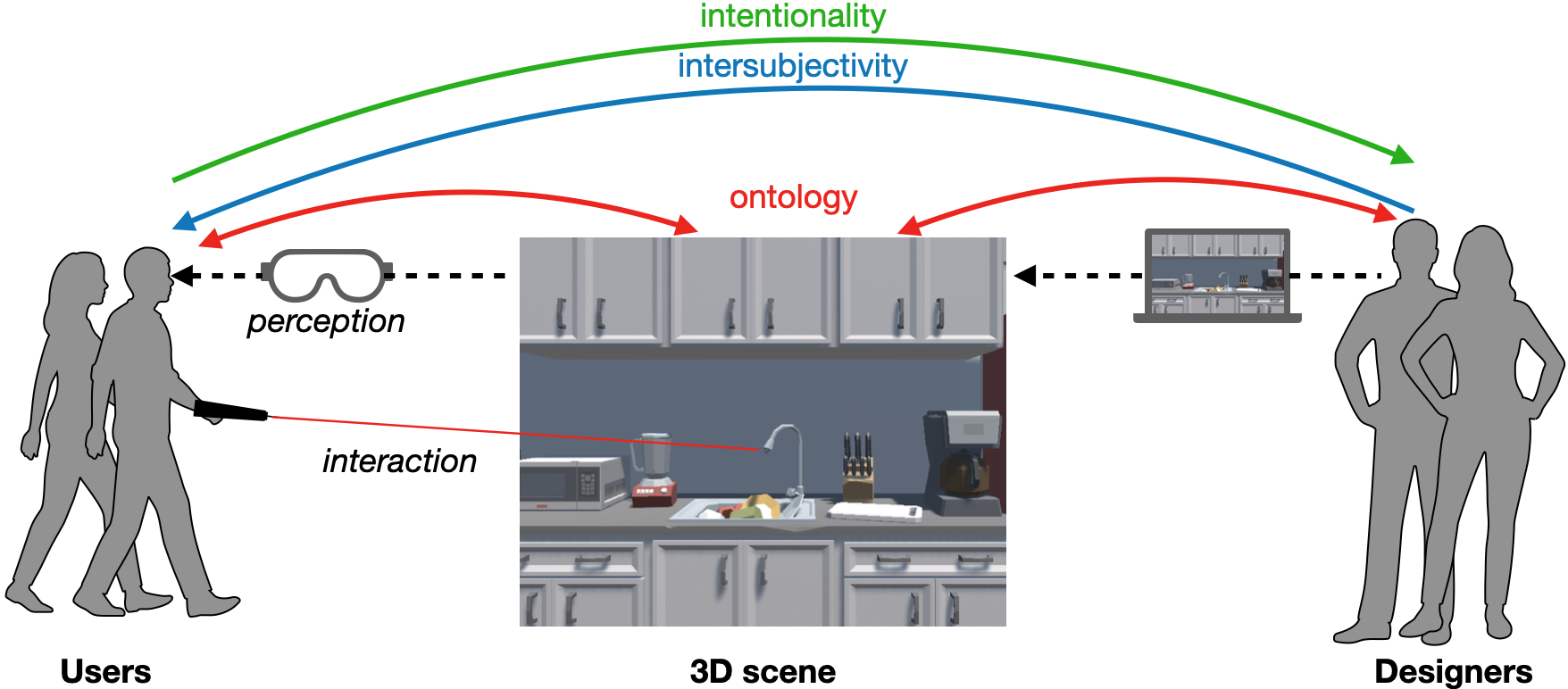

We investigate the impact of visual media design on user experience and perception, and propose assisted creativity tools for creating personalized and adapted media content (Figure 4). We employ computer vision and deep learning techniques for media understanding in film and in complex documents like newspapers. We deploy this understanding in new media platforms such as virtual and augmented reality for applications in low-vision training, accessible media design, and generation of 3D visual stimuli:

- Accessible / personalized media design for low vision training and reading platforms;

- Assisted creativity tools for 3D environments;

- Visual system and multivariate user behavior modeling in 3D contextual environments;

- Visual media understanding and gender representation in film.

On the left are the users, in the middle the 3D scene, and on the right the designers. Designers create the 3D scene through a computer interface, and users interact with it through headsets and controllers. We see three main gaps of perception: ontology, between the scene and its various users; intersubjectivity, when communicating interactive possibilities from the designer to the user; and intentionality, when designers analyze user intentions.

4 Application domains

4.1 Applications of low-vision studies

- Cognitive research: Virtual reality technology offers a new opportunity to conduct cognitive and behavioral research in virtual environments where all parameters can be psychophysically controlled. In the scope of ANR DEVISE, we are currently developing and using the PTVR software (Perception Toolbox for Virtual Reality) 20 to build our own experimental protocols to study low vision. However, we believe that the potential of PTVR is much larger, as it could be useful to any researcher familiar with Python programming who wants to create and analyze a sophisticated VR experiment with parsimonious code.

- Serious games: Serious games use game mechanics to achieve goals such as training, education, or awareness. In our context, we want to explore serious games as a way to help low-vision patients perform rehabilitation exercises. Virtual and augmented reality technologies are promising platforms for developing such rehabilitation exercises targeted to specific pathologies, owing to their potential to create fully immersive environments or to inject additional information into the real world. For example, for Age-Related Macular Degeneration (AMD), our objective is to propose solutions allowing the rehabilitation of visuo-perceptual-motor functions to optimally use the residual portions of the peripheral retina defined from anatomo-functional exams 11.

- Vision aid systems: A variety of aids for low-vision people are already on the market. They use various kinds of desktop (e.g., CCTVs), handheld (mobile applications), or wearable (e.g., OxSight, Helios) technologies, and offer different functionalities including magnification, image enhancement, text to speech, and face and object recognition. Our goal is to design new solutions allowing autonomous interaction, primarily using mixed reality (virtual and augmented reality). This technology could offer new affordable solutions, developed in synergy with rehabilitation protocols, to provide personalized adaptations and guidance.

4.2 Applications of vision modeling studies

- Neuroscience research. In-silico experiments are a way to reduce experimental costs, test hypotheses, design models, and test algorithms. Our goal is to develop a large-scale simulation platform for normal and impaired retinas. This platform, called Macular, allows testing hypotheses about retinal function in normal vision (such as the role of amacrine cells in motion anticipation 45, or the expected effects of pharmacology on retina dynamics 34). It is also designed to mimic specific degenerations or pharmacologically induced impairments 40, and to emulate electrical stimulation by prostheses.

In addition, the platform provides a realistic input to models or simulators of the thalamus or the visual cortex, in contrast to the inputs usually considered in modeling studies.

- Education. Macular is also targeted as a useful tool for educational purposes, illustrating for students how the retina works and responds to visual stimuli.

4.3 Applications of visual media analysis and creation

- Engineering immersive interactive storytelling platforms. With the sharp rise in popularity of immersive virtual and augmented reality technologies, it is important to acknowledge their strong potential to create engaging, embodied experiences. Reaching this goal involves investigating the impact of virtual and augmented reality platforms from an interactivity and immersivity perspective: how people parse visual information, react, and respond when facing content and media 10, 7, 38, 37.

- Personalized content creation and assisted creativity. Models of user perception can be integrated in tools for content creators, such as to simulate low-vision conditions to aid in the design of accessible spaces and media, and to diversify immersive scenarios used for studying user perception, for entertainment, and for rehabilitation 9, 47.

- Media studies and social awareness. Investigating interpretable models of media analysis will allow us to provide tools to conduct qualitative media studies on large amounts of data in relation to existing societal challenges and issues. This includes understanding the impact of media design on accessibility for patients with low vision, as well as raising awareness of biases in media 48 and in existing media analysis tools from deep learning paradigms 49.

5 Social and environmental responsibility

The research themes of the Biovision team have direct social impacts on two fronts:

- Low vision: we work in partnership with neuroscientists and ophthalmologists to design technologies for the diagnosis and rehabilitation of low-vision pathologies, addressing a strong societal challenge.

- Accessibility: in concert with researchers in media studies, we tackle the social challenge of designing accessible media, including for people with visual impairments, and of addressing media bias both in content design and in machine learning approaches.

6 Highlights of the year

6.1 Awards

Simone Ebert received a prize from the Academy of Complex Systems, Université Côte d'Azur, for her presentation at Complex Days 2023.

Simone Ebert received DocWalker funding from the Academy DS4H, Université Côte d'Azur.

Florent Robert and Hui-Yin Wu received the best paper award at the 2023 ACM Conference on Interactive Media Experiences (ACM IMX) for their article “An Integrated Framework for Understanding Multimodal Embodied Experiences in Interactive Virtual Reality” 7, co-authored with Marco Winckler, Lucile Sassatelli, Stephen Ramanoël and Auriane Gros.

7 New software, platforms, open data

7.1 New software

7.1.1 Macular

- Name: Numerical platform for simulations of the primary visual system in normal and pathological conditions

- Keywords: Retina, Vision, Neurosciences

- Scientific Description: At the heart of Macular is an object called "Cell". These "cells" are inspired by biological cells, but the concept is more general: a Cell can also be a group of cells of the same type, a field generated by a large number of cells (for example, a cortical column), or an electrode in a retinal prosthesis. A cell is defined by internal variables (evolving over time), internal parameters (adjusted by sliders), a dynamical evolution (described by a set of differential equations), and inputs. Inputs can come from an external visual scene or from other synaptically connected cells. Synapses are also Macular objects, defined by specific variables, parameters, and equations. Cells of the same type are connected in layers according to a graph with a specific type of synapse (intra-layer connectivity). Cells of different types can also be connected via synapses (inter-layer connectivity).

All the information concerning the types of cells, their inputs, their synapses, and the organization of the layers is stored in a file of type .mac (for "macular") defining what we call a "scenario". Different types of scenarios are offered to users, who can load and play them while modifying the parameters and viewing the variables (see technical section).

Macular is built around a central idea: its use and its graphical interface can evolve according to the user's objectives. It can therefore be used in user-designed scenarios, such as the simulation of retinal waves, the simulation of retinal and cortical responses to prosthetic stimulation, or the study of pharmacological impact on retinal response. Users can design their own scenarios using the Macular Template Engine (see technical section).

- Functional Description: Macular is a simulation platform for the retina and the primary visual cortex, designed to reproduce the response to visual stimuli or to electrical stimuli produced by retinal prostheses, in normal vision conditions, or altered (pharmacology, pathology, development).

- Release Contributions: First release.

- URL:

- Contact: Bruno Cessac

- Participants: Bruno Cessac, Evgenia Kartsaki, Selma Souihel, Teva Andreoletti, Alex Ye, Sebastian Gallardo Diaz, Ghada Bahloul, Tristan Cabel, Erwan Demairy, Pierre Fernique, Thibaud Kloczko, Come Le Breton, Jonathan Levy, Nicolas Niclausse, Jean-Luc Szpyrka, Julien Wintz, Carlos Zubiaga Pena
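To make the Cell / Synapse / layer vocabulary of the scientific description concrete, here is a minimal Python sketch of that kind of object model. It is not Macular's API, nor its .mac scenario format: the class and function names, the leaky-integrator equation, the linear synapse, and the forward-Euler scheme are assumptions chosen for illustration. The usage at the bottom wires a toy bipolar/amacrine/ganglion circuit with inhibitory feedback.

```python
from dataclasses import dataclass, field
from typing import Callable, List


@dataclass
class Cell:
    """Hypothetical cell: an internal variable, a parameter, an ODE, and inputs."""
    name: str
    tau: float = 0.05                                          # parameter: time constant (s)
    v: float = 0.0                                             # internal variable
    external_input: Callable[[float], float] = lambda t: 0.0   # drive from the visual scene
    incoming: List["Synapse"] = field(default_factory=list)    # synaptic inputs

    def derivative(self, t: float) -> float:
        # Leaky integration of the external drive plus all synaptic currents
        synaptic = sum(s.current() for s in self.incoming)
        return (-self.v + self.external_input(t) + synaptic) / self.tau


@dataclass
class Synapse:
    """Hypothetical linear synapse; its parameter is the weight (sign = excit./inhib.)."""
    pre: Cell
    weight: float

    def current(self) -> float:
        return self.weight * self.pre.v


def connect(pre: Cell, post: Cell, weight: float) -> None:
    post.incoming.append(Synapse(pre, weight))


def run(cells: List[Cell], t_end: float, dt: float = 1e-3) -> None:
    """Forward-Euler integration; all derivatives are computed before any update."""
    t = 0.0
    for _ in range(int(round(t_end / dt))):
        derivs = [c.derivative(t) for c in cells]
        for c, d in zip(cells, derivs):
            c.v += dt * d
        t += dt


# Usage: a step of light at t = 0.1 s drives the bipolar cell
bipolar = Cell("bipolar", external_input=lambda t: 1.0 if t >= 0.1 else 0.0)
amacrine = Cell("amacrine", tau=0.1)
ganglion = Cell("ganglion")
connect(bipolar, amacrine, weight=1.0)    # excitatory BC -> AC
connect(amacrine, bipolar, weight=-0.5)   # inhibitory AC -> BC feedback
connect(bipolar, ganglion, weight=1.0)    # excitatory BC -> RGC
run([bipolar, amacrine, ganglion], t_end=1.0)
print({c.name: round(c.v, 3) for c in (bipolar, amacrine, ganglion)})
```

With these illustrative parameters the circuit relaxes to the fixed point of the linear equations (all three values near 2/3 for a unit step), which is an easy sanity check when changing the integrator or the parameters.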

7.1.2 GUsT-3D

- Name: Guided User Tasks Unity plugin for 3D virtual reality environments

- Keywords: 3D, Virtual reality, Interactive Scenarios, Ontologies, User study

- Functional Description: We present the GUsT-3D framework for designing Guided User Tasks in embodied VR experiences, i.e., tasks that require the user to carry out a series of interactions guided by the constraints of the 3D scene. GUsT-3D is implemented as a set of tools that support a four-step workflow to: (1) annotate entities in the scene with names, navigation, and interaction possibilities, (2) define user tasks with interactive and timing constraints, (3) manage scene changes, task progress, and user behavior logging in real time, and (4) conduct post-scenario analysis through spatio-temporal queries on user logs, and visualize scene entity relations through a scene graph.

The software also includes a set of tools for processing gaze tracking data, to: (1) clean and synchronize the data; (2) calculate fixations with the I-VT, I-DT, IDTVR, IS5T, REMoDNaV, and IDVT algorithms; and (3) visualize the data (points of regard and fixations) both in real time and collectively.

- URL:

- Contact: Hui-Yin Wu

- Participants: Hui-Yin Wu, Marco Alba Winckler, Lucile Sassatelli, Florent Robert

- Partner: I3S
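I-VT (velocity-threshold identification) is the simplest of the fixation algorithms listed for GUsT-3D. The following minimal sketch is not GUsT-3D's implementation. It assumes gaze is already expressed as angular positions in degrees, sampled at a fixed rate (120 Hz here, matching the Vive Pro Eye tracker listed in Section 7.2.1), and it uses 30°/s as the threshold, a commonly used value adopted here as an assumption. Real pipelines add noise filtering, gap filling, and a minimum fixation duration.

```python
import numpy as np


def ivt_fixations(x_deg, y_deg, rate_hz=120.0, threshold_deg_s=30.0):
    """Velocity-threshold identification (I-VT) of fixations.

    x_deg, y_deg: gaze direction in degrees of visual angle, one value per sample.
    Returns a list of (start_index, end_index_inclusive, centroid_x, centroid_y).
    """
    x = np.asarray(x_deg, dtype=float)
    y = np.asarray(y_deg, dtype=float)
    if len(x) < 2:
        return []  # too few samples to estimate a velocity
    # Point-to-point angular speed in deg/s (small-angle approximation)
    speed = np.hypot(np.diff(x), np.diff(y)) * rate_hz
    # Sample i (i >= 1) is labeled by the speed entering it; sample 0 copies sample 1
    is_fix = np.concatenate(([speed[0] < threshold_deg_s], speed < threshold_deg_s))

    fixations, start = [], None
    for i, fix in enumerate(is_fix):
        if fix and start is None:
            start = i                                  # a fixation begins
        elif not fix and start is not None:
            fixations.append(_summarize(x, y, start, i - 1))
            start = None                               # a saccade sample ends it
    if start is not None:
        fixations.append(_summarize(x, y, start, len(x) - 1))
    return fixations


def _summarize(x, y, start, end):
    """Fixation record: index range and centroid of the gaze samples."""
    return (start, end, float(x[start:end + 1].mean()), float(y[start:end + 1].mean()))


# Usage: 10 samples at 0 deg, a 10-degree jump, then 10 samples at 10 deg
print(ivt_fixations([0.0] * 10 + [10.0] * 10, [0.0] * 20))
```

The sample reached by the jump is labeled as saccadic, so the second fixation starts one sample later; dispersion-based methods such as I-DT avoid this per-sample labeling by grouping samples in sliding windows instead.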

7.1.3 PTVR

- Name: Perception Toolbox for Virtual Reality

- Keywords: Visual perception, Behavioral science, Virtual reality

- Functional Description: Some of the main assets of PTVR are: (i) the “Perimetric” coordinate system, which allows users to easily place their stimuli in 3D; (ii) intuitive ways of dealing with visual angles in 3D; (iii) the possibility to define “flat screens” to replicate and extend standard experiments made on 2D monitor screens; (iv) saving of experimental results and behavioral data such as head and gaze tracking; (v) user-friendly documentation with many animated 3D figures to easily visualize 3D subtleties; (vi) many “demo” scripts that are very instructive for learning PTVR when viewed in the VR headset; and (vii) a focus on implementing standard and innovative clinical tools for visuomotor testing and visuomotor readaptation (notably for low vision).

- URL:

- Authors: Eric Castet, Christophe Hugon, Jeremy Termoz-Masson, Johanna Delachambre, Hui-Yin Wu, Pierre Kornprobst

- Contact: Pierre Kornprobst

- Partner: Aix-Marseille Université - CNRS Laboratoire de Psychologie Cognitive - UMR 7290 - Team ‘Perception and attention’
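Handling visual angles in 3D rests on standard geometry: an object of size s at distance d subtends θ = 2·atan(s / 2d), and conversely s = 2·d·tan(θ/2). The minimal sketch below implements these conversions, plus the placement of a point given by an eccentricity, a half-meridian, and a radial distance around the viewing axis. It is not PTVR's API. The function names and the axis convention (viewer at the origin looking along +z, half-meridian measured counterclockwise from the rightward +x axis) are assumptions made for illustration.

```python
import math


def size_for_visual_angle(angle_deg: float, distance: float) -> float:
    """Physical size subtending angle_deg at the given distance (same unit as distance)."""
    return 2.0 * distance * math.tan(math.radians(angle_deg) / 2.0)


def visual_angle_for_size(size: float, distance: float) -> float:
    """Visual angle in degrees subtended by an object of the given size."""
    return math.degrees(2.0 * math.atan(size / (2.0 * distance)))


def perimetric_to_cartesian(eccentricity_deg: float, half_meridian_deg: float,
                            radial_distance: float):
    """Hypothetical perimetric-style placement around the viewing axis.

    Eccentricity: angle between the viewing axis (+z) and the point.
    Half-meridian: direction of that offset, counterclockwise from +x
    (0 = right, 90 = up). Returns (x, y, z) in the unit of radial_distance.
    """
    ecc = math.radians(eccentricity_deg)
    hm = math.radians(half_meridian_deg)
    x = radial_distance * math.sin(ecc) * math.cos(hm)
    y = radial_distance * math.sin(ecc) * math.sin(hm)
    z = radial_distance * math.cos(ecc)
    return x, y, z


# Usage: a 1-degree letter at 57.3 cm is about 1 cm tall
print(round(size_for_visual_angle(1.0, 57.3), 3))
# A target 10 degrees up from fixation, 1 m away
print(perimetric_to_cartesian(10.0, 90.0, 1.0))
```

Keeping the radial distance as an explicit coordinate means that an object can be moved in depth while preserving its eccentricity, which is why angular rather than Cartesian placement is convenient for perception experiments.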

7.2 New platforms

Members of Biovision are marked with a .

7.2.1 CREATTIVE3D platform for navigation studies in VR

Participants: Hui-Yin Wu, Florent Robert, Lucile Sassatelli [UniCA, CNRS, I3S; Institut Universitaire de France], Marco Winckler [UniCA, CNRS, I3S].

As part of ANR CREATTIVE3D, the Biovision team has established a technological platform in the Kahn immersive space, including:

- a 40 tracked space for the Vive Pro Eye virtual reality headset with 4-8 infra-red base stations.

- GUsT-3D software 7.1.2 under Unity for the creation, management, logging, analysis, and scene graph visualization of interactive immersive experiences

- Vive Pro Eye headset integrated sensors, including a gyroscope and accelerometers, spatial tracking through base stations, and a 120 Hz eye tracker

- External sensors, including XSens Awinda Starter inertial motion-capture suits and Shimmer GSR physiological sensors

The platform has hosted around 60 user studies lasting over 120 hours this year, partly published in 7. It has also provided demonstrations of our virtual reality projects for visitors, collaborators, and students.

7.3 Open data

CREATTIVE3D multimodal dataset of user behavior in virtual reality

In the context of the ANR CREATTIVE3D project, we combine the expertise of computer scientists, neuroscientists, and clinical practitioners to analyze the impact of a simulated low-vision condition on user navigation behavior in complex road-crossing scenes: a common daily situation where difficulty in accessing and processing visual information (e.g., traffic lights, approaching cars) in a timely fashion can have serious consequences for a person's safety and well-being. This dataset contains the data collected in the study described in 7.

Data link: https://zenodo.org/doi/10.5281/zenodo.8269108.

Contact: Hui-Yin Wu

8 New results

We present here the new scientific results of the team over the course of the year. For each entry, members of Biovision are marked with a .

8.1 Modeling the retina and the primary visual system

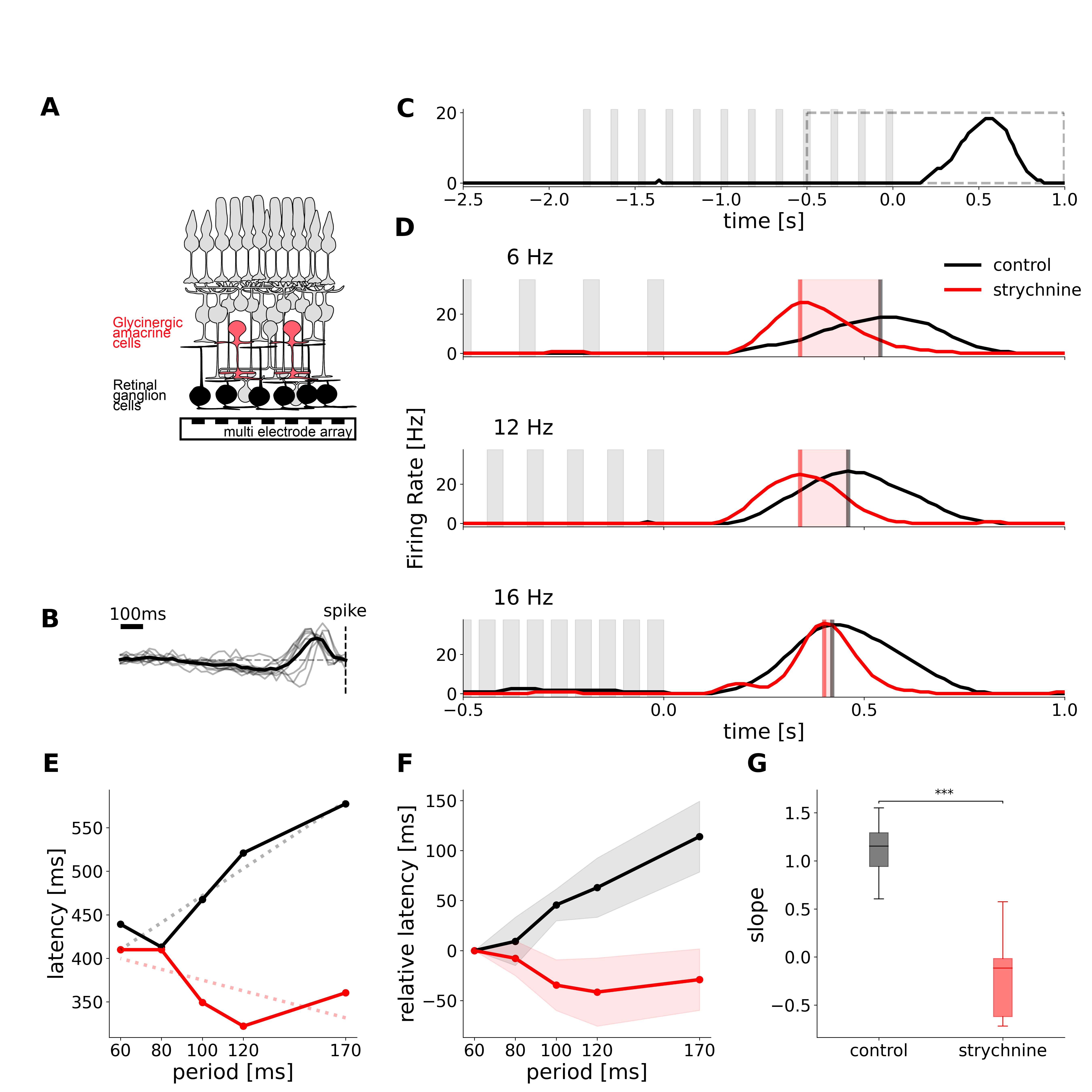

8.1.1 Temporal pattern recognition in retinal ganglion cells is mediated by dynamical inhibitory synapses

Participants: Simone Ebert, Thomas Buffet [Institut de la Vision, Sorbonne Université, Paris, France.], Semihcan Sermet [Institut de la Vision, Sorbonne Université, Paris, France.], Olivier Marre [Institut de la Vision, Sorbonne Université, Paris, France.], Bruno Cessac.

Description: A fundamental task for the brain is to generate predictions of future sensory inputs, and signal errors in these predictions. Many neurons have been shown to signal omitted stimuli during periodic stimulation, even in the retina. However, the mechanisms of this error signaling are unclear. Here we show that depressing inhibitory synapses enable the retina to signal an omitted stimulus in a flash sequence. While ganglion cells, the retinal output, responded to an omitted flash with a constant latency over many frequencies of the flash sequence, we found that this was not the case once inhibition was blocked. We built a simple circuit model and showed that depressing inhibitory synapses was a necessary component to reproduce our experimental findings (Figure 5). We also generated new predictions with this model, which we confirmed experimentally. Depressing inhibitory synapses could thus be a key component to generate the predictive responses observed in many brain areas.

This figure shows a schematic of the retina and experimental recordings of the Omitted Stimulus Response in normal conditions and under application of strychnine, which blocks glycine receptors.

This work has been presented at the conferences Complex Days 2023 30, Neuromod 2023 18, and COSYNE 2023 26, and is under revision in the journal Nature Communications 21.
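Short-term depression makes the transmission of an inhibitory synapse depend on the recent history of the flash sequence. The minimal sketch below illustrates this frequency dependence with a Tsodyks-Markram-style resource model, a standard phenomenological description of depression that is assumed here and is not necessarily the formulation used in the paper: each flash releases a fraction U of the available resources R, which then recover toward 1 with time constant τ_rec. The parameter values are illustrative. How depression combines with the rest of the circuit to produce a constant-latency omitted stimulus response is the subject of the work above and is not reproduced here.

```python
import math


def release_per_flash(n_flashes: int, period_s: float, U: float = 0.4,
                      tau_rec_s: float = 0.3):
    """Event-driven depressing synapse driven by a periodic flash sequence.

    Between flashes, resources R recover toward 1 with time constant tau_rec_s;
    each flash releases U * R and leaves R * (1 - U).
    Returns the released amount for each flash.
    """
    R = 1.0
    decay = math.exp(-period_s / tau_rec_s)
    released = []
    for k in range(n_flashes):
        if k > 0:
            R = 1.0 - (1.0 - R) * decay   # recovery during the inter-flash interval
        released.append(U * R)
        R *= 1.0 - U                      # depletion caused by the flash
    return released


def steady_state_release(period_s: float, U: float = 0.4, tau_rec_s: float = 0.3) -> float:
    """Closed-form fixed point of the map above: U * R_ss,
    with R_ss = (1 - e) / (1 - (1 - U) e) and e = exp(-period / tau_rec)."""
    e = math.exp(-period_s / tau_rec_s)
    return U * (1.0 - e) / (1.0 - (1.0 - U) * e)


# Faster flash sequences depress the synapse more strongly
for period in (0.5, 0.2, 0.1):
    print(f"{1 / period:4.1f} Hz -> steady-state release {steady_state_release(period):.3f}")
```

Because the steady-state transmission shrinks as the flash frequency rises, the inhibition received by a ganglion cell carries information about the rhythm of the sequence, which is the kind of history dependence the circuit model relies on.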

8.1.2 How does the inner retinal network shape the ganglion cells receptive field : a computational study

Participants: Evgenia Kartsaki [Biosciences Institute, Newcastle University, Newcastle upon Tyne, UK; UniCA, Inria, SED], Gerrit Hilgen [Biosciences Institute, Newcastle University, Newcastle upon Tyne, UK; Health & Life Sciences, Applied Sciences, Northumbria University, Newcastle upon Tyne UK], Evelyne Sernagor [Biosciences Institute, Newcastle University, Newcastle upon Tyne, UK], Bruno Cessac.

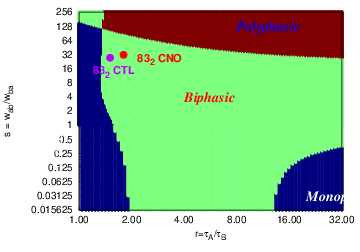

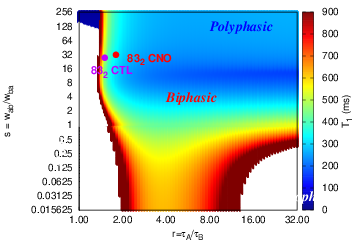

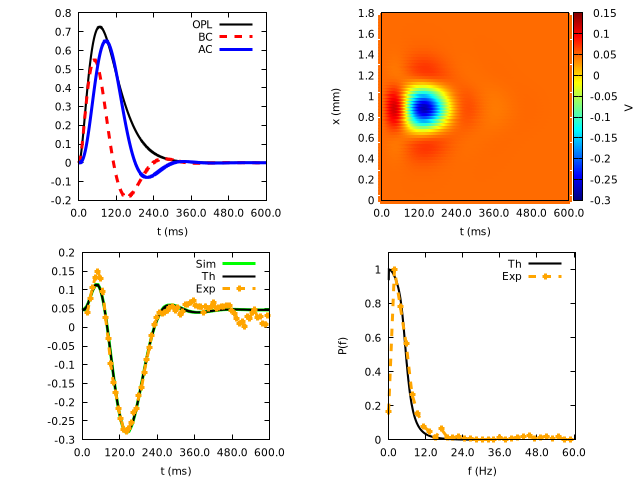

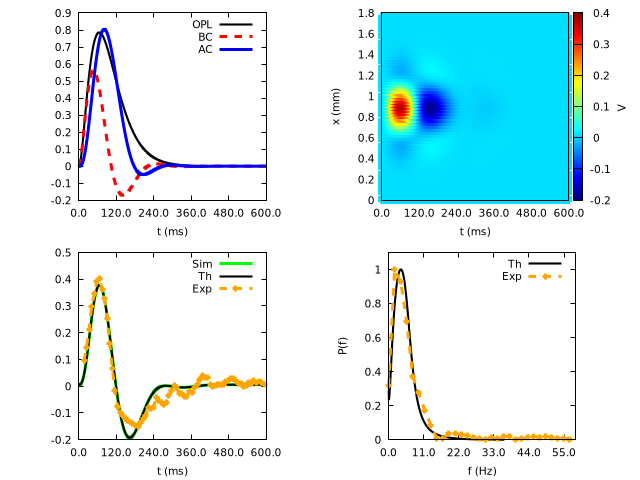

Description: We consider a model of basic inner retinal connectivity in which bipolar cells (BCs) and amacrine cells (ACs) interconnect, and both cell types project onto retinal ganglion cells (RGCs), modulating the RGC output to the brain's visual areas. We derive an analytical formula for the spatio-temporal response of RGCs to stimuli, taking into account the effects of amacrine cell inhibition. This analysis reveals two important functional parameters of the network: (i) the intensity of the interactions between bipolar and amacrine cells, and (ii) the characteristic time scale of these responses. Both parameters have a profound combined impact on the spatio-temporal features of RGC responses to light. The validity of the model is confirmed by faithfully reproducing pharmacogenetic experimental results obtained by stimulating excitatory DREADDs (Designer Receptors Exclusively Activated by Designer Drugs) expressed on ganglion cells and amacrine cell subclasses, thereby modifying the activity of the inner retinal network in response to visual stimuli in a complex, entangled manner. DREADDs are activated by "designer drugs" such as clozapine-N-oxide (CNO), resulting in an increase in free cytoplasmic calcium and a profound increase in excitability in the cells that express these DREADDs (Roth, 2016). We found DREADD expression both in subsets of RGCs and in ACs in the inner nuclear layer (INL) 39. Under these conditions, CNO is expected to act on both ACs and RGCs, providing a way to disentangle the effects of: (i) direct excitation of DREADD-expressing RGCs and increased inhibitory input onto RGCs originating from DREADD-expressing ACs; and (ii) a change in the cells' response time scale (via a change in membrane conductance). This provides an experimental setup to validate our theoretical predictions. Our mathematical model allows us to explore and decipher these complex effects in a manner that would not be feasible experimentally, and provides novel insights into retinal dynamics.

Figure 6 illustrates our results. This paper has been accepted for publication in the journal Neural Computation 12.
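The two functional parameters identified by the analysis, the BC-AC interaction strength and the characteristic time scale, can already be seen in a drastically reduced toy network. This is not the paper's model or its analytical formula. It assumes one bipolar and one amacrine unit with linear dynamics, a common time constant τ, and a coupling of magnitude w (excitation BC→AC, inhibition AC→BC):

dV_B/dt = -V_B/τ - w·V_A + s(t),  dV_A/dt = -V_A/τ + w·V_B.

The interaction matrix has eigenvalues -1/τ ± i·w, so the impulse response of the bipolar unit is V_B(t) = e^(-t/τ)·cos(w·t). The sketch checks this closed form against a numerical eigendecomposition.

```python
import numpy as np


def bc_ac_matrix(tau: float, w: float) -> np.ndarray:
    """Linear BC-AC interaction matrix for the state (V_B, V_A)."""
    return np.array([[-1.0 / tau, -w],
                     [w, -1.0 / tau]])


def impulse_response_bc(tau: float, w: float, t: np.ndarray) -> np.ndarray:
    """Closed form: bipolar response to a unit impulse on the bipolar unit."""
    return np.exp(-t / tau) * np.cos(w * t)


def impulse_response_numeric(tau: float, w: float, t: np.ndarray) -> np.ndarray:
    """Same response from the eigendecomposition x(t) = sum_k c_k exp(lam_k t) v_k."""
    lam, vecs = np.linalg.eig(bc_ac_matrix(tau, w))
    coeffs = np.linalg.solve(vecs, np.array([1.0, 0.0]))   # initial state (1, 0)
    # Bipolar component: first row of the eigenvector matrix
    x_b = (vecs[0, :] * coeffs * np.exp(np.outer(t, lam))).sum(axis=1)
    return x_b.real


tau, w = 0.05, 40.0                     # illustrative values (s, 1/s)
eig = np.linalg.eigvals(bc_ac_matrix(tau, w))
print(eig)                              # -1/tau ± i*w, i.e. -20 ± 40j (order may vary)
```

In this toy, a stronger BC-AC interaction (larger w) makes the response oscillate faster, while a shorter time scale (smaller τ, e.g. from a higher membrane conductance) makes it decay faster, mirroring the two parameters the full analysis identifies.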

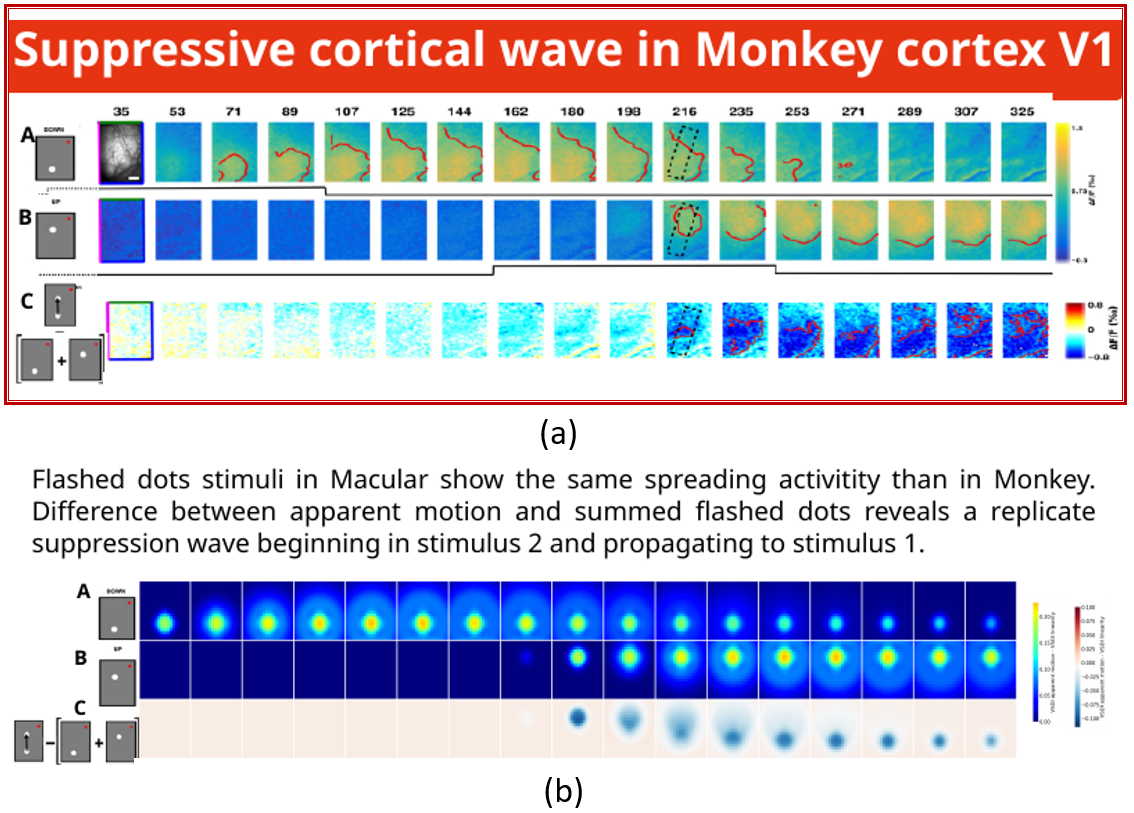

8.1.3 A retino-cortical computational model to study suppressive cortical waves in saccades

Participants: Jérôme Emonet, Bruno Cessac, Alain Destexhe [NeuroPSI - Institut des Neurosciences Paris-Saclay], Frédéric Chavane [INT - Institut de Neurosciences de la Timone], Matteo Di Volo [UCBL - Université Claude Bernard Lyon 1], Mark Wexler [INCC - UMR 8002 - Centre Neurosciences intégratives et Cognition / Integrative Neuroscience and Cognition Center], Olivier Marre [Institut de la Vision].

Description: Ocular saccades are very fast eye movements, occurring about 3 times per second, with a maximum speed of 500°/s and a mean speed of 200°/s (Westheimer, 1954). They are essential for exploring a visual scene and capturing its information, and they also underlie our face recognition and reading abilities. During a saccade, the image of the visual scene moves at the same speed as the saccade. We should therefore perceive a smear or grey-out of the image content. However, we perceive neither this motion nor the smear: on the contrary, the visual scene stays stable, clear and precise. Indeed, most visual stimuli present during saccades are not perceived, a phenomenon called "saccadic omission". A current explanation of this phenomenon is based on a signal called "corollary discharge" (Wurtz, 2018). On the one hand, this copy of the oculomotor command is transmitted to the frontal eye field, where it guides the saccade and may allow a remapping of the visual field (Sommer & Wurtz, 2002). On the other hand, a loss of visual sensitivity called "saccadic suppression" is caused by an inhibitory signal generated by the corollary discharge and sent to the middle temporal cortex (Berman et al., 2016), resulting in a selective central inhibition of the magnocellular pathway (Burr et al., 1994) and in the absence of motion perception during the saccade. However, this explanation has limits. When a stimulus is shown to a subject within the duration of a single saccade, it is seen as smeared. This smear can be avoided by presenting perisaccadic stimuli as forward and/or backward masks (Campbell & Wurtz, 1978). This leads to an alternative explanation of saccadic omission based on temporal visual masking (Wexler & Cavanagh, 2019).

In the context of the ANR ShootingStar project, we want to further explore this hypothesis by combining psychophysical experiments, neurophysiological experiments in the monkey primary visual cortex, and modeling, studying the role of suppressive waves propagating in the direction opposite to a motion. Their suppressive effect, from the last position of the stimulus back to the former ones, could explain how the smear is erased. We propose a model in which a retinal input feeds a V1 model. This retino-cortical model produces cortical activity corresponding to pixel intensity in voltage-sensitive dye imaging (VSDI). It reproduces cortical activity waves induced by moving objects in agreement with previous experiments (Benvenuti et al., 2020), in particular the suppressive waves experimentally observed in the monkey (Chemla et al., 2019), as a first step toward modeling saccadic suppression (Figure 7). This work has been presented at the GDR NeuralNet conference, Marseille 27.

8.2 Diagnosis, rehabilitation, and low-vision aids

8.2.1 Constrained text generation to measure reading performance

Participants: Alexandre Bonlarron, Aurélie Calabrèse [Aix-Marseille Université (CNRS, Laboratoire de Psychologie Cognitive, Marseille, France)], Pierre Kornprobst, Jean-Charles Régin [UniCA, I3S, Constraints and Application Lab].

Context: Measuring reading performance is one of the most widely used methods in ophthalmology clinics to judge the effectiveness of treatments, surgical procedures, or rehabilitation techniques 44. However, reading tests are limited by the small number of standardized texts available. For the MNREAD test 42, which is one of the reference tests used as an example in this work, there are only two sets of 19 sentences in French. These sentences are challenging to write because they have to respect rules of different kinds (e.g., related to grammar, length, lexicon, and display). They are also tricky to find: out of a sample of more than three million sentences from children’s literature, only four satisfy the criteria of the MNREAD reading test.

Description: To obtain more sentences, we propose a new standardized sentence generation method. We formalize the problem as a discrete combinatorial optimization problem and use multivalued decision diagrams (MDDs), a well-known data structure for handling constraints. In our context, one key strength of MDDs is that they compute an exhaustive set of solutions without performing any search. Once the sentences are obtained, we apply a language model (GPT-2) to keep the best ones. We detail this for English and also for French, where agreement and conjugation rules are known to be more complex. With the help of GPT-2, we obtain hundreds of bona fide candidate sentences. Compared with the few dozen sentences usually available in the well-known MNREAD vision screening test, this is a major step forward for standardized sentence generation. Moreover, since the method can easily be adapted to other languages, it has the potential to make the MNREAD test even more valuable and usable. More generally, this work highlights MDDs as a convincing alternative for constrained text generation, especially when the constraints are hard to satisfy, and opens many other prospects.
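The "no search" property of MDDs can be illustrated with a toy Python sketch. It builds a layered diagram whose nodes record only the number of characters used so far, so prefixes of equal length are merged; a bottom-up pass then deletes every arc that cannot reach an accepting node. After that, every root-to-terminal path is a valid sentence, so solutions can be counted by dynamic programming and listed without dead ends. The vocabulary, the single length constraint and the function names are hypothetical; the actual method handles grammar, lexicon and display rules and ranks candidates with GPT-2, none of which is modeled here.

```python
from collections import defaultdict

def build_mdd(vocab, n_words, min_len, max_len):
    """Toy layered MDD (hypothetical): sentences of exactly n_words words whose
    total length (letters plus single spaces) lies in [min_len, max_len].
    A node is (layer, characters used so far), so equal prefixes are merged."""
    arcs = []        # arcs[i] maps (state, word) -> next state
    frontier = {0}   # root: 0 characters used
    for i in range(n_words):
        layer = {}
        for state in frontier:
            for w in vocab:
                nxt = state + len(w) + (1 if i > 0 else 0)  # +1 for the space
                if nxt <= max_len:
                    layer[(state, w)] = nxt
        arcs.append(layer)
        frontier = set(layer.values())
    # Bottom-up pass: remove arcs that cannot reach an accepting terminal node.
    alive = {s for s in frontier if min_len <= s <= max_len}
    for i in range(n_words - 1, -1, -1):
        arcs[i] = {k: v for k, v in arcs[i].items() if v in alive}
        alive = {s for (s, _w) in arcs[i]}
    return arcs

def count_solutions(arcs):
    """Count root-to-terminal paths by dynamic programming (no enumeration)."""
    paths = {0: 1}
    for layer in arcs:
        nxt = defaultdict(int)
        for (state, _w), target in layer.items():
            nxt[target] += paths.get(state, 0)
        paths = nxt
    return sum(paths.values())

def sentences(arcs, layer=0, state=0, prefix=()):
    """Enumerate all solutions; every remaining path is valid, so no dead ends."""
    if layer == len(arcs):
        yield " ".join(prefix)
        return
    for (s, w), target in arcs[layer].items():
        if s == state:
            yield from sentences(arcs, layer + 1, target, prefix + (w,))
```

For example, with the vocabulary `the, cat, sat, on, a, mat`, three words and an exact length of 9 characters, `count_solutions` returns 60 and `sentences` yields the same 60 strings (such as "a cat sat"). This toy also accepts repetitions such as "on on the"; real constraints would exclude them.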

This work was presented at IJCAI 2023 14 and at national events 13, 23.

8.2.2 A new vessel-based method to estimate automatically the position of the non-functional fovea on altered retinography from maculopathies

Participants: Aurélie Calabrèse [Aix-Marseille Université (CNRS, Laboratoire de Psychologie Cognitive, Marseille, France) ], Vincent Fournet, Séverine Dours [Aix-Marseille Université (CNRS, Laboratoire de Psychologie Cognitive, Marseille, France); Institut d’Education Sensoriel (IES) Arc-en-Ciel], Frédéric Matonti [Centre Monticelli Paradis d'Ophtalmologie], Eric Castet [Aix-Marseille Université (CNRS, Laboratoire de Psychologie Cognitive, Marseille, France) ], Pierre Kornprobst.

Context: This contribution is part of a larger initiative in the scope of ANR DEVISE. We aim at measuring and analyzing the 2D geometry of each patient's "visual field," notably the characteristics of his/her scotoma (e.g., shape, location w.r.t. the fovea, absolute vs. relative) and gaze fixation data. This work is based on data acquired from a Nidek MP3 micro-perimeter installed at Centre Monticelli Paradis d'Ophtalmologie. In 2021, the focus was on the estimation of the fovea position from perimetric images (see below) and on the development of a first graphical user interface to manipulate MP3 data.

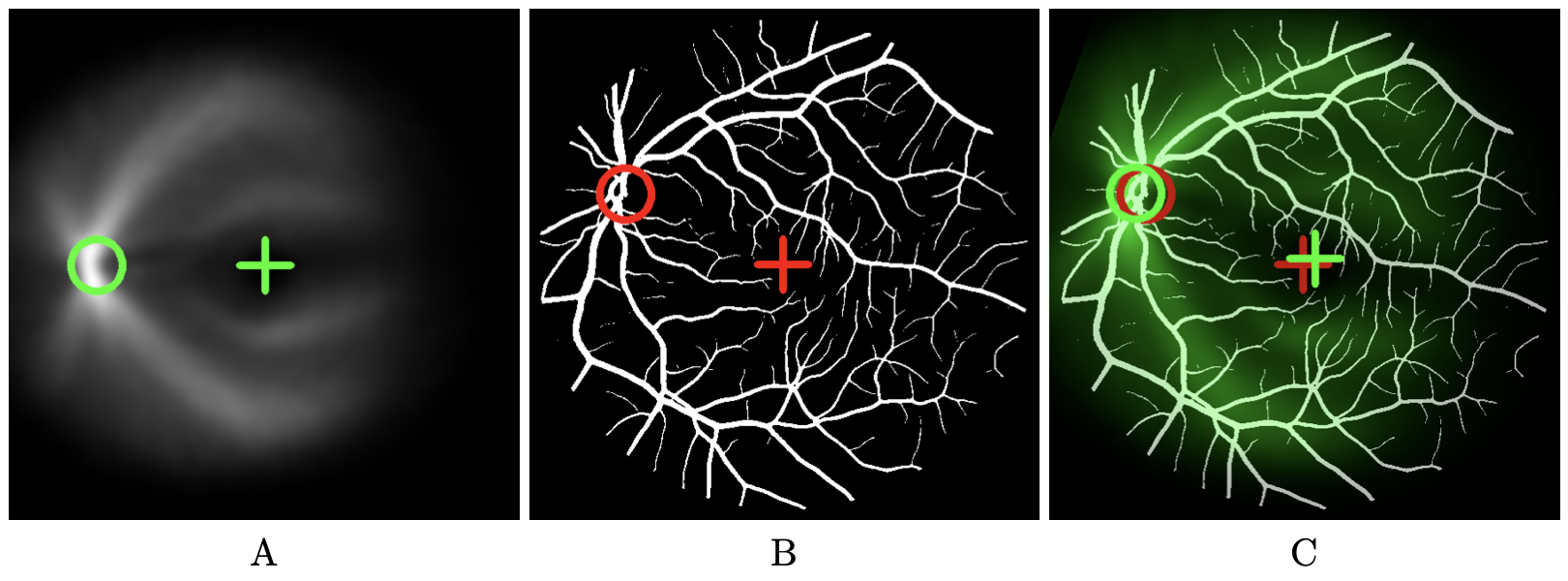

Description: In the presence of maculopathies, structural changes in the macula region make the fovea hard to identify directly, so it is usually located in pathological fundus images using normative anatomical measures (NAM). This simple method relies on two conditions: that images are acquired under standard testing conditions (primary head position and central fixation) and that the optic disk is entirely visible in the image. However, these two conditions are not always met in the case of maculopathies, in particular regarding fixation. In this work, we propose a Vessel-Based Fovea Localization (VBFL) approach that relies on the retina's vessel structure to make predictions. The spatial relationship between fovea location and vessel characteristics is learnt from healthy fundus images and then used to predict the fovea location in new images (Figure 8). We evaluate the VBFL method on three categories of fundus images: healthy images acquired with different head orientations and fixation locations, healthy images with simulated macular lesions, and pathological images from AMD. For healthy images taken with the head tilted to the side, the NAM estimation error increases significantly, by a factor of 4, while VBFL shows no significant increase, representing a 73% reduction in prediction error. With simulated lesions, VBFL performance decreases significantly as lesion size increases, but remains better than NAM until lesion size reaches 200 deg². For pathological images, the average prediction error was 2.8 degrees, with 64% of the images yielding an error of 2.5 degrees or less. VBFL was not robust for images showing darker regions and/or an incomplete representation of the optic disk. In conclusion, the vascular structure provides enough information to precisely locate the fovea in fundus images in a way that is robust to head tilt, eccentric fixation location, missing vessels, and actual macular lesions.
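The registration idea behind VBFL (align a vessel density map whose fovea position is known onto a new vessel map, then carry that fovea position over) can be sketched as follows. This sketch assumes a pure integer translation estimated by FFT-based cross-correlation with NumPy; the report does not specify the registration model, and real fundus registration would typically need at least rotation and scaling. Function names are hypothetical.

```python
import numpy as np

def estimate_shift(template, target):
    """Integer translation (dy, dx) such that target ~ np.roll(template, (dy, dx)),
    taken as the peak of the circular cross-correlation computed with FFTs."""
    t0 = template - template.mean()
    t1 = target - target.mean()
    corr = np.fft.ifft2(np.fft.fft2(t1) * np.conj(np.fft.fft2(t0))).real
    dy, dx = np.unravel_index(np.argmax(corr), corr.shape)
    h, w = corr.shape
    # Peaks past the half-size correspond to negative shifts (wrap-around).
    if dy > h // 2:
        dy -= h
    if dx > w // 2:
        dx -= w
    return int(dy), int(dx)

def locate_fovea(density_map, ref_fovea, vessel_map):
    """Register the density map onto a new vessel map and transfer the
    reference fovea position given as (row, col)."""
    dy, dx = estimate_shift(density_map, vessel_map)
    return ref_fovea[0] + dy, ref_fovea[1] + dx

def fovea_error(estimate, truth):
    """Euclidean distance between estimated and true fovea positions (pixels)."""
    return float(np.hypot(estimate[0] - truth[0], estimate[1] - truth[1]))
```

On a synthetic sparse "vessel" pattern shifted by (5, -7) pixels, `estimate_shift` recovers (5, -7), and a reference fovea at (32, 40) is mapped to (37, 33). Converting pixel errors to degrees would require the device's angular resolution.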

This work was published in Translational Vision Science & Technology 11.

This figure illustrates how our method to detect the fovea works. It contains three images. The left image shows the vessel density map. This map is obtained by realigning and then averaging a set of vessel maps for which the fovea and optic disk positions are known. They are realigned based on fixed positions of the fovea and optic disk, which are marked by a green cross and a green circle respectively. The middle image shows the vessel map in which the fovea position is unknown. In our example, the ground truth is available, allowing error estimates. The red cross indicates the true position of the fovea we are looking for. The right image shows the vessel density map in green-scale color superimposed on the vessel map after registration. The fovea position of the registered vessel density map, represented by a green cross, serves as an estimate of the fovea position. When ground truth is available (red cross), the error can be estimated as the distance between the green and red crosses.

Illustration of the registration-based fovea localization method considering only the spatial distribution of vessels. (A) Vessel density map obtained by realigning and averaging a set of vessel maps, with the reference fovea and optic disk positions marked by a cross and a circle respectively. (B) Vessel map in which the fovea has to be located. Here we assume that the ground truth is available, allowing error estimates; the red cross indicates the true position of the fovea. (C) Vessel density map in green superimposed on the vessel map after registration. The fovea position of the vessel density map (green cross) serves as an estimate of the fovea position. When ground truth is available (red cross), the error can be estimated (distance between green and red crosses).

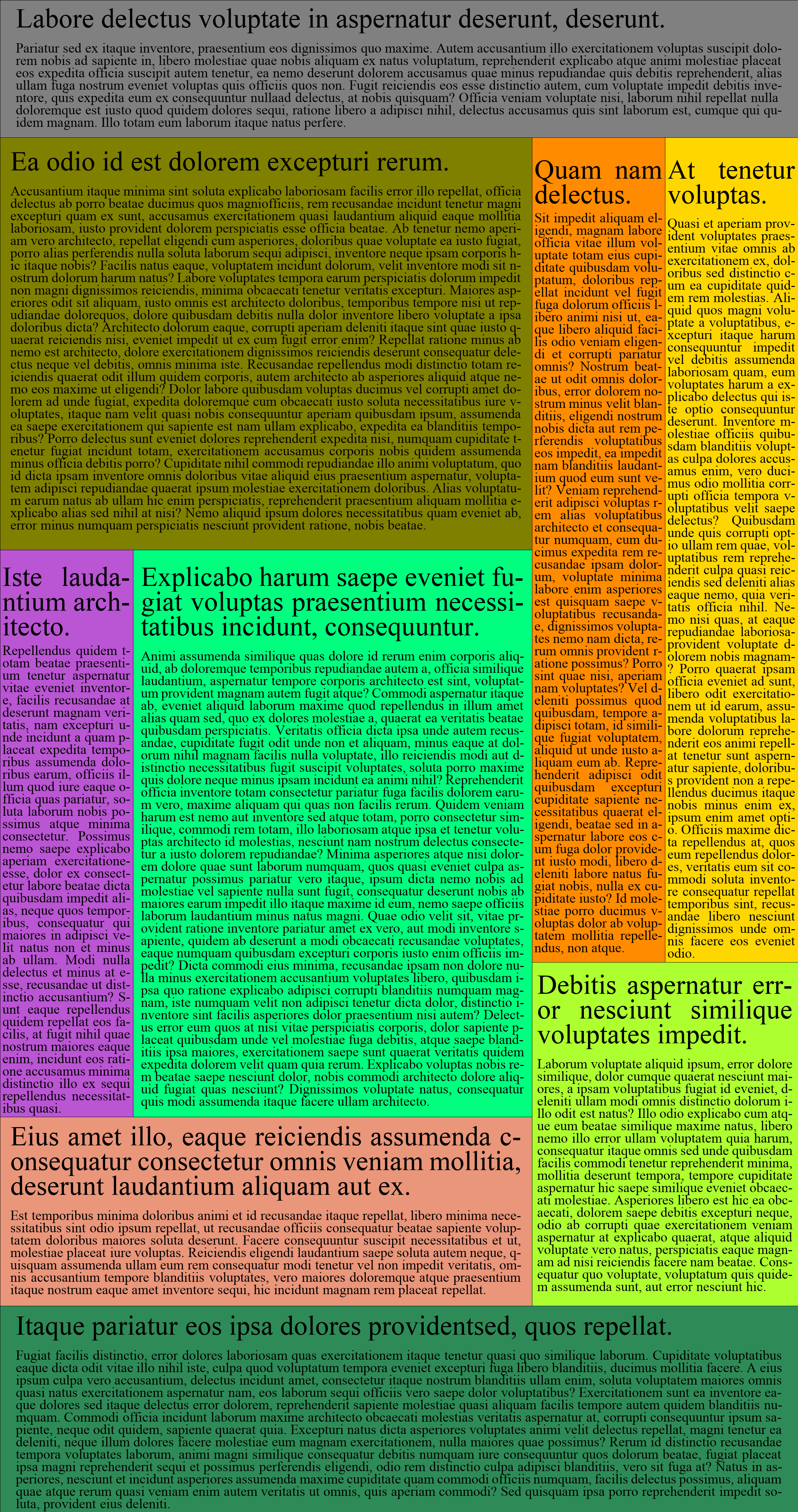

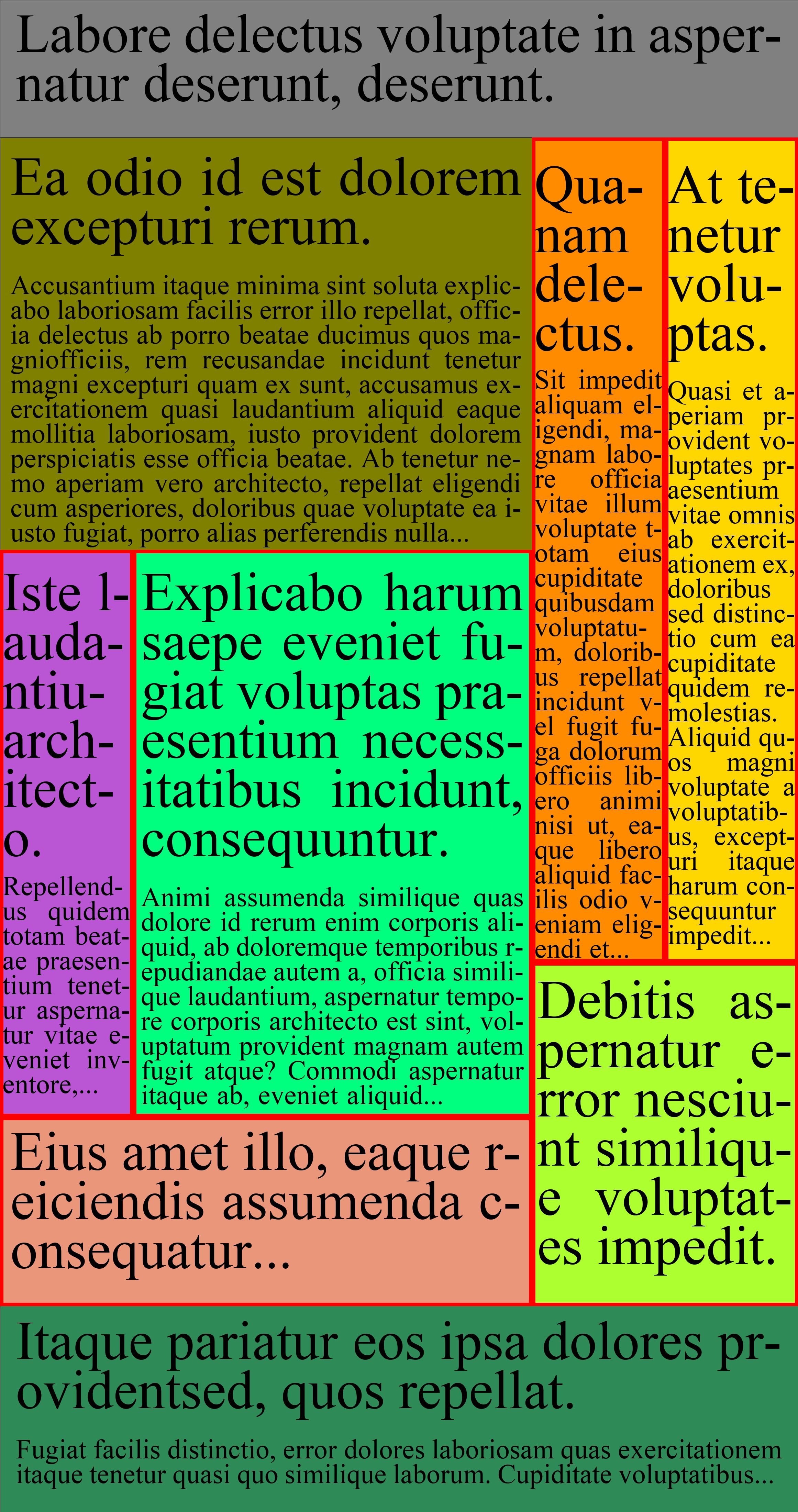

8.2.3 From Print to Online Newspapers on Small Displays: A Layouting Generation Approach Aimed at Preserving Entry Points

Participants: Sebastian Gallardo, Dorian Mazauric [UniCA, Inria, ABS Team], Pierre Kornprobst.

Context: The digital era is transforming the newspaper industry, offering new digital user experiences for all readers. However, to succeed, newspaper designers face a tricky design challenge: translating the design and aesthetics of the printed edition (which remains a reference for many readers) and the functionalities of the online edition (continuous updates, responsiveness) into the e-newspaper of the future, through a synthesis based on usability, reading comfort, and engagement. In this spirit, our project aims to develop a novel, inclusive digital news reading experience that will benefit all readers: you, me, and low-vision people, for whom newspapers are a way to remain part of society.

Description: In this work, we focus on how to read newspapers comfortably on a small display. Simply transposing print newspapers into digital media cannot be satisfactory because they were not designed for small displays. One key feature that is lost is the notion of entry points, which are essential for navigation. Focusing on headlines as entry points, we show how to produce alternative layouts for small displays that preserve entry-point quality (readability and usability) while optimizing aesthetics and style. Our approach computes new layouts using a genetic-inspired algorithm. We tested it on realistic newspaper pages. For the case discussed here, we obtained more than 2000 different layouts in which the font size was increased by a factor of two. We show that headline quality is globally much better with the new layouts than with the original layout (Figure 9). Future work will aim to generalize this promising approach, accounting for the complexity of real newspapers, with user experience quality as the primary goal.
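A minimal Python sketch of a genetic-inspired relayout loop, under strong simplifying assumptions: a single row of articles sharing a fixed number of grid columns, headline length measured in characters, a fixed number of characters per grid column once the font is doubled, and a fitness that penalizes unwanted shapes (headlines on more than three lines) plus the distance from the original widths. It uses only mutation and truncation selection (no crossover); the page model, weights and names are hypothetical and not the authors' implementation.

```python
import math
import random

def headline_lines(n_chars, width_cols, chars_per_col):
    """Lines needed by a headline of n_chars set in width_cols grid columns."""
    return math.ceil(n_chars / (width_cols * chars_per_col))

def fitness(widths, headlines, original, chars_per_col, max_lines=3):
    """Lower is better: a heavy penalty per unwanted shape (headline on more than
    max_lines lines) plus the distance to the original layout (style)."""
    unwanted = sum(headline_lines(n, w, chars_per_col) > max_lines
                   for n, w in zip(headlines, widths))
    drift = sum(abs(w - o) for w, o in zip(widths, original))
    return 10 * unwanted + drift

def mutate(widths, rng):
    """Move one grid column from one article to another (total width is kept)."""
    w = list(widths)
    i, j = rng.sample(range(len(w)), 2)
    if w[i] > 1:
        w[i] -= 1
        w[j] += 1
    return tuple(w)

def evolve(headlines, original, chars_per_col, generations=200, pop_size=30, seed=0):
    """Mutation plus truncation selection over distinct width vectors."""
    rng = random.Random(seed)

    def score(w):
        # Tie-break on the widths themselves so the run is deterministic.
        return (fitness(w, headlines, original, chars_per_col), w)

    pop = [tuple(original)]
    for _ in range(generations):
        children = [mutate(rng.choice(pop), rng) for _ in range(pop_size)]
        # Keeping distinct individuals only preserves diversity.
        pop = sorted(set(pop) | set(children), key=score)[:pop_size]
    return pop[0]
```

For instance, with headlines of 60, 20 and 20 characters, original widths (2, 5, 5) and 6 characters per column, the first headline initially needs 5 lines; the search returns a layout in which it gets at least 4 columns and no headline exceeds three lines. Removing duplicates before selection matters here: otherwise copies of the original layout fill the population and the worse intermediate steps are never kept.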

More details are available in 22.

Figure 9 panels: (a), (b), (c) UW=6, (d) UW=1.

The figure has four panels. The first panel represents a newspaper page composed of several articles, each with a headline and a body text. The goal is to see how to magnify this page while preserving reading comfort and newspaper design. The second panel illustrates the usual solution, which consists in simply magnifying everything; this results in a local/global navigation problem since only a portion of the page remains visible. The third panel shows an alternative solution where only the font size is increased while keeping the original design. The advantage of this solution is that all the articles remain visible, so that the reader can more easily choose the one to read. However, since the geometry of the page is kept the same, increasing the font size may cause headlines to flow over many lines, making them hard to read and aesthetically poor. The fourth panel shows the result of our approach, which increases the font size as in the previous case but also explores different layouts so that headlines do not flow over too many lines. By doing so, we provide a new self-contained layout that is easier to understand on small displays.

From print to online newspapers on small displays: (a) Print newspaper page where each article has been colored to highlight the structure. On small displays, headlines become too small to be readable and usable for navigation, so magnification is needed. (b) Pinch-zoom result with a magnification factor of two, illustrating the common local/global navigation difficulty encountered when reading newspapers via digital kiosk applications. (c) Increasing the font by a factor of two while keeping the original layout: articles with unwanted shapes (denoted by UW, here when headlines flow over more than three lines) are boxed in red. (d) Best result of our approach, showing how the page in (a) has been transformed to preserve headline quality.

8.3 Visual media analysis and creation

8.3.1 Large scale study of the impact of low vision in virtual street crossings

Participants: Florent Robert, Hui-Yin Wu, Lucile Sassatelli [Université Côte d'Azur, CNRS, I3S; Institut Universitaire de France], Stephen Ramanoël [Université Côte d'Azur, LAMHESS; Sorbonne Université, INSERM, CNRS, Institut de la Vision], Auriane Gros [Département d’Orthophonie, UFR Médecine de Nice; Université Côte d'Azur, CoBTeK; Service Clinique Gériatrique de Soins Ambulatoires, Centre Hospitalier Universitaire de Nice, Centre Mémoire Ressources et Recherche], Marco Winckler [Université Côte d'Azur, CNRS, I3S, Inria].

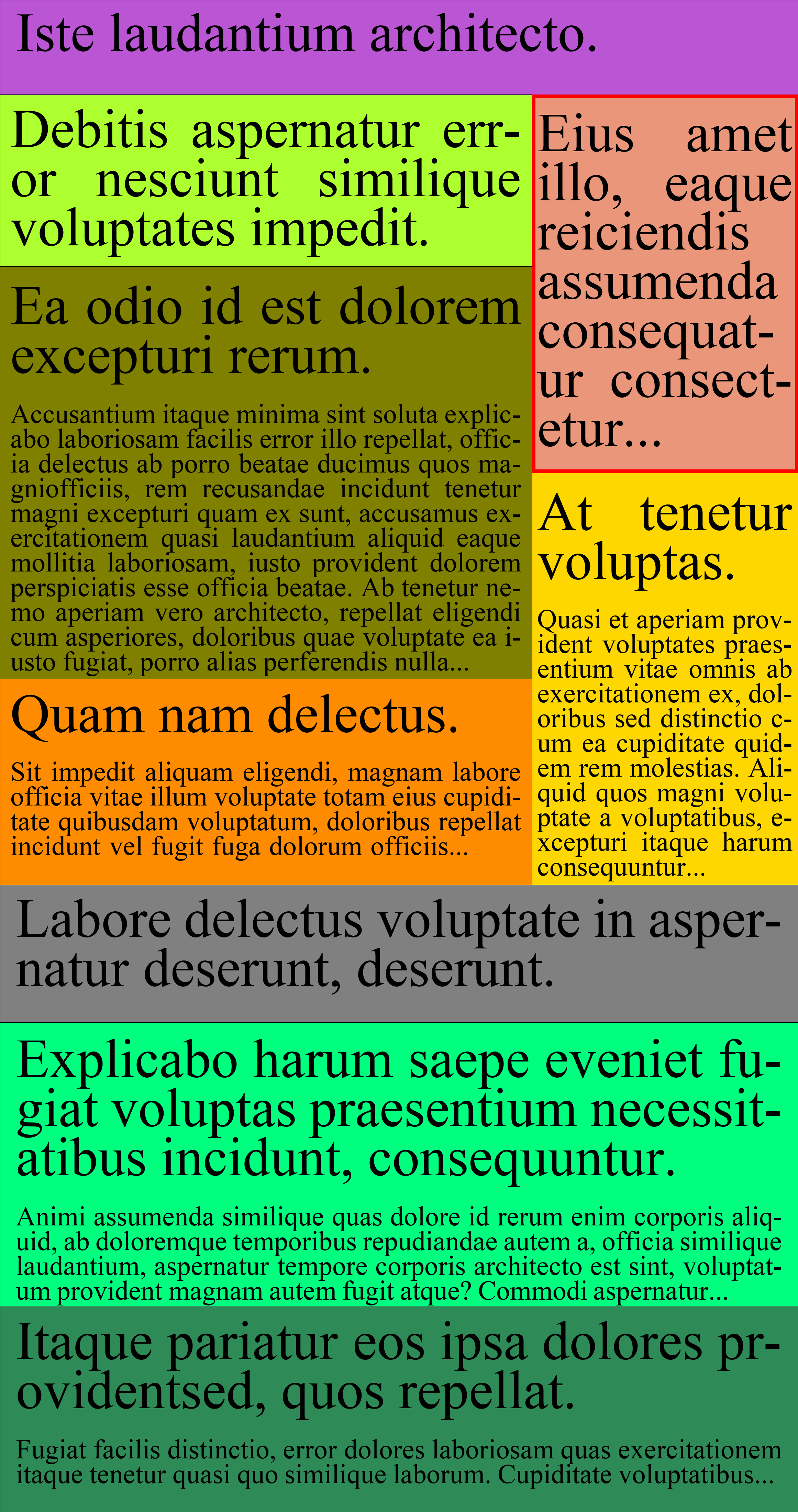

Context: In line with the objectives of ANR CREATTIVE3D, we designed and carried out a large scale study on road crossings using virtual reality technologies. Our goal was to investigate user behavior and the impact of simulated low-vision conditions in life-like contexts which could then provide much-needed data for (1) the modeling of perception and user performance in contextually rich environments, and (2) the design of personalized training scenarios and feedback.

Description: Virtual Reality (VR) technology enables “embodied interactions” in realistic environments where users can freely move and interact, eliciting deep physical and emotional states. However, a comprehensive understanding of the embodied user experience is currently limited by the extent to which one can make relevant observations and by the accuracy with which observations can be interpreted. Paul Dourish proposed a way forward through the characterisation of embodied interactions in three senses: ontology, intersubjectivity, and intentionality. In a joint effort between computer scientists and neuroscientists, we built a framework to design studies that investigate multimodal embodied experiences in VR, and applied it to study the impact of simulated low vision on user navigation. Our methodology involves designing 3D scenarios annotated with an ontology, modeling intersubjective tasks, and correlating multimodal metrics such as gaze and physiology to derive intentions. We show how this framework enables a more fine-grained understanding of embodied interactions in behavioural research.

Using this framework, we designed a study to analyze user experience in VR, with different types of tasks (observation, locomotion, seek and retrieval) in two different movement conditions (walking physically, walking with a joystick) and two different vision conditions (normal vision, simulated low-vision), for a total of four conditions. An example scenario is shown in Figure 10.

3D labelled scene of one scenario with three tasks. Image 1 shows the key on the table and a garbage bag on the ground in a room. Image 2 shows the road crossing with a trashcan on the other side. Image 3 shows the perspective from the other side of the road with a traffic button.

Participant point of view of the scenario #4 from the study featuring multiple interactions (picking up the trash bag and pushing the traffic button) and crossing a single lane street with no cars.

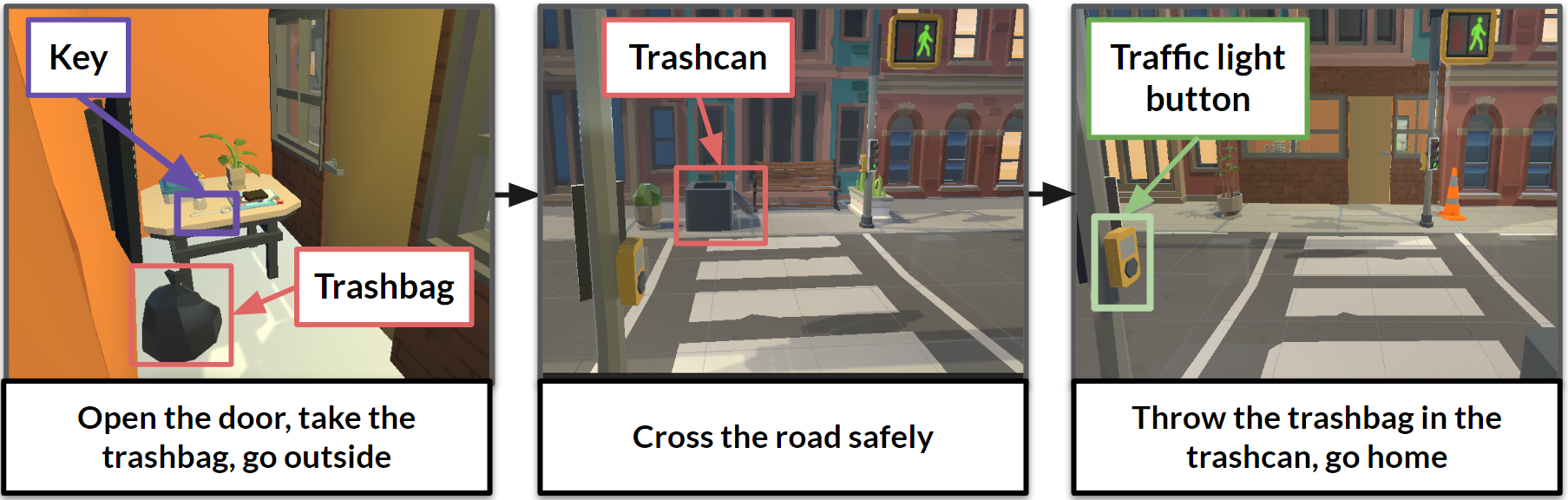

We recruited 40 participants (20 women and 20 men) through 5 university and laboratory mailing lists. The study was reviewed and approved by the Université Côte d'Azur's ethics review board (CER No 2022-057). Participants had normal or corrected-to-normal binocular vision and provided informed consent to participate in the study. The result is the largest dataset (40 subjects, around 2.6 million frames) on human motion in fully annotated contexts. To our knowledge, it is the only one collected in dynamic and interactive virtual environments with rich multivariate indices of behavior, including gaze, physiology, motion capture, detailed user logs, and questionnaires. This enables fine-grained, task-by-task analyses of multivariate user behavior. An example analysis of the electrodermal activity (EDA) of one user in one task is shown in Figure 11, where we can observe synchronized responses to events such as crossing the street, a car honking, or throwing a trash bag into the trashcan.

Graphs of the EDA evolution segmented by the different tasks: get box, interact with traffic light button, go to opposite sidewalk, place box in trashcan, and go back to the start sidewalk. The top graph shows the raw EDA, whereas the middle and bottom graphs show the phasic and tonic components respectively.

Example evolution of the tonic component of the EDA for one user under the real walking and normal vision condition, with task boundaries indicated by the colored lines. We observe a small jump around the moment a car honks at the user for jaywalking.
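The tonic/phasic split shown in Figure 11 can be approximated, for illustration, by a simple low-pass filter: the tonic component is a moving average over a few seconds, and the phasic component is the residual. The report does not say which decomposition was used and dedicated EDA decomposition methods exist; the NumPy sketch below, its 4 s window and the function name are assumptions.

```python
import numpy as np

def split_eda(eda, fs, window_s=4.0):
    """Hypothetical tonic/phasic split of an EDA signal sampled at fs Hz.

    tonic  = moving average over window_s seconds (edge-padded, same length)
    phasic = eda - tonic (fast, event-related fluctuations)
    """
    eda = np.asarray(eda, dtype=float)
    n = max(1, int(round(window_s * fs)))
    kernel = np.ones(n) / n
    # Pad with edge values so the filtered signal keeps the input length.
    padded = np.pad(eda, (n // 2, n - 1 - n // 2), mode="edge")
    tonic = np.convolve(padded, kernel, mode="valid")
    phasic = eda - tonic
    return tonic, phasic
```

On a synthetic signal made of a slow linear drift plus a brief skin-conductance-like bump at t = 60 s, the tonic output follows the drift and the phasic output peaks at the bump, which is the kind of event-locked response visible in the figure.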

For this work, F. Robert and H.-Y. Wu received the best paper award at the ACM Interactive Media Experiences conference (IMX) 7. The developed platform is described in the Proceedings of the ACM on Human-Computer Interaction (PACMHCI) and was presented at the ACM Symposium on Engineering Interactive Computing Systems (EICS) 10. The dataset paper is currently under review, and the dataset is available on Zenodo. The technical platform is now in version 2.0 and is released under a CeCILL licence (IDDN.FR.001.160035.000.S.P.2022.000.41200).

Further news and developments on the project can be followed on the official website.

8.3.2 Analyzing Gaze Behaviors in Interactive VR Scenes

Participants: Kateryna Pirkovets, Clément Merveille, Florent Robert, Vivien Gagliano, Hui-Yin Wu, Stephen Ramanoël [Université Côte d'Azur, LAMHESS; Sorbonne Université, INSERM, CNRS, Institut de la Vision], Auriane Gros [Département d’Orthophonie, UFR Médecine de Nice; Université Côte d'Azur, CoBTeK; Service Clinique Gériatrique de Soins Ambulatoires, Centre Hospitalier Universitaire de Nice, Centre Mémoire Ressources et Recherche].

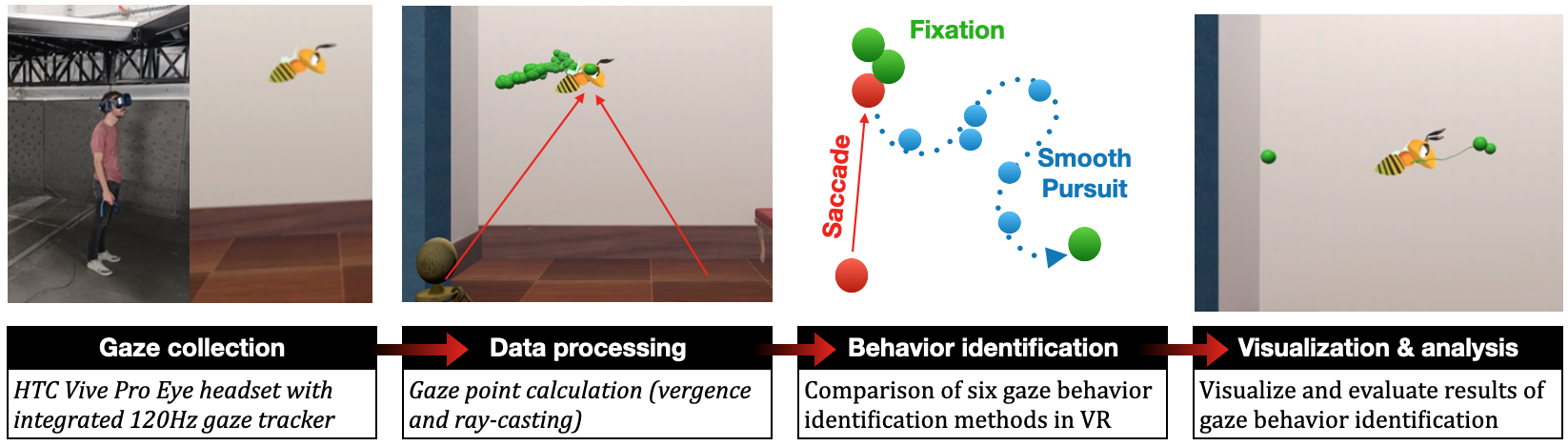

Context: Although the emergence of affordable head-mounted displays (HMDs) has greatly facilitated studies of visual attention in 6 degrees of freedom (6DoF) virtual environments, such studies remain very complicated to set up. In the context of ANR CREATTIVE3D, this work focuses on the role and choice of gaze behavior identification (GBI) methods in an eye-tracking workflow, and on their impact on the analysis of gaze data in large, interactive VR scenes.

Description: Gaze is an excellent metric for understanding human attention. However, research on gaze behavior identification (GBI), e.g., of saccades, fixations, and smooth pursuits, for large interactive 3D scenes with virtual reality headsets is still in its early stages. There is currently no consensus on how to select GBI methods or on how this choice can impact research on human attention. This work investigates the influence of GBI approaches on the analysis of human gaze data in mobile virtual reality (VR) scenarios by re-implementing and adapting six state-of-the-art identification algorithms into a unified workflow for the capture and analysis of gaze behaviors, as shown in Figure 12. To highlight the potential of the system, we designed a 3D scene with various static, animated, and interactive 2D and 3D stimuli, in which we collected and analyzed eye-tracking data from 22 participants during active exploration. Results reveal that both the choice of algorithm and the 2D stimuli had a strong impact on fixation durations. Moreover, for every stimulus, most fixations last about 250 ms. Average fixation durations are highest for the moving 3D stimulus and lowest for the static paintings. Finally, our study reveals that the IS5T algorithm gave the most consistent results across stimuli and participants.
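To illustrate what a gaze behavior identification algorithm does, here is a Python sketch of the classic velocity-threshold identification (I-VT): each interval between two gaze samples is labeled a fixation if the angular velocity of the gaze direction is below a threshold, and a saccade otherwise; consecutive fixation intervals are then merged to obtain fixation durations. The report does not list all six algorithms, so we do not claim I-VT is one of them. The 30°/s threshold is an assumed value, and this version ignores smooth pursuits and head motion, both of which matter in 6DoF VR.

```python
import numpy as np

def angular_velocity(dirs, t):
    """Angular speed (deg/s) between consecutive gaze direction vectors."""
    d = dirs / np.linalg.norm(dirs, axis=1, keepdims=True)
    cos = np.clip(np.sum(d[1:] * d[:-1], axis=1), -1.0, 1.0)
    return np.degrees(np.arccos(cos)) / np.diff(t)

def ivt(dirs, t, threshold=30.0):
    """Label each inter-sample interval as 'fixation' or 'saccade' (I-VT)."""
    v = angular_velocity(dirs, t)
    return np.where(v < threshold, "fixation", "saccade")

def fixation_durations(labels, t):
    """Merge consecutive fixation intervals; interval i spans t[i]..t[i+1]."""
    durations, start = [], None
    for i, lab in enumerate(labels):
        if lab == "fixation" and start is None:
            start = i
        elif lab != "fixation" and start is not None:
            durations.append(t[i] - t[start])
            start = None
    if start is not None:
        durations.append(t[len(labels)] - t[start])
    return durations
```

For a synthetic 100 Hz recording with 25 samples at 0° yaw, a 20° jump spread over 5 samples (400°/s) and 25 samples at 20°, the sketch finds 5 saccade intervals and two fixations of 0.24 s and 0.25 s.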

Using an animated bee as an example, the four steps are, from first to last: (1) a person with the HTC Vive ProEye headset viewing the bee, (2) PoRs and two arrows pointing from the lower corners of the image to the bee in the center, indicating the vergence of two gaze vectors, (3) points indicating different gaze behaviors (fixations, saccades, and smooth pursuits), and (4) visualization of fixations and smooth pursuits on the bee.

Our workflow consists of four steps: collecting gaze data in an immersive VR scene; processing the data to calculate gaze points (which we refer to as Points of Regard, PoR) from gaze vectors; classifying gaze points into various gaze behaviors, for which we implement six identification methods from the literature; and finally visualizing results and analyzing metrics for the various gaze behavior identification methods.
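The second step, computing a Point of Regard from the vergence of the two eyes' gaze vectors, can be sketched as the midpoint of the shortest segment joining the left and right gaze rays. This is a standard geometric construction chosen for illustration; the report does not state whether the workflow uses it or instead intersects gaze rays with the scene geometry. Names and coordinates below are hypothetical.

```python
import numpy as np

def point_of_regard(o_left, d_left, o_right, d_right, eps=1e-9):
    """Hypothetical vergence-based PoR: midpoint of the closest points of the
    two gaze rays o + s * d. Returns None when the rays are (nearly) parallel."""
    o1, d1 = np.asarray(o_left, float), np.asarray(d_left, float)
    o2, d2 = np.asarray(o_right, float), np.asarray(d_right, float)
    w0 = o1 - o2
    a, b, c = d1 @ d1, d1 @ d2, d2 @ d2
    d, e = d1 @ w0, d2 @ w0
    denom = a * c - b * b
    if abs(denom) < eps:
        return None  # parallel gaze: vergence gives no depth information
    # Parameters minimizing |(o1 + s*d1) - (o2 + t*d2)|.
    s = (b * e - c * d) / denom
    t = (a * e - b * d) / denom
    p1 = o1 + s * d1
    p2 = o2 + t * d2
    return (p1 + p2) / 2
```

When both eyes (here 6.4 cm apart) look exactly at the same 3D point, the function returns that point; with noisy eye-tracker data the two rays rarely intersect, which is why the midpoint of the shortest segment is used.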

The work was presented as a poster at the 2023 Annual Meeting of the NeuroMod Institute 29. The technical platform for studies of VR behavior includes the implementation of the gaze tracking and analysis framework, and is available under an open CeCILL licence (IDDN.FR.001.160035.000.S.P.2022.000.41200).

8.3.3 Through the eye of a low-vision patient: raising awareness toward AMD and its impact on social interactions using virtual reality

Participants: Johanna Delachambre, Monica Di Meo [CHU - Hôpital Pasteur, Nice], Frédérique Lagniez [CHU - Hôpital Pasteur, Nice], Christine Morfin-Bourlat [CHU - Hôpital Pasteur, Nice], Stéphanie Baillif [CHU - Hôpital Pasteur, Nice], Hui-Yin Wu, Pierre Kornprobst.

Context: Virtual reality (VR) has great potential for raising awareness, thanks to its ability to immerse users in situations different from their everyday experiences, such as various disabilities.

Description: In this work, we present a VR application aimed at raising awareness of low vision, more specifically of Age-related Macular Degeneration (AMD), the leading cause of visual impairment in industrialized countries, which leads to loss of central vision. Existing work only describes low-vision simulations that reproduce the perceptual effects of the disease, with little or no inclusion of the real-life situations faced by patients, particularly those involving social interaction. In our application, participants embody an AMD patient who must interact with several virtual agents in realistic situations. These scenarios were designed and developed on the basis of interviews with AMD patients and analyses of the literature on social interactions involving AMD. We proposed our application to normally sighted participants equipped with a gaze-contingent central vision loss simulator. We collected and analyzed physiological, behavioral, and subjective data from each participant to assess how effectively our experiment raises awareness. We believe this new approach can raise awareness among the general public and help vision professionals better understand their patients' needs.
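A gaze-contingent central vision loss simulator re-renders each frame with an artificial scotoma centered on the gaze point currently reported by the eye tracker. A minimal NumPy sketch of this per-frame masking step follows; the disc shape, linear edge ramp, grey fill and parameter names are hypothetical, and a real headset implementation would typically run as a shader at the display frame rate.

```python
import numpy as np

def apply_central_scotoma(frame, gaze_xy, radius_px, edge_px=10.0, fill=0.5):
    """Hypothetical central scotoma: pixels within radius_px of the gaze point
    (x, y) are replaced by a uniform grey, with a linear ramp of width edge_px.

    frame: float image in [0, 1], shape (H, W) or (H, W, C).
    """
    h, w = frame.shape[:2]
    yy, xx = np.mgrid[0:h, 0:w]
    dist = np.hypot(xx - gaze_xy[0], yy - gaze_xy[1])
    # alpha = 1 inside the scotoma, 0 outside, linear in between.
    alpha = np.clip((radius_px + edge_px - dist) / edge_px, 0.0, 1.0)
    if frame.ndim == 3:
        alpha = alpha[..., None]
    return (1.0 - alpha) * frame + alpha * fill
```

Called once per frame with the latest gaze sample, the masked region follows the eyes, so participants cannot look around the scotoma, which is what makes the simulation gaze-contingent.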

This work was presented at a workshop organized by Fedrha 17.

9 Bilateral contracts and grants with industry

9.1 Bilateral contracts with industry

Participants: Pierre Kornprobst, Sebastián Gallardo, Dorian Mazauric [UniCA, Inria, ABS Team].

- Cifre contract with the company Demain Un Autre Jour (directed by Bruno Génuit), in the scope of the PhD of Sebastián Gallardo (co-supervised by Pierre Kornprobst and Dorian Mazauric; starting date: June 5, 2023)

10 Partnerships and cooperations

10.1 International initiatives

10.1.1 Participation in other International Programs

ESTHETICS

Participants: Bruno Cessac, Rodrigo Cofré, Simone Ebert, Francisco Miqueles, Adrian Palacios, Jorge Portal-Diaz, Erwan Petit.

- Title: Exploring the functional Structure of THe rETIna with Closed loop Stimulation. A physiological and computational approach.

- Partner Institution(s): Facultad de Ciencias, Universidad de Valparaíso, Chile; Universidad de Valparaíso, Chile; Institut des Neurosciences Paris-Saclay - NeuroPSI CNRS

- Date/Duration: 2 years

- Description:

This project aims to better understand the retina's response to complex visual stimuli and unravel the role played by the lateral interneurons network (amacrine cells) in this response. For this, we will adopt an experimental methodology using real-time control and feedback loop to adapt, in real-time, visual stimulations to recorded retinal cell responses. The experiments will be done in Valparaiso (Chile). The Biovision team will develop the control software and extrapolate results at Inria Sophia-Antipolis based on its recent theoretical advances in retina modelling, in collaboration with R. Cofré (U. Valparaiso and NeuroPSI CNRS). This is, therefore, a transdisciplinary and international project at the interface between biology, computer sciences and mathematical neurosciences. Beyond a better understanding of its network structure's role in shaping the retina's response to complex spatiotemporal stimuli, this project could potentially impact methods for diagnosing neurodegenerative diseases like Alzheimer's.
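The closed-loop principle (adapting the next stimulus to the response just recorded) can be illustrated with a toy proportional controller that tunes stimulus contrast until a simulated cell reaches a target firing rate. The Naka-Rushton cell model, the gain, the target and the function names are hypothetical stand-ins for real recordings and for the control software the project will develop.

```python
def naka_rushton(contrast, r_max=50.0, c50=0.3, n=2.0):
    """Hypothetical model cell: firing rate (spikes/s) as a function of contrast."""
    return r_max * contrast**n / (contrast**n + c50**n)

def closed_loop(target_rate, cell=naka_rushton, gain=0.002, n_iter=200, c0=0.05):
    """Proportional feedback: after each 'recording', move the stimulus contrast
    in proportion to the error between the target and the measured rate."""
    c = c0
    history = []
    for _ in range(n_iter):
        rate = cell(c)                     # stand-in for the recorded response
        history.append((c, rate))
        c += gain * (target_rate - rate)   # feedback update
        c = min(max(c, 0.0), 1.0)          # keep contrast in [0, 1]
    return c, history
```

With a target of 25 spikes/s, which this model cell reaches at half its maximum rate, the loop converges to a contrast of about 0.3. The gain must stay small relative to the slope of the response curve, otherwise the loop oscillates.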

10.2 National initiatives

Participants: Bruno Cessac, Pierre Kornprobst, Hui-Yin Wu.

10.2.1 ANR

ShootingStar

- Title: Processing of naturalistic motion in early vision

- Programme: ANR

- Duration: April 2021 - March 2025

- Coordinator: Mark WEXLER (CNRS-INCC)

- Partners: Institut de Neurosciences de la Timone (CNRS and Aix-Marseille Université, France); Institut de la Vision (IdV), Paris, France; Unité de Neurosciences Information et Complexité, Gif sur Yvette, France; Laboratoire Psychologie de la Perception - UMR 8242, Paris

- Inria contact: Bruno Cessac

- Summary:

The natural visual environments in which we have evolved have shaped and constrained the neural mechanisms of vision. Rapid progress has been made in recent years in understanding how the retina, thalamus, and visual cortex are specifically adapted to processing natural scenes. Over the past several years it has, in particular, become clear that cortical and retinal responses to dynamic visual stimuli are themselves dynamic. For example, the response in the primary visual cortex to a sudden onset is not a static activation, but rather a propagating wave. Probably the most common motions in the retina are image shifts due to our own eye movements: in free viewing in humans, ocular saccades occur about three times every second, shifting the retinal image at speeds of 100-500 degrees of visual angle per second. How these very fast shifts are suppressed, leading to clear, accurate, and stable representations of the visual scene, is a fundamental unsolved problem in visual neuroscience known as saccadic suppression. The new Agence Nationale de la Recherche (ANR) project “ShootingStar” aims at studying the unexplored neuroscience and psychophysics of the visual perception of fast (over 100 deg/s) motion, and incorporating these results into models of the early visual system.

DEVISE

- Title: From novel rehabilitation protocols to visual aid systems for low vision people through Virtual Reality

- Programme: ANR

- Duration: 2021–2025

- Coordinator: Eric Castet (Laboratoire de Psychologie Cognitive, Marseille)

- Partners: CNRS/Aix Marseille University – AMU, Cognitive Psychology Laboratory; AMU, Mediterranean Virtual Reality Center

- Inria contact: Pierre Kornprobst

- Summary:

The ANR DEVISE (Developing Eccentric Viewing in Immersive Simulated Environments) project aims to develop new functional rehabilitation techniques for visually impaired people in a Virtual Reality headset. A strong point of these techniques will be the personalization of their parameters according to each patient’s pathology; they will eventually be based on serious games whose practice will increase the sensory-motor capacities that are deficient in these patients.

CREATTIVE3D

- Title: Creating attention driven 3D contexts for low vision

- Programme: ANR

- Duration: 2022–2026

- Coordinator: Hui-Yin Wu

- Partners: Université Côte d'Azur I3S, LAMHESS, CoBTEK laboratories; CNRS/Aix Marseille University – AMU, Cognitive Psychology Laboratory

- Summary:

CREATTIVE3D deploys virtual reality (VR) headsets to study navigation behaviors in complex environments under both normal and simulated low-vision conditions. We aim to model multi-modal user attention and behavior, and use this understanding for the design of assisted creativity tools and protocols for the creation of personalized 3D-VR content for low vision training and rehabilitation.

TRACTIVE

- Title: Towards a computational multimodal analysis of film discursive aesthetics

- Programme: ANR

- Duration: 2022–2026

- Coordinator: Lucile Sassatelli

- Partners: Université Côte d'Azur CNRS I3S; Université Côte d'Azur, CNRS BCL; Sorbonne Université, GRIPIC; Université Toulouse 3, CNRS IRIT; Université Sorbonne Paris Nord, LabSIC

- Inria contact: Hui-Yin Wu

- Summary:

TRACTIVE's objective is to characterize and quantify gender representation and the objectification of women in films and visual media by designing an AI-driven multimodal (visual and textual) discourse analysis. The project aims to establish a novel framework for the analysis of gender representation in visual media. We integrate AI, linguistics, and media studies in an iterative approach that both pinpoints the multimodal discourse patterns of gender in film and quantitatively reveals their prevalence. We devise a new interpretative framework for media and gender studies incorporating modern AI capabilities. Our models, published through an online tool, will engage the general public through participative science to raise awareness of gender-in-media issues from a multidisciplinary perspective.

11 Dissemination

Participants: Bruno Cessac, Johanna Delachambre, Simone Ebert, Jérôme Emonet, Pierre Kornprobst, Erwan Petit, Florent Robert, Hui-Yin Wu.

11.1 Promoting scientific activities

11.1.1 Scientific events: organisation

General chair, scientific chair

- B. Cessac co-chaired the Neuromod annual Meeting, 2023

- H.-Y. Wu co-chaired the ACM IMX Workshop on intelligent cinematography and editing, 2023

11.1.2 Scientific events: selection

Reviewer

- P. Kornprobst was a reviewer for The 32nd International Joint Conference on Artificial Intelligence (IJCAI-23)

- H.-Y. Wu was a reviewer for the 19th IFIP TC13 International Conference (INTERACT 2023), the ACM SIGCHI Symposium on Engineering Interactive Computing Systems (EICS), and the IEEE Conference on Virtual Reality and 3D User Interfaces (IEEE VR)

11.1.3 Journal

Reviewer - reviewing activities

- H.-Y. Wu was a reviewer for the International Journal of Human-Computer Interaction (Taylor and Francis), Multimedia Tools and Applications (Springer), and Computer Animation and Virtual Worlds (Wiley)

11.1.4 Invited talks

11.1.5 Research administration

- B. Cessac is a member of the Comité Scientifique de l'Institut Neuromod.

- B. Cessac is a member of the Bureau du Comité des Equipes Projets.

- B. Cessac is a member of the Comité de Suivi et Pilotage Spectrum.

- B. Cessac was a member of the Jury CRCN Inria 2023.

- P. Kornprobst has been an elected member of the Academic Council of UniCA (Conseil d'Administration) since Dec. 2019.

- H.-Y. Wu is a member of the Comité Suivi Doctoral, Centre Inria d'Université Côte d'Azur.

- H.-Y. Wu is a meeting secretary for the Comité des Equipes Projets, Centre Inria d'Université Côte d'Azur.

11.2 Teaching - Supervision - Juries

11.2.1 Teaching

- Licence 2: A. Bonlarron (58 hours, TD) Mécanismes internes des systèmes d'exploitation, Licence en informatique, UniCA, France.

- Master 1: B. Cessac (24 hours, cours) Introduction to Modelling in Neuroscience, master Mod4NeuCog, UniCA, France.

- Master 1: S. Ebert (15 hours, TD), Introduction to Modelling in Neuroscience, master Mod4NeuCog, UniCA, France.

- Master 1: E. Petit (15 hours, TD), Introduction to Modelling in Neuroscience, master Mod4NeuCog, UniCA, France.

- Licence 2: J. Emonet (22 hours), Introduction à l'informatique, Licence SV, UniCA, France.

- Licence 3: J. Emonet (16 hours), Programmation python et environnement linux, Licence BIM, UniCA, France.

- Licence 3: J. Emonet (20 hours), Biostatistiques, Licence SV, UniCA, France.

- Master 1: J. Delachambre (17.5 hours TD), Création de mondes virtuels, Master en Informatique (SI4), Polytech Nice Sophia, UniCA, France

- Master 2: J. Delachambre (3 hours TD), Techniques d'interaction et multimodalité, Master en Informatique (SI5) mineure IHM, Polytech Nice Sophia, UniCA, France

- Licence 3: F. Robert (39.5 hours TD), Analyse du besoin & Application Web, MIAGE, UniCA, France

- Master 1: H.-Y. Wu (8 hours course, 15 hours TD), Création de mondes virtuels, Master en Informatique (SI4), Polytech Nice Sophia, UniCA, France

- Master 1: H.-Y. Wu (12.5 hours TD), Introduction to Scientific Research, DS4H, UniCA / Master en Informatique (SI5), Polytech Nice Sophia, UniCA, France

- Master 2: H.-Y. Wu (6 hours course, 3 hours TD), Techniques d'interaction et multimodalité, Master en Informatique (SI5) mineure IHM, Polytech Nice Sophia, UniCA, France

- Master 2: H.-Y. Wu supervised M2 student final projects (TER), 7 hours, Master en Informatique (SI5), Polytech Nice Sophia, UniCA, France

11.2.2 Supervision

- B. Cessac supervised the PhD of Simone Ebert, "Dynamical Synapses in the retinal network", defended on December 13, 2023 [19].

- B. Cessac supervises the PhD of Jérôme Emonet, "A retino-cortical model for the study of suppressive waves in ocular saccades".

- B. Cessac supervises the PhD of Erwan Petit, "Modeling activity waves in the retina".

- P. Kornprobst co-supervises (with J.-C. Régin) the PhD of Alexandre Bonlarron, "Pushing the limits of reading performance screening with Artificial Intelligence: Towards large-scale evaluation protocols for the Visually Impaired".

- P. Kornprobst co-supervises (with D. Mazauric) the PhD of Sebastian Gallardo, "Making newspaper layouts dynamic: Study of a new combinatorial/geometric packing problems". CIFRE contract with the company Demain un Autre Jour (Toulouse).

- P. Kornprobst and H.-Y. Wu co-supervise the PhD of Johanna Delachambre, “Social interactions in low vision: A collaborative approach based on immersive technologies”.

- H.-Y. Wu co-supervises (with M. Winckler and L. Sassatelli) the PhD of Florent Robert, “Analyzing and Understanding Embodied Interactions in Extended Reality Systems”.

- H.-Y. Wu co-supervises (with L. Sassatelli) the PhD of Franz Franco-Gallo, “Modeling 6DoF Navigation and the Impact of Low Vision in Immersive VR Contexts”.

- H.-Y. Wu co-supervises (with M. Winckler and A. Menin) the PhD of Clément Quéré, “Apports des réalités étendues pour l'exploration visuelle de données spatio-temporelles”.

- H.-Y. Wu co-supervises (with L. Sassatelli and F. Precioso) the PhD of Julie Tores, “Deep learning to detect objectification in films and visual media”.

11.2.3 Juries

- B. Cessac was a member of the jury of Jules Bouté's thesis, titled "Hidden complexity in biologically inspired neural networks: emergent properties and dynamical behavior", September 2023.

- P. Kornprobst has been a member of the Comité de Suivi Individuel (CSI) of Abid Ali (Stars project-team) and Nicolas Volante (GraphDeco project-team).

- H.-Y. Wu was a member of the jury of Emilie Yu's thesis, titled "Designing tools for 3D content authoring based on 3D sketching", December 2023.

11.3 Popularization

11.3.1 Interventions

- H.-Y. Wu presented her work as a researcher to students during a Chiche intervention at the ABC International School in Nice, December 2023.

12 Scientific production

12.1 Major publications

- [1] Constraints First: A New MDD-based Model to Generate Sentences Under Constraints. Proceedings of the Thirty-Second International Joint Conference on Artificial Intelligence (IJCAI-23), Macao SAR, China, August 2023, pp. 1893-1901.

- [2] A New Vessel-Based Method to Estimate Automatically the Position of the Nonfunctional Fovea on Altered Retinography From Maculopathies. Translational Vision Science & Technology 12, July 2023.

- [3] Linear response for spiking neuronal networks with unbounded memory. Entropy 23(2), February 2021, 155. (The institution funded the publication fees so that this article is open access.)

- [4] Temporal pattern recognition in retinal ganglion cells is mediated by dynamical inhibitory synapses. January 2023.

- [5] Microsaccades enable efficient synchrony-based coding in the retina: a simulation study. Scientific Reports 6, 24086, April 2016.

- [6] A biophysical model explains the spontaneous bursting behavior in the developing retina. Scientific Reports 9(1), December 2019, 1-23.

- [7] An Integrated Framework for Understanding Multimodal Embodied Experiences in Interactive Virtual Reality. 2023 IMX - ACM International Conference on Interactive Media Experiences, Nantes, France, June 2023, https://dl.acm.org/conference/imx. Best paper.

- [8] On the potential role of lateral connectivity in retinal anticipation. Journal of Mathematical Neuroscience 11, January 2021.

- [9] Towards Accessible News Reading Design in Virtual Reality for Low Vision. Multimedia Tools and Applications, May 2021.

- [10] Designing Guided User Tasks in VR Embodied Experiences. Proceedings of the ACM on Human-Computer Interaction 6, 158, 2022, 1-24.

12.2 Publications of the year

International journals

Invited conferences

International peer-reviewed conferences

Conferences without proceedings

Doctoral dissertations and habilitation theses

Reports & preprints

Other scientific publications

12.3 Other

Scientific popularization

12.4 Cited publications

- [31] The Retina and its Disorders. Elsevier Science, 2011.

- [32] An insight into assistive technology for the visually impaired and blind people: state-of-the-art and future trends. J Multimodal User Interfaces 11, 2017, 149-172.

- [33] Characterizing functional complaints in patients seeking outpatient low-vision services in the United States. Ophthalmology 121(8), 2014, 1655-62.

- [34] The non linear dynamics of retinal waves. Physica D: Nonlinear Phenomena, November 2022.

- [35] Cortical Reorganization after Long-Term Adaptation to Retinal Lesions in Humans. J Neurosci. 33(46), 2013, 18080-18086.

- [36] Vision Rehabilitation Preferred Practice Pattern. Ophthalmology 125(1), 2018, 228-278.

- [37] On the link between emotion, attention and content in virtual immersive environments. ICIP 2022 - IEEE International Conference on Image Processing, Bordeaux, France, October 2022.

- [38] PEM360: A dataset of 360°. MMSys 2022 - 13th ACM Multimedia Systems Conference, Athlone, Ireland, June 2022.

- [39] A novel approach to the functional classification of retinal ganglion cells. Open Biology 12, 210367, March 2022.

- [40] How specific classes of retinal cells contribute to vision: a computational model. PhD thesis, Université Côte d'Azur; Newcastle University (Newcastle upon Tyne, United Kingdom), March 2022.

- [41] Low Vision and Plasticity: Implications for Rehabilitation. Annu Rev Vis Sci. 2, 2016, 321-343.

- [42] A new reading-acuity chart for normal and low vision. Ophthalmic and Visual Optics/Noninvasive Assessment of the Visual System Technical Digest (Optical Society of America, Washington, DC, 1993) 3, 1993, 232-235.

- [43] Self-management programs for adults with low vision: needs and challenges. Patient Educ Couns 69(1-3), December 2007, 39-46.

- [44] Reading Speed as an Objective Measure of Improvement Following Vitrectomy for Symptomatic Vitreous Opacities. Ophthalmic Surg Lasers Imaging Retina 51(8), August 2020, 456-466.

- [45] On the potential role of lateral connectivity in retinal anticipation. Journal of Mathematical Neuroscience 11, January 2021.

- [46] Global prevalence of age-related macular degeneration and disease burden projection for 2020 and 2040: a systematic review and meta-analysis. Lancet Glob Health 2(2), February 2014, 106-116.

- [47] An Open Virtual Reality Toolbox for Accessible News Reading. ERCIM News 130, July 2022.

- [48] Through the Eyes of Women in Engineering. In: Texts of Discomfort, Carnegie Mellon University: ETC Press, November 2021, 387-414.

- [49] Evaluation of deep pose detectors for automatic analysis of film style. 10th Eurographics Workshop on Intelligent Cinematography and Editing, Reims, France, Association for Computing Machinery (ACM), April 2022.