Section: New Results

Creating and Interacting with Virtual Prototypes


Other permanent researchers: Marie-Paule Cani, Frédéric Devernay, Olivier Palombi, Damien Rohmer, Rémi Ronfard.

The challenge is to develop more effective ways to put the user in the loop during content authoring. We generally rely on sketching techniques for quickly drafting new content, and on sculpting methods (in the sense of gesture-driven, continuous distortion) for further 3D content refinement and editing. The objective is to extend these expressive modeling techniques to general content, from complex shapes and assemblies to animated content. As a complement, we are exploring the use of various 2D or 3D input devices to ease interactive 3D content creation.

EcoBrush: Interactive Control of Visually Consistent Large-Scale Ecosystems


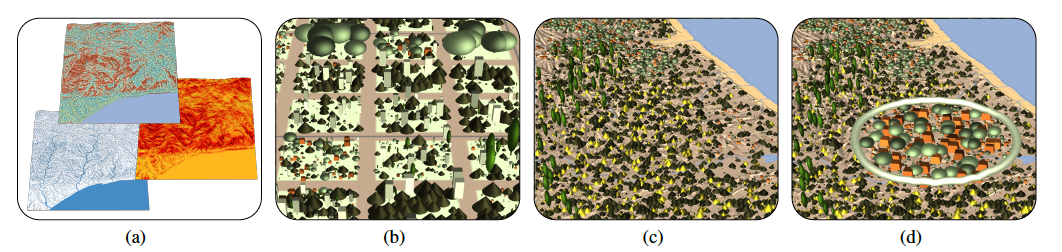

One challenge in portraying large-scale natural scenes in virtual environments is specifying the attributes of plants, such as species, size and placement, in a way that respects the features of natural ecosystems, while remaining computationally tractable and allowing user design. To address this, we combine ecosystem simulation with a distribution analysis of the resulting plant attributes to create biome-specific databases, indexed by terrain conditions, such as temperature, rainfall, sunlight and slope [18]. This is illustrated in Figure 7.
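To make the idea of a database "indexed by terrain conditions" concrete, here is a minimal sketch of how an entry could be looked up and blended for one terrain cell. It is not the EcoBrush implementation. The `Conditions` record, the units, the `SCALES` normalisation and the choice of inverse-distance weighting over the k nearest entries are all hypothetical stand-ins for whatever interpolation the actual system uses.

```python
import math
from dataclasses import dataclass, fields


@dataclass(frozen=True)
class Conditions:
    """Terrain conditions used as the database key (units are assumptions)."""
    temperature: float  # degrees Celsius
    rainfall: float     # mm per month
    sunlight: float     # hours per day
    slope: float        # degrees


# Hypothetical normalisation scales so that the four axes are comparable.
SCALES = Conditions(temperature=10.0, rainfall=50.0, sunlight=4.0, slope=15.0)


def condition_distance(a, b):
    """Scaled Euclidean distance between two sets of terrain conditions."""
    return math.sqrt(sum(
        ((getattr(a, f.name) - getattr(b, f.name)) / getattr(SCALES, f.name)) ** 2
        for f in fields(Conditions)))


def interpolate_entry(database, query, k=4, eps=1e-9):
    """Blend per-species densities of the k nearest entries by inverse distance.

    database: list of (Conditions, dict mapping species name -> plants per m^2).
    A species absent from an entry contributes zero density for that entry.
    """
    nearest = sorted(database, key=lambda e: condition_distance(e[0], query))[:k]
    weights = []
    for cond, stats in nearest:
        d = condition_distance(cond, query)
        if d < eps:
            return dict(stats)  # exact match: no blending needed
        weights.append(1.0 / d)
    total = sum(weights)
    blended = {}
    for w, (_, stats) in zip(weights, nearest):
        for species, density in stats.items():
            blended[species] = blended.get(species, 0.0) + density * w / total
    return blended
```

For a query lying halfway between two entries (in scaled units), `interpolate_entry(db, query, k=2)` returns the average of their densities. A real system would store richer distribution statistics than a single density per species.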

For a specific terrain, interpolated entries are drawn from this database and used to interactively synthesize a full ecosystem, while retaining the fidelity of the original simulations. A painting interface provides semantic brushes for locally adjusting ecosystem age, plant density and variability, and optionally lets users pick from a palette of precomputed distributions. Since these brushes are keyed to the underlying terrain properties, a balance between user control and real-world consistency is maintained. Our system can be used to interactively design ecosystems up to 5 km × 5 km in extent, or to automatically generate even larger ecosystems in a fraction of the time of a full simulation, while demonstrating known properties from plant ecology such as succession, self-thinning, and underbrush, across a variety of biomes.
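One way to picture a semantic density brush that stays consistent with the terrain is as a bounded multiplier layer painted on top of the database-derived baseline. The sketch below assumes exactly that: the grid layout, the smoothstep falloff, and the `[lo, hi]` clamp are hypothetical choices for illustration, not the brush model used in the paper.

```python
import math


def paint_density(adjust, cx, cy, radius, strength, lo=0.5, hi=1.5):
    """Apply a soft circular brush to a per-cell density multiplier grid.

    adjust: list of rows of floats, initially 1.0 (no user change).
    strength > 0 thickens vegetation, strength < 0 thins it.
    The multiplier is clamped to [lo, hi] so the result never strays far
    from the terrain-derived baseline (a hypothetical consistency rule).
    """
    if radius <= 0:
        raise ValueError("radius must be positive")
    rows, cols = len(adjust), len(adjust[0])
    for y in range(max(0, math.floor(cy - radius)), min(rows, math.ceil(cy + radius) + 1)):
        for x in range(max(0, math.floor(cx - radius)), min(cols, math.ceil(cx + radius) + 1)):
            d = math.hypot(x - cx, y - cy)
            if d > radius:
                continue
            t = 1.0 - d / radius
            falloff = t * t * (3.0 - 2.0 * t)  # smoothstep: 1 at centre, 0 at rim
            adjust[y][x] = min(hi, max(lo, adjust[y][x] + strength * falloff))
    return adjust


def final_density(baseline, adjust):
    """Combine database densities with the painted multipliers, cell by cell."""
    return [[b * a for b, a in zip(brow, arow)] for brow, arow in zip(baseline, adjust)]
```

Because the brush only scales the baseline within fixed bounds, repeated strokes saturate instead of producing densities the terrain could not plausibly support.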

Authoring Landscapes by Combining Ecosystem and Terrain Erosion Simulation


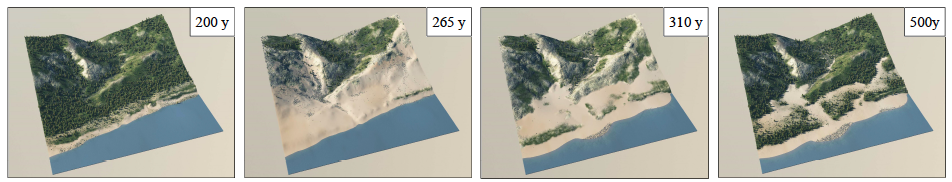

In this new paper [16], we introduced a novel framework for interactive landscape authoring that supports bi-directional feedback between erosion and vegetation simulation. This is illustrated in Figure 8. Vegetation and terrain erosion have strong mutual impact and their interplay influences the overall realism of virtual scenes. Despite their importance, these complex interactions have been neglected in computer graphics. Our framework overcomes this by simulating the effect of a variety of geomorphological agents and the mutual interaction between different material and vegetation layers, including rock, sand, humus, grass, shrubs, and trees. Users are able to exploit these interactions with an authoring interface that consistently shapes the terrain and populates it with details. Our method, validated through side-by-side comparison with real terrains, can be used not only to generate realistic static landscapes, but also to follow the temporal evolution of a landscape over a few centuries.
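The bi-directional feedback can be illustrated with a toy one-dimensional model in which vegetation cover reduces how much material a cell sheds, while steep or eroding ground loses cover. This is only a sketch under stated assumptions: a single material layer, a simple thermal-erosion rule with a talus threshold, and hypothetical parameters (`talus`, `rate`, `protection`, `growth`). The framework described above handles several material and vegetation layers (rock, sand, humus, grass, shrubs, trees) and many more geomorphological agents.

```python
def coupled_step(heights, cover, talus=1.0, rate=0.5, protection=0.8, growth=0.1):
    """One explicit step of a toy 1D erosion/vegetation feedback loop.

    heights: terrain height per cell; cover: vegetation cover in [0, 1].
    Vegetation -> erosion: cover reduces how much material a cell sheds.
    Erosion -> vegetation: steep or eroding cells lose cover.
    """
    n = len(heights)
    delta = [0.0] * n
    # Thermal-style erosion: material slides where the height difference exceeds talus.
    for i in range(n - 1):
        diff = heights[i] - heights[i + 1]
        if abs(diff) <= talus:
            continue
        src, dst = (i, i + 1) if diff > 0 else (i + 1, i)
        moved = rate * (abs(diff) - talus) * (1.0 - protection * cover[src])
        delta[src] -= moved
        delta[dst] += moved
    new_heights = [h + d for h, d in zip(heights, delta)]

    new_cover = []
    for i in range(n):
        slope = max(
            abs(new_heights[i] - new_heights[i - 1]) if i > 0 else 0.0,
            abs(new_heights[i + 1] - new_heights[i]) if i < n - 1 else 0.0,
        )
        suitability = max(0.0, 1.0 - slope / (2.0 * talus))  # steep ground supports less cover
        c = cover[i] + growth * (suitability - cover[i])      # relax toward suitability
        c -= max(0.0, -delta[i])                              # soil loss uproots plants
        new_cover.append(min(1.0, max(0.0, c)))
    return new_heights, new_cover
```

Running the same step on a vegetated and a bare slope shows the coupling: the vegetated crest loses less material, and the total amount of material is conserved because every unit removed from one cell is deposited on its neighbour.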

Shape from sensors: Curve networks on surfaces from 3D orientations


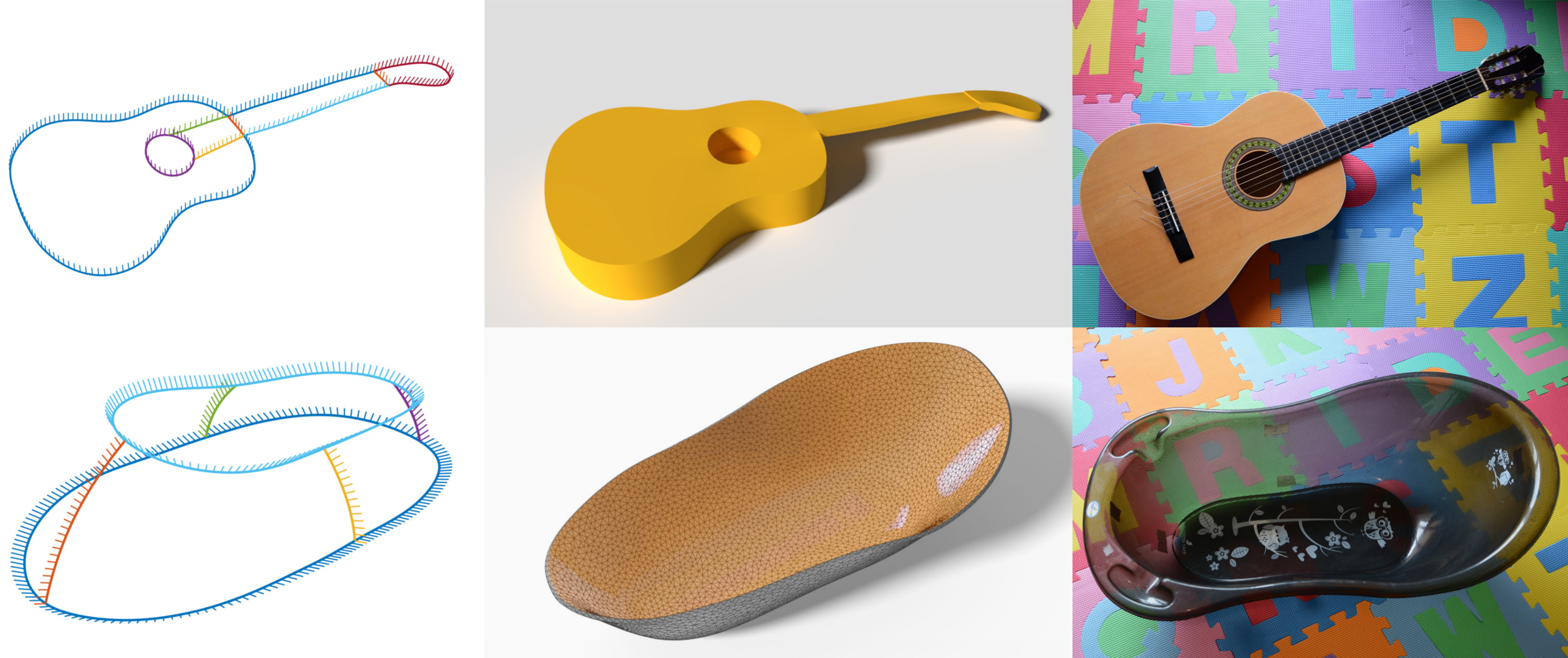

We present a novel framework for the acquisition and reconstruction of 3D curves using orientations provided by inertial sensors. This is illustrated in Figure 9. While the idea of reconstructing shape from sensors is not new, we present the first method for creating well-connected networks with cell complex topology using only orientation and distance measurements and a set of user-defined constraints. By working directly with orientations, our method robustly resolves problems arising from data inconsistency and sensor noise.
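The basic principle behind reconstructing a curve from orientations and distances is to turn each sensor orientation into a tangent direction and chain segments of known length. The sketch below shows only this naive dead-reckoning step for a single curve. It assumes unit quaternions `(w, x, y, z)` and that each sensor's local x-axis is aligned with the curve (a hypothetical convention); it does not build networks, enforce user constraints, or resolve inconsistencies as the method above does.

```python
def rotate(q, v):
    """Rotate vector v by unit quaternion q = (w, x, y, z)."""
    w, x, y, z = q
    # t = 2 * (q_vec x v)
    tx = 2.0 * (y * v[2] - z * v[1])
    ty = 2.0 * (z * v[0] - x * v[2])
    tz = 2.0 * (x * v[1] - y * v[0])
    # v' = v + w * t + q_vec x t
    return (
        v[0] + w * tx + (y * tz - z * ty),
        v[1] + w * ty + (z * tx - x * tz),
        v[2] + w * tz + (x * ty - y * tx),
    )


def integrate_curve(orientations, lengths, start=(0.0, 0.0, 0.0), axis=(1.0, 0.0, 0.0)):
    """Chain segments whose directions come from per-segment sensor orientations.

    orientations: one unit quaternion per segment; lengths: measured segment lengths.
    axis: sensor-local direction assumed to be tangent to the curve.
    """
    if len(orientations) != len(lengths):
        raise ValueError("need one length per orientation")
    points = [tuple(start)]
    for q, length in zip(orientations, lengths):
        tx, ty, tz = rotate(q, axis)
        px, py, pz = points[-1]
        points.append((px + length * tx, py + length * ty, pz + length * tz))
    return points
```

Because errors in each orientation accumulate along the chain, this naive integration drifts, and the endpoints of separately integrated curves generally do not meet. That is precisely the kind of inconsistency a network-level reconstruction must resolve.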