Keywords

Computer Science and Digital Science

- A5.1.3. Haptic interfaces

- A5.1.7. Multimodal interfaces

- A5.4.4. 3D and spatio-temporal reconstruction

- A5.4.6. Object localization

- A5.4.7. Visual servoing

- A5.5.4. Animation

- A5.6. Virtual reality, augmented reality

- A5.6.1. Virtual reality

- A5.6.2. Augmented reality

- A5.6.3. Avatar simulation and embodiment

- A5.6.4. Multisensory feedback and interfaces

- A5.9.2. Estimation, modeling

- A5.10.2. Perception

- A5.10.3. Planning

- A5.10.4. Robot control

- A5.10.5. Robot interaction (with the environment, humans, other robots)

- A5.10.6. Swarm robotics

- A5.10.7. Learning

- A6.4.1. Deterministic control

- A6.4.3. Observability and Controllability

- A6.4.4. Stability and Stabilization

- A6.4.5. Control of distributed parameter systems

- A6.4.6. Optimal control

- A9.5. Robotics

- A9.7. AI algorithmics

- A9.9. Distributed AI, Multi-agent

Other Research Topics and Application Domains

- B2.4.3. Surgery

- B2.5. Handicap and personal assistances

- B5.1. Factory of the future

- B5.6. Robotic systems

- B8.1.2. Sensor networks for smart buildings

- B8.4. Security and personal assistance

1 Team members, visitors, external collaborators

Research Scientists

- Paolo Robuffo Giordano [Team leader, CNRS, Senior Researcher, HDR]

- François Chaumette [Inria, Senior Researcher, HDR]

- Alexandre Krupa [Inria, Senior Researcher, HDR]

- Claudio Pacchierotti [CNRS, Researcher]

- Julien Pettré [Inria, Senior Researcher, HDR]

Faculty Members

- Marie Babel [INSA Rennes, Associate Professor, HDR]

- Quentin Delamare [École normale supérieure de Rennes]

- Vincent Drevelle [Univ de Rennes I, Associate Professor]

- Maud Marchal [INSA Rennes, Professor, HDR]

- Eric Marchand [Univ de Rennes I, Professor, HDR]

Post-Doctoral Fellows

- Khairidine Benali [Inria, from Sep 2021]

- Elodie Bouzbib [Inria, from Nov 2021]

- Thomas Howard [CNRS, until Sep 2021]

- Pratik Mullick [Inria]

- Gennaro Notomista [CNRS, until Sep 2021]

PhD Students

- Vicenzo Abichequer Sangalli [Inria]

- Julien Albrand [INSA Rennes, until Sep 2021]

- Javad Amirian [Inria, until Sep 2021]

- Maxime Bernard [CNRS, from Oct 2021]

- Pascal Brault [Inria]

- Pierre Antoine Cabaret [Inria, from Oct 2021]

- Antoine Cellier [INSA Rennes, from Oct 2021]

- Thomas Chatagnon [Inria]

- Adele Colas [Inria]

- Cedric De Almeida Braga [Inria, until Feb 2021]

- Nicola De Carli [CNRS]

- Mathieu Gonzalez [Institut de recherche technologique B-com]

- Fabien Grzeskowiak [Inria, until Jun 2021]

- Alberto Jovane [Inria]

- Glenn Kerbiriou [Interdigital, from Jun 2021]

- Lisheng Kuang [China Scholarship Council]

- Ines Lacote [Inria]

- Emilie Leblong [Pôle Saint-Hélier]

- Fouad Makiyeh [Inria]

- Thibault Noel [Inria, from Feb 2021]

- Erwan Normand [Univ de Rennes I, from Oct 2021]

- Alexander Oliva [Inria]

- Maxime Robic [Inria]

- Lev Smolentsev [Inria]

- Gustavo Souza Vieira Dutra [Inria, Dec 2021]

- Ali Srour [CNRS, from Oct 2021]

- John Thomas [Inria]

- Guillaume Vailland [INSA Rennes, until Nov 2021]

- Tairan Yin [Inria]

Technical Staff

- Marco Aggravi [CNRS, Engineer, until Aug 2021]

- Dieudonne Atrevi [Inria, Engineer, until Apr 2021]

- Julien Bruneau [Inria, Engineer]

- Louise Devigne [INSA Rennes, Engineer]

- Julien Dufour [Inria, Engineer, from May 2021]

- Solenne Fortun [Inria, Engineer]

- Thierry Gaugry [INSA Rennes, Engineer, until Jun 2021]

- Guillaume Gicquel [CNRS, Engineer]

- Fabien Grzeskowiak [INSA Rennes, Engineer, from Jul 2021]

- Thomas Howard [INSA Rennes, Engineer, from Oct 2021]

- Joudy Nader [CNRS, Engineer]

- Noura Neji [Inria, Engineer, until Apr 2021]

- François Pasteau [INSA Rennes, Engineer]

- Yuliya Patotskaya [Inria, Engineer]

- Fabien Spindler [Inria, Engineer]

- Wouter Van Toll [Inria, Engineer, until Oct 2021]

Interns and Apprentices

- Merwane Bouri [INSA Rennes, from Aug 2021]

- Pierre Antoine Cabaret [INSA Rennes, from Feb 2021 until Aug 2021]

- Alex Coudray [Inria, from Feb 2021 until Jul 2021]

- Arthur Furet [INSA Rennes, from Jun 2021 until Aug 2021]

- Alexis Hobl [CNRS, from May 2021 until Jul 2021]

- Emilie Hummel [CNRS, from Feb 2021 until Aug 2021]

- Alexis Jensen [INSA Rennes, from Jul 2021 until Sep 2021]

- Divyesh Kanagavel [CNRS, from Feb 2021 until Aug 2021]

- Alex Keryhuel [Inria, from May 2021 until Aug 2021]

- Hussein Lezzaik [CNRS, from Feb 2021 until Aug 2021]

- Arthur Luciani [École normale supérieure de Rennes, from Feb 2021 until Jul 2021]

- Thomas Mabit [École normale supérieure de Rennes, from Feb 2021 until Aug 2021]

- Thibaut Rolland [Inria, from Feb 2021 until Jul 2021]

- Octavie Somoza Salgado [CNRS, from Mar 2021 until Aug 2021]

- Guillaume Sonnet [Inria, from May 2021 until Sep 2021]

- Gustavo Souza Vieira Dutra [INSA Rennes, Intern, from Feb 2021 until Aug 2021]

Administrative Assistant

- Hélène de La Ruée [Univ de Rennes I]

2 Overall objectives

The long-term vision of the Rainbow team is to develop the next generation of sensor-based robots able to navigate and/or interact in complex unstructured environments together with human users. Clearly, the word “together” can have very different meanings depending on the particular context: for example, it can refer to mere co-existence (robots and humans share some space while performing independent tasks), human-awareness (the robots need to be aware of the human state and intentions for properly adjusting their actions), or actual cooperation (robots and humans perform some shared task and need to coordinate their actions).

One could perhaps argue that the two goals pursued here, high robot autonomy and close human involvement, are somehow in conflict, since higher robot autonomy should imply lower (or no) human intervention. However, we believe that our general research direction is well motivated for the following reasons:

- despite the many advancements in robot autonomy, complex and high-level cognitive-based decisions are still out of reach. In most applications involving tasks in unstructured environments, uncertainty, and interaction with the physical world, human assistance is still necessary, and will most probably remain so for the next decades. On the other hand, robots are extremely capable of autonomously executing specific and repetitive tasks, with great speed and precision, and of operating in dangerous/remote environments, while humans possess unmatched cognitive capabilities and world awareness which allow them to take complex and quick decisions;

- the cooperation between humans and robots is often an implicit constraint of the robotic task itself. Consider for instance the case of assistive robots supporting injured patients during their physical recovery, or human augmentation devices. It is then important to study proper ways of implementing this cooperation;

- finally, safety regulations can require the presence at all times of a person in charge of supervising and, if necessary, of taking direct control of the robotic workers. For example, this is a common requirement in all applications involving tasks in public spaces, like autonomous vehicles in crowded spaces, or even UAVs when flying in civil airspace such as over urban or populated areas.

Within this general picture, the Rainbow activities will be particularly focused on the case of (shared) cooperation between robots and humans by pursuing the following vision: on the one hand, empower robots with a large degree of autonomy to allow them to effectively operate in non-trivial environments (e.g., outside completely defined factory settings). On the other hand, include human users in the loop so that they are in (partial and bilateral) control of some aspects of the overall robot behavior. We plan to address these challenges from the methodological, algorithmic and application-oriented perspectives. The Rainbow activities will be articulated along four research axes: three supporting axes (Optimal and Uncertainty-Aware Sensing; Advanced Sensor-based Control; Haptics for Robotics Applications), meant to develop methods, algorithms and technologies, and the central theme that they serve, Shared Control of Complex Robotic Systems.

3 Research program

3.1 Main Vision

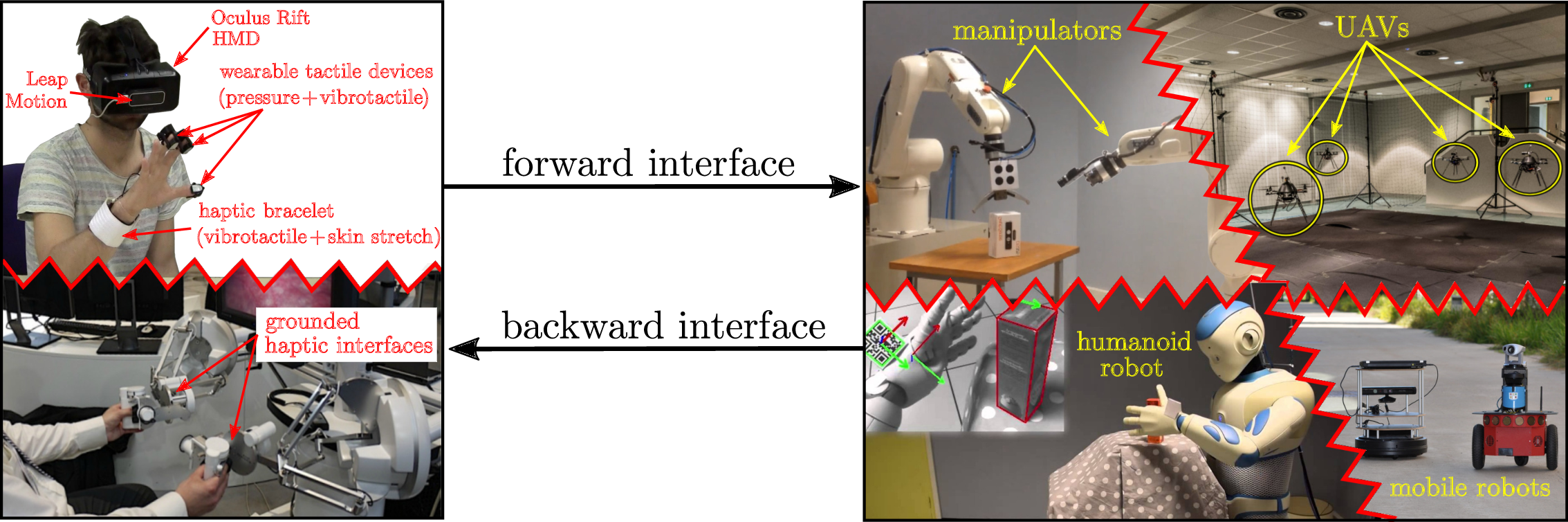

The vision of Rainbow (and its foreseen applications) raises several general scientific challenges: a high level of autonomy for complex robots in complex (unstructured) environments; forward interfaces for letting an operator give high-level commands to the robot; backward interfaces for informing the operator about the robot “status”; and user studies for assessing the best interfacing, which will clearly depend on the particular task/situation. Within Rainbow we plan to tackle these challenges at different levels of depth:

- the methodological and algorithmic side of the sought human-robot interaction will be the main focus of Rainbow. Here, we will be interested in advancing the state of the art in sensor-based online planning, control and manipulation for mobile/fixed robots. For instance, while classically most control approaches (especially sensor-based ones) have been essentially reactive, we believe that less myopic strategies based on online/reactive trajectory optimization will be needed for the future Rainbow activities. The core ideas of Model-Predictive Control approaches (also known as Receding Horizon) or, in general, numerical optimal control methods will play a role in the Rainbow activities, allowing the robots to reason/plan over some future time window and better cope with constraints. We will also consider extending classical sensor-based motion control/manipulation techniques to more realistic scenarios, such as deformable/flexible objects (“Advanced Sensor-based Control” axis). Finally, it will also be important to invest research efforts in the field of Optimal Sensing, in the sense of generating (again) trajectories that can optimize the state estimation problem in the presence of scarce sensory inputs and/or non-negligible measurement and process noises, which is especially relevant for mobile robots (“Optimal and Uncertainty-Aware Sensing” axis). We also aim at addressing the case of coordination between a single human user and multiple robots where, clearly, the autonomy part plays an even more crucial role (no human can control multiple robots at once, thus a high degree of autonomy will be required by the robot group for executing the human commands);

- the interfacing side will also be a focus of the Rainbow activities. As explained above, we will be interested in both the forward (human → robot) and backward (robot → human) interfaces. The forward interface will be mainly addressed from the algorithmic point of view, i.e., how to map the few degrees of freedom available to a human operator (usually in the order of 3–4) into complex commands for the controlled robot(s). This mapping will typically be mediated by an “AutoPilot” onboard the robot(s) for autonomously assessing if the commands are feasible and, if not, how to least modify them (“Advanced Sensor-based Control” axis).

  The backward interface will, instead, mainly consist of a visual/haptic feedback for the operator. Here, we aim at exploiting our expertise in using force cues for informing an operator about the status of the remote robot(s). However, the sole use of classical grounded force feedback devices (e.g., the typical force-feedback joysticks) will not be enough due to the different kinds of information that will have to be provided to the operator. In this context, wearable haptic interfaces, which have recently attracted growing interest, are very promising and will be investigated in depth (these include, e.g., devices able to provide vibro-tactile information to the fingertips, wrist, or other parts of the body). The main challenges in these activities will be the mechanical conception (and construction) of suitable wearable interfaces for the tasks at hand, and the generation of force cues for the operator: the force cues will be a (complex) function of the robot state, therefore motivating research in algorithms for mapping the robot state into a few variables (the force cues) (“Haptics for Robotics Applications” axis);

- the evaluation side will assess the proposed interfaces through user studies, or acceptability studies with human subjects. Although this activity will not be a main focus of Rainbow (complex user studies are beyond the scope of our core expertise), we will nevertheless devote some effort to reaching a reasonable level of user evaluation by applying standard statistical analysis based on psychophysical procedures (e.g., randomized tests and ANOVA statistical analysis). This will be particularly true for the activities involving the use of smart wheelchairs, which are intended to be used by human users and operate inside human crowds. Therefore, we will be interested in gaining some level of understanding of how semi-autonomous robots (a wheelchair in this example) can predict the human intention, and how humans can react to a semi-autonomous mobile robot.
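The receding-horizon idea mentioned in the first item above can be illustrated with a deliberately small sketch. Everything in it is an assumption made for illustration and is not taken from the Rainbow work: a 1-D double-integrator robot, three candidate accelerations, a velocity bound, a quadratic cost, and an exhaustive search in place of a numerical optimal control solver. At each step the controller evaluates every input sequence over a short horizon, discards those that violate the constraint, applies only the first input of the best one, and replans.

```python
from itertools import product

DT = 0.1                    # time step [s] (assumed)
ACCELS = (-1.0, 0.0, 1.0)   # admissible accelerations [m/s^2] (assumed)
V_MAX = 1.0                 # velocity bound [m/s] (assumed)
HORIZON = 5                 # look-ahead length in steps (assumed)


def step(pos, vel, acc):
    """Integrate the double integrator over one time step."""
    vel = vel + acc * DT
    return pos + vel * DT, vel


def plan(pos, vel, goal):
    """Search all input sequences over the horizon; return the first input of the best one."""
    best_cost, best_first = float("inf"), 0.0
    for seq in product(ACCELS, repeat=HORIZON):
        p, v, cost = pos, vel, 0.0
        for acc in seq:
            p, v = step(p, v, acc)
            if abs(v) > V_MAX + 1e-9:   # constraint violated: discard this sequence
                cost = float("inf")
                break
            cost += (p - goal) ** 2 + 0.1 * v ** 2
        if cost < best_cost:
            best_cost, best_first = cost, seq[0]
    return best_first


def run(goal=1.0, steps=100):
    """Closed loop: replan at every step and apply only the first planned input."""
    pos, vel, peak = 0.0, 0.0, 0.0
    for _ in range(steps):
        pos, vel = step(pos, vel, plan(pos, vel, goal))
        peak = max(peak, abs(vel))
    return pos, vel, peak
```

Calling `run()` returns the final position, the final velocity and the peak speed reached. The exhaustive search only scales to tiny problems; it is used here so that the replanning loop and the handling of the constraint stay visible.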

Figure 1 depicts in an illustrative way the prototypical activities foreseen in Rainbow. On the right-hand side, complex robots (dual manipulators, humanoid, single/multiple mobile robots) need to perform some task with a high degree of autonomy. On the left-hand side, a human operator gives some high-level commands and receives a visual/haptic feedback aimed at informing her/him at best of the robot status. Again, the main challenges that Rainbow will tackle to address these issues are (in order of relevance): methods and algorithms, mostly based on first-principle modeling and, when possible, on numerical methods for online/reactive trajectory generation, for endowing the robots with high autonomy; design and implementation of visual/haptic cues for interfacing the human operator with the robots, with special attention to novel combinations of grounded/ungrounded (wearable) haptic devices; user and acceptability studies.

3.2 Main Components

Hereafter, a summary description of the four axes of research in Rainbow.

3.2.1 Optimal and Uncertainty-Aware Sensing

Future robots will need to have a large degree of autonomy for, e.g., interpreting the sensory data for accurate estimation of the robot and world state (which can possibly include the human users), and for devising motion plans able to take into account many constraints (actuation, sensor limitations, environment), including also the state estimation accuracy (i.e., how well the robot/environment state can be reconstructed from the sensed data). In this context, we will be particularly interested in:

- devising trajectory optimization strategies able to maximize some norm of the information gain gathered along the trajectory (and with the available sensors). This can be seen as an instance of Active Sensing, with the main focus on online/reactive trajectory optimization strategies able to take into account several requirements/constraints (sensing/actuation limitations, noise characteristics). We will also be interested in the coupling between optimal sensing and concurrent execution of additional tasks (e.g., navigation, manipulation);

- formal methods for guaranteeing the accuracy of localization/state estimation in mobile robotics, mainly exploiting tools from interval analysis. The interest of these methods is their ability to provide possibly conservative but guaranteed bounds on the best accuracy one can obtain with the given robot/sensor pair; they can thus be used for planning purposes or for system design (choice of the best sensors for a given robot/task);

- localization/tracking of objects with poor/unknown or deformable shape, which will be of paramount importance for allowing robots to estimate the state of “complex objects” (e.g., human tissues in medical robotics, elastic materials in manipulation) for controlling their pose/interaction with the objects of interest.
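The notion of guaranteed (possibly conservative) bounds from interval analysis, mentioned above, can be sketched on a toy problem. The setting is an assumption chosen for brevity: a robot on a line, located to the right of beacons at known positions, with range measurements whose error is bounded by a known `max_error`. The `Interval` class and the `localize` function are hypothetical helpers, not part of any library used by the team.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Interval:
    lo: float
    hi: float

    def intersect(self, other):
        """Return the set of values compatible with both intervals."""
        lo, hi = max(self.lo, other.lo), min(self.hi, other.hi)
        if lo > hi:
            raise ValueError("empty intersection: inconsistent measurements")
        return Interval(lo, hi)

    @property
    def width(self):
        return self.hi - self.lo


def localize(prior, beacons, ranges, max_error):
    """Contract a prior position interval with bounded-error range measurements.

    Assumes the robot is to the right of every beacon, so that
    position = beacon + range, with |range error| <= max_error.
    """
    estimate = prior
    for beacon, measured in zip(beacons, ranges):
        feasible = Interval(beacon + measured - max_error,
                            beacon + measured + max_error)
        estimate = estimate.intersect(feasible)
    return estimate


# True position 2.0, beacons at 0.0 and 1.0, measurement errors within 0.1.
estimate = localize(Interval(0.0, 10.0), [0.0, 1.0], [2.05, 0.93], 0.1)
```

As long as the error bound holds, the true position is guaranteed to lie in the returned interval, and the interval width gives the accuracy bound that could then be used for planning or sensor selection.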

3.2.2 Advanced Sensor-based Control

One of the main competences of the previous Lagadic team has been, generally speaking, the topic of sensor-based control, i.e., how to exploit (typically onboard) sensors for controlling the motion of fixed/ground robots. The main emphasis has been on devising ways to directly couple the robot motion with the sensor outputs in order to invert this mapping for driving the robots towards a configuration specified as a desired sensor reading (thus, directly in sensor space). This general idea has been applied to very different contexts: mainly standard vision (hence the Visual Servoing keyword), but also audio, ultrasound imaging, and RGB-D.
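The idea of inverting the mapping between robot motion and sensor output can be illustrated with the classical image-based control law v = -λ L⁺ (s - s*), where s is the measured feature, s* its desired value and L the interaction matrix. The sketch below is a heavily simplified case chosen so that it runs without any library: a single image point, a camera that only translates parallel to the image plane, and a known constant depth. Under these assumptions L reduces to the diagonal matrix -(1/Z) I. The function names are illustrative and do not correspond to the ViSP API.

```python
def interaction_matrix(depth):
    """Interaction matrix of an image point for camera x/y translations only."""
    return [[-1.0 / depth, 0.0], [0.0, -1.0 / depth]]


def control(s, s_star, depth, gain):
    """Camera velocity v = -gain * L^{-1} (s - s*); L is diagonal here."""
    L = interaction_matrix(depth)
    error = [s[0] - s_star[0], s[1] - s_star[1]]
    return [-gain * error[0] / L[0][0], -gain * error[1] / L[1][1]]


def simulate(s, s_star, depth=2.0, gain=1.0, dt=0.1, steps=50):
    """Integrate s_dot = L v with the control law above (explicit Euler)."""
    L = interaction_matrix(depth)
    for _ in range(steps):
        v = control(s, s_star, depth, gain)
        s = [s[0] + L[0][0] * v[0] * dt, s[1] + L[1][1] * v[1] * dt]
    return s


final = simulate([0.4, -0.3], [0.0, 0.0])
```

With this law the feature error decays geometrically by a factor (1 - gain·dt) per step, so `final` ends close to the desired feature. A realistic scheme would use several features, the full 6-DoF interaction matrix and its pseudo-inverse.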

The use of sensors for controlling the robot motion will clearly also be a central topic of the Rainbow team, since the use of (especially onboard) sensing is a main characteristic of any future robotics application (which should typically operate in unstructured environments, and thus mainly rely on its own ability to sense the world). We then naturally aim at making the best out of the previous Lagadic experience in sensor-based control for proposing new advanced ways of exploiting sensed data for, roughly speaking, controlling the motion of a robot. In this respect, we plan to work on the following topics:

- “direct/dense methods”, which try to directly exploit the raw sensory data in computing the control law for positioning/navigation tasks. The advantage of these methods is the little need for data pre-processing, which can minimize feature extraction errors and, in general, improve the overall robustness/accuracy (since all the available data is used by the motion controller);

- sensor-based interaction with objects of unknown/deformable shapes, for gaining the ability to manipulate, e.g., flexible objects from the acquired sensed data (e.g., controlling online a needle being inserted in a flexible tissue);

- sensor-based model predictive control, by developing online/reactive trajectory optimization methods able to plan feasible trajectories for robots subject to sensing/actuation constraints, with the possibility of (onboard) sensing for continuously replanning (over some future time horizon) the optimal trajectory. These methods will play an important role when dealing with complex robots affected by complex sensing/actuation constraints, for which pure reactive strategies (as in most of the previous Lagadic works) are not effective. Furthermore, the coupling with the aforementioned optimal sensing will also be considered;

- multi-robot decentralized estimation and control, with the aim of devising again sensor-based strategies for groups of multiple robots needing to maintain a formation or perform navigation/manipulation tasks. Here, the challenges come from the need to devise “simple” decentralized and scalable control strategies in the presence of complex sensing constraints (e.g., when using onboard cameras: limited FoV, occlusions). The need to locally estimate global quantities (e.g., a common frame of reference, or a global property of the formation such as connectivity or rigidity) will also be an active line of research.
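The local estimation of a global quantity mentioned above can be illustrated with a standard average-consensus iteration, used here as a generic textbook example rather than as the team's method. Each robot only exchanges values with its neighbors, yet all local values converge to the group average provided the communication graph is connected, undirected, and the step size is smaller than the inverse of the largest node degree. The graph, initial values and step size below are assumptions.

```python
def consensus_step(values, neighbors, step):
    """One synchronous update: each robot moves toward its neighbors' values."""
    return [
        x + step * sum(values[j] - x for j in neighbors[i])
        for i, x in enumerate(values)
    ]


def run_consensus(values, neighbors, step=0.3, iterations=200):
    """Iterate the local update rule; no robot ever sees the whole group."""
    for _ in range(iterations):
        values = consensus_step(values, neighbors, step)
    return values


# Four robots on a line graph 0 - 1 - 2 - 3, each starting from its own measurement.
line_graph = {0: [1], 1: [0, 2], 2: [1, 3], 3: [2]}
estimates = run_consensus([0.0, 2.0, 4.0, 10.0], line_graph)
```

Here every entry of `estimates` approaches the average of the initial values (4.0). Estimating quantities such as connectivity or rigidity requires more elaborate schemes, but they rely on the same principle of purely local exchanges.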

3.2.3 Haptics for Robotics Applications

In the envisaged shared cooperation between human users and robots, the typical sensory channel (besides vision) exploited to inform the human users is most often the force/kinesthetic one (in general, the sense of touch and of forces applied to the human hand or limbs). Therefore, a part of our activities will be devoted to studying and advancing the use of haptic cueing algorithms and interfaces for providing feedback to the users during the execution of some shared task. We will consider:

- multi-modal haptic cueing for general teleoperation applications, by studying how to convey information through the kinesthetic and cutaneous channels. Indeed, most haptic-enabled applications typically only involve kinesthetic cues, e.g., the forces/torques that can be felt by grasping a force-feedback joystick/device. These cues are very informative about, e.g., preferred/forbidden motion directions, but are also inherently limited in their resolution since the kinesthetic channel can easily become overloaded (when too much information is compressed in a single cue). In recent years, the rise of novel cutaneous devices able to, e.g., provide vibro-tactile feedback on the fingertips or skin, has proven to be a viable solution to complement the classical kinesthetic channel. We will then study how to combine these two sensory modalities for different prototypical application scenarios, e.g., 6-dof teleoperation of manipulator arms, virtual fixtures approaches, and remote manipulation of (possibly deformable) objects;

- in the particular context of medical robotics, we plan to address the problem of providing haptic cues for typical medical robotics tasks, such as semi-autonomous needle insertion and robot surgery, by exploring the use of kinesthetic feedback for rendering the mechanical properties of the tissues, and vibrotactile feedback for providing guiding information about pre-planned paths (with the aim of increasing the usability/acceptability of this technology in the medical domain);

- finally, in the context of multi-robot control, we would like to explore how to use the haptic channel for providing information about the status of multiple robots executing a navigation or manipulation task. In this case, the problem is (even more) how to map (or compress) information about many robots into a few haptic cues. We plan to use specialized devices, such as actuated exoskeleton gloves able to provide cues to each fingertip of a human hand, or to resort to “compression” methods inspired by the hand postural synergies for providing coordinated cues representative of a few (but complex) motions of the multi-robot group, e.g., coordinated motions (translations/expansions/rotations) or collective grasping/transporting.
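The problem of compressing the state of many robots into a few haptic cues can be made concrete with a small sketch. It is purely illustrative and rests on assumptions that are not in the text: planar robot positions, a desired formation described only by its centroid and mean radius, and cues that are proportional to the formation errors and saturated at a device limit. The function `formation_cues` and its parameters are hypothetical.

```python
import math


def formation_cues(positions, target_centroid, target_radius, gain=1.0, max_cue=1.0):
    """Map N planar robot positions to three scalar cues: (tx, ty) and expansion."""
    n = len(positions)
    cx = sum(p[0] for p in positions) / n
    cy = sum(p[1] for p in positions) / n
    # Mean distance of the robots from their centroid: a single "size" descriptor.
    radius = sum(math.hypot(p[0] - cx, p[1] - cy) for p in positions) / n

    def clamp(value):
        """Saturate a cue at the (assumed) maximum the device can render."""
        return max(-max_cue, min(max_cue, value))

    return {
        "translation": (clamp(gain * (target_centroid[0] - cx)),
                        clamp(gain * (target_centroid[1] - cy))),
        "expansion": clamp(gain * (target_radius - radius)),
    }


robots = [(1.0, 1.0), (1.0, -1.0), (-1.0, 1.0), (-1.0, -1.0)]
cues = formation_cues(robots, target_centroid=(0.5, 0.0), target_radius=2 ** 0.5)
```

Whatever the number of robots, the operator receives only three values. Which collective descriptors are actually informative for a given task is exactly the research question raised above.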

3.2.4 Shared Control of Complex Robotics Systems

This final and main research axis will exploit the methods, algorithms and technologies developed in the previous axes for realizing applications involving complex semi-autonomous robots operating in complex environments together with human users. The leitmotiv is to realize advanced shared control paradigms, which essentially aim at blending robot autonomy and user's intervention in an optimal way for exploiting the best of both worlds (robot accuracy/sensing/mobility/strength and human's cognitive capabilities). A common theme will be the issue of where to “draw the line” between robot autonomy and human intervention: obviously, there is no general answer, and any design choice will depend on the particular task at hand and/or on the technological/algorithmic possibilities of the robotic system under consideration.

A prototypical envisaged application, exploiting and combining the previous three research axes, is as follows: a complex robot (e.g., a two-arm system, a humanoid robot, a multi-UAV group) needs to operate in an environment exploiting its onboard sensors (in general, vision as the main exteroceptive one) and deal with many constraints (limited actuation, limited sensing, complex kinematics/dynamics, obstacle avoidance, interaction with difficult-to-model entities such as surrounding people, and so on). The robot must then possess a fairly large degree of autonomy for interpreting and exploiting the sensed data in order to estimate its own state and that of the environment (“Optimal and Uncertainty-Aware Sensing” axis), and for planning its motion in order to fulfil the task (e.g., navigation, manipulation) by coping with all the robot/environment constraints. Therefore, advanced control methods able to exploit the sensory data to the fullest, and able to cope online with constraints in an optimal way (by, e.g., continuously replanning and predicting over a future time horizon) will be needed (“Advanced Sensor-based Control” axis), with a possible (and interesting) coupling with the sensing part for optimizing, at the same time, the state estimation process. Finally, a human operator will typically be in charge of providing high-level commands (e.g., where to go, what to look at, what to grasp and where) that will then be autonomously executed by the robot, with possible local modifications because of the various (local) constraints. At the same time, the operator will also receive online visual-force cues informative of, in general, how well her/his commands are executed and whether the robot would prefer or suggest other plans (because of the local constraints that are not of the operator's concern). This information will have to be visually and haptically rendered with an optimal combination of cues that will depend on the particular application (“Haptics for Robotics Applications” axis).
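The "local modification" of an operator command can be sketched in its simplest form as a projection: if the commanded velocity violates a constraint, replace it with the closest velocity that satisfies it. The example below assumes a single linear constraint n·v ≤ b (for instance, a limit on the approach speed toward an obstacle along direction n) and Euclidean distance as the measure of modification. The function `project_command` is a hypothetical illustration, not the controller of any Rainbow system.

```python
def project_command(u, n, b):
    """Return the velocity closest to u (Euclidean norm) that satisfies n . v <= b."""
    dot = sum(ui * ni for ui, ni in zip(u, n))
    if dot <= b:
        return tuple(u)                      # feasible command: left untouched
    norm_sq = sum(ni * ni for ni in n)
    excess = (dot - b) / norm_sq
    # Remove only the component along n that exceeds the bound.
    return tuple(ui - excess * ni for ui, ni in zip(u, n))


# Operator asks for (1, 1) m/s; motion along +x is limited to 0.2 m/s.
modified = project_command((1.0, 1.0), (1.0, 0.0), 0.2)
```

The component of the command that does not conflict with the constraint is preserved, which is one way of respecting the operator's intention. The difference between `u` and the returned velocity is also a natural quantity to feed back to the operator as a cue. Several simultaneous constraints would call for a small quadratic program instead of this closed form.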

4 Application domains

The activities of Rainbow fall obviously within the scope of Robotics. Broadly speaking, our main interest is in devising novel/efficient algorithms (for estimation, planning, control, haptic cueing, human interfacing, etc.) that can be general and applicable to many different robotic systems of interest, depending on the particular application/case study. For instance, we plan to consider:

- applications involving remote telemanipulation with one or two robot arms, where the arm(s) will need to coordinate their motion for approaching/grasping objects of interest under the guidance of a human operator;

- applications involving single and multiple mobile robots for spatial navigation tasks (e.g., exploration, surveillance, mapping). In the multi-robot case, the high redundancy of the multi-robot group will motivate research in autonomously exploiting this redundancy for facilitating the task (e.g., optimizing the self-localization of the environment mapping) while following the human commands, and vice-versa for informing the operator about the status of a multi-robot group. In the single robot case, the possible combination with some manipulation devices (e.g., arms on a wheeled robot) will motivate research into remote tele-navigation and tele-manipulation;

- applications involving medical robotics, in which the “manipulators” are replaced by the typical tools used in medical applications (ultrasound probes, needles, cutting scalpels, and so on) for semi-autonomous probing and intervention;

- applications involving a direct physical “coupling” between human users and robots (rather than a “remote” interfacing), such as the case of assistive devices used for easing the life of impaired people. Here, we will be primarily interested in, e.g., safety and usability issues, and also touch some aspects of user acceptability.

These directions are, in our opinion, very promising since current and future robotics applications are expected to address more and more complex tasks: for instance, it is becoming mandatory to empower robots with the ability to predict the future (to some extent) by also explicitly dealing with uncertainties from sensing or actuation; to safely and effectively interact with human supervisors (or collaborators) for accomplishing shared tasks; to learn or adapt to dynamic environments from little prior knowledge; to exploit the environment (e.g., obstacles) rather than avoiding it (a typical example is a humanoid robot in a multi-contact scenario for facilitating walking on rough terrains); to optimize the onboard resources for large-scale monitoring tasks; to cooperate with other robots either by direct sensing/communication, or via some shared database (the “cloud”).

While no single lab can reasonably address all these theoretical/algorithmic/technological challenges, we believe that our research agenda can give some concrete contributions to the next generation of robotics applications.

5 Highlights of the year

5.1 Awards

5.2 Highlights

- P. Robuffo Giordano is part of the euRobotics “Georges Giralt” PhD Award panel, which awards the best PhD thesis in robotics in Europe.

- P. Robuffo Giordano has been elected member of Section 7 of the Comité National de la Recherche Scientifique (CoNRS, the National Committee for Scientific Research).

- A. Krupa was promoted in 2021 to the grade of Inria Senior Research Scientist (Inria DR2).

- C. Pacchierotti has been proposed for the 2022 CNRS Bronze medal by Section 7 of the CoNRS.

6 New software and platforms

6.1 New software

6.1.1 HandiViz

- Name: Driving assistance of a wheelchair

- Keywords: Health, Persons attendant, Handicap

- Functional Description: The HandiViz software provides a semi-autonomous navigation framework for a wheelchair, relying on visual servoing.

  It has been registered with the APP (“Agence de Protection des Programmes”) as an INSA software (IDDN.FR.001.440021.000.S.P.2013.000.10000) and is under GPL license.

- Contact: Marie Babel

- Participants: François Pasteau, Marie Babel

- Partner: INSA Rennes

6.1.2 UsTk

- Name: Ultrasound toolkit for medical robotics applications guided from ultrasound images

- Keywords: Echographic imagery, Image reconstruction, Medical robotics, Visual tracking, Visual servoing (VS), Needle insertion

- Functional Description: UsTK, standing for Ultrasound Toolkit, is a cross-platform extension of ViSP software dedicated to 2D and 3D ultrasound image processing and visual servoing based on ultrasound images. Written in C++, UsTK architecture provides a core module that implements all the data structures at the heart of UsTK, a grabber module that allows acquiring ultrasound images from an Ultrasonix or a Sonosite device, a GUI module to display data, an IO module for providing functionalities to read/write data from a storage device, and a set of image processing modules to compute the confidence map of ultrasound images, generate elastography images, track a flexible needle in sequences of 2D and 3D ultrasound images and track a target image template in sequences of 2D ultrasound images. All these modules were implemented on several robotic demonstrators to control the motion of an ultrasound probe or a flexible needle by ultrasound visual servoing.

- URL:

- Contact: Alexandre Krupa

- Participants: Alexandre Krupa, Fabien Spindler

- Partners: Inria, Université de Rennes 1

6.1.3 ViSP

-

Name:

Visual servoing platform

-

Keywords:

Augmented reality, Computer vision, Robotics, Visual servoing (VS), Visual tracking

-

Scientific Description:

Since 2005, we develop and release ViSP [1], an open source library available from https://visp.inria.fr. ViSP standing for Visual Servoing Platform allows prototyping and developing applications using visual tracking and visual servoing techniques at the heart of the Rainbow research. ViSP was designed to be independent from the hardware, to be simple to use, expandable and cross-platform. ViSP allows designing vision-based tasks for eye-in-hand and eye-to-hand systems from the most classical visual features that are used in practice. It involves a large set of elementary positioning tasks with respect to various visual features (points, segments, straight lines, circles, spheres, cylinders, image moments, pose...) that can be combined together, and image processing algorithms that allow tracking of visual cues (dots, segments, ellipses...), or 3D model-based tracking of known objects or template tracking. Simulation capabilities are also available.

[1] E. Marchand, F. Spindler, F. Chaumette. ViSP for visual servoing: a generic software platform with a wide class of robot control skills. IEEE Robotics and Automation Magazine, Special Issue on "Software Packages for Vision-Based Control of Motion", P. Oh, D. Burschka (Eds.), 12(4):40-52, December 2005.

-

Functional Description:

ViSP provides simple ways to integrate and validate new algorithms with already existing tools. It follows a module-based software engineering design where data types, algorithms, sensors, viewers and user interaction are made available. Written in C++, ViSP is based on open-source cross-platform libraries (such as OpenCV) and builds with CMake. Several platforms are supported, including OSX, iOS, Windows and Linux. ViSP online documentation allows to ease learning. More than 300 fully documented classes organized in 17 different modules, with more than 408 examples and 88 tutorials are proposed to the user. ViSP is released under a dual licensing model. It is open-source with a GNU GPLv2 or GPLv3 license. A professional edition license that replaces GNU GPL is also available.

- URL:

-

Contact:

Fabien Spindler

-

Participants:

Éric Marchand, Fabien Spindler, François Chaumette

-

Partners:

Inria, Université de Rennes 1

6.1.4 DIARBENN

-

Name:

Obstacle avoidance through sensor-based servoing

-

Keywords:

Servoing, Shared control, Navigation

-

Functional Description:

DIARBENN's objective is to define an obstacle avoidance solution adapted to a mobile robot such as a powered wheelchair. Through shared control, the system progressively corrects the trajectory, only when necessary, as the wheelchair approaches an obstacle, while respecting the user's intention.

-

Contact:

Marie Babel

-

Partner:

INSA Rennes

6.2 New platforms

6.2.1 Robot Vision Platform

Participants: François Chaumette [contact], Alexandre Krupa [contact], Eric Marchand [contact], Fabien Spindler [contact].

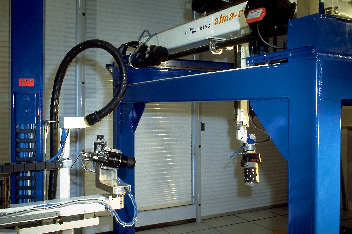

We exploit two industrial robotic systems built by Afma Robots in the nineties to validate our research in visual servoing and active vision. The first one is a 6 DoF Gantry robot, the other one is a 4 DoF cylindrical robot (see Fig. 2). These robots are equipped with monocular RGB cameras. The Gantry robot also allows mounting grippers on its end-effector. Attached to this platform, we can also find a collection of various RGB and RGB-D cameras used to validate vision-based real-time tracking algorithms.

In 2021, this platform was used to validate experimental results in 2 accepted publications 40, 16.

6.2.2 Mobile Robots

Participants: Marie Babel [contact], Solenne Fortun [contact], François Pasteau [contact], Julien Pettré [contact], Quentin Delamare [contact], Fabien Spindler [contact].

To validate our research on personally assisted living (see Section 7.4.4), we have three electric wheelchairs: one from Permobil, one from Sunrise and one from YouQ (see Fig. 3.a). Each wheelchair is controlled through a plug-and-play system inserted between the joystick and the low-level control of the wheelchair. This system lets us acquire the user's intention through the joystick position and control the wheelchair by applying corrections to its motion. The wheelchairs have been fitted with cameras, ultrasound sensors and time-of-flight sensors to perform the servoing required for assisting people with disabilities. A wheelchair haptic simulator completes this platform and is used to develop new human interaction strategies in a virtual reality environment (see Fig. 3.b).

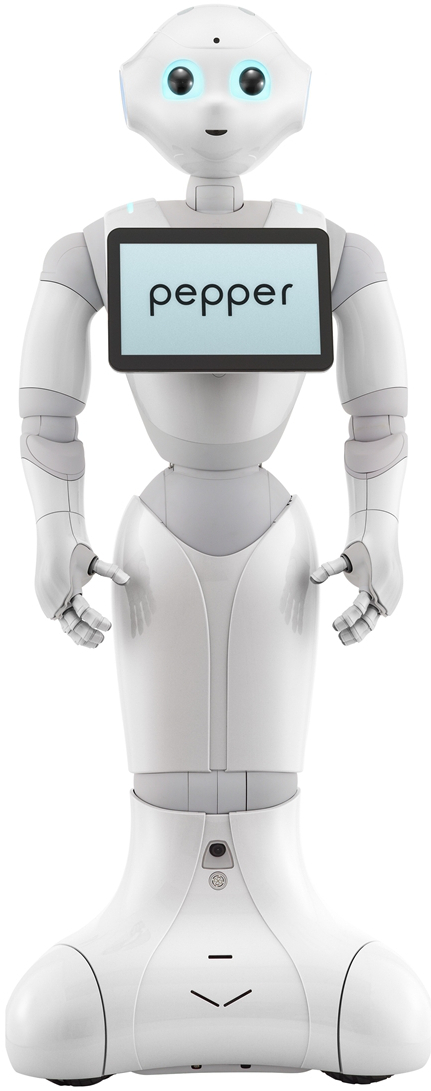

Pepper, a human-shaped robot designed by SoftBank Robotics to be a genuine day-to-day companion (see Fig. 3.c), is also part of this platform. It has 17 DoF mounted on a wheeled holonomic base and a set of sensors (cameras, laser, ultrasound, inertial, microphone) that make this platform interesting for studying robot-human interactions during locomotion and visual exploration strategies (Sect. 7.2.8).

Moreover, for fast prototyping of algorithms in perception, control and autonomous navigation, the team uses a Pioneer 3DX from Adept (see Fig. 3.d). This platform is equipped with various sensors needed for autonomous navigation and sensor-based control.

In 2021, these platforms were used to obtain experimental results presented in 3 papers 2, 7, 50.

Figure 3 panels: (a), (b), (c), (d).

6.2.3 Medical Robotic Platform

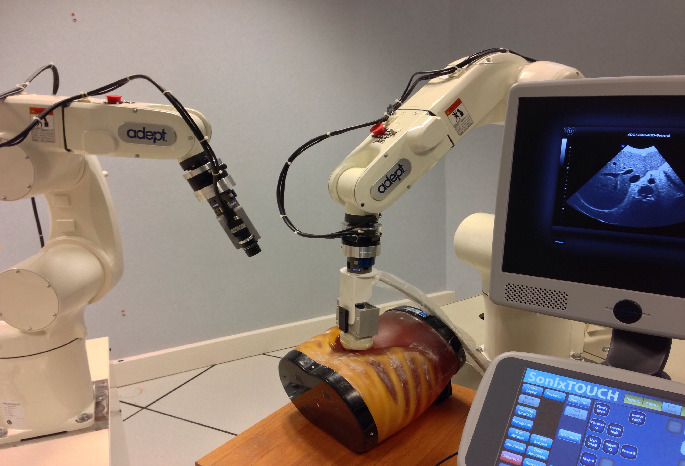

Participants: Alexandre Krupa [contact], Fabien Spindler [contact].

This platform is composed of two 6 DoF Adept Viper arms (see Figs. 4.a–b). Ultrasound probes connected either to a SonoSite 180 Plus or an Ultrasonix SonixTouch 2D and 3D imaging system can be mounted on a force/torque sensor attached to each robot end-effector. The haptic Virtuose 6D or Omega 6 device (see Fig. 7.a) can also be used with this platform.

This platform was extended with an ATI Nano43 force/torque sensor attached to one of the Viper arms, which makes it possible to perform needle insertion experiments.

This testbed is of primary interest for research and experiments on ultrasound visual servoing applied to probe positioning, soft tissue tracking, elastography or robotic needle insertion tasks (see Sect. 7.4.3). It can also be used to validate more classical tracking and visual servoing research.

In 2021, this platform was used to obtain experimental results presented in 2 papers 8, 48.

Figure 4 panels: (a), (b).

6.2.4 Advanced Manipulation Platform

Participants: Claudio Pacchierotti [contact], Paolo Robuffo Giordano [contact], Fabien Spindler [contact].

This platform is composed of two Panda lightweight arms from Franka Emika equipped with torque sensors in all seven axes. An electric gripper, a camera, a soft hand from qbrobotics or a Reflex TakkTile 2 gripper from RightHand Labs (see Fig. 5.b) can be mounted on the robot end-effector (see Fig. 5.a). A force/torque sensor from Alberobotics is also attached to the end-effector of one of the robots to improve precision during torque control. This setup is used to validate our research on coupling force and vision for controlling robot manipulators (see Section 7.2.11) and on shared control for remote manipulation (see Section 7.4.1). Other haptic devices (see Section 6.2.6) can also be coupled to this platform.

2 new papers 19, 26 published this year include experimental results obtained with this platform.

Figure 5 panels: (a), (b).

6.2.5 Unmanned Aerial Vehicles (UAVs)

Participants: Joudy Nader [contact], Paolo Robuffo Giordano [contact], Claudio Pacchierotti [contact], Fabien Spindler [contact].

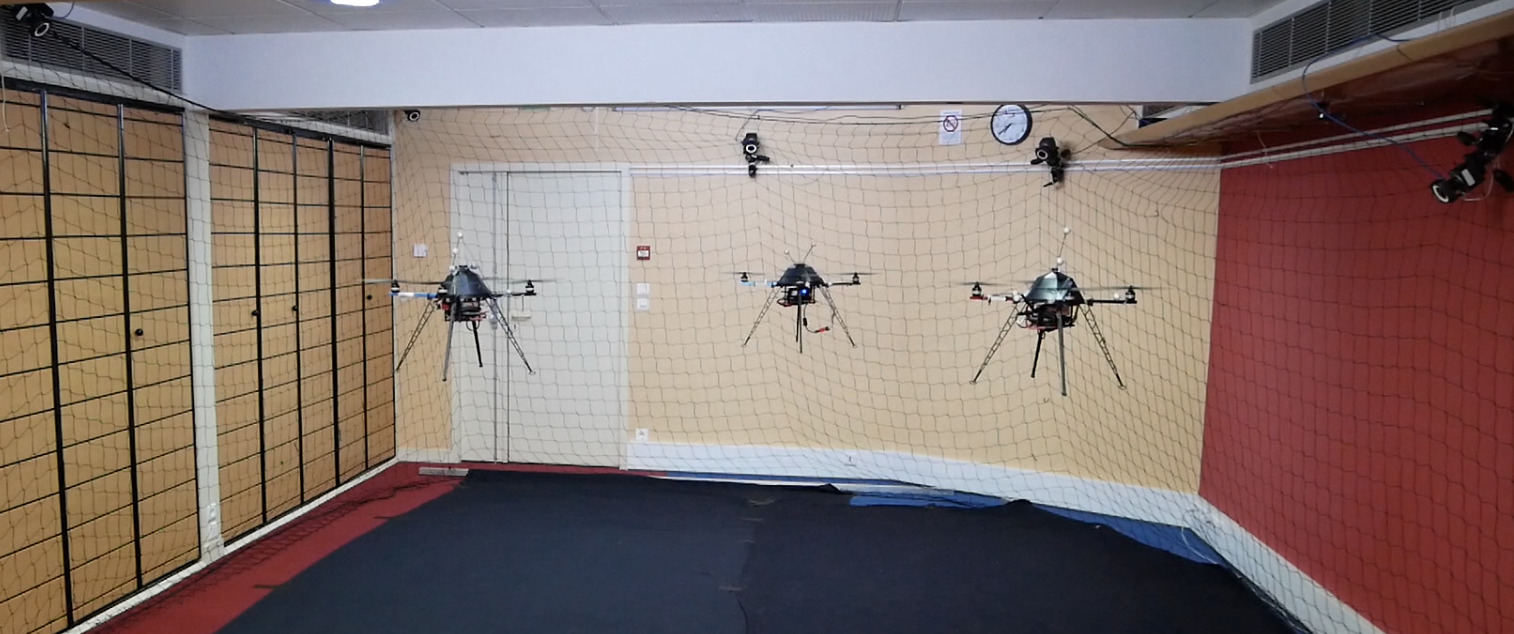

Rainbow is involved in several activities involving perception and control for single and multiple quadrotor UAVs. To this end, we exploit four quadrotors from Mikrokopter GmbH, Germany (see Fig. 6.a), and one quadrotor from 3DRobotics, USA (see Fig. 6.b). The Mikrokopter quadrotors have been heavily customized by: (i) reprogramming from scratch the low-level attitude controller running on the quadrotors' onboard microcontroller; (ii) equipping each quadrotor with an NVIDIA Jetson TX2 board running Ubuntu Linux and the TeleKyb-3 software, based on the genom3 framework developed at LAAS in Toulouse (the middleware used for managing the experiment flows and the communication among the UAVs and the base station); and (iii) purchasing new RealSense RGB-D cameras for visual odometry and visual servoing. The quadrotor group is used as a robotic platform for testing a number of single and multiple flight control schemes, with special attention to the use of onboard vision as the main sensory modality.

This year 3 papers 9, 7, 1 contain experimental results obtained with this platform.

Figure 6 panels: (a), (b).

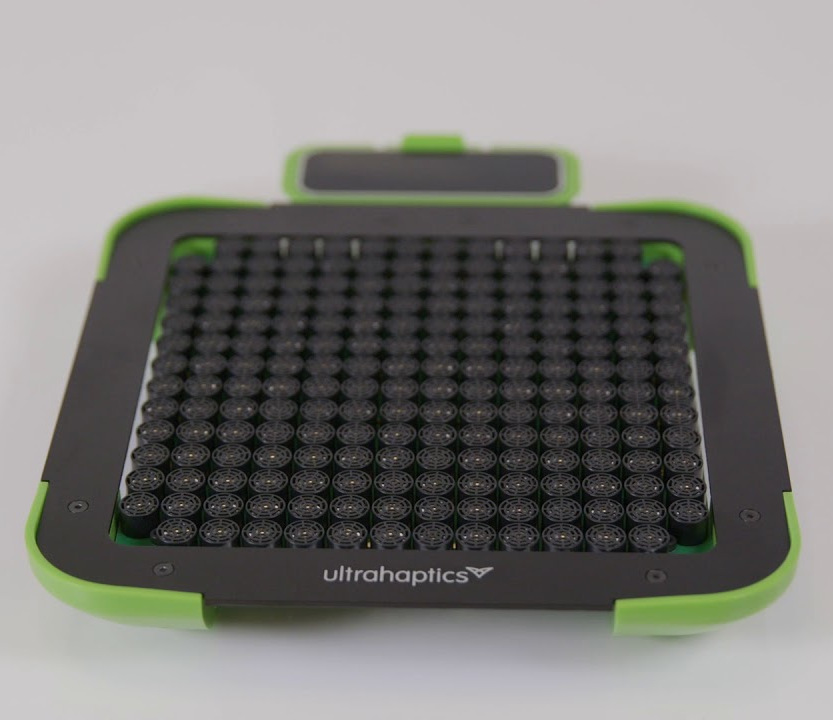

6.2.6 Haptics and Shared Control Platform

Participants: Claudio Pacchierotti [contact], Paolo Robuffo Giordano [contact], Fabien Spindler [contact].

Various haptic devices are used to validate our research in shared control. We have a Virtuose 6D device from Haption (see Fig. 7.a), which is used as the master device in many of our shared control activities (see, e.g., Section 7.4.1). It can also be coupled to the Haption haptic glove on loan from the University of Birmingham. An Omega 6 (see Fig. 7.b) from Force Dimension and devices on loan from Ultrahaptics complete this platform, which can be coupled to the other robotic platforms.

This platform was used to obtain experimental results presented in 6 papers 19, 8, 7, 42, 45 published this year.

Figure 7 panels: (a), (b), (c).

6.2.7 Portable immersive room

Participants: François Pasteau [contact], Fabien Grzeskowiak [contact], Marie Babel [contact].

To validate our research on assistive robotics and its applications in virtual conditions, we very recently acquired a portable immersive room designed to be easily deployed in different rehabilitation structures in order to conduct clinical trials. The system was designed by the company Trinoma and funded by the Interreg ADAPT project.

Figure panels: (a), (b).

7 New results

7.1 Optimal and Uncertainty-Aware Sensing

7.1.1 3D tracking of deformable objects from RGB-D data

Participants: Alexandre Krupa, Eric Marchand.

Within our research activities on deformable object tracking, this year we proposed a novel framework for tracking the deformation of soft objects using an RGB-D camera. It requires a coarse 3D mesh and a physical model of the object based on the Finite Element Method (FEM), whose parameters (Young's modulus, Poisson's ratio, etc.) do not need to be precise. The approach consists in minimizing both a geometric error and a direct photometric intensity error while relying on the co-rotational FEM as the underlying deformation model 48. The proposed method has been validated both on synthetic data with ground truth and on real data.

7.1.2 Trajectory Generation for Optimal State Estimation

Participants: Nicola De Carli, Gennaro Notomista, Claudio Pacchierotti, Paolo Robuffo Giordano.

This activity addresses the general problem of active sensing where the goal is to analyze and synthesize optimal trajectories for a robotic system that can maximize the amount of information gathered by (few) noisy outputs (i.e., sensor readings) while at the same time reducing the negative effects of the process/actuation noise. We have recently developed a general framework for solving online the active sensing problem by continuously replanning an optimal trajectory that maximizes a suitable norm of the Constructibility Gramian (CG) 64.
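
As a reminder of the quantity being optimized, the Constructibility Gramian is commonly written as below for a system with state q, output Jacobian C and state transition (sensitivity) matrix Φ. The weighting matrix W and the particular choice of norm are assumptions made here for illustration, since the text above only refers to "a suitable norm".

```latex
\[
\mathcal{G}_c(t_0, t_f) = \int_{t_0}^{t_f}
\Phi(\tau, t_f)^{\top}\, C(\tau)^{\top}\, W(\tau)\, C(\tau)\, \Phi(\tau, t_f)\, \mathrm{d}\tau,
\qquad
\Phi(\tau, t_f) = \frac{\partial q(\tau)}{\partial q(t_f)}
\]
```

One typical criterion is then to maximize the smallest eigenvalue of this matrix along the planned trajectory, which improves the worst-case information collected about the current state.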

In 36, we extended this framework to the problem of localization for a group of multiple robots that can obtain distance measurements and communicate only with local neighbors. We showed that, thanks to a proper change of coordinates, the CG for the multi-robot group can be computed in a decentralized way with only minor approximations. This allowed us to formulate an online and decentralized trajectory generation problem for optimal localization. As a case study, we considered the localization of a quadrotor group with noisy distance measurements and sensing constraints, and showed the effectiveness of the approach via a Monte Carlo simulation. We are now considering the case of bearing measurements (obtained from onboard cameras) and the associated constraints of limited field of view and possible occlusions. We are also working towards an experimental validation of the approach.

In 25, we instead considered a different active sensing problem that involves a single robot in an environmental monitoring task. The goal is to estimate some (possibly time-varying) parameters of a distributed scalar field representative of, e.g., a gas or other quantities in the atmosphere. The robot is equipped with a sensor able to locally measure the value of this field, and the estimation goal is to recover the (unknown) field parameters (e.g., location of the source, decay rate) by suitably planning an optimal trajectory. To this end, we formulated a trajectory optimization problem that maximizes a norm of the CG and also takes into account the energy level of the robot by modeling a battery whose discharge dynamics depend on the control effort. The results have been validated in simulation and are quite promising. We are now working on a multi-robot formulation of this problem.

7.1.3 Leveraging Multiple Environments for Learning and Decision Making

Participants: Maud Marchal, Thierry Gaugry, Antonin Bernardin.

Learning is usually performed by observing real robot executions. Physics-based simulators are a good alternative for providing highly valuable information while avoiding costly and potentially destructive robot executions. Within the Imagine project, we presented a novel approach for learning the probabilities of symbolic robot action outcomes. This is done by leveraging different environments, such as physics-based simulators, at execution time. To this end, we proposed MENID (Multiple Environment Noise Indeterministic Deictic) rules, a novel representation able to cope with the inherent uncertainties present in robotic tasks. MENID rules explicitly represent each possible outcome of an action, keep a record of the source of the experience, and maintain the probability of success of each outcome. We also introduced an algorithm to distribute actions among environments, based on previous experiences and expected gain. Before using physics-based simulations, we proposed a methodology for evaluating different simulation settings and determining the least time-consuming model that could be used while still producing coherent results. We demonstrated the validity of the approach in a dismantling use case, using a simulation with reduced quality as the simulated system, and a full-resolution simulation, in which we add noise to the trajectories and to some physical parameters, as a representation of the real system.

7.1.4 A Plane-based Approach for Indoor Point Clouds Registration

Participant: Eric Marchand.

Traditional 3D point cloud registration algorithms, based on Iterative Closest Point (ICP), rely on point matching between large point clouds. In well-structured environments, such as buildings, planes can be segmented and used for registration, similarly to the classical point-based ICP approach. Using planes drastically reduces the number of inputs. We proposed an efficient plane-based registration algorithm. The optimal transformation is estimated through a two-step approach that successively performs robust plane-to-plane minimization and non-linear robust point-to-plane registration 39, 57, 38. This work was done in cooperation with the IETR Lab.
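
One common way to write the two cost functions named above is sketched here. The plane parametrization (unit normal n and offset d, with points x on the plane satisfying nᵀx = d), the robust kernel ρ and all symbols are assumptions made for illustration; they are not the notation of 39, 57, 38.

```latex
\[
\text{plane-to-plane:}\quad
\min_{\mathbf{R},\,\mathbf{t}} \sum_{i}
\rho\!\left( \left\| \mathbf{R}\,\mathbf{n}_i - \mathbf{n}'_i \right\|^{2} \right)
+ \rho\!\left( \left( d_i + (\mathbf{R}\,\mathbf{n}_i)^{\top}\mathbf{t} - d'_i \right)^{2} \right)
\]
\[
\text{point-to-plane:}\quad
\min_{\mathbf{R},\,\mathbf{t}} \sum_{i} \sum_{j}
\rho\!\left( \left( \mathbf{n}'^{\top}_i (\mathbf{R}\,\mathbf{p}_{ij} + \mathbf{t}) - d'_i \right)^{2} \right)
\]
```

Under the rigid motion x' = Rx + t, a plane (n, d) maps to (Rn, d + (Rn)ᵀt), which is what the first cost compares against the matched plane (n', d'). The second cost then refines the estimate using the points p_ij of the source cloud that were segmented as belonging to plane i.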

7.1.5 Visual SLAM

Participant: Eric Marchand.

We proposed a novel visual SLAM method with dense planar reconstruction using a monocular camera: TT-SLAM. The method exploits planar template-based trackers (TT) to compute camera poses and reconstructs a multi-planar scene representation. Multiple homographies are estimated simultaneously by clustering a set of template trackers supported by superpixelized regions. Compared to our previous work (a RANSAC-based multiple-homography method), data association and keyframe selection issues are handled by the continuous nature of template trackers. A non-linear optimization process is applied to all the homographies to improve the precision of pose estimation. This work 53 was done in cooperation with the Mimetic team.

We also proposed a novel binary graph descriptor to improve loop detection for visual SLAM systems. Our contribution is twofold: i) a graph embedding technique for generating binary descriptors that preserve both spatial and histogram information extracted from images; ii) a generic means of combining multiple layers of heterogeneous data into the proposed binary graph descriptor, coupled with a matching and geometric checking method. We also introduce an implementation of our descriptor into an incremental Bag-of-Words (iBoW) structure that improves efficiency and scalability, and propose a method to interpret Deep Neural Network (DNN) results. This work 31 was done in cooperation with the Mimetic team.

7.1.6 Learn Offsets for robust 6DoF object pose estimation

Participants: Mathieu Gonzalez, Eric Marchand.

Estimating the 3D translation and orientation of an object is a challenging task that arises in augmented reality and robotic applications. In 16 we propose a novel approach to perform 6 DoF object pose estimation from a single RGB-D image in cluttered scenes. We adopt a hybrid two-stage pipeline, with a data-driven stage followed by a geometric stage. The data-driven stage consists of a classification CNN that estimates the 2D location of the object in the image from local patches, followed by a regression CNN trained to predict the 3D location of a set of keypoints in the camera coordinate system. We robustly perform local voting to recover the location of each keypoint in the camera coordinate system. To extract the pose information, the geometric stage aligns the 3D points in the camera coordinate system with the corresponding 3D points in the world coordinate system by minimizing a registration error, thus computing the pose.
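
The geometric stage described above is an instance of rigid 3D-3D registration. The text does not say which solver is used, so the following is only the standard least-squares formulation and its well-known closed-form SVD solution, with notation introduced here: X^w_i are the keypoints in the world frame, X^c_i their predicted locations in the camera frame, and barred quantities are centroids.

```latex
\[
\min_{\mathbf{R} \in SO(3),\ \mathbf{t} \in \mathbb{R}^{3}}
\sum_{i=1}^{N} \left\| \mathbf{X}^{c}_{i} - \left( \mathbf{R}\,\mathbf{X}^{w}_{i} + \mathbf{t} \right) \right\|^{2}
\]
\[
\mathbf{H} = \sum_{i=1}^{N}
(\mathbf{X}^{w}_{i} - \bar{\mathbf{X}}^{w})(\mathbf{X}^{c}_{i} - \bar{\mathbf{X}}^{c})^{\top}
= \mathbf{U}\,\boldsymbol{\Sigma}\,\mathbf{V}^{\top},
\qquad
\mathbf{R} = \mathbf{V}\,\mathrm{diag}\!\left(1,\, 1,\, \det(\mathbf{V}\mathbf{U}^{\top})\right)\mathbf{U}^{\top},
\qquad
\mathbf{t} = \bar{\mathbf{X}}^{c} - \mathbf{R}\,\bar{\mathbf{X}}^{w}
\]
```

The determinant term guards against returning a reflection instead of a rotation when the keypoints are noisy or nearly coplanar.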

7.2 Advanced Sensor-Based Control

7.2.1 Trajectory Generation for Minimum Closed-Loop State Sensitivity

Participants: Pascal Brault, Ali Srour, Quentin Delamare, Paolo Robuffo Giordano.

The goal of this research activity is to propose a new point of view in addressing the control of robots under parametric uncertainties: rather than striving to design a sophisticated controller with some robustness guarantees for a specific system, we propose to attain robustness (for any choice of the control action) by suitably shaping the reference motion trajectory so as to minimize the state sensitivity to parameter uncertainty of the resulting closed-loop system.

In 34 we proposed to couple the previously introduced "state sensitivity" metric with an "input sensitivity" metric, which allows us to obtain trajectories that, when perturbed, induce minimal changes in the control inputs and in the final tracking error. We applied this machinery to the case of a planar quadrotor. An off-the-shelf nonlinear optimization scheme was also employed to take (nonlinear) input constraints into account. A large statistical analysis was performed in simulation, showing the effectiveness of the approach in producing intrinsically robust motion plans. We are now working towards an implementation on a real quadrotor by considering offsets in the center of mass (CoM) as one of the main sources of uncertainty. We are also working on the combination of sensitivity and observability metrics to take into account both robustness and optimal state estimation when producing motion plans. Finally, we are studying how to formulate an optimization problem that can optimize both the trajectory and the control gains of a family of controllers to further improve the robustness of the generated trajectory.
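
To make the notion of closed-loop state sensitivity concrete, here is a minimal sketch on a toy scalar system, not the planar quadrotor of 34. The plant, the controller, the gains and the two candidate reference trajectories are all hypothetical choices made for illustration. The sketch integrates the sensitivity Π = ∂x/∂p alongside the state, so that the final sensitivity of different reference trajectories can be compared.

```python
import math


def simulate(p, p_nominal, gain, ref, ref_dot, t_final=2.0, dt=1e-3):
    """Euler-integrate x' = -p*x + u together with the sensitivity Pi = dx/dp.

    The controller u = p_nominal*r + r' + gain*(r - x) only knows the nominal
    parameter value p_nominal, while the plant uses the true value p.
    Returns the final state and the final sensitivity.
    """
    x, pi, t = ref(0.0), 0.0, 0.0  # start on the reference, Pi(0) = 0
    for _ in range(round(t_final / dt)):
        r, r_dot = ref(t), ref_dot(t)
        u = p_nominal * r + r_dot + gain * (r - x)
        x_dot = -p * x + u
        # Sensitivity dynamics: Pi' = (df/dx + df/du * du/dx) * Pi + df/dp
        pi_dot = (-p - gain) * pi - x
        x, pi, t = x + dt * x_dot, pi + dt * pi_dot, t + dt
    return x, pi


def final_sensitivity(ref, ref_dot, p_nominal=1.0, gain=5.0):
    """|Pi(t_final)| evaluated at the nominal parameter value."""
    _, pi = simulate(p_nominal, p_nominal, gain, ref, ref_dot)
    return abs(pi)


# Two hypothetical references that both go from r(0) = 0 to r(2) = 1.
def linear_ref(t):
    return 0.5 * t


def linear_ref_dot(t):
    return 0.5


def smooth_ref(t):
    return 0.5 * (1.0 - math.cos(math.pi * t / 2.0))


def smooth_ref_dot(t):
    return 0.25 * math.pi * math.sin(math.pi * t / 2.0)


if __name__ == "__main__":
    print("linear reference:", final_sensitivity(linear_ref, linear_ref_dot))
    print("smooth reference:", final_sensitivity(smooth_ref, smooth_ref_dot))
```

A trajectory optimizer in the spirit of the approach above would search over the parameters of the reference to minimize such a sensitivity norm; this sketch only evaluates it for two fixed candidates.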

7.2.2 Comfortable path generation for wheelchair navigation

Participants: Guillaume Vailland, Marie Babel.

In the case of non-holonomic robot navigation, path planning algorithms such as the Rapidly-exploring Random Tree (RRT) rarely provide feasible and smooth paths without additional processing. Furthermore, in a transport context such as power wheelchair navigation, user comfort should be a priority and should influence the path planning strategy.

We therefore proposed a local path planner that guarantees bounded curvature and continuous connections between piecewise cubic Bézier curves. To simulate and test this cubic Bézier local path planner, we developed a new RRT version (CBB-RRT*) that generates, on the fly, comfortable paths adapted to non-holonomic constraints 51.
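
The curvature of a cubic Bézier segment can be evaluated from its first and second derivatives, as in the sketch below. This is a numerical check by sampling, not the guarantee provided by the planner of 51; the function names and the example control points are hypothetical.

```python
import math


def bezier_derivatives(ctrl, s):
    """First and second derivatives of a planar cubic Bezier curve at s in [0, 1]."""
    p0, p1, p2, p3 = ctrl
    d1 = tuple(
        3 * (1 - s) ** 2 * (b - a) + 6 * (1 - s) * s * (c - b) + 3 * s ** 2 * (d - c)
        for a, b, c, d in zip(p0, p1, p2, p3)
    )
    d2 = tuple(
        6 * (1 - s) * (c - 2 * b + a) + 6 * s * (d - 2 * c + b)
        for a, b, c, d in zip(p0, p1, p2, p3)
    )
    return d1, d2


def curvature(ctrl, s):
    """Signed curvature (x'y'' - y'x'') / |(x', y')|^3 at parameter s.

    Undefined where the curve speed vanishes (e.g. coincident control points).
    """
    (dx, dy), (ddx, ddy) = bezier_derivatives(ctrl, s)
    speed = math.hypot(dx, dy)
    return (dx * ddy - dy * ddx) / speed ** 3


def max_abs_curvature(ctrl, samples=200):
    """Largest absolute curvature over uniformly sampled parameter values."""
    return max(abs(curvature(ctrl, i / samples)) for i in range(samples + 1))


def respects_curvature_bound(ctrl, kappa_max, samples=200):
    """Sampled check that the segment stays within the curvature bound."""
    return max_abs_curvature(ctrl, samples) <= kappa_max


if __name__ == "__main__":
    lane_change = [(0.0, 0.0), (1.0, 0.0), (2.0, 1.0), (3.0, 1.0)]
    print(max_abs_curvature(lane_change))
    print(respects_curvature_bound(lane_change, kappa_max=1.0))
```

In a sampling-based planner, a check of this kind would typically be applied to each candidate segment before it is added to the tree.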

7.2.3 UWB beacon navigation of assisted power wheelchair

Participants: Vincent Drevelle, Marie Babel, Eric Marchand, François Pasteau, Merwane Bouri.

Typical problems in robotics are those of perception of the environment and localization. Visual sensors are poorly adapted to the context of autonomous wheelchair navigation, both in terms of acceptability (intrusiveness) and in terms of adaptation to the wheelchair and overall cost.

New sensors based on Ultra Wide Band (UWB) radio technology are emerging, in particular for indoor localization and object tracking applications. This low-cost system measures distances between fixed beacons and a mobile sensor, yielding decimeter-level localization accuracy in the best case. We seek to exploit these sensors for the navigation of a wheelchair, despite the low accuracy of the measurements they provide.

The problem here lies in the definition of an autonomous or shared sensor-based control solution that fully exploits the notion of measurement uncertainty related to UWB beacons. By modeling the uncertain distance measurements as intervals, we aim to propagate these uncertainties to the computation of the velocities to be applied to the wheelchair. This will be done using set inversion and constraint propagation methods, which lead to the characterization of solutions in the form of sets.
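
The set-inversion idea can be illustrated on the localization part of the problem alone. The sketch below is a basic bisection-based set inversion (in the style of SIVIA) that encloses all 2D positions consistent with interval range measurements to fixed beacons. The beacon layout, the ±0.2 m measurement intervals and the function names are hypothetical, and the propagation of the resulting sets to wheelchair velocities is not covered.

```python
import math


def dist_range(box, beacon):
    """Exact [min, max] distance between a point beacon and an axis-aligned box."""
    (xlo, xhi), (ylo, yhi) = box
    bx, by = beacon
    dx_min = max(xlo - bx, 0.0, bx - xhi)
    dy_min = max(ylo - by, 0.0, by - yhi)
    dx_max = max(abs(xlo - bx), abs(xhi - bx))
    dy_max = max(abs(ylo - by), abs(yhi - by))
    return math.hypot(dx_min, dy_min), math.hypot(dx_max, dy_max)


def sivia(box, beacons, ranges, eps=0.05):
    """Return (inside, boundary) boxes enclosing the set of feasible positions.

    inside:   boxes proven consistent with every range interval
    boundary: undecided boxes whose sides are all smaller than eps
    """
    inside, boundary, stack = [], [], [box]
    while stack:
        b = stack.pop()
        certainly_in, certainly_out = True, False
        for beacon, (lo, hi) in zip(beacons, ranges):
            dmin, dmax = dist_range(b, beacon)
            if dmax < lo or dmin > hi:       # box misses this annulus entirely
                certainly_out = True
                break
            if not (lo <= dmin and dmax <= hi):
                certainly_in = False         # box is only partly in this annulus
        if certainly_out:
            continue
        if certainly_in:
            inside.append(b)
            continue
        (xlo, xhi), (ylo, yhi) = b
        if max(xhi - xlo, yhi - ylo) < eps:
            boundary.append(b)
        elif xhi - xlo >= yhi - ylo:         # bisect along the longest side
            xm = 0.5 * (xlo + xhi)
            stack += [((xlo, xm), (ylo, yhi)), ((xm, xhi), (ylo, yhi))]
        else:
            ym = 0.5 * (ylo + yhi)
            stack += [((xlo, xhi), (ylo, ym)), ((xlo, xhi), (ym, yhi))]
    return inside, boundary


# Hypothetical setup: three beacons, true position (4, 3), ranges known to +/- 0.2 m.
beacons = [(0.0, 0.0), (10.0, 0.0), (0.0, 10.0)]
true_position = (4.0, 3.0)
ranges = []
for bx, by in beacons:
    d = math.hypot(true_position[0] - bx, true_position[1] - by)
    ranges.append((d - 0.2, d + 0.2))

inside, boundary = sivia(((0.0, 10.0), (0.0, 10.0)), beacons, ranges)

if __name__ == "__main__":
    print(len(inside), "inner boxes,", len(boundary), "boundary boxes")
```

The union of the returned boxes is guaranteed to contain every position compatible with the intervals, which is the property that makes set-membership methods attractive when measurements are coarse but bounded.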

7.2.4 Visual Servoing for Cable-Driven Parallel Robots

Participant: François Chaumette.

This study was done in collaboration with IRT Jules Verne (Zane Zake, Nicolo Pedemonte) and LS2N (Stéphane Caro) in Nantes (see Section 8.2). It was devoted to the analysis of the robustness of visual servoing to modeling and calibration errors for cable-driven parallel robots. Zane Zake defended her PhD in February, and her earlier work on pose estimation, control workspace, and tension management was published this year 55, 54, 56.

7.2.5 Singularities in visual servoing

Participant: François Chaumette.

This study is done in the scope of the ANR Sesame project (see Section 9.3).

We have performed a complete theoretical study of the singularities of image-based visual servoing and pose estimation (PnP problem) from the observation of four image points. Highly original results were obtained. In particular, it was shown that 2 to 6 camera positions correspond to singularities for a general configuration of 4 non-coplanar points, whereas it was previously wrongly believed that no singularities occur for such a configuration 4.

7.2.6 Multi-sensor-based control for accurate and safe assembly

Participants: John Thomas, François Chaumette.

This study is done in the scope of the BPI Lichie project (see Section 9.3). Its goal is to design sensor-based control strategies coupling vision and proximetry data to ensure precise positioning while avoiding obstacles in dense environments. The targeted application is the assembly of satellite parts.

7.2.7 Visual servo of a satellite constellation

Participants: Maxime Robic, Eric Marchand, François Chaumette.

This study is also done in the scope of the BPI Lichie project (see Section 9.3). Its goal is to control the orientation of a satellite constellation using a camera mounted on each satellite in order to track particular objects on the ground. We are studying new control laws compatible with the control of the satellites.

7.2.8 Visual Exploration of an Indoor Environment

Participants: Thibault Noël, Eric Marchand, François Chaumette.

This study is done in collaboration with the Creative company in Rennes (see Section 7.2.8). It is devoted to the exploration of indoor environments by a mobile robot, typically Pepper (see Section 6.2.2), for a complete and accurate reconstruction of the environment.

7.2.9 Model-Based Deformation Servoing of Soft Objects

Participants: Fouad Makiyeh, Alexandre Krupa, Maud Marchal, François Chaumette.

This study takes place in the context of the GentleMAN project (see Section 9.1.3). The objective is to develop a new visual servoing approach that controls the shape of an object towards a desired deformation. This year we developed a new control approach that relies on a coarse model of the soft object to be manipulated. This model is composed of a 3D mesh, and we chose to represent the mechanical behavior of the object using a mass-spring model because it provides real-time capability. We derived an analytical expression of a new controller that allows us to indirectly move any feature point of the soft object to a desired 3D position by acting with the end-effector of a robot on a distant manipulated point. This controller was implemented in an eye-to-hand visual servoing scheme using an RGB-D camera, and the approach was tested on several soft objects with different geometries and materials. The experimental results demonstrated that this approach can accurately position a feature point belonging to a soft object at a desired 3D location even though it is based on a model that only approximates the physical behavior of the real object. This work has recently been submitted to the special issue of IEEE RA-L devoted to robotic handling of deformable objects.

7.2.10 Manipulation of a deformable wire by two UAVs

Participants: Lev Smolentsev, Alexandre Krupa, François Chaumette.

This study takes place in the context of the CominLabs MAMBO project (see Section 9.4). Its main objective is the development of a vision-based control framework for performing autonomous manipulation of a deformable wire attached between two UAVs using data provided by onboard RGB-D cameras. To this end, we have developed a visual servoing approach that uses as visual features the coefficients of a parabolic curve representing the shape of the wire, and we analytically derived the interaction matrix that relates the variations of these features to the RGB-D camera displacement. Preliminary results obtained from experiments using an eye-to-hand RGB-D camera observing a wire with one extremity attached to a 6-DoF robotic arm validated the modelling and the design of a control law that automatically brings the wire to a desired shape configuration.
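
As an illustration of how parabola coefficients can serve as visual features, the sketch below fits z = a·x² + b·x + c to sampled wire points by least squares and forms the feature error with respect to a desired shape. It assumes, for simplicity, that the wire points have already been expressed in the vertical plane containing the wire; the function names and sample values are hypothetical, and the interaction matrix derived in the study is not reproduced.

```python
def det3(m):
    """Determinant of a 3x3 matrix given as nested lists."""
    return (m[0][0] * (m[1][1] * m[2][2] - m[1][2] * m[2][1])
            - m[0][1] * (m[1][0] * m[2][2] - m[1][2] * m[2][0])
            + m[0][2] * (m[1][0] * m[2][1] - m[1][1] * m[2][0]))


def fit_parabola(points):
    """Least-squares fit of z = a*x**2 + b*x + c to (x, z) samples.

    Solves the 3x3 normal equations with Cramer's rule and returns (a, b, c).
    """
    s = [sum(x ** k for x, _ in points) for k in range(5)]      # s[k] = sum of x^k
    t = [sum(z * x ** k for x, z in points) for k in range(3)]  # t[k] = sum of z*x^k
    normal = [[s[4], s[3], s[2]],
              [s[3], s[2], s[1]],
              [s[2], s[1], s[0]]]
    rhs = [t[2], t[1], t[0]]
    d = det3(normal)
    if abs(d) < 1e-12:
        raise ValueError("need at least three samples with distinct x values")
    coeffs = []
    for col in range(3):
        replaced = [row[:] for row in normal]
        for r in range(3):
            replaced[r][col] = rhs[r]
        coeffs.append(det3(replaced) / d)
    return tuple(coeffs)


def feature_error(current, desired):
    """Visual feature error e = s - s* on the parabola coefficients."""
    return tuple(c - d for c, d in zip(current, desired))


if __name__ == "__main__":
    # Hypothetical wire samples (x, z) in metres, e.g. extracted from an RGB-D point cloud.
    wire = [(-0.4, 0.18), (-0.2, 0.06), (0.0, 0.02), (0.2, 0.05), (0.4, 0.17)]
    features = fit_parabola(wire)
    desired = (0.5, 0.0, 0.0)
    print(features, feature_error(features, desired))
```

In a visual servoing loop, this error would be driven to zero by a control law built on the interaction matrix of the coefficients.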

7.2.11 Coupling Force and Vision for Controlling Robot Manipulators

Participants: Alexander Oliva, François Chaumette, Paolo Robuffo Giordano.

The goal of this activity is to couple visual and force information for advanced manipulation tasks. To this end, we are exploiting the recently acquired Panda robot (see Sect. 6.2.4), a state-of-the-art 7-DoF manipulator arm with torque sensing in the joints and the possibility to command torques at the joints or forces at the end-effector. The use of vision with torque-controlled robots is limited by several issues, among which the difficulty of fusing low-rate images (about 30 Hz) with high-rate torque commands (about 1 kHz), the delays caused by image processing and tracking algorithms, and the unavoidable occlusions that arise when the end-effector needs to approach an object to be grasped.

In this context we have proposed a general framework for combining force and visual information directly in the visual feature space, by reformulating and unifying the classical admittance control law in the image space. The proposed visual/force control framework has been extensively evaluated via numerous experiments performed on the Panda robot in peg-in-hole tasks where both the pose and the exchanged forces could be regulated with high accuracy and good stability 26. We have recently considered the case of visual/force control for moving targets by exploiting a Kalman filter that can estimate the target state and provide this information to the control loop. To speed up prototyping of these developments, we have also developed a realistic dynamic simulator of the Franka robot called "FrankaSim", which has been publicly released.
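
The rate mismatch mentioned above (images at about 30 Hz, control at about 1 kHz) is one reason a Kalman filter is convenient: it can be propagated at every control tick and corrected only when an image arrives. The sketch below shows this on a one-dimensional constant-velocity target. The motion model, the noise values and the noise-free measurements are assumptions made for illustration and do not describe the filter actually used in this work.

```python
class ConstantVelocityKF:
    """1-D constant-velocity Kalman filter with state [position, velocity]."""

    def __init__(self, q=1e-2, r=1e-4):
        self.pos, self.vel = 0.0, 0.0
        self.p00, self.p01, self.p11 = 1.0, 0.0, 1.0  # symmetric 2x2 covariance
        self.q, self.r = q, r  # process noise intensity, measurement variance

    def predict(self, dt):
        """Propagate state and covariance over dt (called at the control rate)."""
        self.pos += dt * self.vel
        p00 = self.p00 + 2.0 * dt * self.p01 + dt * dt * self.p11
        p01 = self.p01 + dt * self.p11
        # White-acceleration process noise added to F P F^T.
        self.p00 = p00 + self.q * dt ** 3 / 3.0
        self.p01 = p01 + self.q * dt ** 2 / 2.0
        self.p11 = self.p11 + self.q * dt

    def update(self, z):
        """Correct with a position measurement z (called when an image arrives)."""
        s = self.p00 + self.r
        k0, k1 = self.p00 / s, self.p01 / s
        innovation = z - self.pos
        self.pos += k0 * innovation
        self.vel += k1 * innovation
        p00, p01, p11 = self.p00, self.p01, self.p11
        self.p00 = (1.0 - k0) * p00
        self.p01 = (1.0 - k0) * p01
        self.p11 = p11 - k1 * p01


CONTROL_DT = 1e-3        # about 1 kHz control loop
TICKS_PER_IMAGE = 33     # about 30 Hz camera
TRUE_VELOCITY = 0.1      # hypothetical target velocity in m/s

kf = ConstantVelocityKF()
for k in range(1, 3001):
    kf.predict(CONTROL_DT)
    if k % TICKS_PER_IMAGE == 0:
        kf.update(TRUE_VELOCITY * k * CONTROL_DT)  # noise-free measurement

if __name__ == "__main__":
    print(kf.pos, kf.vel)
```

Between two images, the control loop can use the predicted target state, which also helps bridge short occlusions.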

7.2.12 End-to-end deep visual servoing

Participants: Eric Marchand, Samuel Felton.

We proposed a deep architecture and the associated learning strategy for end-to-end direct visual servoing. The approach sequentially predicts the velocity of a camera mounted on the robot's end-effector for positioning tasks. Positioning is achieved with high precision despite large initial errors in both Cartesian and image spaces. Training is done entirely in simulation, alleviating the burden of data collection. We demonstrate the efficiency of our method in experiments in both simulated and real-world environments. We also show that the proposed approach is able to handle multiple scenes. This work 40 is done in collaboration with the Lacodam team.

We also proposed a new framework to perform visual servoing (VS) in the latent space learned by a convolutional autoencoder. We show that this latent space avoids explicit feature extraction and tracking issues and provides a good representation, smoothing the cost function of the VS process. In addition, our experiments show that this unsupervised learning approach allows us to obtain, without labelling cost, accurate final positioning, often on par with the best direct visual servoing (DVS) methods in terms of accuracy but with a larger convergence area. This work 15 is done in collaboration with the Lacodam team.

7.3 Haptic Cueing for Robotic Applications

7.3.1 Wearable Haptics Systems Design

Participants: Claudio Pacchierotti, Maud Marchal, Thomas Howard, Xavier de Tinguy.

We have been working on wearable haptics for a few years now, from both the hardware (design of interfaces) and software (rendering and interaction techniques) points of view.

In 3, we present an approach for automatically adapting the hardware design of a wearable haptic interface for a given user. We analyze the performance of a 3-DoF fingertip cutaneous device as a function of its main geometrical dimensions. Then, starting from the user's fingertip characteristics, we define a numerical procedure that best adapts the dimensions of the device to (i) maximize the range of renderable haptic stimuli, (ii) avoid unwanted contacts between the device and the skin, (iii) avoid singular configurations, and (iv) minimize the device encumbrance and weight. Together with the mechanical analysis and evaluation of the adapted design, we present a MATLAB script that calculates the device dimensions customized for a target fingertip as well as an online CAD utility for generating a ready-to-print STL file of the personalized design.

One of the main issues when designing haptic systems for the fingertip is their tracking, especially when interacting with tangible/real objects at the same time. In this respect, in 14, we combined tracking information from a tangible object instrumented with capacitive sensors and an optical tracking system, to improve contact rendering when interacting with tangibles in Virtual Reality. Combining capacitive sensing with optical tracking significantly improves the visuohaptic synchronization and immersion of the experience, which is promising for haptic-enabled interaction with tangible environments.

Finally, in the framework of the H2020 project TACTILITY, we are working on designing interaction and rendering techniques for wearable electrotactile interfaces. In this respect, we proposed the use of electrotactile feedback to render the interpenetration distance between the user's finger and the virtual content being touched 52. The approach consists of modulating the perceived intensity of the electrotactile stimuli (through frequency and pulse-width modulation) according to the registered interpenetration distance. We assessed the performance of four different interpenetration feedback approaches: electrotactile-only, visual-only, electrotactile and visual, and no interpenetration feedback. Results suggest that electrotactile feedback could be an efficient replacement for visual feedback for enhancing contact information in virtual reality, avoiding the need for active visual focus and the rendering of additional visual artefacts.
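
The modulation principle can be sketched as a clamped mapping from interpenetration distance to stimulation parameters. The linear shape of the mapping, the function name and every numeric range below are hypothetical placeholders chosen for illustration; they are neither the values used in 52 nor calibrated stimulation settings.

```python
def electrotactile_command(depth_mm, max_depth_mm=10.0,
                           pulse_width_us=(100.0, 500.0),
                           frequency_hz=(30.0, 200.0)):
    """Map an interpenetration depth to a (pulse width, frequency) pair.

    The depth is normalized to [0, 1] and clamped, then both parameters are
    interpolated linearly between their (hypothetical) minimum and maximum.
    """
    ratio = min(max(depth_mm / max_depth_mm, 0.0), 1.0)
    width = pulse_width_us[0] + ratio * (pulse_width_us[1] - pulse_width_us[0])
    frequency = frequency_hz[0] + ratio * (frequency_hz[1] - frequency_hz[0])
    return width, frequency


if __name__ == "__main__":
    for depth in (0.0, 2.5, 5.0, 12.0):
        print(depth, electrotactile_command(depth))
```

In practice the usable ranges depend on each user's sensation and discomfort thresholds, so a per-user calibration step would normally set the bounds.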

7.3.2 Mid-Air Haptic Feedback

Participants: Claudio Pacchierotti, Thomas Howard, Guillaume Gicquel, Maud Marchal.

In the framework of H2020 projects H-Reality and E-TEXTURE, we are working to develop novel mid-air haptics paradigms that can convey the information spectrum of touch sensations in the real world, motivating the need to develop new, natural interaction techniques.

In 45, we developed DOLPHIN, an open-source framework to aid in designing mid-air stimuli. It allows the study of the impact of rendering parameters on perceived stimulus properties. This platform-agnostic framework standardizes stimulus descriptions as a step toward more replicability and easier communication in the field. It enables reproduction of stimuli between perceptual experiments and ensures that stimuli used in applications correspond to those evaluated in prior perceptual studies.

In 62, we kept studying the perceptual aspects of ultrasound haptic stimulation, investigating the influence of the rendering sampling strategies on a user's ability to differentiate arc curvatures.

7.3.3 Encountered-Type Haptic Devices

Participants: Maud Marchal, Thomas Howard.

Encountered-Type Haptic Displays (ETHDs) provide haptic feedback by positioning a tangible surface for the user to encounter. This allows users to freely elicit haptic feedback with a surface during a virtual simulation. ETHDs differ from most of current haptic devices which rely on an actuator always in contact with the user. In 23, we intend to describe and analyze the different research efforts carried out in this field. In addition, we analyze ETHD literature concerning definitions, history, hardware, haptic perception processes involved, interactions and applications. The paper proposes a formal definition of ETHDs, a taxonomy for classifying hardware types, and an analysis of haptic feedback used in literature. Taken together the overview of this survey intends to encourage future work in the ETHD field.

In 22, we propose an example of ETHD that takes a step toward an infinite-surface haptic display. Our approach, named ENcountered-Type ROtating Prop Approach (ENTROPiA), is based on a cylindrical spinning prop attached to a robot's end-effector and serving as an ETHD. This type of haptic display gives users unconstrained, free-hand contact with a surface that a robotic device positions for them to encounter. In our approach, the sensation of touching a virtual surface is produced by an interaction technique coupled with the sliding movement of the prop under the users' fingers, which tracks their hand location and establishes a path to be explored. This approach enables large motions for rendering larger surfaces, permits multi-textured haptic feedback, and extends the ETHD approach with large motion and sliding/friction sensations. As part of our contribution, we designed a proof of concept to illustrate the approach. A user study conducted to assess its perception showed significant performance in rendering the sensation of touching a large flat surface. Our approach could be used to render large haptic surfaces in applications such as rapid prototyping for automobile design.

In 44, we propose a novel haptic paradigm for object manipulation in 3D immersive VR. It uses a robotic manipulator to move tangible objects in its workspace such that they match the pose of virtual objects to be interacted with. Users can then naturally touch, grasp and manipulate a virtual object while feeling congruent and realistic haptic feedback from the tangible proxy. The tangible proxies can detach from the robot, allowing natural and unconstrained manipulation in the 3D virtual environment. When a manipulated virtual object comes into contact with the virtual environment, the robotic manipulator acts as an encounter-type haptic display, positioning itself so as to render reaction forces of the environment onto the manipulated physical object.

7.3.4 Multimodal Cutaneous Haptics to Assist Navigation

Participants: Louise Devigne, Marco Aggravi, Inès Lacôte, Pierre-Antoine Cabaret, François Pasteau, Maud Marchal, Claudio Pacchierotti, Marie Babel.

Within the Inria Challenge project DORNELL, we became interested in using cutaneous haptics to aid the navigation of people with sensory disabilities. In particular, we investigated the ability of vibrotactile sensations and tap stimulations to convey haptic motion illusions 43 in a handle-like device. We also evaluated the capability of vibrotactile feedback to render spatialized impacts with external (virtual) objects.

7.4 Shared Control Architectures

7.4.1 Shared Control for Remote Manipulation

Participants: Paolo Robuffo Giordano, Claudio Pacchierotti, Rahaf Rahal, Raul Fernandez Fernandez.

As teleoperation systems become more sophisticated and flexible, the environments and applications where they can be employed become less structured and predictable. This desirable evolution toward more challenging robotic tasks requires an increasing degree of training, skills, and concentration from the human operator. In this respect, shared control algorithms have been investigated as one of the main tools to design complex but intuitive robotic teleoperation systems, helping operators carry out increasingly difficult robotic applications such as assisted vehicle navigation, surgical robotics, brain-computer interface manipulation, and rehabilitation. Indeed, this approach makes it possible to share the available degrees of freedom of the robotic system between the operator and an autonomous controller.

Along this general line of research, we made the following contributions this year:

- in 24 we presented an adaptive impedance control architecture for robotic teleoperation of contact tasks featuring continuous interaction with the environment. We used Learning from Demonstration (LfD) as a framework to learn variable stiffness control policies. Then, the learnt state-varying stiffness was used to command the remote manipulator, so as to adapt its interaction with the environment based on the sensed forces. The proposed system only relies on the on-board torque sensors of a commercial robotic manipulator and it does not require any additional hardware or user input for the estimation of the required stiffness. We also provide a passivity analysis of our system, where the concept of energy tanks is used to guarantee a stable behavior. Finally, the system was evaluated in a representative teleoperated cutting application. Results showed that the proposed variable-stiffness approach outperforms two standard constant-stiffness approaches in terms of safety and robot tracking performance.

- in 19 we focused on robotic manipulation of fragile, compliant objects, such as food items. In particular we developed a haptic-based, Learning from Demonstration (LfD) policy that enables pre-trained autonomous grasping of food items using an anthropomorphic robotic system. The policy combines data from teleoperation and direct human manipulation of objects, embodying human intent and interaction areas of significance. We evaluated the proposed solution against a recent state-of-the-art LfD policy as well as against two standard impedance controller techniques. The results showed that the proposed policy performs significantly better than the other considered techniques, leading to high grasping success rates while guaranteeing the integrity of the food at hand.

- in 5 we proposed a shared-control approach for robot manipulators transporting an object on a tray. Unlike many existing studies about remotely operated robots with firm grasping capabilities, we considered the case in which, in principle, the object can break its contact with the robot end-effector. The proposed shared-control approach automatically regulates the remote robot motion, commanded by the user, and the end-effector orientation to prevent the object from sliding over the tray. Furthermore, the human operator is provided with haptic cues informing about the discrepancy between the commanded and executed robot motion, assisting the operator throughout the task execution. We carried out several experiments and user studies employing a 7-DOF torque-controlled manipulator. In all experiments, the results clearly show that our control approach outperforms the other solutions in terms of sliding prevention, robustness, command tracking, and user preference.
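To make the sliding-prevention idea of the last contribution more concrete, the sketch below works through a simplified planar case using a standard Coulomb friction model. It is not the controller of 5: the one-dimensional setting, the function names, the friction coefficient, and the safety margin are all assumptions chosen for illustration.

```python
import math

G = 9.81  # gravitational acceleration in m/s^2


def slides_on_level_tray(accel, mu):
    """True if an object on a level tray would slide.

    With Coulomb friction, the object stays put only while the
    required tangential force m*|a| does not exceed mu*m*g.
    """
    return abs(accel) > mu * G


def clamp_accel(accel, mu, margin=0.9):
    """Limit the commanded acceleration to stay inside the friction cone.

    `margin` (hypothetical) keeps a safety distance from the sliding limit.
    """
    limit = margin * mu * G
    return max(-limit, min(limit, accel))


def compensating_tilt(accel):
    """Tray tilt (rad) that aligns the tray normal with the contact force.

    The required contact force is m*(a, g); tilting the tray by
    atan2(a, g) makes that force purely normal, so no friction is needed.
    """
    return math.atan2(accel, G)


if __name__ == "__main__":
    commanded = 5.0  # m/s^2, operator command (example value)
    mu = 0.3         # assumed friction coefficient
    print(slides_on_level_tray(commanded, mu))
    print(clamp_accel(commanded, mu))
    print(math.degrees(compensating_tilt(commanded)))
```

The two strategies shown, limiting the commanded motion and reorienting the tray, correspond to the two quantities that the text says are regulated: the robot motion and the end-effector orientation.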

7.4.2 Shared Control for Multiple Robots

Participants: Marco Aggravi, Paolo Robuffo Giordano, Claudio Pacchierotti.

Following our previous works on flexible formation control of multiple robots with global requirements, in particular connectivity maintenance, in 9, 7, 1 we presented a decentralized haptic-enabled connectivity-maintenance control framework for heterogeneous human-robot teams. The proposed framework controls the coordinated motion of a team consisting of mobile robots and one human, for collaboratively achieving various exploration and search-and-rescue (SAR) tasks. The human user physically becomes part of the team, moving in the same environment as the robots, while receiving rich haptic feedback about the team connectivity and the direction toward a safe path. We carried out two human subject studies, in both simulated and real environments. The results showed that the proposed approach is effective and viable in a wide range of SAR scenarios. Moreover, providing haptic feedback led to increased performance with respect to providing visual information only. Finally, conveying distinct feedback regarding the team connectivity and the path to follow performed better than providing the same information combined together.
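The property that such a framework maintains can be illustrated with a minimal check of whether a team is connected. The sketch below assumes a simple disk communication model (two agents are linked when they are within `comm_range` of each other) and uses a centralized breadth-first search; it only tests connectivity and is not the decentralized controller presented in 9, 7, 1. The function name and the range value are hypothetical.

```python
import math
from collections import deque


def is_connected(positions, comm_range):
    """Check whether the team communication graph is connected.

    positions: list of (x, y) tuples for the human and the robots.
    comm_range: maximum distance at which two agents can communicate
                (assumed disk model).
    """
    n = len(positions)
    if n == 0:
        return True
    visited = {0}
    queue = deque([0])
    # Breadth-first search over the implicit proximity graph.
    while queue:
        i = queue.popleft()
        for j in range(n):
            if j not in visited and math.dist(positions[i], positions[j]) <= comm_range:
                visited.add(j)
                queue.append(j)
    return len(visited) == n


if __name__ == "__main__":
    team = [(0.0, 0.0), (1.0, 0.0), (2.0, 0.0)]  # human first, then robots
    print(is_connected(team, comm_range=1.5))
    team[2] = (5.0, 0.0)  # one robot wanders off
    print(is_connected(team, comm_range=1.5))
```

A connectivity-maintenance controller acts before this check would fail, for example by slowing down an agent or cueing the human when a critical link is about to break.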

7.4.3 Shared Control of Flexible Needles

Participants: Marco Aggravi, Claudio Pacchierotti, Alexandre Krupa.

We proposed a shared-control strategy in which the user is only in charge of teleoperating the desired needle tip position, directly and intuitively in the 3D ultrasound image, through a haptic interface. In this approach, an autonomous "low level" controller based on visual servoing using 3D ultrasound images handles the complexity of the 6-DOF motion that must be applied to the needle base to reach the desired needle tip position. We also proposed, within this shared-control strategy, to assist the user's 3D navigation through kinesthetic stimulation, by increasing the stiffness of the haptic device in the direction orthogonal to the one pointing to the anatomical target, and to provide the user with feedback on the needle tip cutting force. This force is obtained by subtracting an estimate of the friction force acting along the needle shaft from the total force measured at the base of the needle by a force sensor 8. To obtain a real-time estimate, we proposed a method that relies on the deformation of the needle shaft, which is automatically tracked in 3D ultrasound. We then provided the user with both the stimulation for 3D navigation assistance toward the target and the cutting force feedback, using the grounded haptic interface and a wearable cutaneous interface on the user's forearm. We carried out a human subject study to validate the insertion system in a gelatine phantom and compare seven different feedback techniques. The best performance was registered when providing navigation cues through kinesthetic feedback and needle tip cutting force through cutaneous vibrotactile feedback.

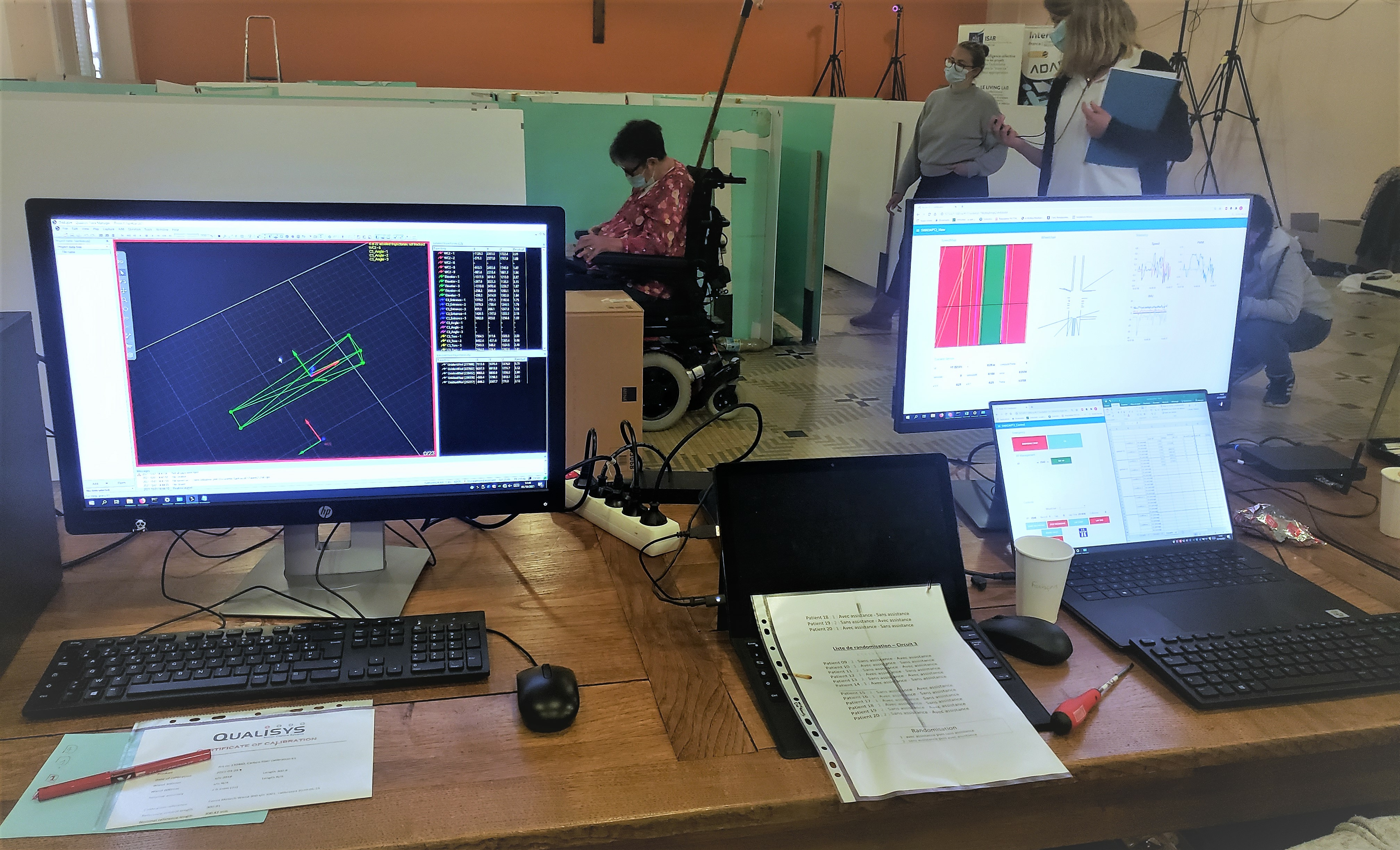
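The two feedback quantities described above can be sketched as follows. The cutting force follows the subtraction stated in the text; the guidance force is modeled here as a virtual spring acting only on the component of the handle displacement orthogonal to the target direction. The function names, the spring model, and the example values are assumptions for illustration, not the implementation of 8.

```python
import math


def tip_cutting_force(base_force_n, friction_estimate_n):
    """Cutting force at the tip: total base force minus shaft friction."""
    return base_force_n - friction_estimate_n


def guidance_force(displacement, target_dir, stiffness):
    """Restoring force resisting motion orthogonal to the target direction.

    displacement: handle displacement (x, y, z), hypothetical reference frame.
    target_dir:   vector pointing toward the anatomical target (non-zero).
    stiffness:    virtual spring stiffness (assumed value, N/m).
    """
    norm = math.sqrt(sum(c * c for c in target_dir))
    unit = [c / norm for c in target_dir]
    # Component of the displacement along the target direction.
    along = sum(d * u for d, u in zip(displacement, unit))
    # Remove it to keep only the orthogonal part.
    orthogonal = [d - along * u for d, u in zip(displacement, unit)]
    # Spring force pulls the handle back toward the target axis.
    return [-stiffness * o for o in orthogonal]


if __name__ == "__main__":
    print(tip_cutting_force(2.5, 1.0))
    print(guidance_force([1.0, 2.0, 0.0], [1.0, 0.0, 0.0], 100.0))
```

Motion along the target direction produces no resistance in this sketch, so the user remains free to advance the needle while being guided laterally.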

7.4.4 Shared Control of a Wheelchair for Navigation Assistance

Participants: Louise Devigne, François Pasteau, Marie Babel.

Power wheelchairs allow people with motor disabilities to have more mobility and independence. In order to improve the access to mobility for people with disabilities, we previously designed a semi-autonomous assistive wheelchair system which progressively corrects the trajectory as the user manually drives the wheelchair and smoothly avoids obstacles.

Despite the COVID situation, INSA and the rehabilitation center of Pôle Saint Hélier managed to co-organize clinical trials in July 2021 at INSA and in September 2021 at Pôle Saint Hélier. Based on the previous trial results 2, the objective was to evaluate the clinical benefit of driving assistance for people with disabilities who experience great difficulty steering a wheelchair. Eighteen people participated in the trials. The trials confirmed the ability of the system to assist users and the relevance of such an assistive technology.

In addition, in collaboration with the MIS laboratory (Fabio Morbidi, Guillaume Caron), we evaluated the use of additional visual sensors, such as spherical vision, to enhance the navigation experience and situation awareness by providing adequate feedback to the user 37. The idea is to generate an augmented view of the surrounding environment, presented to the user on a display. We conducted user trials at INSA in July 2021 with able-bodied subjects and older adults with mobility impairments. Our field results indicate that this system, SpheriCol, is effective in improving safety and situational awareness, and in supporting a driver's decisions during challenging but prevalent maneuvers, such as reversing into an elevator or centering in a corridor.

Finally, driving such a vehicle safely is a daily challenge, particularly in urban environments when navigating on sidewalks, negotiating curbs, or dealing with uneven ground. Indeed, differences in elevation have been reported to be among the most challenging environmental barriers to negotiate, with tipping and falling being the most common accidents that power wheelchair users encounter. To this end, we proposed a shared-control algorithm that provides assistance while navigating with a wheelchair in an environment containing negative obstacles. We designed a dedicated sensor-based control law allowing trajectory correction while approaching negative obstacles, e.g., steps, curbs, and descending slopes. This shared-control method takes into account the human-in-the-loop factor. We are currently preparing clinical trials and ethics committee (Comité de Protection des Personnes) procedures to evaluate its clinical benefit.
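One simple way to picture assistance near a negative obstacle is to scale the user's forward speed according to the sensed distance to the drop. The sketch below is purely illustrative and is not the sensor-based control law mentioned above: the linear scaling, the function name `assisted_speed`, and the two distance thresholds are all assumptions.

```python
def assisted_speed(user_speed, drop_distance,
                   stop_distance=0.3, slow_distance=1.5):
    """Scale the user's forward speed near a negative obstacle.

    user_speed:    forward speed requested through the joystick (m/s).
    drop_distance: sensed distance to the drop along the heading (m).
    stop_distance, slow_distance: hypothetical thresholds (m).
    """
    # Full authority far from the drop, none at or below stop_distance.
    scale = (drop_distance - stop_distance) / (slow_distance - stop_distance)
    scale = min(max(scale, 0.0), 1.0)
    return user_speed * scale


if __name__ == "__main__":
    for distance in (2.0, 0.9, 0.2):
        print(distance, assisted_speed(1.0, distance))
```

Because the user's command is scaled rather than replaced, the user stays in the loop: the wheelchair never moves faster than requested and progressively slows as the drop gets closer.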

7.4.5 Multisensory Power Wheelchair Simulator

Participants: Guillaume Vailland, Louise Devigne, François Pasteau, Marie Babel.