Keywords

Computer Science and Digital Science

- A2.5. Software engineering

- A5.1. Human-Computer Interaction

- A5.1.1. Engineering of interactive systems

- A5.1.2. Evaluation of interactive systems

- A5.1.3. Haptic interfaces

- A5.1.4. Brain-computer interfaces, physiological computing

- A5.1.5. Body-based interfaces

- A5.1.6. Tangible interfaces

- A5.1.7. Multimodal interfaces

- A5.1.8. 3D User Interfaces

- A5.1.9. User and perceptual studies

- A5.2. Data visualization

- A5.6. Virtual reality, augmented reality

- A5.6.1. Virtual reality

- A5.6.2. Augmented reality

- A5.6.3. Avatar simulation and embodiment

- A5.6.4. Multisensory feedback and interfaces

- A5.10.5. Robot interaction (with the environment, humans, other robots)

- A6. Modeling, simulation and control

- A6.2. Scientific computing, Numerical Analysis & Optimization

- A6.3. Computation-data interaction

Other Research Topics and Application Domains

- B1.2. Neuroscience and cognitive science

- B2.4. Therapies

- B2.5. Handicap and personal assistances

- B2.6. Biological and medical imaging

- B2.8. Sports, performance, motor skills

- B5.1. Factory of the future

- B5.2. Design and manufacturing

- B5.8. Learning and training

- B5.9. Industrial maintenance

- B6.4. Internet of things

- B8.1. Smart building/home

- B8.3. Urbanism and urban planning

- B9.1. Education

- B9.2. Art

- B9.2.1. Music, sound

- B9.2.2. Cinema, Television

- B9.2.3. Video games

- B9.4. Sports

- B9.6.6. Archeology, History

1 Team members, visitors, external collaborators

Research Scientists

- Anatole Lecuyer [Team leader, Inria, Senior Researcher, HDR]

- Ferran Argelaguet [Inria, Researcher, HDR]

- Marc Macé [CNRS, Researcher, HDR]

- Léa Pillette [CNRS, Researcher, from Nov 2022]

Faculty Members

- Bruno Arnaldi [INSA Rennes, Emeritus, from Nov 2022, HDR]

- Bruno Arnaldi [INSA Rennes, Professor, until Nov 2022, HDR]

- Mélanie Cogné [Université Rennes I, Associate Professor, CHU Rennes]

- Valérie Gouranton [INSA Rennes, Associate Professor, HDR]

Post-Doctoral Fellows

- Elodie Bouzbib [Inria, Co-supervised with RAINBOW]

- Panagiotis Kourtesis [Inria, until Sep 2022]

PhD Students

- Antonin Cheymol [Inria, INSA Rennes]

- Gwendal Fouché [Inria, INSA Rennes]

- Adelaïde Genay [Inria, Co-supervised with POTIOC]

- Vincent Goupil [SOGEA Bretagne, INSA Rennes]

- Lysa Gramoli [ORANGE LABS, INSA Rennes]

- Jeanne Hecquard [Inria, Université Rennes I, from Oct 2022]

- Gabriela Herrera Altamira [Inria, Co-supervised with Loria]

- Emilie Hummel [Inria, INSA Rennes]

- Salome Le Franc [CHRU Rennes, until Oct 2022, Université Rennes I]

- Julien Lomet [Université Paris 8, Université Rennes 2]

- Maé Mavromatis [Inria, INSA Rennes]

- Yann Moullec [Université Rennes I]

- Grégoire Richard [Inria, Co-supervised with LOKI]

- Mathieu Risy [INSA Rennes, from Oct 2022]

- Sony Saint-Auret [Inria, from Oct 2022, INSA Rennes]

- Emile Savalle [Université Rennes I, from Oct 2022]

- Sebastian Santiago Vizcay [Inria, INSA Rennes]

Technical Staff

- Alexandre Audinot [INSA Rennes, Engineer]

- Ronan Gaugne [Université Rennes I, Engineer, Technical director of Immersia]

- Florian Nouviale [INSA Rennes, Engineer]

- Adrien Reuzeau [INSA Rennes, Engineer, from Oct 2022]

- Justine Saint-Aubert [Inria, Engineer]

Administrative Assistant

- Nathalie Denis [Inria]

Visiting Scientist

- Yutaro Hirao [University of Tokyo, until Sep 2022]

External Collaborators

- Rebecca Fribourg [Centrale Nantes]

- Guillaume Moreau [IMT-Atlantique, Professor, HDR]

- Jean-Marie Normand [Centrale Nantes, HDR]

2 Overall objectives

Our research project belongs to the scientific field of Virtual Reality (VR) and 3D interaction with virtual environments. VR systems can be used in numerous applications such as for industry (virtual prototyping, assembly or maintenance operations, data visualization), entertainment (video games, theme parks), arts and design (interactive sketching or sculpture, CAD, architectural mock-ups), education and science (physical simulations, virtual classrooms), or medicine (surgical training, rehabilitation systems). A major change that we foresee in the next decade concerning the field of Virtual Reality relates to the emergence of new paradigms of interaction (input/output) with Virtual Environments (VE).

As of today, the most common way to interact with 3D content remains measuring the user's motor activity, i.e., his/her gestures and physical motions when manipulating different kinds of input devices. However, a recent trend consists in soliciting more movements and more physical engagement of the user's body. We can notably stress the emergence of bimanual interaction, natural walking interfaces, and whole-body involvement. These new interaction schemes bring a new level of complexity in terms of generic physical simulation of potential interactions between the virtual body and the virtual surroundings, and a challenging "trade-off" between performance and realism. Moreover, research is also needed to characterize the influence of these new sensory cues on the resulting feelings of "presence" and immersion of the user.

Besides, a novel kind of user input has recently appeared in the field of virtual reality: the user's mental activity, which can be measured by means of a "Brain-Computer Interface" (BCI). Brain-Computer Interfaces are communication systems which measure the user's electrical cerebral activity and translate it, in real-time, into an exploitable command. BCIs introduce a new way of interacting "by thought" with virtual environments. However, current BCIs can only distinguish a small number of mental states and hence offer a small number of mental commands. Thus, research is still needed here to extend the capacities of BCIs, and to better exploit the few available mental states in virtual environments.

Our first motivation consists thus in designing novel “body-based” and “mind-based” controls of virtual environments and reaching, in both cases, more immersive and more efficient 3D interaction.

Furthermore, in current VR systems, motor activities and mental activities are always considered separately and exclusively. This recalls the well-known “body-mind dualism” which is at the heart of historical philosophical debates. In this context, our objective is to introduce novel “hybrid” interaction schemes in virtual reality, by considering motor and mental activities jointly, i.e., in a harmonious, complementary, and optimized way. Thus, we intend to explore novel paradigms of 3D interaction mixing body and mind inputs. Moreover, our approach becomes even more challenging when considering and connecting multiple users, which implies multiple bodies and multiple brains collaborating and interacting in virtual reality.

Our second motivation consists thus in introducing a “hybrid approach” which will mix mental and motor activities of one or multiple users in virtual reality.

3 Research program

The scientific objective of the Hybrid team is to improve 3D interaction of one or multiple users with virtual environments, by making full use of physical engagement of the body, and by incorporating mental states by means of brain-computer interfaces. We intend to improve each component of this framework individually, as well as their subsequent combinations.

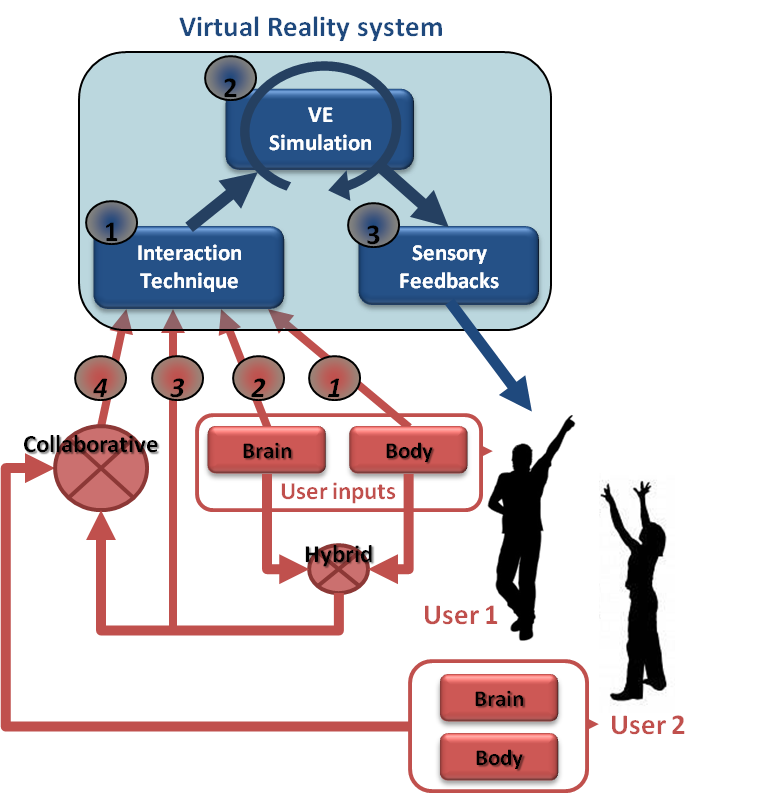

The “hybrid” 3D interaction loop between one or multiple users and a virtual environment is depicted in Figure 1. Different kinds of 3D interaction situations are distinguished (red arrows, bottom): 1) body-based interaction, 2) mind-based interaction, 3) hybrid and/or 4) collaborative interaction (with at least two users). In each case, three scientific challenges arise which correspond to the three successive steps of the 3D interaction loop (blue squares, top): 1) the 3D interaction technique, 2) the modeling and simulation of the 3D scenario, and 3) the design of appropriate sensory feedback.

The 3D interaction loop involves various possible inputs from the user(s) and different kinds of output (or sensory feedback) from the simulated environment. Each user can involve his/her body and mind by means of corporal and/or brain-computer interfaces. A hybrid 3D interaction technique (1) mixes mental and motor inputs and translates them into a command for the virtual environment. The real-time simulation (2) of the virtual environment takes these commands into account to change and update the state of the virtual world and virtual objects. The state changes are sent back to the user and perceived through different sensory feedbacks (e.g., visual, haptic and/or auditory) (3). These sensory feedbacks close the 3D interaction loop. Other users can also interact with the virtual environment using the same procedure, and can possibly “collaborate” using “collaborative interactive techniques” (4).

This description stresses three major challenges which correspond to three mandatory steps when designing 3D interaction with virtual environments:

- 3D interaction techniques: This first step consists in translating the actions or intentions of the user (inputs) into an explicit command for the virtual environment. In virtual reality, the classical tasks that require such user commands were classified early on into four categories 47: navigating the virtual world, selecting a virtual object, manipulating it, or controlling the application (entering text, activating options, etc.). However, adding a third dimension and using stereoscopic rendering along with advanced VR interfaces make many 2D techniques inappropriate. It is thus necessary to design specific interaction techniques and adapted tools. This challenge is renewed here by the various kinds of 3D interaction that are targeted. In our case, we consider various situations, with motor and/or cerebral inputs, and potentially multiple users.

- Modeling and simulation of complex 3D scenarios: This second step corresponds to the update of the state of the virtual environment, in real-time, in response to all the potential commands or actions sent by the user. The complexity of the data and phenomena involved in 3D scenarios is constantly increasing. It corresponds for instance to the multiple states of the entities present in the simulation (rigid, articulated, deformable, fluids, which can constitute both the user’s virtual body and the different manipulated objects), and the multiple physical phenomena implied by natural human interactions (squeezing, breaking, melting, etc). The challenge consists here in modeling and simulating these complex 3D scenarios and meeting, at the same time, two strong constraints of virtual reality systems: performance (real-time and interactivity) and genericity (e.g., multi-resolution, multi-modal, multi-platform, etc).

- Immersive sensory feedbacks: This third step corresponds to the display of the multiple sensory feedbacks (output) coming from the various VR interfaces. These feedbacks enable the user to perceive the changes occurring in the virtual environment. They close the 3D interaction loop, immersing the user and potentially generating a subsequent feeling of presence. Among the various VR interfaces which have been developed so far, we can stress two kinds of sensory feedback: visual feedback (3D stereoscopic images using projection-based systems such as CAVE systems or Head Mounted Displays); and haptic feedback (related to the sense of touch and to tactile or force-feedback devices). The Hybrid team has strong expertise in haptic feedback, and in the design of haptic and “pseudo-haptic” rendering 48. Note that a major trend in the community, which is strongly supported by the Hybrid team, relates to a “perception-based” approach, which aims at designing sensory feedbacks that are well in line with human perceptual capabilities.

These three scientific challenges are addressed differently according to the context and the user inputs involved. We propose to consider three different contexts, which correspond to the three different research axes of the Hybrid research team, namely: 1) body-based interaction (motor input only), 2) mind-based interaction (cerebral input only), and then 3) hybrid and collaborative interaction (i.e., the mixing of body and brain inputs from one or multiple users).
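As an illustration only, the three steps of the interaction loop described above can be sketched in a few lines of Python. This is a minimal toy model, not the team's software: the names `UserInput`, `World`, `interaction_technique`, `simulate`, `sensory_feedback` and `run_frame`, as well as the "select" mental command, are all hypothetical and chosen for readability.

```python
from dataclasses import dataclass
from typing import List, Optional, Tuple

Vec3 = Tuple[float, float, float]


@dataclass
class UserInput:
    """One frame of input from a single user (all fields are illustrative)."""
    hand_position: Vec3                   # motor input, e.g. from a tracking system
    mental_command: Optional[str] = None  # cerebral input, e.g. a label output by a BCI


@dataclass
class World:
    """Toy virtual environment: a single object that can be selected and moved."""
    object_position: Vec3 = (0.0, 0.0, 0.0)
    selected: bool = False


def interaction_technique(user_input: UserInput) -> dict:
    """Step 1: translate motor and mental inputs into one explicit command."""
    return {
        "move_to": user_input.hand_position,
        "select": user_input.mental_command == "select",
    }


def simulate(world: World, commands: List[dict]) -> World:
    """Step 2: update the world state from the commands of all users, in order."""
    for command in commands:
        if command["select"]:
            world.selected = True
        if world.selected:
            world.object_position = command["move_to"]
    return world


def sensory_feedback(world: World) -> dict:
    """Step 3: derive what each output channel should render."""
    return {
        "visual": f"object at {world.object_position}",
        "haptic": "vibrate" if world.selected else "idle",
    }


def run_frame(world: World, inputs: List[UserInput]) -> dict:
    """One pass through the loop for one or several users."""
    commands = [interaction_technique(user_input) for user_input in inputs]
    return sensory_feedback(simulate(world, commands))
```

Passing several `UserInput` objects to `run_frame` gives a crude picture of the collaborative case, in which the commands of all users feed the same simulation step.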

3.1 Research Axes

The scientific activity of the Hybrid team follows three main axes of research:

- Body-based interaction in virtual reality. Our first research axis concerns the design of immersive and effective "body-based" 3D interactions, i.e., relying on a physical engagement of the user’s body. This trend is probably the most popular one in VR research at the moment. Most VR setups make use of tracking systems which measure specific positions or actions of the user in order to interact with a virtual environment. However, in recent years, novel options have emerged for measuring “full-body” movements or other, even less conventional, inputs (e.g. body equilibrium). In this first research axis we focus on new emerging methods of “body-based interaction” with virtual environments. This implies the design of novel 3D user interfaces and 3D interactive techniques, new simulation models and techniques, and innovative sensory feedbacks for body-based interaction with virtual worlds. It involves real-time physical simulation of complex interactive phenomena, and the design of corresponding haptic and pseudo-haptic feedback.

- Mind-based interaction in virtual reality. Our second research axis concerns the design of immersive and effective “mind-based” 3D interactions in Virtual Reality. Mind-based interaction with virtual environments relies on Brain-Computer Interface technology, which corresponds to the direct use of brain signals to send “mental commands” to an automated system such as a robot, a prosthesis, or a virtual environment. BCI is a rapidly growing area of research and several impressive prototypes are already available. However, the emergence of such a novel user input also calls for novel and dedicated 3D user interfaces. This implies studying the extension of the mental vocabulary available for 3D interaction with VEs, the design of specific 3D interaction techniques “driven by the mind” and, lastly, the design of immersive sensory feedbacks that could help improve the learning of brain control in VR.

- Hybrid and collaborative 3D interaction. Our third research axis intends to study the combination of motor and mental inputs in VR, for one or multiple users. This concerns the design of mixed systems, with potentially collaborative scenarios involving multiple users, and thus, multiple bodies and multiple brains sharing the same VE. This research axis therefore involves two interdependent topics: 1) collaborative virtual environments, and 2) hybrid interaction. It should end up with collaborative virtual environments with multiple users, and shared systems with body and mind inputs.

4 Application domains

4.1 Overview

The research program of the Hybrid team aims at next generations of virtual reality and 3D user interfaces which could possibly address both the “body” and “mind” of the user. Novel interaction schemes are designed, for one or multiple users. We target better integrated systems and more compelling user experiences.

The applications of our research program correspond to the applications of virtual reality technologies which could benefit from the addition of novel body-based or mind-based interaction capabilities:

- Industry: with training systems, virtual prototyping, or scientific visualization;

- Medicine: with rehabilitation and reeducation systems, or surgical training simulators;

- Entertainment: with movie industry, content customization, video games or attractions in theme parks;

- Construction: with virtual mock-ups design and review, or historical/architectural visits;

- Cultural Heritage: with acquisition, virtual excavation, virtual reconstruction and visualization.

5 Social and environmental responsibility

5.1 Impact of research results

A salient initiative carried out by Hybrid in relation to social responsibility in the field of health is the Inria Covid-19 project “VERARE”. VERARE is a unique and innovative concept implemented in record time thanks to a close collaboration between the Hybrid research team and the teams from the intensive care and physical and rehabilitation medicine departments of Rennes University Hospital. VERARE consists in using virtual environments and VR technologies for the rehabilitation of Covid-19 patients who are coming out of coma, weakened, and have great difficulty recovering the ability to walk. With VERARE, the patient is immersed in different virtual environments using a VR headset. The patient is represented by an “avatar” that carries out different motor tasks involving the lower limbs, for example walking, jogging, or avoiding obstacles. Our main hypothesis is that the observation of such virtual actions, and the progressive resumption of motor activity in VR, will allow a quicker start to rehabilitation, as soon as the patient leaves the ICU. The patient will then be able to carry out sessions in his or her room, or even from the hospital bed, in simple and secure conditions, with the hope of obtaining a final clinical benefit, either in terms of motor and walking recovery or in terms of length of hospital stay. The project started at the end of April 2020, and we were able to deploy a first version of our application at the Rennes hospital in mid-June 2020, only 2 months after the project started. Covid patients are now using our virtual reality application at the Rennes University Hospital, and the clinical evaluation of VERARE is still ongoing and expected to be completed in 2023. The project is also pushing the research activity of Hybrid on many aspects, e.g., haptics, avatars, and VR user experience, with 3 papers published in IEEE TVCG in 2022.

6 Highlights of the year

6.1 Salient news

- Arrival of Marc Macé (CR CNRS) from IRIT Lab (January)

- Habilitation thesis of Valérie Gouranton (March)

- Arrival of Léa Pillette as new CNRS research scientist in the team (November)

- Emeritus status for Bruno Arnaldi (November)

6.2 Awards

- IEEE VGTC Virtual Reality Academy: induction of Anatole Lécuyer (2022).

- IEEE VR 3DUI Contest 2022: Third Place for Hybrid team.

- IEEE TVCG Best Associate Editor, Honorary Mention Award for Anatole Lécuyer.

- Best Paper Award at ICAT-EGVE 2022 Conference for the paper “Manipulating the Sense of Embodiment in Virtual Reality: a study of the interactions between the senses of agency, self-location and ownership” authored by Martin Guy, Camille Jeunet-Kelway, Guillaume Moreau and Jean-Marie Normand.

- Laureate of “Trophée Valorisation du Campus d’Innovation de Rennes” in category “Numérique” (Bruno Arnaldi, Valérie Gouranton, Florian Nouviale).

- “Open-Science Award” for OpenViBE software in category "Documentation".

7 New software and platforms

7.1 New software

7.1.1 OpenViBE

-

Keywords:

Neurosciences, Interaction, Virtual reality, Health, Real time, Neurofeedback, Brain-Computer Interface, EEG, 3D interaction

-

Functional Description:

OpenViBE is a free and open-source software platform devoted to the design, test and use of Brain-Computer Interfaces (BCI). The platform consists of a set of software modules that can be integrated easily and efficiently to design BCI applications. The key features of OpenViBE software are its modularity, its high performance, its portability, its multiple-user facilities and its connection with high-end/VR displays. The designer of the platform enables users to build complete scenarios based on existing software modules using a dedicated graphical language and a simple Graphical User Interface (GUI). This software is available on the Inria Forge under the terms of the AGPL licence, and it was officially released in June 2009. Since then, the OpenViBE software has been downloaded more than 60,000 times, and it is used by numerous laboratories, projects, and individuals worldwide. More information, downloads, tutorials, videos, and documentation are available on the OpenViBE website.

-

Release Contributions:

Added: - Metabox to perform log of signal power - Artifacted files for algorithm tests

Changed: - Refactoring of CMake build process - Update wildcards in gitignore - Update CSV File Writer/Reader - Stimulations only

Removed: - Ogre games and dependencies - Mensia distribution

Fixed: - Intermittent compiler bug

-

News of the Year:

Python 2 support dropped in favour of Python 3.

New feature boxes: Riemannian geometry, Multimodal Graz visualisation, Artefact detection, Features selection, Stimulation validator.

Support for Ubuntu 18.04 and Fedora 31.

- URL:

-

Contact:

Anatole Lecuyer

-

Participants:

Cedric Riou, Thierry Gaugry, Anatole Lecuyer, Fabien Lotte, Jussi Lindgren, Laurent Bougrain, Maureen Clerc Gallagher, Théodore Papadopoulo, Thomas Prampart

-

Partners:

INSERM, GIPSA-Lab

7.1.2 Xareus

-

Name:

Xareus

-

Keywords:

Virtual reality, Augmented reality, 3D, 3D interaction, Behavior modeling, Interactive Scenarios

-

Scientific Description:

Xareus mainly contains a scenario engine (#SEVEN) and a relation engine (#FIVE). #SEVEN is a model and an engine based on Petri nets extended with sensors and effectors, enabling the description and execution of complex and interactive scenarios. #FIVE is a framework for the development of interactive and collaborative virtual environments. #FIVE was developed to answer the need for easier and faster design and development of virtual reality applications. #FIVE provides a toolkit that simplifies the declaration of possible actions and behaviours of objects in a VE. It also provides a toolkit that facilitates the setting and the management of collaborative interactions in a VE. It is compliant with a distribution of the VE on different setups. It also proposes guidelines to efficiently create a collaborative and interactive VE.

-

Functional Description:

Xareus is implemented in C# and is available as libraries. An integration with the Unity3D engine also exists. The user can focus on domain-specific aspects for his/her application (industrial training, medical training, etc.) thanks to Xareus modules. These modules can be used in a vast range of domains for augmented and virtual reality applications requiring interactive environments and collaboration, such as in training. The scenario engine is based on Petri nets with the addition of sensors and effectors, which allow the execution of complex scenarios for driving Virtual Reality applications. Xareus comes with a scenario editor integrated into Unity 3D for creating, editing, and remotely controlling and running scenarios. The relation engine contains software modules that can be interconnected and help in building interactive and collaborative virtual environments.

-

Release Contributions:

This version is up to date with Unity 3D 2021.3 LTS and gathers the previously separate tools #FIVE and #SEVEN.

-

News of the Year:

The #FIVE and #SEVEN tools have been gathered in a single software package named Xareus. It was updated to be compatible with the latest version and capabilities of Unity3D 2021.3 LTS.

- URL:

- Publications:

-

Contact:

Valerie Gouranton

-

Participants:

Florian Nouviale, Valerie Gouranton, Bruno Arnaldi, Vincent Goupil, Carl-Johan Jorgensen, Emeric Goga, Adrien Reuzeau, Alexandre Audinot
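To make the idea of a Petri net extended with sensors and effectors more concrete, here is a minimal Python sketch. It is not the Xareus API (Xareus is implemented in C#): the classes `Transition` and `ScenarioNet`, and the toy "pick up the tool, then tighten the screw" scenario, are hypothetical. We assume here that a sensor is a predicate polled on the environment and an effector is a callback run when the transition fires.

```python
from typing import Callable, Dict, List


class Transition:
    """A Petri net transition guarded by a sensor and triggering an effector."""

    def __init__(self, name: str, inputs: List[str], outputs: List[str],
                 sensor: Callable[[], bool] = lambda: True,
                 effector: Callable[[], None] = lambda: None):
        self.name = name
        self.inputs = inputs      # places that must each hold at least one token
        self.outputs = outputs    # places that receive one token on firing
        self.sensor = sensor      # polls the environment; True means "event observed"
        self.effector = effector  # acts on the environment when the transition fires


class ScenarioNet:
    def __init__(self, marking: Dict[str, int], transitions: List[Transition]):
        self.marking = dict(marking)
        self.transitions = transitions

    def enabled(self, transition: Transition) -> bool:
        return all(self.marking.get(place, 0) > 0 for place in transition.inputs)

    def step(self) -> List[str]:
        """Visit transitions in list order; fire those enabled whose sensor is true."""
        fired = []
        for transition in self.transitions:
            if self.enabled(transition) and transition.sensor():
                for place in transition.inputs:
                    self.marking[place] -= 1
                for place in transition.outputs:
                    self.marking[place] = self.marking.get(place, 0) + 1
                transition.effector()
                fired.append(transition.name)
        return fired


# Hypothetical training scenario: the trainee picks up a tool, then tightens a screw.
env = {"tool_in_hand": False, "screw_tight": False, "log": []}
net = ScenarioNet(
    marking={"start": 1},
    transitions=[
        Transition("pick_tool", ["start"], ["has_tool"],
                   sensor=lambda: env["tool_in_hand"],
                   effector=lambda: env["log"].append("highlight screw")),
        Transition("tighten", ["has_tool"], ["done"],
                   sensor=lambda: env["screw_tight"],
                   effector=lambda: env["log"].append("scenario complete")),
    ],
)
```

Calling `net.step()` once per frame advances the scenario only when the environment reports the expected event, which is the role the sensors play in this sketch.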

7.1.3 AvatarReady

-

Name:

A unified platform for the next generation of our virtual selves in digital worlds

-

Keywords:

Avatars, Virtual reality, Augmented reality, Motion capture, 3D animation, Embodiment

-

Scientific Description:

AvatarReady is an open-source tool (AGPL) written in C#, providing a plugin for the Unity 3D software to facilitate the use of humanoid avatars for mixed reality applications. Due to the current complexity of semi-automatically configuring avatars coming from different origins, and using different interaction techniques and devices, AvatarReady aggregates several industrial solutions and results from the academic state of the art to propose a simple, fast and seamless way to use humanoid avatars in mixed reality. For example, it is possible to automatically configure avatars from different libraries (e.g., Rocketbox, Character Creator, Mixamo), as well as to easily use different avatar control methods (e.g., motion capture, inverse kinematics). AvatarReady is also organized in a modular way so that scientific advances can be progressively integrated into the framework. AvatarReady is furthermore accompanied by a utility to generate ready-to-use avatar packages that can be used on the fly, as well as a website to display them and offer them for download to users.

-

Functional Description:

AvatarReady is a Unity tool to facilitate the configuration and use of humanoid avatars for mixed reality applications. It comes with a utility to generate ready-to-use avatar packages and a website to display them and offer them for download.

- URL:

-

Authors:

Ludovic Hoyet, Fernando Argelaguet Sanz, Adrien Reuzeau

-

Contact:

Ludovic Hoyet

7.1.4 ElectroStim

-

Keywords:

Virtual reality, Unity 3D, Electrotactility, Sensory feedback

-

Scientific Description:

ElectroStim provides an agnostic haptic rendering framework able to exploit electrical stimulation capabilities, quickly test different electrode prototypes, and offer a fast and easy way to author electrotactile sensations so that they can be compared quickly when used as tactile feedback in VR interactions. The framework was designed to exploit electrotactile feedback, but it can also be extended to other tactile rendering systems such as vibrotactile feedback. Furthermore, it is designed to be easily extendable to other types of haptic sensations.

-

Functional Description:

This software provides the tools necessary to control an electrotactile stimulator in Unity 3D. The software allows precise control of the system to generate tactile sensations in virtual reality applications.

- Publication:

-

Authors:

Sebastian Santiago Vizcay, Fernando Argelaguet Sanz

-

Contact:

Fernando Argelaguet Sanz

7.2 New platforms

7.2.1 Immerstar

Participants: Florian Nouviale, Ronan Gaugne.

URL: Immersia website

With the two virtual reality technological platforms Immersia and Immermove, grouped under the name Immerstar, the team has access to high-level scientific facilities. This equipment benefits the research teams of the center and has allowed them to extend their local, national and international collaborations. The Immerstar platform received CPER-Inria funding for the 2015-2019 period, which enabled several important evolutions. In particular, in 2018, a haptic system covering the entire volume of the Immersia platform was installed, allowing various configurations from single haptic device usage to dual haptic device usage with either one or two users. In addition, a motion platform designed to introduce motion feedback for powered wheelchair simulations has also been incorporated (see Figure 2).

We celebrated the twentieth anniversary of the Immersia platform in November 2019 by inaugurating the new haptic equipment. We organized scientific presentations, which drew 150 participants, and visits for the support services, which drew 50 people.

Building on this support, in 2020 we participated in a PIA3-Equipex+ proposal that obtained funding in 2021. This proposal, CONTINUUM, involves 22 partners, has been successfully evaluated and will be granted. The CONTINUUM project will create a collaborative research infrastructure of 30 platforms located throughout France, to advance interdisciplinary research based on interaction between computer science and the human and social sciences. Thanks to CONTINUUM, 37 research teams will develop cutting-edge research programs focusing on visualization, immersion, interaction and collaboration, as well as on human perception, cognition and behaviour in virtual/augmented reality, with potential impact on societal issues. CONTINUUM enables a paradigm shift in the way we perceive, interact, and collaborate with complex digital data and digital worlds by putting humans at the center of the data processing workflows. The project will empower scientists, engineers and industry users with a highly interconnected network of high-performance visualization and immersive platforms to observe, manipulate, understand and share digital data, real-time multi-scale simulations, and virtual or augmented experiences. All platforms will feature facilities for remote collaboration with other platforms, as well as mobile equipment that can be lent to users to facilitate onboarding.

The Immerstar platform has been involved in a new National Research Infrastructure since the end of 2021. This new research infrastructure gathers the main platforms of CONTINUUM.

Immerstar is also involved in EUR Digisport led by University of Rennes 2 and PIA4 DemoES AIR led by University of Rennes 1.

8 New results

8.1 Virtual Reality Tools and Usages

8.1.1 Assistive Robotic Technologies for Next-Generation Smart Wheelchairs: Codesign and Modularity to Improve Users' Quality of Life.

Participants: Valérie Gouranton [contact].

This work 23 describes the robotic assistive technologies developed for users of electrically powered wheelchairs, within the framework of the European Union’s Interreg ADAPT (Assistive Devices for Empowering Disabled People Through Robotic Technologies) project. In particular, special attention is devoted to the integration of advanced sensing modalities and the design of new shared control algorithms. In response to the clinical needs identified by our medical partners, two novel smart wheelchairs with complementary capabilities and a virtual reality (VR)-based wheelchair simulator have been developed. These systems have been validated via extensive experimental campaigns in France (see Figure 3) and the United Kingdom.

This work was done in collaboration with the Rainbow team, MIS - Modélisation Information et Systèmes - UR UPJV 4290, University College London, Pôle Saint-Hélier - Médecine Physique et de Réadaptation [Rennes], CNRS-AIST JRL - Joint Robotics Laboratory and IRSEEM - Institut de Recherche en Systèmes Electroniques Embarqués.

8.1.2 The Rubber Slider Metaphor: Visualisation of Temporal and Geolocated Data

Participants: Ferran Argelaguet [contact], Antonin Cheymol, Gwendal Fouché, Lysa Gramoli, Yutaro Hirao, Emilie Hummel, Maé Mavromatis, Yann Moullec, Florian Nouviale.

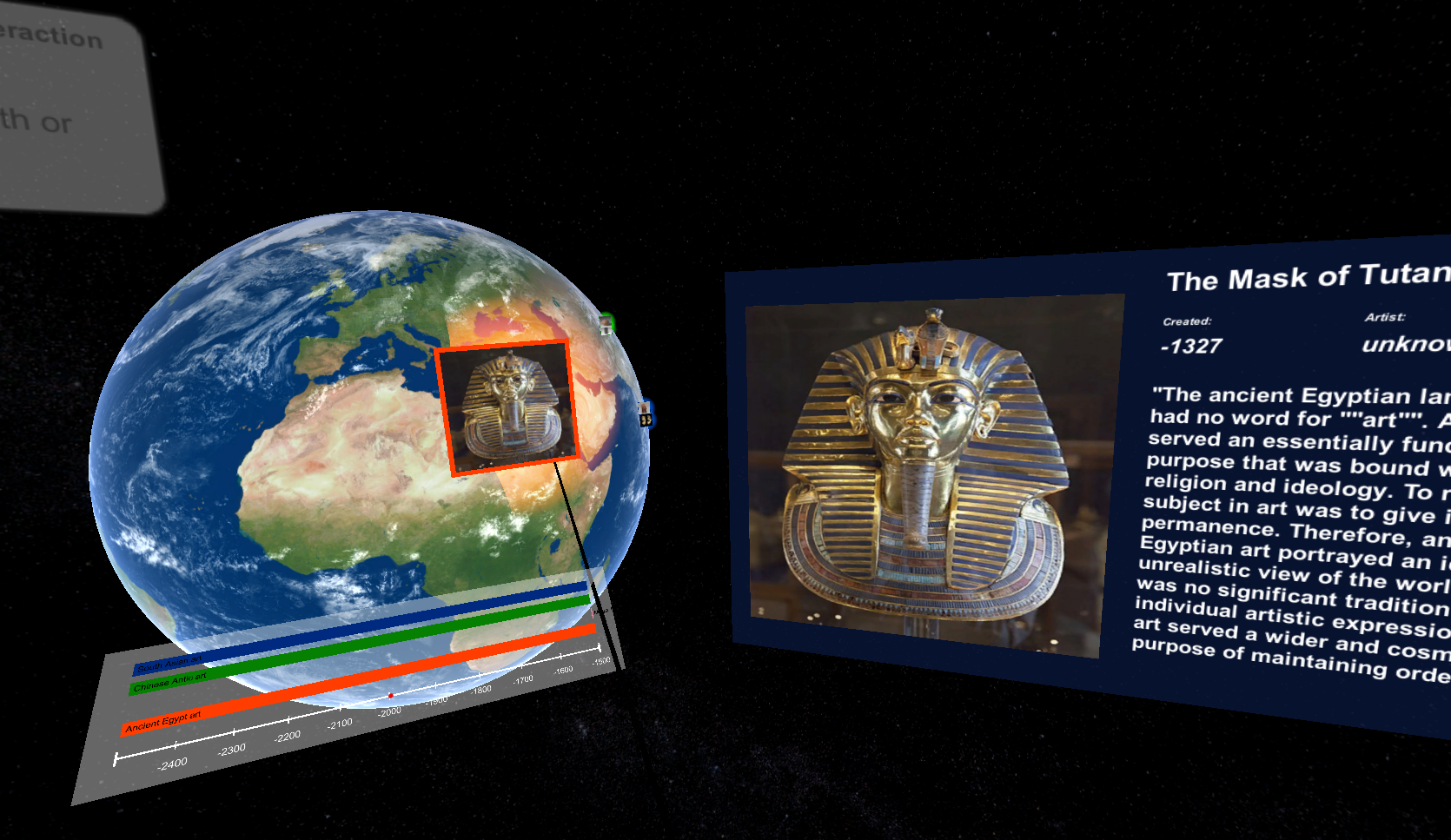

In the context of the IEEE VR 2022 3DUI Contest entitled “Arts, Science, Information, and Knowledge - Visualized and Interacted”, this work 30 presents a VR application to highlight the usage of the rubber slider metaphor. The rubber slider is an augmentation of usual 2D slider controls in which users can bend the slider axis in order to control the value of an additional degree of freedom in the application (see Figure 4). This demonstration immerses users in a Virtual Environment where they can explore a database of art pieces geolocated on a 3D earth model and their corresponding art movements displayed on a timeline interface. Our application obtained third place in the contest.
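One possible way to read the rubber slider metaphor is sketched below in Python. This is our own simplified 2D interpretation for illustration, not the mapping implemented in the contest application: the function `rubber_slider`, its `max_bend` parameter, and the choice of using the handle's perpendicular offset as the bend amount are all assumptions.

```python
import math
from typing import Tuple

Point = Tuple[float, float]


def rubber_slider(handle: Point, start: Point, end: Point,
                  max_bend: float = 0.2) -> Tuple[float, float]:
    """Map a 2D handle position to (value, bend).

    value: normalized position along the straight slider axis, clamped to [0, 1].
    bend:  signed perpendicular offset from the axis, divided by max_bend and
           clamped to [-1, 1]; it drives the additional degree of freedom.
    """
    axis_x, axis_y = end[0] - start[0], end[1] - start[1]
    length = math.hypot(axis_x, axis_y)
    if length == 0:
        raise ValueError("slider start and end must differ")
    unit_x, unit_y = axis_x / length, axis_y / length

    rel_x, rel_y = handle[0] - start[0], handle[1] - start[1]
    along = rel_x * unit_x + rel_y * unit_y    # projection onto the axis
    across = unit_x * rel_y - unit_y * rel_x   # 2D cross product, positive on the left

    value = min(1.0, max(0.0, along / length))
    bend = min(1.0, max(-1.0, across / max_bend))
    return value, bend
```

In a timeline interface, for instance, `value` could select a date while `bend` could widen or narrow the displayed time window; that pairing is hypothetical as well.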

8.1.3 Timeline Design Space for Immersive Exploration of Time-Varying Spatial 3D Data

Participants: Ferran Argelaguet [contact], Gwendal Fouché.

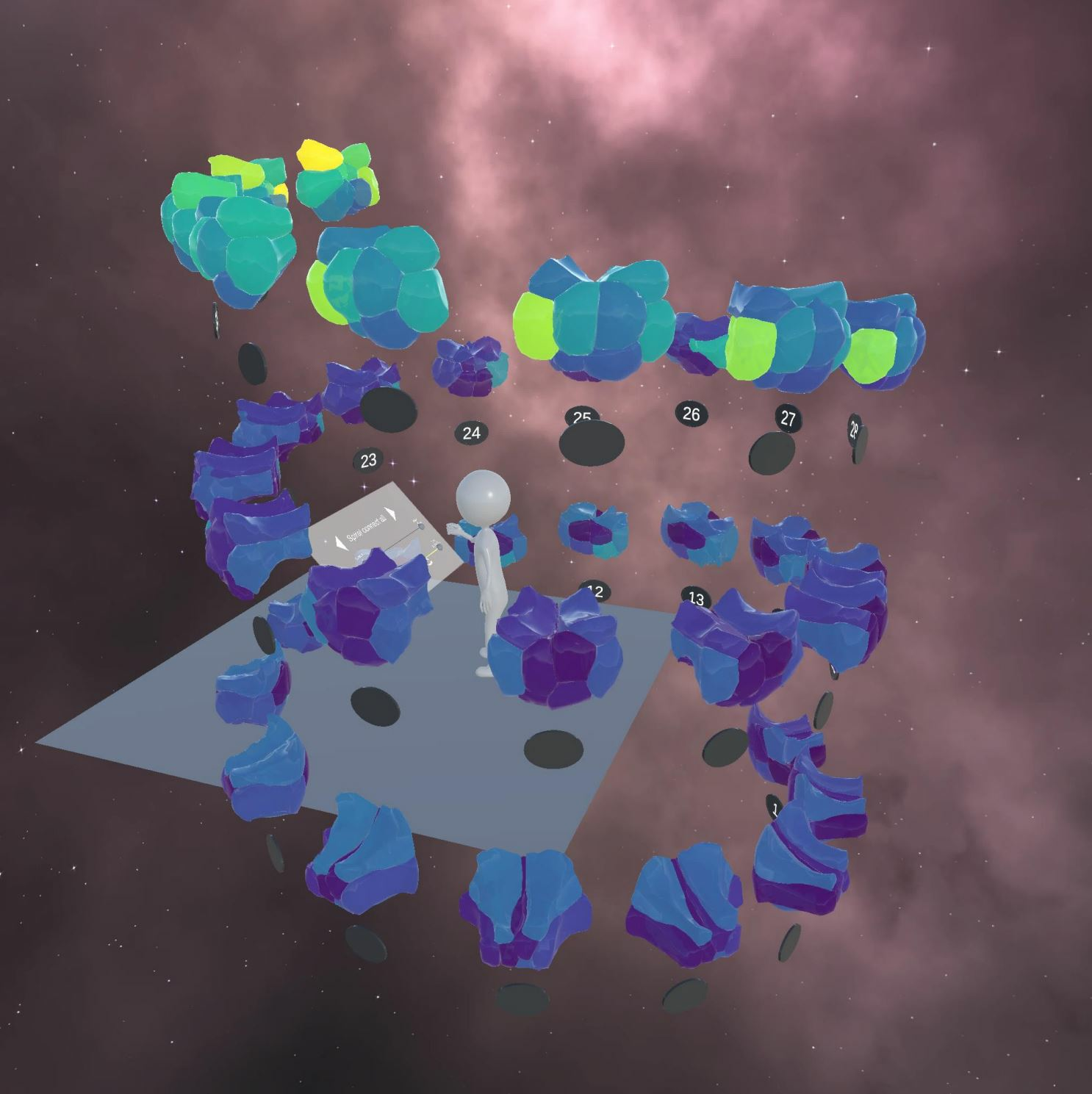

In the context of the Inria Challenge Naviscope, we have explored the usages of virtual reality for the visualization of biological data. Microscopy image observation is commonly performed on 2D screens, which limits human capacities to grasp volumetric, complex, and discrete biological dynamics. First, we published a perspective paper 28 providing an overall discussion of potential benefits and usages of virtual and augmented reality. Secondly, we explored how we could leverage virtual reality to improve the exploration of such datasets using timeline visualization. Timelines are common visualizations to represent and manipulate temporal data. However, timeline visualizations rarely consider spatio-temporal 3D data (e.g. mesh or volumetric models) directly. In this work 32, leveraging the increased workspace and 3D interaction capabilities of virtual reality (VR), we first propose a timeline design space for 3D temporal data extending the timeline design space proposed by Brehmer et al. The proposed design space adapts the scale, layout and representation dimensions to account for the depth dimension and how the 3D temporal data can be partitioned and structured. Moreover, an additional dimension is introduced, the support, which further characterizes the 3D dimension of the visualization. The design space is complemented by discussing the interaction methods required for the efficient visualization of 3D timelines in VR (see Figure 5). Secondly, we evaluate the benefits of 3D timelines through a formal evaluation (n=21). Taken together, our results showed that time-related tasks can be achieved more comfortably using timelines, and more efficiently for specific tasks requiring the analysis of the surrounding temporal context. Finally, we illustrate the use of 3D timelines with a use-case on morphogenetic analysis in which domain experts in cell imaging were involved in the design and evaluation process.

8.1.4 Control Your Virtual Agent in its Daily-activities for Long Periods

Participants: Valérie Gouranton [contact], Lysa Gramoli, Bruno Arnaldi.

Simulating human behavior through virtual agents is a key feature to improve the credibility of virtual environments (VE). For many use cases, such as daily activities data generation, a good balance between the agent's control and autonomy is required to impose specific activities while letting the agent be autonomous. In this work 41, we propose a model allowing a user to configure the level of the agent's decision-making autonomy according to their requirements. Our model, based on a BDI architecture, combines control constraints given by the user, an internal model simulating human daily needs for autonomy, and a scheduling process to create an activity plan considering these two parts. Using a calendar, the user can specify the activities that must be performed within a required time. In addition, the user can indicate whether interruptions can happen during the activity calendar to apply an effect induced by the internal model. The plan generated by our model can be executed in the VE by an animated agent in real time. To show that our model manages the ratio between control and autonomy well, we use a 3D home environment to compare the results with the input parameters (see Figure 6).

This work has been done in collaboration with Orange Labs (Jeremy Lacoche and Anthony Foulonneau).

8.1.5 A BIM-based model to study wayfinding signage using virtual reality

Participants: Valérie Gouranton [contact], Vincent Goupil, Bruno Arnaldi.

Wayfinding signage is essential in a large building to find one’s way. Unfortunately, there are no methodologies and standards for designing signage. A good sign system therefore depends on the experience of the signage company. Getting lost in public infrastructures might be disorienting or cause anxiety. Designing an efficient signage system is challenging as the building needs to communicate a lot of information in a minimum of space. In this work 40, we propose a model to study wayfinding signage based on BIM models and the BIM open library, which allows the integration of signage design into a BIM model to perform analyses and comparisons. The study of signage is based on the user’s perception, and virtual reality is a tool that best approximates this today (see Figure 7). Our model helps to perform signage analysis in building design and to compare objectively the wayfinding signage in a BIM model using virtual reality.

This work was done in collaboration with Vinci Construction (Anne-Solene Michaud) and CHU Rennes (Jean-Yves Gauvrit).

8.1.6 A Systematic Review of Navigation Assistance Systems for People with Dementia

Participants: Léa Pillette [contact], Guillaume Moreau, Jean-Marie Normand, Anatole Lécuyer, Mélanie Cogné.

Technological developments provide solutions to alleviate the tremendous impact of dementia on navigation abilities, and thereby on health and autonomy. We systematically reviewed 25 the literature on devices tested to provide assistance to people with dementia during indoor, outdoor and virtual navigation (PROSPERO ID number: 215585). Medline and Scopus databases were searched from inception. Our aim was to summarize the results from the literature to guide future developments. Twenty-three articles were included in our study. Three types of information were extracted from these studies. First, the types of navigation advice the devices provided were assessed through: (i) the sensorial modality of presentation, e.g., visual and tactile stimuli, (ii) the navigation content, e.g., landmarks, and (iii) the timing of presentation, e.g., systematically at intersections. Second, we analyzed the technology that the devices were based on, e.g., smartphone. Third, the experimental methodology used to assess the devices and the navigation outcome was evaluated. We report and discuss the results from the literature based on these three main characteristics. Finally, based on these considerations, recommendations are drawn, challenges are identified and potential solutions are suggested. Augmented reality-based devices, intelligent tutoring systems and social support should in particular be explored further.

This work was done in collaboration with the CHU Rennes.

8.2 Avatars and Virtual Embodiment

8.2.1 Multi-sensory display of self-avatar's physiological state: virtual breathing and heart beating can increase sensation of effort in VR

Participants: Anatole Lécuyer [contact], Yann Moullec, Mélanie Cogné, Justine Saint-Aubert.

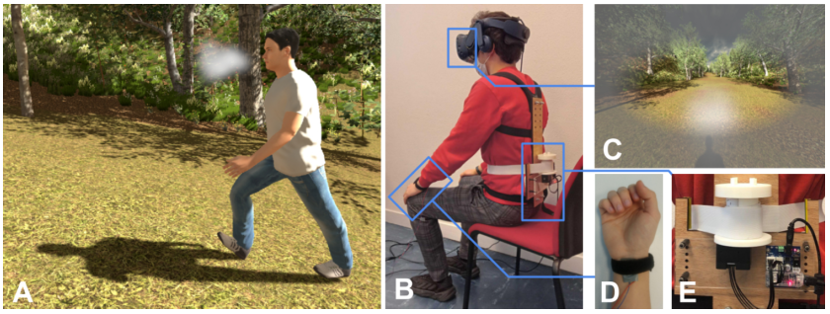

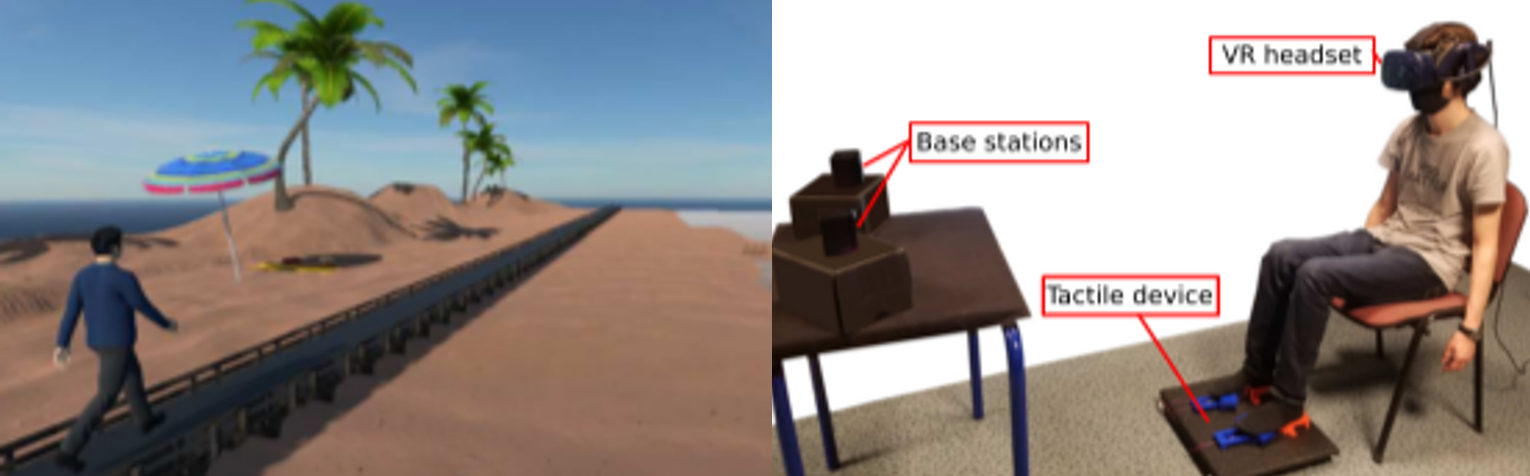

In this work 24 we explored the multi-sensory display of self-avatars’ physiological state in Virtual Reality (VR), as a means to enhance the connection between the users and their avatar (see Figure 8). Our approach consists in designing and combining a coherent set of visual, auditory and haptic cues to represent the avatar’s cardiac and respiratory activity. These sensory cues are modulated depending on the avatar’s simulated physical exertion. We notably introduce a novel haptic technique to represent respiratory activity using a compression belt simulating abdominal movements that occur during a breathing cycle. A series of experiments was conducted to evaluate the influence of our multi-sensory rendering techniques on various aspects of the VR user experience, including the sense of virtual embodiment and the sensation of effort during a walking simulation. A first study (N=30) that focused on displaying cardiac activity showed that combining sensory modalities significantly enhances the sensation of effort. A second study (N=20) that focused on respiratory activity showed that combining sensory modalities significantly enhances the sensation of effort as well as two sub-components of the sense of embodiment. Interestingly, the user’s actual breathing tended to synchronize with the simulated breathing, especially with the multi-sensory and haptic displays. A third study (N=18) that focused on the combination of cardiac and respiratory activity showed that combining both rendering techniques significantly enhances the sensation of effort. Taken together, our results promote the use of our novel breathing display technique and multi-sensory rendering of physiological parameters in VR applications where effort sensations are prominent, such as for rehabilitation, sport training, or exergames.

This work was done in collaboration with CHU Rennes.

8.2.2 Influence of Vibrations on Impression of Walking and Embodiment With First- and Third-Person Avatar

Participants: Anatole Lécuyer [contact], Justine Saint-Aubert, Mélanie Cogné.

Previous studies have explored ways to increase the impression of walking of static VR users by using vibrotactile underfoot feedback (see Figure 9). In this work 27, we investigated the influence of vibratory feedback when a static user embodies an avatar from a first- or third-person perspective: (i) we evaluated the benefit of tactile feedback compared to a simulation without tactile feedback; (ii) we also examined the interest of using phase-based rendering, which simulates gait phases, over other tactile renderings. To this end, we describe a user study (n = 44) designed to evaluate the influence of different tactile renderings on the impression of walking of static VR users. Participants observed a walking avatar from either a first- or a third-person perspective and compared 3 conditions: without tactile rendering, with constant tactile rendering reproducing simple contact information, and with tactile rendering based on gait phases. The results show that, overall, constant and phase-based rendering both improve the impression of walking in the first- and third-person perspectives. However, such tactile rendering decreases the impression of walking of some participants when the avatar is observed from a first-person perspective. Interestingly, results also show that phase-based rendering does not improve the impression of walking from a first-person perspective compared to the constant rendering, but it does improve the impression of walking from a third-person perspective. Our results thus support the use of either tactile rendering from a first-person perspective and the use of phase-based rendering from a third-person perspective.

This work was done in collaboration with CHU Rennes.

8.2.3 Influence of user posture and virtual exercise on impression of locomotion during VR observation

Participants: Anatole Lécuyer [contact], Justine Saint-Aubert, Mélanie Cogné.

A seated user watching their avatar walking in Virtual Reality (VR) may have an impression of walking. In this work 26, we show that such an impression can be extended to other postures and other locomotion exercises. We present two user studies in which participants wore a VR headset and observed a first-person avatar performing virtual exercises. In the first experiment, the avatar walked and the participants (n=36) tested the simulation in 3 different postures (standing, sitting and Fowler's posture). In the second experiment, other participants (n=18) were sitting and observed the avatar walking, jogging or stepping over virtual obstacles. We evaluated the impression of locomotion by measuring the impression of walking (respectively jogging or stepping) and embodiment in both experiments. The results show that participants had the impression of locomotion in the sitting, standing and Fowler's postures. However, Fowler's posture significantly decreased both the level of embodiment and the impression of locomotion. The sitting posture seems to decrease the sense of agency compared to the standing posture. Results also show that the majority of the participants experienced an impression of locomotion during the virtual walking, jogging, and stepping exercises. The embodiment was not influenced by the type of virtual exercise. Overall, our results suggest that an impression of locomotion can be elicited in different user postures and during different virtual locomotion exercises. They provide valuable insight for numerous VR applications in which the user observes a self-avatar moving, such as video games, gait rehabilitation, training, etc.

8.2.4 What Can I Do There? Controlling AR Self-Avatars to Better Perceive Affordances of the Real World

Participants: Anatole Lécuyer [contact], Adélaïde Genay.

In this work 39 we explored a new usage of Augmented Reality (AR) to extend perception and interaction within physical areas ahead of ourselves. To do so, we proposed to detach ourselves from our physical position by creating a controllable "digital copy" of our body that can be used to navigate in local space from a third-person perspective (see Figure 10). With such a viewpoint, we aim to improve our mental representation of distant space and understanding of action possibilities (called affordances), without requiring us to physically enter this space. Our approach relies on AR to virtually integrate the user’s body in remote areas in the form of an avatar. We discuss concrete application scenarios and propose several techniques to manipulate avatars in the third person as a part of a larger conceptual framework. Finally, through a user study employing one of the proposed techniques (puppeteering), we evaluate the validity of using third-person embodiment to extend our perception of the real world to areas outside of our proximal zone. We found that this approach succeeded in enhancing the user's accuracy and confidence when estimating their action capabilities at distant locations.

We propose a novel approach to increase the connection with a self-avatar in virtual reality (A), by displaying its physiological state and physical exertion. It is based on a multi-sensory setup (B) involving visual, auditory and haptic displays. It includes vi…

Control of self-avatars visualized through an Augmented Reality headset to better perceive interactions and affordances in the physical surroundings. Left: Testing fire exit paths with a gamepad. Center: Planning and testing a route before climbing by controlling the avatar's limbs with gestures. Right: Evaluating possible actions on a distant step stool with body-tracking mapping.

This work was done in collaboration with Inria POTIOC team.

8.2.5 Investigating Dual Body Representations During Anisomorphic 3D Manipulation

Participants: Ferran Argelaguet [contact], Anatole Lécuyer.

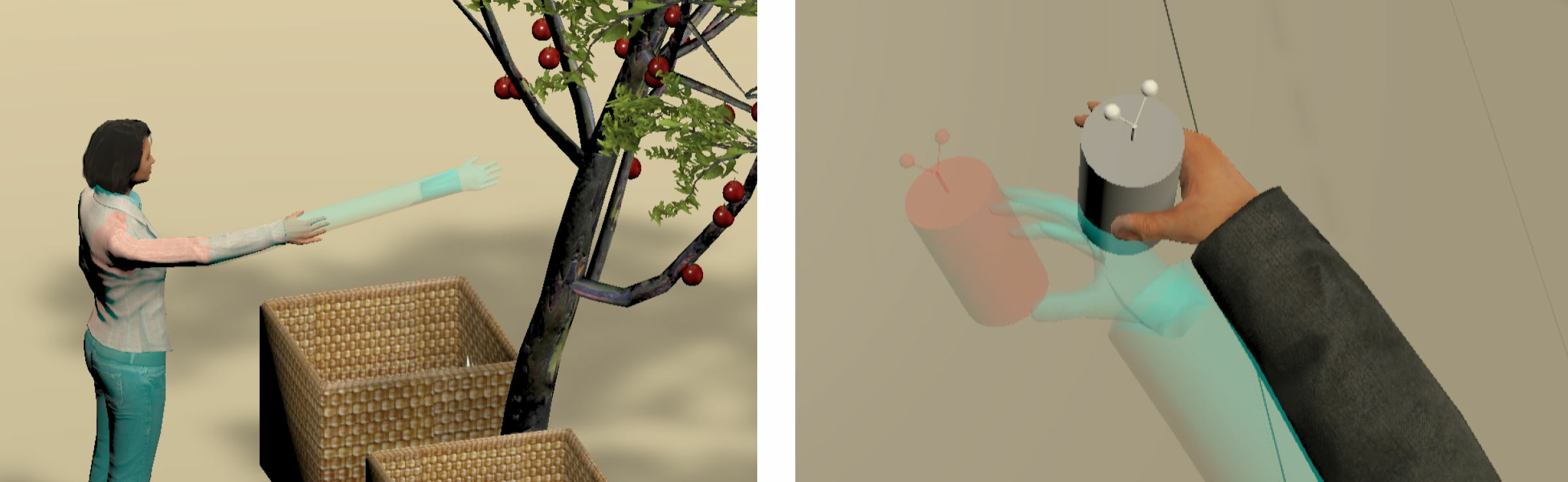

In virtual reality, several manipulation techniques distort users’ motions, for example to reach remote objects or increase precision. These techniques can become problematic when used with avatars, as they create a mismatch between the real performed action and the corresponding displayed action, which can negatively impact the sense of embodiment. In this work 17, we propose to use a dual representation during anisomorphic interaction (see Figure 11). A co-located representation serves as a spatial reference and reproduces the exact users’ motion, while an interactive representation is used for distorted interaction. We conducted two experiments, investigating the use of dual representations with amplified motion (with the Go-Go technique) and decreased motion (with the PRISM technique). Two visual appearances for the interactive representation and the co-located one were explored. This exploratory study investigating dual representations in this context showed that it was possible to feel a global sense of embodiment towards such representations, and that they had no impact on performance. While interacting seemed more important than showing exact movements for agency during out-of-reach manipulation, people felt more in control of the realistic arm during close manipulation. We also found that people globally preferred having a single representation, but opinions diverge especially for the Go-Go technique.

This work was done in collaboration with the Inria Mimetic Team.
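As an illustration of the two kinds of motion distortion mentioned in Section 8.2.5, the following minimal Python sketch shows the commonly cited mappings behind Go-Go (non-linear amplification of reach beyond a distance threshold) and a simplified PRISM-style gain (speed-dependent down-scaling). The function names and all numeric values are assumptions chosen for illustration; they are not the parameters used in the study, and the PRISM sketch omits the offset-recovery step of the original technique.

```python
def gogo_virtual_distance(real_distance, threshold, gain):
    """Go-Go style mapping: 1:1 below the threshold, quadratic amplification beyond it.

    real_distance and threshold are measured from the user's torso (same unit);
    gain is a hypothetical amplification coefficient.
    """
    if real_distance < threshold:
        return real_distance
    return real_distance + gain * (real_distance - threshold) ** 2


def prism_gain(hand_speed, min_speed, scaling_speed):
    """Simplified PRISM-style control/display gain based on hand speed.

    Below min_speed the motion is treated as noise (gain 0); between min_speed
    and scaling_speed the motion is scaled down; above scaling_speed it is 1:1.
    """
    if hand_speed <= min_speed:
        return 0.0
    if hand_speed < scaling_speed:
        return hand_speed / scaling_speed
    return 1.0


if __name__ == "__main__":
    # Illustrative values only (metres and metres per second).
    for reach in (0.2, 0.4, 0.6):
        print(reach, gogo_virtual_distance(reach, threshold=0.4, gain=5.0))
    for speed in (0.005, 0.1, 0.3):
        print(speed, prism_gain(speed, min_speed=0.01, scaling_speed=0.2))
```

In both cases the displayed hand no longer matches the real hand, which is the mismatch the co-located representation is meant to compensate for.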

8.2.6 Studying the Role of Self and External Touch in the Appropriation of Dysmorphic Hands

Participants: Jean-Marie Normand [contact], Antonin Cheymol, Rebecca Fribourg, Anatole Lécuyer, Ferran Argelaguet.

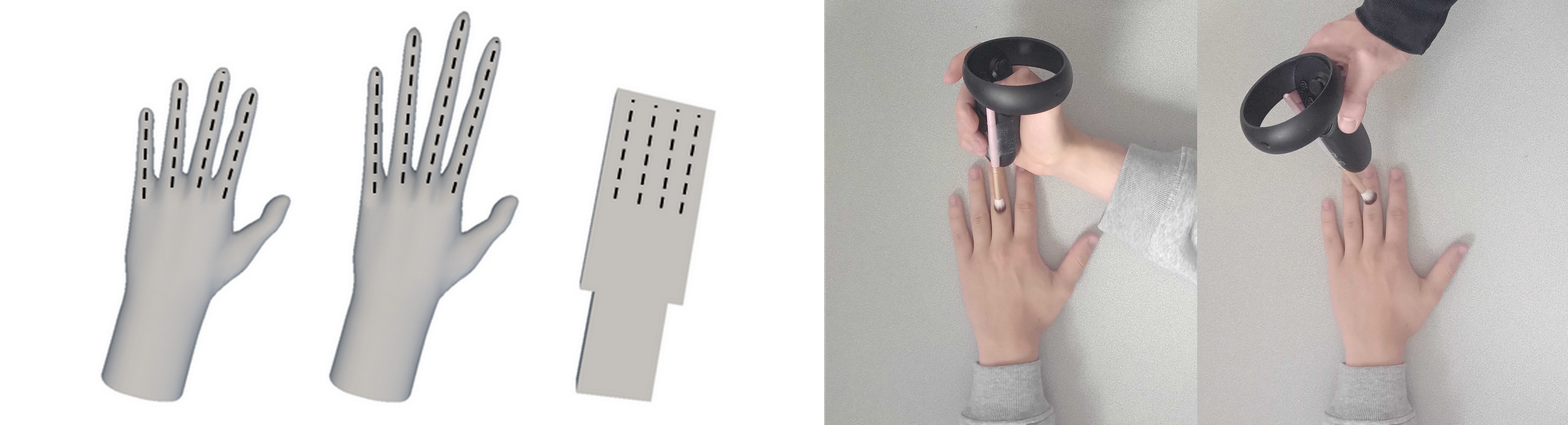

In Virtual Reality, self-touch (ST) stimulation is a promising method for inducing a sense of body ownership (SoBO) that does not require an external effector. However, its applicability to dysmorphic bodies has not been explored yet and remains uncertain due to the requirement to provide incongruent visuomotor sensations. In this work 31, we studied the effect of ST stimulation on dysmorphic hands via haptic retargeting, as compared to a classical external-touch (ET) stimulation, on the SoBO (see Figure 12). Our results indicate that ST can induce levels of dysmorphic SoBO similar to those of ET stimulation, but that some types of dysmorphism might decrease the ST stimulation accuracy due to the nature of the retargeting that they induce.

This work was done in collaboration with the University of Tokyo.

8.2.7 Manipulating the Sense of Embodiment in Virtual Reality: a study of the interactions between the senses of agency, ownership and self-location

Participants: Jean-Marie Normand [contact], Martin Guy, Guillaume Moreau.

In Virtual Reality (VR), the Sense of Embodiment (SoE) corresponds to the feeling of controlling and owning a virtual body, usually referred to as an avatar. The SoE is generally divided into three components: the Sense of Agency (SoA) which characterises the level of control of the user over the avatar, the Sense of Self-Location (SoSL) which is the feeling of being located in the avatar and the Sense of Body-Ownership (SoBO) that represents the attribution of the virtual body to the user. While previous studies showed that the SoE can be manipulated by disturbing either the SoA, the SoBO or the SoSL, the relationships and interactions between these three components still remain unclear. In this work 33, we aim at extending the understanding of the SoE and the interactions between its components by 1) experimentally manipulating them in VR via biased visual feedback, and 2) understanding if each sub-component can be selectively altered or not. To do so, we designed a within-subject experiment (see Figure 13) where 47 right-handed participants had to perform movements of their right hand under different experimental conditions impacting the sub-components of embodiment: the SoA was modified by impacting the control of the avatar with biased visual feedback, the SoBO was altered by modifying the realism of the virtual right hand (anthropomorphic cartoon hand or non-anthropomorphic stick “fingers”) and the SoSL was controlled via the user's point of view (first or third person). After each trial, participants rated their level of agency, ownership and self-location on a 7-item Likert scale. Analysis of the results revealed that the three components could not be selectively altered in this experiment. Nevertheless, these preliminary results pave the way to further studies.

This work was done in collaboration with the University of Bordeaux.

8.2.8 Comparing Experimental Designs for Virtual Embodiment Studies

Participants: Anatole Lécuyer [contact], Ferran Argelaguet, Grégoire Richard.

When designing virtual embodiment studies, one of the key choices is the nature of the experimental factors, either between-subjects or within-subjects. However, it is well known that each design has advantages and disadvantages in terms of statistical power, sample size requirements and confounding factors. In this work 36, we reported a within-subjects experiment (N=92) comparing self-reported embodiment scores under a visuomotor task with two conditions: synchronous motions and asynchronous motions with a latency of 300ms. With the gathered data, using a Monte-Carlo method, we created numerous simulations of within- and between-subjects experiments by selecting subsets of the data. In particular, we explored the impact of the number of participants on the replicability of the results of the within-subjects experiment (N=92). For the between-subjects simulations, only the first condition for each user was considered to create the simulations. The results showed that while the replicability of the results increased as the number of participants increased for the within-subjects simulations, between-subjects simulations were not able to replicate the initial results, no matter the number of participants. We discuss the potential reasons that could have led to this surprising result and potential methodological practices to mitigate them.

This work was done in collaboration with the Inria team Loki.
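The resampling procedure described in Section 8.2.8 can be sketched as follows. This is a minimal Python illustration under stated assumptions, not the analysis pipeline of the study: the dataset is synthetic (`make_synthetic_dataset` is a hypothetical stand-in for the 92 recorded participants), the statistical tests are hand-written paired and Welch t statistics, and the replication criterion `t > t_crit` with `t_crit = 2.0` is a rough placeholder for a proper significance test. The synthetic data contain no order effect, so the sketch only illustrates the subsampling logic and does not reproduce the reported finding.

```python
import random
import statistics
from math import sqrt


def paired_t(sync_scores, async_scores):
    """Paired t statistic on per-participant differences (sync minus async)."""
    diffs = [s - a for s, a in zip(sync_scores, async_scores)]
    return statistics.mean(diffs) / (statistics.stdev(diffs) / sqrt(len(diffs)))


def welch_t(group_a, group_b):
    """Welch t statistic for two independent groups."""
    se_a = statistics.variance(group_a) / len(group_a)
    se_b = statistics.variance(group_b) / len(group_b)
    return (statistics.mean(group_a) - statistics.mean(group_b)) / sqrt(se_a + se_b)


def make_synthetic_dataset(n, rng):
    """Hypothetical data: (first_condition, sync_score, async_score) per participant."""
    data = []
    for _ in range(n):
        baseline = rng.gauss(5.0, 1.0)  # individual rating tendency
        sync = baseline + rng.gauss(0.0, 0.3)
        async_ = baseline - 0.5 + rng.gauss(0.0, 0.3)
        data.append((rng.choice(["sync", "async"]), sync, async_))
    return data


def replication_rate(data, sample_size, design, runs, rng, t_crit=2.0):
    """Fraction of simulated experiments in which sync scores exceed async scores."""
    hits = 0
    for _ in range(runs):
        subset = rng.sample(data, sample_size)
        if design == "within":
            t = paired_t([p[1] for p in subset], [p[2] for p in subset])
        else:
            # Between-subjects: keep only the first condition each participant saw.
            sync_first = [p[1] for p in subset if p[0] == "sync"]
            async_first = [p[2] for p in subset if p[0] == "async"]
            if len(sync_first) < 2 or len(async_first) < 2:
                continue  # not enough data in one group; counted as a failure
            t = welch_t(sync_first, async_first)
        if t > t_crit:
            hits += 1
    return hits / runs


if __name__ == "__main__":
    rng = random.Random(0)
    dataset = make_synthetic_dataset(92, rng)
    for n in (10, 20, 40, 80):
        print(n,
              replication_rate(dataset, n, "within", 200, rng),
              replication_rate(dataset, n, "between", 200, rng))
```

Plotting the two rates against the subset size gives the kind of replicability curve the paragraph refers to.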

8.3 Haptic Feedback

8.3.1 Use of Electrotactile Feedback for Finger-Based Interactions in Virtual Reality

Participants: Ferran Argelaguet [contact], Sebastian Saint-Vizcay, Panagiotis Kourtesis.

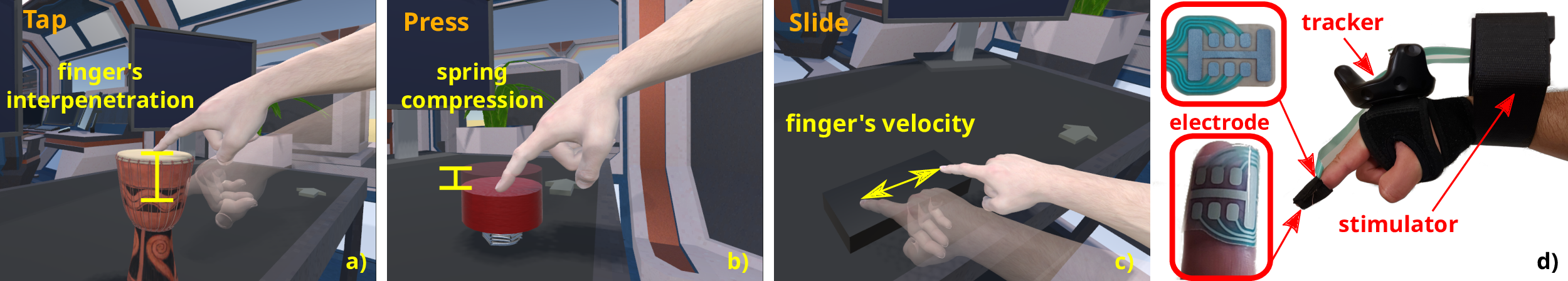

The use of electrotactile feedback in Virtual Reality (VR) has shown promising results for providing tactile information and sensations. While progress has been made to provide custom electrotactile feedback for specific interaction tasks, it remains unclear which modulations and rendering algorithms are preferred in rich interaction scenarios.

First, we proposed a technological overview of electrotactile feedback, as well as a systematic review and meta-analysis of its applications for hand-based interactions 19. We discussed the different electrotactile systems according to the type of application. We also discussed a quantitative aggregation of the findings to offer a high-level overview of the state of the art, and suggested future directions. Moreover, we proposed a unified tactile rendering architecture and explored the most promising modulations to render finger interactions in VR 37, 38. Based on a literature review, we designed six electrotactile stimulation patterns/effects (EFXs) striving to render different tactile sensations. In a user study (N=18), we assessed the six EFXs in three diverse finger interactions: 1) tapping on a virtual object; 2) pressing down a virtual button; 3) sliding along a virtual surface (see Figure 14). Results showed that the preference for certain EFXs depends on the task at hand. No significant preference was detected for tapping (short and quick contact); EFXs that render dynamic intensities or dynamic spatio-temporal patterns were preferred for pressing (continuous dynamic force); EFXs that render moving sensations were preferred for sliding (surface exploration). The results showed the importance of the coherence between the modulation and the interaction being performed, and the study showed the versatility of electrotactile feedback and its efficiency in rendering different haptic information and sensations. Finally, we examined the association between performance and perception and the potential effects that tactile feedback modalities (electrotactile, vibrotactile) could generate 20. In a user study (N=24), participants performed a standardized Fitts's law target acquisition task using three feedback modalities: visual, visuo-electrotactile, and visuo-vibrotactile. The users completed 3 target sizes × 2 distances × 3 feedback modalities = 18 trials. The size perception, distance perception, and (movement) time perception were assessed at the end of each trial. Performance-wise, the results showed that electrotactile feedback facilitates significantly better accuracy than vibrotactile and visual feedback, while vibrotactile feedback provided the worst accuracy. Electrotactile and visual feedback enabled a comparable reaction time, while vibrotactile feedback offered a substantially slower reaction time than visual feedback.

This work was done in collaboration with the Inria RAINBOW team.
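The factorial structure of the Fitts's law study in Section 8.3.1 (3 target sizes × 2 distances × 3 feedback modalities = 18 trials) can be made concrete with a short Python sketch. The target sizes and distances below are hypothetical placeholders, since the report does not state them, and the index of difficulty uses the common Shannon formulation ID = log2(D/W + 1), which is assumed here rather than taken from the study.

```python
from itertools import product
from math import log2

# Hypothetical values in metres; the report does not give the actual ones.
TARGET_SIZES = [0.02, 0.04, 0.06]
TARGET_DISTANCES = [0.20, 0.40]
FEEDBACK_MODALITIES = ["visual", "visuo-electrotactile", "visuo-vibrotactile"]


def index_of_difficulty(distance, width):
    """Shannon formulation of Fitts's index of difficulty, in bits."""
    return log2(distance / width + 1)


def build_trials():
    """Enumerate every size x distance x modality combination once."""
    return [
        {
            "size": size,
            "distance": distance,
            "modality": modality,
            "id_bits": index_of_difficulty(distance, size),
        }
        for size, distance, modality in product(
            TARGET_SIZES, TARGET_DISTANCES, FEEDBACK_MODALITIES
        )
    ]


if __name__ == "__main__":
    trials = build_trials()
    print(len(trials), "trials")
    for trial in trials[:3]:
        print(trial)
```

Smaller or more distant targets yield a higher index of difficulty, which is why size and distance are crossed with the feedback modality in such designs.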

8.3.2 Watch out for the Robot! Designing Visual Feedback Safety Techniques When Interacting With Encountered-Type Haptic Displays.

Participants: Anatole Lécuyer [contact], Ferran Argelaguet.

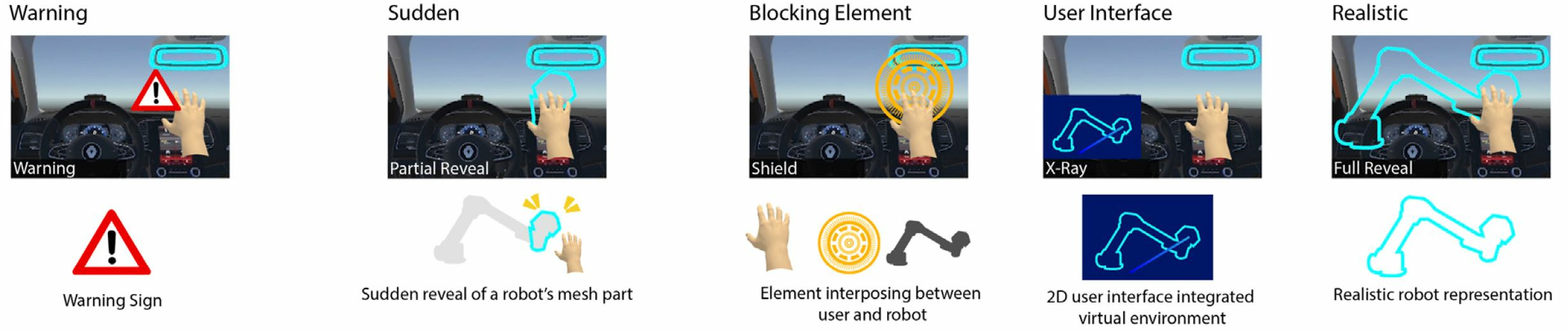

Encountered-Type Haptic Displays (ETHDs) enable users to touch virtual surfaces by using robotic actuators capable of co-locating real and virtual surfaces without encumbering users with actuators. One of the main challenges of ETHDs is to ensure that the robotic actuators do not interfere with the VR experience by avoiding unexpected collisions with users. This work 22 presented a design space for safety techniques using visual feedback to make users aware of the robot’s state and thus reduce unintended potential collisions. The blocks that compose this design space focus on what and when the feedback is displayed and how it protects the user (see Figure 15). Using this design space, a set of 18 techniques was developed exploring variations of the three dimensions. An evaluation questionnaire focusing on immersion and perceived safety was designed and evaluated by a group of experts, which was used to provide a first assessment of the proposed techniques.

This work was done in collaboration with the Inria Loki team.

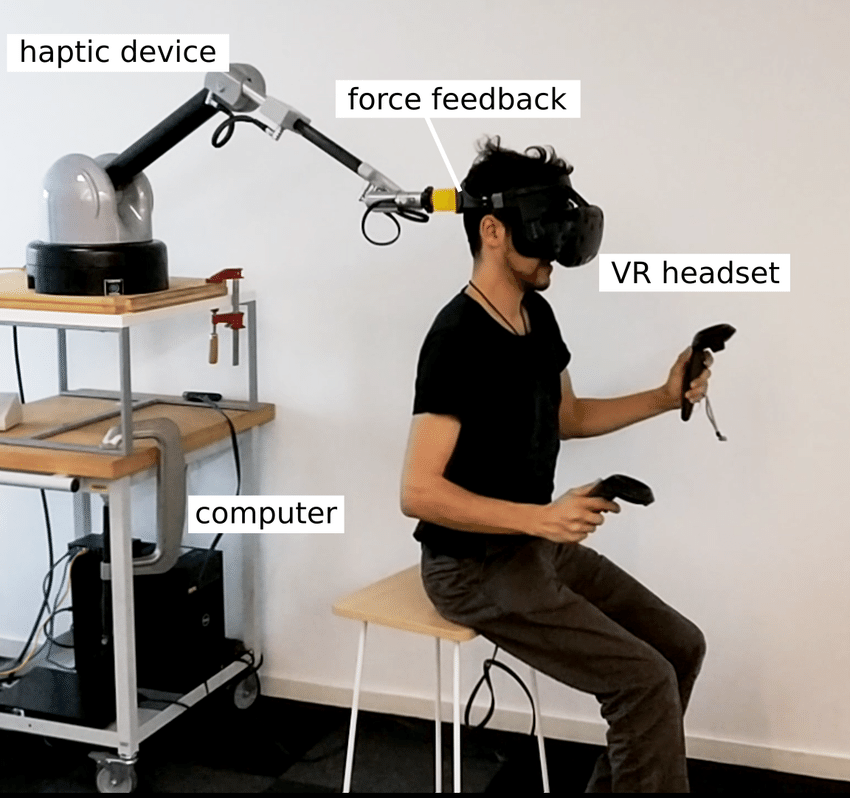

8.3.3 The “Kinesthetic HMD”: Inducing Self-Motion Sensations in Immersive Virtual Reality With Head-Based Force Feedback

Participants: Anatole Lécuyer [contact].

The sensation of self-motion is essential in many virtual reality applications, from entertainment to training, such as flying and driving simulators. If the common approach used in amusement parks is to actuate the seats with cumbersome systems, multisensory integration can also be leveraged to get rich effects from lightweight solutions. In this work 16, we introduced a novel approach called the “Kinesthetic HMD”: actuating a head-mounted display with force feedback in order to provide sensations of self-motion (see Figure 16). We discuss its design considerations and demonstrate an augmented flight simulator use case with a proof-of-concept prototype. We conducted a user study assessing our approach’s ability to enhance self-motion sensations. Taken together, our results show that our Kinesthetic HMD provides significantly stronger and more egocentric sensations than a visual-only self-motion experience. Thus, by providing congruent vestibular and proprioceptive cues related to balance and self-motion, the Kinesthetic HMD represents a promising approach for a variety of virtual reality applications in which motion sensations are prominent.

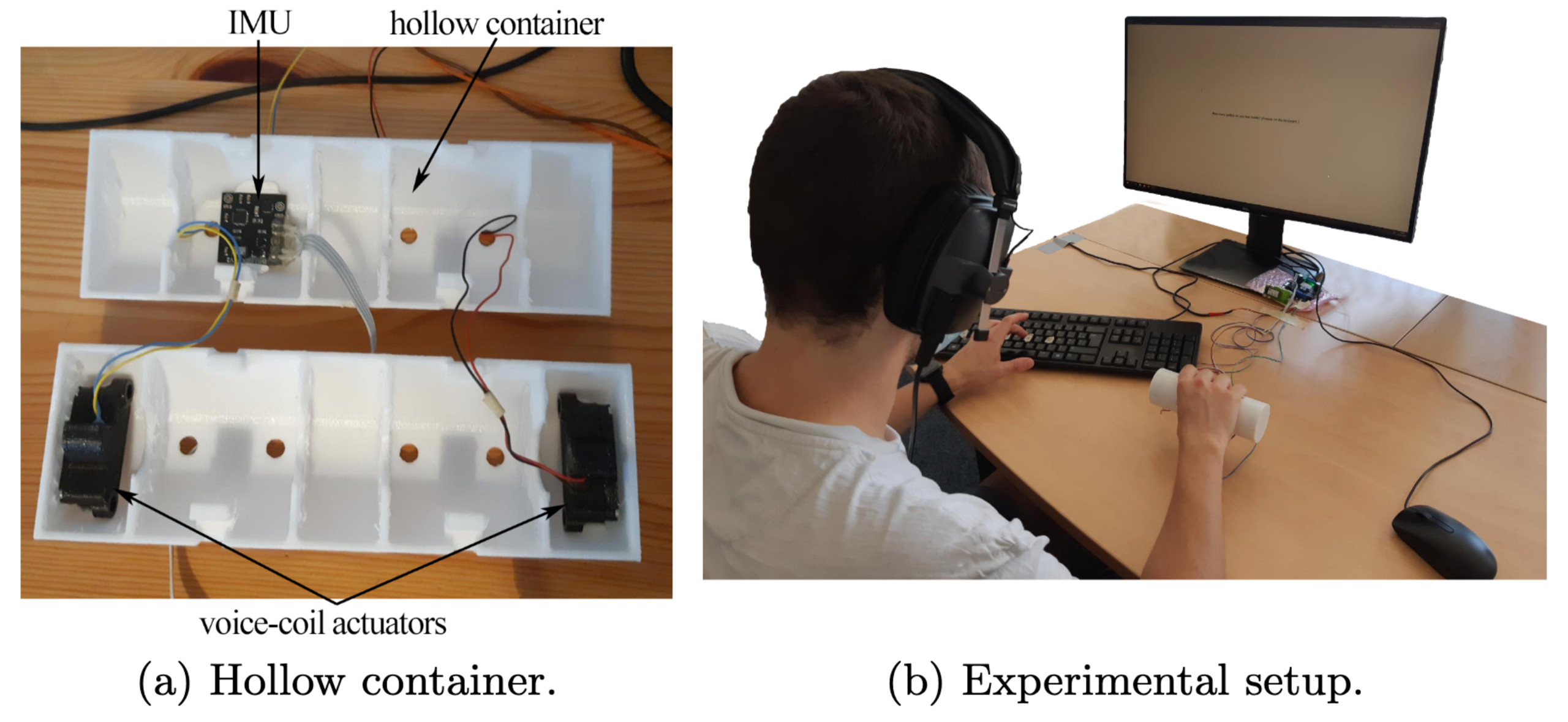

8.3.4 Haptic Rattle: Multi-Modal Rendering of Virtual Objects Inside a Hollow Container

Participants: Valérie Gouranton [contact], Ronan Gaugne [contact], Emilie Hummel, Anatole Lécuyer.

The sense of touch plays a strong role in the perception of the properties and characteristics of hollow objects. The action of shaking a hollow container to get an insight into its content is a natural and common interaction. In this work 35, we present a multi-modal rendering approach for the simulation of virtual moving objects inside a hollow container, based on the combination of haptic and audio cues generated by voice-coil actuators and high-fidelity headphones, respectively. We conducted a user study in which thirty participants were asked to interact with a target cylindrical hollow object and estimate the number of moving objects inside (see Figure 17), relying on haptic feedback only, audio feedback only, or a combination of both. Results indicate that the combination of various senses is important in the perception of the content of a container.

This work was done in collaboration with Inrap, and the Rainbow team.

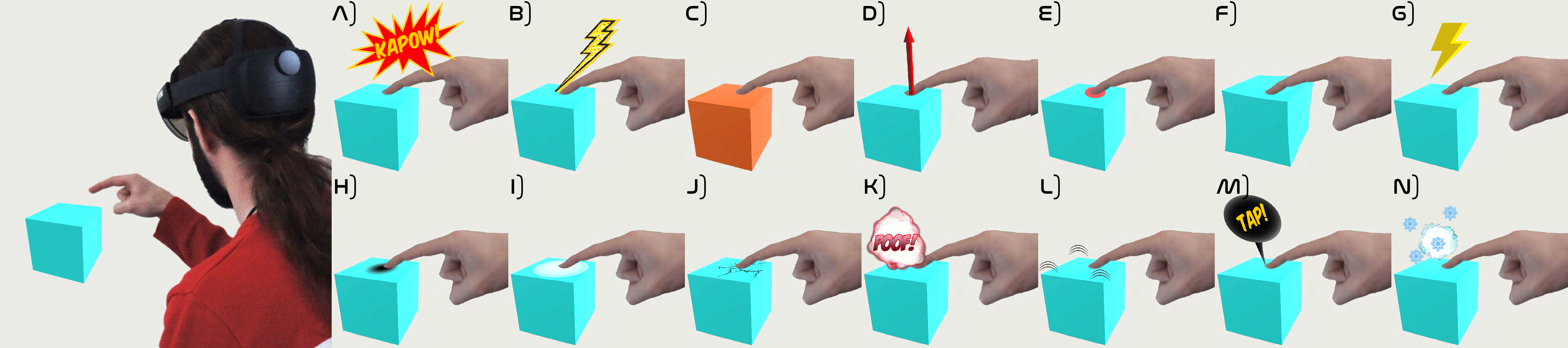

8.3.5 “Kapow”!: Studying the Design of Visual Feedback for Representing Contacts in Extended Reality

Participants: Anatole Lécuyer [contact], Julien Cauquis, Géry Casiez, Jean-Marie Normand.

In the absence of haptic feedback, the perception of contact with virtual objects can rapidly become a problem in extended reality (XR) applications. XR developers often rely on visual feedback to inform the user and display contact information. However, as of today, there is no clear path on how to design and assess such visual techniques. In this work 29, we propose a design space for the creation of visual feedback techniques meant to represent contact with virtual surfaces in XR. Based on this design space, we conceived a set of various visual techniques, including novel approaches based on onomatopoeia and inspired by cartoons, or visual effects based on physical phenomena (see Figure 18). Then, we conducted an online preliminary user study with 60 participants, which assessed 6 visual feedback techniques in terms of user experience. We could notably assess, for the first time, the potential influence of the interaction context by comparing the participants' answers in two different scenarios: industrial versus entertainment conditions. Taken together, our design space and initial results could inspire XR developers for a wide range of applications in which the augmentation of contact seems prominent, such as for vocational training, industrial assembly/maintenance, surgical simulation, videogames, etc.

8.4 Brain Computer Interfaces

8.4.1 Toward an Adapted Neurofeedback for Post-stroke Motor Rehabilitation: State of the Art and Perspectives

Participants: Anatole Lécuyer [contact], Salomé Lefranc, Gabriela Herrera.

Stroke is a severe health issue, and motor recovery after stroke remains an important challenge in the rehabilitation field. Neurofeedback (NFB), as part of a brain–computer interface, is a technique for modulating brain activity using on-line feedback that has proved to be useful in motor rehabilitation for the chronic stroke population in addition to traditional therapies. Nevertheless, its use and applications in the field still leave unresolved questions. The brain pathophysiological mechanisms after stroke remain partly unknown, and the possibilities for intervention on these mechanisms to promote cerebral plasticity are limited in clinical practice. In NFB motor rehabilitation, the aim is to adapt the therapy to the patient’s clinical context using brain imaging, considering the time after stroke, the localization of brain lesions, and their clinical impact, while taking into account currently used biomarkers and technical limitations. These modern techniques also allow a better understanding of the physiopathology and neuroplasticity of the brain after stroke. In this work 21, we conducted a narrative literature review of studies using NFB for post-stroke motor rehabilitation. The main goal was to decompose all the elements that can be modified in NFB therapies, which can lead to their adaptation according to the patient's context and according to the current technological limits. Adaptation and individualization of care could derive from this analysis to better meet the patients' needs. We focused on and highlighted the various clinical and technological components considering the most recent experiments. The second goal was to propose general recommendations and highlight the limits and perspectives to improve our general knowledge in the field and allow clinical applications. We highlighted the multidisciplinary approach of this work by combining engineering abilities and medical experience. Engineering development is essential for the available technological tools and aims to increase neuroscience knowledge in the NFB topic. This technological development was born out of the real clinical need to provide complementary therapeutic solutions to a public health problem, considering the actual clinical context of the post-stroke patient and the practical limits resulting from it.

This work was done in collaboration with CHU Rennes, LORIA, PERSEUS and EMPENN teams.

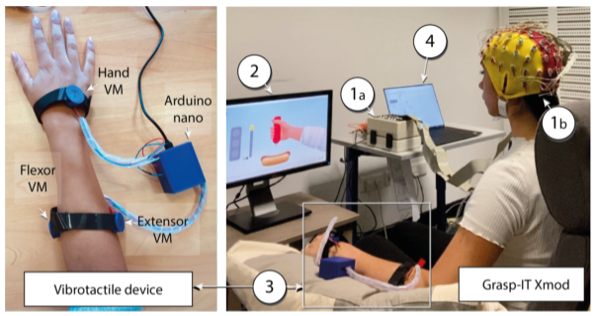

8.4.2 Grasp-IT Xmod: A Multisensory Brain-Computer Interface for Post-Stroke Motor Rehabilitation

Participants: Anatole Lécuyer [contact], Gabriela Herrera.

This work presented Grasp-IT Xmod 34, a game-based brain-computer interface that aims to improve post-stroke upper limb motor rehabilitation (see Figure 19). Indeed, stroke survivors need extensive rehabilitation work including kinesthetic motor imagery (KMI) to stimulate neurons and recover lost motor function. But KMI is intangible without feedback. After recording the electrical activity of the brain with an electroencephalographic system during KMI, multisensory feedback is given based on the quality of the KMI. This feedback is composed of a visual environment and, in a more original way, of a vibrotactile device placed on the forearm. In addition to an affording and motivating situation, the vibrotactile feedback, synchronous to the visual feedback, aims at encouraging the incorporation of the imagined movement in the user in order to improve their KMI performance and consequently the rehabilitation process.

The Grasp-IT Xmod Brain-Computer Interface. Right) 1a) Electroencephalography acquisition system with 1b) referring to the electrode cap. 2) Screen used for the visual feedback. 3) Vibrotactile device used for haptic feedback. 4) Experimenter computer to control the BCI and analyze the EEG signal. Left) Details of the vibrotactile device with three vibration motors (VM).

This work was done in collaboration with UMR LORIA and PERSEUS team (University of Lorraine).

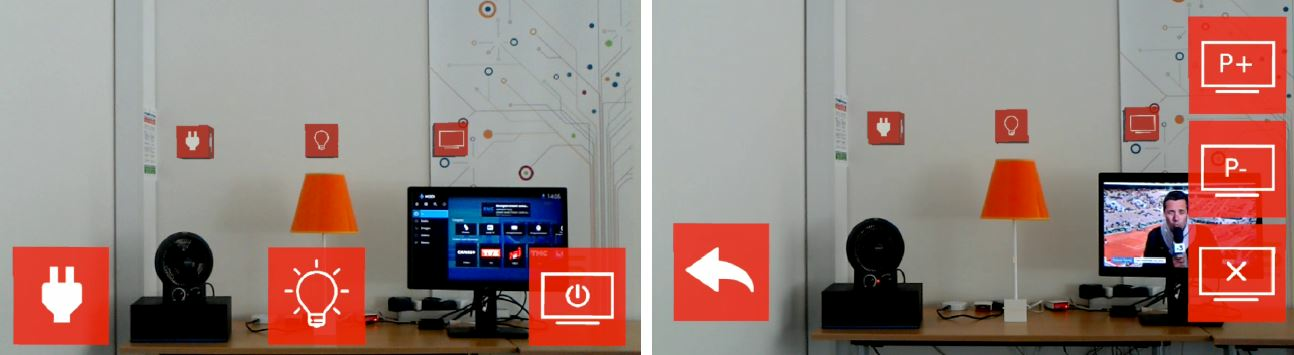

8.4.3 Designing Functional Prototypes Combining BCI and AR for Home Automation

Participants: Anatole Lécuyer [contact].

In this technology report 43 we presented how to design functional prototypes of smart home systems, based on Augmented Reality (AR) and Brain-Computer Interfaces (BCI). A prototype was designed and integrated into a home automation platform, aiming to illustrate the potential of combining EEG-based interaction with Augmented Reality interfaces for operating home appliances. Our proposed solution enables users to interact with different types of appliances from “on-off”-based objects like lamps, to multiple command objects like televisions (see Figure 20). This technology report presents the different steps of the design and implementation of the system, and proposes general guidelines regarding the future development of such solutions. These guidelines start with the description of the functional and technical specifications that should be met, before the introduction of a generic and modular software architecture that can be maintained and adapted for different types of BCI, AR displays and connected objects. Overall this technology report paves the way to the development of a new generation of smart home systems, exploiting brain activity and Augmented Reality for direct interaction with multiple home appliances.

Illustration of the implemented AR interface. The default view of the system (Left) represents the different objects in the field of view, with associated flickering icons. The fan and the light could be switched ON or OFF with a single command. The interaction with television was conducted through a hierarchical menu. After selecting the TV, the possible commands to issue appeared on the interface (Right).
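The interaction logic described above (single-command objects toggled directly, multiple-command objects operated through a hierarchical menu) can be sketched as follows. This is a minimal illustration, not the architecture of the report: the class names `Appliance` and `ARMenu`, the method `on_bci_selection`, and the command names are hypothetical, and the BCI decoder is abstracted away as "whatever component reports which displayed target the user selected".

```python
from dataclasses import dataclass, field
from typing import Dict, List, Optional


@dataclass
class Appliance:
    """A connected object exposing named commands (hypothetical model)."""
    name: str
    commands: List[str]
    state: Dict[str, object] = field(default_factory=dict)

    def execute(self, command: str) -> str:
        if command not in self.commands:
            raise ValueError(f"{self.name} does not support {command!r}")
        if command == "toggle":
            # On-off objects simply flip their power state.
            self.state["on"] = not self.state.get("on", False)
        else:
            self.state["last_command"] = command
        return f"{self.name}:{command}"


class ARMenu:
    """Two-level menu: appliances first, then the commands of the selected one."""

    def __init__(self, appliances: List[Appliance]) -> None:
        self.appliances = {a.name: a for a in appliances}
        self.selected: Optional[Appliance] = None

    def visible_targets(self) -> List[str]:
        """Targets currently displayed as selectable icons."""
        if self.selected is None:
            return list(self.appliances)
        return self.selected.commands + ["back"]

    def on_bci_selection(self, target: str) -> Optional[str]:
        """Handle one selection reported by the (abstracted) BCI decoder."""
        if target not in self.visible_targets():
            raise ValueError(f"{target!r} is not currently displayed")
        if self.selected is None:
            appliance = self.appliances[target]
            if appliance.commands == ["toggle"]:
                return appliance.execute("toggle")  # single-command object
            self.selected = appliance               # open the sub-menu
            return None
        if target == "back":
            self.selected = None
            return None
        result = self.selected.execute(target)
        self.selected = None                        # return to the default view
        return result
```

Because the menu only depends on a list of `Appliance` objects and on a selected target name, the BCI paradigm, the AR display, and the connected objects could each be swapped independently in such a design.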

This work was done in collaboration with Orange.

8.5 Art and Cultural Heritage

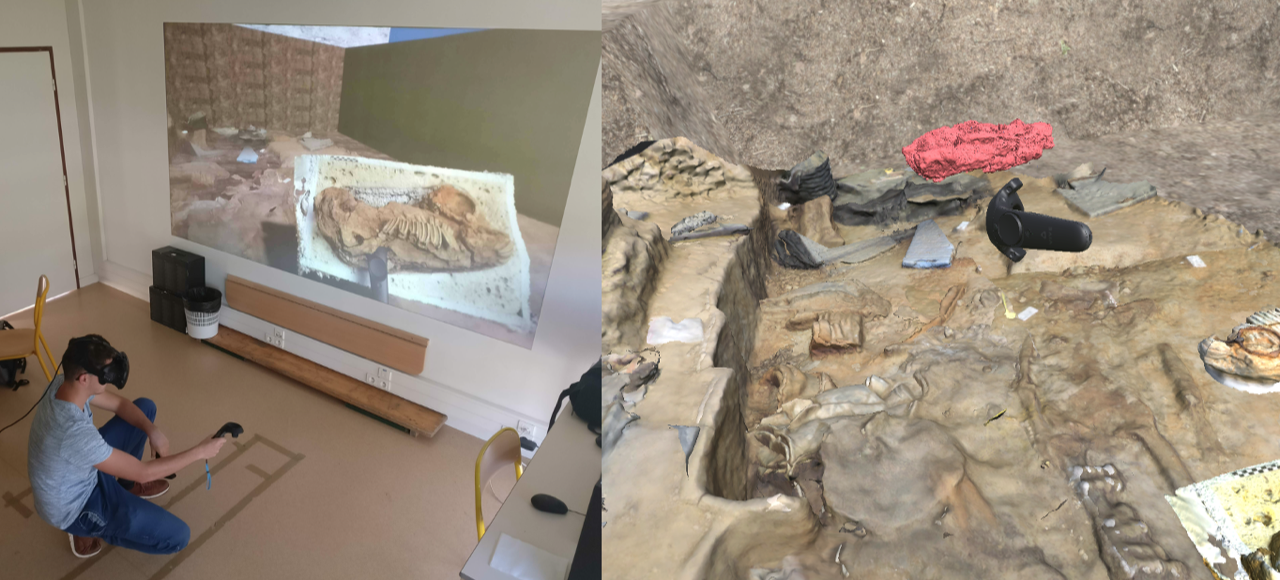

8.5.1 eXtended Reality for Cultural Heritage

Participants: Ronan Gaugne [contact], Valérie Gouranton [contact], Jean-Marie Normand, Flavien Lécuyer.

3D data production techniques, although increasingly used by archaeologists and Cultural Heritage practitioners, are most often limited to the production of 2D or 3D images. Beyond the ways of visualizing these data, it is necessary to consider the interactions and uses they make possible. Virtual Reality, Augmented Reality, and Mixed Reality, collectively known as eXtended Reality, XR, or Cross Reality, make it possible to envisage natural and/or complex interactions with 3D digital environments. These are physical, tangible, or haptic (i.e., with force feedback) interactions, which can be understood through different modalities or metaphors, associated with procedures or gestures. These interactions can be integrated by archaeologists in the "operating chain" of an operation (from the ground to the study phase), or be part of a functional reconstitution of the procedures and gestures of the past, in order to help understand an object, a site, or even a human activity. The different case studies presented in 45 result from collaborations between archaeologists, historians, and computer scientists. They illustrate different interactions in 3D environments, whether they are operational (support for excavation processes, see Figure 21) or functional (archaeological objects, human activities of the past).

This work was done in collaboration with Inrap, and UMR Trajectoires.

8.5.2 Use of Different Digitization Methods for the Analysis of Cut Marks on the Oldest Bone Found in Brittany (France)

Participants: Valérie Gouranton [contact], Ronan Gaugne [contact].

Archaeological 3D digitization of skeletal elements is an essential aspect of the discipline. Its objectives are various: archiving data (especially before destructive sampling, for example for biomolecular studies), study, or pedagogical use allowing the elements to be manipulated. As techniques are rapidly evolving, the question that arises is which methods are appropriate to answer the different questions while guaranteeing sufficient quality of information. The combined use of different 3D technologies for the study of a single Mesolithic bone fragment from Brittany (France), see Figure 22, Left, proposed in 15, was an opportunity to compare different 3D digitization methods. This oldest human bone of Brittany, a clavicle made up of two pieces, was dug up from the Mesolithic shell midden of Beg-er-Vil in Quiberon and dated from ca. 8200 to 8000 years BP. It bears cut marks that are bound to post-mortem processing, carried out on fresh bone in order to remove the integuments, and that need to be better characterized. The clavicle was studied through a process that combines advanced 3D image acquisition, 3D processing, and 3D printing, with the goal of providing relevant support for the experts involved in the work. The bone pieces were first studied with metallographic microscopy, scanned with a CT scan, and digitized with photogrammetry in order to obtain a high-quality textured model. The CT scan appeared to be insufficient for a detailed analysis; the study was thus complemented with a μCT scan providing a very accurate 3D model of the bone. Several 3D-printed copies of the collarbone were produced at different scales in order to support knowledge sharing between the experts involved in the study, see Figure 22, Right. The 3D models generated from μCT and photogrammetry were combined to provide an accurate and detailed 3D model. This model was used to study desquamation and the different cut marks, including their angle of attack. These cut marks were also studied with a traditional binocular magnifier and with digital microscopy. This last technique made it possible to characterize their type, revealing a probable meat-cutting process with a flint tool. This cross-analysis work allows us to document a fundamental heritage piece and to ensure its preservation. Copies are also available for the regional museums.

This work was done in collaboration with Inrap, UMR CReAAH, and UMR ArchAm.

8.5.3 Sport heritage in VR: Real Tennis case study

Participants: Ronan Gaugne [contact], Valérie Gouranton [contact].

Traditional Sports and Games (TSG) are as varied as human cultures. Preserving knowledge of these practices is essential, as they are an expression of intangible cultural heritage, as emphasized by UNESCO in 1989. With the increasing development of virtual reconstructions in the domain of Cultural Heritage, and thanks to advances in the production and 3D animation of virtual humans, interactive simulations and experiences of these activities have emerged to preserve this intangible heritage. In 18, we proposed a methodological approach to design an immersive reconstitution of a TSG in Virtual Reality, with a formalization of the elements involved in such a reconstitution, and we illustrated this approach with the example of real tennis (Jeu de Paume or Courte Paume in French) (see Figure 23). We also presented a first evaluation of the resulting VR application, including performance tests, a preliminary pilot study, and an interview of high-ranked players. Real tennis is a racket sport that has been played for centuries and is considered the ancestor of tennis. It was a very popular sport in Europe during the Renaissance, practiced by every layer of society. It is still practiced today on a few courts around the world, especially in France, the United Kingdom, Australia and the USA. It has been listed in the Inventory of Intangible Cultural Heritage in France since 2012.

This work was done in collaboration with Inrap, and UMR Trajectoires. This work was done in the context of the M2 internship of Pierre Duc-Martin (INSA Rennes).

8.5.4 Could you relax in an artistic co-creative virtual reality experience?

Participants: Ronan Gaugne [contact], Valérie Gouranton [contact], Julien Lomet.

The work presented in 42 contributes to the design and study of artistic collaborative virtual environments through the presentation of an immersive and interactive digital artwork installation and the evaluation of the impact of the experience on visitors' emotional state. The experience is centered on a dance performance, involves collaborative spectators who are engaged in the experience through full-body movements (see Figure 24), and is structured in three phases: a phase of relaxation and discovery of the universe, a phase of co-creation, and a phase of co-active contemplation.

The collaborative artwork “Creative Harmony” was designed within a multidisciplinary team of artists, researchers and computer scientists from different laboratories. The aesthetic of the artistic environment is inspired by German Romanticism painting of the 19th century. In order to foster co-presence, each participant in the experience is associated with an avatar that aims to represent both their body and their movements. The music is an original composition designed to give the universe of “Creative Harmony” a peaceful and meditative ambiance. The evaluation of the impact on visitors' mood is based on the “Brief Mood Introspection Scale” (BMIS), a standard tool widely used in psychological and medical contexts. We also present an assessment of the experience through the analysis of questionnaires filled in by the visitors. We observed an increase in the Positive-Tired indicator and a decrease in the Negative-Relaxed indicator, demonstrating the relaxing capabilities of the immersive virtual environment.

This work was done in collaboration with the team Arts: pratiques et poétiques in University Rennes 2 (Joel Laurent), and UMR Inrev in University Paris 8 (Cedric Plessiet).

9 Bilateral contracts and grants with industry

9.1 Grants with Industry

InterDigital

Participants: Nicolas Olivier, Ferran Argelaguet [contact].

This grant started in February 2019. It supports Nicolas Olivier's CIFRE PhD program with the company InterDigital on “Avatar Stylization”. This PhD is co-supervised with the MimeTIC team. The PhD was successfully defended in March 2022.

Orange Labs

Participants: Lysa Gramoli, Bruno Arnaldi, Valérie Gouranton [contact].

This grant started in October 2020. It supports Lysa Gramoli's PhD program with Orange Labs company on “Simulation of autonomous agents in connected virtual environments”.

Sogea Bretagne

Participants: Vincent Goupil, Bruno Arnaldi, Valérie Gouranton [contact].

This grant started in October 2020. It supports Vincent Goupil's CIFRE PhD program with Sogea Bretagne company on “Hospital 2.0: Generation of Virtual Reality Applications by BIM Extraction”.

10 Partnerships and cooperations

10.1 International initiatives

10.1.1 Participation in other International Programs

SURRÉARISME

Participants: Jean-Marie Normand, Guillaume Moreau.

-

Title:

SURRÉARISME: Exploring Perceptual Realism in Mixed Reality using Novel Near-Eye Display Technologies

-

Partners:

- École Centrale de Nantes, France

- IMT Atlantique Brest, France

- The University of Tokyo, Japan

-

Program:

PHC SAKURA

-

Date/Duration:

February 2022 - December 2022

-

Additional info/keywords:

Mixed Reality; Perception

10.1.2 H2020 projects

H-Reality

Participants: Anatole Lécuyer [contact].

H-Reality project on cordis.europa.eu

-

Title:

Mixed Haptic Feedback for Mid-Air Interactions in Virtual and Augmented Realities

-

Duration:

From October 1, 2018 to March 31, 2022

-

Partners:

- INSTITUT NATIONAL DE RECHERCHE EN INFORMATIQUE ET AUTOMATIQUE (Inria), France

- ULTRALEAP LIMITED (ULTRAHAPTICS), United Kingdom

- ACTRONIKA (ACA), France

- INSTITUT NATIONAL DES SCIENCES APPLIQUEES DE RENNES (INSA Rennes), France

- CENTRE NATIONAL DE LA RECHERCHE SCIENTIFIQUE CNRS (CNRS), France

- TECHNISCHE UNIVERSITEIT DELFT (TU Delft), Netherlands

-

Inria contact:

Claudio Pacchierotti

-

Coordinator:

THE UNIVERSITY OF BIRMINGHAM (UoB), United Kingdom

-

Summary: