Keywords

Computer Science and Digital Science

- A5.1.2. Evaluation of interactive systems

- A5.1.3. Haptic interfaces

- A5.1.7. Multimodal interfaces

- A5.4.4. 3D and spatio-temporal reconstruction

- A5.4.6. Object localization

- A5.4.7. Visual servoing

- A5.6. Virtual reality, augmented reality

- A5.6.1. Virtual reality

- A5.6.2. Augmented reality

- A5.6.3. Avatar simulation and embodiment

- A5.6.4. Multisensory feedback and interfaces

- A5.9.2. Estimation, modeling

- A5.10.2. Perception

- A5.10.3. Planning

- A5.10.4. Robot control

- A5.10.5. Robot interaction (with the environment, humans, other robots)

- A5.10.6. Swarm robotics

- A5.10.7. Learning

- A6.4.1. Deterministic control

- A6.4.3. Observability and Controllability

- A6.4.4. Stability and Stabilization

- A6.4.5. Control of distributed parameter systems

- A6.4.6. Optimal control

- A9.5. Robotics

- A9.7. AI algorithmics

- A9.9. Distributed AI, Multi-agent

Other Research Topics and Application Domains

- B2.4.3. Surgery

- B2.5. Handicap and personal assistances

- B2.5.1. Sensorimotor disabilities

- B2.5.2. Cognitive disabilities

- B2.5.3. Assistance for elderly

- B5.1. Factory of the future

- B5.6. Robotic systems

- B8.1.2. Sensor networks for smart buildings

- B8.4. Security and personal assistance

1 Team members, visitors, external collaborators

Research Scientists

- Paolo Robuffo Giordano [Team leader, CNRS, Senior Researcher, HDR]

- François Chaumette [INRIA, Senior Researcher, HDR]

- Alexandre Krupa [INRIA, Senior Researcher, HDR]

- Claudio Pacchierotti [CNRS, Researcher, HDR]

- Julien Pettré [INRIA, Senior Researcher, until Jun 2022, HDR]

- Marco Tognon [INRIA, Researcher, from Nov 2022]

Faculty Members

- Marie Babel [INSA RENNES, Professor, HDR]

- Quentin Delamare [ENS RENNES, Associate Professor]

- Vincent Drevelle [UNIV RENNES I, Associate Professor]

- Sylvain Guegan [INSA RENNES, Associate Professor]

- Maud Marchal [INSA RENNES, Professor, HDR]

- Éric Marchand [UNIV RENNES I, Professor, HDR]

Post-Doctoral Fellow

- Elodie Bouzbib [Inria]

PhD Students

- Jose Eduardo Aguilar Segovia [INRIA, from Oct 2022]

- Maxime Bernard [CNRS]

- Pascal Brault [INRIA]

- Pierre-Antoine Cabaret [INRIA]

- Antoine Cellier [INSA RENNES]

- Nicola De Carli [CNRS]

- Samuel Felton [UNIV RENNES 1, PhD student in the Lacodam team, co-supervised by Éric Marchand]

- Mathieu Gonzalez [B - COM, CIFRE, until Sep 2022]

- Glenn Kerbiriou [Interdigital]

- Lisheng Kuang [CSC Scholarship]

- Ines Lacote [INRIA]

- Emilie Leblong [POLE ST HELIER]

- Fouad Makiyeh [INRIA]

- Maxime Manzano [INSA RENNES, from Sep 2022]

- Antonio Marino [UNIV RENNES I, from Sep 2022]

- Lendy Mulot [INSA, from Oct 2022]

- Thibault Noel [CREATIVE]

- Erwan Normand [UNIV RENNES I]

- Alexander Oliva [INRIA, until Feb 2022]

- Mandela Ouafo Fonkoua [INRIA, from Oct 2022]

- Maxime Robic [INRIA]

- Lev Smolentsev [INRIA]

- Ali Srour [CNRS]

- John Thomas [INRIA]

- Sebastian Vizcay [INRIA, PhD student in the HYBRID team, co-supervised by Maud Marchal and Claudio Pacchierotti]

- Xi Wang [UNIV RENNES 1, PhD student in the MIMETIC team, co-supervised by Éric Marchand]

- Tairan Yin [INRIA, until Jun 2022]

Technical Staff

- Louise Devigne [INRIA, Engineer]

- Marco Ferro [CNRS, Engineer, from Sep 2022]

- Guillaume Gicquel [CNRS, Engineer, from Oct 2022]

- Guillaume Gicquel [INSA RENNES, Engineer, until Sep 2022]

- Fabien Grzeskowiak [INSA, Engineer, from Jul 2022]

- Fabien Grzeskowiak [INSA RENNES, Engineer, until Jun 2022]

- Thomas Howard [CNRS, Engineer]

- Romain Lagneau [INRIA, Engineer, from Dec 2022]

- Antonio Marino [UNIV RENNES I, Engineer, from Apr 2022 until Aug 2022]

- François Pasteau [INSA RENNES, Engineer]

- Pierre Perraud [INRIA, Engineer, from Dec 2022]

- Fabien Spindler [INRIA]

- Sebastien Thomas [Inria, Engineer, from Feb 2022]

- Thomas Voisin [INRIA, from Sep 2022]

Interns and Apprentices

- Lorenzo Balandi [Univ. Bologna, from Sep 2022]

- Elliott Daniello [INSA, Intern, from Jun 2022 until Sep 2022]

- Eva Goichon [INSA, Intern, from Apr 2022 until Sep 2022]

- Nicolas Laurent [CNRS, Intern, from Feb 2022 until Aug 2022]

- Quentin Milot [INSA, Intern, from Apr 2022 until Sep 2022]

- Lendy Mulot [ENS RENNES, from Feb 2022 until Jul 2022]

- Mathis Robert [CNRS, Intern, from Feb 2022 until Aug 2022]

- Arthur Salaun [CNRS, Intern, from Apr 2022 until Sep 2022]

- Thomas Vitry [ENS Rennes, Intern, from May 2022 until Jul 2022]

- Mark Wijnands [UNIV RENNES I, from Sep 2022]

Administrative Assistant

- Hélène de La Ruée [UNIV RENNES I]

Visiting Scientist

- Unnikrishnan Radhakrishnan [UNIV AARHUS, from Sep 2022, Visiting PhD student]

2 Overall objectives

The long-term vision of the Rainbow team is to develop the next generation of sensor-based robots able to navigate and/or interact in complex unstructured environments together with human users. Clearly, the word “together” can have very different meanings depending on the particular context: for example, it can refer to mere co-existence (robots and humans share some space while performing independent tasks), human-awareness (the robots need to be aware of the human state and intentions for properly adjusting their actions), or actual cooperation (robots and humans perform some shared task and need to coordinate their actions).

One could perhaps argue that the two goals of robot autonomy and human involvement are somehow in conflict, since higher robot autonomy should imply lower (or no) human intervention. However, we believe that our general research direction is well motivated for the following reasons:

- despite the many advances in robot autonomy, complex and high-level cognitive-based decisions are still out of reach. In most applications involving tasks in unstructured environments, uncertainty, and interaction with the physical world, human assistance is still necessary, and will most probably remain so for the next decades. On the other hand, robots are extremely capable of autonomously executing specific and repetitive tasks with great speed and precision, and of operating in dangerous/remote environments, while humans possess unmatched cognitive capabilities and world awareness which allow them to take complex and quick decisions;

- the cooperation between humans and robots is often an implicit constraint of the robotic task itself. Consider for instance the case of assistive robots supporting injured patients during their physical recovery, or human augmentation devices. It is then important to study proper ways of implementing this cooperation;

- finally, safety regulations can require the presence at all times of a person in charge of supervising and, if necessary, of taking direct control of the robotic workers. For example, this is a common requirement in all applications involving tasks in public spaces, like autonomous vehicles in crowded spaces, or even UAVs when flying in civil airspace such as over urban or populated areas.

Within this general picture, the Rainbow activities will be particularly focused on the case of (shared) cooperation between robots and humans by pursuing the following vision: on the one hand, empower robots with a large degree of autonomy so that they can operate effectively in non-trivial environments (e.g., outside completely defined factory settings); on the other hand, include human users in the loop so that they remain in (partial and bilateral) control of some aspects of the overall robot behavior. We plan to address these challenges from the methodological, algorithmic and application-oriented perspectives. The Rainbow activities will be articulated along four research axes: three supporting axes (Optimal and Uncertainty-Aware Sensing; Advanced Sensor-based Control; Haptics for Robotics Applications), which are meant to develop methods, algorithms and technologies for realizing the central theme of Shared Control of Complex Robotic Systems.

3 Research program

3.1 Main Vision

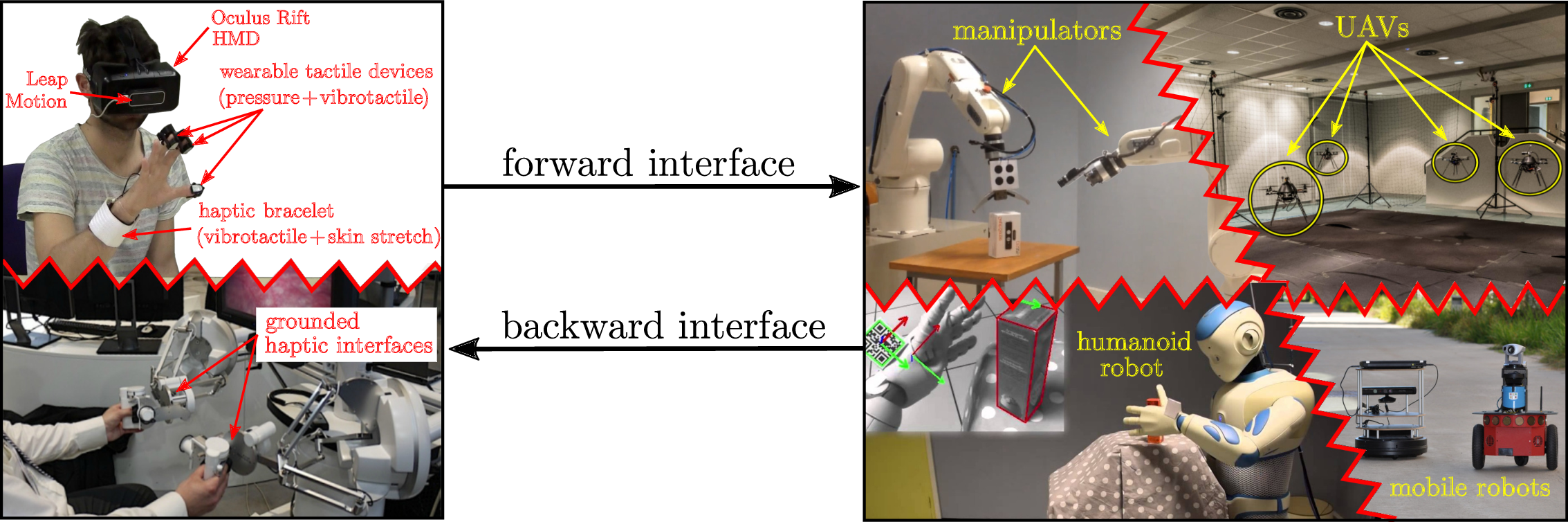

The vision of Rainbow (and its foreseen applications) raises several general scientific challenges: a high level of autonomy for complex robots in complex (unstructured) environments; forward interfaces for letting an operator give high-level commands to the robot; backward interfaces for informing the operator about the robot “status”; and user studies for assessing the best interfacing, which will clearly depend on the particular task/situation. Within Rainbow we plan to tackle these challenges at different levels of depth:

- the methodological and algorithmic side of the sought human-robot interaction will be the main focus of Rainbow. Here, we will be interested in advancing the state of the art in sensor-based online planning, control and manipulation for mobile/fixed robots. For instance, while most classical control approaches (especially sensor-based ones) have been essentially reactive, we believe that less myopic strategies based on online/reactive trajectory optimization will be needed for the future Rainbow activities. The core ideas of Model-Predictive Control approaches (also known as Receding Horizon) or, in general, numerical optimal control methods will play a role in the Rainbow activities, allowing the robots to reason/plan over some future time window and better cope with constraints. We will also consider extending classical sensor-based motion control/manipulation techniques to more realistic scenarios, such as deformable/flexible objects (“Advanced Sensor-based Control” axis). Finally, it will also be important to devote research efforts to the field of Optimal Sensing, in the sense of generating (again) trajectories that can optimize the state estimation problem in the presence of scarce sensory inputs and/or non-negligible measurement and process noise, which is especially relevant for mobile robots (“Optimal and Uncertainty-Aware Sensing” axis). We also aim at addressing the case of coordination between a single human user and multiple robots where, clearly, the autonomy part plays an even more crucial role (no human can control multiple robots at once, thus a high degree of autonomy will be required by the robot group for executing the human commands);

- the interfacing side will also be a focus of the Rainbow activities. As explained above, we will be interested in both the forward (human → robot) and backward (robot → human) interfaces. The forward interface will be mainly addressed from the algorithmic point of view, i.e., how to map the few degrees of freedom available to a human operator (usually in the order of 3–4) into complex commands for the controlled robot(s). This mapping will typically be mediated by an “AutoPilot” onboard the robot(s) for autonomously assessing if the commands are feasible and, if not, how to least modify them (“Advanced Sensor-based Control” axis).

The backward interface will, instead, mainly consist of visual/haptic feedback for the operator. Here, we aim at exploiting our expertise in using force cues for informing an operator about the status of the remote robot(s). However, the sole use of classical grounded force feedback devices (e.g., the typical force-feedback joysticks) will not be enough, due to the different kinds of information that will have to be provided to the operator. In this context, wearable haptic interfaces, which have recently attracted growing interest, will be investigated in depth (these include, e.g., devices able to provide vibro-tactile information to the fingertips, wrist, or other parts of the body). The main challenges in these activities will be the mechanical design (and construction) of suitable wearable interfaces for the tasks at hand, and the generation of force cues for the operator: the force cues will be a (complex) function of the robot state, therefore motivating research in algorithms for mapping the robot state into a few variables (the force cues) (“Haptics for Robotics Applications” axis);

- the evaluation side will assess the proposed interfaces through user studies, or acceptability studies with human subjects. Although this activity will not be a main focus of Rainbow (complex user studies are beyond the scope of our core expertise), we will nevertheless devote some effort to achieving a reasonable level of user evaluation by applying standard statistical analysis based on psychophysical procedures (e.g., randomized tests and ANOVA statistical analysis). This will be particularly true for the activities involving the use of smart wheelchairs, which are intended to be used by human users and to operate inside human crowds. Therefore, we will be interested in gaining some level of understanding of how semi-autonomous robots (a wheelchair in this example) can predict the human intention, and how humans can react to a semi-autonomous mobile robot.

Figure 1 illustrates the prototypical activities foreseen in Rainbow. On the right-hand side, complex robots (dual manipulators, humanoid, single/multiple mobile robots) need to perform some task with a high degree of autonomy. On the left-hand side, a human operator gives some high-level commands and receives visual/haptic feedback aimed at informing her/him as well as possible of the robot status. Again, the main challenges that Rainbow will tackle to address these issues are (in order of relevance): methods and algorithms, mostly based on first-principle modeling and, when possible, on numerical methods for online/reactive trajectory generation, for endowing the robots with high autonomy; design and implementation of visual/haptic cues for interfacing the human operator with the robots, with special attention to novel combinations of grounded/ungrounded (wearable) haptic devices; user and acceptability studies.

3.2 Main Components

The four research axes of Rainbow are summarized hereafter.

3.2.1 Optimal and Uncertainty-Aware Sensing

Future robots will need to have a large degree of autonomy for, e.g., interpreting the sensory data for accurate estimation of the robot and world state (which can possibly include the human users), and for devising motion plans able to take into account many constraints (actuation, sensor limitations, environment), including also the state estimation accuracy (i.e., how well the robot/environment state can be reconstructed from the sensed data). In this context, we will be particularly interested in:

- devising trajectory optimization strategies able to maximize some norm of the information gain gathered along the trajectory (and with the available sensors). This can be seen as an instance of Active Sensing, with the main focus on online/reactive trajectory optimization strategies able to take into account several requirements/constraints (sensing/actuation limitations, noise characteristics). We will also be interested in the coupling between optimal sensing and concurrent execution of additional tasks (e.g., navigation, manipulation);

- formal methods for guaranteeing the accuracy of localization/state estimation in mobile robotics, mainly exploiting tools from interval analysis. The interest of these methods is their ability to provide possibly conservative but guaranteed bounds on the best accuracy one can obtain with a given robot/sensor pair; they can thus be used for planning purposes or for system design (choice of the best sensors for a given robot/task);

- localization/tracking of objects with poor/unknown or deformable shape, which will be of paramount importance for allowing robots to estimate the state of “complex objects” (e.g., human tissues in medical robotics, elastic materials in manipulation) in order to control their pose/interaction with the objects of interest.
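As a purely illustrative sketch of the active sensing idea (not the team's actual algorithms), the snippet below compares two candidate trajectories by the smallest eigenvalue of the Fisher information they collect about a static 2D target observed through noisy range measurements. The measurement model, the noise level `sigma`, the candidate trajectories and all function names are assumptions made for this example; in practice the information also depends on the unknown target position, so a current estimate would have to be used.

```python
import math

def fisher_information(target, waypoints, sigma=0.1):
    """Fisher information about a static 2D target position, assuming one
    range measurement with Gaussian noise (std sigma) per waypoint.
    Returned as (a, b, c), standing for the symmetric matrix [[a, b], [b, c]]."""
    a = b = c = 0.0
    for qx, qy in waypoints:
        dx, dy = target[0] - qx, target[1] - qy
        dist = math.hypot(dx, dy)
        ux, uy = dx / dist, dy / dist  # gradient of the range w.r.t. the target
        a += ux * ux / sigma**2
        b += ux * uy / sigma**2
        c += uy * uy / sigma**2
    return a, b, c

def smallest_eigenvalue(info):
    """Closed-form smallest eigenvalue of a symmetric 2x2 matrix."""
    a, b, c = info
    return 0.5 * (a + c) - math.hypot(0.5 * (a - c), b)

def most_informative(target_estimate, candidates, sigma=0.1):
    """Pick the candidate trajectory maximizing the smallest eigenvalue
    of the collected information (an E-optimality criterion)."""
    return max(
        candidates,
        key=lambda name: smallest_eigenvalue(
            fisher_information(target_estimate, candidates[name], sigma)
        ),
    )

# Hypothetical scenario: target estimated at the origin, two candidate paths.
target_estimate = (0.0, 0.0)
candidates = {
    # Straight approach: every measurement has the same bearing.
    "radial": [(-5.0 + 0.5 * k, 0.0) for k in range(5)],
    # Quarter circle around the target: bearings are spread over 90 degrees.
    "arc": [(-5.0 * math.cos(k * math.pi / 8), -5.0 * math.sin(k * math.pi / 8))
            for k in range(5)],
}
best = most_informative(target_estimate, candidates)
```

In this toy setting the straight approach leaves one direction of the target position unobserved (its information matrix is singular), whereas the arc excites both directions. Other norms of the information (trace, determinant) could be used in place of the smallest eigenvalue.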

3.2.2 Advanced Sensor-based Control

One of the main competences of the previous Lagadic team has been, generally speaking, the topic of sensor-based control, i.e., how to exploit (typically onboard) sensors for controlling the motion of fixed/ground robots. The main emphasis has been on devising ways to directly couple the robot motion with the sensor outputs, and on inverting this mapping for driving the robots towards a configuration specified as a desired sensor reading (thus, directly in sensor space). This general idea has been applied to very different contexts: mainly standard vision (hence the Visual Servoing keyword), but also audio, ultrasound imaging, and RGB-D.
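To make the idea of inverting the motion-to-sensor mapping concrete, here is a minimal sketch of the textbook image-based visual servoing law for point features, v = -λ L⁺ (s - s*), where L is the interaction matrix relating the camera velocity to the feature velocity. It relies on NumPy only and does not use the ViSP API; the function names, the gain and the assumption that point depths are known are choices made for this illustration.

```python
import numpy as np

def point_interaction_matrix(x, y, Z):
    """Interaction matrix of a normalized image point (x, y) at depth Z,
    mapping the camera velocity (vx, vy, vz, wx, wy, wz) to (x_dot, y_dot)."""
    return np.array([
        [-1.0 / Z, 0.0, x / Z, x * y, -(1.0 + x * x), y],
        [0.0, -1.0 / Z, y / Z, 1.0 + y * y, -x * y, -x],
    ])

def ibvs_velocity(points, desired, depths, gain=0.5):
    """Camera velocity that makes the feature error s - s* decrease
    exponentially (to first order): v = -gain * pinv(L) @ (s - s*)."""
    L = np.vstack([
        point_interaction_matrix(x, y, Z)
        for (x, y), Z in zip(points, depths)
    ])
    error = (np.asarray(points) - np.asarray(desired)).ravel()
    return -gain * np.linalg.pinv(L) @ error

# Hypothetical example: four points forming a square, observed at 1 m,
# whose desired image positions are shifted by 0.05 along the x axis.
points = [(0.1, 0.1), (-0.1, 0.1), (-0.1, -0.1), (0.1, -0.1)]
desired = [(x + 0.05, y) for x, y in points]
v = ibvs_velocity(points, desired, depths=[1.0] * 4)
```

For this configuration the computed velocity is a pure translation of the camera along its negative x axis, which moves the points towards positive x in the image, as expected. The desired configuration is specified only through the desired sensor reading `desired`, i.e., directly in sensor space.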

The use of sensors for controlling the robot motion will clearly also be a central topic of the Rainbow team, since the use of (especially onboard) sensing is a main characteristic of any future robotics application (which should typically operate in unstructured environments, and thus mainly rely on its own ability to sense the world). We then naturally aim at making the best out of the previous Lagadic experience in sensor-based control for proposing new advanced ways of exploiting sensed data for, roughly speaking, controlling the motion of a robot. In this respect, we plan to work on the following topics:

- “direct/dense methods”, which try to directly exploit the raw sensory data in computing the control law for positioning/navigation tasks. The advantage of these methods is the little need for data pre-processing, which can minimize feature extraction errors and, in general, improve the overall robustness/accuracy (since all the available data is used by the motion controller);

- sensor-based interaction with objects of unknown/deformable shapes, for gaining the ability to manipulate, e.g., flexible objects from the acquired sensed data (e.g., controlling online a needle being inserted in a flexible tissue);

- sensor-based model predictive control, by developing online/reactive trajectory optimization methods able to plan feasible trajectories for robots subject to sensing/actuation constraints, with the possibility of using (onboard) sensing for continuously replanning (over some future time horizon) the optimal trajectory. These methods will play an important role when dealing with complex robots affected by complex sensing/actuation constraints, for which purely reactive strategies (as in most of the previous Lagadic works) are not effective. Furthermore, the coupling with the aforementioned optimal sensing will also be considered;

- multi-robot decentralized estimation and control, with the aim of devising, again, sensor-based strategies for groups of multiple robots needing to maintain a formation or perform navigation/manipulation tasks. Here, the challenges come from the need to devise “simple” decentralized and scalable control strategies in the presence of complex sensing constraints (e.g., when using onboard cameras, limited field of view, occlusions). The need to locally estimate global quantities (e.g., a common frame of reference, or a global property of the formation such as connectivity or rigidity) will also be a line of active research.
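The problem of locally estimating a global quantity can be illustrated with the classical average consensus protocol, in which each robot repeatedly corrects its estimate using only the values of its neighbors. The sketch below is a generic textbook scheme given for illustration, not a method taken from this report; the communication graph, the step size and the initial values are hypothetical, and the graph is assumed undirected and connected.

```python
def consensus_step(values, neighbors, step=0.3):
    """One synchronous update: each robot moves its estimate towards the
    estimates of its neighbors, using local information only."""
    return [
        v + step * sum(values[j] - v for j in neighbors[i])
        for i, v in enumerate(values)
    ]

def run_consensus(values, neighbors, iterations=200, step=0.3):
    """Iterate the protocol; on a connected undirected graph, with a step
    smaller than 1 / (maximum number of neighbors), all estimates converge
    to the average of the initial values."""
    for _ in range(iterations):
        values = consensus_step(values, neighbors, step)
    return values

# Hypothetical line formation of four robots: robot i only talks to i-1 and i+1.
neighbors = {0: [1], 1: [0, 2], 2: [1, 3], 3: [2]}
x_positions = [0.0, 2.0, 4.0, 10.0]  # each robot initially knows only its own x
estimates = run_consensus(x_positions, neighbors)
```

After the iterations, every robot holds (approximately) the x coordinate of the formation centroid, here 4.0, although no robot ever had access to all positions. Sensing constraints such as a limited field of view or occlusions would make the neighbor sets time-varying, which this sketch does not model.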

3.2.3 Haptics for Robotics Applications

In the envisaged shared cooperation between human users and robots, the typical sensory channel (besides vision) exploited to inform the human users is most often the force/kinesthetic one (in general, the sense of touch and of applied forces to the human hand or limbs). Therefore, a part of our activities will be devoted to studying and advancing the use of haptic cueing algorithms and interfaces for providing feedback to the users during the execution of some shared task. We will consider:

- multi-modal haptic cueing for general teleoperation applications, by studying how to convey information through the kinesthetic and cutaneous channels. Indeed, most haptic-enabled applications typically only involve kinesthetic cues, e.g., the forces/torques that can be felt by grasping a force-feedback joystick/device. These cues are very informative about, e.g., preferred/forbidden motion directions, but are also inherently limited in their resolution since the kinesthetic channel can easily become overloaded (when too much information is compressed in a single cue). In recent years, the rise of novel cutaneous devices able to, e.g., provide vibro-tactile feedback on the fingertips or skin, has proven to be a viable solution to complement the classical kinesthetic channel. We will then study how to combine these two sensory modalities for different prototypical application scenarios, e.g., 6-dof teleoperation of manipulator arms, virtual fixtures approaches, and remote manipulation of (possibly deformable) objects;

- in the particular context of medical robotics, we plan to address the problem of providing haptic cues for typical medical robotics tasks, such as semi-autonomous needle insertion and robot surgery, by exploring the use of kinesthetic feedback for rendering the mechanical properties of the tissues, and vibrotactile feedback for providing guidance information about pre-planned paths (with the aim of increasing the usability/acceptability of this technology in the medical domain);

- finally, in the context of multi-robot control, we would like to explore how to use the haptic channel for providing information about the status of multiple robots executing a navigation or manipulation task. In this case, the problem is (even more) how to map (or compress) information about many robots into a few haptic cues. We plan to use specialized devices, such as actuated exoskeleton gloves able to provide cues to each fingertip of a human hand, or to resort to “compression” methods inspired by the hand postural synergies for providing coordinated cues representative of a few (but complex) motions of the multi-robot group, e.g., coordinated motions (translations/expansions/rotations) or collective grasping/transporting.

3.2.4 Shared Control of Complex Robotics Systems

This final and main research axis will exploit the methods, algorithms and technologies developed in the previous axes for realizing applications involving complex semi-autonomous robots operating in complex environments together with human users. The leitmotiv is to realize advanced shared control paradigms, which essentially aim at blending robot autonomy and user's intervention in an optimal way for exploiting the best of both worlds (robot accuracy/sensing/mobility/strength and human's cognitive capabilities). A common theme will be the issue of where to “draw the line” between robot autonomy and human intervention: obviously, there is no general answer, and any design choice will depend on the particular task at hand and/or on the technological/algorithmic possibilities of the robotic system under consideration.

A prototypical envisaged application, exploiting and combining the previous three research axes, is as follows: a complex robot (e.g., a two-arm system, a humanoid robot, a multi-UAV group) needs to operate in an environment exploiting its onboard sensors (in general, vision as the main exteroceptive one) and deal with many constraints (limited actuation, limited sensing, complex kinematics/dynamics, obstacle avoidance, interaction with difficult-to-model entities such as surrounding people, and so on). The robot must then possess a rather large degree of autonomy for interpreting and exploiting the sensed data in order to estimate its own state and that of the environment (“Optimal and Uncertainty-Aware Sensing” axis), and for planning its motion in order to fulfil the task (e.g., navigation, manipulation) by coping with all the robot/environment constraints. Therefore, advanced control methods able to exploit the sensory data to the fullest, and able to cope online with constraints in an optimal way (by, e.g., continuously replanning and predicting over a future time horizon) will be needed (“Advanced Sensor-based Control” axis), with a possible (and interesting) coupling with the sensing part for optimizing, at the same time, the state estimation process. Finally, a human operator will typically be in charge of providing high-level commands (e.g., where to go, what to look at, what to grasp and where) that will then be autonomously executed by the robot, with possible local modifications because of the various (local) constraints. At the same time, the operator will also receive online visual-force cues informative of, in general, how well her/his commands are executed and whether the robot would prefer or suggest other plans (because of the local constraints that are not of the operator's concern). This information will have to be visually and haptically rendered with an optimal combination of cues that will depend on the particular application (“Haptics for Robotics Applications” axis).
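The principle of executing the operator's command "with possible local modifications" can be sketched, under strong simplifying assumptions, as the smallest correction of a planar velocity command that keeps the approach speed towards a single obstacle below a distance-dependent limit. The function name, the linear speed limit, the safety distance `d_safe` and the use of the correction magnitude as a basis for a feedback cue are all hypothetical choices made for this illustration, not the team's actual shared control law.

```python
def blend_command(v_user, to_obstacle, distance, d_safe=0.5, gain=1.0):
    """Return (v_cmd, excess), where v_cmd is the velocity closest to v_user
    whose component towards the obstacle does not exceed
    gain * (distance - d_safe), and excess is the removed approach speed.

    v_user      -- (vx, vy) velocity requested by the operator
    to_obstacle -- unit vector pointing from the robot to the obstacle
    distance    -- current distance to the obstacle
    """
    nx, ny = to_obstacle
    limit = gain * max(0.0, distance - d_safe)  # allowed approach speed
    approach = v_user[0] * nx + v_user[1] * ny  # requested approach speed
    excess = max(0.0, approach - limit)         # part that must be removed
    # Only the component along the obstacle direction is modified, so the
    # lateral part of the operator's intention is preserved.
    v_cmd = (v_user[0] - excess * nx, v_user[1] - excess * ny)
    return v_cmd, excess

# Hypothetical usage: the operator pushes forward and slightly to the left,
# with an obstacle straight ahead at decreasing distances.
far_cmd, far_excess = blend_command((1.0, 0.5), (1.0, 0.0), distance=5.0)
mid_cmd, mid_excess = blend_command((1.0, 0.5), (1.0, 0.0), distance=1.0)
near_cmd, near_excess = blend_command((1.0, 0.5), (1.0, 0.0), distance=0.5)
```

Far from the obstacle the command is left untouched; as the obstacle gets closer the forward component is progressively reduced while the lateral one is kept. The value `excess` measures how much the autonomy had to deviate from the operator's request and could, for instance, be rendered as a visual or force cue; handling several simultaneous constraints would generally require solving a small constrained optimization problem instead of this closed-form projection.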

4 Application domains

The activities of Rainbow obviously fall within the scope of Robotics. Broadly speaking, our main interest is in devising novel/efficient algorithms (for estimation, planning, control, haptic cueing, human interfacing, etc.) that can be general and applicable to many different robotic systems of interest, depending on the particular application/case study. For instance, we plan to consider:

- applications involving remote telemanipulation with one or two robot arms, where the arm(s) will need to coordinate their motion for approaching/grasping objects of interest under the guidance of a human operator;

- applications involving single and multiple mobile robots for spatial navigation tasks (e.g., exploration, surveillance, mapping). In the multi-robot case, the high redundancy of the multi-robot group will motivate research in autonomously exploiting this redundancy for facilitating the task (e.g., optimizing the self-localization or the environment mapping) while following the human commands, and vice-versa for informing the operator about the status of a multi-robot group. In the single-robot case, the possible combination with some manipulation devices (e.g., arms on a wheeled robot) will motivate research into remote tele-navigation and tele-manipulation;

- applications involving medical robotics, in which the “manipulators” are replaced by the typical tools used in medical applications (ultrasound probes, needles, cutting scalpels, and so on) for semi-autonomous probing and intervention;

- applications involving a direct physical “coupling” between human users and robots (rather than a “remote” interfacing), such as the case of assistive devices used for easing the life of impaired people. Here, we will be primarily interested in, e.g., safety and usability issues, and also touch some aspects of user acceptability.

These directions are, in our opinion, very promising since current and future robotics applications are expected to address more and more complex tasks: for instance, it is becoming mandatory to empower robots with the ability to predict the future (to some extent) by also explicitly dealing with uncertainties from sensing or actuation; to safely and effectively interact with human supervisors (or collaborators) for accomplishing shared tasks; to learn or adapt to dynamic environments from little prior knowledge; to exploit the environment (e.g., obstacles) rather than avoiding it (a typical example is a humanoid robot in a multi-contact scenario for facilitating walking on rough terrains); to optimize the onboard resources for large-scale monitoring tasks; to cooperate with other robots either by direct sensing/communication, or via some shared database (the “cloud”).

While no single lab can reasonably address all these theoretical/algorithmic/technological challenges, we believe that our research agenda can give some concrete contributions to the next generation of robotics applications.

5 Highlights of the year

5.1 Awards

- C. Pacchierotti has received the CNRS Bronze Medal (institute INS2I)

- M. Marchal, M. Babel, and C. Pacchierotti have received the “Best Paper Award”, Eurohaptics 2022, Hamburg, Germany

- C. Pacchierotti has received the “RAS Most Active Technical Committee Award” for the IEEE RAS Technical Committee on Haptics, during his tenure as Chair

- Maxime Robic won the 3rd jury prize of “Ma thèse en 180 secondes” during the national final in Lyon in May 2022

5.2 Highlights

- P. Robuffo Giordano is one of the two IEEE RAS Distinguished Lecturers for Multi-Robot Systems

- Horizon Europe project RĔGO started in October 2022

6 New software and platforms

6.1 New software

6.1.1 HandiViz

- Name: Driving assistance of a wheelchair

- Keywords: Health, Persons attendant, Handicap

- Functional Description: The HandiViz software proposes a semi-autonomous navigation framework for a wheelchair relying on visual servoing.

  It has been registered with the APP (“Agence de Protection des Programmes”) as an INSA software (IDDN.FR.001.440021.000.S.P.2013.000.10000) and is released under the GPL license.

- Contact: Marie Babel

- Participants: François Pasteau, Marie Babel

- Partner: INSA Rennes

6.1.2 UsTk

- Name: Ultrasound toolkit for medical robotics applications guided from ultrasound images

- Keywords: Echographic imagery, Image reconstruction, Medical robotics, Visual tracking, Visual servoing (VS), Needle insertion

- Functional Description: UsTK, standing for Ultrasound Toolkit, is a cross-platform extension of the ViSP software dedicated to 2D and 3D ultrasound image processing and to visual servoing based on ultrasound images. Written in C++, the UsTK architecture provides a core module that implements all the data structures at the heart of UsTK, a grabber module that allows acquiring ultrasound images from an Ultrasonix or a Sonosite device, a GUI module to display data, an IO module providing functionalities to read/write data from a storage device, and a set of image processing modules to compute the confidence map of ultrasound images, generate elastography images, track a flexible needle in sequences of 2D and 3D ultrasound images, and track a target image template in sequences of 2D ultrasound images. All these modules were implemented on several robotic demonstrators to control the motion of an ultrasound probe or a flexible needle by ultrasound visual servoing.

- URL:

- Contact: Alexandre Krupa

- Participants: Alexandre Krupa, Fabien Spindler

- Partners: Inria, Université de Rennes 1

6.1.3 ViSP

-

Name:

Visual servoing platform

-

Keywords:

Augmented reality, Computer vision, Robotics, Visual servoing (VS), Visual tracking

-

Scientific Description:

Since 2005, we develop and release ViSP [1], an open source library available from https://visp.inria.fr. ViSP standing for Visual Servoing Platform allows prototyping and developing applications using visual tracking and visual servoing techniques at the heart of the Rainbow research. ViSP was designed to be independent from the hardware, to be simple to use, expandable and cross-platform. ViSP allows designing vision-based tasks for eye-in-hand and eye-to-hand systems from the most classical visual features that are used in practice. It involves a large set of elementary positioning tasks with respect to various visual features (points, segments, straight lines, circles, spheres, cylinders, image moments, pose...) that can be combined together, and image processing algorithms that allow tracking of visual cues (dots, segments, ellipses...), or 3D model-based tracking of known objects or template tracking. Simulation capabilities are also available.

We have extended ViSP with a new open-source dynamical simulator named FrankaSim based on CoppeliaSim and ROS for the popular Franka Emika Robot [2]. The simulator fully integrated in the ViSP ecosystem features a dynamic model that has been accurately identified from a real robot, leading to more realistic simulations. Conceived as a multipurpose research simulation platform, it is well suited for visual servoing applications as well as, in general, for any pedagogical purpose in robotics. All the software, models and CoppeliaSim scenes presented in this work are publicly available under free GPL-2.0 license.

[1] E. Marchand, F. Spindler, F. Chaumette. ViSP for visual servoing: a generic software platform with a wide class of robot control skills. IEEE Robotics and Automation Magazine, Special Issue on "Software Packages for Vision-Based Control of Motion", P. Oh, D. Burschka (Eds.), 12(4):40-52, December 2005. URL: https://hal.inria.fr/inria-00351899v1

[2] A. A. Oliva, F. Spindler, P. Robuffo Giordano and F. Chaumette. ‘FrankaSim: A Dynamic Simulator for the Franka Emika Robot with Visual-Servoing Enabled Capabilities’. In: ICARCV 2022 - 17th International Conference on Control, Automation, Robotics and Vision. Singapore, Singapore, 11th Dec. 2022, pp. 1–7. URL: https://hal.inria.fr/hal-03794415.

-

Functional Description:

ViSP provides simple ways to integrate and validate new algorithms with already existing tools. It follows a module-based software engineering design where data types, algorithms, sensors, viewers and user interaction are made available. Written in C++, ViSP is based on open-source cross-platform libraries (such as OpenCV) and builds with CMake. Several platforms are supported, including OSX, iOS, Windows and Linux. The ViSP online documentation eases learning: more than 300 fully documented classes organized in 17 different modules, with more than 408 examples and 88 tutorials, are offered to the user. ViSP is released under a dual licensing model. It is open-source with a GNU GPLv2 or GPLv3 license. A professional edition license that replaces GNU GPL is also available.

- URL:

-

Contact:

Fabien Spindler

-

Participants:

Éric Marchand, Fabien Spindler, François Chaumette

-

Partners:

Inria, Université de Rennes 1

6.1.4 DIARBENN

-

Name:

Obstacle avoidance through sensor-based servoing

-

Keywords:

Servoing, Shared control, Navigation

-

Functional Description:

DIARBENN's objective is to define an obstacle avoidance solution adapted to a mobile robot such as a powered wheelchair. Through shared control, the system progressively corrects the trajectory, only when necessary, as the wheelchair approaches an obstacle, while respecting the user's intention.

-

Contact:

Marie Babel

-

Participants:

Marie Babel, François Pasteau, Sylvain Guegan

-

Partner:

INSA Rennes

6.2 New platforms

The platforms described in the next sections are labeled by the University of Rennes 1 and by ROBOTEX 2.0.

6.2.1 Robot Vision Platform

Participants: François Chaumette, Eric Marchand, Fabien Spindler [contact].

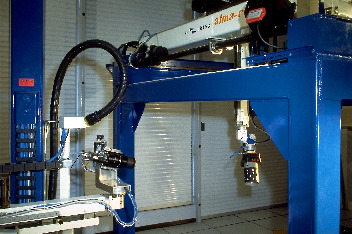

We exploit two industrial robotic systems built by Afma Robots in the nineties to validate our research in visual servoing and active vision. The first one is a 6 DoF Gantry robot, the other one is a 4 DoF cylindrical robot (see Fig. 2). The Gantry robot allows mounting grippers on its end-effector. Attached to these robots, we can also find a collection of various RGB and RGB-D cameras used to validate vision-based real-time tracking algorithms.

In 2022, this platform was used to validate experimental results in 3 accepted publications 15, 42, 41.

In this image we can see our Gantry robot.

6.2.2 Mobile Robots

Participants: Marie Babel, François Pasteau, Fabien Spindler [contact].

To validate our research on personal assisted living (see Sect. 7.4.3), we have three electric wheelchairs, one from Permobil, one from Sunrise and one from YouQ (see Fig. 3.a). The wheelchair is controlled through a plug-and-play system inserted between the joystick and the low-level control of the wheelchair. Such a system lets us acquire the user intention through the joystick position and control the wheelchair by applying corrections to its motion. The wheelchairs have been fitted with cameras, ultrasound and time-of-flight sensors to perform the servoing required for assisting handicapped people. A wheelchair haptic simulator completes this platform to develop new human interaction strategies in a virtual reality environment (see Fig. 3.b).

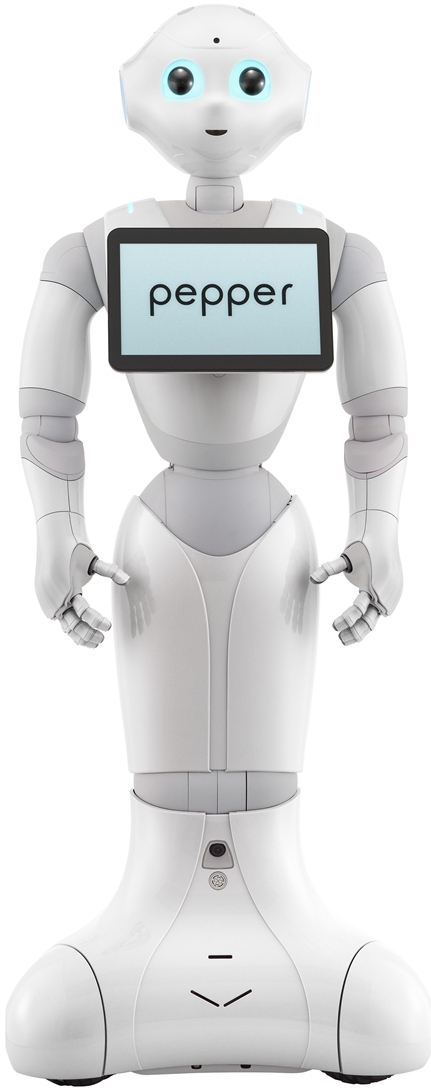

Pepper, a human-shaped robot designed by SoftBank Robotics to be a genuine day-to-day companion (see Fig. 3.c), is also part of this platform. It has 17 DoF mounted on a wheeled holonomic base and a set of sensors (cameras, laser, ultrasound, inertial, microphone) that make this platform interesting for robot-human interactions during locomotion and for visual exploration strategies (Sect. 7.2.6).

Moreover, for fast prototyping of algorithms in perception, control and autonomous navigation, the team uses a Pioneer 3DX from Adept (see Fig. 3.d). This platform is equipped with various sensors needed for autonomous navigation and sensor-based control.

In 2022, these platforms were used to obtain experimental results presented in 3 papers 21, 36, 28.

| (a) | (b) | (c) | (d) |

6.2.3 Advanced Manipulation Platform

Participants: Alexandre Krupa, Claudio Pacchierotti, Paolo Robuffo Giordano, François Chaumette, Fabien Spindler [contact].

This platform is composed of 2 Panda lightweight arms from Franka Emika equipped with torque sensors in all seven axes. An electric gripper, a camera, a soft hand from qbrobotics or a Reflex TakkTile 2 gripper from RightHand Labs (see Fig. 4.b) can be mounted on the robot end-effector (see Fig. 4.a). A force/torque sensor from Alberobotics is also attached to the end-effector of one of the robots to get more precision during torque control. This setup is used to validate our activities in coupling force and vision for controlling robot manipulators (see Section 7.2.11) and in controlling the deformation of soft objects (Sect. 7.2.7). Other haptic devices (see Section 6.2.5) can also be coupled to this platform.

This year we discontinued the medical robotics platform and integrated the two 6 DoF Adept Viper arms that were part of it into this advanced manipulation platform. The two Vipers are now used to manipulate deformable objects. We also integrated a UR5 robot that was primarily used in the Hybrid team.

5 new papers 35, 39, 43, 38, 26 and 1 PhD thesis 51 published this year include experimental results obtained with this platform.

| (a) | (b) | (c) |

6.2.4 Unmanned Aerial Vehicles (UAVs)

Participants: Joudy Nader, Paolo Robuffo Giordano, Claudio Pacchierotti, Pierre Perraud, Fabien Spindler [contact].

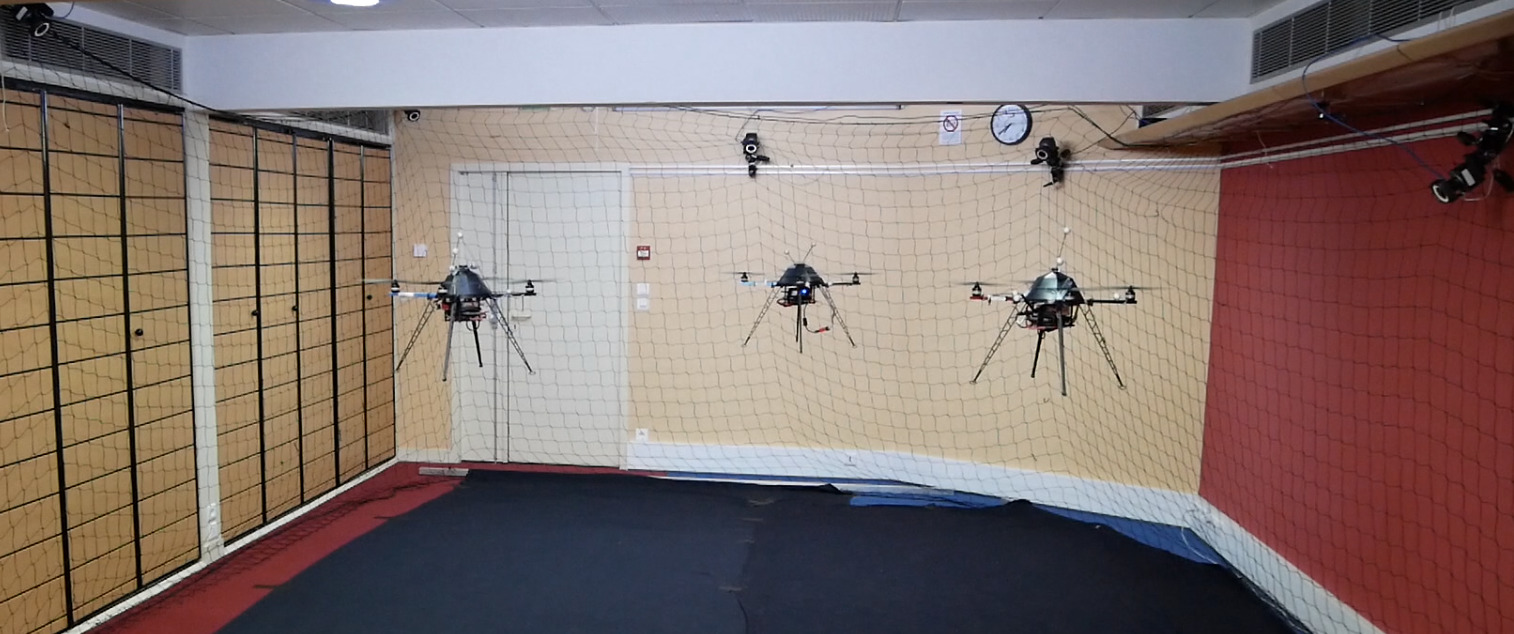

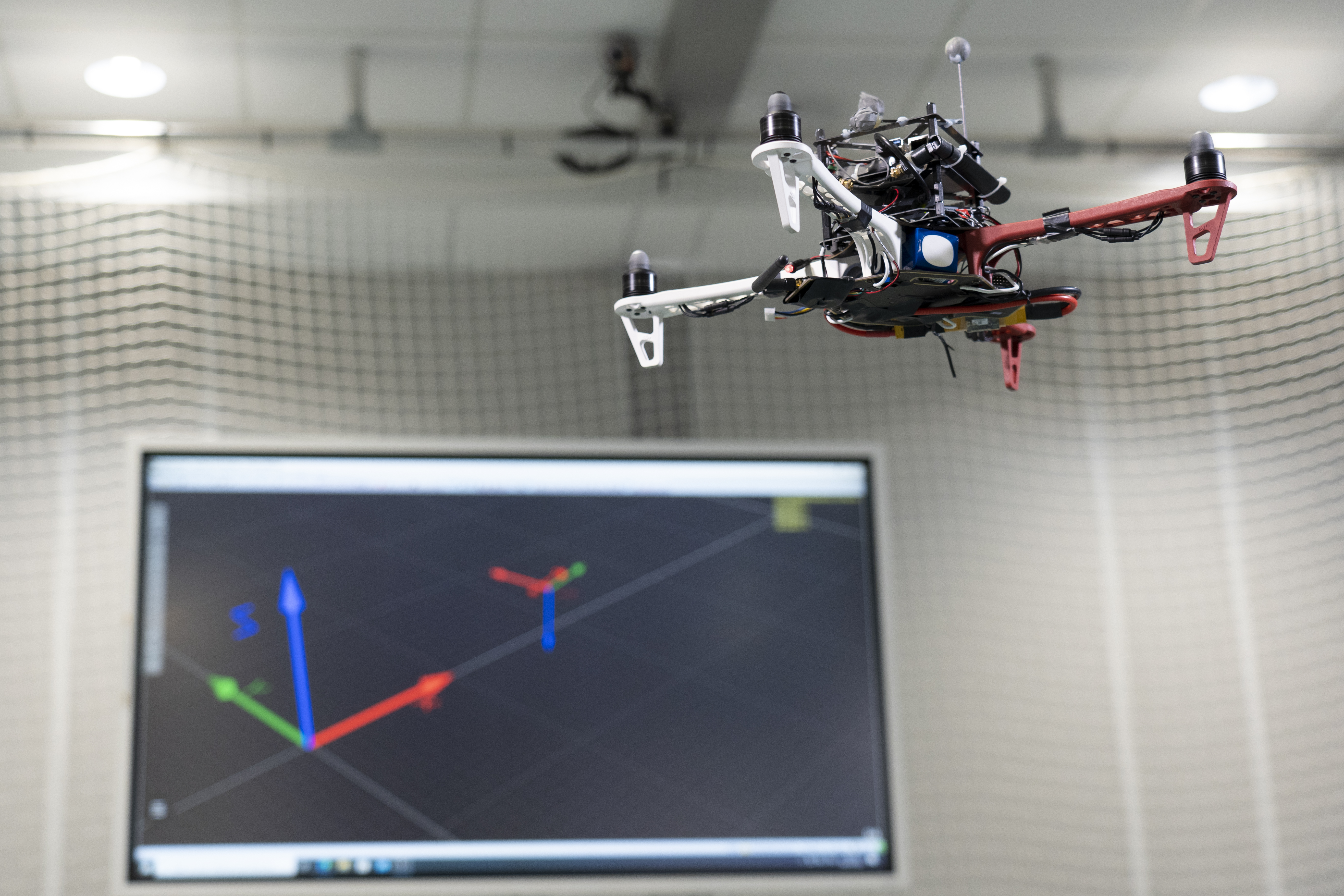

Rainbow carries out several activities involving perception and control for single and multiple quadrotor UAVs. To this end, we exploit four quadrotors from Mikrokopter Gmbh, Germany (see Fig. 5.a), and one quadrotor from 3DRobotics, USA (see Fig. 5.b). The Mikrokopter quadrotors have been heavily customized by: reprogramming from scratch the low-level attitude controller onboard the microcontroller of the quadrotors, equipping each quadrotor with an NVIDIA Jetson TX2 board running Linux Ubuntu and the TeleKyb-3 software based on the genom3 framework developed at LAAS in Toulouse (the middleware used for managing the experiment flows and the communication among the UAVs and the base station), and purchasing new Realsense RGB-D cameras for visual odometry and visual servoing. The quadrotor group is used as a robotic platform for testing a number of single and multiple flight control schemes, with special attention to the use of onboard vision as the main sensory modality.

This year we equipped a meeting room with a Qualisys motion capture system. This room was used as a second flying arena from January to August and allowed us to fly our drones in a much larger volume than the first arena.

To upgrade the platform's hardware, we began testing a new drone architecture based on a DJI F450 equipped with a Pixhawk, a Jetson TX2 and an Intel Realsense D405 RGB-D camera. In the first experiments, we used ViSP to carry out visual servoing that positions the drone w.r.t. a target.

This year, 1 paper 10 contains experimental results obtained with this platform.

| (a) | (b) | (c) |

6.2.5 Haptics and Shared Control Platform

Participants: Claudio Pacchierotti, Paolo Robuffo Giordano, Maud Marchal, Marie Babel, Fabien Spindler [contact].

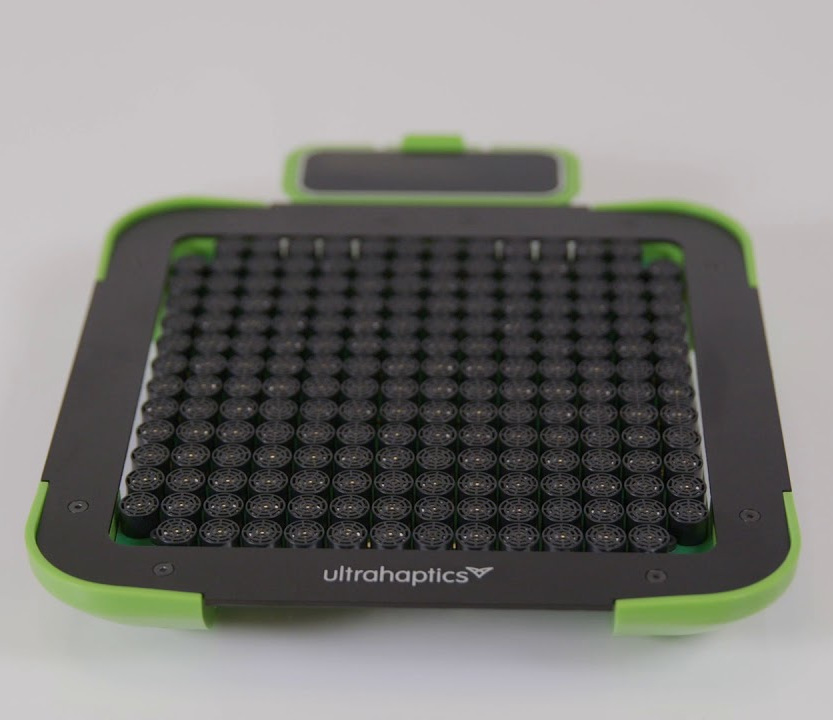

Various haptic devices are used to validate our research in shared control. We design wearable haptic devices to provide feedback to the user, and we also use some off-the-shelf devices. We have a Virtuose 6D device from Haption (see Fig. 6.a). This device is used as the master device in many of our shared control activities. It can also be coupled to the Haption haptic glove on loan from the University of Birmingham. An Omega 6 (see Fig. 6.b) from Force Dimension and devices on loan from Ultrahaptics complete this platform, which can be coupled to the other robotic platforms.

This platform was used to obtain experimental results presented in 7 papers 10, 13, 30, 31, 32, 26, 17 and 1 HdR 52 published this year.

| (a) | (b) | (c) |

6.2.6 Portable immersive room

Participants: François Pasteau, Fabien Grzeskowiak, Marie Babel [contact].

To validate our research on assistive robotics and its applications in virtual conditions, we very recently acquired a portable immersive room that is intended to be easily deployed in different rehabilitation structures in order to conduct clinical trials. The system was designed by the company Trinoma and funded by the Interreg ADAPT project.

As the platform is very new, there has been no related publication this year.

| (a) | (b) |

7 New results

7.1 Optimal and Uncertainty-Aware Sensing

7.1.1 Trajectory Generation for Optimal State Estimation

Participants: Nicola De Carli, Claudio Pacchierotti, Paolo Robuffo Giordano.

This activity addresses the general problem of active sensing, where the goal is to analyze and synthesize optimal trajectories for a robotic system that maximize the amount of information gathered by (few) noisy outputs (i.e., sensor readings) while at the same time reducing the negative effects of the process/actuation noise. We have previously developed a general framework for solving the active sensing problem online by continuously replanning an optimal trajectory that maximizes a suitable norm of the Constructibility Gramian (CG) 55.

In 37 we have considered an active sensing problem that involves multiple robots in an environmental monitoring task over long time horizons. The approach exploits the norm of the Constructibility Gramian for learning a distributed multi-robot control policy using the reinforcement learning paradigm. The learned policy is then combined with energy constraints using the constraint-driven control framework in order to achieve persistent environmental monitoring. The proposed approach is tested in a simulated multi-robot persistent environmental monitoring scenario where a team of robots with limited energy must be controlled in a coordinated fashion in order to estimate the concentration of a gas diffusing in the environment.
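To make the notion of "a suitable norm of the Constructibility Gramian" more concrete, the following minimal sketch computes the Gramian for a linear time-invariant toy system and uses its smallest eigenvalue as the information measure. This is only an illustration under simplifying assumptions: the cited framework targets nonlinear robot models and may use a different norm and a noise-weighted output, and the function name `constructibility_gramian` is hypothetical.

```python
import numpy as np

def constructibility_gramian(A, C, horizon, steps=2000):
    """Approximate the constructibility Gramian of dx/dt = A x, y = C x on [0, horizon].

    G = integral_0^T Phi(t, T)^T C^T C Phi(t, T) dt, with Phi(t, T) = exp(A (t - T)).
    Assumption: unit output weighting (no measurement-noise covariance).
    """
    n = A.shape[0]
    dt = horizon / steps
    # One-step backward transition exp(-A dt) from a truncated Taylor series,
    # adequate here because ||A dt|| is small.
    step_back = np.eye(n)
    term = np.eye(n)
    for k in range(1, 12):
        term = term @ (-A * dt) / k
        step_back = step_back + term
    phi = np.eye(n)  # Phi(T, T) = I
    gram = np.zeros((n, n))
    for i in range(steps + 1):
        weight = 0.5 if i in (0, steps) else 1.0  # trapezoidal rule
        gram += weight * dt * (phi.T @ C.T @ C @ phi)
        phi = step_back @ phi  # from Phi(t, T) to Phi(t - dt, T)
    return gram

# Double integrator observed through its position: both states can be reconstructed.
A = np.array([[0.0, 1.0], [0.0, 0.0]])
G_pos = constructibility_gramian(A, np.array([[1.0, 0.0]]), horizon=2.0)
# Observed through its velocity only: the position cannot be reconstructed.
G_vel = constructibility_gramian(A, np.array([[0.0, 1.0]]), horizon=2.0)
print("smallest eigenvalue (position output):", np.linalg.eigvalsh(G_pos)[0])
print("smallest eigenvalue (velocity output):", np.linalg.eigvalsh(G_vel)[0])
```

A larger smallest eigenvalue indicates that the least observable direction of the state is better excited by the measurements; in the nonlinear case this quantity depends on the trajectory, which is what makes trajectory optimization meaningful.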

7.1.2 Leveraging Multiple Environments for Learning and Decision Making

Participants: Maud Marchal, Thierry Gaugry, Antonin Bernardin.

Learning is usually performed by observing real robot executions. Physics-based simulators are a good alternative for providing highly valuable information while avoiding costly and potentially destructive robot executions. Within the Imagine project, we presented a novel approach for learning the probabilities of symbolic robot action outcomes. This is done by leveraging different environments, such as physics-based simulators, at execution time. To this end, we proposed MENID (Multiple Environment Noise Indeterministic Deictic) rules, a novel representation able to cope with the inherent uncertainties present in robotic tasks. MENID rules explicitly represent each possible outcome of an action, keep memory of the source of the experience, and maintain the probability of success of each outcome. We also introduced an algorithm to distribute actions among environments, based on previous experiences and expected gain. Before using physics-based simulations, we proposed a methodology for evaluating different simulation settings and determining the least time-consuming model that could be used while still producing coherent results. We demonstrated the validity of the approach in a dismantling use case, using a simulation with reduced quality as the simulated system, and a full-resolution simulation, in which we add noise to the trajectories and to some physical parameters, as a representation of the real system.
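The bookkeeping described above (one entry per possible outcome, a record of which environment produced each experience, and a probability per outcome) can be pictured with the small sketch below. It is a hypothetical stand-in, not the MENID rule format or its learning algorithm: the class `OutcomeRule`, its fields, and the use of plain relative frequencies are all assumptions made for illustration.

```python
from collections import defaultdict
from dataclasses import dataclass, field

@dataclass
class OutcomeRule:
    """Hypothetical record for one symbolic action (not the actual MENID format)."""
    action: str
    # counts[outcome][environment] = number of times the outcome was observed there
    counts: dict = field(
        default_factory=lambda: defaultdict(lambda: defaultdict(int))
    )

    def record(self, outcome, environment):
        """Store one experience together with the environment that produced it."""
        self.counts[outcome][environment] += 1

    def probability(self, outcome, environment=None):
        """Relative frequency of `outcome`, in one environment or across all."""
        def total(name):
            per_env = self.counts[name]
            if environment is not None:
                return per_env[environment]
            return sum(per_env.values())

        n_all = sum(total(name) for name in list(self.counts))
        return total(outcome) / n_all if n_all else 0.0

rule = OutcomeRule("unscrew_lid")
for outcome, env in [("success", "simulation"), ("success", "simulation"),
                     ("success", "simulation"), ("failure", "simulation"),
                     ("success", "real"), ("failure", "real")]:
    rule.record(outcome, env)
print(rule.probability("success"), rule.probability("success", "real"))
```

Keeping the counts separated by environment is what would let a scheduler weigh cheap simulated experience against scarce real executions when deciding where to try the next action.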

7.1.3 Visual SLAM

Participants: Mathieu Gonzalez, Eric Marchand.

We propose a new SLAM system that uses the semantic segmentation of objects and structures in the scene. Semantic information is relevant as it contains high-level information which may make SLAM more accurate and robust. Our contribution is twofold: i) a new SLAM system based on ORB-SLAM2 that creates a semantic map made of clusters of points corresponding to object instances and structures in the scene; ii) a modification of the classical Bundle Adjustment formulation to constrain each cluster using geometrical priors, which improves both camera localization and reconstruction and enables a better understanding of the scene. This work 27, 46 was done in cooperation with the IRT B-Com.

We extend this approach to dynamic environments. Indeed, classical visual simultaneous localization and mapping algorithms usually assume the environment to be rigid. This assumption limits the applicability of those algorithms as they are unable to accurately estimate the camera poses and world structure in real-life scenes containing moving objects (e.g. cars, bikes, pedestrians, etc.). To tackle this issue, we propose TwistSLAM: a semantic, dynamic and stereo SLAM system that can track dynamic objects in the environment. Our algorithm creates clusters of points according to their semantic class. Thanks to the definition of inter-cluster constraints modeled by mechanical joints (function of the semantic class), a novel constrained bundle adjustment is then able to jointly estimate both poses and velocities of moving objects along with the classical world structure and camera trajectory. This work 16 was done in cooperation with the IRT B-Com.

We also proposed a novel binary graph descriptor to improve loop detection for visual SLAM systems. Our contribution is twofold: i) a graph embedding technique for generating binary descriptors which conserve both spatial and histogram information extracted from images; ii) a generic means of combining multiple layers of heterogeneous data into the proposed binary graph descriptor, coupled with a matching and geometric checking method. We also introduce an implementation of our descriptor into an incremental Bag-of-Words (iBoW) structure that improves efficiency and scalability, and propose a method to interpret Deep Neural Network (DNN) results. This work 22 was done in cooperation with the Mimetic team.

7.2 Advanced Sensor-Based Control

7.2.1 Trajectory Generation for Minimum Closed-Loop State Sensitivity

Participants: Pascal Brault, Ali Srour, Simon Wasiela, Paolo Robuffo Giordano.

The goal of this research activity is to propose a new point of view in addressing the control of robots under parametric uncertainties: rather than striving to design a sophisticated controller with some robustness guarantees for a specific system, we propose to attain robustness (for any choice of the control action) by suitably shaping the reference motion trajectory so as to minimize the state sensitivity to parameter uncertainty of the resulting closed-loop system. This activity is carried out in the scope of the ANR project CAMP (Sect. 9.3).

Over the past years, we have developed the notion of closed-loop “state sensitivity” and “input sensitivity” metrics. These allow formulating a trajectory optimization problem whose solution is a reference trajectory that, when perturbed, requires minimal change of the control inputs and has minimal deviations in the final tracking error. In 23 we have shown how one can formulate an optimization problem for generating a trajectory that minimizes the sensitivity to uncertain parameters and, at the same time, maximizes the observability of states to be estimated. These two objectives are shown to compete against each other in the general case, and a suitable Pareto-based strategy is introduced for optimizing the two objectives at once. During the year we have also worked on several additional extensions that resulted in three papers submitted to the ICRA 2023 conference:

- together with Ali Srour, we have extended the trajectory optimization problem to also consider the control gains as possible optimization variables. We could then generate minimally-sensitive trajectories that further reduce the state sensitivity by a proper choice of the control gains. The idea has been tested on three case studies involving a 3D quadrotor with a Lee (geometric) controller, a near-hovering (NH) controller and a sliding mode controller. The results of a large-scale simulation campaign showed an interesting pattern in the approach to the final hovering state, as well as unexpected trends in the optimized gains (e.g., from a statistical point of view, the “derivative” gains of the position loop were often reduced w.r.t. their initial values whereas the proportional gains were increased on average). These findings motivate us to keep working on the idea of jointly optimizing the motion and control gains for attaining robustness.

- together with Pascal Brault, we have developed a novel algorithm for computing the tubes of perturbed state trajectories given a known range for the parametric uncertainty. The tubes can be easily computed from the knowledge of the state sensitivity matrix, and the tube computation can be extended to any function of the states (including the control action). The availability of the tubes then allows formulating an improved trajectory optimization problem in which the size of the tube is minimized (rather than a generic norm of the state sensitivity matrix), and the tubes on the inputs (or any other function of the states) can be used in the constraints for enforcing a “robust” constraint satisfaction.

- together with Simon Wasiela (LAAS-CNRS), we have proposed a global control-aware motion planner for optimizing the state sensitivity metric and producing collision-free reference motions that are robust against parametric uncertainties for a large class of complex dynamical systems. In particular, we have proposed an RRT*-based planner called SAMP that uses an appropriate steering method to first compute a (near) time-optimal and kinodynamically feasible trajectory that is then locally deformed to improve robustness and decrease its sensitivity to uncertainties. The evaluation performed on planar/full-3D quadrotor UAV models shows that the SAMP method produces low-sensitivity robust solutions with a much higher performance than a planner directly optimizing the sensitivity.
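The link between the state sensitivity and the tubes of perturbed trajectories can be illustrated on a scalar toy system. The sketch below integrates the closed-loop state together with its sensitivity to one uncertain parameter, then uses the first-order bound |δx| ≈ |Π| δp as the tube radius. The plant, the controller, the gain values and the function name `simulate` are assumptions chosen for illustration; this is not the algorithm of the submitted papers, which handle multi-dimensional states and parameters.

```python
def simulate(p_true, p_nominal=1.0, gain=2.0, reference=1.0, horizon=5.0, dt=1e-3):
    """Euler-integrate a scalar closed loop and its state sensitivity.

    Plant:       dx/dt = -p_true * x + u
    Controller:  u = gain * (reference - x) + p_nominal * reference
    Sensitivity: dPi/dt = -(p_true + gain) * Pi - x, with Pi(0) = 0,
                 where Pi = dx/dp along the simulated trajectory.
    """
    x, sensitivity = 0.0, 0.0
    for _ in range(int(round(horizon / dt))):
        u = gain * (reference - x) + p_nominal * reference
        x_dot = -p_true * x + u
        pi_dot = -(p_true + gain) * sensitivity - x
        x, sensitivity = x + dt * x_dot, sensitivity + dt * pi_dot
    return x, sensitivity

# Nominal run (plant parameter equal to the value assumed by the controller).
x_nominal, pi_nominal = simulate(p_true=1.0)
# First-order tube radius on the final state for a parameter range of +/- 0.1.
delta_p = 0.1
tube_radius = abs(pi_nominal) * delta_p
# Perturbed run, to compare the actual deviation with the predicted radius.
x_perturbed, _ = simulate(p_true=1.0 + delta_p)
print("predicted radius:", tube_radius)
print("actual deviation:", abs(x_perturbed - x_nominal))
```

Because the bound is first order, it is only expected to match the true deviation for small parameter ranges; shaping the reference so that the sensitivity stays small is what shrinks the tube.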

7.2.2 UWB beacon navigation of assisted power wheelchair

Participants: Vincent Drevelle, Marie Babel, Eric Marchand, François Pasteau.

New sensors, based on ultra-wideband (UWB) radio technology, are emerging for indoor localization and object tracking applications. Unlike vision-based solutions, these sensors are low-cost, non-intrusive and easy to fit on the wheelchair. They measure distances between fixed beacons and mobile sensors. We seek to exploit these UWB sensors for the navigation of a wheelchair, despite the low accuracy of the measurements they provide. The problem here lies in defining an autonomous or shared sensor-based control solution that takes into account the measurement uncertainty related to UWB beacons.

Because of multipath or non-line-of-sight propagation, erroneous measurements can perturb radio beacon ranging in cluttered indoor environments. This happens when people, objects or even the wheelchair and its driver block the direct line of sight between two beacons.

We designed a robust wheelchair positioning method, based on an extended Kalman filter with outlier identification and rejection. The method fuses UWB range measurements with low-cost gyro measurements and motor commands to estimate the orientation and position of the wheelchair. A dataset with various densities of people around the wheelchair driver has been recorded. Preliminary results show decimeter-level planar accuracy in crowded environments.
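A common way to combine an extended Kalman filter with outlier rejection is to gate each range measurement on its normalized innovation before applying the update. The sketch below shows this pattern for a planar pose estimated from a speed command, a gyro yaw rate and beacon ranges. It is a generic illustration, not the team's implementation: the unicycle motion model, the gate value and the function names `predict` and `update_range` are assumptions.

```python
import numpy as np

def predict(state, cov, speed, yaw_rate, dt, process_noise):
    """Unicycle prediction of [x, y, theta] from a speed command and a gyro yaw rate."""
    x, y, theta = state
    new_state = np.array([x + speed * dt * np.cos(theta),
                          y + speed * dt * np.sin(theta),
                          theta + yaw_rate * dt])
    # Jacobian of the motion model with respect to the state.
    F = np.array([[1.0, 0.0, -speed * dt * np.sin(theta)],
                  [0.0, 1.0, speed * dt * np.cos(theta)],
                  [0.0, 0.0, 1.0]])
    return new_state, F @ cov @ F.T + process_noise

def update_range(state, cov, beacon, measured_range, range_var, gate=9.0):
    """Range update with innovation gating; returns (state, cov, accepted).

    Assumes the estimated position does not coincide with the beacon.
    """
    delta = state[:2] - beacon
    predicted_range = np.linalg.norm(delta)
    H = np.array([[delta[0] / predicted_range, delta[1] / predicted_range, 0.0]])
    innovation = measured_range - predicted_range
    S = (H @ cov @ H.T).item() + range_var
    # Reject measurements whose normalized squared innovation exceeds the gate
    # (e.g. non-line-of-sight ranges that are much longer than expected).
    if innovation ** 2 / S > gate:
        return state, cov, False
    K = (cov @ H.T) / S  # Kalman gain, shape (3, 1)
    new_state = state + K[:, 0] * innovation
    new_cov = (np.eye(3) - K @ H) @ cov
    return new_state, new_cov, True

state = np.zeros(3)
cov = np.diag([0.04, 0.04, 0.01])
beacon = np.array([3.0, 4.0])
_, _, accepted_good = update_range(state, cov, beacon, 5.1, range_var=0.01)
_, _, accepted_bad = update_range(state, cov, beacon, 8.0, range_var=0.01)
print(accepted_good, accepted_bad)
```

The gate trades robustness against responsiveness: a tight gate discards more corrupted ranges but may also reject valid measurements when the pose uncertainty is underestimated.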

Based on this pose estimator, a demonstration of autonomous navigation between defined poses has been shown to practitioners and power wheelchair users during the IH2A boot camp. This will provide the basis for coarse-grained navigation, while the use of sensor-based control and complementary sensors is being investigated for precision tasks.

7.2.3 Singularity and stability issues in visual servoing

Participant: François Chaumette.

This study is done in the scope of the ANR Sesame project (see Section 9.3). Following our previous works on the complete determination of the singular configurations of image-based visual servoing using four points, we are now interested in determining the complete set of local minima and saddle points, again using point coordinates as visual features, with classical controllers based on the pseudo-inverse of the interaction matrix or following a Levenberg-Marquardt minimization strategy. Preliminary results based on a clever modeling of the system and the intensive use of symbolic calculation are promising.
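For reference, the two classical control laws mentioned above can be written in a few lines for point features. The sketch below builds the standard interaction matrix of normalized image points and computes the camera velocity with a pseudo-inverse law and with one common damped (Levenberg-Marquardt style) form. The gain and damping values, the identity-based damping and the function names are assumptions for illustration; a local minimum corresponds to a nonzero feature error that either law maps to a zero velocity.

```python
import numpy as np

def point_interaction_matrix(x, y, depth):
    """Interaction matrix of a normalized image point (x, y) at the given depth."""
    return np.array([
        [-1.0 / depth, 0.0, x / depth, x * y, -(1.0 + x * x), y],
        [0.0, -1.0 / depth, y / depth, 1.0 + y * y, -x * y, -x],
    ])

def stacked_interaction_matrix(points, depths):
    """Stack the 2x6 blocks of all points into a (2N)x6 matrix."""
    return np.vstack([point_interaction_matrix(x, y, z)
                      for (x, y), z in zip(points, depths)])

def pseudo_inverse_law(L, error, gain=0.5):
    """Camera velocity v = -gain * pinv(L) @ e."""
    return -gain * np.linalg.pinv(L) @ error

def levenberg_marquardt_law(L, error, gain=0.5, damping=0.01):
    """Camera velocity v = -gain * (L^T L + damping * I)^-1 L^T e (one common form)."""
    H = L.T @ L + damping * np.eye(L.shape[1])
    return -gain * np.linalg.solve(H, L.T @ error)

# Four points forming a square in front of the camera, all at depth 1 m.
points = [(0.1, 0.1), (-0.1, 0.1), (-0.1, -0.1), (0.1, -0.1)]
L = stacked_interaction_matrix(points, [1.0] * 4)
# Feature error produced by a small translation along the camera x axis.
error = L @ np.array([0.01, 0.0, 0.0, 0.0, 0.0, 0.0])
print(pseudo_inverse_law(L, error))
print(levenberg_marquardt_law(L, error))
```

Both laws return a zero velocity whenever the error lies in the null space of the transposed interaction matrix, which is why characterizing such configurations matters for convergence analysis.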

7.2.4 Multi-sensor-based control for accurate and safe assembly

Participants: John Thomas, François Pasteau, François Chaumette.

This study is done in the scope of the BPI Lichie project (see Section 9.3). Its goal is to design sensor-based control strategies coupling vision and proximetry data for ensuring precise positioning while avoiding obstacles in dense environments. The targeted application is the assembly of satellite parts.

In a first part of this study, we designed a general ring of proximetry sensors and modeled the system so that tasks such as plane-to-plane positioning can be achieved 43.

7.2.5 Visual servo of a satellite constellation

Participants: Maxime Robic, Eric Marchand, François Chaumette.

This study is also done in the scope of the BPI Lichie project (see Section 9.3). Its goal is to control the orientation of a satellite constellation, using a camera mounted on each satellite, to track particular objects on the ground. In a first part, we concentrated on a single satellite and designed an image-based control scheme able to compensate for the satellite, Earth, and potential object motions 42. This control scheme has then been adapted in order to take into account the dynamic response of the satellite 41.

7.2.6 Visual Exploration of an Indoor Environment

Participants: Thibault Noël, Eric Marchand, François Chaumette.

This study is done in collaboration with the Creative company in Rennes (see Section 7.2.6). It is devoted to the exploration of indoor environments by a mobile robot, typically Pepper (see Section 6.2.2), for a complete and accurate reconstruction of the environment.

In this context, we studied a new sampling-based path planning approach, focusing on the challenges linked to autonomous exploration. The proposed method relies on the definition of a disk graph of free-space bubbles, from which we derive a biased sampling function that expands the graph towards known free space for maximal navigability and frontier discovery. The proposed method demonstrates an exploratory behavior similar to Rapidly-exploring Random Trees, while retaining the connectivity and flexibility of a graph-based planner. We demonstrate the interest of our method by first comparing its path planning capabilities against state-of-the-art approaches, before discussing exploration-specific aspects, namely replanning capabilities and incremental construction of the graph. A simple frontier-driven exploration controller derived from the proposed planning method has also been demonstrated 36.

7.2.7 Model-Based Deformation Servoing of Soft Objects

Participants: Fouad Makiyeh, Alexandre Krupa, Maud Marchal, François Chaumette.

This study takes place in the context of the GentleMAN project (see Section 9.1.2). We proposed a complete pipeline for positioning a feature point of a soft object to a desired 3D position, by acting on a different manipulation point using a robotic manipulator. For that purpose, the analytic relation between the feature point displacement and the robot motion was derived using a coarse mass-spring model (MSM), while taking into consideration the propagation delay introduced by an MSM. From this modeling step, a novel closed-loop controller was designed for performing the positioning task. To compensate for the model approximations, the object is tracked in real time using an RGB-D sensor, which allows any drift between the object and its model to be corrected online. Our model-based and vision-based controller was validated in real experiments and the results showed promising performance in terms of accuracy, efficiency and robustness 35.

7.2.8 Constraint-based simulation of passive suction cups

Participant: Maud Marchal.

In 12, we proposed a physics-based model of the suction phenomenon to achieve simulation of deformable objects like suction cups. Our model uses a constraint-based formulation to simulate the variations of pressure inside suction cups. The respective internal pressures are represented as pressure constraints which are coupled with anti-interpenetration and friction constraints. Furthermore, our method is able to detect multiple air cavities using information from collision detection. We solve the pressure constraints based on the ideal gas law while considering several cavity states. We test our model with a number of scenarios reflecting a variety of uses, for instance, a spring-loaded jumping toy, a manipulator performing a pick-and-place task, and an octopus tentacle grasping a soda can. We also evaluate the ability of our model to reproduce the physics of suction cups of varying shapes, lifting objects of different masses, and sliding on a slippery surface. The results show promise for various applications such as simulation in soft robotics and computer animation.
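The role of the ideal gas law can be seen on a single sealed cavity: at constant temperature and air quantity, the product of pressure and volume stays constant, so pulling on the cup (which enlarges the cavity) lowers the internal pressure and creates a holding force. The sketch below is a back-of-the-envelope illustration of that relation only; it does not reproduce the constraint-based solver or the cavity states of the cited work, and the function names and numerical values are assumptions.

```python
ATMOSPHERIC_PRESSURE = 101_325.0  # Pa, standard atmosphere

def cavity_pressure(initial_pressure, initial_volume, current_volume):
    """Pressure of a sealed cavity at constant temperature and air quantity (P V = const)."""
    return initial_pressure * initial_volume / current_volume

def holding_force(cavity_pressure_pa, sealed_area_m2, ambient=ATMOSPHERIC_PRESSURE):
    """Net force pressing the cup onto the surface from the pressure difference."""
    return (ambient - cavity_pressure_pa) * sealed_area_m2

# A cup sealed at atmospheric pressure whose cavity volume doubles when pulled.
pressure = cavity_pressure(ATMOSPHERIC_PRESSURE, 1.0e-6, 2.0e-6)
force = holding_force(pressure, sealed_area_m2=1.0e-3)
print(f"cavity pressure: {pressure:.1f} Pa, holding force: {force:.2f} N")
```

In a simulator, the cavity volume comes from the deformed mesh at each time step, so the pressure has to be solved jointly with contact and friction, which is what motivates a constraint-based formulation.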

7.2.9 Manipulation of a deformable wire by two UAVs

Participants: Lev Smolentsev, Alexandre Krupa, François Chaumette.

This study takes place in the context of the CominLabs MAMBO project (see Section 9.4). Its main objective is the development of a vision-based control framework for performing autonomous manipulation of a deformable wire attached between two UAVs using data provided by onboard RGB-D cameras. To this end, we developed a visual servoing approach that controls the deformation of a suspended tether cable subject to gravity. The cable shape is modelled with a parabolic curve together with the orientation of the plane containing the tether. The visual features considered are the parabolic coefficients and the yaw angle of that plane. We derived the analytical expression of the interaction matrix that relates the variation of these visual features to the velocities of the cable extremities. Simulations and experimental results obtained with a robotic arm manipulating one extremity of the cable with an eye-to-hand configuration demonstrated the efficiency of this visual servoing approach to deform the tether cable toward a desired shape configuration. This work was submitted in September to the next IEEE ICRA conference.
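As an illustration of the visual features described above, the sketch below extracts two parabolic coefficients and the yaw angle of the vertical plane containing the cable from a set of 3D points. It assumes that the z axis is aligned with gravity, that the points are ordered from one extremity to the other, that the two extremities are not vertically aligned, and that the parabola is expressed relative to the first extremity. The exact parameterization used in the submitted work may differ, and the function name `tether_features` is hypothetical.

```python
import numpy as np

def tether_features(points):
    """Return (a, b, yaw) for cable points given as an (N, 3) array, z pointing up.

    The cable is modelled as z = a * s**2 + b * s in its vertical plane, where s
    is the horizontal distance from the first extremity.
    """
    points = np.asarray(points, dtype=float)
    origin = points[0]
    span = points[-1, :2] - origin[:2]
    yaw = np.arctan2(span[1], span[0])  # orientation of the cable plane
    direction = span / np.linalg.norm(span)
    s = (points[:, :2] - origin[:2]) @ direction  # abscissa in the cable plane
    z = points[:, 2] - origin[2]
    # Least-squares fit of a parabola passing through the first extremity.
    design = np.column_stack([s ** 2, s])
    (a, b), *_ = np.linalg.lstsq(design, z, rcond=None)
    return a, b, yaw

# Synthetic cable: 2 m horizontal span, plane rotated by 30 degrees.
s = np.linspace(0.0, 2.0, 20)
yaw_true = np.pi / 6
cable = np.column_stack([1.0 + s * np.cos(yaw_true),
                         -2.0 + s * np.sin(yaw_true),
                         3.0 + 0.5 * s ** 2 - s])
print(tether_features(cable))
```

With real RGB-D data the points would be noisy and partially occluded, so a robust fit over the segmented cable pixels would replace the plain least-squares step.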

7.2.10 Multi-Robot Formation Control and Localization

Participants: Paolo Robuffo Giordano, Claudio Pacchierotti, Maxime Bernard, Nicola De Carli, Lorenzo Balandi.

Systems composed of multiple robots are useful in several applications where complex tasks need to be performed. Examples range from target tracking to search and rescue operations and load transportation. In many cases, it is desirable to have a flexible formation where interaction links can be created/lost at runtime depending on the task and environment (e.g., for better maneuvering in cluttered spaces). In 14 we have considered the problem of identifying in a decentralized way a possibly faulty robot in the group, and then proposed a decentralized procedure for reconfiguring the group topology to safely remove the faulty agent. The approach is validated via numerical simulations.

Another important problem for multi-robot applications is the need for good inter-robot localization (i.e., estimation of relative poses) before implementing any higher-level formation control. In this context, we have worked on a decentralized trajectory optimization problem that allows a group of robots to (reactively) replan individual optimal trajectories for best estimating their relative poses from the available measurements (e.g., distances or bearings). This work is now in preparation for a submission to the IEEE Transactions on Control of Network Systems.

We are also considering approaches from a different point of view involving the use of machine learning for easing the computational and communication burden of (more classical) model-based formation control and estimation laws. In this direction, we have submitted to IEEE ICRA 2023 a preliminary work where we show how a network can be trained to estimate in a decentralized way the connectivity level of a group of robots by learning from an established model-based estimator. The advantage of the network is that, after a successful training, it has a much lower computational and communication complexity w.r.t. the model-based approach, and appears to scale better w.r.t. the group size. We are now studying how to extend this initial promising result to more complex multi-robot case studies.
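A usual measure of the connectivity level of a robot group is the algebraic connectivity, i.e. the second-smallest eigenvalue of the graph Laplacian, which is positive if and only if the interaction graph is connected. The sketch below computes it centrally from an adjacency matrix, as a reference value that a decentralized estimator or a trained network would have to approximate. Taking the connectivity level to mean algebraic connectivity is an assumption here, as is the function name.

```python
import numpy as np

def algebraic_connectivity(adjacency):
    """Second-smallest eigenvalue of the Laplacian of an undirected graph."""
    adjacency = np.asarray(adjacency, dtype=float)
    laplacian = np.diag(adjacency.sum(axis=1)) - adjacency
    # eigvalsh returns the eigenvalues of a symmetric matrix in ascending order.
    return np.linalg.eigvalsh(laplacian)[1]

# Three robots in a line, in a fully connected triangle, and with one robot isolated.
line = [[0, 1, 0], [1, 0, 1], [0, 1, 0]]
triangle = [[0, 1, 1], [1, 0, 1], [1, 1, 0]]
split = [[0, 1, 0], [1, 0, 0], [0, 0, 0]]
for name, graph in [("line", line), ("triangle", triangle), ("split", split)]:
    print(name, algebraic_connectivity(graph))
```

The centralized computation needs the full adjacency matrix and an eigendecomposition, which is the cost that a learned, decentralized estimate aims to avoid as the group grows.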

7.2.11 Coupling Force and Vision for Controlling Robot Manipulators

Participants: Alexander Oliva, François Chaumette, Paolo Robuffo Giordano, Mark Wijnands.

The goal of this activity is to couple visual and force information for advanced manipulation tasks 51. To this end, we are exploiting the recently acquired Panda robot (see Sect. 6.2.3), a state-of-the-art 7-dof manipulator arm with torque sensing in the joints and the possibility to command torques at the joints or forces at the end-effector. The use of vision in torque-controlled robots is limited by several issues, among which is the difficulty of fusing low-rate images (about 30 Hz) with high-rate torque commands (about 1 kHz).

In 38 we presented results of dynamic visual servoing for the case of moving targets while in interaction with the environment. To this end, we proposed the derivation of a feature space impedance controller for tracking a moving object, as well as an Extended Kalman Filter based on the visual servoing kinematics for increasing the rate of the visual information and estimating the target velocity, for both PBVS and IBVS with image point features. We validated the approach via simulations and real experiments involving a peg-in-hole insertion task with a moving part.

7.2.12 Deep visual servoing

Participants: Eric Marchand, Samuel Felton.

We proposed a new framework to perform VS in the latent space learned by a convolutional autoencoder. We show that this latent space avoids explicit feature extraction and tracking issues and provides a good representation, smoothing the cost function of the VS process. Besides, our experiments show that this unsupervised learning approach allows us to obtain, without labelling cost, an accurate end-positioning, often on par with the best direct visual servoing methods in terms of accuracy but with a larger convergence area. This work 15 is done in collaboration with the Lacodam team.

7.3 Haptic Cueing for Robotic Applications

7.3.1 Wearable Haptics Systems Design

Participants: Claudio Pacchierotti, Maud Marchal, Lisheng Kuang, Marco Aggravi.

We have been working on wearable haptics for a few years now, both from the hardware (design of interfaces) and software (rendering and interaction techniques) points of view 52, 53.

In 30, we developed a wearable cutaneous device capable of applying lateral stretch and position/location haptic feedback to the user’s skin. It is composed of a 2D Cartesian-like structure able to move a pin on the plane parallel to the skin. The pin houses a small metallic sphere 8 mm in diameter. The sphere can be either left free to rotate when the pin moves, providing location feedback about its absolute position, or kept fixed, providing skin stretch feedback about its relative displacement. Results of a human navigation task show an average navigation error of 0.26 m, which is comparable to state-of-the-art vibrotactile guidance techniques using two vibrating armbands. Similarly, in 31, 32, we presented a 2-degrees-of-freedom (2-DoF) hand-held haptic device for human navigation. It is composed of two platforms moving with respect to each other, actuated by two servomotors housed in one of the structures. The device implements a rigid coupling mechanism between the two platforms, based on a three-legged 3-4R constrained parallel linkage, with the two servomotors actuating two of these legs. The device can apply position/kinesthetic haptic feedback to the user's hand(s).

In 13, we explored the use of wearable haptics to render contacts during virtual crowd navigation. We focused on the behavioural changes occurring with or without haptic rendering during a navigation task in a dense crowd, as well as on potential after-effects introduced by the use of haptic rendering. We designed an experiment (N=23) where participants navigated in a crowded virtual train station without, then with, and then again without haptic feedback of their collisions with virtual characters. Results show that providing haptic feedback improved the overall realism of the interaction, as participants more actively avoided collisions.

Finally, in the framework of the H2020 project TACTILITY, we are working on designing interaction and rendering techniques for wearable electrotactile interfaces. Electrotactile feedback is provided by a system composed of electrodes and stimulators (actuators). The electrical current travels through the subdermal area between the anode(s) and cathode(s) and stimulates the nerve endings (i.e., skin’s receptors). The area of the skin in contact with the electrode is stimulated; however, the sensation may spread further when the contact point is near nerve bundles. The way electrotactile systems function is therefore completely different from mechanical and thermal tactile interfaces, as electrotactile feedback is not mediated by any skin receptor. We wrote a review paper on this topic 19, focusing on how electrotactile feedback can be used for immersive Virtual Reality (VR) interactions.

In 20, we carried out a user study in which participants performed a standardized Fitts's law target acquisition task using three feedback modalities: visual, visuo-electrotactile, and visuo-vibrotactile. Performance-wise, the results showed that electrotactile feedback yields significantly better accuracy than vibrotactile and visual feedback, while vibrotactile feedback provided the worst accuracy. Electrotactile and visual feedback enabled a comparable reaction time, while vibrotactile feedback led to a substantially slower reaction time than visual feedback. These outcomes highlighted the importance of (electrotactile) haptic feedback on performance and, importantly, the significance of action-specific effects on spatial and time perception in VR.
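For readers unfamiliar with Fitts's law tasks, the sketch below computes the usual difficulty and throughput measures with the widely used Shannon formulation, ID = log2(D/W + 1). The function names and the example trial are hypothetical. The exact metrics and target geometry used in 20 are not stated here, so this should be read as background rather than as the study's analysis.

```python
import math


def index_of_difficulty(distance, width):
    """Shannon formulation of Fitts's index of difficulty, in bits."""
    if distance < 0 or width <= 0:
        raise ValueError("distance must be >= 0 and width must be > 0")
    return math.log2(distance / width + 1.0)


def throughput(distance, width, movement_time_s):
    """Throughput of a single trial in bits per second: ID / MT."""
    if movement_time_s <= 0:
        raise ValueError("movement time must be positive")
    return index_of_difficulty(distance, width) / movement_time_s


# Hypothetical trial: target 150 mm away, 10 mm wide, acquired in 0.8 s.
trial_id = index_of_difficulty(150.0, 10.0)
trial_tp = throughput(150.0, 10.0, 0.8)
```

A farther or smaller target raises the index of difficulty, so comparing modalities at matched difficulty helps separate the effect of the feedback from the effect of the target layout.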

In 44, 45, we proposed a tactile rendering architecture to render finger interactions in VR. We designed six electrotactile stimulation patterns/effects (EFXs) striving to render different tactile sensations. In a user study, we assessed the six EFXs in three diverse finger interactions: 1) tapping on a virtual object; 2) pressing down a virtual button; 3) sliding along a virtual surface. Results showed the importance of the coherence between the modulation and the interaction being performed. The study also demonstrated the versatility of electrotactile feedback and its efficiency in rendering different haptic information and sensations.

7.3.2 Mid-Air Haptic Feedback

Participants: Claudio Pacchierotti, Thomas Howard, Guillaume Gicquel, Maud Marchal, Lendy Mulot.

In the framework of H2020 projects H-Reality and E-TEXTURE, we have been working to develop novel mid-air haptics paradigms that can convey the information spectrum of touch sensations in the real world, motivating the need to develop new, natural interaction techniques. Both projects ended in 2022.

In 17, we investigated how to achieve sensations of continuity or gaps within tactile 2D curves rendered by ultrasound mid-air haptics (UMH). We studied the perception of pairs of amplitude-modulated focused ultrasound stimuli, investigated perceptual effects which may arise from providing simultaneous UMH stimuli, and provided perception-based rendering guidelines for generating continuous or discontinuous sensations of tactile shapes. Mean gap detection thresholds were found at 32.3 mm spacing between focal points, but a high within- and between-subject variability was observed.

In 49, 50, we presented an overview of mid-air haptic technologies for interacting in immersive environments, also analyzing the future perspectives of the field.

7.3.3 Encountered-Type Haptic Devices

Participants: Elodie Bouzbib.

Encountered-Type Haptic Displays (ETHDs) provide haptic feedback by positioning a tangible surface for the user to encounter. This allows users to freely elicit haptic feedback with a surface during a virtual simulation. ETHDs differ from most current haptic devices, which rely on an actuator that is always in contact with the user.

In 24, we used a Failure Mode and Effects Analysis approach to identify, organize, and analyze the failure modes and their causes in the different stages of an ETHD scenario, and to highlight appropriate solutions from the literature to mitigate them. This analysis helps explain these interfaces’ lack of deployment and ultimately identifies guidelines for future ETHD designers.

7.3.4 Multimodal Cutaneous Haptics to Assist Navigation and Interaction in VR

Participants: Louise Devigne, Marco Aggravi, Inès Lacôte, Pierre-Antoine Cabaret, François Pasteau, Maud Marchal, Claudio Pacchierotti, Marie Babel.

Within the project Inria Challenge 9.3, we became interested in using cutaneous haptics to aid the navigation of people with sensory disabilities. In particular, we investigated the ability of vibrotactile sensations and tap stimulations to convey haptic motion and sensory illusions.

In 34, we investigated whether continuous mechanical stimulations, or taps, that are activated in sequence, can also create a convincing illusion of haptic motion across the skin. Moreover, we tested whether an increased curvature of the contact surface impacts the quality of the felt illusion. Results showed that the proposed “tap” stimulation was as efficient as a 120 Hz vibrotactile stimulation in conveying the haptic motion illusion. Moreover, results showed that the curvature of the contact surface had little effect on the quality of the sensation. In 33, we extended this study to investigate the discrimination of velocity changes in apparent haptic motion and the robustness of this perception, considering two stimulating sensations and two directions of motion. Results show that participants were better at discriminating the velocity of the illusory motion when comparing stimulations with larger differences in the actuators’ activation delay.
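The link between activation delay and perceived velocity can be illustrated with a minimal sketch that builds a sequential activation schedule for a line of actuators. The function names, the actuator spacing, and the timing values are hypothetical and are not taken from 33 or 34. The nominal velocity is simply assumed to be the spacing divided by the delay between successive onsets.

```python
def tap_schedule(n_actuators, delay_s, tap_duration_s):
    """Return (index, start_time, end_time) tuples for a sequential sweep."""
    if n_actuators < 1 or delay_s <= 0 or tap_duration_s <= 0:
        raise ValueError("need at least one actuator and positive timings")
    return [
        (i, i * delay_s, i * delay_s + tap_duration_s)
        for i in range(n_actuators)
    ]


def apparent_velocity(spacing_m, delay_s):
    """Nominal speed of the illusory motion: actuator spacing / onset delay."""
    if delay_s <= 0:
        raise ValueError("delay must be positive")
    return spacing_m / delay_s


# Hypothetical setup: 4 actuators spaced 20 mm apart, 100 ms between onsets.
schedule = tap_schedule(n_actuators=4, delay_s=0.1, tap_duration_s=0.05)
slow = apparent_velocity(spacing_m=0.02, delay_s=0.1)
fast = apparent_velocity(spacing_m=0.02, delay_s=0.05)
```

Halving the activation delay doubles the nominal velocity in this model, which is the kind of difference participants were asked to discriminate.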

In 25, we designed a tangible cylindrical handle that allows interaction with virtual objects extending beyond it. The handle is fitted with a pair of vibrotactile actuators with the objective of providing in-hand spatialized cues indicating direction and distance of impacts. We evaluated its capability for rendering spatialized impacts with external virtual objects. Results show that it performs very well for conveying an impact’s direction and moderately well for conveying an impact’s distance to the user.

In 29, we devised a multi-modal rendering approach for the simulation of virtual moving objects inside a hollow container, based on the combination of haptic and audio cues generated by voice-coil actuators and high-fidelity headphones, respectively. Thirty participants were asked to interact with a target cylindrical hollow object and estimate the number of moving objects inside, relying on haptic feedback only, audio feedback only, or a combination of both. Results indicate that the combination of various senses is important in the perception of the content of a container.

The use of a mobility aid can be challenging for people with both motor and visual impairment. In order to help them increase their autonomy, we proposed in 28 to provide adapted feedback through vibrotactile handles that can be fitted to any walker and that indicate the proximity of obstacles in the user's path. We conducted a technical validation study with 14 able-bodied blindfolded participants, whose objective was to complete a circuit without collisions and as fast as possible. Through a specific user study, we also collected user feedback after 2 months of daily-life use by a blind end-user with cerebellar syndrome. Results show a major improvement in the safety of navigation.

7.3.5 Digital Twins for Robotics

Participants: Claudio Pacchierotti.

Among the most recent enabling technologies, Digital Twins (DTs) emerge as data-intensive network-based computing solutions in multiple domains, from Industry 4.0 to Connected Health. A DT works as a virtual system for replicating, monitoring, predicting, and improving the processes and the features of a physical system, the Physical Twin (PT), which is connected in real-time with its DT. Such a technology, based on advances in fields like the Internet of Things (IoT) and machine learning, offers novel ways to address the issues of complex systems, as in Human-Robot Interaction (HRI) domains.

In 11, we proposed a physical-digital twinning approach to improve the understanding and the management of the PT in contexts of HRI according to the interdisciplinary perspective of neuroergonomics.

7.4 Shared Control Architectures

7.4.1 Shared Control for Multiple Robots

Participants: Marco Aggravi, Paolo Robuffo Giordano, Claudio Pacchierotti.

Following our previous works on flexible formation control of multiple robots with global requirements, in particular connectivity maintenance, in 10 we presented a decentralized haptic-enabled connectivity-maintenance control framework for heterogeneous human-robot teams. The proposed framework controls the coordinated motion of a team consisting of mobile robots and one human, for collaboratively achieving various exploration and search-and-rescue (SAR) tasks. The human user physically becomes part of the team, moving in the same environment as the robots, while receiving rich haptic feedback about the team connectivity and the direction toward a safe path. We carried out human subjects studies, both in simulated and real environments. The results showed that the proposed approach is effective and viable in a wide range of scenarios.
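To make the notion of team connectivity concrete, the sketch below builds a range-limited communication graph from agent positions and checks whether it is connected with a breadth-first search. This is a deliberately simplified, centralized, binary check under assumed 2-D positions and a hypothetical communication range. It is not the decentralized controller of 10, which has to maintain connectivity continuously rather than just test it.

```python
import math
from collections import deque


def communication_graph(positions, comm_range):
    """Adjacency list: two agents are linked if within comm_range of each other."""
    n = len(positions)
    adjacency = {i: [] for i in range(n)}
    for i in range(n):
        for j in range(i + 1, n):
            if math.dist(positions[i], positions[j]) <= comm_range:
                adjacency[i].append(j)
                adjacency[j].append(i)
    return adjacency


def is_connected(adjacency):
    """Breadth-first search from agent 0; connected if every agent is reached."""
    if not adjacency:
        return True
    seen = {0}
    queue = deque([0])
    while queue:
        node = queue.popleft()
        for neighbour in adjacency[node]:
            if neighbour not in seen:
                seen.add(neighbour)
                queue.append(neighbour)
    return len(seen) == len(adjacency)


# Hypothetical team: agent 0 is the human, agents 1 and 2 are robots (metres).
team = [(0.0, 0.0), (1.0, 0.0), (2.0, 0.0)]
connected_before = is_connected(communication_graph(team, comm_range=1.5))

# The last robot drifts out of range of every teammate.
team_after = [(0.0, 0.0), (1.0, 0.0), (4.0, 0.0)]
connected_after = is_connected(communication_graph(team_after, comm_range=1.5))
```

In the first configuration the human reaches the far robot through the middle one, so the team is connected even though the two are not direct neighbours. In the second, the link is lost.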

7.4.2 Shared Control for Virtual Character Animation

Participants: Claudio Pacchierotti.

Shared control techniques can also be used to adapt the motion of virtual characters to respond to some external stimuli, e.g., the motion of the user's avatar.

In 18, we proposed the design of viewpoint-dependent motion warping units that perform autonomous subtle updates on animations through the specification of visual motion features such as visibility or spatial extent. We express the problem as a specific case of visual servoing, where the warping of a given character motion is regulated by a number of visual features to enforce. Results demonstrate the relevance of the approach for different motion editing tasks and its potential to empower virtual characters with attention-aware communication abilities. The effects of non-verbal communication were then studied in more depth in 40, where we investigated the role of gazing in VR.

7.4.3 Shared Control of a Wheelchair for Navigation Assistance

Participants: Louise Devigne, François Pasteau, Marie Babel.

Power wheelchairs allow people with motor disabilities to have more mobility and independence. In order to improve the access to mobility for people with disabilities, we previously designed a semi-autonomous assistive wheelchair system which progressively corrects the trajectory as the user manually drives the wheelchair and smoothly avoids obstacles.

Ecological experiments were conducted in two museums, the Musée des Beaux-Arts and the Musée de Bretagne, both in Rennes 48, 54. During the experiments, a sample of 20 powered wheelchair users, composed of expert users as well as users experiencing high difficulties while steering a wheelchair, were asked to visit a museum for one hour using a powered wheelchair equipped with a driving assistance module. Some users were accompanied by their relatives. We confirmed the excellent ability of the system to assist users in an ecological environment, as well as the beneficial effect on their relatives induced by their awareness of the safety of the user and of the surroundings. Results show that usability, learning, and usefulness are very good. The social dimensions and the use intention were good, indicating a propensity of the users to use the device. Other studies are underway, particularly in elderly care facilities.