2023 Activity report Project-Team POTIOC

RNSR: 201221023D - Research center: Inria Centre at the University of Bordeaux

- In partnership with: Université de Bordeaux, CNRS

- Team name: Novel Multimodal Interactions for a Stimulating User Experience

- In collaboration with: Laboratoire Bordelais de Recherche en Informatique (LaBRI)

- Domain: Perception, Cognition and Interaction

- Theme: Interaction and visualization

Keywords

Computer Science and Digital Science

- A3.2.2. Knowledge extraction, cleaning

- A3.4.1. Supervised learning

- A5.1.1. Engineering of interactive systems

- A5.1.2. Evaluation of interactive systems

- A5.1.4. Brain-computer interfaces, physiological computing

- A5.1.6. Tangible interfaces

- A5.1.7. Multimodal interfaces

- A5.1.8. 3D User Interfaces

- A5.2. Data visualization

- A5.6. Virtual reality, augmented reality

- A5.6.1. Virtual reality

- A5.6.2. Augmented reality

- A5.6.3. Avatar simulation and embodiment

- A5.6.4. Multisensory feedback and interfaces

- A5.9. Signal processing

- A5.9.2. Estimation, modeling

- A9.2. Machine learning

- A9.3. Signal analysis

Other Research Topics and Application Domains

- B1.2. Neuroscience and cognitive science

- B2.1. Well being

- B2.5.1. Sensorimotor disabilities

- B2.6.1. Brain imaging

- B3.1. Sustainable development

- B3.6. Ecology

- B9.1. Education

- B9.1.1. E-learning, MOOC

- B9.5.3. Physics

- B9.6.1. Psychology

1 Team members, visitors, external collaborators

Research Scientists

- Martin Hachet [Team leader, INRIA, Senior Researcher, HDR]

- Pierre Dragicevic [INRIA, Researcher, HDR]

- Yvonne Jansen [CNRS, Researcher]

- Fabien Lotte [INRIA, Senior Researcher, HDR]

- Arnaud Prouzeau [INRIA, ISFP]

- Sebastien Rimbert [INRIA, ISFP]

Faculty Member

- Stephanie Cardoso [UNIV BORDEAUX MONTAI, Associate Professor Delegation, until Aug 2023]

Post-Doctoral Fellows

- Hessam Djavaherpour [UNIV BORDEAUX, Post-Doctoral Fellow]

- Claudia Krogmeier [INRIA, Post-Doctoral Fellow, from Feb 2023]

PhD Students

- Come Annicchiarico [UDL]

- Ambre Assor [INRIA]

- Vincent Casamayou [UNIV BORDEAUX]

- Edwige Chauvergne [INRIA]

- Pauline Dreyer [INRIA, from Oct 2023]

- Aymeric Ferron [INRIA, from Mar 2023]

- Morgane Koval [INRIA]

- Juliette Le Meudec [INRIA, from Oct 2023]

- Valerie Marissens [INRIA, from Dec 2023]

- Maudeline Marlier [SNCF, CIFRE]

- Leana Petiot [INRIA, from Oct 2023]

- Clara Rigaud [SORBONNE UNIVERSITE, until Jun 2023]

- Emma Tison [UNIV BORDEAUX]

- David Trocellier [UNIV BORDEAUX]

- Marc Welter [INRIA]

Technical Staff

- Axel Bouneau [INRIA, Engineer]

- Justin Dillmann [INRIA, Engineer]

- Pauline Dreyer [INRIA, Technician, until Sep 2023]

Interns and Apprentices

- Ines Audinet [INRIA, Intern, from Nov 2023]

- Jonathan Baum [INRIA, Intern, from Mar 2023 until Aug 2023]

- Loic Bechon [INRIA, Intern, from Mar 2023 until Jun 2023]

- Emilie Clement [INRIA, Intern, from Apr 2023 until Jul 2023]

- Aymeric Ferron [INRIA, Intern, until Jan 2023]

- Juliette Le Meudec [INRIA, Intern, from Mar 2023 until Aug 2023]

- Juliette Meunier [INRIA, Intern, from May 2023 until Aug 2023]

- Leana Petiot [INRIA, Intern, from Feb 2023 until Jun 2023]

- Juliette Ribes [UNIV BORDEAUX, Intern, from Jun 2023 until Jul 2023]

- Zachary Traylor [INRIA, Intern, until Jan 2023]

Administrative Assistant

- Anne-Lise Pernel [INRIA, from Mar 2023]

2 Overall objectives

The standard human-computer interaction paradigm based on mice, keyboards, and 2D screens has shown undeniable benefits in a number of fields. It perfectly matches the requirements of a wide range of interactive applications including text editing, web browsing, or professional 3D modeling. At the same time, this paradigm shows its limits in numerous situations. This is for example the case in the following activities: i) active learning educational approaches that require numerous physical and social interactions, ii) artistic performances where both a high degree of expressivity and a high level of immersion are expected, and iii) accessible applications targeted at users with special needs including people with sensori-motor and/or cognitive disabilities.

To overcome these limitations, Potioc investigates new forms of interaction that aim at pushing the frontiers of the current interactive systems. In particular, we are interested in approaches where we vary the level of materiality (i.e., with or without physical reality), both in the output and the input spaces. On the output side, we explore mixed-reality environments, from fully virtual environments to very physical ones, or between both using hybrid spaces. Similarly, on the input side, we study approaches going from brain activities, that require no physical actions of the user, to tangible interactions, which emphasize physical engagement. By varying the level of materiality, we adapt the interaction to the needs of the targeted users.

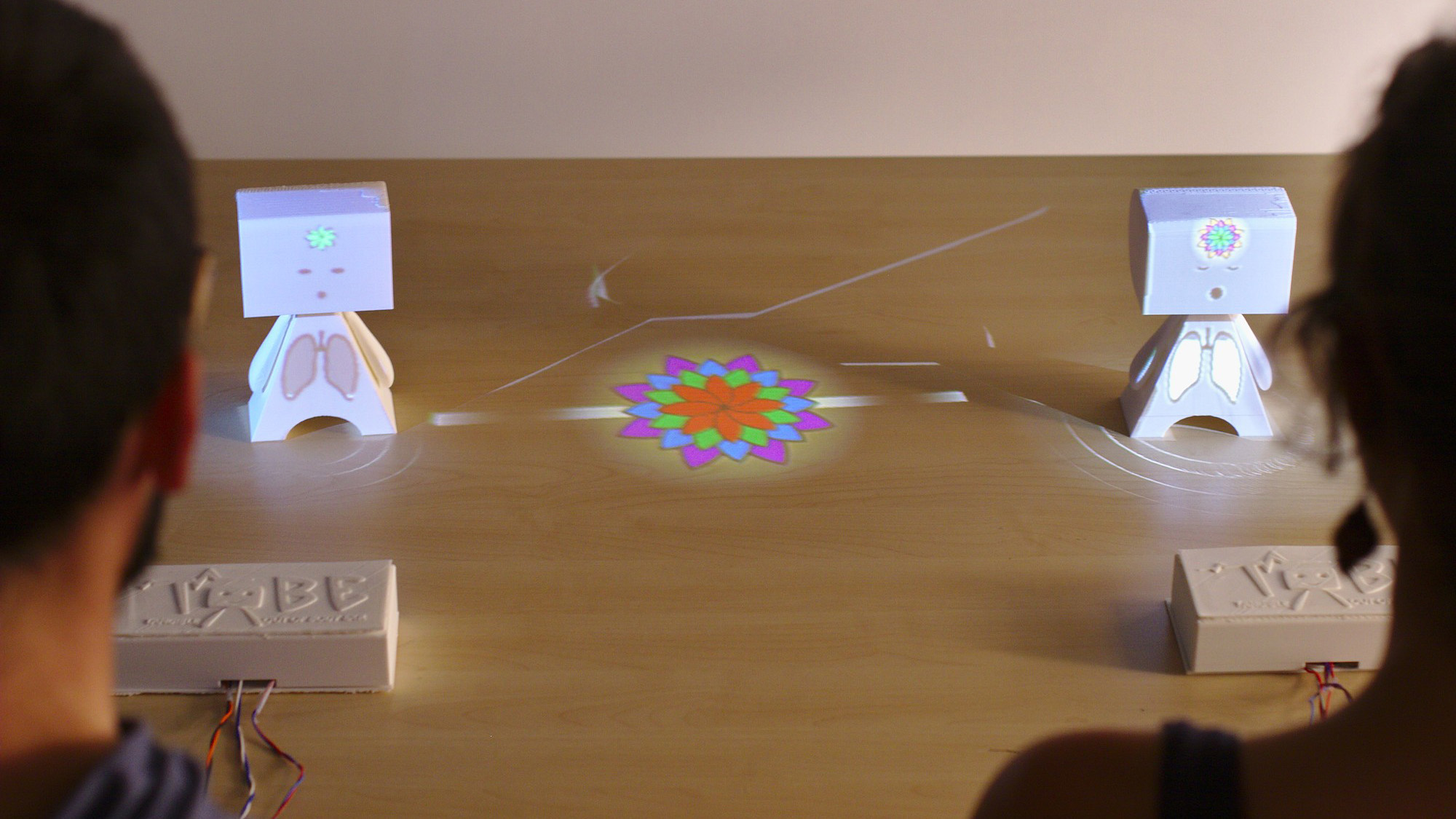

Two users are facing a physical puppet on which digital information is projected.

The main applicative domains targeted by Potioc are Education, Art, Entertainment and Well-being. For these domains, we design, develop, and evaluate new approaches that are mainly dedicated to non-expert users. In this context, we thus emphasize approaches that stimulate curiosity, engagement, and pleasure of use.

3 Research program

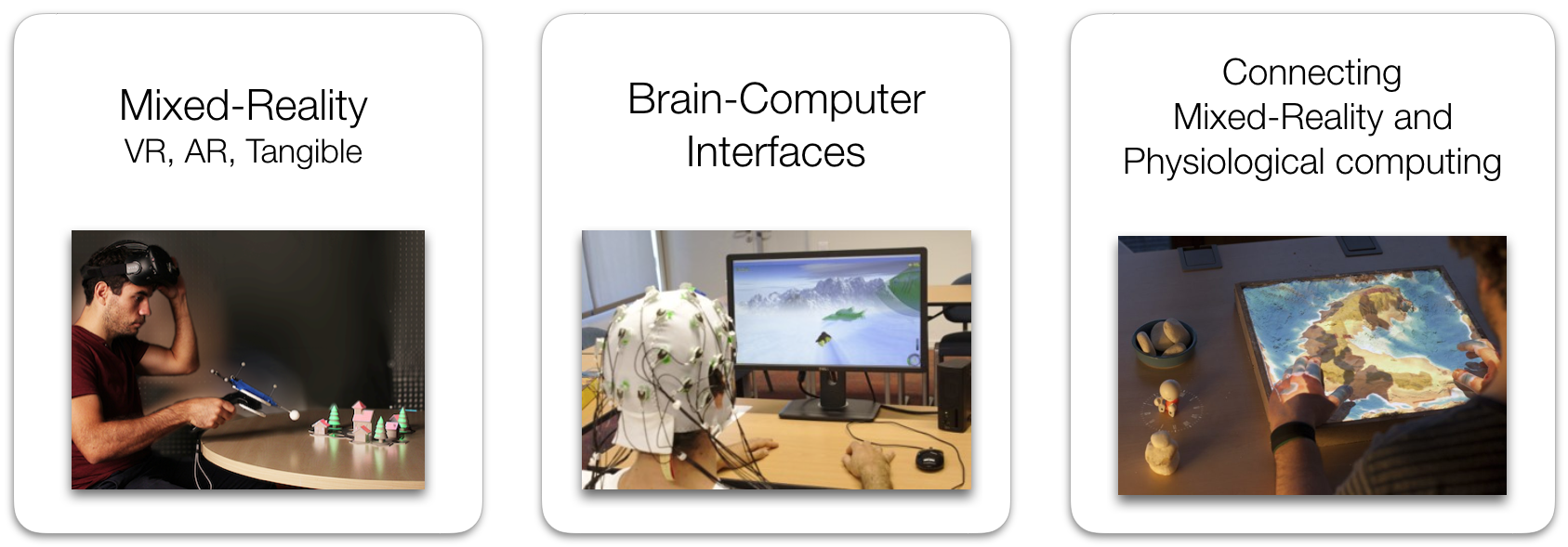

To achieve our overall objective, we follow two main research axes, plus one transverse axis, as illustrated in Figure 2.

In the first axis dedicated to Interaction in Mixed-Reality spaces, we explore interaction paradigms that encompass virtual and/or physical objects. We are notably interested in hybrid environments that co-locate virtual and physical spaces, and we also explore approaches that allow one to move from one space to the other.

The second axis is dedicated to Brain-Computer Interfaces (BCI), i.e., systems enabling users to interact by means of brain activity only. We target BCI systems that are reliable and accessible to a large number of people. To do so, we work on brain signal processing algorithms as well as on understanding and improving the way we train our users to control these BCIs.

Finally, in the transverse axis, we explore new approaches that involve both mixed-reality and neuro-physiological signals. In particular, tangible and augmented objects allow us to explore interactive physical visualizations of human inner states. Physiological signals also enable us to better assess user interaction, and consequently, to refine the proposed interaction techniques and metaphors.

Three images visually represent Potioc's research axes: a user with an HMD facing physical objects, a user wearing an EEG in front of a screen, and a sandbox augmented with digital information projected on it.

From a methodological point of view, for these three axes, we work at three different interconnected levels. The first level is centered on the human sensori-motor and cognitive abilities, as well as user strategies and preferences, for completing interaction tasks. We target, in a fundamental way, a better understanding of humans interacting with interactive systems. The second level is about the creation of interactive systems. This notably includes development of hardware and software components that will allow us to explore new input and output modalities, and to propose adapted interaction techniques. Finally, at a last, higher level, we are interested in specific application domains. We want to contribute to the emergence of new applications and usages, with a societal impact.

4 Application domains

4.1 Education

Education is at the core of the motivations of the Potioc group. Indeed, we are convinced that the approaches we investigate—which target motivation, curiosity, pleasure of use and high level of interactivity—may serve education purposes. To this end, we collaborate with experts in Educational Sciences and teachers for exploring new interactive systems that enhance learning processes. We are currently investigating the fields of astronomy, optics, and neurosciences. We have also worked with special education centres for the blind on accessible augmented reality prototypes. Currently, we collaborate with teachers to enhance collaborative work for K-12 pupils. In the future, we will continue exploring new interactive approaches dedicated to education, in various fields. Popularization of Science is also a key domain for Potioc. Focusing on this subject allows us to get inspiration for the development of new interactive approaches.

4.2 Art

Art, which is strongly linked with emotions and user experiences, is also a target area for Potioc. We believe that the work conducted in Potioc may be beneficial for creation from the artist's point of view, and it may open new interactive experiences from the audience's point of view. As an example, we have worked with colleagues who are specialists in digital music, and with musicians. We have also worked with jugglers and we are currently working with a scenographer with the goal of enhancing the interactivity of physical mockups and improving user experience.

4.3 Entertainment

Similarly, entertainment is a domain where our work may have an impact. We notably explored BCI-based gaming and non-medical applications of BCI, as well as mobile Augmented Reality games. Once again, we believe that our approaches that merge the physical and the virtual world may enhance the user experience. Exploring such a domain will raise numerous scientific and technological questions.

4.4 Well-being

Finally, well-being is a domain where the work of Potioc can have an impact. We have notably shown that spatial augmented reality and tangible interaction may favor mindfulness activities, which have been shown to be beneficial for well-being. More generally, we explore introspectible objects, which are tangible and augmented objects that are connected to physiological signals and that foster introspection. We explore these directions for the general public, including people with special needs.

5 Social and environmental responsibility

5.1 Mental Health and accessibility

In collaboration with colleagues in neuropsychology (Antoinette Prouteau), we are exploring how augmented reality can help to better explain schizophrenia and fight against stigmatization. This project is directly linked to Emma Tison's PhD thesis and our Inria “Action exploratoire” LiveIt.

Previously, we have been interested in designing and developing tools that can be used by people suffering from physiological or cognitive disorders. In particular, we used the PapARt tool developed in the team to contribute to making board games accessible for people suffering from visual impairment 58. We also designed a MOOC player, called AIANA, in order to ease access for people with cognitive disorders (attention, memory). See the dedicated page.

As part of our research on Brain-Computer Interfaces, we also worked with users with severe motor impairment (notably tetraplegic users or stroke patients) to restore or replace some of their lost functions, by designing BCI-based assistive technologies or motor rehabilitation approaches, see, e.g., 1.

5.2 Augmented reality for environmental challenges

In response to the big challenge of climate change, we are currently orienting our research towards approaches that may contribute to pro-environmental behaviors. To do so, we are framing research directions and building projects with the objective of putting our expertise in HCI, visualization, and mixed-reality to work for the reduction of human impact on the planet (see 45). In 2023, we officially started the ANR Be·aware project dedicated to this subject with colleagues in environmental sciences (CIRED) and behavioral sciences (Lessac). See also ARwavs in 8.10.

5.3 Humanitarian information visualization

Members of the team are involved in research promoting humanitarian goals, such as studying how to design visualizations of humanitarian data in a way that promotes charitable donations to traveling migrants and other populations in need, or how to convey income inequalities in a way that makes people more likely to favor redistribution. Some of this research has been featured in a recent HDR dissertation (see Section 8.15), but more research is underway.

5.4 Gender Equality

Members of the team are involved in research on gender in academia, such as patterns of collaboration between men and women, and gender balance in organization and steering committees, and among award recipients (more in the 2022 RADAR report).

6 Highlights of the year

- Pierre Dragicevic defended his HDR.

- ERC “Proof of concept” grant SPEARS (Skill Performance Estimation from cARdiac Signals) accepted

6.1 Awards

- Yvonne Jansen received the bronze medal of CNRS.

- Fabien Lotte received the Nature Mentorship Award 2023 (“Mid-career” category)

- Fabien Lotte received the Lovelace-Babbage Award 2023 from the French academy of science in collaboration with the French Informatics Society

7 New software, platforms, open data

7.1 New software

7.1.1 SHIRE

- Name: Simulation of Hobit for an Interactive and Remote Experience

- Keywords: Unity 3D, Optics, Education

- Functional Description: SHIRE provides a fully digital simulation of the HOBIT device, an augmented table that enhances the teaching of optics at university level. It can be used outside of the classroom and still provide an experience similar to the one available on HOBIT. SHIRE can be used collaboratively: several instances of the software can connect to each other, allowing groups to work on the same session.

- Contact: Vincent Casamayou

- Participants: Vincent Casamayou, Justin Dillmann, Martin Hachet, Bruno Bousquet, Lionel Canioni

7.2 New platforms

7.2.1 OpenViBE

Participants: Axel Bouneau, Fabien Lotte.

External collaborators: Thomas Prampart [Inria Rennes - Hybrid], Anatole Lécuyer [Inria Rennes - Hybrid].

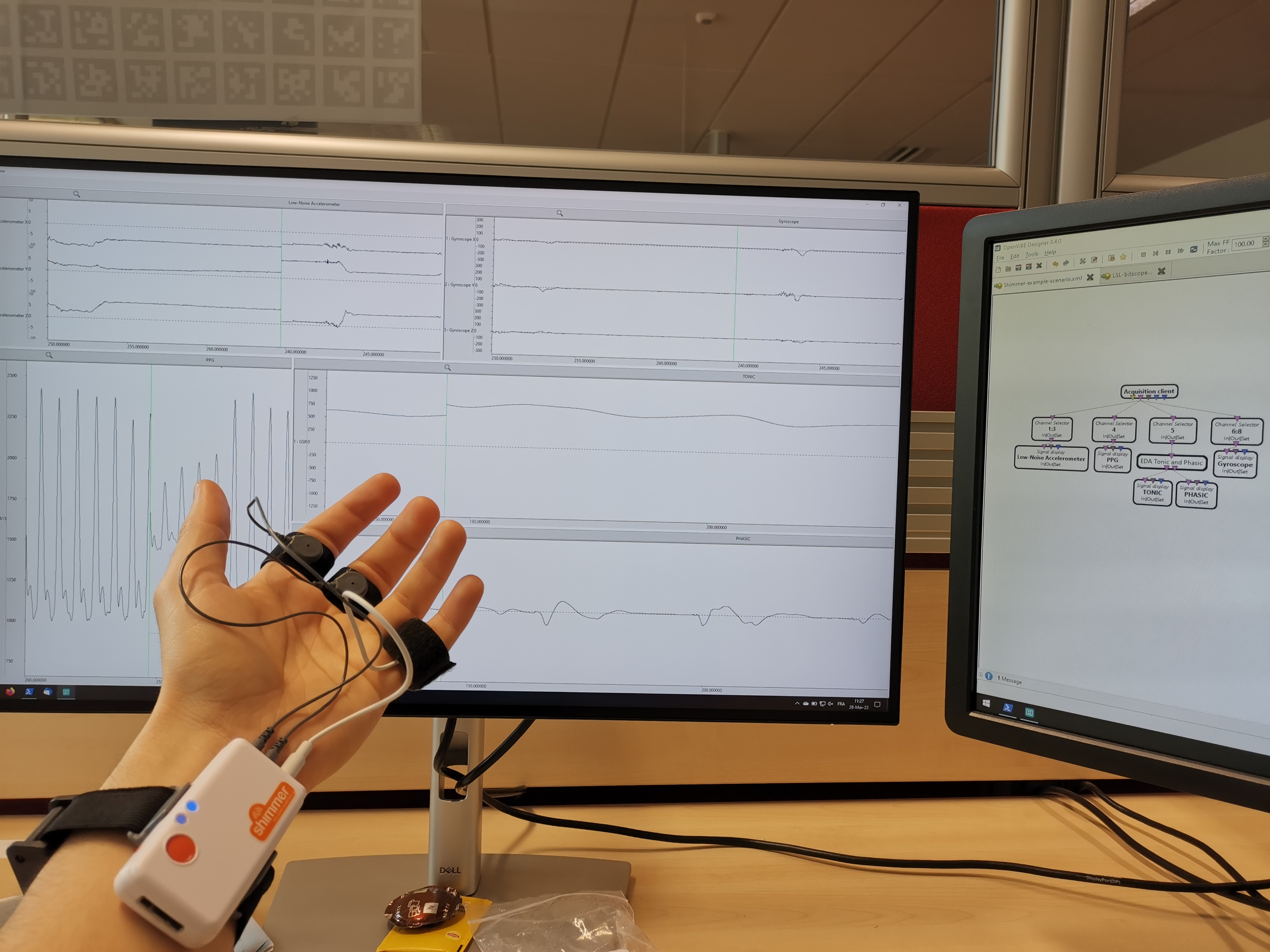

OpenViBE is free and open-source software dedicated to designing, testing and using brain-computer interfaces. In 2023, version 3.5.0 of OpenViBE was released, and some development has been done towards the upcoming 3.6.0 release. These updates include, among others, the following features that were developed by the Potioc team.

OpenViBE designer:

- New metaboxes: tonic and phasic filters for Galvanic Skin Response (GSR) (3.6.0)

- New metabox: asymmetry index for Electroencephalography (EEG) (3.6.0)

- New box: pulse rate calculator for Photoplethysmography (PPG) (3.6.0)

- Various bug fixes (3.5.0) and (3.6.0)

OpenViBE Acquisition server:

- Updated driver: Shimmer GSR driver (performance and user interface improvements) (3.5.0)

A picture showing a user wearing the Shimmer, a wristband with fingertip sensors.

7.3 Open data

- osf.io/nu4z3/ – Research material and participants' sketches shared on OSF for the VIS'23 short paper Towards Autocomplete Strategies for Visualization Construction 40

- osf.io/q5fr6/ – Fabrication instructions for the prototype generated for the project Edo: A Participatory Data Physicalization on the Climate Impact of Dietary Choices 38

- osf.io/ufzp5/ – Research material (including data and analysis scripts) and preregistration shared on OSF for the paper Decoupling Judgment and Decision Making: A Tale of Two Tails 20

- osf.io/v4yxs/ – Research material (including data and analysis scripts) and preregistration shared on OSF for the paper Augmented Reality waste accumulation visualization evaluation 45

- zenodo.org/records/7554429 – A large public EEG database with users' profile information for motor imagery Brain-Computer Interface research 49

8 New results

8.1 Practical work in quantum physics

Participants: Vincent Casamayou, Justin Dillmann, Martin Hachet.

External collaborators: Bruno Bousquet [Univ. Bordeaux], Lionel Canioni [Univ. Bordeaux], Jean-Paul Guillet [Univ. Bordeaux].

HQBIT (Hybrid Quantum Bench for Innovative Teaching) is a tangible and AR optical bench, based on the HOBIT platform, that we are developing to perform quantum optics experiments. It allows the user to reproduce quantum optics experiments in a real-time simulated environment, using tangible reproductions of optical elements, and augmented reality to provide pedagogical information. This simulation includes numerous optical components (detectors, nonlinear crystals, pulsed lasers, and so on) and light characteristics (e.g., photon statistics and entanglement), which are required to recreate quantum optics phenomena. The system was designed in direct collaboration with physicists working in the optics field to ensure the precision and accuracy of the simulation. Using HQBIT, students can reproduce from scratch key quantum optics experiments such as Alain Aspect’s experiment, which demonstrates the entanglement between a pair of photons, or the Mach-Zehnder interferometer, which illustrates wave-particle duality. See 4 and 48.

A view of the desktop version of the HOBIT software

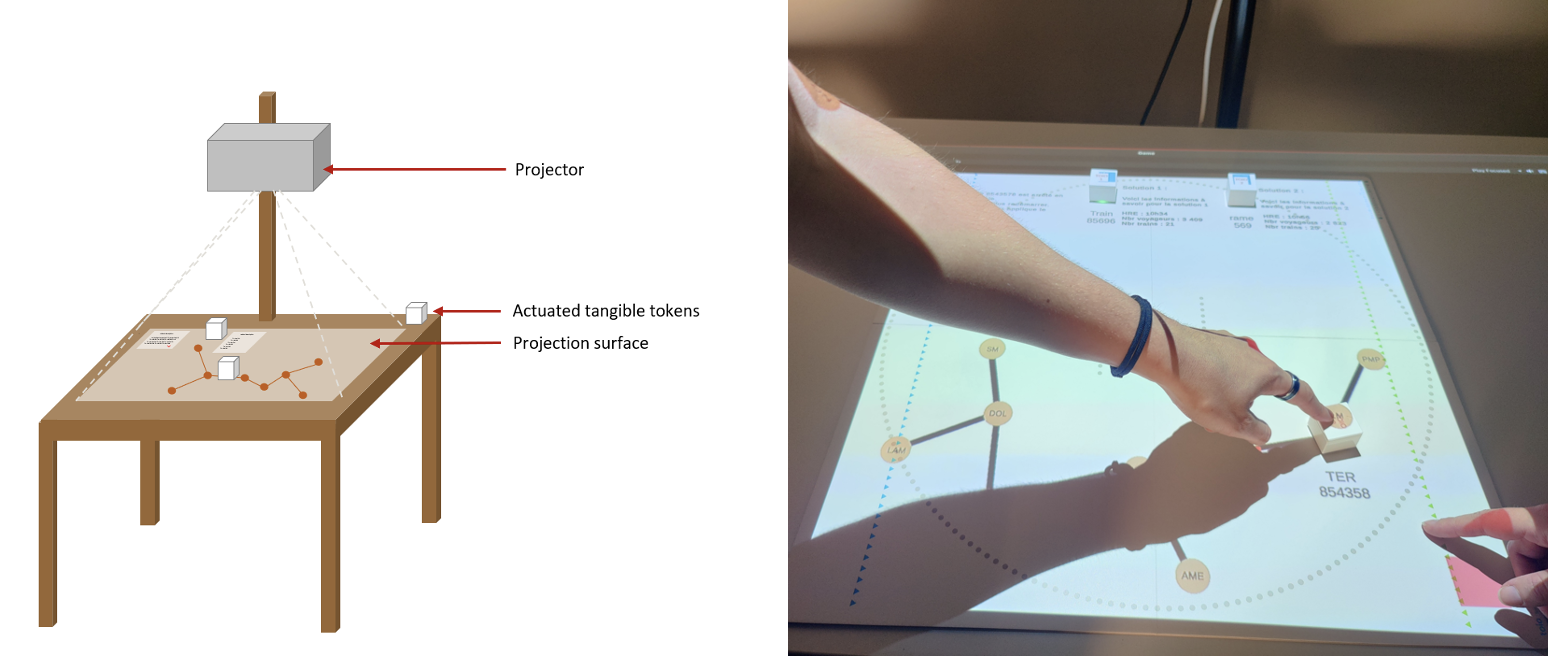

8.2 Tangible interfaces for Railroad Traffic Monitoring

Participants: Maudeline Marlier, Arnaud Prouzeau, Martin Hachet.

Maudeline Marlier started her PhD in March 2022 at Potioc on a Cifre contract with the SNCF on the use of tangible interaction in railroad traffic monitoring control rooms. Although automation in such contexts is increasing, particularly with the expansion of train traffic worldwide, the role of humans remains essential. This is particularly true in crisis situations, where operators with different expertise need to collaborate to make critical decisions. We conducted an in-the-field study to observe how operators work and collaborate, especially in crisis scenarios, and to identify the limits of the current approaches. Inspired by this, we developed a prototype to investigate the use of actuated tangible tokens on a tabletop to visualise and collaboratively explore different solutions to resolve incidents (see Fig. 5). To gauge the implications and opportunities of our approach, we performed formative evaluations with operators in a high-speed train control centre. Our results suggest that actuated tangible tokens are promising for collaboration: they allow operators to add information with a simple gesture, and their movements on the table make the situation easy to understand. A paper on this work is going to be submitted to ACM ISS 2024.

Left: Schema of the prototype with a projector, a table and actuated tangible tokens. Right: An up-close picture of two people interacting with the tangible actuated tokens on the table.

Schema (left) and picture (right) of the implemented prototype with projection and actuated tangible tokens.

8.3 Avatars in Augmented Reality

Participants: Adélaïde Genay, Martin Hachet.

External collaborators: Anatole Lécuyer [Inria Rennes - Hybrid], Kiyoshi Kiyokawa [NAIST, Japan], Riku Otono [NAIST, Japan].

In 2023, we continued our work with our colleagues from Japan on how the Sense of Embodiment (SoE) can be enhanced by introducing transitions between one's real body and its virtual counterpart. In particular, we have shown that visual transitions effectively improve the SoE 32. We are also exploring priming approaches, which tend to show that the SoE can be enhanced when the subject follows a mental preparation protocol.

8.4 Knowledge Resources in Fabrication Workshops

Participants: Clara Rigaud, Yvonne Jansen.

External collaborators: Gilles Bailly.

Creating, using, and sharing knowledge resources is central to the culture and practice of fabrication workshops. We reviewed the research literature on these knowledge resources, covering the field of Human-Computer Interaction, but also Education and Design. We identify four goals that a maker may pursue when creating a resource: (1) Representing a fabrication project, (2) Reusing one's work, (3) Supporting reflection, and (4) Communicating. For each of these goals, we articulate two challenges that makers may face. We then illustrate, through four examples, how this set of challenges can be used as a grid to compare different approaches, before discussing the relationship between the challenges and the tools to address them. This work resulted in an article published at IHM 36.

8.5 Position paper: An Interdisciplinary Approach to Designing and Evaluating Data-Driven Interactive Experiences for Sustainable Decision-Making

Participants: Yvonne Jansen, Ambre Assor, Pierre Dragicevic, Aymeric Ferron, Arnaud Prouzeau, Martin Hachet.

External collaborators: Thomas Le Gallic [CIRED], Ivan Ajdukovic [LESSAC], Eleonora Montagner [LESSAC], Eli Spiegelman [LESSAC], Angela Sutan [LESSAC].

We wrote a position paper on our interdisciplinary research approach taken in the ANR Be·Aware project: Running long-term field studies is a valuable yet slow and costly approach to test the efficacy of interaction designs on people's everyday decision making and behavior. In this position paper we described the rationale behind an interdisciplinary approach to designing and evaluating data-driven interactive experiences for sustainable decision-making. We are a group consisting of HCI and visualization researchers, energy-economy modeling experts who work on mitigation pathways, and behavioral economists who run controlled experiments to study how people make decisions in an incentivized context. The intent of this collaboration is twofold: (1) we study how to design efficient data-driven experiences to help people build an intuition concerning the sustainability of daily choices and actions; (2) we explore the mutual benefit of working across disciplinary boundaries to go beyond the limits of our respective methodological toolboxes. We illustrated our approach through an example of a work-in-progress.

8.6 Exploring how to support Inuits in learning of RADAR imagery color scales data for sea ice safety

Participants: Hessam Djavaherpour, Yvonne Jansen.

External collaborators: Lynn Moorman [Mount Royal University], Faramarz Samavati [University of Calgary].

The changing climate and increasingly unpredictable sea ice conditions have created life-threatening risks for Inuit, the residents of the Arctic, who depend on the ice for transportation and livelihood. In response, they are turning to technology (e.g., RADAR imagery from the Canadian RADARSAT satellite) to augment their traditional knowledge of the ice and to map potential hazards. The difficulty lies in the actual RADAR interpretation process. In order to support understanding of the RADAR image content, we reported a work-in-progress, INTUIT, a physicalization that represents the RADAR reflection strength, which is highly influenced by surface roughness, as a tactile texture. Such tactile texture is made by resampling the RADAR imagery to a number of UV cells and mapping the average brightness value of each cell to a physical variable. A proof of concept was designed for a region in Baffin Island (Nunavut) and sent to the Arctic for initial feedback. Preliminary study results were promising and suggest that INTUIT could facilitate the interpretation learning process for RADAR imagery.
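The resampling step described above can be illustrated with a minimal sketch. It is not the INTUIT implementation: it assumes the cells form a regular grid, that brightness is an 8-bit value, and that the physical variable is a bump height in millimetres. The function names `resample_to_cells` and `brightness_to_height` and the 3 mm maximum are hypothetical choices made for illustration only.

```python
from statistics import mean


def resample_to_cells(image, rows, cols):
    """Average the brightness of a 2D image over a rows x cols grid of cells.

    `image` is a list of equally long rows of brightness values.
    Assumes rows <= image height and cols <= image width, so no cell is empty.
    """
    height, width = len(image), len(image[0])
    cells = []
    for r in range(rows):
        y0, y1 = r * height // rows, (r + 1) * height // rows
        cell_row = []
        for c in range(cols):
            x0, x1 = c * width // cols, (c + 1) * width // cols
            block = [image[y][x] for y in range(y0, y1) for x in range(x0, x1)]
            cell_row.append(mean(block))
        cells.append(cell_row)
    return cells


def brightness_to_height(cells, max_brightness=255, max_height_mm=3.0):
    """Map each cell's average brightness linearly to a bump height (assumed variable)."""
    return [
        [round(value / max_brightness * max_height_mm, 2) for value in row]
        for row in cells
    ]


# Toy 4x4 "RADAR" image resampled to a 2x2 grid of cells.
image = [
    [0, 0, 255, 255],
    [0, 0, 255, 255],
    [100, 100, 50, 50],
    [100, 100, 50, 50],
]
cells = resample_to_cells(image, 2, 2)
heights = brightness_to_height(cells)
```

A linear mapping is only one option; a real physicalization would likely tune the mapping to what can be fabricated and distinguished by touch.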

8.7 Simulation of schizophrenia in AR

Participants: Claudia Krogmeier, Emma Tison, Justin Dillmann, Arnaud Prouzeau, Martin Hachet.

External collaborators: Antoinette Prouteau [Université de Bordeaux].

In collaboration with colleagues in neuropsychology from Université de Bordeaux, we explore the use of AR to let students in mental health better understand what schizophrenia is. This work is part of the Inria “Action Exploratoire” LiveIt and benefits from an interdisciplinary PhD funding from Université de Bordeaux.

Symptoms of schizophrenia are often difficult for those without schizophrenia to understand, which can lead to significant stigmatization towards those who experience this disorder. There exist numerous simulations of schizophrenia across different modalities such as audio recordings, desktop simulations, as well as virtual and augmented reality simulations which have tried to mitigate stigma. While many of these simulations have proven efficacious in reducing multiple elements of stigma, several others have instead increased the desire for social distance from those with schizophrenia. In our work, we are designing an interactive simulation of schizophrenia within an augmented reality application. We will investigate how users experience various symptoms, and determine if the developed simulation reduces stigma towards those with schizophrenia. We will collect both qualitative and quantitative data in order to understand the user’s experience with the simulation, and expand upon our prototype in the future in order to investigate the representation of additional symptomatology through augmented reality.

8.8 Use of Space in Immersive Analytics

Participants: Arnaud Prouzeau, Edwige Chauvergne, Pierre Dragicevic, Martin Hachet.

External collaborators: Yidan Zhang [Monash University], Barrett Ens [Monash University], Sarah Goodwin [Monash University], Kadek Satriadi [Monash University].

An immersive system is one whose technology allows us to “step through the glass” of a computer display to engage in a visceral experience of interaction with digitally-created elements. As immersive technologies have rapidly matured to bring commercially successful virtual and augmented reality (VR/AR) devices and mass market applications, researchers have sought to leverage their benefits, such as enhanced sensory perception and embodied interaction, to aid human data understanding and sensemaking. In 2023, we focused mainly on the immersive visualisation of spatio-temporal datasets and on storytelling.

We particularly focused on space-time cube visualisation. The space-time cube is a common approach for spatial-temporal data visualisation. However, little research has investigated its design choices in virtual environments. We conducted two user studies to explore the relative effectiveness of visual encodings for an immersive layered space-time cube. In the first study, we evaluated data encodings on different map scales (See Fig. 6). Participants performed value identification, value comparison, and trend identification tasks. In the second study, we investigated the effect of shapes of visual objects on the perception of encoding for comparison and trend tasks. Our results suggested that colour overall performs the best while the effectiveness of area and thickness varies for different map scales and task types. The impact of shape is limited, as the results show that colour is more effective than area across the majority of studied shapes. We believe the findings will guide future designs of virtual space-time cube visualisations in enhancing data understanding. This work is under review at the conference CHI 2024.

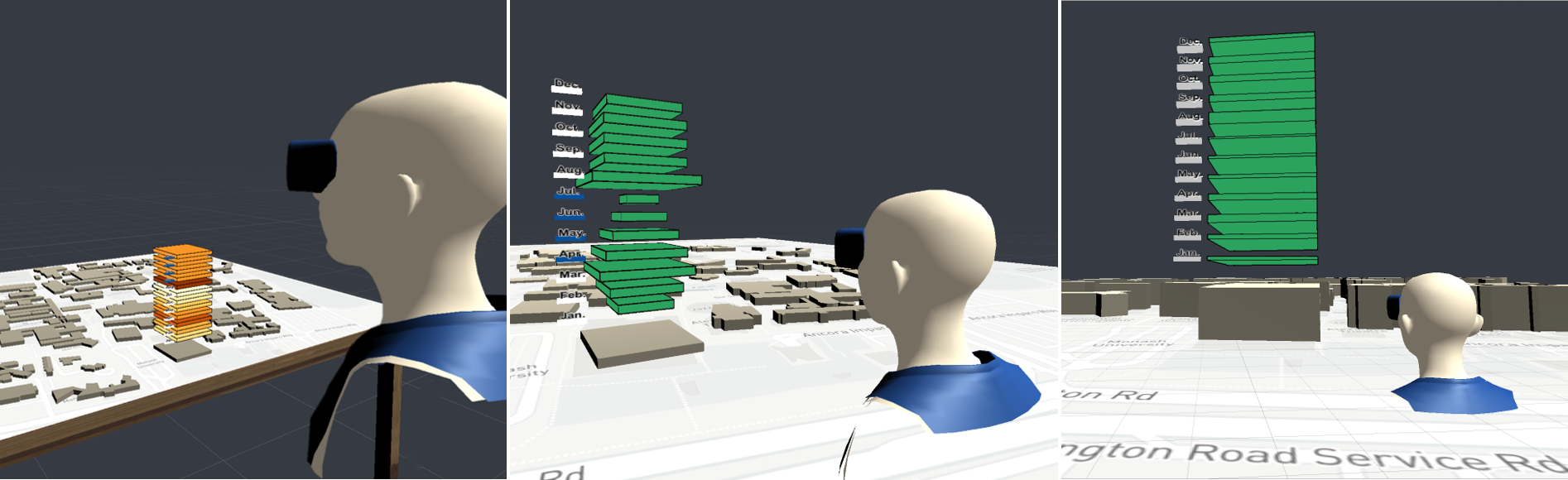

Three scenarios from left to right. Left: a user is located in front of a tabletop. A campus map is on the tabletop with a colour-encoded space time cube for a single building. Middle: a user is located on a medium scale map looking at an area-encoded space time cube for a single building. Right: a user is located on a large scale map looking at a thickness-encoded space time cube for a single building.

Illustration of the immersive space-time cube prototype used in Study 1. A campus map showing one year's worth of monthly energy consumption data for the selected building. The study tested the accuracy, speed and user preference of three popular data encodings (colour, area, thickness) across three map scales (small, medium, large) and for three types of user task (identify, compare and trend). Left: colour encoding on small scale. Middle: area encoding on medium scale. Right: thickness encoding on large scale.
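To make the three encodings concrete, here is a minimal sketch of how one data value per layer could be mapped to colour, area, or thickness. The exact mappings used in the study are not described here, so everything below is an assumption: the function names, the colour ramp endpoints, and the maximum side and thickness values are hypothetical.

```python
import math


def normalise(values):
    """Rescale values linearly to the range [0, 1]."""
    lo, hi = min(values), max(values)
    span = hi - lo
    return [(v - lo) / span if span else 0.0 for v in values]


def colour_encoding(t, low=(255, 255, 204), high=(128, 0, 38)):
    """Interpolate linearly between two RGB colours (assumed ramp)."""
    return tuple(round(a + (b - a) * t) for a, b in zip(low, high))


def area_encoding(t, max_side=1.0):
    """Side length of a square layer whose AREA is proportional to t."""
    return max_side * math.sqrt(t)


def thickness_encoding(t, max_thickness=0.2):
    """Layer thickness proportional to t."""
    return max_thickness * t


# One layer per time step, e.g. monthly energy consumption (toy values).
monthly_kwh = [100, 200, 300, 500]
layers = [
    {
        "colour": colour_encoding(t),
        "side": area_encoding(t),
        "thickness": thickness_encoding(t),
    }
    for t in normalise(monthly_kwh)
]
```

The square root in `area_encoding` reflects a common visualization practice: if the side length itself scaled with the value, the perceived area would grow quadratically and exaggerate differences.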

With the PhD student Edwige Chauvergne, we also started exploring the use of immersive technologies to visualise data in the context of storytelling. More specifically we are studying the transition between abstract visualisation with quantitative information with an exocentric view and the context of one specific data point with an egocentric point of view that can be more engaging, visceral and lead to an enhanced emotional response. We used data on feminicides in France as a case study.

8.9 Immersive and Collaborative Practical Activities for Education

Participants: Juliette Le Meudec, Arnaud Prouzeau, Martin Hachet.

External collaborators: Anastasia Bezerianos [Equipe ILDa - Inria Saclay], Chloé Mercier [Equipe Mnemosyne - Inria Bordeaux].

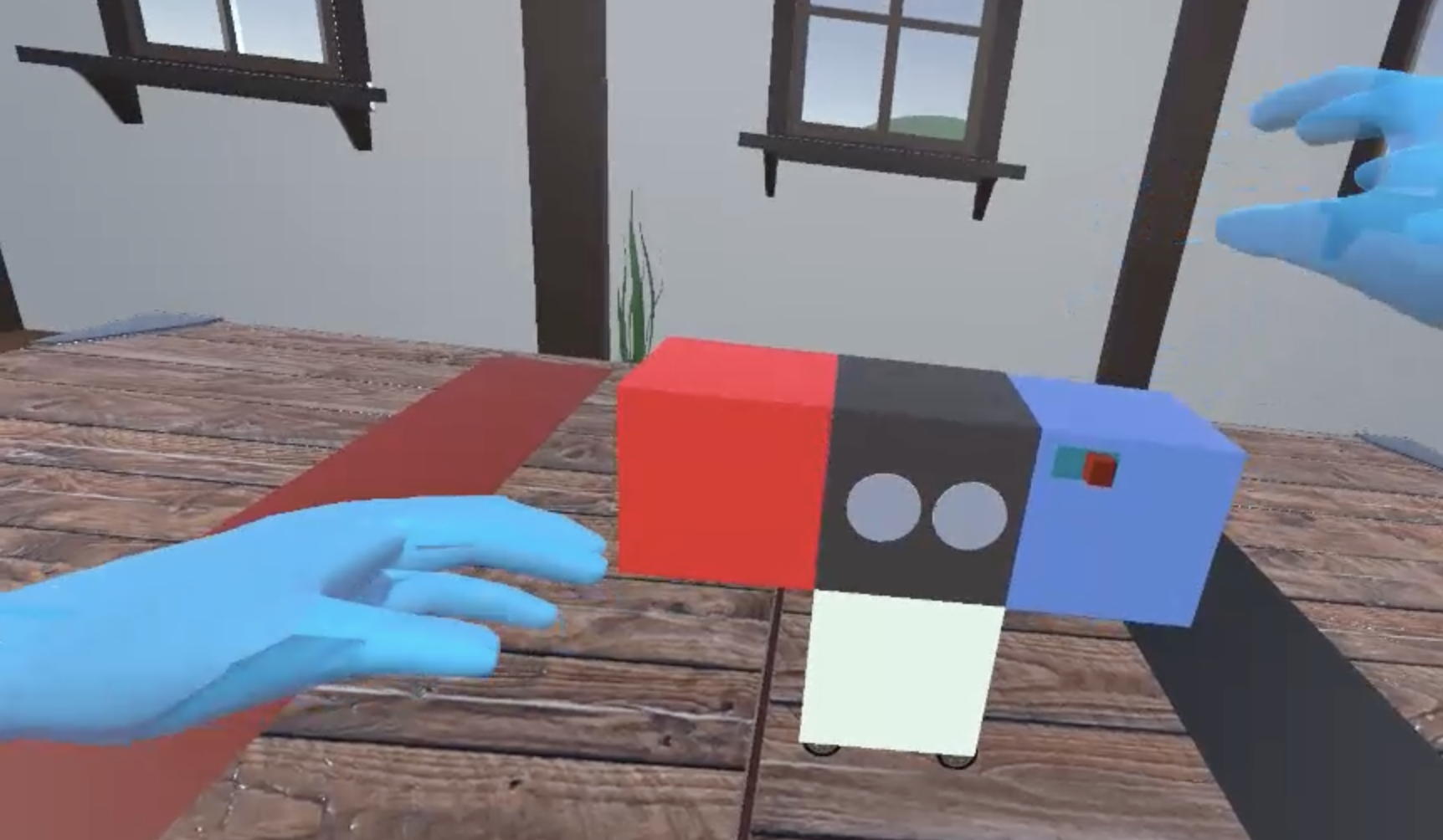

A screenshot of the VR application with four cubes assembled to make a vehicle.

Immersive technologies hold significant promise in engaging students in educational activities. These technologies can foster interaction, immersion, and collaboration among students, creating unique opportunities to enhance various aspects of learning. However, collaboration in virtual reality can be challenging. Habits of collaboration developed in real life may not necessarily translate to this environment. For instance, some communication and problem-solving methods may require adaptation because some social cues, such as facial expressions, are missing in VR, which raises challenges for the use of these emerging educational technologies. At the same time, VR offers highly interesting new perspectives for education, enabling us to imagine new ways of working together. Therefore, it becomes imperative to closely explore the process of collaboration in virtual reality, with a focus on the learning perspective, an area where virtual reality is experiencing significant growth.

We thus implemented a virtual reality application specifically designed to study collaboration between two individuals engaged in an educational task focused on creative problem-solving. This task was inspired by a task designed in the ANR project CreaCube with real physical robots. In this task, participants are asked to assemble a vehicle from four cubes, provided without any explanation of the functionality of each cube (see Fig. 7). They have to observe the cubes and try different combinations to understand what each cube does. Our application offers a unique way of studying collaborative processes in the specific context of learning, opening up new avenues for improving educational experiences using virtual reality. This is part of the ANR JCJC project ICARE and is going to be submitted as a Work In Progress at IHM 2024.

8.10 ARwavs (updated 2023)

Participants: Ambre Assor, Pierre Dragicevic, Arnaud Prouzeau, Martin Hachet.

Virtual objects displayed in the real world.

The negative impact humans have on the environment is partly caused by thoughtless consumption leading to unnecessary waste. A likely contributing factor is the relative invisibility of waste: waste produced by individuals is either out of their sight or quickly taken away. Nevertheless, waste disposal systems sometimes break down, creating natural information displays of waste production that can have educational value. We take inspiration from such natural displays and introduce a class of situated visualizations we call Augmented Reality Waste Accumulation Visualizations or ARwavs, which are literal representations of waste data embedded in users' familiar environment. We implemented examples of ARwavs (see Figure 8) and demonstrated them in feedback sessions with experts in pro-environmental behavior, and during a large tech exhibition event. We discuss general design principles and trade-offs for ARwavs. Finally, we conducted a study suggesting that ARwavs yield stronger emotional responses than non-immersive waste accumulation visualizations and plain numbers.

Late 2022, we published an early preprint on this work 45. In 2023, we published the work in the ACM Journal on Computing and Sustainable Societies (in press) and gave a demo at the CHI 2023 conference 25.

8.11 Handling Non-Visible Referents in Situated Visualizations

Participants: Ambre Assor, Arnaud Prouzeau, Martin Hachet, Pierre Dragicevic.

Situated visualizations are a type of visualization where data is presented next to its physical referent (i.e., the physical object, space, or person it refers to), often using augmented-reality displays. While situated visualizations can be beneficial in various contexts and have received research attention, they are typically designed with the assumption that the physical referent is visible. However, in practice, a physical referent may be obscured by another object, such as a wall, or may be outside the user's visual field. In a paper published in the Transactions on Visualization & Computer Graphics (TVCG) 16, we proposed a conceptual framework and a design space to help researchers and user interface designers handle non-visible referents in situated visualizations. We first provided an overview of techniques proposed in the past for dealing with non-visible objects in the areas of 3D user interfaces, 3D visualization, and mixed reality. From this overview, we derived a design space that applies to situated visualizations and employed it to examine various trade-offs, challenges, and opportunities for future research in this area.

8.12 Designing Resource Allocation Tools to Promote Fair Allocation: Do Visualization and Information Framing Matter?

Participants: Pierre Dragicevic.

External collaborators: Arnav Verma, Luiz Morais, Fanny Chevalier.

Studies on human decision-making focused on humanitarian aid have found that cognitive biases can hinder the fair allocation of resources. However, few HCI and Information Visualization studies have explored ways to overcome those cognitive biases. This work published at the CHI 2023 Conference on Human Factors in Computing Systems 39 investigates whether the design of interactive resource allocation tools can help to promote allocation fairness. We specifically studied the effect of presentation format (using text or visualization) and a specific framing strategy (showing resources allocated to groups or individuals). In our three crowdsourced experiments, we provided different tool designs to split money between two fictional programs that benefit two distinct communities. Our main finding indicated that individual-framed visualizations and text may be able to curb unfair allocations caused by group-framed designs. This work opens new perspectives that can motivate research on how interactive tools and visualizations can be engineered to combat cognitive biases that lead to inequitable decisions.

8.13 Decoupling Judgment and Decision Making: A Tale of Two Tails

Participants: Pierre Dragicevic.

External collaborators: Başak Oral, Alexandru Telea, Evanthia Dimara.

Is it true that if citizens understand hurricane probabilities, they will make more rational decisions for evacuation? Finding answers to such questions is not straightforward in the literature because the terms “judgment” and “decision making” are often used interchangeably. This terminology conflation leads to a lack of clarity on whether people make suboptimal decisions because of inaccurate judgments of information conveyed in visualizations or because they use alternative yet currently unknown heuristics. To decouple judgment from decision making, we reviewed relevant concepts from the literature and presented two preregistered experiments (N=601) to investigate if the task (judgment vs. decision making), the scenario (sports vs. humanitarian), and the visualization (quantile dotplots, density plots, probability bars) affect accuracy. While experiment 1 was inconclusive, we found evidence for a difference in experiment 2. Contrary to our expectations and previous research, which found decisions less accurate than their direct-equivalent judgments, our results pointed in the opposite direction. Our findings further revealed that decisions were less vulnerable to status-quo bias, suggesting decision makers may disfavor responses associated with inaction. We also found that both scenario and visualization types can influence people's judgments and decisions. Although effect sizes are not large and results should be interpreted carefully, we concluded that judgments cannot be safely used as proxy tasks for decision making, and discuss implications for visualization research and beyond. This work was published in the IEEE Transactions on Visualization and Computer Graphics 20. Materials and preregistrations are available at osf.io/ufzp5/.

8.14 Zooids (book section)

Participants: Pierre Dragicevic.

External collaborators: Mathieu Le Goc, Charles Perin, Sean Follmer, Jean-Daniel Fekete.

Physical data visualizations tap into our lifelong experience of perceiving and manipulating the physical world, either alone or with other people. However, most physical visualizations are either monolithic and static, or require human intervention to be rearranged. Back in 2018, we drew inspiration from existing physical interactive systems and data storytelling practices with physical tokens to develop Zooids, tiny wheeled robots that can move rapidly on any horizontal surface. We used Zooids to explore physical data visualizations that can (1) be manipulated by humans, and (2) update themselves through computerized mechanisms. In 2023, we wrote a section 43 in the book Making with Data: Physical Design and Craft in a Data-Driven World to explain our design process.

8.15 Small Data – Visualizing Simple Datasets for Communication and Decision Making (HDR)

Participants: Pierre Dragicevic.

In 2023, Pierre Dragicevic defended his habilitation thesis 44. This habilitation thesis presents research projects that explore how data visualizations can be used to communicate quantitative facts and support decisions. The thesis identifies the umbrella topic of “small data” as the common denominator for these projects, as they all involve datasets that are smaller than what is typically found in the information visualization literature. Although much of this literature focuses on large datasets, this thesis shows that we often do not know how to best visualize even small datasets, and that interesting research questions can also arise from such datasets.

The first part of this thesis focuses on supporting rational judgments and decisions with data visualizations. It examines whether data visualization can improve reasoning with base rates, it looks at whether a cognitive bias called the attraction effect transfers to data visualizations, and discusses how to generally evaluate data visualizations for decision making.

The second part of this thesis discusses how to support effective communication with data visualizations. It begins by exploring different ways in which researchers can communicate their data to their peers: first through tabular visualizations, and then through multiverse analyses. It then reports on two studies examining how to communicate data to large audiences: a replication study on whether trivial charts can hinder truthful communication, and a study on how to convey data on humanitarian issues.

Finally, a concluding chapter brings together several of the research problems discussed here by offering perspectives on how data visualization can support rational decision making and effective communication on humanitarian issues. It discusses how the effective altruism movement can inform research in this area, and how immersive displays may be used to connect people to invisible or distant suffering.

8.16 Impact of the baseline temporal selection on the ERD/ERS analysis for Motor Imagery-based BCI

Participants: Sébastien Rimbert, David Trocellier, Fabien Lotte.

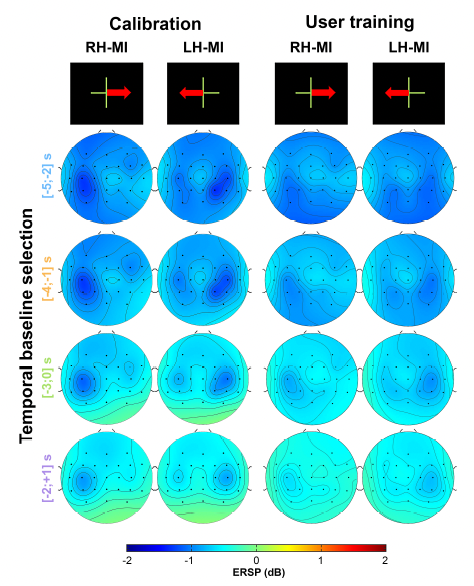

Motor Imagery-based Brain-Computer Interfaces (MI-BCIs) are neurotechnologies that exploit the modulation of sensorimotor rhythms over the motor cortices, respectively known as Event-Related Desynchronization (ERD) and Synchronization (ERS). The interpretation of ERD/ERS is directly related to the selection of the baseline used to estimate them, and might result in a misleading ERD/ERS visualization. In fact, in BCI paradigms, if two trials are separated by a few seconds, taking a baseline close to the end of the previous trial could result in an over-estimation of the ERD, while taking a baseline too close to the upcoming trial could result in an underestimation of the ERD. This phenomenon may cause a functional misinterpretation of the ERD/ERS phenomena in MI-BCI studies. This may also impair BCI performances for MI vs Rest classification, since such baselines are often used as resting states. In this paper, we propose to investigate the effect of several baseline time window selections on ERD/ERS modulations and BCI performances. Our results show that considering the selected temporal baseline effect is essential to analyze the modulations of ERD/ERS during MI-BCI use 37.
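The dependence of ERD/ERS on the baseline window can be illustrated with a minimal sketch. It assumes the classical relative-power definition ERD/ERS% = (A - R) / R x 100, where R is the band power in the baseline window and A the band power during the task. The toy signal, the sampling rate `FS`, the window boundaries and all function names are hypothetical and chosen only for illustration; this is not the analysis pipeline used in the study.

```python
from statistics import mean

FS = 10  # toy sampling rate in Hz (assumption, not from the study)


def band_power(samples):
    """Mean squared amplitude of an (already band-pass filtered) segment."""
    return mean(x * x for x in samples)


def erd_ers_percent(signal, fs, baseline, task):
    """Relative power change (A - R) / R * 100.

    R is the power in the baseline window, A the power in the task window.
    Negative values correspond to ERD, positive values to ERS.
    """
    def segment(window):
        start, stop = window
        return signal[int(start * fs):int(stop * fs)]

    r = band_power(segment(baseline))
    a = band_power(segment(task))
    return (a - r) / r * 100.0


def toy_trial(fs):
    """Hypothetical trial: rebound of the previous trial, rest, anticipation, MI."""
    phases = [(2, 1.5), (2, 1.0), (1, 0.8), (3, 0.5)]  # (duration in s, amplitude)
    signal = []
    for duration, amplitude in phases:
        for i in range(duration * fs):
            # Alternating sign gives a segment whose power is amplitude squared.
            signal.append(amplitude if i % 2 == 0 else -amplitude)
    return signal


signal = toy_trial(FS)
TASK = (5, 8)  # motor imagery window, in seconds
BASELINES = {
    "after_previous_trial": (0, 2),  # still contains the post-trial rebound
    "mid_rest": (2, 4),              # resting state
    "before_cue": (4, 5),            # power already decreasing before the task
}
erd = {name: erd_ers_percent(signal, FS, window, TASK)
       for name, window in BASELINES.items()}
for name, value in erd.items():
    print(f"{name}: {value:.1f}%")
```

In this toy setting, the same task segment yields a stronger apparent ERD with the early baseline and a weaker one with the late baseline, which is the effect described above.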

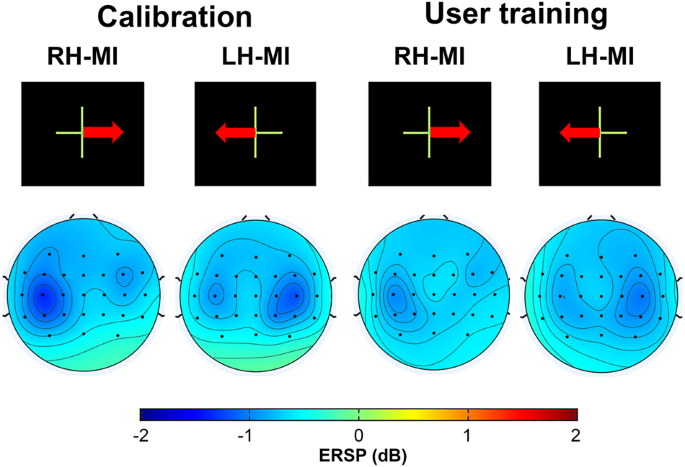

This figure shows ERD patterns during both left- and right-hand motor imagery, for the calibration and user training phases and for different baselines. It shows clear ERD over the contralateral motor cortices during MI.

Topographic map of ERD/ERS% (grand average, n=71) in the alpha/mu+beta band during the right-hand and left-hand MIs for both calibration and user training sessions for different baselines. A blue colour corresponds to a strong ERD and a red one to a strong ERS. Red electrodes indicate a significant difference (p<0.01) with an FDR (False Discovery Rate) correction.

8.17 Long-term kinesthetic motor imagery practice with a BCI: Impacts on user experience, motor cortex oscillations and BCI performances

Participants: Sébastien Rimbert.

External collaborators: Stéphanie Fleck [Univ. Lorraine].

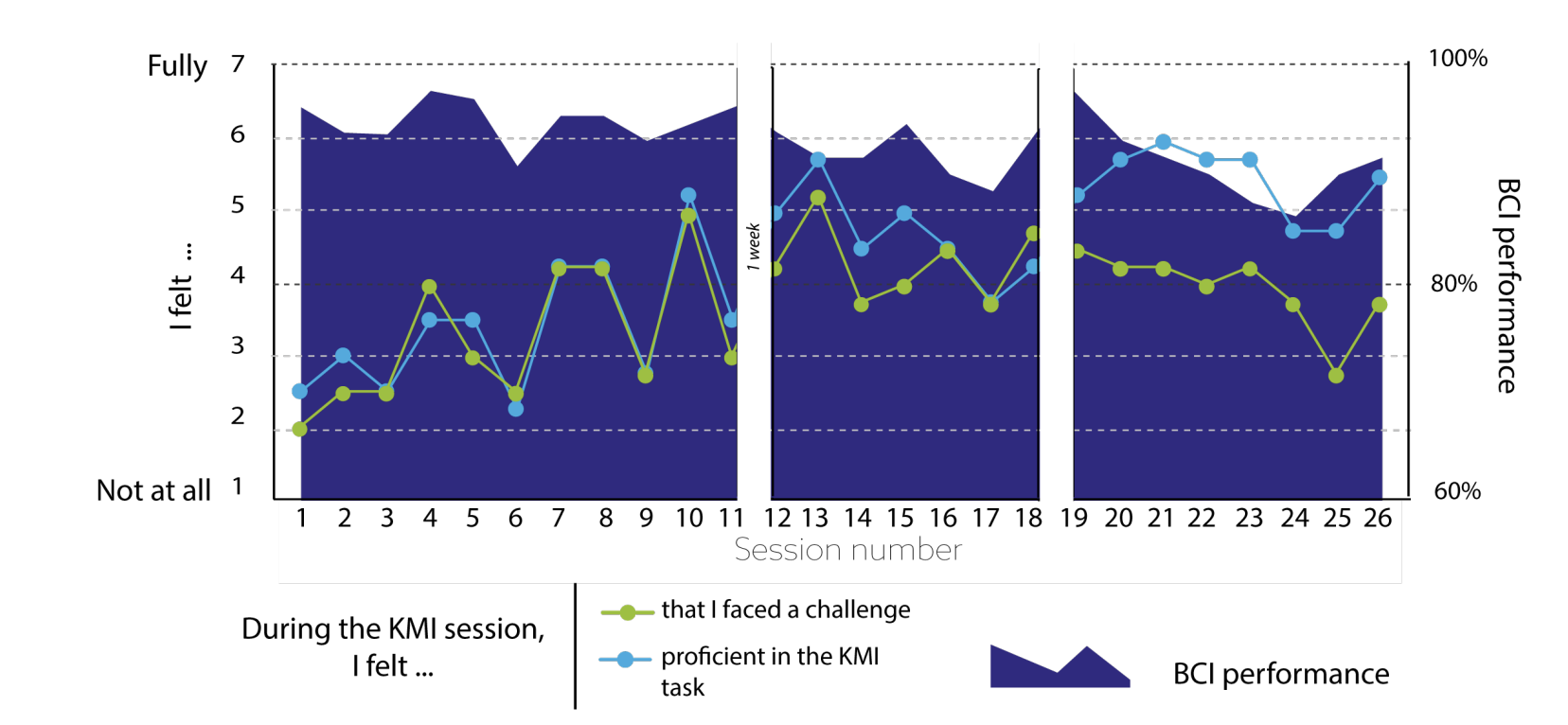

Kinesthetic motor imagery (KMI) generates specific brain patterns in sensorimotor rhythm over the motor cortex (called event-related (de)-synchronization, ERD/ERS), allowing KMI to be detected by a Brain-Computer Interface (BCI) through electroencephalographic (EEG) signals. Because performing a KMI task stimulates synaptic plasticity, KMI-based BCIs hold promise for many applications requiring long-term KMI practice (e.g., sports training or post-stroke rehabilitation). However, there is a lack of studies on long-term KMI-based BCI interactions, especially regarding the relationship between intrapersonal factors and motor pattern variations. This pilot study aims to better understand (i) how brain motor patterns change over time for a given individual, (ii) whether intrapersonal factors might influence BCI practice, and (iii) how the brain motor patterns underlying the KMI task (i.e., ERDs and ERSs) are modulated over time by the BCI user's experience. To this end, we recruited an expert in this mental task who performed over 2080 KMIs in 26 different sessions over a period of five months. The originality of this study lies in the detailed examination of cross-referenced data from EEG signals, BCI performance data, and 13 different surveys. The results show that this repetitive and prolonged practice did not diminish his well-being and, in particular, generated a sense of automatization of the task. We observed a progressive attenuation of the ERD amplitude and a concentration in the motor areas as the sessions accumulated. All these elements point to a phenomenon of neural efficiency. If confirmed by other studies, this phenomenon could call into question the quality of the BCI in providing continuous stimulation to the user. In addition, the results of this pilot study provide insights into what might influence the response of the motor cortex (e.g. emotions, task control, diet, etc.) and promising opportunities for improving the instructional design of BCIs intended for long-term use 21.

This Figure shows a fluctuating accuracy across sessions (but no clear learning effect), a globally increasing feeling of KMI proficiency, and an increasing then decreasing feeling of challenge.

Diagram describing the BCI performance of accuracy (TS+LR method) and the feeling of the KMI task proficiency and of being faced with a challenge for all sessions.

8.18 Detection of Motor Cerebral Activity During General Anesthesia

Participants: Sebastien Rimbert, Fabien Lotte, Valérie Marissens.

External collaborators: Claude Mestelman [CHRU Brabois], Laurent Bougrain [Univ. Lorraine], Denis Schmartz [Erasme Hospital], Ana Maria Cebolla [Univ. Bruxelles], Guy Cheron [Univ. Bruxelles], Seyed Javad Bidgoli [CHRU Brugmann].

8.18.1 Detection of Motor Cerebral Activity After Median Nerve Stimulation During General Anesthesia (STIM-MOTANA): Protocol for a Prospective Interventional Study

Background: Accidental awareness during general anesthesia (AAGA) is defined as an unexpected awareness of the patient during general anesthesia. This phenomenon occurs in 1%-2% of high-risk practice patients and can cause physical suffering and psychological after-effects known as posttraumatic stress disorder. Currently, no monitoring technique is satisfactory enough to effectively prevent AAGA; therefore, new alternatives are needed. Because the first reflex of a patient during AAGA is to move, which neuromuscular blockers prevent, we believe that it is possible to design a brain-computer interface (BCI) based on the detection of movement intention to warn the anesthetist. To do this, we propose to describe and detect the changes in motor cortex oscillations during general anesthesia with propofol, while a median nerve stimulation is performed. We believe that our results could enable the design of a BCI based on median nerve stimulation, which could prevent AAGA.

Objective: To our knowledge, no published studies have investigated the detection of electroencephalographic (EEG) patterns in relation to peripheral nerve stimulation over the sensorimotor cortex during general anesthesia. The main objective of this study is to describe the changes in event-related desynchronization and event-related synchronization modulations in the EEG signal over the motor cortex during general anesthesia with propofol while a median nerve stimulation is performed 22.

8.18.2 Detection of Cerebral Electroencephalographic Patterns After Median Nerve Stimulation During Propofol-Induced General Anesthesia: a Prospective Interventional Cohort Study

Devices used to assess depth of anesthesia and clinical parameters may not be sufficient to prevent intraoperative accidental awareness. An alternative would be to detect the patient's intention to move in order to alert the medical staff. We believe that the data obtained after multiple median nerve stimulation (MNS) during general anesthesia will help us to prevent intraoperative awareness. In this prospective, interventional trial, 30 volunteers aged from 18 to 81 years with informed consent will be enrolled for scheduled surgery from 15th January 2023 to 31st December 2026. This study is approved by the CHU Brugmann ethical committee (CE 2021/225) and is registered at EUDRACT (2021-006457-56) and ClinicalTrials.gov (NCT05272202). EEG data based on MNS is first recorded with the patient awake, then a second recording is made under general anesthesia during the surgery. MNS is delivered through electrodes placed on the wrist. Our intermediate results (n=4 curarized patients) show an ERS in the mu/beta frequency band after MNS before general anesthesia. After propofol induction, the post-stimulation ERS disappears significantly. In these preliminary data, the ERD/ERS following MNS seems to disappear at high concentrations of propofol, contrary to previous studies at lower levels2. Therefore, this study is not conclusive in terms of the ERD/ERS patterns used, but other EEG features (i.e., brain connectivity, somatosensory evoked potential) could be investigated and will be the subject of future research 52.

8.19 Improving motor imagery detection with a BCI based on somesthetic non invasive stimulations

Participants: Sebastien Rimbert.

One of the most prominent BCI types of interaction is Motor Imagery (MI)-based BCI. Users control a system by performing MI tasks, e.g., imagining hand/foot movements detected from EEG signals. Indeed, movements and imagination of movements activate similar neural networks, enabling the MI-based BCI to exploit the modulations known as Event-Related Desynchronization (ERD) and Event-Related Synchronization (ERS). However, two important challenges remain before using such MI-BCIs on a large scale: (i) detecting the MI of the user without any temporal markers for instructions (often given by sound or visual cues) and (ii) achieving sufficient accuracy (>80%) to ensure the reliability of a BCI device that could be used by the participants. We presented this work at the BCI meeting 2023 51.

8.20 DUPE MIBCI: database with user's profile and EEG signals for motor imagery brain computer interface research

Participants: Pauline Dreyer, Aline Roc, Camille Benaroch, Thibaut Monseigne, Sebastien Rimbert, Fabien Lotte.

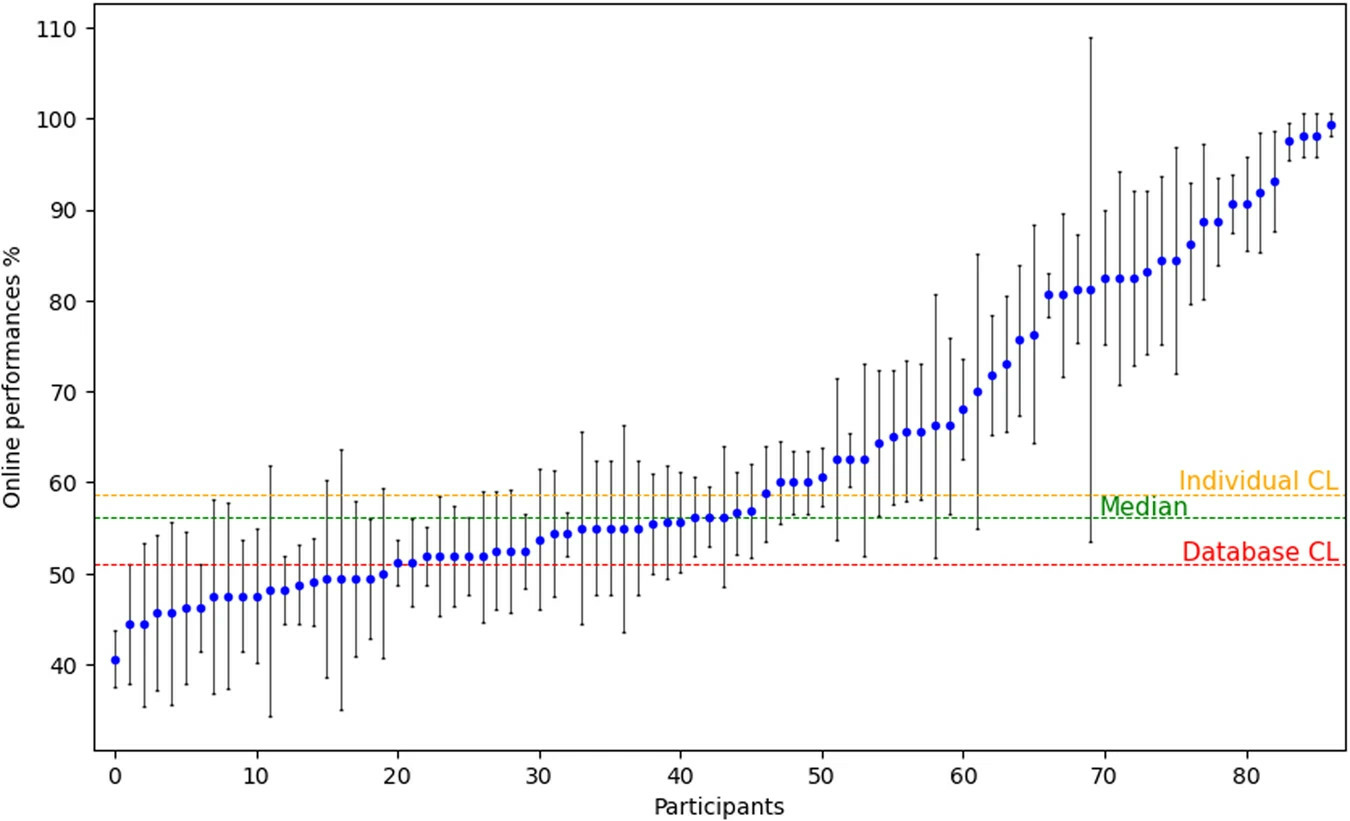

The creation of a large BCI database, published on Zenodo on January 9, 2023, led to an article 19 published in Scientific Data by Nature on September 5, 2023. The database contains electroencephalographic signals from 87 human participants, associated BCI user performances, detailed information on demographics, personality profile and cognitive traits, as well as experimental instructions and codes. The article presents the database and explains 1) the experimental protocols, 2) the usefulness of the database for different studies, and 3) the technical validation of the database. Indeed, our results show consistency with the literature, both in terms of classification performance for right-left discrimination (see Fig. 11) and in terms of ERSP variation. During the motor imagery tasks, there is an activation of the motor cortex with contralateral ERD (see Fig. 12).

A figure showing the accuracy obtained by all participants as a scatter plot, with accuracies ranging from chance level to over 90%.

A figure displaying EEG topographies showing the expected contralateral mu and beta desynchronisation during left and right hand motor imagery.

Such a database could prove useful for various studies, including but not limited to: (1) studying the relationships between BCI users' profiles and their BCI performances, (2) studying how EEG signal properties vary for different users' profiles and MI tasks, (3) using the large number of participants to design cross-user BCI machine learning algorithms or (4) incorporating users' profile information into the design of EEG signal classification algorithms.

8.21 Towards curating personalized art exhibitions in Virtual Reality with multimodal Electroencephalography-based Brain-Computer-Interfaces

Participants: Marc Welter, Axel Bouneau, Jonathan Baum, Ines Audinet, Fabien Lotte.

The EEG correlates of art preference are not yet well understood scientifically. We contributed a literature review on the oscillatory EEG correlates of art preference that was presented as posters at the BCI Society Meeting and in Torun, and submitted for publication in the journal Frontiers in Neuroergonomics. We also developed a novel experimental protocol to measure a subject's aesthetic experience in a virtual museum environment with EEG, GSR and PPG. To this end, we also established a data set of diverse visual art works that will be released in the public domain. This work was presented as a talk at CORTICO2023 and at the BCI Society Meeting 2023 in a masterclass 56. We then collected physiological data and subjective preference ratings from 20 healthy adult subjects. Preliminary data analysis suggests that, at least for some persons, preference for static visual art correlates positively with an increase of activity in the EEG alpha frequency band measured by occipital electrodes located above the visual cortex.

8.22 Independent linear discriminant analysis: The first step in including covariates in EEG classification

Participants: David Trocellier, Fabien Lotte.

The classification algorithms usually used in BCI are based on linear discriminant analysis (LDA). Despite being the state-of-the-art online classification algorithm in our field, LDA is known not to be robust to neuronal changes that can appear during BCI use, such as those related to fatigue or anxiety. To address these limitations, we developed a modification of the LDA algorithm, which we called invariant LDA (iLDA). This new model aims to be robust to covariates known to influence BCI control. It showed significant improvements in classification accuracy on simulated data. However, so far, it has not yet demonstrated improvements in classification performance on real EEG data for the covariates we tested (fatigue, anxiety, motivation, attention). This work has been presented at national and international conferences 55.
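As a point of reference, the sketch below shows a standard two-class LDA (not the iLDA variant, whose formulation is not given here) and how its accuracy can drop when the test features drift. The drift is modelled, purely as an assumption, by a constant offset `shift` added to the features, standing in for a covariate such as fatigue. The simulated data and all names are hypothetical.

```python
import numpy as np


def fit_lda(X, y):
    """Two-class LDA with a pooled covariance matrix and equal priors."""
    X0, X1 = X[y == 0], X[y == 1]
    mu0, mu1 = X0.mean(axis=0), X1.mean(axis=0)
    centered = np.vstack([X0 - mu0, X1 - mu1])
    cov = centered.T @ centered / (len(X) - 2)
    w = np.linalg.solve(cov, mu1 - mu0)   # projection vector
    b = -0.5 * w @ (mu0 + mu1)            # bias term for equal priors
    return w, b


def predict_lda(w, b, X):
    """Assign class 1 when the linear score is positive, class 0 otherwise."""
    return (X @ w + b > 0).astype(int)


def simulate(rng, n):
    """Toy two-class features: Gaussian clouds centred at (-1, 0) and (1, 0)."""
    X = np.vstack([rng.normal([-1.0, 0.0], 1.0, (n, 2)),
                   rng.normal([1.0, 0.0], 1.0, (n, 2))])
    y = np.repeat([0, 1], n)
    return X, y


rng = np.random.default_rng(0)
X_train, y_train = simulate(rng, 200)
X_test, y_test = simulate(rng, 200)
w, b = fit_lda(X_train, y_train)

# Hypothetical covariate effect: a constant drift of the feature distribution.
shift = np.array([1.5, 0.0])
acc_clean = np.mean(predict_lda(w, b, X_test) == y_test)
acc_shifted = np.mean(predict_lda(w, b, X_test + shift) == y_test)
print(f"accuracy without drift: {acc_clean:.2f}, with drift: {acc_shifted:.2f}")
```

Because the decision boundary is fixed after training, any drift that moves both classes in the same direction pushes part of one class across the boundary, which is the kind of sensitivity a covariate-aware classifier would aim to reduce.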

8.23 Riemannian ElectroCardioGraphic signal classification

Participants: Fabien Lotte.

External collaborators: Aurelien Appriou [Flit Sport].

Estimating mental states such as cognitive workload from ElectroCardioGraphic (ECG) signals is a key but challenging step for many fields such as ergonomics, physiological computing, medical diagnostics or sport training. So far, the most commonly used machine learning algorithms to do so are linear classifiers such as Support Vector Machines (SVMs), often resulting in modest classification accuracies. Meanwhile, Riemannian Geometry-based Classifiers (RGC), and more particularly the Tangent Space Classifiers (TSC), have recently been shown to lead to state-of-the-art performances for ElectroEncephaloGraphic (EEG) signal classification. Yet RGCs have never been explored for classifying ECG signals. Therefore, in this paper we design the first Riemannian geometry-based TSC for ECG signals, evaluate it for classifying two levels of cognitive workload, i.e., low versus high workload, and compare its results to the ones obtained using an algorithm that is commonly used in the literature: the SVM. Our results indicated that the proposed ECG-TSC significantly outperformed an ECG-SVM classifier when using 6, 10, 20, 30 and 40-second time windows, suggesting an optimal time window length of 120 seconds (65.3% classification accuracy for the TSC, 57.8% for the SVM). Altogether, our results showed the value of RGCs to process ECG signals, opening the door to many other promising ECG classification applications. This work was published as a conference paper in the 4th Latin-American Workshop on Computational Neuroscience 24.
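The core step of a tangent space classifier can be sketched as follows: each symmetric positive definite (SPD) matrix is projected onto the tangent space at a reference matrix and vectorized, after which any linear classifier can be applied to the resulting vectors. This is a generic illustration under stated assumptions, not the ECG-TSC pipeline of the paper: how the SPD matrices are built from ECG signals is not described here, the reference point is a log-Euclidean mean (a simplification; the Riemannian mean is another common choice), and all function names are hypothetical.

```python
import numpy as np


def _apply_to_eigenvalues(C, fun):
    """Apply a scalar function to the eigenvalues of a symmetric matrix."""
    vals, vecs = np.linalg.eigh(C)
    return (vecs * fun(vals)) @ vecs.T


def logm_spd(C):
    return _apply_to_eigenvalues(C, np.log)


def expm_sym(S):
    return _apply_to_eigenvalues(S, np.exp)


def inv_sqrtm_spd(C):
    return _apply_to_eigenvalues(C, lambda v: 1.0 / np.sqrt(v))


def log_euclidean_mean(covs):
    """Reference point: matrix exponential of the mean of the matrix logarithms."""
    return expm_sym(np.mean([logm_spd(C) for C in covs], axis=0))


def tangent_vector(C, C_ref):
    """Project C onto the tangent space at C_ref and vectorize it.

    Off-diagonal terms are scaled by sqrt(2) so that the Euclidean norm of the
    vector equals the Frobenius norm of the projected matrix.
    """
    P = inv_sqrtm_spd(C_ref)
    S = logm_spd(P @ C @ P)
    rows, cols = np.triu_indices_from(S)
    weights = np.where(rows == cols, 1.0, np.sqrt(2.0))
    return S[rows, cols] * weights


# Toy SPD matrices standing in for per-window covariance estimates.
rng = np.random.default_rng(0)
covs = []
for _ in range(5):
    A = rng.normal(size=(3, 50))
    covs.append(A @ A.T / 50)

C_ref = log_euclidean_mean(covs)
features = np.array([tangent_vector(C, C_ref) for C in covs])
print(features.shape)
```

Each row of `features` is a Euclidean vector of length n(n+1)/2 for n x n matrices, so standard classifiers such as LDA or an SVM can be trained on it directly.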

8.24 Novel SPD matrix representations considering cross-frequency coupling for EEG classification using Riemannian geometry

Participants: Fabien Lotte.

External collaborators: Maria Sayu Yamamoto [Univ. Paris-Saclay], Apolline Mellot [Univ. Paris-Saclay], Sylvain Chevallier [Univ. Paris-Saclay].

Accurate classification of cognitive states from Electroencephalographic (EEG) signals is crucial in neuroscience applications such as Brain-Computer Interfaces (BCIs). Classification pipelines based on Riemannian geometry are often state-of-the-art in the BCI field. In this type of BCI, covariance matrices based on EEG signals of independent frequency bands are used as classification features. However, there is significant neuroscience evidence of neural interactions across frequency bands, such as cross-frequency coupling (CFC). Therefore, in this paper, we propose novel symmetric positive definite (SPD) matrix representations considering CFC for Riemannian geometry-based EEG classification. The spatial interactions of phase and amplitude within and between frequency bands are described in three different CFC SPD matrices. This allows us to include additional discriminative neurophysiological features that are not available in the conventional Riemannian EEG features. Our method was evaluated using a mental workload classification task from a public passive BCI dataset. Our fused model of the three CFC covariance matrices showed statistically significant improvements in average classification accuracies from the conventional covariance matrix in the theta and alpha bands by 18.32% and in the beta and gamma bands by 4.34% with smaller standard deviations. This result confirmed the effectiveness of considering more diverse neurophysiological interactions within and between frequency bands for Riemannian EEG classification. This work was published at the EUSIPCO 2023 conference 41.

8.25 Modeling complex EEG data distribution on the Riemannian manifold toward outlier detection and multimodal classification

Participants: Fabien Lotte.

External collaborators: Maria Sayu Yamamoto [Univ. Paris-Saclay], Yuichi Tanaka, Toshihisa Tanaka, Frédéric Dehais, Md Rabiul Islam, Khadijeh Sadatnejad.

The usage of Riemannian geometry for Brain-computer interfaces (BCIs) has gained momentum in recent years. Most of the machine learning techniques proposed for Riemannian BCIs consider the data distribution on a manifold to be unimodal. However, the distribution is likely to be multimodal rather than unimodal since high data variability is a crucial limitation of electroencephalography (EEG). In this paper, we propose a novel data modeling method for considering complex data distributions on a Riemannian manifold of EEG covariance matrices, aiming to improve BCI reliability. Our method, Riemannian spectral clustering (RiSC), represents the EEG covariance matrix distribution on a manifold using a graph with a proposed similarity measurement based on geodesic distances, then clusters the graph nodes through spectral clustering. This allows flexibility to model both a unimodal and a multimodal distribution on a manifold. RiSC can be used as a basis to design an outlier detector named outlier detection Riemannian spectral clustering (odenRiSC) and a multimodal classifier named multimodal classifier Riemannian spectral clustering (mcRiSC). All required parameters of odenRiSC/mcRiSC are selected in a data-driven manner. Moreover, there is no need to pre-set a threshold for outlier detection or the number of modes for multimodal classification. The experimental evaluation revealed that odenRiSC can detect EEG outliers more accurately than existing methods and that mcRiSC outperformed the standard unimodal classifier, especially on high-variability datasets. odenRiSC/mcRiSC are anticipated to contribute to making real-life BCIs outside labs and neuroergonomics applications more robust. This work was published in the journal IEEE Transactions on Biomedical Engineering 23.
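The general idea of clustering covariance matrices through a geodesic-distance graph can be sketched as follows. This is not the RiSC implementation: it assumes the affine-invariant Riemannian distance, a Gaussian similarity whose width is the median pairwise distance, and a fixed split into two clusters using the second eigenvector of the normalized graph Laplacian, whereas the method described above selects its parameters and the number of modes in a data-driven way. All names and the toy data are hypothetical.

```python
import numpy as np


def airm_distance(A, B):
    """Affine-invariant Riemannian distance between two SPD matrices."""
    L_inv = np.linalg.inv(np.linalg.cholesky(A))
    # Eigenvalues of L^-1 B L^-T are those of A^-1 B and are real and positive.
    eigvals = np.linalg.eigvalsh(L_inv @ B @ L_inv.T)
    return float(np.sqrt(np.sum(np.log(eigvals) ** 2)))


def pairwise_distances(covs):
    n = len(covs)
    D = np.zeros((n, n))
    for i in range(n):
        for j in range(i + 1, n):
            D[i, j] = D[j, i] = airm_distance(covs[i], covs[j])
    return D


def two_way_spectral_clustering(D):
    """Split graph nodes in two using the sign of the Fiedler vector."""
    sigma = np.median(D[np.triu_indices_from(D, k=1)])  # assumed kernel width
    W = np.exp(-D ** 2 / (2 * sigma ** 2))               # similarity graph
    d_inv_sqrt = 1.0 / np.sqrt(W.sum(axis=1))
    L_sym = np.eye(len(W)) - d_inv_sqrt[:, None] * W * d_inv_sqrt[None, :]
    _, vecs = np.linalg.eigh(L_sym)                      # ascending eigenvalues
    return (vecs[:, 1] > 0).astype(int)


def random_spd_near(diag_vals, rng):
    """Toy SPD matrix: a diagonal 3x3 matrix with a small symmetric perturbation."""
    half = np.diag(np.sqrt(diag_vals))
    E = rng.uniform(-0.1, 0.1, (3, 3))
    return half @ (np.eye(3) + (E + E.T) / 2) @ half


rng = np.random.default_rng(0)
covs = [random_spd_near([1.0, 1.0, 1.0], rng) for _ in range(10)]
covs += [random_spd_near([8.0, 1.0, 0.2], rng) for _ in range(10)]
labels = two_way_spectral_clustering(pairwise_distances(covs))
print(labels)
```

On this toy data the two groups of matrices form two modes on the manifold, and the graph-based split recovers them without any Euclidean assumption on the matrices themselves.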

9 Bilateral contracts and grants with industry

9.1 Bilateral contracts with industry

SNCF - Cifre:

Participants: Maudeline Marlier, Arnaud Prouzeau, Martin Hachet.

- Duration: 2022-2025

- Local coordinator: Arnaud Prouzeau

- We started a collaboration with SNCF around the PhD thesis (Cifre) of Maudeline Marlier. The objective is to rethink railway control rooms with interactive tabletop projections.

10 Partnerships and cooperations

10.1 International research visitors

10.1.1 Visits to international teams

Research stays abroad

Ambre Assor

- Visited institution: École de technologie supérieure (ETS) Montréal

- Country: Canada

- Dates: 01/06/2023 – 01/09/2023

- Context of the visit: Research visit with Michael McGuffin to work on transition between abstract and situated visualisation in augmented reality.

- Mobility program/type of mobility: Research stay cofinanced by the LaBRI AAP Générique and the ANR Ember project.

Morgane Koval

- Visited institution: University of Toronto

- Country: Canada

- Dates: 26/06/2023 – 14/08/2023

- Context of the visit: Research visit with Fanny Chevalier to work on visualizing travel uncertainty on maps.

- Mobility program/type of mobility: Research stay cofinanced by the LaBRI AAP Générique and the ANR Ember project.

Fabien Lotte & Marc Welter

- Visited institution: Tokyo University of Agriculture and Technology (TUAT)

- Country: Japan

- Dates: 10/08/2023 – 28/08/2023

- Context of the visit: Research stay to collaborate on Brain-Computer Interfaces in Prof. Toshihisa Tanaka's laboratory at TUAT. F. Lotte was a visiting professor there, and M. Welter a visiting PhD student.

- Mobility program/type of mobility: Research stay financed by the TUAT GIR (Global Innovation Research) program.

David Trocellier

- Visited institution: Nanyang Technological University (NTU)

- Country: Singapore

- Dates: 01/10/2023 – 31/12/2023

- Context of the visit: Visit to the laboratory of Cuntai Guan (CBCR) to discover one of the leading deep learning teams in the field of BCI and to work on the development of new classification algorithms robust to factors known to have an influence on BCI control performance.

- Mobility program/type of mobility: Research stay financed by the "Académie Française" and the "collège des écoles doctorales" of Univ. Bordeaux.

10.2 European initiatives

10.2.1 Horizon Europe

CHIST-ERA Project BITSCOPE

Participants: Marc Welter, Axel Bouneau, Fabien Lotte, Jonathan Baum.

- Title: BITSCOPE: Brain Integrated Tagging for Socially Curated Online Personalised Experiences

- Duration: 2022-2025

- Partners: Dublin City (Ireland), Univ. Polytechnic Valencia (Spain), Univ. Nicolas Copernicus (Poland), Inria (France)

- Coordinator: Tomas Ward

- This project presents a vision for brain computer interfaces (BCI) which can enhance social relationships in the context of sharing virtual experiences. In particular we propose BITSCOPE, that is, Brain-Integrated Tagging for Socially Curated Online Personalised Experiences. We envisage a future in which attention, memorability and curiosity elicited in virtual worlds will be measured without the requirement of “likes” and other explicit forms of feedback. Instead, users of our improved BCI technology can explore online experiences leaving behind an invisible trail of neural data-derived signatures of interest. This data, passively collected without interrupting the user, and refined in quality through machine learning, can be used by standard social sharing algorithms such as recommender systems to create better experiences. Technically the work concerns the development of a passive hybrid BCI (phBCI). It is hybrid because it augments electroencephalography with eye tracking data, galvanic skin response, heart rate and movement in order to better estimate the mental state of the user. It is passive because it operates covertly without distracting the user from their immersion in their online experience and uses this information to adapt the application. It represents a significant improvement in BCI due to the emphasis on improved denoising facilitating operation in home environments and the development of robust classifiers capable of taking inter- and intra-subject variations into account. We leverage our preliminary work in the use of deep learning and geometrical approaches to achieve this improvement in signal quality. The user state classification problem is ambitiously advanced to include recognition of attention, curiosity, and memorability which we will address through advanced machine learning, Riemannian approaches and the collection of large representative datasets in co-designed user centred experiments.

Inria-DFKI NEARBY

Participants: Fabien Lotte, Sebastien Rimbert.

- Title: Noise and Variability Free Brain-Computer Interfaces

- Duration: 2023-2027

- Partners: Inria Bordeaux (Potioc & Mnemosyne), DFKI Saarbrucken, DFKI Bremen

- Coordinators: Fabien Lotte & Maurice Rekrut

- While Brain-Computer Interfaces (BCI) are promising for many applications, e.g., assistive technologies, man-machine teaming or motor rehabilitation, they are barely used out-of-the-lab due to poor reliability. Electroencephalographic (EEG) brain signals are indeed very noisy and variable, both between and within users. To address these issues, we first propose to join Inria and DFKI forces to build large-scale multicentric and open EEG-BCI databases, with controlled noise and variability sources, for BCIs based on motor and speech activity. Building on this data we will then design new Artificial Intelligence algorithms, notably based on Deep Learning, dedicated to EEG denoising and variability-robust EEG-decoding. Such algorithms will be implemented both on open-source software as well as FPGA hardware, and then demonstrated in two out-of-the-lab BCI applications: Human-Robot collaboration and exoskeleton control.

10.3 National initiatives

ANR Project BeAware

Participants: Martin Hachet, Yvonne Jansen, Pierre Dragicevic, Arnaud Prouzeau, Fabien Lotte, Aymeric Ferron, Ambre Assor.

- Duration: 2023-2026

- Partners: CIRED, LESSAC-BSB

- Coordinator: Martin Hachet

- BeAware explores how augmented reality (AR) systems can reduce the spatial and temporal distance between people’s choices and their environmental impact. We design interactive visualizations that integrate concrete environmental consequences (e.g. waste accumulation, rare earth mining) directly into people’s surroundings. This interdisciplinary research will be informed and validated by incentivized and controlled behavioral economics experiments based on game-theoretical models, and will be guided by real environmental data and scenarios.

- website: BeAware

ANR Project EMBER

Participants: Pierre Dragicevic, Martin Hachet, Yvonne Jansen, Arnaud Prouzeau, Ambre Assor.

- Duration: 2020-2024

- Partners: Inria/AVIZ, Sorbonne Université

- Coordinator: Pierre Dragicevic

- The goal of the project is to study how embedding data into the physical world can help people to get insights into their own data. While the vast majority of data analysis and visualization takes place on desktop computers located far from the objects or locations the data refers to, in situated and embedded data visualizations, the data is directly visualized near the physical space, object, or person it refers to.

- website: Ember

ANR JCJC ICARE

Participants: Arnaud Prouzeau, Martin Hachet, Yvonne Jansen, Juliette Le Meudec.

- Duration: 2023-2026

- Partners: Inria/ILDa, Monash University, Queensland University

- Coordinator: Arnaud Prouzeau

- In this project, we explore the use of immersive technologies for collaborative learning. First in fully virtual environments, and then in heterogeneous ones that combine different types of devices (e.g. AR/VR, wall displays, desktops), we will design interaction techniques to improve how people collaborate in practical learning activities.

- website: ICARE

ANR BCI4IA

Participants: Sebastien Rimbert, Fabien Lotte, Valerie Marissens.

- Duration: 2023-2027

- Partners: Inria, LORIA, CHRU Nancy, CHU Brugmann, Univ. Libre Bruxelles

- Coordinator: Claude Meistelman

- The BCI4IA project aims to design a brain-computer interface to enable reliable general anesthesia (GA) monitoring, in particular to detect intraoperative awareness. Currently, there is no satisfactory solution for detecting intraoperative awareness, even though it causes severe post-traumatic stress disorder. "I couldn't breathe, I couldn't move or open my eyes, or even tell the doctors I wasn't asleep." This testimony shows that a patient's first reaction during intraoperative awareness is usually to move to alert the medical staff. Unfortunately, during most surgeries, the patient is curarized, which causes neuromuscular block and prevents any movement. To prevent intraoperative awareness, we propose to study motor brain activity under GA using electroencephalography (EEG) to detect markers of motor intention (MI) combined with general brain markers of consciousness. We will analyze a combination of MI markers (relative powers, connectivity) under the anesthetic propofol, with a brain-computer interface based on median nerve stimulation to amplify them. Doing so will also require designing new machine learning algorithms based on one-class (rest class) EEG classification, since no EEG examples of the patient's MI under GA are available to calibrate the BCI. Our preliminary results are very promising and point towards an original solution to this problem, which causes serious trauma.

- website: BCI4IA

ANR PROTEUS

Participants: Fabien Lotte, Sebastien Rimbert, Pauline Dreyer, David Trocellier.

- Duration: 2023-2027

- Partners: Inria, LAMSADE, ISAE-SupAero, Wisear

- Coordinator: Fabien Lotte

- Although BCIs are very promising for various applications, they are not reliable. Their reliability degrades even more when they are used across contexts (e.g., across days, for changing users' states or applications used) due to various sources of variability. Project PROTEUS proposes to make BCIs robust to such variabilities by 1) systematically measuring BCI and brain signal variabilities across various contexts while sharing the collected databases; 2) characterising, understanding and modelling the variabilities and their sources based on these new databases; and 3) tackling these variabilities by designing new machine learning algorithms optimally invariant to them according to our models, and using the resulting BCIs for two practical applications affected by variabilities: tetraplegic BCI user training and auditory attention monitoring at home or in flight.

- website: PROTEUS

AeX Inria Live-It

Participants: Arnaud Prouzeau, Martin Hachet, Emma Tison, Claudia Krogmeier, Justin Dillmann.

- Duration: 2022-2024

- Partners: Université de Bordeaux - NeuroPsychology

- Coordinators: Arnaud Prouzeau and Martin Hachet

- In collaboration with colleagues in neuropsychology (Antoinette Prouteau), we are exploring how augmented reality can help to better explain schizophrenia and fight against stigmatization. This project is directly linked to Emma Tison's PhD thesis.

AeX I-Am

Participants: Pierre Dragicevic, Leana Petiot.

- Duration: 2023-2026

- Partners: Flower team, Université de Bordeaux - Psychology

- Coordinators: Pierre Dragicevic and Hélène Sauzéon

- Title: The influence of augmented reality on autobiographical memory: a study of involuntary and false memories. Abstract: Although the Metaverse quickly raised a number of questions about its potential benefits and dangers for humans, augmented reality (AR) has made its way into our lives without raising such questions. The present program proposes to initiate this questioning by evaluating the impact of AR on our autobiographical memory, i.e. the memory that characterizes the "self" of each of us, by investigating the human and technical factors conducive to or, on the contrary, protective of memory biases.

- website: I-am

11 Dissemination

11.1 Promoting scientific activities

11.1.1 Scientific events: organisation

General chair, scientific chair

- General Chair EnergyVis Workshop at IEEE VIS (Arnaud Prouzeau)

- General Chair Immersive Generative Design Workshop at IEEE ISMAR (Arnaud Prouzeau)

- General Chair ACM TEI'25 (Yvonne Jansen)

Member of the organizing committees

- Demo/Poster Chair ICAT-EGVE (Claudia Krogmeier)

- Workshop Chair IEEE ISMAR (Arnaud Prouzeau)

- Workshop Chair IHM (Arnaud Prouzeau)

11.1.2 Scientific events: selection

Chair of conference program committees

- IEEE VIS, Area Program Chair (Pierre Dragicevic)

Member of the conference program committees

- IEEE VIS (Arnaud Prouzeau, Yvonne Jansen)

- 4th Latin American Workshop on Computational Neuroscience (LAWCN) 2023 (Fabien Lotte)

- IEEE ICAT 2023 (Fabien Lotte)

- CORTICO Days 2023 (Fabien Lotte)

Reviewer

- Arnaud Prouzeau: IEEE VR, ACM CHI, IHM, EuroVis, IEEE ISMAR, ACM VRST, ACM ISS

- Pierre Dragicevic: ACM CHI, JPI, ACM TOCHI, IEEE VIS, EuroVis

- Yvonne Jansen: IEEE TVCG, ACM TOCHI, ACM CHI

- Aymeric Ferron: IEEE ISMAR, ACM IHM

- Hessam Djavaherpour: ACM CHI, IEEE VIS, ACM UIST

- Fabien Lotte: Int. BCI Meeting, CORTICO, IEEE ICAT, ACM CHI, IEEE VR

- Sébastien Rimbert: IEEE SMC, CORTICO

11.1.3 Journal

Member of the editorial boards

- Member of the editorial board of the Journal of Perceptual Imaging (JPI) (Pierre Dragicevic)

- Member of the editorial board of the Springer Human–Computer Interaction Series (HCIS) (Pierre Dragicevic)

- Member of the advisory committee and editorial board of The Journal of Visualization and Interaction (JoVI) (Yvonne Jansen)

- Member of the editorial board (Associate Editor) of the Journal Brain-Computer Interfaces (Fabien Lotte)

- Member of the editorial board (Associate Editor) of the Journal IEEE Transactions on Biomedical Engineering (Fabien Lotte)

- Member of the editorial board of Journal of Neural Engineering (Fabien Lotte)

- Member of the editorial board (co-specialty chief editor) of Frontiers in Neuroergonomics, section Neurotechnology and Systems Neuroergonomics (Fabien Lotte)

Reviewer - reviewing activities

- Fabien Lotte: Frontiers in Human Neuroscience

- Sébastien Rimbert: Frontiers in Human Neuroscience, Frontiers in Neuroscience, PeerJ, Sensors

- Marc Welter: Journal of Perceptual Imaging

11.1.4 Invited talks

- Hessam Djavaherpour: invited talk at the ATLAS Institute, University of Colorado Boulder.

- Fabien Lotte:

- "Towards understanding and tackling variabilities in Brain-Computer Interactions", Univ. Essex BCI Lab online BCI lectures series, online, Inaugural invited talk, December 2023

- "On Variabilities Affecting Brain-Computer Interactions", 4th Latin-American Workshop on Computational Neuroscience, Envigado, Colombia, closing keynote, November 2023

- "Can Neurotechnologies contribute to global Peace?", USERN Virtual congress 2023, online, invited talk, November 2023

- “Brain-computer Interface-based Motor and Cognitive Rehabilitation”, NCU-RIKEN Workshop on Complex System for Health, Torun, Poland, invited plenary talk, Sept. 2023

- "Machine Learning, Human Factors and Software solutions for out-of-the-lab BCI use", MBT Conference 2.0: Methods in Mobile EEG, Belgrade, Serbia, Keynote, Sept. 2023

- "Comprendre et dompter les variabilités dans les interactions cerveau-ordinateur", Neurotechnologies Master Class France, online, invited plenary talk, Sept. 2023.

- “Machine Learning methods to decode cognitive, affective and conative states from EEG signals”, IntEr-HRI Competition (Intrinsic Error Evaluation during Human-Robot Interaction), IJCAI 2023, Macao/online, keynote, August 2023

- "Passive Brain-Computer Interfaces for cognitive, perceptive, affective and conative states estimation", invited seminar, Macnica company, Tokyo, Japan, August 2023

- "Towards understanding and tackling variabilities in Brain-Computer Interactions", Tokyo University of Agriculture and Technology, invited lecture, Tokyo, Japan, August 2023

- « Interagir avec des environnements réels ou virtuels par l'activité cérébrale: promesses, fantasmes et réalité », Colloque EPIQUE, Paris, France, keynote, July 2023

- « Les Interfaces Cerveau-Ordinateur », « Digital Natives », le colloque MMI Bordeaux, Bordeaux, May 2023

- "Machine and User learning aspects in Sensorimotor Brain-Computer Interface design", Research Training Group Neuromodulation kick-off workshop, keynote, University of Oldenburg, invited plenary talk, Oldenburg, Germany, March 2023

- "Addressing within-subject BCI variability with Riemannian classifiers", ISAE-SupAero, Toulouse, France, January 2023

- Marc Welter: "Decoding Aesthetic Preference with multi-modal passive BCI", HYBRID, IRISA, Rennes, France, June 2023

- Sébastien Rimbert: "Towards a better understanding of ERD and ERS in the context of BCI", CuttingEEG Conference, Lyon, France, October 2023

Invited Poster:

- M. Welter & F. Lotte, 'EEG Oscillatory Correlates of Aesthetic Experience', Second International Workshop on Complex Systems Science and Health Neuroscience, Torun, Poland, September 2023 (Marc Welter, Fabien Lotte)

11.1.5 Scientific expertise

Hiring committees

- Prof. Ecole Centrale Nantes (Martin Hachet)

- Prof. Ecole Centrale Lyon (Martin Hachet)

- Prof. Univ. Copenhagen (Martin Hachet)

- Member of Search Committee for Univ. Copenhagen (Pierre Dragicevic)

Funded project reviewing

- Scientific monitor for European FET project TOUCHLESS (Pierre Dragicevic)

11.1.6 Research administration

- President of the "Commission des Emplois de Recherche", Inria Bordeaux (Martin Hachet)

- Member of the "Commission des Emplois de Recherche", Inria Bordeaux (Fabien Lotte)

- Board of the France Hub of the Australian-French Association for Research and Innovation (AFRAN) (Arnaud Prouzeau)

- Member of the CER (Comité d'Éthique de la Recherche) Paris-Saclay (Pierre Dragicevic, Yvonne Jansen)

- Member of the CER Université de Bordeaux (Pierre Dragicevic, Yvonne Jansen)

- Co-leader of the Image & Sound department at LaBRI (Yvonne Jansen)

- Member of the scientific council of LaBRI (Yvonne Jansen, Aymeric Ferron)

- Member of the council of the EDMI doctoral school (Aymeric Ferron)

- Members of the governing board of CORTICO, the French association for BCI research (www.cortico.fr) (Fabien Lotte, Sebastien Rimbert)

11.2 Teaching - Supervision - Juries

11.2.1 Teaching

- Doctoral School: Interaction, Réalité Virtuelle et Réalité augmentée, 12h eqTD, EDMI, Université de Bordeaux (Martin Hachet)

- Master: Unity programming, 9h, M2 Université de Bordeaux (Vincent Casamayou)