2023 Activity report, Project-Team RAINBOW

RNSR: 201822637G

- Research center: Inria Centre at Rennes University

- In partnership with: CNRS, Institut national des sciences appliquées de Rennes, Université de Rennes

- Team name: Sensor-based Robotics and Human Interaction

- In collaboration with: Institut de recherche en informatique et systèmes aléatoires (IRISA)

- Domain: Perception, Cognition and Interaction

- Theme: Robotics and Smart environments

Keywords

Computer Science and Digital Science

- A5.1.2. Evaluation of interactive systems

- A5.1.3. Haptic interfaces

- A5.1.7. Multimodal interfaces

- A5.1.9. User and perceptual studies

- A5.4.4. 3D and spatio-temporal reconstruction

- A5.4.6. Object localization

- A5.4.7. Visual servoing

- A5.6. Virtual reality, augmented reality

- A5.6.1. Virtual reality

- A5.6.2. Augmented reality

- A5.6.3. Avatar simulation and embodiment

- A5.6.4. Multisensory feedback and interfaces

- A5.9.2. Estimation, modeling

- A5.10.2. Perception

- A5.10.3. Planning

- A5.10.4. Robot control

- A5.10.5. Robot interaction (with the environment, humans, other robots)

- A5.10.6. Swarm robotics

- A5.10.7. Learning

- A6.4.1. Deterministic control

- A6.4.3. Observability and Controlability

- A6.4.4. Stability and Stabilization

- A6.4.5. Control of distributed parameter systems

- A6.4.6. Optimal control

- A9.5. Robotics

- A9.7. AI algorithmics

- A9.9. Distributed AI, Multi-agent

Other Research Topics and Application Domains

- B2.4.3. Surgery

- B2.5. Handicap and personal assistances

- B2.5.1. Sensorimotor disabilities

- B2.5.2. Cognitive disabilities

- B2.5.3. Assistance for elderly

- B5.1. Factory of the future

- B5.6. Robotic systems

- B8.1.2. Sensor networks for smart buildings

- B8.4. Security and personal assistance

1 Team members, visitors, external collaborators

Research Scientists

- Paolo Robuffo Giordano [Team leader, CNRS, Senior Researcher, HDR]

- François Chaumette [INRIA, Senior Researcher, HDR]

- Alexandre Krupa [INRIA, Senior Researcher, HDR]

- Claudio Pacchierotti [CNRS, Researcher, HDR]

- Marco Tognon [INRIA, ISFP]

Faculty Members

- Marie Babel [INSA RENNES, Associate Professor, HDR]

- Vincent Drevelle [UNIV RENNES, Associate Professor]

- Sylvain Guegan [INSA RENNES, Associate Professor Delegation, until Aug 2023]

- Maud Marchal [INSA RENNES, Professor, HDR]

- Éric Marchand [UNIV RENNES, Professor, HDR]

Post-Doctoral Fellow

- Elodie Bouzbib [INRIA, Post-Doctoral Fellow, until Nov 2023]

PhD Students

- Jose Eduardo Aguilar Segovia [INRIA]

- Lorenzo Balandi [INRIA, from Oct 2023]

- Maxime Bernard [CNRS]

- Antoine Bout [INSA RENNES, from Oct 2023]

- Pascal Brault [ENS RENNES, ATER, until Aug 2023]

- Pierre-Antoine Cabaret [INRIA]

- Nicola De Carli [CNRS]

- Glenn Kerbiriou [INTERDIGITAL]

- Lisheng Kuang [CSC Scholarship, until Aug 2023]

- Ines Lacote [INRIA]

- Theo Le Terrier [INSA RENNES, from Oct 2023]

- Emilie Leblong [POLE ST HELIER]

- Fouad Makiyeh [INRIA]

- Maxime Manzano [INSA RENNES]

- Antonio Marino [UNIV RENNES]

- Lendy Mulot [INSA RENNES]

- Thibault Noel [CREATIVE]

- Erwan Normand [UNIV RENNES]

- Mandela Ouafo Fonkoua [INRIA]

- Mattia Piras [INRIA, from Dec 2023]

- Leon Raphalen [CNRS, from Sep 2023]

- Maxime Robic [INRIA]

- Lev Smolentsev [INRIA]

- Ali Srour [CNRS]

- John Thomas [INRIA]

- Oluwapelumi Williams [INSA RENNES, until Aug 2023]

Technical Staff

- Gianluca Corsini [CNRS, Engineer, from Dec 2023]

- Louise Devigne [INRIA, Engineer]

- Samuel Felton [INRIA, Engineer]

- Marco Ferro [CNRS, Engineer]

- Guillaume Gicquel [INSA RENNES, Engineer]

- Fabien Grzeskowiak [INSA RENNES, Engineer]

- Thomas Howard [INSA RENNES, Engineer, until Oct 2023]

- Romain Lagneau [INRIA, Engineer]

- Paul Mefflet [CNRS, Engineer, from Nov 2023]

- François Pasteau [INSA RENNES, Engineer]

- Pierre Perraud [INRIA, Engineer, until Nov 2023]

- Esteban Restrepo [CNRS, Engineer, from Feb 2023]

- Olivier Roussel [INRIA, Engineer, from Apr 2023]

- Fabien Spindler [INRIA, Engineer]

- Sebastien Thomas [INRIA, Engineer]

- Thomas Voisin [INRIA]

Interns and Apprentices

- Lorenzo Balandi [INRIA, Intern, until Feb 2023]

- Riccardo Belletti [INRIA, Intern, from Sep 2023]

- Martin Bichon Reynaud [ENS RENNES, Intern, from Sep 2023]

- Sarah Emery [ENS RENNES, Intern, until Jun 2023]

- Gauthier Gendreau [INRIA, Intern, from Apr 2023 until Aug 2023]

- Theo Goupil [CNRS, Intern, from Jun 2023 until Aug 2023]

- Noe Guillaumin [INRIA, Intern, from Apr 2023 until Aug 2023]

- Evelyne Le Bezvoet [ENS PARIS-SACLAY, Intern, from Jun 2023 until Jul 2023]

- Nicolas Martinet [CNRS, Intern, from Dec 2023]

- Loane Vadaine [INRIA, Intern, from May 2023 until Jul 2023]

- Quentin Zanini [CNRS, from Feb 2023 until Jul 2023]

Administrative Assistant

- Hélène de La Ruée [UNIV RENNES]

Visiting Scientists

- Tommaso Belvedere [SAPIENZA ROME, from Mar 2023 until Sep 2023]

- Massimiliano Bertoni [UNIV PADUA, from Oct 2023]

- Salvatore Marcellini [UNIV NAPLES, from Feb 2023 until Jul 2023]

- Francesca Pagano [UNIV NAPLES, from Sep 2023]

- Danilo Troisi [UNIV PISA, from Nov 2023]

2 Overall objectives

The long-term vision of the Rainbow team is to develop the next generation of sensor-based robots able to navigate and/or interact in complex unstructured environments together with human users. Clearly, the word “together” can have very different meanings depending on the particular context: for example, it can refer to mere co-existence (robots and humans share some space while performing independent tasks), human-awareness (the robots need to be aware of the human state and intentions to properly adjust their actions), or actual cooperation (robots and humans perform some shared task and need to coordinate their actions).

One could perhaps argue that these two goals (a high degree of robot autonomy and the involvement of human users) are somehow in conflict, since higher robot autonomy should imply lower (or no) human intervention. However, we believe that our general research direction is well motivated, since:

- despite the many advancements in robot autonomy, complex and high-level cognitive-based decisions are still out of reach. In most applications involving tasks in unstructured environments, uncertainty, and interaction with the physical world, human assistance is still necessary, and will most probably remain so for the coming decades. On the other hand, robots are extremely capable of autonomously executing specific and repetitive tasks with great speed and precision, and of operating in dangerous/remote environments, while humans possess unmatched cognitive capabilities and world awareness, which allow them to make complex and quick decisions;

- the cooperation between humans and robots is often an implicit constraint of the robotic task itself. Consider for instance the case of assistive robots supporting injured patients during their physical recovery, or human augmentation devices. It is then important to study proper ways of implementing this cooperation;

- finally, safety regulations can require the presence at all times of a person in charge of supervising and, if necessary, taking direct control of the robotic workers. For example, this is a common requirement in all applications involving tasks in public spaces, like autonomous vehicles in crowded spaces, or even UAVs flying in civil airspace, such as over urban or populated areas.

Within this general picture, the Rainbow activities will particularly focus on (shared) cooperation between robots and humans, pursuing the following vision: on the one hand, empower robots with a large degree of autonomy so that they can effectively operate in non-trivial environments (e.g., outside completely defined factory settings); on the other hand, include human users in the loop so that they keep (partial and bilateral) control over some aspects of the overall robot behavior. We plan to address these challenges from the methodological, algorithmic and application-oriented perspectives. The Rainbow activities will be articulated along three supporting research axes (Optimal and Uncertainty-Aware Sensing; Advanced Sensor-based Control; Haptics for Robotics Applications), meant to develop the methods, algorithms and technologies for realizing the central theme of Shared Control of Complex Robotic Systems.

3 Research program

3.1 Main Vision

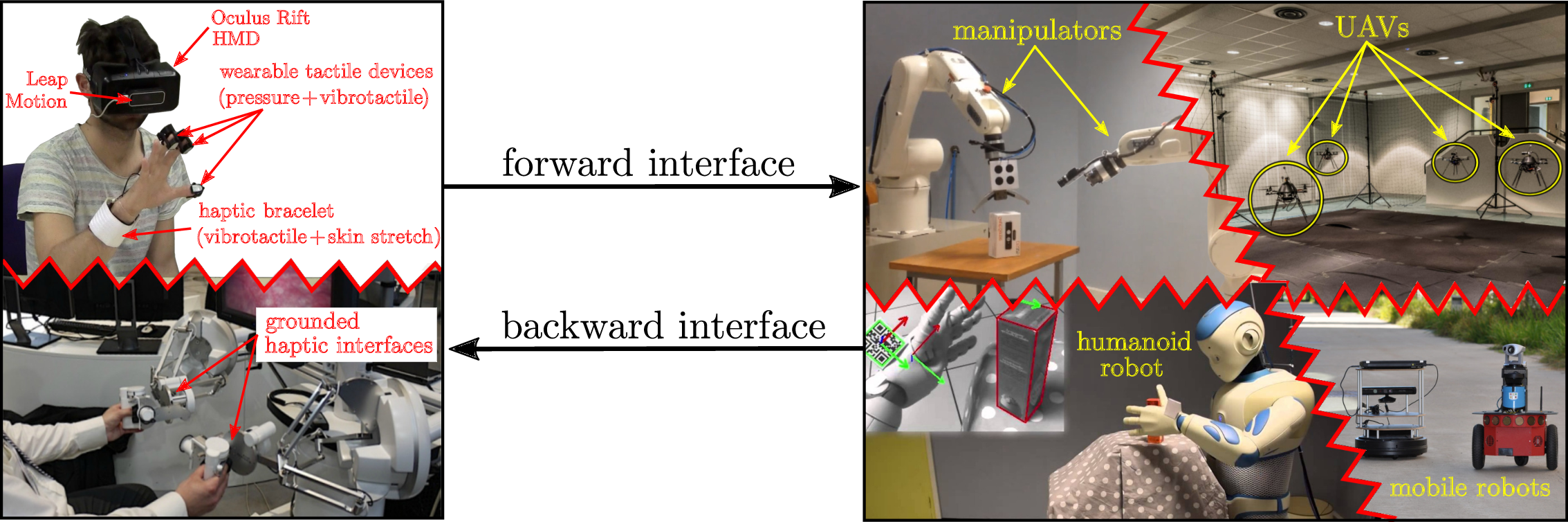

The vision of Rainbow (and its foreseen applications) raises several general scientific challenges: a high level of autonomy for complex robots in complex (unstructured) environments; forward interfaces letting an operator give high-level commands to the robot; backward interfaces informing the operator about the robot “status”; and user studies assessing the best interfacing, which will clearly depend on the particular task/situation. Within Rainbow we plan to tackle these challenges at different levels of depth:

- the methodological and algorithmic side of the sought human-robot interaction will be the main focus of Rainbow. Here, we will be interested in advancing the state of the art in sensor-based online planning, control and manipulation for mobile/fixed robots. For instance, while most classical control approaches (especially sensor-based ones) have been essentially reactive, we believe that less myopic strategies based on online/reactive trajectory optimization will be needed for the future Rainbow activities. The core ideas of Model-Predictive Control (also known as Receding Horizon Control) or, more generally, of numerical optimal control methods will play a role in the Rainbow activities, allowing the robots to reason/plan over some future time window and better cope with constraints. We will also consider extending classical sensor-based motion control/manipulation techniques to more realistic scenarios, such as deformable/flexible objects (“Advanced Sensor-based Control” axis). Finally, it will also be important to devote research efforts to the field of Optimal Sensing, in the sense of generating (again) trajectories that optimize the state estimation problem in the presence of scarce sensory inputs and/or non-negligible measurement and process noise, which is especially relevant for mobile robots (“Optimal and Uncertainty-Aware Sensing” axis). We also aim at addressing the coordination between a single human user and multiple robots where, as explained, the autonomy part plays an even more crucial role (no human can control multiple robots at once, so the robot group will need a high degree of autonomy to execute the human commands);

- the interfacing side will also be a focus of the Rainbow activities. As explained above, we will be interested in both the forward (human → robot) and backward (robot → human) interfaces. The forward interface will be mainly addressed from the algorithmic point of view, i.e., how to map the few degrees of freedom available to a human operator (usually on the order of 3–4) into complex commands for the controlled robot(s). This mapping will typically be mediated by an “AutoPilot” onboard the robot(s) that autonomously assesses whether the commands are feasible and, if not, how to modify them as little as possible (“Advanced Sensor-based Control” axis).

The backward interface will, instead, mainly consist of visual/haptic feedback for the operator. Here, we aim at exploiting our expertise in using force cues to inform an operator about the status of the remote robot(s). However, classical grounded force-feedback devices (e.g., the typical force-feedback joysticks) alone will not be enough, given the different kinds of information that will have to be provided to the operator. In this context, the recent development of wearable haptic interfaces is particularly promising and will be investigated in depth (these include, e.g., devices able to provide vibro-tactile information to the fingertips, wrist, or other parts of the body). The main challenges in these activities will be the mechanical design (and construction) of wearable interfaces suited to the tasks at hand, and the generation of force cues for the operator: the force cues will be a (complex) function of the robot state, which motivates research into algorithms mapping the robot state into a few variables (the force cues) (“Haptics for Robotics Applications” axis);

- the evaluation side, which will assess the proposed interfaces through user studies or acceptability studies with human subjects. Although this activity will not be a main focus of Rainbow (complex user studies are beyond our core expertise), we will nevertheless devote some effort to achieving a reasonable level of user evaluation by applying standard statistical analysis based on psychophysical procedures (e.g., randomized tests and ANOVA). This will be particularly true for the activities involving smart wheelchairs, which are intended to be used by human users and to operate inside human crowds. We will therefore be interested in gaining some understanding of how semi-autonomous robots (a wheelchair in this example) can predict human intention, and of how humans react to a semi-autonomous mobile robot.
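The “AutoPilot” idea of modifying an infeasible operator command as little as possible can be illustrated, under strong simplifying assumptions, as a Euclidean projection. In the minimal Python sketch below, feasibility is modeled by a single linear constraint a·u ≤ b on the commanded velocity u (for instance, a bound on the velocity component toward an obstacle); the function name `autopilot_filter` and this constraint model are hypothetical illustrations, not the team's implementation. With several simultaneous constraints, the same “least modification” would instead be obtained by solving a small quadratic program.

```python
from typing import List, Sequence


def autopilot_filter(u: Sequence[float], a: Sequence[float], b: float) -> List[float]:
    """Return the command closest to u (Euclidean norm) that satisfies a . u <= b.

    Hypothetical helper: feasibility is modeled as ONE linear constraint only.
    """
    dot = sum(ai * ui for ai, ui in zip(a, u))
    if dot <= b:
        # Already feasible: the operator command passes through unchanged
        return list(u)
    norm_sq = sum(ai * ai for ai in a)
    if norm_sq == 0.0:
        raise ValueError("constraint normal must be non-zero")
    # Closed-form projection onto the boundary a . u = b (minimal-norm correction)
    step = (dot - b) / norm_sq
    return [ui - step * ai for ui, ai in zip(u, a)]
```

For example, `autopilot_filter([1.0, 0.5], [1.0, 0.0], 0.2)` keeps the lateral component and reduces the forward component to the allowed 0.2 (up to floating-point rounding), which is the smallest change that makes the command feasible.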

Figure 1: An illustration of the prototypical activities foreseen in Rainbow, in which a human operator is in partial (and high-level) control of single/multiple complex robots performing semi-autonomous tasks.

Figure 1 illustrates the prototypical activities foreseen in Rainbow. On the right-hand side, complex robots (dual manipulators, humanoids, single/multiple mobile robots) need to perform some task with a high degree of autonomy. On the left-hand side, a human operator gives some high-level commands and receives visual/haptic feedback aimed at informing her/him as well as possible about the robot status. Again, the main challenges that Rainbow will tackle to address these issues are (in order of relevance): methods and algorithms, mostly based on first-principle modeling and, when possible, on numerical methods for online/reactive trajectory generation, for endowing the robots with high autonomy; design and implementation of visual/haptic cues for interfacing the human operator with the robots, with special attention to novel combinations of grounded/ungrounded (wearable) haptic devices; user and acceptability studies.
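To make the receding-horizon idea concrete, here is a deliberately naive sketch: it enumerates every input sequence from a small discrete set over a short horizon, keeps only those that respect a position constraint, applies the first input of the cheapest one, and re-plans at the next step. The double-integrator model, the cost weights, the horizon and the “wall” constraint are illustrative assumptions, not Rainbow's formulation; practical MPC implementations solve a numerical optimization problem (e.g., a QP) instead of enumerating sequences.

```python
import itertools
from typing import Tuple

State = Tuple[float, float]  # (position p, velocity v)

DT = 0.1                   # sampling period [s] (illustrative value)
INPUTS = (-1.0, 0.0, 1.0)  # admissible accelerations, i.e. an actuation limit |u| <= 1
HORIZON = 4                # number of predicted steps


def predict(state: State, u: float) -> State:
    """One step of a discrete-time double integrator (assumed robot model)."""
    p, v = state
    return (p + DT * v, v + DT * u)


def mpc_control(state: State, p_ref: float, p_max: float) -> float:
    """Return the first input of the best feasible input sequence over the horizon."""
    best_cost, best_u0 = float("inf"), 0.0  # 0.0 is a naive fallback if nothing is feasible
    for seq in itertools.product(INPUTS, repeat=HORIZON):
        s, cost, feasible = state, 0.0, True
        for u in seq:
            s = predict(s, u)
            if s[0] > p_max:  # predicted violation of the state constraint (e.g., a wall)
                feasible = False
                break
            # Tracking cost plus small penalties on velocity and control effort
            cost += (s[0] - p_ref) ** 2 + 0.1 * s[1] ** 2 + 0.01 * u ** 2
        if feasible and cost < best_cost:
            best_cost, best_u0 = cost, seq[0]
    return best_u0


def run_closed_loop(state: State, p_ref: float, p_max: float, steps: int = 30) -> State:
    """Receding horizon: re-plan from the current state, apply only the first input."""
    for _ in range(steps):
        state = predict(state, mpc_control(state, p_ref, p_max))
    return state
```

Starting at rest with a target ahead, `mpc_control((0.0, 0.0), 1.0, 2.0)` accelerates; close to the wall and still moving, `mpc_control((0.85, 0.5), 5.0, 1.0)` brakes even though the reference lies beyond the wall, because only braking sequences remain feasible over the horizon.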

3.2 Main Components

Hereafter, we briefly summarize the four research axes of Rainbow.

3.2.1 Optimal and Uncertainty-Aware Sensing

Future robots will need a large degree of autonomy for, e.g., interpreting the sensory data to accurately estimate the robot and world state (which can possibly include the human users), and for devising motion plans that take into account many constraints (actuation, sensor limitations, environment), including the state estimation accuracy itself (i.e., how well the robot/environment state can be reconstructed from the sensed data). In this context, we will be particularly interested in:

- trajectory optimization strategies able to maximize some norm of the information gain gathered along the trajectory (with the available sensors). This can be seen as an instance of Active Sensing, with the main focus on online/reactive trajectory optimization strategies able to take into account several requirements/constraints (sensing/actuation limitations, noise characteristics). We will also be interested in the coupling between optimal sensing and the concurrent execution of additional tasks (e.g., navigation, manipulation);

- formal methods for guaranteeing the accuracy of localization/state estimation in mobile robotics, mainly exploiting tools from interval analysis. The interest of these methods lies in their ability to provide possibly conservative but guaranteed bounds on the best accuracy one can obtain with a given robot/sensor pair; they can thus be used for planning purposes or for system design (choice of the best sensors for a given robot/task);

- localization/tracking of objects with poor/unknown or deformable shape, which will be of paramount importance for allowing robots to estimate the state of “complex objects” (e.g., human tissues in medical robotics, elastic materials in manipulation) and to control the robot's pose and interaction with respect to the objects of interest.
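To make “maximizing some norm of the information gain” concrete, the sketch below scores candidate trajectories with the D-optimality criterion, i.e., the increase of the log-determinant of the Fisher information matrix of a 2D state. It assumes a linear(ized) Gaussian model in which each candidate trajectory is summarized by the list of measurement directions h it would yield (z = h·x + noise, with variance r); this representation and all function names are illustrative assumptions. A real active-sensing planner would optimize the trajectory itself under sensing/actuation constraints rather than pick among a few given candidates.

```python
import math
from typing import List, Sequence, Tuple

Mat2 = Tuple[Tuple[float, float], Tuple[float, float]]
Vec2 = Tuple[float, float]


def add_measurement(info: Mat2, h: Vec2, noise_var: float) -> Mat2:
    """Information update Y + h h^T / r for a scalar measurement z = h . x + noise."""
    (a, b), (c, d) = info
    hx, hy = h
    return ((a + hx * hx / noise_var, b + hx * hy / noise_var),
            (c + hy * hx / noise_var, d + hy * hy / noise_var))


def log_det(m: Mat2) -> float:
    (a, b), (c, d) = m
    det = a * d - b * c
    if det <= 0.0:
        raise ValueError("information matrix must be positive definite")
    return math.log(det)


def path_gain(info: Mat2, directions: Sequence[Vec2], noise_var: float) -> float:
    """Log-det (D-optimal) information gain accumulated along one candidate path."""
    post = info
    for h in directions:
        post = add_measurement(post, h, noise_var)
    return log_det(post) - log_det(info)


def best_path(info: Mat2, paths: List[Sequence[Vec2]], noise_var: float) -> int:
    """Index of the candidate path with the largest expected information gain."""
    return max(range(len(paths)), key=lambda i: path_gain(info, paths[i], noise_var))
```

With a prior that is already informative along x (information 10) but poor along y (information 1), a path that also senses along y is preferred over one that senses twice along x, since it doubles the information in the weakest direction.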

3.2.2 Advanced Sensor-based Control

One of the main competences of the previous Lagadic team has been, generally speaking, sensor-based control, i.e., how to exploit (typically onboard) sensors for controlling the motion of fixed/ground robots. The main emphasis has been on devising ways to directly couple the robot motion with the sensor outputs, and to invert this mapping for driving the robots towards a configuration specified as a desired sensor reading (thus, directly in sensor space). This general idea has been applied to very different contexts: mainly standard vision (hence the Visual Servoing keyword), but also audio, ultrasound imaging, and RGB-D.
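The core idea of driving a robot directly in sensor space can be illustrated with classical image-based visual servoing (IBVS) on point features, a textbook case rather than a specific Rainbow method: the error e = s − s* between current and desired normalized image coordinates is mapped to a camera velocity v = −λ L⁺ e, where L stacks the interaction matrix of each point (x, y) at depth Z. This NumPy sketch assumes the depths are known (in practice they are often estimated or approximated, e.g., by their values at the desired pose); ViSP provides full implementations of such tasks, and the function names below are hypothetical, not ViSP's API.

```python
import numpy as np


def interaction_matrix_point(x: float, y: float, Z: float) -> np.ndarray:
    """2x6 interaction matrix of a normalized image point (x, y) at depth Z.

    Columns follow the camera velocity ordering (vx, vy, vz, wx, wy, wz).
    """
    return np.array([
        [-1.0 / Z, 0.0, x / Z, x * y, -(1.0 + x * x), y],
        [0.0, -1.0 / Z, y / Z, 1.0 + y * y, -x * y, -x],
    ])


def ibvs_velocity(s, s_star, depths, gain: float = 0.5) -> np.ndarray:
    """Classical IBVS law v = -gain * pinv(L) @ (s - s_star) for point features."""
    s = np.asarray(s, dtype=float).reshape(-1, 2)
    s_star = np.asarray(s_star, dtype=float).reshape(-1, 2)
    # Stack one 2x6 block per point; depths are assumed known here
    L = np.vstack([interaction_matrix_point(x, y, Z) for (x, y), Z in zip(s, depths)])
    e = (s - s_star).reshape(-1)
    return -gain * np.linalg.pinv(L) @ e
```

With four non-degenerate points, L has full column rank, so the law imposes an exponential decrease of the error, ė ≈ −λ e, as long as the model (and depths) are accurate.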

Using sensors to control the robot motion will clearly also be a central topic of the Rainbow team, since the use of (especially onboard) sensing is a main characteristic of any future robotics application (which will typically operate in unstructured environments, and thus mainly rely on its own ability to sense the world). We then naturally aim at making the best out of the previous Lagadic experience in sensor-based control to propose new advanced ways of exploiting sensed data for, roughly speaking, controlling the motion of a robot. In this respect, we plan to work on the following topics:

- “direct/dense methods”, which try to directly exploit the raw sensory data when computing the control law for positioning/navigation tasks. The advantage of these methods is the limited need for data pre-processing, which can minimize feature extraction errors and, in general, improve the overall robustness/accuracy (since all the available data is used by the motion controller);

- sensor-based interaction with objects of unknown/deformable shapes, for gaining the ability to manipulate, e.g., flexible objects from the acquired sensed data (e.g., controlling online a needle being inserted in a flexible tissue);

- sensor-based model predictive control, by developing online/reactive trajectory optimization methods able to plan feasible trajectories for robots subject to sensing/actuation constraints, with the possibility of (onboard) sensing for continuously replanning the optimal trajectory over some future time horizon. These methods will play an important role when dealing with complex robots affected by complex sensing/actuation constraints, for which purely reactive strategies (as in most of the previous Lagadic works) are not effective. Furthermore, the coupling with the aforementioned optimal sensing will also be considered;

- multi-robot decentralized estimation and control, with the aim of devising again sensor-based strategies for groups of multiple robots needing to maintain a formation or perform navigation/manipulation tasks. Here, the challenges come from the need to devise “simple” decentralized and scalable control strategies in the presence of complex sensing constraints (e.g., limited field of view and occlusions when using onboard cameras). Also, locally estimating global quantities (e.g., a common frame of reference, or global properties of the formation such as connectivity or rigidity) will be a line of active research.
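As an illustration of estimating a global quantity from local exchanges only, the sketch below implements the standard linear average-consensus iteration, a textbook building block and not necessarily the estimator used in Rainbow's works. Each robot repeatedly moves its estimate toward those of its neighbors; if the communication graph is undirected and connected and the step ε satisfies 0 < ε < 1/(maximum degree), all estimates converge to the group average of the initial values (e.g., one coordinate of the formation centroid). The function name and parameter values are illustrative assumptions.

```python
from typing import Dict, List


def consensus_average(values: List[float], neighbors: Dict[int, List[int]],
                      epsilon: float = 0.2, iterations: int = 200) -> List[float]:
    """Synchronous linear average consensus x_i <- x_i + eps * sum_j (x_j - x_i).

    Converges to the mean of `values` if the (undirected) communication graph is
    connected and 0 < epsilon < 1 / max_degree.
    """
    x = list(values)
    for _ in range(iterations):
        # Every robot uses only its own estimate and its neighbors' current estimates
        x = [xi + epsilon * sum(x[j] - xi for j in neighbors[i]) for i, xi in enumerate(x)]
    return x
```

On a chain of four robots (0–1–2–3) with initial values 1, 2, 3 and 6, every estimate approaches the global average 3 although no robot ever communicates with all the others.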

3.2.3 Haptics for Robotics Applications

In the envisaged shared cooperation between human users and robots, the typical sensory channel (besides vision) exploited to inform the human users is most often the force/kinesthetic one (in general, the sense of touch and of forces applied to the human hand or limbs). Therefore, part of our activities will be devoted to studying and advancing the use of haptic cueing algorithms and interfaces for providing feedback to the users during the execution of some shared task. We will consider:

- multi-modal haptic cueing for general teleoperation applications, by studying how to convey information through the kinesthetic and cutaneous channels. Indeed, most haptic-enabled applications typically only involve kinesthetic cues, e.g., the forces/torques that can be felt by grasping a force-feedback joystick/device. These cues are very informative about, e.g., preferred/forbidden motion directions, but are also inherently limited in their resolution, since the kinesthetic channel can easily become overloaded (when too much information is compressed in a single cue). In recent years, the rise of novel cutaneous devices able to, e.g., provide vibro-tactile feedback on the fingertips or skin, has proven to be a viable way to complement the classical kinesthetic channel. We will then study how to combine these two sensory modalities for different prototypical application scenarios, e.g., 6-dof teleoperation of manipulator arms, virtual fixtures approaches, and remote manipulation of (possibly deformable) objects;

- in the particular context of medical robotics, the problem of providing haptic cues for typical medical robotics tasks, such as semi-autonomous needle insertion and robotic surgery, by exploring the use of kinesthetic feedback for rendering the mechanical properties of the tissues, and of vibrotactile feedback for providing guidance along pre-planned paths (with the aim of increasing the usability/acceptability of this technology in the medical domain);

- finally, in the context of multi-robot control, how to use the haptic channel for providing information about the status of multiple robots executing a navigation or manipulation task. In this case, the problem is (even more) how to map (or compress) information about many robots into a few haptic cues. We plan to use specialized devices, such as actuated exoskeleton gloves able to provide cues to each fingertip of a human hand, or to resort to “compression” methods inspired by hand postural synergies for providing coordinated cues representative of a few (but complex) motions of the multi-robot group, e.g., coordinated motions (translations/expansions/rotations) or collective grasping/transporting.
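The idea of compressing the state of many robots into a few coordinated cues can be sketched by projecting the robots' planar velocities onto four collective motion modes: translation along x, translation along y, expansion about the centroid, and rotation about the centroid. The resulting four scalars could then drive a few haptic actuators. This particular decomposition is an illustrative assumption, not a method stated in this report; because the four modes are mutually orthogonal when taken about the centroid, each least-squares coefficient reduces to a simple projection, and any motion not explained by these modes is discarded.

```python
from typing import Dict, List, Tuple

Vec2 = Tuple[float, float]


def group_motion_cues(positions: List[Vec2], velocities: List[Vec2]) -> Dict[str, float]:
    """Project N planar robot velocities onto 4 orthogonal collective motion modes."""
    n = len(positions)
    cx = sum(p[0] for p in positions) / n
    cy = sum(p[1] for p in positions) / n
    rel = [(p[0] - cx, p[1] - cy) for p in positions]  # positions w.r.t. the centroid
    bases = {
        "translate_x": [(1.0, 0.0)] * n,              # coefficient in m/s
        "translate_y": [(0.0, 1.0)] * n,              # coefficient in m/s
        "expand": rel,                                # expansion rate in 1/s
        "rotate": [(-ry, rx) for rx, ry in rel],      # angular rate in rad/s
    }
    cues = {}
    for name, basis in bases.items():
        norm_sq = sum(bx * bx + by * by for bx, by in basis)
        dot = sum(bx * vx + by * vy for (bx, by), (vx, vy) in zip(basis, velocities))
        # Orthogonal modes: least-squares coefficient = projection ratio
        cues[name] = dot / norm_sq if norm_sq > 0.0 else 0.0
    return cues
```

For four robots on a unit square rotating at 0.5 rad/s while drifting at 0.2 m/s along x, the function recovers approximately `translate_x = 0.2`, `rotate = 0.5` and zero for the other two modes.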

3.2.4 Shared Control of Complex Robotics Systems

This final and main research axis will exploit the methods, algorithms and technologies developed in the previous axes for realizing applications involving complex semi-autonomous robots operating in complex environments together with human users. The leitmotiv is to realize advanced shared control paradigms, which essentially aim at blending robot autonomy and user intervention in an optimal way, so as to exploit the best of both worlds (robot accuracy/sensing/mobility/strength and human cognitive capabilities). A common theme will be the issue of where to “draw the line” between robot autonomy and human intervention: obviously, there is no general answer, and any design choice will depend on the particular task at hand and/or on the technological/algorithmic possibilities of the robotic system under consideration.
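One simple way to blend robot autonomy and user intervention, common in the shared-control literature and used here only as an illustration, is a linear arbitration of the two commands, u = α·u_h + (1 − α)·u_r, where the authority α ∈ [0, 1] shifts control between the operator (u_h) and the robot (u_r). Where to “draw the line” then amounts to choosing α. The distance-based rule below is a hypothetical example of such a choice, with made-up thresholds, and both function names are illustrative.

```python
from typing import List, Sequence


def authority_from_distance(d: float, d_min: float = 0.3, d_safe: float = 1.5) -> float:
    """Hypothetical arbitration rule: human authority alpha grows with obstacle distance d.

    alpha = 0 (robot only) when d <= d_min, alpha = 1 (human only) when d >= d_safe,
    linear in between. Thresholds are illustrative values in meters.
    """
    if d <= d_min:
        return 0.0
    if d >= d_safe:
        return 1.0
    return (d - d_min) / (d_safe - d_min)


def blend_commands(u_human: Sequence[float], u_robot: Sequence[float], alpha: float) -> List[float]:
    """Linear shared-control blending u = alpha * u_human + (1 - alpha) * u_robot."""
    if not 0.0 <= alpha <= 1.0:
        raise ValueError("alpha must lie in [0, 1]")
    if len(u_human) != len(u_robot):
        raise ValueError("commands must have the same dimension")
    return [alpha * uh + (1.0 - alpha) * ur for uh, ur in zip(u_human, u_robot)]
```

Far from obstacles the operator's command is executed as given; very close to an obstacle the robot's own (e.g., avoidance) command takes over; in between the two are mixed. More elaborate schemes replace the scalar α with task- or confidence-dependent weights.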

A prototypical envisaged application, exploiting and combining the previous three research axes, is as follows: a complex robot (e.g., a two-arm system, a humanoid robot, a multi-UAV group) needs to operate in an environment exploiting its onboard sensors (in general, with vision as the main exteroceptive one) and deal with many constraints (limited actuation, limited sensing, complex kinematics/dynamics, obstacle avoidance, interaction with difficult-to-model entities such as surrounding people, and so on). The robot must then possess a fairly large autonomy for interpreting and exploiting the sensed data in order to estimate its own state and that of the environment (“Optimal and Uncertainty-Aware Sensing” axis), and for planning its motion to fulfil the task (e.g., navigation, manipulation) while coping with all the robot/environment constraints. Therefore, advanced control methods able to make the most of the sensory data, and to cope online with constraints in an optimal way (by, e.g., continuously replanning and predicting over a future time horizon), will be needed (“Advanced Sensor-based Control” axis), with a possible (and interesting) coupling with the sensing part for simultaneously optimizing the state estimation process. Finally, a human operator will typically be in charge of providing high-level commands (e.g., where to go, what to look at, what to grasp and where) that will then be autonomously executed by the robot, with possible local modifications because of the various (local) constraints. At the same time, the operator will also receive online visual-force cues informing her/him, in general, of how well her/his commands are executed and whether the robot would prefer or suggest other plans (because of local constraints that are not the operator's concern). This information will have to be visually and haptically rendered with an optimal combination of cues that will depend on the particular application (“Haptics for Robotics Applications” axis).

4 Application domains

The activities of Rainbow obviously fall within the scope of Robotics. Broadly speaking, our main interest is in devising novel/efficient algorithms (for estimation, planning, control, haptic cueing, human interfacing, etc.) that can be general and applicable to many different robotic systems of interest, depending on the particular application/case study. For instance, we plan to consider:

- applications involving remote telemanipulation with one or two robot arms, where the arm(s) will need to coordinate their motion for approaching/grasping objects of interest under the guidance of a human operator;

- applications involving single and multiple mobile robots for spatial navigation tasks (e.g., exploration, surveillance, mapping). In the multi-robot case, the high redundancy of the multi-robot group will motivate research into autonomously exploiting this redundancy to facilitate the task (e.g., optimizing self-localization or environment mapping) while following the human commands and, vice versa, into informing the operator about the status of the multi-robot group. In the single-robot case, the possible combination with manipulation devices (e.g., arms on a wheeled robot) will motivate research into remote tele-navigation and tele-manipulation;

- applications involving medical robotics, in which the “manipulators” are replaced by the typical tools used in medical applications (ultrasound probes, needles, cutting scalpels, and so on) for semi-autonomous probing and intervention;

- applications involving a direct physical “coupling” between human users and robots (rather than a “remote” interfacing), such as assistive devices used for easing the lives of impaired people. Here, we will be primarily interested in, e.g., safety and usability issues, and will also touch on some aspects of user acceptability.

These directions are, in our opinion, very promising, since current and future robotics applications are expected to address increasingly complex tasks: for instance, it is becoming mandatory to empower robots with the ability to predict the future (to some extent) while explicitly dealing with uncertainties from sensing or actuation; to safely and effectively interact with human supervisors (or collaborators) for accomplishing shared tasks; to learn or adapt to dynamic environments from little prior knowledge; to exploit the environment (e.g., obstacles) rather than avoid it (a typical example is a humanoid robot in a multi-contact scenario, which facilitates walking on rough terrain); to optimize the onboard resources for large-scale monitoring tasks; and to cooperate with other robots either by direct sensing/communication, or via some shared database (the “cloud”).

While no single lab can reasonably address all these theoretical/algorithmic/technological challenges, we believe that our research agenda can give some concrete contributions to the next generation of robotics applications.

5 Highlights of the year

5.1 Awards

- Maud Marchal has been appointed Junior Member of the Institut Universitaire de France (2023-2028).

- The article [47], coauthored by F. Makiyeh, F. Chaumette, M. Marchal and A. Krupa, was among the 10 finalists for the best paper award at the IEEE/RSJ IROS conference held in Detroit in October 2023.

- Marie Babel started a term as deputy director of the GDR Robotique.

- Claudio Pacchierotti has been appointed Co-Chair of the IEEE Technical Committee on Telerobotics.

5.2 Highlights

- Maud Marchal is the Principal Investigator of an ERC Consolidator Grant (2023-2028) for her project ADVHANDTURE.

6 New software, platforms, open data

6.1 New software

6.1.1 HandiViz

- Name: Driving assistance of a wheelchair

- Keywords: Health, Persons attendant, Handicap

- Functional Description: The HandiViz software provides a semi-autonomous navigation framework for a wheelchair, relying on visual servoing.

It has been registered with the APP (“Agence de Protection des Programmes”) as an INSA software (IDDN.FR.001.440021.000.S.P.2013.000.10000) and is released under the GPL license.

- Contact: Marie Babel

- Participants: François Pasteau, Marie Babel

- Partner: INSA Rennes

6.1.2 UsTk

- Name: Ultrasound toolkit for medical robotics applications guided from ultrasound images

- Keywords: Echographic imagery, Image reconstruction, Medical robotics, Visual tracking, Visual servoing (VS), Needle insertion

- Functional Description: UsTK, standing for Ultrasound Toolkit, is a cross-platform extension of the ViSP software dedicated to 2D and 3D ultrasound image processing and to visual servoing based on ultrasound images. Written in C++, UsTK is organized into a core module that implements all the data structures at the heart of UsTK, a grabber module for acquiring ultrasound images from an Ultrasonix or a Sonosite device, a GUI module to display data, an IO module for reading/writing data from/to a storage device, and a set of image processing modules to compute the confidence map of ultrasound images, generate elastography images, track a flexible needle in sequences of 2D and 3D ultrasound images, and track a target image template in sequences of 2D ultrasound images. All these modules have been used on several robotic demonstrators to control the motion of an ultrasound probe or of a flexible needle by ultrasound visual servoing.

- URL:

- Contact: Alexandre Krupa

- Participants: Alexandre Krupa, Fabien Spindler

- Partners: Inria, Université de Rennes 1

6.1.3 ViSP

- Name: Visual servoing platform

- Keywords: Computer vision, Robotics, Visual servoing (VS), Visual tracking

-

Scientific Description:

Since 2005, we develop and release ViSP [1], an open source library available from https://visp.inria.fr. ViSP standing for Visual Servoing Platform allows prototyping and developing applications using visual tracking and visual servoing techniques at the heart of the Rainbow research. ViSP was designed to be independent from the hardware, to be simple to use, expandable and cross-platform. ViSP allows designing vision-based tasks for eye-in-hand and eye-to-hand systems from the most classical visual features that are used in practice. It involves a large set of elementary positioning tasks with respect to various visual features (points, segments, straight lines, circles, spheres, cylinders, image moments, pose...) that can be combined together, and image processing algorithms that allow tracking of visual cues (dots, segments, ellipses...), or 3D model-based tracking of known objects or template tracking. Simulation capabilities are also available.

We have extended ViSP with a new open-source dynamical simulator named FrankaSim based on CoppeliaSim and ROS for the popular Franka Emika Robot [2]. The simulator fully integrated in the ViSP ecosystem features a dynamic model that has been accurately identified from a real robot, leading to more realistic simulations. Conceived as a multipurpose research simulation platform, it is well suited for visual servoing applications as well as, in general, for any pedagogical purpose in robotics. All the software, models and CoppeliaSim scenes presented in this work are publicly available under free GPL-2.0 license.

We have also recently introduced a module dedicated to deep neural networks (DNN) to facilitate image classification and object detection. This module is used to run inference with the convolutional networks Faster R-CNN, SSD-MobileNet, ResNet 10, YOLO v3, YOLO v4, YOLO v5, YOLO v7 and YOLO v8, which simultaneously predict object bounds and prediction scores at each position.
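Detectors of this family output many overlapping candidate boxes with scores, which are commonly post-processed by non-maximum suppression (NMS). The sketch below is a generic, library-independent illustration of greedy NMS; it does not reproduce the API of ViSP's DNN module, and the box format (x_min, y_min, x_max, y_max) and the 0.5 IoU threshold are assumptions.

```python
def iou(a, b):
    """Intersection-over-union of two boxes given as (x_min, y_min, x_max, y_max)."""
    ix = max(0.0, min(a[2], b[2]) - max(a[0], b[0]))
    iy = max(0.0, min(a[3], b[3]) - max(a[1], b[1]))
    inter = ix * iy
    area_a = (a[2] - a[0]) * (a[3] - a[1])
    area_b = (b[2] - b[0]) * (b[3] - b[1])
    union = area_a + area_b - inter
    return inter / union if union > 0 else 0.0


def nms(boxes, scores, iou_threshold=0.5):
    """Greedy NMS: keep the best-scoring box, drop boxes overlapping it too much, repeat."""
    order = sorted(range(len(boxes)), key=lambda i: scores[i], reverse=True)
    keep = []
    while order:
        best = order.pop(0)
        keep.append(best)
        order = [i for i in order if iou(boxes[best], boxes[i]) <= iou_threshold]
    return keep
```

For example, two boxes shifted by one pixel overlap with an IoU of about 0.68, so only the higher-scoring one survives, while a distant third box is kept.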

[1] E. Marchand, F. Spindler, F. Chaumette. ViSP for visual servoing: a generic software platform with a wide class of robot control skills. IEEE Robotics and Automation Magazine, Special Issue on "Software Packages for Vision-Based Control of Motion", P. Oh, D. Burschka (Eds.), 12(4):40-52, December 2005. URL: https://hal.inria.fr/inria-00351899v1

[2] A. A. Oliva, F. Spindler, P. Robuffo Giordano, F. Chaumette. FrankaSim: A Dynamic Simulator for the Franka Emika Robot with Visual-Servoing Enabled Capabilities. ICARCV 2022 - 17th International Conference on Control, Automation, Robotics and Vision, Singapore, pp. 1–7, 11 December 2022. URL: https://hal.inria.fr/hal-03794415

-

Functional Description:

ViSP provides simple ways to integrate and validate new algorithms with already existing tools. It follows a module-based software engineering design where data types, algorithms, sensors, viewers and user interaction are made available. Written in C++, ViSP is based on open-source cross-platform libraries (such as OpenCV) and builds with CMake. Several platforms are supported, including OSX, iOS, Windows and Linux. ViSP's online documentation eases learning: more than 307 fully documented classes organized in 18 different modules, with more than 475 examples and 114 tutorials, are provided to the user. ViSP is released under a dual licensing model: it is open-source under a GNU GPLv2 or GPLv3 license, and a professional edition license that replaces the GNU GPL is also available.

- URL:

-

Contact:

Fabien Spindler

-

Participants:

Éric Marchand, Fabien Spindler, François Chaumette, Olivier Roussel

6.1.4 DIARBENN

-

Name:

Obstacle avoidance through sensor-based servoing

-

Keywords:

Servoing, Shared control, Navigation

-

Functional Description:

DIARBENN's objective is to define an obstacle avoidance solution adapted to a mobile robot such as a powered wheelchair. Through shared control, the system progressively corrects the trajectory, only when necessary, as the wheelchair approaches an obstacle, while respecting the user's intention.
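The description above does not detail DIARBENN's correction law. As a hedged illustration of the general idea of progressively correcting a user command near an obstacle, the hypothetical sketch below removes a growing fraction of the joystick velocity component that points toward the closest obstacle, leaving the tangential component untouched. The thresholds `d_safe` and `d_influence` and the linear blending profile are assumptions, not DIARBENN's actual method.

```python
import math


def shared_control(user_v, obstacle_dir, distance, d_safe=0.3, d_influence=1.0):
    """Return a corrected planar velocity (vx, vy) (hypothetical blending law).

    user_v: velocity requested through the joystick (vx, vy), in m/s.
    obstacle_dir: vector pointing from the wheelchair to the closest obstacle.
    distance: distance to that obstacle, in metres.
    """
    n = math.hypot(*obstacle_dir)
    ux, uy = obstacle_dir[0] / n, obstacle_dir[1] / n
    toward = user_v[0] * ux + user_v[1] * uy  # component heading to the obstacle
    if toward <= 0.0 or distance >= d_influence:
        return user_v  # moving away or far enough: the user's command is kept as-is
    # Fraction of the approaching component still allowed: 1 at d_influence, 0 at d_safe.
    alpha = max(0.0, min(1.0, (distance - d_safe) / (d_influence - d_safe)))
    removed = (1.0 - alpha) * toward
    return (user_v[0] - removed * ux, user_v[1] - removed * uy)
```

Because only the approaching component is scaled, a user steering alongside an obstacle keeps full control of the lateral motion, which is one simple way to "respect the user's intention".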

-

Contact:

Marie Babel

-

Participants:

Marie Babel, François Pasteau, Sylvain Guegan

-

Partner:

INSA Rennes

6.2 New platforms

The platforms described in the next sections are labeled by the University of Rennes 1 and by ROBOTEX 2.0.

6.2.1 Robot Vision Platform

Participants: François Chaumette, Eric Marchand, Fabien Spindler [contact].

We are using an industrial robot built by Afma Robots in the nineties to validate our research in visual servoing and active vision. This robot is a 6 DoF gantry whose end effector can be fitted with a gripper and an RGB-D camera (see Fig. 2). This equipment is mainly used to validate visual servoing and real-time tracking algorithms. Note that the 4 DoF cylindrical robot acquired in 1995 has been withdrawn from the platform.

In 2023, this platform was used to obtain the experimental results of one accepted publication 38 and one PhD thesis 66.

Figure 2: Our Gantry robot.

6.2.2 Mobile Robots

Participants: Marie Babel, François Pasteau, Fabien Spindler [contact].

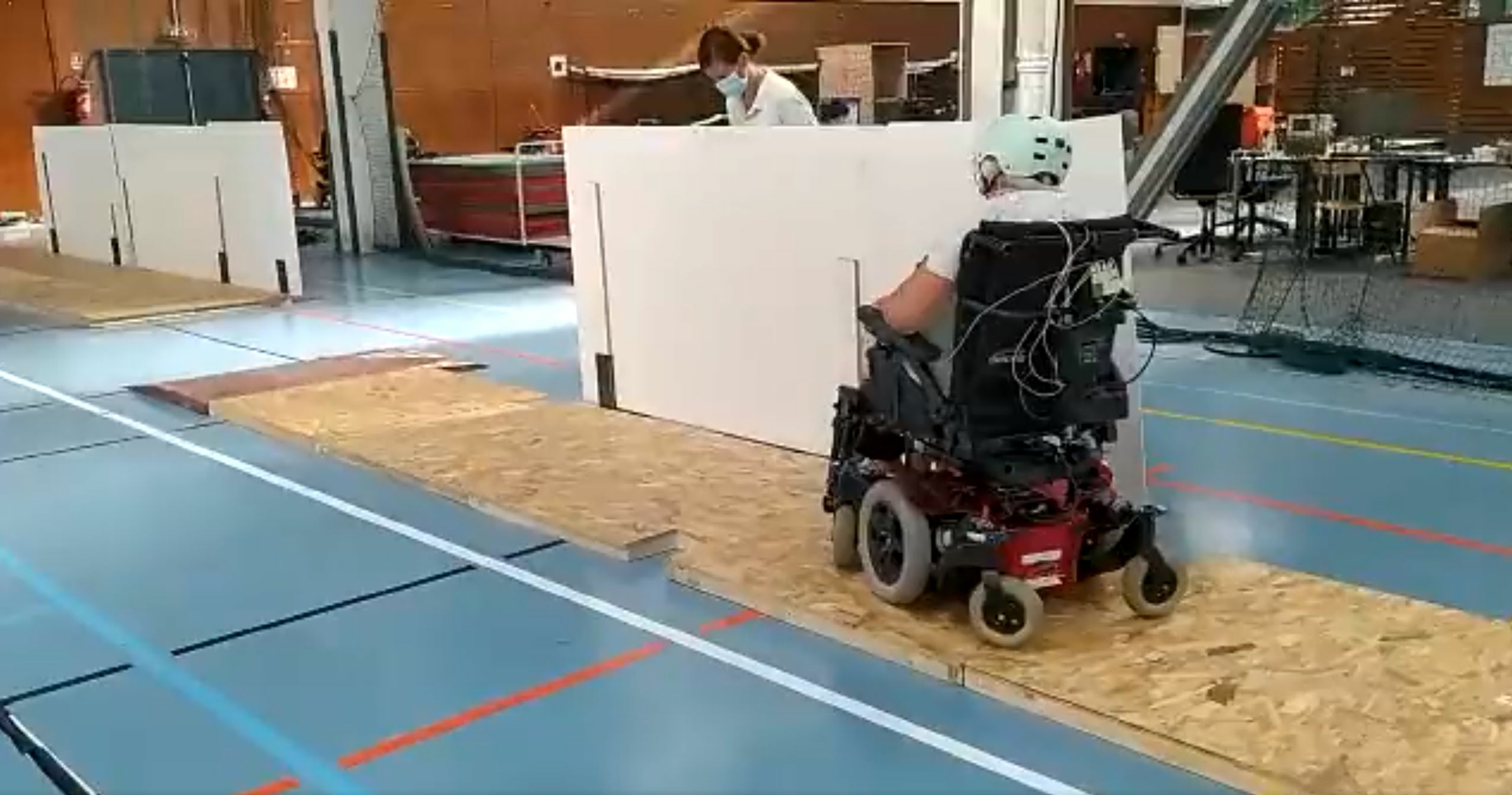

To validate our research on personal assisted living (see Sect. 7.4.2), we have three electric wheelchairs: one from Permobil, one from Sunrise and one from YouQ (see Fig. 3.a). The wheelchairs are controlled through a plug-and-play system inserted between the joystick and the low-level control of the wheelchair. This system lets us acquire the user's intention through the joystick position and control the wheelchair by applying corrections to its motion. The wheelchairs have been fitted with cameras, ultrasound and time-of-flight sensors to perform the sensor-based servoing required for assisting people with disabilities. A wheelchair haptic simulator completes this platform, for developing new human interaction strategies in a virtual reality environment (see Fig. 3.b).

Moreover, for fast prototyping of algorithms in perception, control and autonomous navigation, the team uses a Pioneer 3DX from Adept (see Fig. 3.c). This platform is equipped with the various sensors needed for autonomous navigation and sensor-based control.

Note that the Pepper robot, a human-shaped robot designed by SoftBank Robotics, has been removed from the platform.

In 2023, these platforms were used to obtain experimental results presented in 5 papers 23, 9, 13, 20, 46.

Figure 3: (a) Our wheelchairs from Permobil, Sunrise and YouQ. (b) Our wheelchair simulator. (c) Our Pioneer P3DX mobile robot equipped with a camera mounted on a pan-tilt head.

6.2.3 Advanced Manipulation Platform

Participants: Alexandre Krupa, Claudio Pacchierotti, Paolo Robuffo Giordano, François Chaumette, Fabien Spindler [contact].

This platform consists of two Panda lightweight 7 DoF arms from Franka Emika, with torque sensors in all seven joints. An electric gripper, a camera, a soft hand from qbrobotics or a Reflex TakkTile 2 gripper from RightHand Labs (see Fig. 4.b) can be mounted on the robot end-effector (see Fig. 4.a). A force/torque sensor from Alberobotics is also attached to the end-effector of one of the robots to provide greater accuracy in torque control.

Two Adept 6 DoF arms (one Viper 650 robot and one Viper 850 robot) and a 6 DoF Universal Robots UR5, which can also be fitted with a force sensor and a camera, complete the platform.

This setup is mainly used to manipulate deformable objects, to validate our activities in coupling force and vision for controlling robot manipulators (see Section 7.4.1), and to control the deformation of soft objects (Sect. 7.2.8). Haptic devices (see Section 7.3) can also be coupled to this platform.

In 2023, five new papers 47, 56, 27, 31, 58 and one PhD thesis 65 including experimental results obtained with this platform were published.

Figure 4: (a) Our Franka robot equipped with the Pisa SoftHand grasping a box. (b) The Reflex TakkTile 2 gripper grasping a yellow ball. (c) The five arms composing the platform: a Franka in the foreground, our two Viper robots behind it, and a UR5 arm and our second Franka robot in the background.

6.2.4 Unmanned Aerial Vehicles (UAVs)

Participants: Gianluca Corsini, Paolo Robuffo Giordano, Marco Tognon, Claudio Pacchierotti, Pierre Perraud, Fabien Spindler [contact].

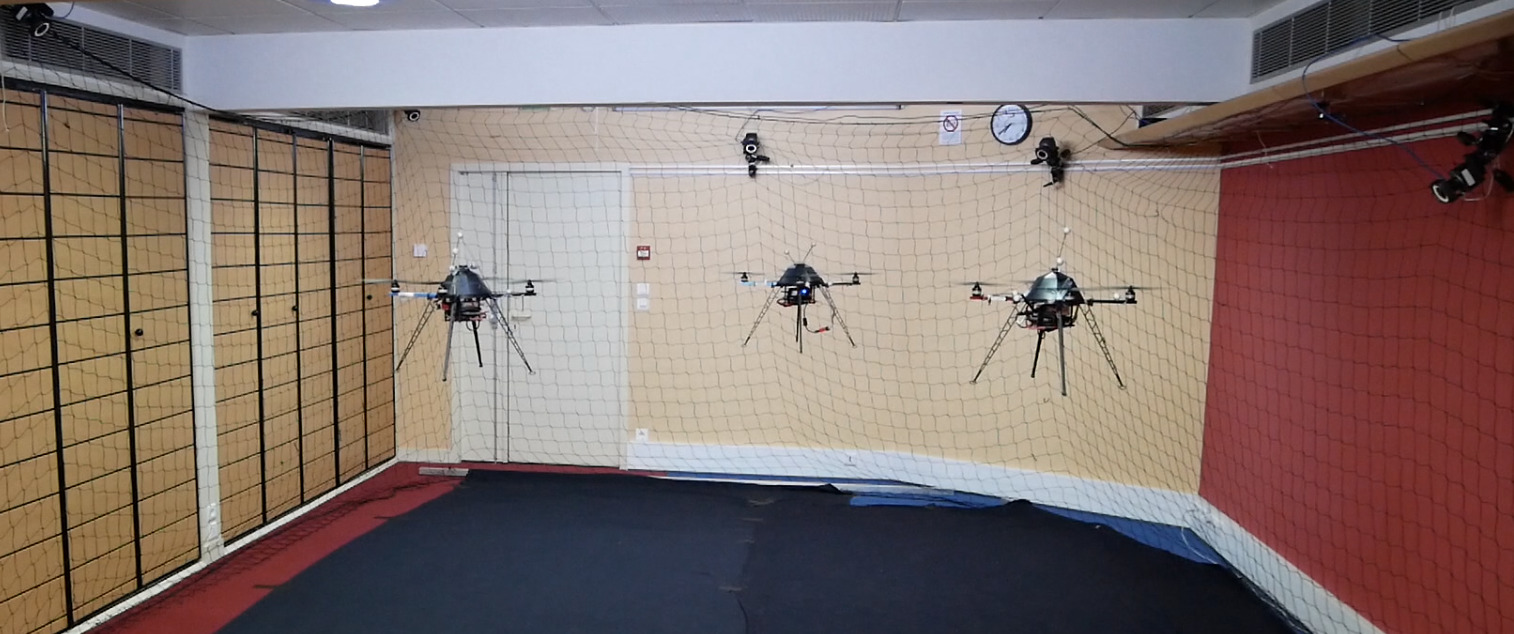

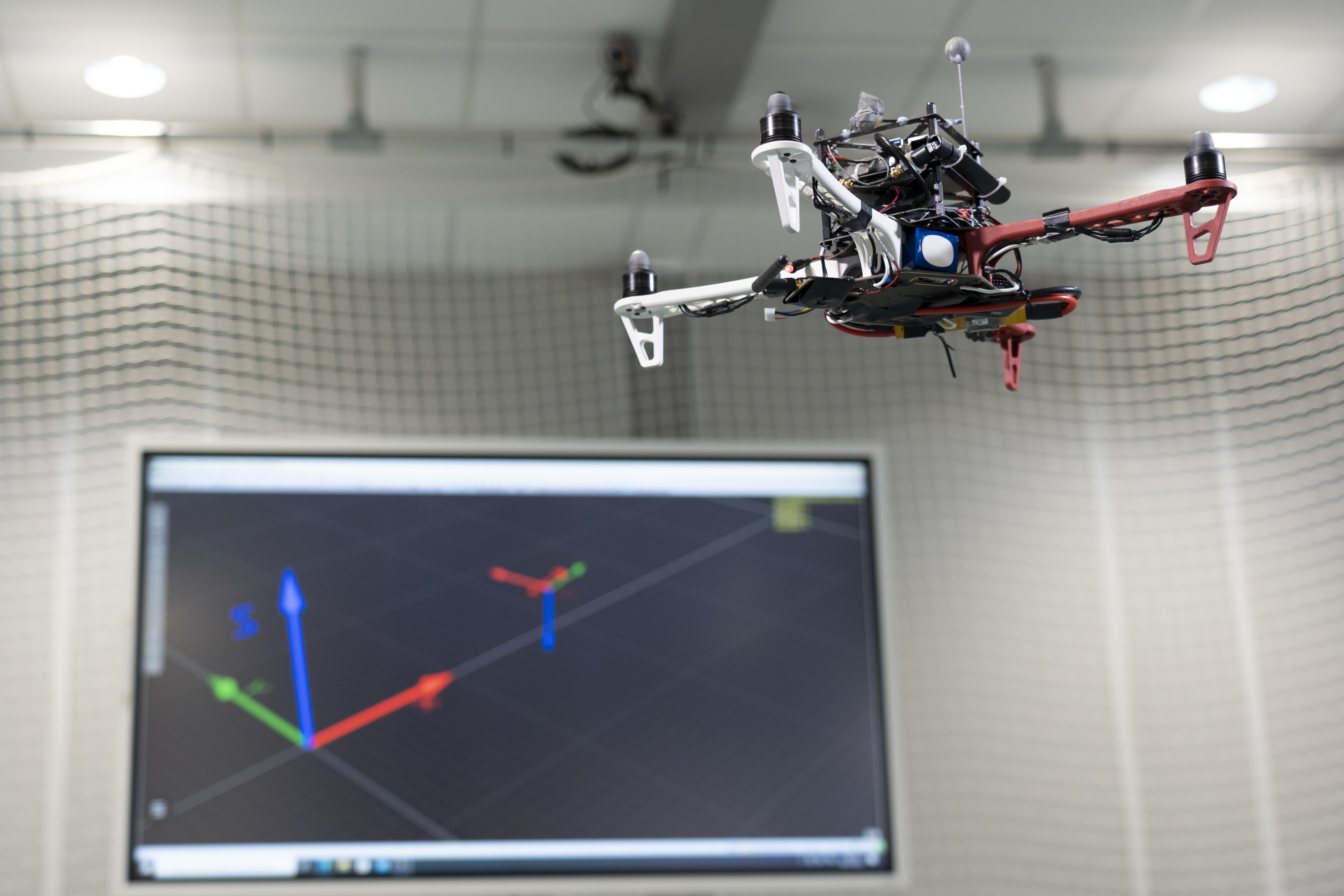

Rainbow is involved in several activities concerning perception and control for single and multiple quadrotor UAVs. To this end, we can exploit two indoor flying arenas. The first one is relatively small (3 m x 5 m x 1.8 m high) and is equipped with 11 Vicon cameras for motion capture. The second one (9 m x 9 m x 2.5 m high) is equipped with 8 Qualisys cameras and is available from January to August; it allows us to fly our drones in a much larger volume than the first arena.

In these arenas, we operate four quadrotors from Mikrokopter GmbH, Germany (see Fig. 5.a), which have been heavily customised by reprogramming from scratch the low-level attitude controller onboard their microcontroller, by equipping each quadrotor with an NVIDIA Jetson TX2 board running Linux Ubuntu and the TeleKyb-3 software based on the genom3 framework developed at LAAS in Toulouse (the middleware used for managing the experiment flows and the communication among the UAVs and the base station), and by adding newly purchased RealSense RGB-D cameras for visual odometry and visual servoing.

To upgrade the platform's hardware, we first set up a new drone architecture based on a DJI F450 frame equipped with a Pixhawk flight controller communicating with a Jetson TX2, itself connected to an Intel RealSense RGB-D camera. As the DJI F450s reached the end of their life, we continued to renew the hardware by designing a new 3D-printed mechanical structure housing a Pixhawk and a Jetson Nano. This new generation of more modular drones has been named Acanthis.

The quadrotor group is used as a robotic platform for testing a number of single and multiple flight control schemes, with special attention to the use of onboard vision as the main sensory modality. The first experiments allowed us to perform visual servoing with ViSP to position the drone with respect to a target.

In 2023, two PhD theses 63, 66 contain experimental results obtained with this platform.

Figure 5: (a) One of our Mikrokopter quadrotors. (b) Three Mikrokopter quadrotors flying in the small arena equipped with a Vicon motion capture system. (c) Our new DJI F450 drone equipped with a Pixhawk board flying in our larger arena equipped with a Qualisys motion capture system.

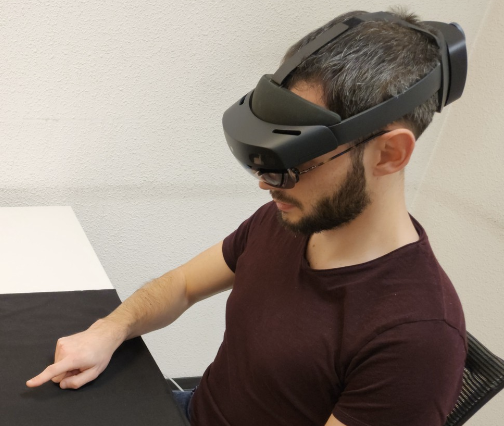

6.2.5 Interactive interfaces and systems

Participants: Claudio Pacchierotti, Paolo Robuffo Giordano, Maud Marchal, Marie Babel, Fabien Spindler [contact].

Interactive technologies enable communication between artificial systems and human users. Examples of such technologies are haptic interfaces and virtual reality headsets.

Various haptic devices are used to validate our research in, e.g., shared control and extended reality. We design wearable haptic devices to provide feedback to users, and we also use some off-the-shelf devices. We have a Virtuose 6D device from Haption (see Fig. 6.a), which is used as the master device in many of our shared control activities. An Omega 6 from Force Dimension (see Fig. 6.b) and devices from Ultrahaptics (see Fig. 6.c) complete this platform, which can be coupled to the other robotic platforms.

Similarly, to increase the immersiveness of virtual scenarios, we use virtual and augmented reality headsets: HTC Vive headsets for VR and Microsoft HoloLens for AR interactions (see Fig. 6.d).

In 2023, this platform was used to obtain experimental results presented in 13 papers 6, 7, 8, 15, 17, 18, 21, 22, 24, 25, 27, 45, 49 and 1 PhD thesis 64.

Figure 6: (a) Our Virtuose 6D haptic device. (b) Our Omega 6 haptic device. (c) The Ultraleap STRATOS device. (d) Our Microsoft HoloLens 2 AR headset.

6.2.6 Portable immersive room

Participants: François Pasteau, Fabien Grzeskowiak, Marie Babel [contact].

To validate our research on assistive robotics and its applications in virtual conditions, we recently acquired a portable immersive room designed to be easily deployed in different rehabilitation facilities in order to conduct clinical trials. The system has been designed by the Trinoma company and funded by the Interreg ADAPT project.

In 2023, this platform was used to obtain experimental results presented in 1 paper 42.

(a) and (b): A person sitting on the wheelchair simulator placed in the portable immersive room.

7 New results

7.1 Optimal and Uncertainty-Aware Sensing

7.1.1 Trajectory Generation for Optimal State Estimation

Participants: Nicola De Carli, Lorenzo Balandi, Paolo Robuffo Giordano.

This activity addresses the general problem of active sensing, where the goal is to characterize and generate optimal trajectories for single or multiple robotic systems that maximize the amount of information gathered by (few) noisy outputs (i.e., sensor readings) while at the same time reducing the negative effects of the process/actuation noise. We have previously developed a general framework for solving the active sensing problem online by continuously replanning an optimal trajectory that maximizes a suitable norm of the Constructibility Gramian (CG) 67. The following works have extended or leveraged this formulation in several directions. Part of these activities is in the scope of the ANR project MULTISHARED (Sect. 9.3.10).

In 36 we have considered the active sensing problem for formations of drones measuring relative bearings. To be able to localize their relative positions from bearing measurements, the drone formation must satisfy specific Persistency of Excitation (PE) conditions. In this work we have proposed a solution that meets these PE conditions by maximizing the information collected from onboard cameras via a distributed gradient-based algorithm. We have also shown how to account for an additional task of interest (e.g., formation control) besides the active sensing task, thanks to Quadratic Program-based control with Control Lyapunov Functions (CLFs).

In 4 we have instead considered the problem of persistently monitoring a set of moving targets using a team of aerial vehicles, each equipped with a camera with limited range and Field of View (FoV) providing bearing measurements. The aerial vehicles implement an Information Consensus Filter (ICF) to estimate the state of the target(s), and the ICF is proven to be uniformly globally exponentially stable under a PE condition. The work then proposes a distributed control scheme that maintains a prescribed minimum PE level so as to ensure filter convergence, while allowing the agents to perform additional tasks of interest and maintaining a collective observability of the target(s).
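For reference, in its standard form for a linear time-varying system $\dot x = A(t)x$, $y = C(t)x + \nu$, the Constructibility Gramian over $[t_0, t]$ reads as below, where $\Phi(\tau, t)$ is the state transition matrix mapping $x(t)$ to $x(\tau)$ and $W$ is a weight, typically the inverse of the measurement noise covariance. This is a textbook definition given as a hedged reminder; the nonlinear, noise-aware formulation of 67 is not reproduced here. A common choice of "suitable norm" is the smallest eigenvalue $\lambda_{\min}(G_c)$, since a larger value bounds the worst-case uncertainty on the estimate of the current state $x(t)$.

```latex
G_c(t_0, t) = \int_{t_0}^{t} \Phi^{\top}(\tau, t)\, C^{\top}(\tau)\, W(\tau)\, C(\tau)\, \Phi(\tau, t)\, d\tau
```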
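To illustrate why persistency of excitation matters for bearing-based localization, the simplified 2D sketch below (not the distributed algorithm of 36) accumulates the unweighted information matrix Σₖ (I - bₖ bₖᵀ) of unit bearings bₖ collected by an observer looking at a fixed point. Its smallest eigenvalue stays at zero when the observer moves along the line of sight (all bearings identical) and becomes positive once the bearings vary, which is the kind of excitation an active sensing controller tries to maintain. Positions are illustrative.

```python
import math


def bearing(observer, target):
    """Unit vector from the observer to the target."""
    dx, dy = target[0] - observer[0], target[1] - observer[1]
    n = math.hypot(dx, dy)
    return dx / n, dy / n


def bearing_information(observers, target):
    """Entries (a, b, c) of the symmetric 2x2 matrix sum_k (I - b_k b_k^T)."""
    a = b = c = 0.0
    for o in observers:
        bx, by = bearing(o, target)
        a += 1.0 - bx * bx
        b += -bx * by
        c += 1.0 - by * by
    return a, b, c


def min_eigenvalue(a, b, c):
    """Smallest eigenvalue of [[a, b], [b, c]]."""
    return 0.5 * (a + c) - math.sqrt((0.5 * (a - c)) ** 2 + b * b)
```

For a target at (5, 0), observers at (0, 0), (1, 0), (2, 0) give a zero smallest eigenvalue (the range along the line of sight is unobservable), while observers at (0, 0), (0, 1), (0, 2) give a strictly positive one.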

7.1.2 Visual SLAM

Participants: Mathieu Gonzalez, Eric Marchand.

Most classical SLAM systems rely on the static scene assumption, which limits their applicability in real-world scenarios. Recent SLAM frameworks have been proposed to simultaneously track the camera and moving objects. However, they are often unable to estimate the canonical pose of the objects and exhibit low object tracking accuracy. To solve this problem, we proposed this year TwistSLAM++, a semantic, dynamic SLAM system that fuses stereo images and LiDAR information. Using semantic information, we track potentially moving objects and associate them with 3D object detections in LiDAR scans to obtain their pose and size. Then, we perform registration on consecutive object scans to refine the object pose estimation. Finally, object scans are used to estimate the shape of the object and to constrain map points to lie on the estimated surface within the bundle adjustment. We show on classical benchmarks that this fusion approach based on multimodal information improves the accuracy of object tracking. This work 41 was done in cooperation with the IRT B-Com.
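Scan registration of this kind typically relies on ICP-style alignment. As a simplified illustration only (2D, known point correspondences, no outlier handling, none of which is claimed to match TwistSLAM++), the sketch below computes the closed-form least-squares rigid transform between two matched point sets, which is the core step of point-to-point ICP.

```python
import math


def rigid_align_2d(src, dst):
    """Least-squares rotation angle and translation mapping src points onto dst."""
    n = len(src)
    sx = sum(p[0] for p in src) / n
    sy = sum(p[1] for p in src) / n
    dx = sum(q[0] for q in dst) / n
    dy = sum(q[1] for q in dst) / n
    cross = dot = 0.0
    for (px, py), (qx, qy) in zip(src, dst):
        px, py, qx, qy = px - sx, py - sy, qx - dx, qy - dy  # center both sets
        cross += px * qy - py * qx
        dot += px * qx + py * qy
    theta = math.atan2(cross, dot)  # angle maximizing sum of q . R(theta) p
    c, s = math.cos(theta), math.sin(theta)
    # Translation aligns the rotated source centroid with the target centroid.
    tx = dx - (c * sx - s * sy)
    ty = dy - (s * sx + c * sy)
    return theta, (tx, ty)
```

In a full ICP loop, correspondences would be re-estimated (e.g. by nearest neighbours) and this step repeated until convergence.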

7.2 Advanced Sensor-Based Control

7.2.1 Trajectory Generation for Minimum Closed-Loop State Sensitivity

Participants: Pascal Brault, Ali Srour, Paolo Robuffo Giordano.

The goal of this research activity is to propose a new point of view in addressing the control of robots under parametric uncertainties: rather than striving to design a sophisticated controller with some robustness guarantees for a specific system, we propose to attain robustness (for any choice of the control action) by suitably shaping the reference motion trajectory so as to minimize the state sensitivity to parameter uncertainty of the resulting closed-loop system. This activity is in the scope of the ANR project CAMP (Sect. 9.3.9).

Over the past years, we have developed the notions of closed-loop “state sensitivity” and “input sensitivity” metrics. These allow formulating a trajectory optimization problem whose solution is a reference trajectory that, when the model parameters are perturbed, requires minimal changes of the control inputs and yields minimal deviations of the final tracking error. During the year we have also worked on several additional extensions that resulted in the following contributions:

- together with Ali Srour, we have extended in 57 the trajectory optimization problem to also consider the control gains as optimization variables. We could then generate minimally-sensitive trajectories that further reduce the state sensitivity by a proper choice of the control gains. The idea has been tested on three case studies involving a 3D quadrotor with a Lee (geometric) controller, a near-hovering (NH) controller and a sliding mode controller. The results of a large-scale simulation campaign showed an interesting pattern in the approach to the final hovering state, as well as unexpected trends in the optimized gains (e.g., from a statistical point of view, the “derivative” gains of the position loop were often reduced w.r.t. their initial values, whereas the proportional gains were increased on average). These findings motivate us to keep working on the idea of jointly optimizing the motion and control gains for attaining robustness.

- together with Andrea Pupa and Cristian Secchi (Univ. Modena and Reggio Emilia, Italy), we have proposed in 51 a trajectory optimization formulation for determining the optimal energy tank initialization that allows implementing passive actions while being robust to model uncertainties. Energy tanks have gained popularity in the robotics and control communities over the last years, since they are a powerful tool to enforce passivity (and, thus, input/output stability) of a controlled robot, possibly interacting with uncertain environments. However, an open issue when using energy tanks is the choice of the initial tank energy (a free parameter). In this work, we show how this initial energy can be optimally chosen to guarantee the execution of a task also in the presence of model uncertainties, thanks to the use of the state sensitivity (and derived quantities) in the optimization problem.

- together with Simon Wasiela (LAAS-CNRS), we have proposed in 59 a global control-aware motion planner that optimizes the state sensitivity metric and produces collision-free reference motions that are robust against parametric uncertainties for a large class of complex dynamical systems. In particular, we have proposed an RRT*-based planner called SAMP that uses an appropriate steering method to first compute a (near) time-optimal and kinodynamically feasible trajectory, which is then locally deformed to improve robustness and decrease its sensitivity to uncertainties. The evaluation performed on planar and full-3D quadrotor UAV models shows that SAMP produces robust, low-sensitivity solutions with much higher performance than a planner directly optimizing the sensitivity.
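To make the idea of closed-loop state sensitivity concrete, the toy sketch below (an illustration under stated assumptions, not the formulation of the cited works) simulates a 1-DoF mass tracking a reference with a feedforward-plus-PD controller designed for a nominal mass, and estimates the peak of |∂x(t)/∂m| by central finite differences. The cited works integrate sensitivity dynamics along the trajectory instead; the gains, time step and references below are hypothetical.

```python
import math


def simulate(m_true, ref, m_nom=1.0, kp=25.0, kd=10.0, T=2.0, dt=1e-3):
    """Positions of a 1-DoF mass m_true driven by a controller designed for m_nom."""
    x, v, traj = 0.0, 0.0, []
    for k in range(int(round(T / dt))):
        xr, vr, ar = ref(k * dt)
        # Feedforward + PD law computed with the nominal (possibly wrong) mass.
        u = m_nom * (ar + kd * (vr - v) + kp * (xr - x))
        x, v = x + v * dt, v + (u / m_true) * dt  # explicit Euler step
        traj.append(x)
    return traj


def peak_sensitivity(ref, m_nom=1.0, h=1e-4):
    """max_t |dx(t)/dm| at m = m_nom, estimated by central finite differences."""
    plus = simulate(m_nom + h, ref, m_nom=m_nom)
    minus = simulate(m_nom - h, ref, m_nom=m_nom)
    return max(abs(p - q) for p, q in zip(plus, minus)) / (2.0 * h)


def cosine_ref(amplitude, omega):
    """Reference x_r(t) = A (1 - cos(w t)) with its velocity and acceleration."""
    return lambda t: (amplitude * (1.0 - math.cos(omega * t)),
                      amplitude * omega * math.sin(omega * t),
                      amplitude * omega ** 2 * math.cos(omega * t))
```

For this toy controller the sensitivity obeys s̈ + k_d ṡ + k_p s = -a_r/m, i.e. it is driven by the reference acceleration: `peak_sensitivity(cosine_ref(0.5, 6.0))` is much larger than `peak_sensitivity(cosine_ref(0.5, 1.0))`, which is why shaping the reference, rather than the controller, can already reduce it.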
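For the energy-tank contribution 51, the role of the initial tank energy can be illustrated with simple bookkeeping: with tank energy E_{k+1} = E_k - P_k dt, where P_k is the power the tank must supply to the non-passive action (negative when energy is stored back), the smallest admissible initial energy is the lower bound E_min plus the largest cumulative drain. The sketch below computes it, plus a worst case over a few sampled power profiles as a crude stand-in for model uncertainty. This scenario sampling is a simplification made for the sketch, not the sensitivity-based optimization of 51, and `e_min` is a hypothetical threshold.

```python
def min_initial_energy(power, dt, e_min=0.01):
    """Smallest E0 such that E_k = E0 - sum_{j<k} P_j dt never drops below e_min."""
    drained, worst = 0.0, 0.0
    for p in power:
        drained += p * dt  # cumulative energy taken from the tank
        worst = max(worst, drained)
    return e_min + worst


def robust_initial_energy(power_profiles, dt, e_min=0.01):
    """Worst case over a set of power profiles, e.g. one per sampled model parameter."""
    return max(min_initial_energy(p, dt, e_min) for p in power_profiles)
```

A too small initial energy would empty the tank and force the non-passive action to stop before the task ends; a too large one weakens the passivity safeguard, hence the interest of choosing it optimally.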

7.2.2 UWB beacon navigation of assisted power wheelchair

Participants: Vincent Drevelle, Marie Babel, François Pasteau, Theo Le Terrier.

New sensors based on ultra-wideband (UWB) radio technology are emerging for indoor localization and object tracking applications. Unlike vision, these sensors are low-cost, non-intrusive and easy to adapt on a wheelchair. They measure distances between fixed beacons and mobile sensors. We seek to exploit these UWB sensors for wheelchair navigation, despite the low accuracy of the measurements they provide. The problem here lies in defining an autonomous or shared sensor-based control solution that takes into account the measurement uncertainty of UWB beacons.

Because of multipath or non-line-of-sight propagation, erroneous measurements can perturb radio beacon ranging in cluttered indoor environments. This happens when people, objects or even the wheelchair and its driver block the direct line of sight between the two beacons.

We designed a robust wheelchair positioning method based on an extended Kalman filter with outlier identification and rejection. The method fuses UWB range measurements with a low-cost gyro and motor commands to estimate the orientation and position of the wheelchair. A dataset with various densities of people around the wheelchair driver has been recorded. Preliminary results show decimeter-level planar accuracy in crowded environments.

Based on this pose estimator, autonomous navigation between predefined poses has been demonstrated to practitioners and power wheelchair users during the IH2A boot camp. This will provide the basis for coarse-grained navigation, while the use of sensor-based control and complementary sensors is investigated for precision tasks.
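The outlier rejection step can be illustrated by Mahalanobis (chi-square) gating of each range measurement before the EKF update. The sketch below is a hedged simplification: the state is reduced to the planar position (x, y) with a 2x2 covariance, whereas the described estimator also estimates orientation and fuses gyro and motor commands. The range noise `sigma_r` and the gate 6.63 (about the 99% quantile of a chi-square distribution with one degree of freedom) are assumptions.

```python
import math


def range_update(x, P, beacon, r_meas, sigma_r=0.1, gate=6.63):
    """EKF update of a planar position with one UWB range; returns (x, P, accepted)."""
    dx, dy = x[0] - beacon[0], x[1] - beacon[1]
    r_pred = math.hypot(dx, dy)
    H = (dx / r_pred, dy / r_pred)  # Jacobian of the range w.r.t. (x, y)
    PHt = (P[0][0] * H[0] + P[0][1] * H[1], P[1][0] * H[0] + P[1][1] * H[1])
    S = H[0] * PHt[0] + H[1] * PHt[1] + sigma_r ** 2  # innovation variance
    nu = r_meas - r_pred  # innovation
    if nu * nu / S > gate:
        return x, P, False  # likely NLOS/multipath outlier: measurement rejected
    K = (PHt[0] / S, PHt[1] / S)  # Kalman gain
    x_new = (x[0] + K[0] * nu, x[1] + K[1] * nu)
    # P - K S K^T, which equals (I - K H) P for this gain
    P_new = [[P[i][j] - K[i] * S * K[j] for j in range(2)] for i in range(2)]
    return x_new, P_new, True
```

Gating on the normalized innovation, rather than on the raw range error, automatically tightens the test when the filter is confident and relaxes it when the position is uncertain.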

7.2.3 Imitation Learning for Visual Servoing

Participant: Paolo Robuffo Giordano.

One active research direction in robotics is to make robots adaptive and easy to use, also by unskilled operators. In this context, the framework of dynamical system-based imitation learning plays an important role: it allows realizing stable and complex robotic tasks without explicitly coding them, thus facilitating the use of the robot. However, the adaptation capabilities of dynamical systems have not been fully exploited due to the lack of closed-loop implementations making use of visual feedback. The integration of visual information provides higher flexibility to cope with environmental changes. To this end, in 50 we have presented a dynamical system-based imitation learning approach for visual servoing based on the large projection task priority formulation. The proposed scheme enables complex and stable visual tasks, as demonstrated by a simulation analysis and experiments with a robotic manipulator.

7.2.4 Visual servo of the orientation of an Earth observation satellite

Participants: Maxime Robic, Eric Marchand, François Chaumette.

This study is done in the scope of the BPI Lichie project (see 9.3.8). Its goal is to control the orientation of a satellite to track particular objects on the Earth. In a first part, we designed an image-based control scheme able to compensate for the satellite, Earth, and potential object motions. We are currently considering how to avoid motion-blur effects in the images acquired by the camera.

7.2.5 Multi-sensor-based control for accurate and safe assembly

Participants: John Thomas, François Pasteau, François Chaumette.

This study is also done in the scope of the BPI Lichie project (see 9.3.8). Its goal is to design sensor-based control strategies coupling vision and proximetry data for ensuring precise positioning while avoiding obstacles in dense environments. The targeted application is the assembly of satellite parts.

In the first part of this study, we designed a general ring of proximetry sensors and modeled the system so that tasks such as plane-to-plane positioning can be achieved. The stability analysis of this system has been presented in 31, while its expression using the screw formalism has been presented in 58.
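As a hedged illustration of how a ring of proximity sensors can yield plane-to-plane features, the sketch below assumes a hypothetical ring of N ≥ 3 range sensors evenly placed on a circle of radius R in the tool plane, all measuring along the tool z axis. A least-squares fit of the plane z = d + a x + b y to the N readings gives the distance d at the ring center and the two slopes of the surface. The cited works model the sensors and the system in their own way; this only conveys the geometric intuition.

```python
import math


def ring_plane_features(readings, radius):
    """Fit z = d + a*x + b*y to readings of N >= 3 evenly spaced sensors on a ring.

    For evenly spaced sensors the regressors 1, x, y are orthogonal, so the
    least-squares normal equations decouple into three scalar divisions.
    Returns d and the inclination angles of the surface along the tool x and y axes.
    """
    n = len(readings)
    xs = [radius * math.cos(2.0 * math.pi * i / n) for i in range(n)]
    ys = [radius * math.sin(2.0 * math.pi * i / n) for i in range(n)]
    d = sum(readings) / n
    a = sum(x * z for x, z in zip(xs, readings)) / sum(x * x for x in xs)
    b = sum(y * z for y, z in zip(ys, readings)) / sum(y * y for y in ys)
    return d, math.atan(a), math.atan(b)
```

Plane-to-plane positioning then amounts to regulating d to a desired distance and both angles to zero.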

7.2.6 Sensor-based Control for Cable-Driven Parallel Robots

Participant: François Chaumette.

This study is done through the Ph.D. of Thomas Rousseau at IRT Jules Verne in collaboration with Stéphane Caro (LS2N) (see 8.2.2). We use a combination of proximetry sensors and an eye-to-hand vision sensor so that the platform of a cable-driven parallel robot is able to inspect large parts 53. The stability analysis of this system has been presented in 54.

7.2.7 Visual Exploration of an Indoor Environment

Participants: Thibault Noël, Eric Marchand, François Chaumette.

This study is done in collaboration with the Creative company in Rennes (see Section 7.2.7). It is devoted to the exploration of indoor environments by a mobile robot for a complete and accurate reconstruction of the environment.

In this context, we proposed a novel roadmap construction method in unknown environments, which relies on the extraction of the Hamilton-Jacobi skeleton of the free space. This skeleton is used to construct a graph of free-space bubbles, effectively compressing the skeleton information in a sparse data structure but retaining its topology. The bubbles also enforce safety directly in the roadmap structure. We first demonstrate the relevance of this approach for standard path-planning tasks. We also propose a frontiers-based exploration strategy able to autonomously and safely build a complete 2D map of the environment 23.
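The frontier notion used by such exploration strategies can be sketched on an occupancy grid: a frontier cell is a known-free cell with at least one unknown 4-neighbour. The grid encoding below (0 free, 1 occupied, -1 unknown) is an assumption, and the sketch does not reproduce the skeleton- and bubble-based roadmap of 23.

```python
FREE, OCCUPIED, UNKNOWN = 0, 1, -1


def frontier_cells(grid):
    """Return the (row, col) of free cells that touch at least one unknown cell."""
    rows, cols = len(grid), len(grid[0])
    frontiers = []
    for r in range(rows):
        for c in range(cols):
            if grid[r][c] != FREE:
                continue
            for dr, dc in ((1, 0), (-1, 0), (0, 1), (0, -1)):
                nr, nc = r + dr, c + dc
                if 0 <= nr < rows and 0 <= nc < cols and grid[nr][nc] == UNKNOWN:
                    frontiers.append((r, c))
                    break  # one unknown neighbour is enough
    return frontiers
```

An exploration loop would typically cluster these cells, select a reachable frontier as the next goal, and stop when no frontier remains, i.e. when the 2D map is complete.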

7.2.8 Shape servoing of soft objects using Fourier descriptors

Participants: Fouad Makiyeh, Alexandre Krupa, Maud Marchal, François Chaumette.

This study takes place in the context of the GentleMAN project (see Section 9.1.1). In 47, we proposed a shape visual servoing approach to deform a soft object toward a desired 3D shape using a limited number of handling points. For this purpose, the shape of the deformable object is represented using Fourier descriptors. We derived the analytical relation that provides the variation of the Fourier coefficients as a function of the movements of the handling points by considering a mass-spring model (MSM) and a control law was then designed from this relation. Since the MSM provides an approximation of the object behavior, which in practice can lead to a drift between the object and its model, an online realignment of the model with the real object is performed by tracking its surface from data provided by a remote RGB-D camera. Simulation results validated the approach for the case where many points interact on a 2D soft object while experimental results obtained with two robotic arms demonstrated the autonomous shaping of a 3D soft object.
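For a planar closed contour, Fourier descriptors are the discrete Fourier coefficients of the boundary points written as complex numbers. The sketch below computes them for a sampled contour and resamples the contour from a truncated set; it is a 2D simplification (the cited work handles 3D shapes and derives how the coefficients vary with the handling-point motions through a mass-spring model), and keeping harmonics up to `k_max` is an illustrative choice.

```python
import cmath
import math


def fourier_descriptors(contour, k_max):
    """Coefficients c_k, k in [-k_max, k_max], of a closed contour sampled at N points.

    c_k = (1/N) * sum_n z_n * exp(-2j*pi*k*n/N), with z_n = x_n + j*y_n.
    """
    n = len(contour)
    z = [complex(x, y) for x, y in contour]
    return {k: sum(z[i] * cmath.exp(-2j * math.pi * k * i / n) for i in range(n)) / n
            for k in range(-k_max, k_max + 1)}


def reconstruct(coeffs, n):
    """Resample the contour at n points from its (truncated) descriptors."""
    pts = []
    for i in range(n):
        z = sum(c * cmath.exp(2j * math.pi * k * i / n) for k, c in coeffs.items())
        pts.append((z.real, z.imag))
    return pts
```

For a circle of radius 2 centred at (1, 1), only c₀ = 1 + 1j (the centroid) and c₁ = 2 (the radius) are non-zero; low-order coefficients thus give a compact, smooth shape representation that a controller can regulate.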

7.2.9 Constraint-based simulation of passive suction cups

Participants: Maud Marchal.

In 5, we proposed a physics-based model of the suction phenomenon for simulating deformable objects such as suction cups. Our model uses a constraint-based formulation to simulate the variations of pressure inside suction cups. The internal pressures are represented as pressure constraints, which are coupled with anti-interpenetration and friction constraints. Furthermore, our method is able to detect multiple air cavities using information from collision detection. We solve the pressure constraints based on the ideal gas law while considering several cavity states. We test our model with a number of scenarios reflecting a variety of uses, for instance a spring-loaded jumping toy, a manipulator performing a pick-and-place task, and an octopus tentacle grasping a soda can. We also evaluate the ability of our model to reproduce the physics of suction cups of varying shapes, lifting objects of different masses, and sliding on a slippery surface. The results show promise for various applications such as simulation in soft robotics and computer animation.
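The role of the ideal gas law can be illustrated with a back-of-the-envelope isothermal calculation (Boyle's law, pV constant) for a sealed cavity whose volume grows when the cup is pulled: the internal pressure drops, and the pressure difference times the contact area gives the holding force. The volumes and area below are hypothetical, and the constraint-based solver of 5 is not reproduced.

```python
P_ATM = 101325.0  # atmospheric pressure, Pa


def cavity_pressure(v_sealed, v_now, p_sealed=P_ATM):
    """Isothermal ideal gas: p * V stays constant once the cavity is sealed."""
    return p_sealed * v_sealed / v_now


def holding_force(v_sealed, v_now, area):
    """Net force (N) pressing the cup onto the surface: (p_atm - p_in) * area."""
    return (P_ATM - cavity_pressure(v_sealed, v_now)) * area
```

For example, a cavity sealed at 2 cm³ and stretched to 2.5 cm³ drops to about 81 kPa, and over a 10 cm² contact area this yields roughly 20 N of holding force.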

7.2.10 Deep visual servoing

Participants: Eric Marchand, Samuel Felton.

We proposed a new visual servoing method that controls a robot's motion in a latent space. We aim to combine the best properties of two previously proposed servoing methods: the accuracy of photometric methods such as Direct Visual Servoing (DVS), and the behavior and convergence of pose-based visual servoing (PBVS). Photometric methods suffer from a limited convergence area due to a highly non-linear cost function, while PBVS requires estimating the camera pose, which may introduce noise and incurs a loss of accuracy. Our approach relies on shaping (with metric learning) a latent space in which the representations of camera poses and the embeddings of their respective images are tied together. By leveraging the multimodal aspect of this shared space, our control law minimizes the difference between latent image representations thanks to information obtained from a set of pose embeddings 38.

7.2.11 Multi-Robot Formation Control and Localization

Participant: Paolo Robuffo Giordano, Claudio Pacchierotti, Maxime Bernard, Nicola De Carli, Lorenzo Balandi, Esteban Restrepo, Antonio Marino, Francesca Pagano.

Systems composed by multiple robots are useful in several applications where complex tasks need to be performed. Examples range from target tracking, to search and rescue operations and to load transportation. We have been very active over the last years on the topics of coordination, estimation, localization and control of multiple robots under the possible guidance of a human operator (see, e.g., Sect. 9.3.10). During this year we have produced the contributions discussed in Sect. 7.1.1 (which consider “active sensing” tasks or constraints) as well as the following ones focused on other aspects of multi-robotics:

- in 34 we have proposed a decentralized connectivity maintenance algorithm for controlling a group of quadrotor UAVs with limited field of view (FOV) and not sharing a common reference frame for collectively expressing measurements and commands. This is in contrast to the vast majority of previous works on this topic which, instead, make the (simplifying) assumptions of omnidirectional sensing and presence of a common shared frame. This goal is achieved by designing a gradient-based connectivity-maintenance controller able to take into account the presence of a limited FOV. The controller needs availability of the UAVs relative orientations which are not assumed to be directly measurable. We then also proposed a novel decentralized estimator of the UAV relative orientation for correctly implementing the connectivity-maintenance action. The framework is validated in realistic simulations involving a human operator in control of 6 UAVs

- in 28 we consider the problem of increasing the robustness of a multi-agent network to node failure or removal. This is obtained by proposing a control approach able to maintain biconnectivity of an initially biconnected graph. Remarkably, if the graph is not initially biconnected or if the property is lost after a node removal or failure, our approach is also able to render the graph biconnected after a time instant which can be tuned by the user. The proposed control algorithm is completely distributed using only locally available information and requires the estimation of a single global parameter akin to existing connectivity maintenance algorithms in the literature. Numerical simulations illustrate the effectiveness of the approach

- in 62 we have addressed the problem of designing a control algorithm for multi-agent nonholonomic vehicles, to make them adopt a formation around a non pre-specified point on the plane, and with common but non pre-imposed orientation. We consider the rendezvous problem for second-order (force-controlled) nonholonomic systems with time-varying measurement delays for which velocity measurements are not available. The novelty and most appealing feature of the approach is that it is physics-based; it relies on the design of distributed dynamic controllers that may be assimilated to second-order mechanical systems. The consensus task is achieved by making the controllers, not the vehicles themselves directly, achieve consensus. Then, the vehicles are steered into a formation by virtue of fictitious mechanical couplings with their respective controllers. We cover different settings of increasing technical difficulty, from consensus formation control of second-order integrators to second-order nonholonomic vehicles and in scenarii including both state- and output-feedback control. The approach is tested in a realistic case study in which the vehicles communicate over a common WiFi network that introduces time-varying delays.

- in 29 we have addressed the problem of simultaneous topology identification and synchronization of a complex dynamical network with directed interconnections. In many situations of complex dynamical networks the topology of the interconnections between the nodes (agents) is unknown, therefore the network is not directly accessible to control or analysis. We studied the graph identification problem using an edge-agreement framework that allows us to write the system in a form usually found in the literature of adaptive control. Based on this representation we could propose a new algorithm where the dynamics of an auxiliary network are designed to be -persistently exciting, allowing us to identify the unknown topology while guaranteeing that the synchronization behavior of the system is achieved (preserved). This result distinguishes itself from the the previous works in that we were able to provide strong stability results for the identification errors. Such characterization of the error trajectories is not only stronger than the convergence results generally proposed in the previous literature, but we believe that it is also an important property that may allow to apply these results to more complex systems and applications. This effectiveness of this approach was also demonstrated with a numerical example.

- We are also considering a different point of view, involving the use of machine learning to ease the computational and communication burden of (more classical) model-based formation control and estimation laws. In this direction, we have submitted to the IEEE Transactions on Control of Network Systems a work in which we study the conditions for input-to-state stability (ISS) and incremental input-to-state stability (δISS) of Gated Graph Neural Networks (GGNNs). We show that this recurrent version of Graph Neural Networks (GNNs) can be expressed as a dynamical distributed system and, as a consequence, can be analysed using model-based techniques to assess its stability and robustness properties. The resulting stability criteria can then be exploited as constraints during training to enforce the internal stability of the neural network. We illustrate these findings in two distributed control examples, flocking and multi-robot motion control, showing that the use of these conditions increases the performance and robustness of the gated GNNs.
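As a toy illustration of the physics-based idea behind the rendezvous work in 62 above (consensus is reached by the controllers, and the vehicles are dragged along by virtual springs), the sketch below simulates delay-free, fully actuated planar double integrators under state feedback. It is a simplified stand-in, not the controller of 62: it ignores nonholonomic constraints, delays and the absence of velocity measurements, and the function name simulate_consensus and the gains k_p, k_c, d_q, d_th are hypothetical. Each vehicle q_i is coupled to its controller state θ_i by a spring of stiffness k_p, and the controller states are coupled to one another along the edges of an undirected graph.

```python
import numpy as np


def simulate_consensus(q0, adjacency, k_p=1.0, k_c=1.0, d_q=2.0, d_th=2.0,
                       dt=0.01, steps=6000):
    """Toy, delay-free rendezvous of planar double integrators (hypothetical gains).

    Vehicle i (position q_i) is tied to its controller state theta_i by a virtual
    spring k_p; controller states are tied to neighbouring controller states by
    springs k_c along the edges of an undirected graph. Both are damped.
    """
    q = np.array(q0, dtype=float)       # vehicle positions, shape (n, 2)
    theta = q.copy()                    # controller states start at the vehicles
    dq = np.zeros_like(q)               # vehicle velocities (state feedback)
    dtheta = np.zeros_like(q)           # controller velocities
    A = np.asarray(adjacency, dtype=float)
    L = np.diag(A.sum(axis=1)) - A      # graph Laplacian of the controller network

    for _ in range(steps):
        # Vehicle: force from the spring to its own controller, plus damping.
        ddq = -k_p * (q - theta) - d_q * dq
        # Controller: spring to its vehicle, springs to neighbouring controllers, damping.
        ddtheta = -k_p * (theta - q) - k_c * (L @ theta) - d_th * dtheta
        # Semi-implicit Euler: update velocities first, then positions.
        dq += dt * ddq
        dtheta += dt * ddtheta
        q += dt * dq
        theta += dt * dtheta
    return q


if __name__ == "__main__":
    ring = [[0, 1, 0, 1], [1, 0, 1, 0], [0, 1, 0, 1], [1, 0, 1, 0]]
    q0 = [[0.0, 0.0], [4.0, 1.0], [3.0, 5.0], [-2.0, 3.0]]
    print(simulate_consensus(q0, ring))  # every row close to [1.25, 2.25]
```

Because the closed loop behaves like a damped network of masses and springs, its energy decreases until every spring is relaxed, i.e. q_i = θ_i and all controller states agree. With zero initial velocities and equal damping, the total momentum stays zero, so the common point is the initial centroid of the vehicles.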
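The role of excitation in the topology identification problem of 29 above can be seen in a much simpler, offline setting. The sketch below is not the adaptive edge-agreement scheme of 29: it is a swapped-in batch least-squares fit, assuming noise-free samples of the node states, their derivatives and the inputs, for a directed network ẋ_i = Σ_{j≠i} a_ij (x_j - x_i) + u_i. The unknown weights a_ij enter linearly, and a random input u (a hypothetical choice of exciting signal) keeps the stacked regressor full column rank, which plays the role that persistent excitation plays in the adaptive setting. The function name identify_topology is illustrative.

```python
import numpy as np


def identify_topology(A_true, dt=0.01, steps=2000, seed=0):
    """Recover the weights a_ij of x_i' = sum_j a_ij (x_j - x_i) + u_i by batch
    least squares, assuming noise-free samples of x, x' and u (illustration only)."""
    n = A_true.shape[0]
    rng = np.random.default_rng(seed)
    edges = [(i, j) for i in range(n) for j in range(n) if i != j]  # candidate edges
    x = rng.normal(size=n)
    rows, targets = [], []
    for _ in range(steps):
        u = rng.normal(size=n)  # exciting input (hypothetical choice)
        # Network dynamics: sum_j a_ij x_j - (sum_j a_ij) x_i + u_i.
        xdot = A_true @ x - A_true.sum(axis=1) * x + u
        # Regressor: weight a_ij multiplies (x_j - x_i) in the equation of node i.
        phi = np.zeros((n, len(edges)))
        for k, (i, j) in enumerate(edges):
            phi[i, k] = x[j] - x[i]
        rows.append(phi)
        targets.append(xdot - u)  # part of x' explained by the unknown topology
        x = x + dt * xdot         # forward-Euler step of the true network
    Phi, y = np.vstack(rows), np.concatenate(targets)
    a_hat, *_ = np.linalg.lstsq(Phi, y, rcond=None)
    A_hat = np.zeros((n, n))
    for k, (i, j) in enumerate(edges):
        A_hat[i, j] = a_hat[k]
    return A_hat
```

If the input were switched off, the states would synchronize, the regressor entries (x_j - x_i) would vanish and the weights would no longer be identifiable. This is why identification and synchronization are in tension, and why the excitation has to be designed so that synchronization is preserved.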
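To make concrete the idea of treating a recurrent graph network as a distributed dynamical system, the sketch below uses a hypothetical gated graph layer in which, as a simplifying assumption, the update gate Z depends only on the input (in GGNNs the gates also depend on the state, so the conditions in the submitted work are more involved). Under this assumption each new node state is an entry-wise convex combination of the old state and a tanh candidate, and tanh is 1-Lipschitz. A simple sufficient condition for the incremental part of δISS (two trajectories driven by the same bounded input converge to each other) is then that the linear map X ↦ XW + AXV has induced ∞-norm below 1. The names graph_gated_step and contraction_bound and the weight layout are illustrative.

```python
import numpy as np


def sigmoid(v):
    return 1.0 / (1.0 + np.exp(-v))


def graph_gated_step(X, U_in, A, W, V, Wz, B):
    """One step of a hypothetical gated graph recurrent layer.

    X: (n, h) node states, U_in: (n, m) node inputs, A: (n, n) adjacency.
    Simplifying assumption: the update gate depends on the input only.
    """
    Z = sigmoid(U_in @ Wz)                        # (n, h), entries in (0, 1)
    cand = np.tanh(X @ W + A @ X @ V + U_in @ B)  # own state + neighbour aggregation
    return Z * X + (1.0 - Z) * cand               # entry-wise convex combination


def contraction_bound(A, W, V):
    """Induced infinity-norm of the linear map X -> X W + A X V acting on the
    row-major vectorization of X. If it is < 1 and the gates stay bounded away
    from 1 (true for bounded inputs), two trajectories driven by the same input
    contract toward each other."""
    n = A.shape[0]
    M = np.kron(np.eye(n), W.T) + np.kron(A, V.T)
    return np.abs(M).sum(axis=1).max()
```

A bound of this kind is a differentiable function of the weights, so it is the sort of quantity that could be penalized or constrained during training.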

7.2.12 Warping Character Animations using Visual Motion Features

Participants: Claudio Pacchierotti.

We addressed the challenge of efficiently and automatically adapting existing character animations to new contexts. While some methods exist to edit animations, few consider how the camera viewpoint affects the visual features of the character's motion. The proposed solution introduces viewpoint-dependent motion warping units that make subtle adjustments to animations based on specified visual motion features such as visibility and spatial extent. This technique is framed as a visual servoing problem, in which animation warping is regulated by controlling specific visual features. The results demonstrate the effectiveness of this approach in various motion editing tasks and suggest its potential for enhancing the communication abilities of virtual characters by incorporating attention awareness 16.
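Framing warping as visual servoing means regulating the error e = s(p) - s* between current and desired visual features by iterating Δp = -λ J⁺ e, the classic visual-servoing law with the feature Jacobian J playing the role of the interaction matrix. The sketch below applies that law to a deliberately simple, hypothetical case: the warp parameters p are a 3D offset of the character's joint positions, the camera is a unit-focal pinhole at the origin looking along +Z, and the features are the mean image position and the horizontal spread of the projected joints (a smooth proxy for spatial extent). The Jacobian is estimated by finite differences. None of these choices, nor the function names, are taken from 16.

```python
import numpy as np


def features(points, offset):
    """Hypothetical visual features of a posed character: mean image x, mean
    image y, and horizontal spread (std of image x) under a unit-focal pinhole."""
    P = points + offset
    x, y = P[:, 0] / P[:, 2], P[:, 1] / P[:, 2]
    return np.array([x.mean(), y.mean(), x.std()])


def numerical_jacobian(f, p, eps=1e-6):
    """Forward-difference estimate of df/dp."""
    f0 = f(p)
    J = np.zeros((f0.size, p.size))
    for k in range(p.size):
        dp = np.zeros_like(p)
        dp[k] = eps
        J[:, k] = (f(p + dp) - f0) / eps
    return J


def servo_warp(points, s_star, gain=0.5, iters=50):
    """Drive e = s(p) - s* to zero with the update dp = -gain * pinv(J) @ e."""
    def f(q):
        return features(points, q)

    p = np.zeros(3)  # start from the unwarped animation
    for _ in range(iters):
        e = f(p) - s_star
        p = p - gain * np.linalg.pinv(numerical_jacobian(f, p)) @ e
    return p
```

The gain λ < 1 trades speed for smoothness: near convergence the error shrinks by roughly a factor (1 - λ) per iteration, which keeps the adjustments to the animation subtle.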

7.3 Haptic Cueing for Robotic Applications and Virtual Reality (VR)

7.3.1 Wearable haptics for human-centered robotics and Virtual Reality (VR)

Participants: Claudio Pacchierotti, Maud Marchal, Lisheng Kuang.

We have been working on wearable haptics for several years now, from both the hardware (interface design) and software (rendering and interaction techniques) points of view. This line of research continued this year.

In 17, we present a versatile 4-DoF hand-wearable haptic device tailored for VR. Its adaptable design accommodates various end-effectors, enabling a wide range of tactile experiences. The device comprises a fixed upper body attached to the back of the hand and interchangeable end-effectors on the palm, driven by articulated arms actuated by four servo motors. The work describes its design, its kinematics, and a position control strategy that supports the different end-effectors. We showcase its capabilities through three end-effectors that mimic interactions with rigid, curved, and soft surfaces. A human-subject study in immersive VR confirms its effectiveness in rendering interactions with varied virtual objects, and we discuss additional end-effector designs.

In 12, we conducted experiments to assess various types of haptic feedback during the insertion of a needle into soft tissues by a remote robot. Our human-subject study compared grounded kinesthetic, wearable cutaneous vibrotactile, and wearable cutaneous pressure feedback. Results showed that using different channels (kinesthetic and cutaneous) improved performance in identifying tissue layers compared to using the same commercial kinesthetic interface for both feedback types. Additionally, cutaneous pressure feedback was more effective than vibrotactile feedback in representing the elastic component. Interestingly, our findings suggest that delocalized wearable cutaneous sensations can effectively render the elastic component, emphasizing the importance of multi-channel feedback in simulating needle-tissue interactions.

In 32, we investigated the potential of wearable electrotactile feedback for improving contact information in virtual reality (VR) interactions. We introduced a novel electrotactile rendering method that modulates stimulus intensity based on the interpenetration distance between the user's finger and the virtual surface. In an initial study (N=21), interpenetration feedback notably enhanced contact precision and accuracy compared to visual or no feedback. The precision of the electrotactile calibration emerged as crucial, prompting a second study (N=16) comparing a non-VR keyboard calibration technique with a VR direct-interaction one. While the two techniques yielded similar usability and accuracy, the VR method was notably faster. These findings support wearable electrotactile feedback as an effective substitute for visual feedback, promising improved contact perception in VR interactions.
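The rendering rule in 32 (stimulus intensity modulated by the finger-to-surface interpenetration distance) can be sketched as a clamped linear map between two per-user calibration values. The linear profile, the saturation depth, the function and parameter names, and the unit-free intensity scale are assumptions for illustration; the mapping actually used in 32 may differ.

```python
def electrotactile_intensity(penetration_mm, threshold, max_comfort, saturation_mm=10.0):
    """Map finger-to-surface interpenetration depth (mm) to a stimulus intensity.

    threshold and max_comfort are per-user calibration values (hypothetical
    names); intensity grows linearly with depth and saturates at saturation_mm
    (an illustrative value).
    """
    if penetration_mm <= 0.0:
        return 0.0  # finger outside the virtual surface: no stimulation
    ratio = min(penetration_mm / saturation_mm, 1.0)
    return threshold + ratio * (max_comfort - threshold)
```

For example, with a calibrated detection threshold of 1.0 and a comfort limit of 3.0, a 5 mm interpenetration yields 2.0, halfway between the two. Starting at the detection threshold rather than at zero makes the very first contact perceptible, which is why an accurate calibration matters.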

In 60, we introduced a prototyping toolkit designed to facilitate the creation of wearable haptic interaction experiences. It addresses the difficulty of integrating various smart materials and devices, which expose disparate programming interfaces, into coherent experiences. The toolkit integrates actuators and sensors into the design environment, accommodating humans, robots, and the interactive space. It offers an easy-to-use graphical interface for non-programmers, seamless device extensibility via the Robot Operating System (ROS), and the ability to develop complex actuation sequences. Sensations can be actuated manually or adaptively based on sensor readings, providing a modular and versatile system for diverse haptic interactions.

7.3.2 Affective and persuasive haptics for Virtual Reality (VR)

Participants: Claudio Pacchierotti.

Affective and persuasive haptics in Virtual Reality (VR) constitute an evolving frontier that explores the integration of tactile feedback to evoke emotional responses and influence user behavior within virtual environments. By leveraging haptic technologies, these systems aim to create immersive experiences that go beyond visual and auditory stimuli, introducing touch as a compelling tool for emotional engagement and persuasion.

In 44, we investigated promoting positive social interactions in VR by enhancing empathy among users through affective wearable haptic feedback. Our study used a virtual meeting scenario in which a human user interacts with virtual agents and experiences the changes in stress level of a virtual agent who is giving a presentation. Using compression belts and vibrators that mimic the presenter's breathing and heart rate, we conducted a user study comparing “sympathetic” haptic feedback to an “indifferent” one. Although results varied among users, sympathetic wearable haptic rendering was generally preferred and enhanced empathy and the perceived connection to the presenter. These findings advocate for integrating affective haptics in social VR applications, particularly to foster positive relationships between users.

In 55, we investigated the impact of tactile feedback synchronized with speech on social interactions in Virtual Reality (VR). Across two experiments, participants engaged in immersive VR scenarios involving verbal communication with virtual agents. In the first, augmenting the speech of one virtual agent with vibrotactile feedback enhanced co-presence, persuasiveness, and perceived leadership. In the second, augmenting the participants' own speech with vibrotactile feedback led to heightened co-presence and self-perceived persuasiveness. These findings underscore the potential of haptic feedback to augment social interactions in VR, with broader applications in contexts where verbal communication is pivotal.

In 52, we investigated the impact of gaze behavior on social interactions in virtual reality. In a within-subject experiment involving 21 users navigating a virtual street with idle or moving crowds of agents, we manipulated the agents' gaze (averted, directed, shifting). Results revealed that the stare-in-the-crowd effect is preserved in dynamic scenarios, influencing users' gaze and social anxiety. Directed gazes affected gaze interaction and proximity behaviors, while locomotion remained unchanged. These findings underscore the significance of virtual agents' gaze in VR environments and the need for further exploration of the factors that influence gaze and locomotion interactions in such settings.

7.3.3 Mid-Air Haptic Feedback

Participants: Claudio Pacchierotti, Maud Marchal, Thomas Howard, Guillaume Gicquel, Lendy Mulot.

In the framework of the H2020 projects H-Reality and E-TEXTURE, we have been developing novel mid-air haptic paradigms that can convey the full spectrum of touch sensations found in the real world, which in turn calls for new, natural interaction techniques. Both projects ended in 2022, but we have continued to work on this subject.

In 15, we explore combining acoustically transparent tangible objects (ATTs) with ultrasound mid-air haptic (UMH) feedback for seamless haptic interactions with digital content. Both methods offer unobtrusive user experiences, each with its own strengths and limitations. The work outlines the haptic design space and the technical prerequisites for this combination. Since ATTs may interfere with the ultrasound that delivers UMH, we examine the impact of single ATT surfaces on ultrasound focal points. Human-subject experiments assessing detection thresholds, motion discrimination, and UMH localization reveal that ATT surfaces can maintain UMH fidelity. These findings indicate that ATTs and UMH can be combined without compromising UMH effectiveness, opening up potential applications in haptic technology.

In 49, we explored mid-air shape rendering methodologies. Dynamic Tactile Pointers (DTP) have emerged as a superior method for conveying shape information by guiding an amplitude-modulated focal point along contours. By contrast, the conventional approach, Spatio-Temporal Modulation (STM), produces blurry shapes that reduce identification accuracy. Although DTP yields clearer shapes, they are perceived as weaker than STM shapes. To address this, we propose investigating Spatio-temporally-modulated Tactile Pointers (STP), which aim to enhance tactile intensity by using spatio-temporal modulation instead of amplitude modulation. This exploration seeks to improve perceived intensity while maintaining shape clarity in ultrasound-based haptic feedback systems.

In 21, we explored enhancing ultrasound mid-air haptic (UMH) shape rendering methods. Spatio-temporal modulation (STM) offers design flexibility but produces blurry shapes, while Dynamic Tactile Pointers (DTP) enhance clarity but with reduced stimulus intensity. Introducing Spatio-temporally-modulated Tactile Pointers (STP), we aimed to improve shape clarity while maintaining strong vibrotactile sensations. Our experiments revealed that STP shapes were perceived as notably stronger than DTP shapes, with improved shape identification akin to DTP and surpassing STM. This work provided insights for effective UMH shape rendering, shedding light on vibrotactile shape perception and potentially guiding future psychophysical investigations in UMH technology.
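The three rendering strategies compared in 49 and 21 differ in how a single ultrasound focal point moves and is modulated over time. The sketch below generates focal-point positions and normalized amplitudes for a circular shape of radius R. STM (the whole contour redrawn repeatedly at full amplitude) and DTP (an amplitude-modulated focal point guided along the contour) follow the descriptions above; for STP, the assumption is that the focal point traces a small circle around the moving pointer at full amplitude, replacing amplitude modulation with a local spatio-temporal one. The function name and all numerical defaults (radius, rates, frequencies) are illustrative, not values from 49 or 21.

```python
import math


def focal_point(mode, t, R=0.02, contour_period=1.0, stm_rate=20.0,
                am_freq=200.0, r_local=0.002, local_freq=100.0):
    """Return (x, y, amplitude) of the focal point at time t (s) for a circle of
    radius R (m). All numeric defaults are illustrative assumptions."""
    if mode == "STM":
        # Focal point redraws the whole contour stm_rate times per second, full amplitude.
        phi = 2 * math.pi * stm_rate * t
        return R * math.cos(phi), R * math.sin(phi), 1.0
    # DTP and STP: a pointer travels once around the contour every contour_period s.
    phi = 2 * math.pi * t / contour_period
    cx, cy = R * math.cos(phi), R * math.sin(phi)
    if mode == "DTP":
        # Focal point sits on the pointer; its amplitude is modulated at am_freq.
        amp = 0.5 * (1 - math.cos(2 * math.pi * am_freq * t))
        return cx, cy, amp
    if mode == "STP":
        # Assumed STP form: focal point circles the pointer at local_freq, full amplitude.
        psi = 2 * math.pi * local_freq * t
        return cx + r_local * math.cos(psi), cy + r_local * math.sin(psi), 1.0
    raise ValueError(f"unknown mode: {mode}")
```

In this picture, STM spreads the stimulus over the whole contour at every instant, which blurs the shape, whereas DTP and STP concentrate it at a pointer that reveals the contour progressively; STP keeps the pointer but drives it at full amplitude, which is consistent with its higher perceived intensity.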

In 22, we introduced and assessed mid-air ultrasound haptic strategies for 2-degree-of-freedom position and orientation guidance in Virtual Reality. Four strategies for position guidance and two for orientation guidance were devised and evaluated in a human-subject study. Findings revealed significant improvements in positioning performance in static scenarios compared to visual feedback alone. Conversely, orientation guidance significantly improved performance in dynamic scenarios but not in static ones, showing that the effectiveness of these strategies in VR depends on the context of the task.

7.3.4 Encounter-Type Haptic Devices

Participants: Claudio Pacchierotti, Lisheng Kuang, Elodie Bouzbib.

Encounter-Type Haptic Displays (ETHDs) provide haptic feedback by positioning a tangible surface for the user to encounter. This allows users to freely elicit haptic feedback by touching a surface during a virtual simulation. ETHDs differ from most current haptic devices, which rely on an actuator that is always in contact with the user.