Section: New Results

Narratives in Crowdsourced Evaluation of Visualizations: A Double-Edged Sword?

Participants: Evanthia Dimara [correspondent], Pierre Dragicevic, Anastasia Bezerianos.

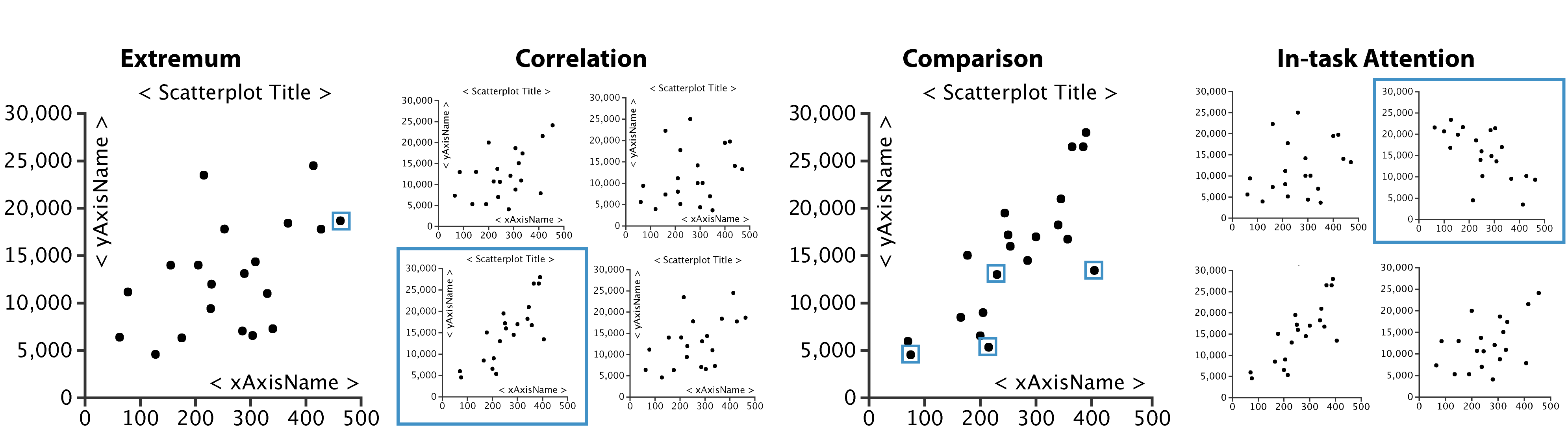

We explore the effects of providing task context when evaluating visualization tools using crowdsourcing. We gave crowdworkers i) abstract information visualization tasks without any context, ii) tasks where we added semantics to the dataset, and iii) tasks with two types of backstory narratives: an analytic narrative and a decision-making narrative. Contrary to our expectations, we did not find evidence that adding data semantics increases accuracy, and we further found that our backstory narratives can even decrease accuracy. Adding dataset semantics can, however, increase attention and provide subjective benefits in terms of confidence, perceived ease, task enjoyment, and perceived usefulness of the visualization. Our backstory narratives, by contrast, did not appear to provide additional subjective benefits. These preliminary findings suggest that narratives may have complex and unanticipated effects, calling for more studies in this area.

More on the project Web page: http://www.aviz.fr/narratives.