Section: New Results

Virtual Reality for Musical Performance

Participants: Florent Berthaut, Martin Hachet.

Immersive virtual environments open new perspectives for music interaction, notably for visualizing sound processes and musical structures, navigating within musical compositions, manipulating sound parameters, and supporting musical collaboration. Research conducted by Florent Berthaut and Martin Hachet, in collaboration with Myriam Desainte-Catherine from SCRIME/LaBRI, explores these new possibilities.

Among the current projects, development of Drile, an immersive virtual musical instrument, continued in order to support various scenographic setups that will be evaluated in the context of public performances. New perspectives for the Tunnels, 3D widgets for musical modulation (see Figure 6), were published as a poster in the Proceedings of the Symposium on 3D User Interfaces (3DUI) [10]. Novel 3D selection techniques that take the constraints of music interaction into account are also being designed.

Another project was conducted with David Janin and Benjamin Martin from LaBRI on new musical models that will be used to improve the hierarchical musical structures manipulated with Drile. This work was published in the International Conference on Semantic Computing [11].

A collaboration was started with researchers at the Center for Computer Research in Music and Acoustics (CCRMA) of Stanford University. Florent Berthaut spent two months at CCRMA as an invited researcher, working with Luke Dahl and Chris Chafe on the implementation of musical collaboration modes in immersive virtual environments. A first result is the design of 3D musical collaboration widgets for Drile, which will be evaluated with musicians.

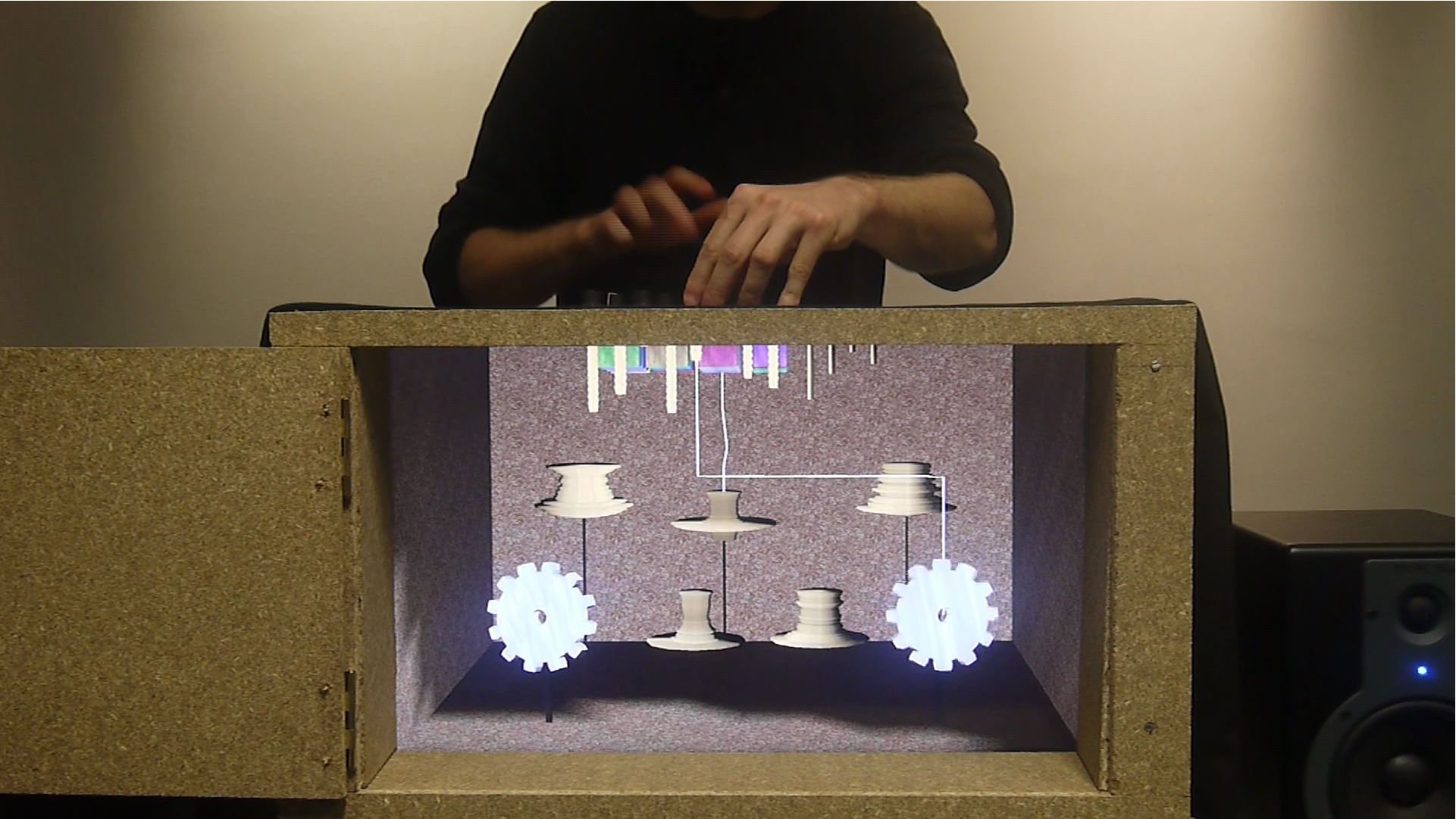

Another project was initiated with researchers from the Bristol Interaction and Graphics group of the University of Bristol. This project aims to improve the audience's experience of Digital Musical Instruments (DMIs). These instruments are often confusing for spectators, both because of the variety of components they use and because of the lack of a physical link between the musicians' gestures and the resulting sound. A novel approach based on a mixed-reality system was implemented to reveal the inner mechanisms of DMIs to the audience (see Figure 7). A description of this approach and of the first prototype will be submitted to the International Conference on New Interfaces for Musical Expression (NIME).